Red-MIRROR: Agentic LLM-based Autonomous Penetration Testing with Reflective Verification and Knowledge-augmented Interaction

Web applications remain the dominant attack surface in cybersecurity, where vulnerabilities such as SQL injection, XSS, and business logic flaws continue to cause significant data breaches. While penetration testing is effective for identifying these…

Authors: Tran Vy Khang, Nguyen Dang Nguyen Khang, Nghi Hoang Khoa

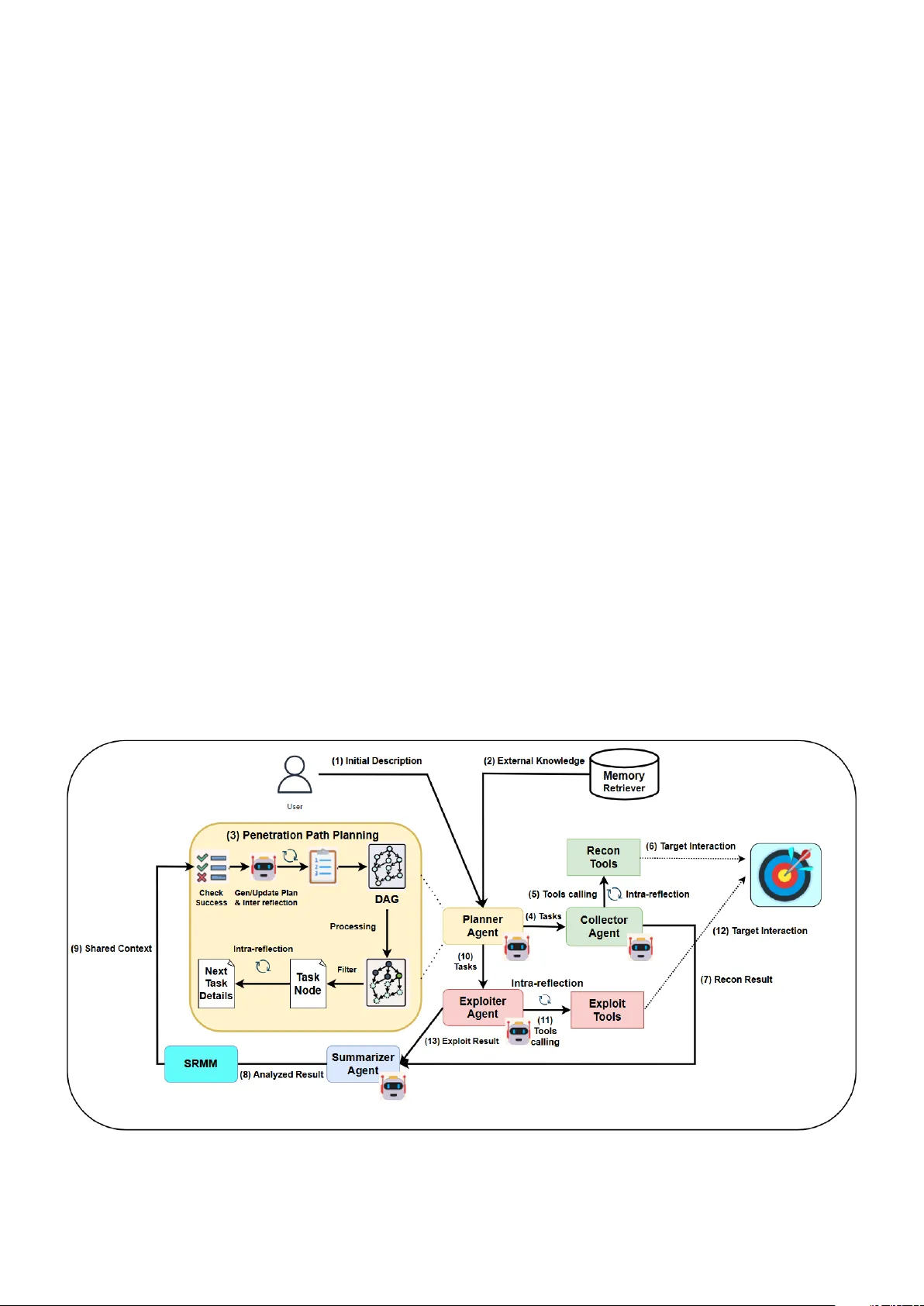

Red-MIRROR: Agentic LLM-based Autonomous Penetration Testing with Reflective Verification and Knowledge-augmented Interaction

Tran Vy Khang a,b, Nguyen Dang Nguyen Khang a,b, Nghi Hoang Khoa a,b, Do Thi Thu Hien a,b, Van-Hau Pham a,b, Phan The Duy a,b,∗

a Information Security Lab, University of Information Technology, Ho Chi Minh City, Vietnam
b Vietnam National University, Ho Chi Minh City, Vietnam

Abstract

Web applications remain the dominant attack surface in cybersecurity, where vulnerabilities such as SQL injection, XSS, and business logic flaws continue to cause significant data breaches. While penetration testing is effective for identifying these weaknesses, traditional manual approaches are time-consuming and heavily dependent on scarce expert knowledge. Recent Large Language Models (LLM)-based multi-agent systems have shown promise in automating penetration testing, yet they still suffer from critical limitations: over-reliance on parametric knowledge, fragmented session memory, and insufficient validation of attack payloads and responses. This paper proposes Red-MIRROR, a novel multi-agent automated penetration testing system that introduces a tightly coupled memory–reflection backbone to explicitly govern inter-agent reasoning. By synthesizing Retrieval-Augmented Generation (RAG) for external knowledge augmentation, a Shared Recurrent Memory Mechanism (SRMM) for persistent state management, and a Dual-Phase Reflection mechanism for adaptive validation, Red-MIRROR provides a robust solution for complex web exploitation. Empirical evaluation on the XBOW benchmark and Vulhub CVEs shows that Red-MIRROR achieves performance comparable to state-of-the-art agents on Vulhub scenarios, while demonstrating a clear advantage on the XBOW benchmark.
On the XBOW benchmark, Red-MIRROR attains an overall success rate of 86.0 percent, outperforming PentestAgent (50.0 percent), AutoPT (46.0 percent), and the VulnBot baseline (6.0 percent). Furthermore, the system achieves a 93.99 percent subtask completion rate, indicating strong long-horizon reasoning and payload refinement capability. Finally, we discuss ethical implications and propose safeguards to mitigate misuse risks.

Keywords: Penetration Testing, Large Language Model, Multi-Agent System, Web Application

∗ Corresponding author
Email addresses: 22520628@gm.uit.edu.vn (Tran Vy Khang), 22520617@gm.uit.edu.vn (Nguyen Dang Nguyen Khang), khoanh@uit.edu.vn (Nghi Hoang Khoa), hiendtt@uit.edu.vn (Do Thi Thu Hien), haupv@uit.edu.vn (Van-Hau Pham), duypt@uit.edu.vn (Phan The Duy)

1. Introduction

The rapid proliferation of web applications has substantially expanded the externally accessible attack surface of modern digital infrastructures. The Verizon Data Breach Investigations Report (DBIR) 2025 identifies Basic Web Application Attacks as one of the five most prevalent breach patterns, accounting for approximately 12% of over 12,000 confirmed data breaches analyzed worldwide [1]. In absolute terms, this corresponds to more than one thousand real-world compromise incidents attributable to web-facing systems, demonstrating that web applications remain a consistently exploited entry point in large-scale breach scenarios. Trends in vulnerability reporting further highlight the prominence of application-layer weaknesses. According to OWASP’s Top 10 classifications, the number of publicly recorded CVE entries mapped to Injection-related weaknesses increased from approximately 32,000 in 2021 to around 64,000 in 2025, reflecting a substantially larger corpus of publicly documented injection vulnerabilities in recent vulnerability datasets [2, 3].
Given their inherent public accessibility and direct exposure to untrusted inputs, vulnerabilities such as SQL Injection (SQLi) and Cross-Site Scripting (XSS) continue to present a critical security challenge, enabling attackers to compromise sensitive user and enterprise data [3, 4].

Penetration testing is widely regarded as one of the most effective approaches for identifying web security vulnerabilities, assessing their severity, and proposing appropriate mitigation strategies [5]. However, traditional penetration testing often requires organizations to invest significant time and financial resources in building and maintaining highly skilled security teams with years of practical experience [6, 7].

To address these limitations in cost and human resources, automated security testing solutions have been actively studied and developed, particularly those integrating Large Language Models (LLMs) with multi-agent systems [8, 9, 10]. Such LLM-based approaches demonstrate strong capabilities in penetration testing through effective pattern-matching for vulnerability detection, handling uncertainty in dynamic environments, and cost-effective integration [11]. While the automation of penetration testing offers significant promise for defensive security, it also introduces dual-use risks, as the same capabilities could be repurposed for malicious activities [12]. Consequently, the development of such systems must be framed within a “Responsible AI Research” paradigm, emphasizing their role as tools for red teaming, vulnerability discovery, and proactive defense rather than enabling cybercrime.

Preprint submitted to Elsevier, March 31, 2026

Recent studies such as VulnBot [13], PentestAgent [14], xOffense [15], Autopentest [16], AutoPT [17] and PTFusion [18] demonstrate that LLM-based agents, when combined with multi-agent architectures and external knowledge sources through Retrieval-Augmented Generation (RAG), are capable of effectively exploiting web application vulnerabilities.

However, despite the above advancements, several fundamental architectural bottlenecks hinder their reliability and effectiveness in real-world scenarios, which can be categorized into three critical limitations. First, existing systems suffer from inefficient memory and session management [13, 14, 17]. Information maintained within a single conversational history often leads to memory fragmentation, resulting in degraded performance in long-horizon attack scenarios. As interactions accumulate, critical tokens from early reconnaissance phases may be truncated or diluted by later outputs, preventing the agent from effectively linking initial discoveries to subsequent exploitation steps. For example, VulnBot [13] reports challenges related to context loss and session continuity, particularly in multi-phase penetration workflows. This limitation hampers coherent long-horizon reasoning [19], which is essential for executing complex, multi-step attack chains. Second, current approaches lack robust quality control for request handling [13, 14, 17]. Without a mechanism to validate payloads before execution, agents can repeatedly issue malformed requests, increasing detection risks. For instance, selecting an invalid injection point can prematurely fail an entire testing workflow without meaningful feedback. Third, integrated testing tools are often insufficiently specialized for modern applications.
Automated frameworks typically rely on general-purpose command-line tools such as Nmap or SQLMap, which lack the granularity needed to test complex, dynamic application logic found in contemporary web environments.

To bridge this gap and address these critical architectural limitations, we propose Red-MIRROR (Red-teaming Multi-agent Introspective Reasoning for Robust Offensive Research), an automated multi-agent penetration testing system that enhances memory and session management during inter-agent interactions and testing workflows. In particular, our framework leverages Retrieval-Augmented Generation (RAG) to provide external knowledge relevant to penetration testing. In addition, Red-MIRROR introduces mechanisms for validating, analyzing, and refining requests and attack plans based on observed responses during testing, thereby establishing a closed-loop feedback process. Finally, Red-MIRROR integrates a set of specialized web testing tools, further improving its effectiveness in exploiting vulnerabilities in web applications.

Moreover, we contribute by constructing a fine-tuning and training dataset for a mid-scale LLM to evaluate the potential of self-hosted open-source models in comparison with commercial models. We also develop a subtask-level benchmark based on a subset of the XBOW [20] benchmark and selected CVEs from Vulhub [21], allowing for a more detailed evaluation of test coverage and step-by-step execution capabilities across the penetration testing workflow.

In summary, we make the following four key contributions:

• We introduce Red-MIRROR, the first multi-agent penetration testing framework that tightly couples a Shared Recurrent Memory Mechanism (SRMM) with Dual-Phase Reflection and RAG-augmented knowledge. This backbone explicitly governs inter-agent reasoning and adaptive validation, overcoming memory fragmentation and payload hallucination in long-horizon attacks.
Extensive evaluation on the XBOW benchmark demonstrates an overall exploitation success rate of 86.0% (vs. 50.0% PentestAgent, 46.0% AutoPT, and 6.0% VulnBot baseline) together with a 93.99% subtask completion rate, establishing a new state-of-the-art in autonomous web penetration testing.
• We curate a specialized, high-fidelity fine-tuning dataset of 1,644 prompt-response pairs covering CVE descriptions, CAPEC attack patterns, and MITRE ATT&CK techniques. By applying LoRA on Qwen2.5-14B, we demonstrate that a mid-scale open-source LLM can achieve competitive pentesting performance against commercial models, significantly lowering the barrier for self-hosted offensive AI research.
• We construct a fine-grained subtask-level benchmark derived from XBOW subsets and real-world Vulhub CVEs. This benchmark enables precise measurement of reconnaissance, exploitation, and reflection capabilities across multi-step workflows, addressing a critical evaluation gap in prior LLM-based pentesting studies.
• We explicitly address the dual-use risks of offensive AI through a comprehensive ethical analysis and propose practical safeguards (role-based access control, audit logging, and RAG knowledge gating) that ensure responsible deployment of Red-MIRROR in authorized red-teaming environments.

The remainder of this paper is organized as follows. Section 2 reviews the background and related work on automated penetration testing and LLM-based agents. Section 3 describes the architecture of our Red-MIRROR framework, detailing the components for memory management and reflective reasoning. The experimental results and comparative analysis are presented in Section 4. Section 5 provides a discussion on the findings, limitations, and ethical implications. Finally, we conclude our research and suggest future directions in Section 6.

2. Background and Related Work

2.1. Penetration Testing and Automation Challenges

Penetration testing (pentest) is a comprehensive security assessment methodology that evaluates the robustness of computer systems, networks, and applications by simulating real-world adversarial attacks. Unlike automated vulnerability scanning, which primarily detects known weaknesses using signature-based techniques, penetration testing emphasizes exploitability, impact assessment, and contextual risk validation through adaptive attack execution [22]. This process often requires human expertise to interpret system behavior, adjust strategies, and reason over multiple intermediate findings.

Standardized frameworks such as the Penetration Testing Execution Standard (PTES) [23] and NIST guidelines define penetration testing as a multi-phase and iterative process, typically encompassing reconnaissance, scanning and analysis, exploitation, and reporting. These phases are tightly interdependent: information gathered during exploitation frequently informs further reconnaissance, while partial failures may require reformulating attack strategies. In web application security, effective penetration testing additionally demands session awareness, understanding of application logic, and dynamic payload adaptation in response to server-side behaviors. Vulnerabilities such as SQL Injection, Cross-Site Scripting (XSS), and authentication bypasses often require multi-step interactions rather than single-shot exploits.

Despite its effectiveness, traditional penetration testing is time-consuming, labor-intensive, and difficult to scale across large or continuously evolving systems. Modern web applications frequently undergo rapid development cycles, making manual testing insufficient to keep pace with emerging vulnerabilities.
As a result, there is a growing demand for automated pentesting solutions that can replicate expert reasoning while maintaining efficiency and scalability. Recent studies such as VulnBot [13], PentestAgent [14], xOffense [15], Autopentest [16], AutoPT [17] and PTFusion [18] demonstrate that LLM-based agents, when combined with multi-agent architectures and external knowledge sources through Retrieval-Augmented Generation (RAG), can effectively exploit web application vulnerabilities.

However, despite these advancements, these systems exhibit several common limitations. First, systems such as VulnBot, PentestAgent, and xOffense suffer from inefficient memory and session management, which still leads to context fragmentation in long-horizon multi-phase workflows. Second, approaches including VulnBot, AutoPT, and Autopentest lack robust validation mechanisms, often resulting in blind or malformed payload execution without systematic verification due to persistent LLM hallucinations and insufficient auto-checks. Third, existing frameworks such as AutoPT and PentestAgent rely on loosely integrated or general-purpose tools, leading to inefficient and inconsistent exploitation behavior caused by imprecise command generation and heterogeneous output fusion.

Automating penetration testing remains challenging due to its reliance on long-horizon reasoning, uncertainty management, and expert decision-making [19, 24]. While recent systems have incorporated scripted workflows or rule-based engines, such approaches struggle to generalize across heterogeneous targets and complex application logic. More recent research has explored the use of intelligent agents to replicate portions of expert reasoning, enabling automated systems to conduct reconnaissance, select candidate attack vectors, and validate exploitation outcomes.
However, existing automated pentesting frameworks often suffer from fragmented context management, limited adaptability, and unreliable verification of exploit success, particularly in extended testing sessions. These limitations motivate the exploration of reasoning-centric and memory-aware approaches that can better capture the iterative and stateful nature of real-world penetration testing.

2.2. Large Language Models for Security Reasoning and Knowledge Augmentation

LLMs are neural architectures trained on large-scale textual corpora to perform a wide range of language understanding and generation tasks. Most contemporary LLMs are built upon the Transformer architecture, which employs self-attention mechanisms to model long-range dependencies within input sequences [25]. These properties make LLMs suitable for security-related applications, including vulnerability reasoning, payload generation, HTTP request analysis, and interpretation of server responses [26].

Two primary strategies are commonly used to adapt LLMs to domain-specific tasks: prompt engineering and fine-tuning. Prompt engineering exploits in-context learning by carefully constructing input prompts that include structured instructions or few-shot examples, guiding model behavior without modifying model parameters. While this approach enables rapid experimentation and deployment, it is constrained by finite context windows and often exhibits unstable performance when applied to complex, multi-step reasoning tasks such as penetration testing workflows [19].

Fine-tuning, in contrast, involves further training a pre-trained LLM on task-specific datasets to align its internal representations with domain requirements. Parameter-efficient fine-tuning techniques, such as Low-Rank Adaptation (LoRA) [27], significantly reduce computational costs while enabling effective specialization.
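For reference, the low-rank update used by LoRA [27] can be written as below. The symbols $W_0$, $A$, $B$, $r$, $\alpha$, $d$, and $k$ follow the usual LoRA notation and are introduced here only for illustration; they do not appear in the original text.

$$
W = W_0 + \frac{\alpha}{r}\, B A, \qquad B \in \mathbb{R}^{d \times r},\quad A \in \mathbb{R}^{r \times k},\quad r \ll \min(d, k)
$$

Only $A$ and $B$ are trained while the pre-trained weight $W_0 \in \mathbb{R}^{d \times k}$ stays frozen, so the number of trainable parameters per adapted matrix drops from $dk$ to $r(d + k)$.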
In security contexts, fine-tuning can enhance a model’s understanding of exploit patterns, vulnerability taxonomies, and structured output formats, leading to improved consistency and reliability in automated systems.

Despite these advances, LLMs inherently suffer from knowledge staleness and hallucination, particularly in fast-evolving domains such as cybersecurity. Retrieval-Augmented Generation (RAG) [28] addresses these limitations by integrating external knowledge retrieval mechanisms into the generation process. By augmenting prompts with relevant documents retrieved from curated knowledge bases, RAG enables LLMs to access up-to-date vulnerability descriptions, exploit techniques, and testing guidelines without frequent retraining. In penetration testing applications, grounding model outputs in authoritative sources such as OWASP documentation or CVE databases improves factual accuracy, reduces hallucinations, and enables smaller or mid-scale LLMs to achieve competitive performance in specialized security tasks.

2.3. Multi-Agent Architectures with Memory and Reflection Mechanisms

Multi-agent systems consist of multiple autonomous agents that collaborate to achieve complex objectives through task decomposition and coordination [8]. This paradigm closely mirrors real-world penetration testing practices, where reconnaissance, exploitation, and analysis are typically performed by specialists with distinct responsibilities. Recent LLM-based pentesting frameworks increasingly adopt multi-agent architectures to distribute roles such as attack planning, payload execution, and response interpretation, improving modularity and scalability.

However, naive multi-agent implementations often rely on conversational message passing as the primary coordination mechanism. Such designs can lead to redundant communication, inconsistent system states, and loss of critical contextual information during long-horizon tasks [29].
These issues are particularly pronounced in penetration testing, where maintaining session state, tracking discovered endpoints, and reasoning over prior exploit attempts are essential for effective attack planning.

To address these challenges, recent research has explored memory-augmented and recurrent Transformer architectures that introduce shared or persistent memory abstractions for long-horizon reasoning [30]. Shared Recurrent Memory Mechanism (SRMM)-inspired approaches enable agents to accumulate salient information over time and access a common contextual state without relying solely on explicit message exchanges. At a system level, such shared memory mechanisms support consistent session handling, reduce redundant exploration, and enhance coordination across agents during extended penetration testing workflows.

In addition to memory, reflection mechanisms have been proposed to enable intelligent agents to evaluate outcomes and refine future actions based on feedback [31]. Reflection is particularly important in automated penetration testing to avoid repeated failures, validate exploit attempts, and adapt strategies to dynamic defenses such as input sanitization or Web Application Firewalls. Dual-phase reflection paradigms conceptually separate local, short-term evaluation from global, long-term strategy refinement. By combining intra-reflection, which assesses the correctness of immediate actions, with inter-reflection, which analyzes outcomes across agents and iterations, automated systems can iteratively improve decision-making quality. Together, memory-centric coordination and reflection-based adaptation form a conceptual foundation for more reliable, stable, and scalable automated penetration testing systems.
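As a rough illustration of the two ideas above, a shared memory that all agents read and write, combined with a local (intra) and a global (inter) reflection check, could be sketched as follows. This is a minimal sketch under stated assumptions: the class `SharedMemory`, the functions `intra_reflect` and `inter_reflect`, and the `max_failures` threshold are hypothetical names chosen for illustration and are not taken from any of the cited systems.

```python
from dataclasses import dataclass, field


@dataclass
class SharedMemory:
    """Hypothetical shared store that every agent reads from and writes to."""
    endpoints: set = field(default_factory=set)    # endpoints discovered so far
    attempts: list = field(default_factory=list)   # (endpoint, payload, succeeded)

    def record_attempt(self, endpoint, payload, succeeded):
        # Keep discoveries and outcomes in one place instead of chat history.
        self.endpoints.add(endpoint)
        self.attempts.append((endpoint, payload, succeeded))

    def already_failed(self, endpoint, payload):
        return (endpoint, payload, False) in self.attempts


def intra_reflect(memory, endpoint, payload):
    """Local check before sending: reject blank or previously failed payloads."""
    if not payload.strip():
        return False
    return not memory.already_failed(endpoint, payload)


def inter_reflect(memory, max_failures=3):
    """Global check across iterations: endpoints whose attempts keep failing."""
    failures = {}
    for endpoint, _payload, succeeded in memory.attempts:
        if not succeeded:
            failures[endpoint] = failures.get(endpoint, 0) + 1
    return sorted(e for e, n in failures.items() if n >= max_failures)
```

In this sketch, an executing agent would call `intra_reflect` before each request, while a planning agent would periodically call `inter_reflect` to decide which endpoints need a different strategy. Real systems would store far richer state (session tokens, responses, filters observed), which is omitted here.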
In contrast to prior systems that primarily introduce reflection as a local heuristic or prompt-level correction [14], Red-MIRROR operationalizes reflection as a system-level control mechanism tightly coupled with persistent shared memory and planning.

3. Methodology

3.1. Motivating Example: Long-Context Chained Exploitation

To illustrate the challenges of long-horizon reasoning in autonomous penetration testing, consider a benchmark instance involving a reflected XSS vulnerability protected by server-side filtering. During the reconnaissance phase, the agent accesses the main page and discovers the endpoint /page. A request to /page?name=test reveals that the parameter name is reflected inside an HTML attribute as follows, confirming that user input is embedded within a quoted attribute context.

The exploitation phase requires iterative experimentation. Initial payloads such as are sanitized by the server. Subsequent attempts reveal a systematic transformation: any injected substring matching the pattern <[a-z/] is removed or neutralized before rendering. For example, inputs beginning with