Unsupervised Behavioral Compression: Learning Low-Dimensional Policy Manifolds through State-Occupancy Matching

Deep Reinforcement Learning (DRL) is widely recognized as sample-inefficient, a limitation attributable in part to the high dimensionality and substantial functional redundancy inherent to the policy parameter space. A recent framework, which we refe…

Authors: Andrea Fraschini, Davide Tenedini, Riccardo Zamboni

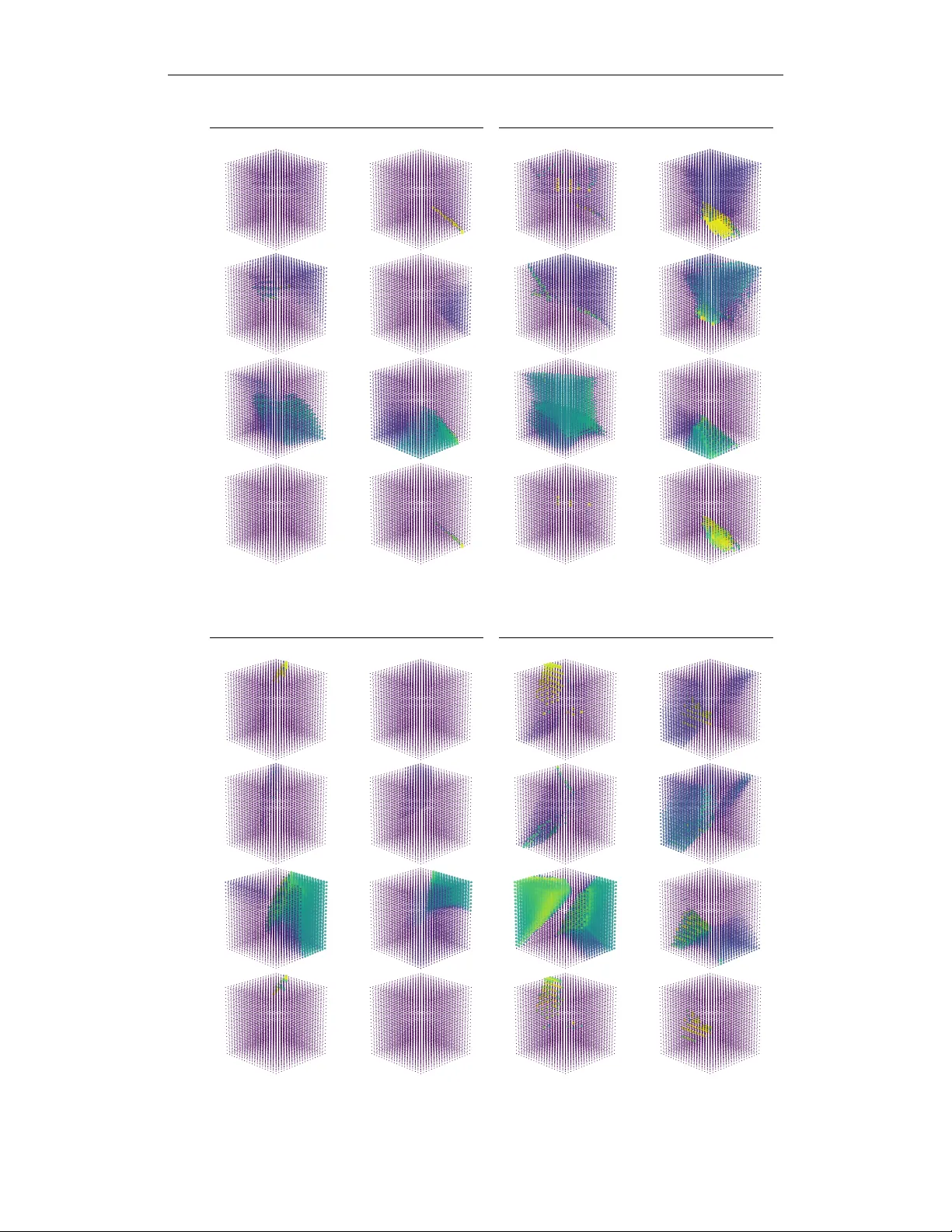

Cover Page

Unsupervised Behavioral Compression: Learning Low-Dimensional Policy Manifolds through State-Occupancy Matching

Andrea Fraschini, Davide Tenedini, Riccardo Zamboni, Mirco Mutti, Marcello Restelli

Keywords: reinforcement learning, unsupervised reinforcement learning, unsupervised representation learning

Summary

Deep Reinforcement Learning (DRL) is widely recognized as sample-inefficient, a limitation attributable in part to the high dimensionality and substantial functional redundancy inherent to the policy parameter space. A recent framework, which we refer to as Action-based Policy Compression (APC), mitigates this issue by compressing the parameter space $\Theta$ into a low-dimensional latent manifold $\mathcal{Z}$ using a learned generative mapping $g : \mathcal{Z} \to \Theta$. However, its performance is severely constrained by relying on immediate action-matching as a reconstruction loss, a myopic proxy for behavioral similarity that suffers from compounding errors across sequential decisions. To overcome this bottleneck, we introduce Occupancy-based Policy Compression (OPC), which enhances APC by shifting behavior representation from immediate action-matching to long-horizon state-space coverage. This modification forces the generative model to organize the latent space around true functional similarity, promoting a latent representation that generalizes over a broad spectrum of behaviors while retaining most of the original parameter space's expressivity. Finally, we empirically validate the advantages of our contributions across multiple continuous control benchmarks.

Contribution(s)

1. This paper introduces an information-theoretic uniqueness score derived from the entropy decomposition of the mixture state occupancy to curate unsupervised policy datasets for manifold learning.
   Context: Prior work on Latent Behavior Compression (Tenedini et al., 2026) curates datasets using novelty search based on $k$-nearest neighbors in the Euclidean space of immediate policy actions corresponding to randomly sampled states, which, as demonstrated by the experiments presented in Section 5.1, often fails to capture complex behavioral uniqueness.

2. This paper proposes a fully differentiable Behavioral Compression objective that directly minimizes the divergence between true and reconstructed mixture occupancy distributions using an importance sampling estimator.
   Context: Prior work (Tenedini et al., 2026) employed a behavioral reconstruction loss based on the divergence of the immediate action distribution relative to randomly sampled states. However, such a measure has been shown to be an ultra-conservative proxy for behavioral similarity (Prop. E.1, Metelli et al., 2018).

3. Through an extensive empirical campaign, we demonstrate that our methodology preserves unique, high-performing behaviors during dataset curation, yields a more behaviorally rich latent manifold, and successfully enables latent optimization in complex environments.
   Context: Prior work (Tenedini et al., 2026) lacked the quantitative analysis of the effect of dataset curation that we present in Section 5.1, and proposed methods with action-based proxies that collapsed in more complex environments (e.g., Hopper).
Andrea Fraschini♣,†, Davide Tenedini♣,†, Riccardo Zamboni♣, Mirco Mutti♠, Marcello Restelli♣
andrea.fraschini@mail.polimi.it, {davide.tenedini, riccardo.zamboni, marcello.restelli}@polimi.it, mirco.m@technion.ac.il
♣ Politecnico di Milano, ♠ Technion, † Equal contribution

Abstract

Deep Reinforcement Learning (DRL) is widely recognized as sample-inefficient, a limitation attributable in part to the high dimensionality and substantial functional redundancy inherent to the policy parameter space. A recent framework, which we refer to as Action-based Policy Compression (APC), mitigates this issue by compressing the parameter space $\Theta$ into a low-dimensional latent manifold $\mathcal{Z}$ using a learned generative mapping $g : \mathcal{Z} \to \Theta$. However, its performance is severely constrained by relying on immediate action-matching as a reconstruction loss, a myopic proxy for behavioral similarity that suffers from compounding errors across sequential decisions. To overcome this bottleneck, we introduce Occupancy-based Policy Compression (OPC), which enhances APC by shifting behavior representation from immediate action-matching to long-horizon state-space coverage. Specifically, we propose two principal improvements: (1) we curate the dataset generation with an information-theoretic uniqueness metric that delivers a diverse population of policies; and (2) we propose a fully differentiable compression objective that directly minimizes the divergence between the true and reconstructed mixture occupancy distributions. These modifications force the generative model to organize the latent space around true functional similarity, promoting a latent representation that generalizes over a broad spectrum of behaviors while retaining most of the original parameter space's expressivity.
Finally, we empirically validate the advantages of our contributions across multiple continuous control benchmarks.

1 Introduction

The success of Deep Reinforcement Learning (DRL) in complex continuous control relies heavily on the highly expressive nature of deep neural networks (Lillicrap et al., 2016; Schulman et al., 2017). However, optimizing these high-dimensional policies tabula rasa is notoriously sample-inefficient (Duan et al., 2016; Agarwal et al., 2022). This inefficiency stems in part from a fundamental functional redundancy: due to the complex symmetries of neural architectures, vast and disparate regions of the parameter space often collapse into identical agent behaviors. Searching this massive, unstructured space blindly ignores the underlying functional geometry of the environment. This limitation becomes a severe bottleneck in multi-task or Unsupervised Reinforcement Learning (URL) settings (Laskin et al., 2021), where an agent must train a model that rapidly adapts to a variety of previously unknown downstream tasks, instead of learning from scratch.

To address this redundancy in the policy parameter space, prior work has proposed various approaches to compress the policy space onto lower-dimensional latent manifolds (e.g., Eysenbach et al., 2018; Mutti et al., 2022; Faccio et al., 2023; Tenedini et al., 2026; Li et al., 2026). In this stream, Action-based Policy Compression (APC, Tenedini et al., 2026) explicitly shifts the learning paradigm from parameter optimization to behavior optimization. APC leverages a generative model to compress a highly redundant policy parameter space into a low-dimensional, behaviorally structured latent manifold. By curating a diverse dataset of random policies and training an autoencoder to reconstruct them, agents can subsequently bypass the raw parameter space entirely.
Instead, they perform task-specific fine-tuning by directly navigating the compact latent space. By following this recipe, APC compresses policy architectures with several thousand parameters into tiny two- or three-dimensional latent spaces, while retaining most of the functional expressivity needed to address a variety of tasks in classical continuous control domains, such as Mountain Car and Reacher.

While conceptually and empirically promising, the practical adaptability of APC beyond these domains is severely constrained by the technical solutions adopted to train the latent space. In particular, to organize this latent space functionally rather than geometrically, APC relies on an action-matching objective, training the autoencoder to minimize the discrepancy between the immediate action outputs of the original and reconstructed policies. However, measuring behavioral similarity through immediate action divergence is a mathematically loose and myopic proxy for long-horizon control (Metelli et al., 2018). Because errors compound over sequential decisions, policies that appear functionally identical at the single-action level can induce drastically different trajectories and global state visitations. Furthermore, Tenedini et al. (2026) curate the initial pre-training dataset of policies using novelty search in the action space, which frequently discards rare, high-performing behaviors, severely impoverishing the topological diversity of the resulting manifold.

To overcome these limitations, we introduce Occupancy-based Policy Compression (OPC), representing a paradigm shift in latent manifold learning for DRL. We abandon immediate action-matching entirely in favor of global state-space coverage. Specifically, we introduce two principal algorithmic enhancements.
First, we curate the pre-training dataset using an information-theoretic uniqueness metric that actively selects policies based on their contribution to the global state-visitation entropy, retaining a much broader spectrum of rare behaviors. Second, we propose a fully differentiable compression objective that explicitly matches the true mixture occupancy distribution of the policy population. By evaluating policies based on the states they visit over the full horizon, OPC avoids the compounding errors of action-matching, forcing the generative model to organize the latent space around true, long-horizon functional similarity. To support the design choices behind OPC, we address the following:

Research Questions:
(Q1) Does curating policies based on their contribution to state-space coverage preserve a wider spectrum of rare, high-performing behaviors compared to action-space novelty search?
(Q2) Does optimizing a compression objective based on state-space visitation patterns yield a more behaviorally meaningful latent space than action-based behavioral reconstruction?
(Q3) Does navigating an occupancy-matched latent space improve sample efficiency and task performance compared to standard baselines and action-matched manifolds?

Content Outline and Contributions. In Section 2, we establish the necessary notation. In Section 3, we detail the existing APC framework and expose the limitations of action-matching. In Section 4, we introduce our OPC framework. Finally, in Section 5, we address our Research Questions with extensive empirical evidence, demonstrating that OPC retains richer behavioral modes, constructs superior latent topologies, and enables fast few-shot adaptation to various downstream tasks.

2 Preliminaries

Notation. For a set $\mathcal{A}$ of finite size $|\mathcal{A}|$, we denote by $\Delta(\mathcal{A})$ its probability simplex.
For two distributions $p, q$, the Kullback-Leibler (KL) divergence is $D_{KL}(p \| q)$ and the differential entropy of $p$ is $H(p)$.

Interaction Protocol. We model the interactions between the agent and the environment as a (finite-horizon) Controlled Markov Process (CMP). A CMP is a tuple $\mathcal{M} := (\mathcal{S}, \mathcal{A}, P, \mu, T)$, where $\mathcal{S}$ is the state space and $\mathcal{A}$ is the action space. An episode begins with the initial state $s_0 \sim \mu \in \Delta(\mathcal{S})$, where $\mu$ is the initial state distribution. At each timestep $t = 0, \ldots, T$, where $T < \infty$ is the episode horizon, the agent observes the current state $s_t$ and takes action $a_t \in \mathcal{A}$, after which the environment transitions to the next state $s_{t+1} \sim P(\cdot \mid s_t, a_t)$ according to the transition model $P : \mathcal{S} \times \mathcal{A} \to \Delta(\mathcal{S})$. The agent acts according to a stochastic policy $\pi : \mathcal{S} \to \Delta(\mathcal{A})$ such that $\pi(a \mid s)$ denotes the conditional probability of taking action $a$ in state $s$. This interaction induces a trajectory $\tau := (s_0, a_0, s_1, a_1, \ldots, s_T)$.

Occupancy Measure. The deployment of policy $\pi$ in $\mathcal{M}$ induces a marginal distribution over the state space, known as the (state) occupancy measure $d_\pi \in \Delta(\mathcal{S})$, which represents the average visitation probability of state $s$ over the episode length:

$$d_\pi(s) = \frac{1}{T+1} \sum_{t=0}^{T} \Pr(s_t = s \mid \pi).$$

If we consider the deployment of a population of $M$ distinct policies $\Psi = \{\pi_1, \ldots, \pi_M\}$, we define the mixture occupancy measure $d_\Psi \in \Delta(\mathcal{S})$ as the (uniform) mixture of individual occupancies:

$$d_\Psi(s) = \frac{1}{M} \sum_{i=1}^{M} d_{\pi_i}(s), \quad \forall s \in \mathcal{S}.$$

We consider parametric policies $\pi_\theta$ represented by neural networks parametrized by $P$-dimensional weight vectors $\theta \in \Theta \subseteq \mathbb{R}^P$, where $\Theta$ represents the policy parameter space. We denote the collection of representable policies as the Policy Space $\Pi_\Theta$. As shorthand, we use the parameter vector $\theta$ in place of its induced policy $\pi_\theta$ and the parameter space $\Theta$ in place of the policy space $\Pi_\Theta$.

Non-Parametric Estimation.
In continuous spaces, directly computing $D_{KL}(f \| f')$ between distributions $f, f'$ is often intractable. Particle-based estimators instead approximate such quantities using the finite set of states in the collected trajectories. Specifically, Ajgl & Šimandl (2011) propose an importance sampling $k$-NN estimator:

$$\hat{D}_{KL}(f \| f') = \frac{1}{N} \sum_{i=1}^{N} \ln \frac{k/N}{\sum_{j \in \mathcal{N}_i^k} w_j}, \qquad w_j = \frac{f'(x_j)/f(x_j)}{\sum_{n=1}^{N} f'(x_n)/f(x_n)}, \tag{1}$$

where $N$ is the number of particles, $\mathcal{N}_i^k$ is the set of indices of the $k$-nearest neighbors of $x_i$, and $w_j$ are the self-normalized importance weights of samples $x_j$.

3 Background: Action-based Policy Compression

In Reinforcement Learning (RL), an agent interacts with a CMP equipped with a given reward signal $R : \mathcal{S} \times \mathcal{A} \to \mathbb{R}$, augmenting the CMP into a Markov Decision Process (MDP, Puterman, 2014) $\mathcal{M}_R := \mathcal{M} \cup R$. The agent's goal is typically to find a high-dimensional parameter vector $\theta^* = \arg\max_{\theta \in \Theta} J_R(\theta)$ that maximizes the expected cumulative sum of rewards $J_R(\theta)$ obtained by playing the policy $\pi_\theta$. This process is notoriously sample-inefficient, partially due to a functional redundancy: vast, distinct regions of the parameter space $\Theta$ collapse into identical agent behaviors. As first noted by Tenedini et al. (2026), this inefficiency is severely exacerbated in Unsupervised Reinforcement Learning (URL, Laskin et al., 2021), where the agent learns a task-agnostic representation M via unsupervised pre-training on a reward-free CMP, then leverages it during supervised fine-tuning to rapidly optimize the newly revealed reward $R$.

The APC Framework. This redundancy in URL was first addressed directly via Action-based Policy Compression (APC, Tenedini et al., 2026): the high-dimensional policy parameter space $\Theta \subseteq \mathbb{R}^P$ is compressed into a compact, behaviorally structured latent space $\mathcal{Z} \subseteq \mathbb{R}^k$, with $k \ll P$.
By shifting the downstream search space from parameters to behaviors, APC enables efficient task adaptation. The framework operates in three sequential stages:

1. Policy Dataset Curation: To model the behavior manifold, APC generates a large pool of policies via random weight initialization. This naïve approach is computationally light, but prone to generating redundant, low-energy policies. To filter this pool into a diverse training dataset, APC employs a $k_n$-nearest-neighbor novelty search in the action space, selecting policies that produce diverse immediate action distributions across a set of $M$ collected states. Formally, APC keeps only the top percentile of policies according to the following score:

$$\rho_{APC}(\pi_\theta) = \frac{1}{k_n} \sum_{i \in \mathcal{N}^{k_n}} D_{KL}(\pi_\theta \| \pi_{\theta_i}). \tag{2}$$

2. Latent Behavior Compression: APC defines the objective of Policy Compression as finding a generative mapping $g^\star : \mathcal{Z} \to \Theta$, such that:

$$\forall \theta \in \Theta,\ \exists z \in \mathcal{Z} : \quad g^\star = \arg\min_{g} D_{KL}\big(d^{sa}_{\pi_\theta} \,\|\, d^{sa}_{\pi_z}\big), \tag{3}$$

where $d^{sa}$ denotes the state-action occupancy and $\pi_z$ the policy decoded from the latent code $z$. To achieve such an objective, APC employs an Autoencoder (AE, Hinton & Salakhutdinov, 2006), consisting of an encoder $f_\xi : \Theta \to \mathcal{Z}$ and a decoder $g_\zeta : \mathcal{Z} \to \Theta$, parametrized by $\xi$ and $\zeta$, respectively, trained to minimize the divergence between immediate actions of the original and reconstructed policy, defined as

$$\mathcal{L}_B(\xi, \zeta) = \mathbb{E}_{\theta \sim \mathcal{D}_\Theta}\Big[ D_{KL}\big(\pi_\theta \,\|\, \pi_{(g_\zeta \circ f_\xi)(\theta)}\big) \Big].$$

This serves as a computationally efficient proxy to Eq. 3.

3. Latent Behavior Optimization: Once the decoder $g_\zeta$ is trained and frozen, it serves as the task-agnostic representation M for fine-tuning. For a downstream task $R$, the agent searches for an optimal code $z^* = \arg\max_{z \in \mathcal{Z}} J_R(\theta = g_\zeta(z))$. Because $\mathcal{Z}$ is low-dimensional, APC employs Policy Gradient with Parameter-based Exploration (PGPE, Sehnke et al., 2008).
PGPE treats the decoder as a black box, avoiding costly Jacobian computations by sampling latent codes $z$ from a hyper-policy $\nu_\phi$ (e.g., a Gaussian distribution parameterized by $\phi = (\boldsymbol{\mu}, \boldsymbol{\sigma})$). The hyper-policy parameters $\phi$ are updated via a Monte Carlo gradient estimator over $N$ trajectories:

$$\hat{\nabla}_\phi J_R(\phi) = \frac{1}{N} \sum_{i=1}^{N} \nabla_\phi \log \nu_\phi(z_i)\, R(\tau_i). \tag{4}$$

Limitations: The Action-matching Bottleneck. While APC successfully reduces the dimensionality of the policy space, its reliance on immediate action-matching poses a fundamental limitation for long-horizon continuous control. Theoretically, APC justifies this proxy by relying on the fact that bounding immediate action divergence also bounds the true discrepancy between state occupancies. Metelli et al. (Prop. E.1, 2018) provide an upper bound to this quantity for $H$-horizon settings, namely $H \sup_{s \in \mathcal{S}} D_{KL}(\pi_\theta(\cdot \mid s) \| \pi_{\theta'}(\cdot \mid s))$. Because this bound grows linearly with the horizon $H$ and depends on the worst-case state, it can be very loose for long episodes. In practice, due to compounding errors across sequential decisions, policies with near-identical action distributions can yield drastically different state-occupancy measures. This myopic behavioral representation in both the dataset curation and behavior compression phases hinders APC's ability to retain rare but highly structured behaviors, severely impoverishing the topological diversity of the resulting latent manifold. Overcoming these limitations requires a paradigm shift: designing and optimizing behaviors based on long-horizon state-space coverage, rather than immediate actions.

4 Methodology: Occupancy-based Policy Compression

To overcome the inherent limitation of action-matching proxies, we introduce Occupancy-based Policy Compression (OPC). While retaining APC's three-stage pipeline, we introduce fundamental algorithmic changes to the dataset curation, manifold learning, and fine-tuning phases:

1. Policy Dataset Curation: Like APC, we initially generate a large candidate pool of policies $\Psi$ via random weight initialization.
However, rather than using a $k$-NN search in the action space, we propose an information-theoretic selection criterion. We aim to identify policies that maximize the coverage of the feasible state space. To do so, we score each candidate policy based on its contribution to the differential entropy of the mixture occupancy distribution $H(d_\Psi) = \frac{1}{M} \sum_{i=1}^{M} \rho_{OPC}(\theta_i)$, where each component is defined as:

$$\rho_{OPC}(\theta_i) = H(d_{\theta_i}) + D_{KL}(d_{\theta_i} \| d_\Psi), \quad \forall \theta_i \in \Psi. \tag{5}$$

Intuitively, this metric selects policies that both exhibit high individual exploration (high entropy $H(d_{\theta_i})$) and are statistically diverse from the population average (high divergence from the mixture $d_\Psi$). To make this computationally tractable for large populations, we approximate each occupancy distribution $d_{\theta_i}$ with a Gaussian Mixture Model (GMM) fitted on trajectories collected in a trajectory dataset $\mathcal{D}_\tau$. A complete derivation and tractable estimators of $\rho$ can be found in Appendix B. The final training dataset $\mathcal{D}_\Theta$ is curated by selecting the top-scoring policies from $\Psi$ according to $\rho$.

2. Latent Behavior Compression: In the compression phase, OPC redefines the fundamental theoretical objective of Policy Compression. The original APC framework aimed for strict, 1-to-1 state-action occupancy matching (Eq. 3). However, forcing a generative model to perfectly reproduce individual policies in isolation is overly restrictive and discourages the discovery of a smooth, continuous behavioral topology. Instead, we argue that the true goal of manifold learning should be to preserve the global state-space coverage of the dataset as a whole. Therefore, OPC shifts the objective from individual matching to mixture-occupancy matching.
We seek a generative mapping $g^\star$ and a corresponding population of latent codes $Z \in \mathcal{Z}^{|\Psi|}$ that minimizes the divergence between the mixture occupancy of the original population $\Psi$ and of the reconstructed population $g(Z) = \{ g(z) \mid z \in Z \}$:

$$\exists Z \in \mathcal{Z}^{|\Psi|} : \quad g^\star = \arg\min_{g} D_{KL}\big(d_\Psi \,\|\, d_{g(Z)}\big). \tag{6}$$

By relaxing the strict 1-to-1 reconstruction constraint, this objective allows the generative model to organize the latent space purely around global, task-agnostic behaviors. Furthermore, unlike APC, which falls back on an action-matching proxy to approximate its objective, we can optimize Eq. 6 directly. The autoencoder minimizes the empirical loss $\mathcal{L}_B(\xi, \zeta) = D_{KL}\big(d_{\mathcal{D}_\Theta} \,\|\, d_{\mathcal{D}_{\hat{\Theta}}}\big)$, where $\mathcal{D}_{\hat{\Theta}} = (g_\zeta \circ f_\xi)(\mathcal{D}_\Theta)$. We achieve this end-to-end by estimating the divergence using the differentiable non-parametric importance sampling $k$-NN estimator defined in Eq. 1, evaluated over the trajectory dataset $\mathcal{D}_\tau$. The core of this estimator relies on the unnormalized importance weight $w_j$ for a given trajectory $\tau_j = (s_0^j, a_0^j, \ldots, s_T^j, a_T^j)$, representing the density ratio between the reconstructed and original mixtures, computed as in Mutti et al. (2021):

$$w_j = \frac{\sum_{\hat{\theta} \in \mathcal{D}_{\hat{\Theta}}} p(\tau_j \mid \hat{\theta})}{\sum_{\theta \in \mathcal{D}_\Theta} p(\tau_j \mid \theta)} = \frac{\sum_{\hat{\theta} \in \mathcal{D}_{\hat{\Theta}}} \prod_{t=0}^{T} \pi_{\hat{\theta}}(a_t^j \mid s_t^j)}{\sum_{\theta \in \mathcal{D}_\Theta} \prod_{t=0}^{T} \pi_\theta(a_t^j \mid s_t^j)},$$

where $p(\tau \mid \theta) = \mu(s_0) \prod_{t=0}^{T} P(s_{t+1} \mid s_t, a_t)\, \pi_\theta(a_t \mid s_t)$ is the probability density of a trajectory. Crucially, because the environment dynamics $P$ and initial state distribution $\mu$ are identical for both the original and reconstructed policies, they cancel out entirely. This yields a formulation that depends only on the policies, rendering the loss function fully differentiable with respect to the Autoencoder parameters $\xi$ and $\zeta$. The final weights are obtained via self-normalization, $w_j \leftarrow w_j / \sum_{i=1}^{N} w_i$, and by assigning to each particle $s_i^j$ the weight $w_j$ of the trajectory $\tau_j$ that produced it.
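As an illustration of the weight computation described above, the following is a minimal Python sketch that evaluates the trajectory-level ratios in log space and then self-normalizes them. It is not the formulation of Appendix C, which is not reproduced here: the function names `logsumexp` and `trajectory_weights`, the nested-list layout of the per-step log-probabilities, and the use of the log-sum-exp trick are assumptions made for illustration. The sketch only shows the arithmetic; obtaining gradients with respect to the autoencoder parameters would additionally require an automatic-differentiation framework.

```python
import math


def logsumexp(values):
    """Numerically stable log(sum(exp(v) for v in values))."""
    m = max(values)
    if m == -math.inf:
        return -math.inf
    return m + math.log(sum(math.exp(v - m) for v in values))


def trajectory_weights(logp_orig, logp_recon):
    """Self-normalized trajectory importance weights (illustrative layout).

    logp_orig[m][j][t]  : log pi_theta_m(a_t^j | s_t^j) for original policy m
    logp_recon[m][j][t] : the same quantity for reconstructed policy m
    Returns one weight per trajectory j; the weights sum to 1.
    """
    n_traj = len(logp_orig[0])
    log_w = []
    for j in range(n_traj):
        # log prod_t pi(a_t | s_t) = sum_t log pi(a_t | s_t), one value per policy;
        # the dynamics and initial-state terms cancel in the ratio and are omitted.
        log_num = logsumexp([sum(policy[j]) for policy in logp_recon])
        log_den = logsumexp([sum(policy[j]) for policy in logp_orig])
        log_w.append(log_num - log_den)
    # Self-normalization, still in log space.
    log_z = logsumexp(log_w)
    return [math.exp(lw - log_z) for lw in log_w]


if __name__ == "__main__":
    # Made-up log-probabilities: 2 policies, 2 trajectories, 3 steps each.
    original = [
        [[-1.0, -1.0, -1.0], [-2.0, -2.0, -2.0]],
        [[-1.5, -1.5, -1.5], [-0.5, -0.5, -0.5]],
    ]
    # A perfect reconstruction leaves every trajectory equally weighted.
    print(trajectory_weights(original, original))
```

Working with sums of log-probabilities avoids the underflow that a direct product over $T+1$ steps would cause for long horizons, which is the usual motivation for a log-space formulation.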
A numerically stable formulation of the importance weights can be found in Appendix C. To manage the computational complexity of the $k$-NN estimator over large populations, we adopt a stochastic approach, computing the loss and updating the parameters over mini-batches of policies $\psi \subset \mathcal{D}_\Theta$ and their corresponding trajectories. The detailed algorithm for training the autoencoder is provided in Appendix D.

3. Latent Behavior Optimization: Similar to APC, we employ PGPE to optimize the latent code $z$ directly, avoiding analytical computation of the Jacobian of the decoder. To fully exploit the low dimensionality and topologically dense structure of the OPC latent space, we introduce a Latent Warm Start phase prior to running PGPE. We first sample a small set of latent codes. We then evaluate the reconstructed policies and use the best-performing latent code as a starting point for standard PGPE (Eq. 4). This coarse-to-fine strategy prevents PGPE from converging to poor local optima and accelerates fine-tuning by starting the gradient updates in a high-reward basin of attraction.
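The coarse-to-fine procedure described in the last stage can be sketched as follows, under stated assumptions. Here `evaluate` is a hypothetical stand-in for $J_R(g_\zeta(z))$, that is, decoding a latent code and rolling out the resulting policy; the uniform sampling box, learning rate, lower bound on $\sigma$, and the mean-return baseline are illustrative choices that do not come from the text (Eq. 4 itself uses no baseline, which corresponds to `baseline=0.0`).

```python
import random


def warm_start(evaluate, dim, n_candidates, low, high, rng):
    """Latent Warm Start: sample codes in a box and keep the best one."""
    candidates = [[rng.uniform(low, high) for _ in range(dim)]
                  for _ in range(n_candidates)]
    return max(candidates, key=evaluate)


def pgpe_gradient(mu, sigma, samples, returns, baseline=0.0):
    """Monte Carlo estimate of Eq. 4 for a diagonal Gaussian hyper-policy.

    Uses d/dmu log N(z; mu, sigma) = (z - mu) / sigma^2 and
         d/dsigma log N(z; mu, sigma) = ((z - mu)^2 - sigma^2) / sigma^3.
    """
    n = len(samples)
    g_mu = [0.0] * len(mu)
    g_sigma = [0.0] * len(mu)
    for z, ret in zip(samples, returns):
        adv = ret - baseline  # baseline=0.0 recovers Eq. 4 exactly
        for d in range(len(mu)):
            diff = z[d] - mu[d]
            g_mu[d] += diff / sigma[d] ** 2 * adv / n
            g_sigma[d] += (diff ** 2 - sigma[d] ** 2) / sigma[d] ** 3 * adv / n
    return g_mu, g_sigma


def ws_pgpe(evaluate, dim, iters=200, n_samples=20, lr=0.02,
            n_candidates=50, sigma0=0.3, sigma_min=0.05, seed=0):
    """Warm start followed by plain gradient ascent on (mu, sigma)."""
    rng = random.Random(seed)
    mu = warm_start(evaluate, dim, n_candidates, -2.0, 2.0, rng)
    sigma = [sigma0] * dim
    for _ in range(iters):
        samples = [[rng.gauss(mu[d], sigma[d]) for d in range(dim)]
                   for _ in range(n_samples)]
        returns = [evaluate(z) for z in samples]
        # Assumption: a mean-return baseline for variance reduction.
        baseline = sum(returns) / len(returns)
        g_mu, g_sigma = pgpe_gradient(mu, sigma, samples, returns, baseline)
        mu = [m + lr * g for m, g in zip(mu, g_mu)]
        sigma = [max(sigma_min, s + lr * g) for s, g in zip(sigma, g_sigma)]
    return mu, sigma


if __name__ == "__main__":
    # Toy objective standing in for the return of the decoded policy.
    def toy_return(z):
        return -sum((x - 1.0) ** 2 for x in z)

    mu, sigma = ws_pgpe(toy_return, dim=2)
    print("final mean:", mu, "final std:", sigma)
```

Because the decoder is only ever called inside `evaluate`, the sketch treats it as a black box, in line with the motivation given for PGPE; the warm start simply replaces an arbitrary initial mean with the best of a handful of sampled codes.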
In the following section, we empirically validate how these structural enhancements translate to behaviorally richer learned latent manifolds.

Figure 1: Comparison of APC and OPC thresholding in the RC environment: reward distribution in the curated datasets (top row, frequency is on a log scale) and score distribution comparison (bottom row, scores are min-max normalized). The vertical dashed line marks the 5% cut-off. Top-row panels compare All (100%), Top 5% APC, and Top 5% OPC for (a) speed, (b) clockwise, (c) c-clockwise, and (d) radial. Bottom-row panels plot reward value against min-max normalized score for (e) speed, APC; (f) speed, OPC; (g) clockwise, APC; (h) radial, OPC.

5 Experiments

In this section, we empirically evaluate the proposed framework. We first outline the experimental setup and domains, then systematically address the three research questions introduced in Section 1.

Experimental Settings. We select continuous control domains that are challenging yet interpretable to clearly illustrate the features of the learned behavioral manifold. The first is Mountain Car Continuous (MC, Moore, 1990). To evaluate the quality of the latent space, we define four downstream tasks: standard and left place the goal state on the right and left hill, respectively; speed and height incentivize the car to maintain high velocity and elevation ($z$-coordinate), respectively, without terminating the episode. We also consider two MuJoCo environments (Todorov et al., 2012).
In Reacher (RC), a two-jointed robotic arm moving in a 2D plane, we remove target observations and define four tasks based on fingertip kinematics: speed promotes high linear velocity; clockwise and c-clockwise promote sustained rotation; and radial promotes fast arm extension and retraction. Finally, in Hopper (HP), we evaluate locomotion via four downstream tasks: forward, backward, and standstill reward the agent for positive, negative, and zero velocity along the $x$-axis, respectively, while standard corresponds to the original Gymnasium composite reward. For all experiments, we generate a dataset of 50,000 policies and retain the top 5% most informative ones. Policies are fully-connected neural networks with $\approx 10^3$ parameters. Full experimental details are provided in Appendix E.

5.1 Policy Dataset Curation

Q1: On the role of curating policies for improved datasets. To answer Q1, we analyze the score distributions assigned to the policies and the reward distributions of the resulting datasets obtained during the curation phase. We compare the reward distribution of the original random dataset (100%) against a 5% thresholding according to both scores $\rho_{APC}$ (Eq. 2) and $\rho_{OPC}$ (Eq. 5). We also map the min–max normalized scores against the rewards across all tasks and environments. The core results for the RC environment are presented in Fig. 1, while the extended experimental results for all other domains are detailed in Appendix F.1.

As illustrated in Fig. 1a, 1b, 1c, 1d, the random initialization process naturally yields a heavy-tailed distribution where high-reward behaviors are exceedingly rare; this phenomenon is exacerbated in complex control tasks such as Hopper. The APC novelty search, relying strictly on immediate action differences, frequently discards these rare behavioral modes. In contrast, the OPC curation successfully isolates and preserves these critical outlier policies, maintaining a significantly heavier tail in the high-reward regions across the evaluated tasks. This effect is explained by the score distributions: while scores $\rho_{APC}$ (Fig. 1e, 1g) are generally uncorrelated with the actual task rewards or global behavioral uniqueness, scores $\rho_{OPC}$ (Fig. 1f, 1h) are highly structured, ensuring that the most functionally unique policies consistently fall within the top percentile of the scoring distribution.

Figure 2: Ablation study comparing latent space topologies in the HP environment. Rows represent reward functions (Standard, Forward), while columns represent different score/loss combinations (APC score & APC loss, APC score & OPC loss, OPC score & APC loss, OPC score & OPC loss). Lighter and darker colors indicate higher and lower returns of the decoded policy.

5.2 Latent Behavior Compression

Q2: On the role of OPC in the quality of latent spaces. To answer Q2, we perform a qualitative visual inspection of the latent spaces generated by both APC and OPC pipelines. We conduct an ablation study to assess the effects of the occupancy-based components (uniqueness scores and the compression objective). We focus on the HP environment, as it offers a more complex locomotion task. The full ablation study is reported in Appendix F.2.

In Fig. 2, we show 2D latent embeddings colored by task performance. We sample a uniform grid in latent space and evaluate the reconstructed policies. The fully action-based baseline (APC score & APC loss) fails to recover a meaningful topological structure, resulting in an unstructured space dominated by low-performing behaviors. Evaluating the components in isolation reveals their individual limitations. Introducing only the occupancy-based objective (APC score & OPC loss) yields a smoother geometric gradient, but the lack of diverse high-performing policies in the APC-curated dataset restricts the presence of high-reward regions.
Conversely, using the occupancy-based metric for curation with the action-based objective (OPC score & APC loss) successfully introduces high-reward policies into the latent space, but the action-matching loss scatters them erratically, failing to form cohesive clusters. The full occupancy-based pipeline (OPC score & OPC loss) effectively synergizes both components. The latent space is organized into a continuous, traversable geometry, with high-reward policies densely clustered in cohesive, structured regions.

5.3 Latent Behavior Optimization

Q3: On the role of OPC for latent optimization. To answer Q3, we evaluate the downstream optimization performance. Following the experimental setting in Tenedini et al. (2026), we compare the performance of PGPE (Sehnke et al., 2008) operating in the parameter space, in the latent space derived from the APC pipeline (A-PGPE), and in the latent space derived from our OPC pipeline (O-PGPE) with various baselines, including DDPG (Lillicrap et al., 2016), TD3 (Fujimoto et al., 2018), and SAC (Haarnoja et al., 2018). We perform the optimization over all tasks and environments, and across several latent dimensions. The results can be observed in Fig. 3. For each task, we plot only the best curve for each family of algorithms: A-PGPE, O-PGPE, WS-O-PGPE, and the baselines. We also show the best-performing policy in the training dataset.

Figure 3: Performance comparison in MC, RC, and HP across different tasks. We report the average and 95% confidence interval over 10 runs of the best-performing algorithms for each task. Legend: O-PGPE 2D, O-PGPE 8D, WS-O-PGPE 3D, WS-O-PGPE 5D, WS-O-PGPE 8D, Best in Dataset, DDPG, PPO, SAC, PGPE, A-PGPE 3D, A-PGPE 8D. Panels: (a) MC, standard; (b) MC, left; (c) MC, speed; (d) MC, height; (e) RC, speed; (f) RC, clockwise; (g) RC, c-clockwise; (h) RC, radial; (i) HP, forward; (j) HP, backward; (k) HP, standstill; (l) HP, standard.

The complete collection of training curves for all algorithms can be found in Appendix F.3.

As shown in Fig. 3, optimizing over the occupancy-matched latent space (O-PGPE) yields sample efficiency and final returns comparable to those of standard DRL baselines and action-matched manifolds (A-PGPE). Incorporating the Latent Warm Start (WS-O-PGPE) often accelerates the initial learning phase by initializing the agent in a high-reward region. For example, in MC, using Warm Start seemingly transforms the problem into a zero-shot optimization, outperforming all other methods (Fig. 3a, 3b, 3c), except on the task height (Fig. 3d).

The main advantage of our framework, however, emerges in complex environments, such as HP. In this domain, the action-matching proxy of APC fails to learn a behaviorally meaningful latent representation, leading to collapsed fine-tuning performance. Conversely, OPC successfully compresses high-performing behaviors in the latent manifold, which it later recovers to reliably compete with standard baselines. Notably, in the complex locomotion task backward, OPC demonstrates an impressive capacity to generalize, discovering latent behaviors that achieve returns surpassing even the best-performing policies present in the training dataset. This task also highlights a trade-off of Latent Warm Start: coarse initialization can occasionally trap the optimization in local optima.

6 Conclusion

In this paper, we presented an enhanced framework for Latent Behavior Compression that fundamentally shifts the characterization of policy behaviors from immediate-action proxies to global state-visitation distributions. By combining an information-theoretic uniqueness metric for dataset curation with a differentiable mixture-occupancy matching objective, our pipeline successfully captures complex, long-horizon dynamics.
Empirically, we demonstrated that it preserves critical outlier behaviors, constructs a behaviorally meaningful latent manifold, and enables highly sample-efficient adaptation. Crucially, optimizing within this occupancy-matched space enables agents to solve complex continuous control tasks that previous action-based compression methods fail to solve.

Limitations and Future Directions. While our framework establishes a robust foundation for state-occupancy manifold learning, its maximum asymptotic performance is ultimately limited by the behavioral diversity of the pre-training dataset. Future research could explore replacing random policy initialization with active, task-agnostic exploration frameworks, such as maximum-entropy or intrinsic-motivation algorithms (e.g., Eysenbach et al., 2018; Mutti et al., 2021). Furthermore, both the non-parametric k-NN estimator and the zeroth-order PGPE optimizer can exhibit high variance, and PGPE, being gradient-free, does not exploit the differentiability of the latent manifold. Consequently, employing variance-reduction techniques or exploring alternative latent optimization strategies beyond PGPE, such as Bayesian Optimization or gradient-aware methods like Latent-PPO (Han, 2026), could unlock even greater downstream adaptation capabilities within these highly compressed behavioral spaces.

References

Joshua Achiam, Harrison Edwards, Dario Amodei, and Pieter Abbeel. Variational option discovery algorithms. arXiv preprint arXiv:1807.10299, 2018.

Rishabh Agarwal, Max Schwarzer, Pablo Samuel Castro, Aaron C Courville, and Marc Bellemare. Reincarnating reinforcement learning: Reusing prior computation to accelerate progress. Advances in Neural Information Processing Systems, 35:28955–28971, 2022.

Jiří Ajgl and Miroslav Šimandl. Differential entropy estimation by particles. IFAC Proceedings Volumes, 44(1):11991–11996, 2011. ISSN 1474-6670. 18th IFAC World Congress.

Martin Arjovsky, Soumith Chintala, and Léon Bottou. Wasserstein generative adversarial networks. In International Conference on Machine Learning, pp. 214–223. PMLR, 2017.

Oscar Chang, Robert Kwiatkowski, Siyuan Chen, and Hod Lipson. Agent embeddings: A latent representation for pole-balancing networks. In International Conference on Autonomous Agents and MultiAgent Systems, pp. 656–664, 2019.

Yan Duan, Xi Chen, Rein Houthooft, John Schulman, and Pieter Abbeel. Benchmarking deep reinforcement learning for continuous control. In International Conference on Machine Learning, 2016.

Benjamin Eysenbach, Abhishek Gupta, Julian Ibarz, and Sergey Levine. Diversity is all you need: Learning skills without a reward function. In International Conference on Learning Representations, 2018.

Francesco Faccio, Vincent Herrmann, Aditya Ramesh, Louis Kirsch, and Jürgen Schmidhuber. Goal-conditioned generators of deep policies. In AAAI Conference on Artificial Intelligence, volume 37, pp. 7503–7511, 2023.

Scott Fujimoto, Herke van Hoof, and David Meger. Addressing function approximation error in actor-critic methods. In International Conference on Machine Learning, pp. 1587–1596, 2018.

Ian Goodfellow, Jean Pouget-Abadie, Mehdi Mirza, Bing Xu, David Warde-Farley, Sherjil Ozair, Aaron Courville, and Yoshua Bengio. Generative adversarial networks. Communications of the ACM, 63(11):139–144, 2020.

Karol Gregor, Danilo Rezende, and Daan Wierstra. Variational intrinsic control. Workshop @ International Conference on Learning Representations, 2017.

Ishaan Gulrajani, Faruk Ahmed, Martin Arjovsky, Vincent Dumoulin, and Aaron C Courville. Improved training of Wasserstein GANs. Advances in Neural Information Processing Systems, 30, 2017.

Tuomas Haarnoja, Aurick Zhou, Pieter Abbeel, and Sergey Levine. Soft actor-critic: Off-policy maximum entropy deep reinforcement learning with a stochastic actor. In International Conference on Machine Learning, pp. 1861–1870, 2018.

Xiang Han. LG-H-PPO: offline hierarchical PPO for robot path planning on a latent graph. Frontiers in Robotics and AI, 12, 2026. ISSN 2296-9144.

Steven Hansen, Will Dabney, Andre Barreto, David Warde-Farley, Tom Van de Wiele, and Volodymyr Mnih. Fast task inference with variational intrinsic successor features. In International Conference on Learning Representations, 2019.

Shashank Hegde, Sumeet Batra, KR Zentner, and Gaurav Sukhatme. Generating behaviorally diverse policies with latent diffusion models. Advances in Neural Information Processing Systems, 36:7541–7554, 2023.

Geoffrey E Hinton and Ruslan R Salakhutdinov. Reducing the dimensionality of data with neural networks. Science, 313(5786):504–507, 2006.

Jonathan Ho and Stefano Ermon. Generative adversarial imitation learning. Advances in Neural Information Processing Systems, 29, 2016.

Sham M Kakade. A natural policy gradient. Advances in Neural Information Processing Systems, 14, 2001.

Věra Kůrková and Paul C. Kainen. Functionally equivalent feedforward neural networks. Neural Computation, 6(3):543–558, 1994.

Michael Laskin, Denis Yarats, Hao Liu, Kimin Lee, Albert Zhan, Kevin Lu, Catherine Cang, Lerrel Pinto, and Pieter Abbeel. URLB: Unsupervised reinforcement learning benchmark. 2021.

Beiming Li, Sergio Rozada, and Alejandro Ribeiro. Learning policy representations for steerable behavior synthesis. arXiv preprint, 2026.

Timothy P Lillicrap, Jonathan J Hunt, Alexander Pritzel, Nicolas Heess, Tom Erez, Yuval Tassa, David Silver, and Daan Wierstra. Continuous control with deep reinforcement learning. In International Conference on Learning Representations, 2016.

Alberto Maria Metelli, Matteo Papini, Francesco Faccio, and Marcello Restelli. Policy optimization via importance sampling. Advances in Neural Information Processing Systems, 31, 2018.

Léo Meynent, Ivan Melev, Konstantin Schürholt, Goeran Kauermann, and Damian Borth. Structure is not enough: Leveraging behavior for neural network weight reconstruction. Workshop @ International Conference on Learning Representations, 2025.

Atsushi Miyamae, Yuichi Nagata, Isao Ono, and Shigenobu Kobayashi. Natural policy gradient methods with parameter-based exploration for control tasks. Advances in Neural Information Processing Systems, 23, 2010.

Alessandro Montenegro, Marco Mussi, Alberto Maria Metelli, and Matteo Papini. Learning optimal deterministic policies with stochastic policy gradients. In International Conference on Machine Learning, pp. 36160–36211, 2024.

Andrew William Moore. Efficient memory-based learning for robot control. Technical report, University of Cambridge, 1990.

Mirco Mutti, Lorenzo Pratissoli, and Marcello Restelli. Task-agnostic exploration via policy gradient of a non-parametric state entropy estimate. Proceedings of the AAAI Conference on Artificial Intelligence, 35(10):9028–9036, 2021. DOI: 10.1609/aaai.v35i10.17091.

Mirco Mutti, Stefano Del Col, and Marcello Restelli. Reward-free policy space compression for reinforcement learning. In International Conference on Artificial Intelligence and Statistics, pp. 3187–3203. PMLR, 2022.

William Peebles, Ilija Radosavovic, Tim Brooks, Alexei A Efros, and Jitendra Malik. Learning to learn with generative models of neural network checkpoints. arXiv preprint arXiv:2209.12892, 2022.

Jan Peters and Stefan Schaal. Reinforcement learning of motor skills with policy gradients. Neural Networks, 21(4):682–697, 2008.

Martin L Puterman. Markov decision processes: discrete stochastic dynamic programming. John Wiley & Sons, 2014.

Nemanja Rakicevic, Antoine Cully, and Petar Kormushev. Policy manifold search: Exploring the manifold hypothesis for diversity-based neuroevolution. In Genetic and Evolutionary Computation Conference, pp. 901–909, 2021.

Thomas Rückstiess, Frank Sehnke, Tom Schaul, Daan Wierstra, Yi Sun, and Jürgen Schmidhuber. Exploring parameter space in reinforcement learning. Paladyn, 1(1):14–24, 2010.

Andrei A. Rusu, Sergio Gomez Colmenarejo, Caglar Gulcehre, Guillaume Desjardins, James Kirkpatrick, Razvan Pascanu, Volodymyr Mnih, Koray Kavukcuoglu, and Raia Hadsell. Policy distillation, 2016.

John Schulman, Filip Wolski, Prafulla Dhariwal, Alec Radford, and Oleg Klimov. Proximal policy optimization algorithms. arXiv preprint arXiv:1707.06347, 2017.

Konstantin Schürholt, Dimche Kostadinov, and Damian Borth. Self-supervised representation learning on neural network weights for model characteristic prediction. Advances in Neural Information Processing Systems, 34:16481–16493, 2021.

Konstantin Schürholt, Boris Knyazev, Xavier Giró-i Nieto, and Damian Borth. Hyper-representations as generative models: Sampling unseen neural network weights. Advances in Neural Information Processing Systems, 35:27906–27920, 2022.

Konstantin Schürholt, Michael W Mahoney, and Damian Borth. Towards scalable and versatile weight space learning. In International Conference on Machine Learning, pp. 43947–43966, 2024.

Frank Sehnke, Christian Osendorfer, Thomas Rückstieß, Alex Graves, Jan Peters, and Jürgen Schmidhuber. Policy gradients with parameter-based exploration for control. In International Conference on Artificial Neural Networks, pp. 387–396. Springer, 2008.

Davide Tenedini, Riccardo Zamboni, Mirco Mutti, and Marcello Restelli. From parameters to behaviors: Unsupervised compression of the policy space. In International Conference on Learning Representations, 2026.

Emanuel Todorov, Tom Erez, and Yuval Tassa. MuJoCo: A physics engine for model-based control. In International Conference on Intelligent Robots and Systems, pp. 5026–5033, 2012.

Riccardo Zamboni, Mirco Mutti, and Marcello Restelli. Towards principled unsupervised multi-agent reinforcement learning. Advances in Neural Information Processing Systems, 2025.

Ming Zhang, Yawei Wang, Xiaoteng Ma, Li Xia, Jun Yang, Zhiheng Li, and Xiu Li. Wasserstein distance guided adversarial imitation learning with reward shape exploration. In 2020 IEEE 9th Data Driven Control and Learning Systems Conference (DDCLS), pp. 1165–1170, 2020. DOI: 10.1109/DDCLS49620.2020.9275169.

Supplementary Materials

The following content was not necessarily subject to peer review.

A Extended Related Work

Our framework sits at the intersection of unsupervised behavior discovery, weight-space learning, and occupancy matching, unifying these domains to learn a functionally grounded, low-dimensional policy manifold.

Unsupervised RL and Behavior Discovery. A key challenge in Deep Reinforcement Learning is the dependency on extrinsic rewards, which limits an agent's ability to generalize. To address this, Unsupervised RL (URL) methods seek to learn useful behaviors before a specific goal is defined. Information-theoretic frameworks like DIAYN (Eysenbach et al., 2018), as well as other skill-discovery methods (Gregor et al., 2017; Achiam et al., 2018; Hansen et al., 2019), aim to discover diverse skills by maximizing the mutual information between states and latent variables. Similarly, MEPOL (Mutti et al., 2021) uses a maximum-entropy objective to drive an agent toward maximal state-space coverage, while more recently, TRPE (Zamboni et al., 2025) uses a similar approach to enforce behavioral diversity in multi-agent systems. While these methods successfully identify robust task-agnostic initializations, they typically revert to operating in the original high-dimensional parameter space once supervised fine-tuning begins. Our framework, instead, identifies a continuous global behavioral manifold that inherently facilitates and accelerates this downstream adaptation process.

Policy Compression and Weight Space Learning.
The goal of learning a latent representation of neural network parameters connects our approach to the field of Weight Space Learning (WSL). In RL, recent generative models have explored task-specific parameter generation conditioned on goals (Faccio et al., 2023) or performance checkpoints (Peebles et al., 2022). In supervised learning, hyper-representations (Schürholt et al., 2021; 2022; 2024) aim to build task-agnostic embeddings from a "zoo" of trained models. However, standard WSL methods and traditional policy compression techniques (Rusu et al., 2016) face a critical hurdle: the vast number of parameter-space symmetries (e.g., neuron permutations and scaling) inherent in neural architectures (Kůrková & Kainen, 1994). To circumvent this, recent works in supervised learning supplement standard parameter reconstruction losses with behavioral output matching (Meynent et al., 2025). In Unsupervised Reinforcement Learning, other approaches tackle policy space simplification mathematically, such as reducing the cardinality of the policy space (Mutti et al., 2022). However, these strict constraints often lead to NP-hard optimization problems. Our approach sidesteps permutation symmetries entirely. Building on the Latent Behavior Compression framework (Tenedini et al., 2026), we use a functional compression metric rather than parameter reconstruction, thereby allowing the generative model to naturally merge parameterizations that differ numerically yet are functionally identical.

Policy Manifolds and Quality Diversity. The existence of low-dimensional manifolds embedded within policy parameter spaces has been hypothesized (Rakicevic et al., 2021) and explored using Variational Autoencoders (VAEs) to analyze pre-trained expert embeddings (Chang et al., 2019). Similar architectures have been applied in Quality Diversity to improve the sample efficiency of diversity-based search (Rakicevic et al., 2021) or to distill large policy archives into generative models (Hegde et al., 2023). Notably, these methods rely on parameter-reconstruction losses, which fundamentally restrict their compression ratios (e.g., up to 19:1 in Hegde et al. (2023)). By optimizing a behavioral objective directly, our unsupervised pipeline achieves substantially greater compression while providing a topologically smooth space for downstream optimization.

Occupancy Matching and Policy Optimization. Comparing behaviors formally often involves analyzing state occupancy distributions, a concept recently used to learn steerable latent policy representations via expected state-action features (Li et al., 2026). Comparing behaviors is also the foundation of Imitation Learning (IL), where methods like GAIL (Ho & Ermon, 2016) and Wasserstein-GANs (Goodfellow et al., 2020; Arjovsky et al., 2017; Gulrajani et al., 2017; Zhang et al., 2020) minimize the divergence between an agent's behavior and a target expert distribution. Our contribution scales these concepts from single-expert matching to population-level alignment by developing a mixture-occupancy matching objective. Once this manifold is learned, we optimize downstream tasks using Policy Gradient with Parameter-based Exploration (PGPE) (Sehnke et al., 2008; Rückstiess et al., 2010; Miyamae et al., 2010; Montenegro et al., 2024). While zeroth-order methods like PGPE and first-order methods (Peters & Schaal, 2008; Lillicrap et al., 2016; Kakade, 2001; Schulman et al., 2017) traditionally struggle to scale in highly redundant parameter spaces, executing PGPE within our highly compressed, behaviorally organized latent space resolves these scalability bottlenecks, avoiding the complex decoder Jacobian calculations required by prior manifold optimization approaches (Rakicevic et al., 2021).
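To make the last point concrete, the following is a minimal sketch of symmetric-sampling PGPE run directly on a latent vector, where the decoder is only ever called as a black box and never differentiated. Everything specific here is an assumption for illustration: `make_linear_decoder` is a hypothetical stand-in for the learned mapping g, the quadratic `toy_return` replaces an environment rollout, the step sizes are arbitrary, and plain gradient ascent is used rather than the optimizer used in the experiments.

```python
import numpy as np


def make_linear_decoder(latent_dim, param_dim, seed=0):
    """Hypothetical stand-in for a learned decoder g: Z -> Theta (fixed linear map)."""
    rng = np.random.default_rng(seed)
    # Reduced QR yields a (param_dim, latent_dim) matrix with orthonormal columns.
    basis, _ = np.linalg.qr(rng.normal(size=(param_dim, latent_dim)))
    return lambda z: basis @ z


def pgpe_maximize(episode_return, latent_dim, iters=300, pairs=4,
                  lr_mu=0.1, lr_sigma=0.02, sigma0=1.0, seed=0):
    """Symmetric-sampling PGPE over a Gaussian search distribution N(mu, sigma^2).

    Only evaluations of `episode_return` are needed, so no decoder Jacobian
    is ever computed. Step sizes are illustrative assumptions.
    """
    rng = np.random.default_rng(seed)
    mu = np.zeros(latent_dim)
    sigma = np.full(latent_dim, sigma0)
    for _ in range(iters):
        # Mirrored perturbations: 2 * pairs evaluations per iteration.
        eps = rng.normal(size=(pairs, latent_dim)) * sigma
        r_plus = np.array([episode_return(mu + e) for e in eps])
        r_minus = np.array([episode_return(mu - e) for e in eps])
        baseline = 0.5 * (r_plus.mean() + r_minus.mean())
        # Center update: return difference of each mirrored pair times its perturbation.
        grad_mu = (0.5 * (r_plus - r_minus)[:, None] * eps).mean(axis=0)
        # Spread update: pair-average return (minus baseline) times (eps^2 - sigma^2) / sigma.
        r_avg = 0.5 * (r_plus + r_minus)
        grad_sigma = ((r_avg - baseline)[:, None]
                      * (eps ** 2 - sigma ** 2) / sigma).mean(axis=0)
        mu = mu + lr_mu * grad_mu
        sigma = np.maximum(sigma + lr_sigma * grad_sigma, 1e-3)  # keep sigma positive
    return mu, sigma


if __name__ == "__main__":
    decode = make_linear_decoder(latent_dim=2, param_dim=50)
    z_star = np.array([1.0, -0.5])          # hypothetical best latent code
    theta_star = decode(z_star)
    toy_return = lambda z: -float(np.sum((decode(z) - theta_star) ** 2))
    mu, sigma = pgpe_maximize(toy_return, latent_dim=2)
    print("distance to optimum:", np.linalg.norm(mu - z_star))
```

With `pairs=4` each iteration evaluates eight candidates, and the cost of an update depends on the latent dimension rather than on the number of policy parameters.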
B Policy Dataset Curation: Derivations and Estimators

This section provides the formal derivations and practical estimation details for the information-theoretic uniqueness score introduced in Section 4.

B.1 Derivation of the Uniqueness Score

Let $M = |\Psi|$ denote the number of policies in the population. Let $d_i(s)$ for $i = 1, \dots, M$ be the state occupancy distribution induced by the $i$-th policy $\pi_i \in \Psi$. We consider the uniform mixture over these occupancy distributions by assuming that each policy is selected with equal probability, $p(i) = \frac{1}{M}$. This induces a joint distribution over states and policies given by

\[ p(s, i) = p(s \mid i)\, p(i) = \frac{1}{M} d_i(s), \]

from which the marginal state distribution, corresponding to the mixture occupancy $d_\Psi$, is obtained as

\[ d_\Psi(s) = \sum_{i=1}^{M} p(s, i) = \frac{1}{M} \sum_{i=1}^{M} d_i(s). \]

Let $H(X)$ denote the differential entropy of a random variable $X$. Starting from the chain rule $H(X, Y) = H(X) + H(Y \mid X)$ and using the symmetry of the joint differential entropy, we can write:

\[ H(X) + H(Y \mid X) = H(Y) + H(X \mid Y), \]
\[ H(X) = H(X \mid Y) + \mathrm{MI}(X, Y), \]

where $\mathrm{MI}(X, Y) = H(Y) - H(Y \mid X)$ denotes the mutual information. We now apply this to the random variables $S \sim d_\Psi(s)$ and $\pi \sim p(i)$. The conditional entropy becomes:

\[ H(S \mid \pi) = -\sum_{i=1}^{M} \int_{\mathcal{S}} p(s, i) \log p(s \mid i)\, ds = -\sum_{i=1}^{M} \int_{\mathcal{S}} \frac{1}{M} d_i(s) \log d_i(s)\, ds = \frac{1}{M} \sum_{i=1}^{M} H(d_i). \]

The mutual information can be expanded as:

\[ \mathrm{MI}(S, \pi) = \sum_{i=1}^{M} \int_{\mathcal{S}} p(s, i) \log \frac{p(s, i)}{d_\Psi(s)\, p(i)}\, ds = \sum_{i=1}^{M} \int_{\mathcal{S}} \frac{1}{M} d_i(s) \log \frac{\frac{1}{M} d_i(s)}{d_\Psi(s) \cdot \frac{1}{M}}\, ds = \frac{1}{M} \sum_{i=1}^{M} \int_{\mathcal{S}} d_i(s) \log \frac{d_i(s)}{d_\Psi(s)}\, ds = \frac{1}{M} \sum_{i=1}^{M} D_{\mathrm{KL}}(d_i \,\|\, d_\Psi). \]

Combining the two terms, we obtain the entropy decomposition of the mixture occupancy distribution:

\[ H(d_\Psi) = \frac{1}{M} \sum_{i=1}^{M} \left[ H(d_i) + D_{\mathrm{KL}}(d_i \,\|\, d_\Psi) \right]. \]
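As a quick sanity check of this decomposition, the sketch below verifies its discrete analogue numerically. It is only an illustration under stated assumptions: the occupancies are random categorical distributions over a small finite state set (not estimated occupancies from rollouts), and the helper names `entropy`, `kl`, and `per_policy_terms` are hypothetical.

```python
import numpy as np


def entropy(p):
    """Shannon entropy (in nats) of a categorical distribution."""
    p = p[p > 0]
    return -np.sum(p * np.log(p))


def kl(p, q):
    """KL divergence D_KL(p || q) for categorical distributions with supp(p) in supp(q)."""
    mask = p > 0
    return np.sum(p[mask] * np.log(p[mask] / q[mask]))


def per_policy_terms(occupancies):
    """Return the summands H(d_i) + D_KL(d_i || d_Psi) and the uniform mixture d_Psi.

    `occupancies` has shape (M, K): one categorical occupancy per policy.
    """
    mixture = occupancies.mean(axis=0)
    terms = np.array([entropy(d) + kl(d, mixture) for d in occupancies])
    return terms, mixture


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    occupancies = rng.dirichlet(np.ones(6), size=5)  # M = 5 policies, K = 6 states
    terms, mixture = per_policy_terms(occupancies)
    # Both numbers agree up to floating-point error.
    print(entropy(mixture), terms.mean())
```

Each summand equals the cross-entropy $-\sum_s d_i(s) \log d_\Psi(s)$, which is why averaging the summands over the population recovers the mixture entropy exactly.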
The term inside the summation of the entropy decomposition, $H(d_i) + D_{\mathrm{KL}}(d_i \,\|\, d_\Psi)$, represents the marginal contribution of the $i$-th policy to the total entropy of the mixture, which we use as our uniqueness score $\rho(\theta_i)$.

B.2 Tractable Estimation via GMMs

Because the analytical form of the occupancy $d_{\theta_i}$ is unknown, we estimate the scores using trajectory data. We first fit Gaussian Mixture Models (GMMs) to the temporally downsampled trajectories of each policy, yielding the continuous density estimators $\hat{d}_{\theta_i}(s)$ for individual policies and $\hat{d}_\Psi(s)$ for the global mixture. Using a set of $N$ particles $\mathcal{D}_{\theta_i} = \{s_j\}_{j=1}^{N}$ sampled from the individual density estimate $\hat{d}_{\theta_i}$, we apply Monte Carlo integration to approximate the differential entropy and the KL divergence:

\[ \hat{H}(d_{\theta_i}) = -\frac{1}{N} \sum_{j=1}^{N} \log \hat{d}_{\theta_i}(s_j), \qquad \hat{D}_{\mathrm{KL}}(d_{\theta_i} \,\|\, d_\Psi) = \frac{1}{N} \sum_{j=1}^{N} \left[ \log \hat{d}_{\theta_i}(s_j) - \log \hat{d}_\Psi(s_j) \right]. \]

B.3 KL Divergence Bound and the Saturation Effect

When calculating $\hat{D}_{\mathrm{KL}}$, the estimation of the mixture occupancy distribution $\hat{d}_\Psi$ must be handled carefully to avoid a saturation effect that degrades the discriminative power of the scores as the population grows. This is due to a hard upper bound on the KL divergence between a single component and its mixture.

Proposition 1. Let $m(x) = \sum_{j=1}^{M} w_j p_j(x)$ be a mixture of $M$ probability density functions with weights $w_j \geq 0$ and $\sum_j w_j = 1$. For any component $i$ with $w_i > 0$, the KL divergence is bounded:

\[ D_{\mathrm{KL}}(p_i \,\|\, m) \leq -\log w_i. \]

Proof. By the definition of the mixture, $m(x) = w_i p_i(x) + \sum_{j \neq i} w_j p_j(x)$. Because density functions and weights are non-negative, $m(x) \geq w_i p_i(x)$, which implies

\[ \frac{p_i(x)}{m(x)} \leq \frac{1}{w_i}. \]

Since the logarithm is monotonically increasing, $\log \frac{p_i(x)}{m(x)} \leq -\log w_i$. The KL divergence is the expectation of this log-ratio over $p_i$:

\[ D_{\mathrm{KL}}(p_i \,\|\, m) = \int p_i(x) \log \frac{p_i(x)}{m(x)}\, dx \leq \int p_i(x) (-\log w_i)\, dx. \]

Since $-\log w_i$ is a constant and $p_i(x)$ integrates to 1, $D_{\mathrm{KL}}(p_i \,\|\, m) \leq -\log w_i$. ■

In our uniform mixture, $w_i = 1/M$, yielding the bound $D_{\mathrm{KL}}(d_{\theta_i} \,\|\, d_\Psi) \leq \log M$. If $\hat{d}_\Psi$ is estimated using exhaustive aggregation across a massive population $\Psi$, the KL term becomes negligible relative to the scale of the state space and fails to capture uniqueness. To preserve metric sensitivity, we restrict the calculation of the global reference $\hat{d}_\Psi$ to a representative subset of the population.

C Numerically Stable Importance Weight Computation

As introduced in Section 4, the computation of the importance weights follows the density-ratio methodology of Mutti et al. (2021), adapted here to accommodate mixtures of policies. We associate each particle $s_t^j$ with the importance weight $w_j$ calculated for the full trajectory $\tau_j$ that produced it. Because the non-normalized weight $w_j$ involves the product of many per-step action likelihoods, which are typically small, both the numerator and the denominator can quickly vanish to zero over long horizons. To prevent arithmetic underflow and a loss of numerical precision, we transform the product of probabilities into a sum of log-probabilities and compute the weights entirely in log-space using the Log-Sum-Exp (LSE) trick.

Let $\ell_{i,j}$ be the log-probability of trajectory $\tau_j$ under the $i$-th policy of the original dataset mixture, $\pi_{\theta_i} \in \mathcal{D}_\Theta$ (transition terms do not depend on the policy and cancel in the ratio, so only the action log-probabilities are retained):

\[ \ell_{i,j} = \log p(\tau_j \mid \pi_{\theta_i}) = \sum_{t=0}^{T} \log \pi_{\theta_i}(a_t^j \mid s_t^j). \]

Similarly, let $\ell'_{i,j}$ be the log-probability of the same trajectory under the $i$-th reconstructed target policy $\pi_{\hat{\theta}_i} \in \mathcal{D}_{\hat{\Theta}}$. The non-normalized weight $w_j$ is the ratio of these two sums of exponentials. In log-space, letting $\lambda_j = \log w_j$, this is expressed as:

\[ \lambda_j = \log \left( \sum_i \exp(\ell'_{i,j}) \right) - \log \left( \sum_i \exp(\ell_{i,j}) \right). \]

To prevent overflow or underflow during these summations, each term is computed using the LSE function:

\[ \mathrm{LSE}(x_1, \dots, x_n) = c + \log \sum_{i=1}^{n} \exp(x_i - c), \qquad \text{where } c = \max_i \{x_i\}. \]

By extracting the maximum value $c$, we ensure that the largest exponentiated term inside the summation is exactly $\exp(0) = 1$, shifting the calculation into a stable floating-point range. Thus, the non-normalized log-weight is robustly computed as:

\[ \lambda_j = \mathrm{LSE}_{i \in \mathcal{D}_{\hat{\Theta}}}(\ell'_{i,j}) - \mathrm{LSE}_{i \in \mathcal{D}_\Theta}(\ell_{i,j}). \]

The final step is self-normalization, which ensures that the weights over the dataset sum to unity, $\bar{w}_j = w_j / \sum_k w_k$. To maintain numerical stability until the very end of the computation, we perform this normalization in the log-domain as well:

\[ \bar{w}_j = \exp\left( \lambda_j - \mathrm{LSE}_k(\lambda_k) \right). \]

This approach guarantees that we exponentiate only the final output, preserving high numerical precision throughout the density-ratio estimation process.

Algorithm 1 Latent Behavior Compression
Input: Policy dataset $\mathcal{D}_\Theta$, trajectory dataset $\mathcal{D}_\tau$, inner iterations $I$, batch size $P$, nearest-neighbors parameter $k$, learning rate $\alpha$.
Output: Generative behavioral mapping $g_\zeta$.
Randomly initialize autoencoder parameters: encoder $\xi$ and decoder $\zeta$.
repeat
    Sample a mini-batch of $P$ policies $\psi = \{\theta_i\}_{i=1}^{P} \subset \mathcal{D}_\Theta$
    Retrieve corresponding trajectories $\mathcal{D}_\psi \subset \mathcal{D}_\tau$
    for $i = 1$ to $I$ do
        Reconstruct parameters: $\hat{\psi} = \{\hat{\theta}_i = g_\zeta(f_\xi(\theta_i)) \mid \theta_i \in \psi\}$
        Compute mixture-matching loss: $\mathcal{L}_B(\xi, \zeta) = D_{\mathrm{KL}}(d_\psi \,\|\, d_{\hat{\psi}})$ {via Eq. 1}
        Update weights: $\{\xi, \zeta\} \leftarrow \{\xi, \zeta\} - \alpha \nabla_{\{\xi, \zeta\}} \mathcal{L}_B(\xi, \zeta)$
    end for
until convergence or full population coverage
return $g_\zeta$

D Autoencoder Training Pipeline

Optimizing the mixture-occupancy matching objective over the entire policy dataset $\mathcal{D}_\Theta$ at once is computationally prohibitive. To scale the training process, we employ a mini-batch stochastic gradient descent strategy over the policy population.
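The log-space weight computation of Appendix C, on which every training step relies, can be sketched as follows. This is a minimal NumPy illustration under assumptions, not the authors' implementation: the array layout (`logp[i, j]` holding the log-probability of trajectory j under policy i) and the function names are hypothetical, and all inputs are assumed finite.

```python
import numpy as np


def logsumexp(x, axis=None):
    """LSE(x) = c + log(sum(exp(x - c))) with c = max(x); assumes finite inputs."""
    c = np.max(x, axis=axis, keepdims=True)
    out = c + np.log(np.sum(np.exp(x - c), axis=axis, keepdims=True))
    return np.squeeze(out, axis=axis)


def normalized_importance_weights(logp_target, logp_source):
    """Self-normalized mixture importance weights computed entirely in log-space.

    logp_target[i, j]: log-probability of trajectory j under reconstructed policy i.
    logp_source[i, j]: log-probability of trajectory j under original policy i.
    """
    # lambda_j = LSE_i(l'_{i,j}) - LSE_i(l_{i,j})
    lam = logsumexp(logp_target, axis=0) - logsumexp(logp_source, axis=0)
    # Normalize in the log-domain and exponentiate only once at the end.
    return np.exp(lam - logsumexp(lam))


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Log-probabilities around -5000: exp() of these underflows to 0 in float64.
    logp_source = rng.normal(-5000.0, 1.0, size=(4, 6))
    logp_target = rng.normal(-5000.0, 1.0, size=(4, 6))
    weights = normalized_importance_weights(logp_target, logp_source)
    print(weights, weights.sum())
```

A naive implementation that exponentiates the trajectory log-probabilities first would divide zero by zero in the example above, whereas the log-space version returns finite weights that sum to one.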
During each training step, we uniformly sample a subset $\psi$ of $P$ policies from the dataset and fetch their corresponding pre-collected trajectories $\mathcal{D}_\psi \subset \mathcal{D}_\tau$. A major advantage of our non-parametric importance-sampling formulation is the ability to reuse the exact trajectory batch across multiple sequential parameter updates, thereby drastically increasing sample efficiency. Specifically, for each sampled batch $\psi$, we execute an inner loop consisting of $I$ consecutive gradient descent steps on the autoencoder's parameters $\{\xi, \zeta\}$. Critically, because the decoder's weights (and consequently, the reconstructed policies $\hat{\psi}$) evolve after each step, the true density ratio shifts. Therefore, the importance weights are re-evaluated at every step using the numerically stable Log-Sum-Exp procedure described in Appendix C. This nested update scheme also accelerates convergence. Training iterations are repeated until the population has been sufficiently sampled or the autoencoder has converged. The complete training procedure is summarized in Algorithm 1.

Practical Implementation of Policy Stochasticity. While our theoretical framework models policies as strictly stochastic ($\pi \in \Delta(\mathcal{A})$) to accommodate density ratio estimation, evaluating highly exploratory, randomly initialized continuous policies can yield erratic, noisy actions due to untrained variance parameters. To ensure meaningful and stable state-space coverage during the Policy Dataset Curation phase, and during downstream PGPE optimization, we deploy the deterministic version of the policies (i.e., we execute only the mean of the action distribution). Consequently, during the autoencoder training phase, we evaluate the stochastic likelihood of these deterministically collected trajectories. We acknowledge that evaluating stochastic probabilities on deterministically generated data introduces bias into the importance-sampling estimator. However, this is a necessary and empirically effective trade-off: it strips catastrophic noise from the behavioral rollouts while using the variance parameters as a continuous smoothing mechanism. This guarantees a strictly positive probability density during training, circumventing the vanishing denominators and intractable density ratios that would occur if purely deterministic Dirac-delta distributions were used.

E Experimental Settings

This section provides the details regarding the architecture, environment, and training procedure used to conduct the experiments presented in Section 5.

E.1 Architecture and Training

Policy Networks. To model the behaviors, we use fully-connected, feed-forward Multi-Layer Perceptrons (MLPs). The input layer has $|\mathcal{S}|$ neurons and is preceded by a normalization layer that standardizes the state features to have zero mean and unit variance. The policies consist of two hidden layers of 32 neurons, each followed by Exponential Linear Unit (ELU) activations. The final output layer consists of $|\mathcal{A}|$ neurons with a tanh activation to squash the actions into the valid environmental bounds. The policies have roughly $10^3$ parameters: 1,218 for MC, 1,412 for RC, and 1,638 for HP. The weights are sampled from independent uniform distributions $\theta_i \sim \mathcal{U}(-2.5, 2.5)^n$, where $n$ is the number of parameters.

Autoencoder Architecture. We use a fixed, symmetric autoencoder architecture to construct the behavioral manifold. First, the weights are standardized to have zero mean and unit variance. Then, the encoder projects the $n$-dimensional policy parameter vector through two fully connected hidden layers with 25 and 10 neurons, respectively, into a $k$-dimensional bottleneck. The decoder symmetrically maps the $k$-dimensional latent code back through layers of 10 and 25 neurons to reconstruct the $n$-dimensional weight vector. The encoder and decoder output layers are not activated.
All other layers use ELU activation functions. Depending on the environment, the bottleneck dimension is $k \in \{1, 2, 3, 5, 8\}$.

Autoencoder Training. During the Latent Behavior Compression phase, we start from a dataset of $|\mathcal{D}_\Theta| = 50{,}000$ policies, which curation reduces to the top 5%; the autoencoder is trained on this curated dataset. To compute the mixture-matching loss, we sample 20 trajectories per policy, which we found to offer a good trade-off between estimator variance and computational efficiency. For the importance-sampling estimator, the nearest-neighbors parameter is fixed at $k = 30$ across all domains. We train with a small batch size of 5; while this introduces gradient noise, it crucially prevents the model from collapsing into a trivial mean-policy solution, thereby forcing the manifold to capture distinct, high-reward behavioral modes that would otherwise be smoothed out. The total number of training iterations is computed to ensure that each policy in the dataset is sampled at least once with probability greater than 0.99. Furthermore, policies are sampled uniformly at random rather than sequentially to prevent catastrophic forgetting.

E.2 Environments

We evaluate our framework on three continuous control environments from the Gymnasium library. For each environment, the unsupervised pre-training phase is entirely reward-free, while the downstream optimization phase evaluates few-shot adaptation to four distinct reward functions.

Mountain Car Continuous (MC). In this environment, a car is stochastically placed in a sinusoidal valley and must build momentum to traverse the hills. The state space $\mathcal{S} \subseteq \mathbb{R}^2$ captures position $p \in [-1.2, 0.6]$ and velocity $v \in [-0.07, 0.07]$, while the action space $\mathcal{A} \subseteq \mathbb{R}^1$ represents the applied acceleration. We define four tasks: (1) standard: a sparse $+100$ reward for reaching the right goal ($p \geq 0.45$), with a $-0.1a^2$ control penalty; (2) left: identical to standard, but the goal is at the left peak ($p \leq -1.1$); (3) height: a dense reward $R_t = h^2$ for height $h \geq 0.2$; and (4) speed: a dense reward $R_t = v^2$. Episodes terminate upon reaching the standard goal or after 999 steps.

Reacher (RC). RC features a two-jointed robotic arm moving in a 2D plane. To focus strictly on task-agnostic behavioral discovery, we remove all target-related information from the observations. The normalized state space $\mathcal{S} \subseteq \mathbb{R}^6$ captures the sines, cosines, and angular velocities of the two joints, and the action space $\mathcal{A} \subseteq \mathbb{R}^2$ controls the hinge torques. For the purpose of normalization, we consider the state bounded between the vectors $[-1, -1, -1, -1, -5, -5]$ and $[1, 1, 1, 1, 5, 5]$. We define four binary tasks evaluated on the fingertip's kinematics ($R_t = 1$ if met, else $0$): (1) speed: linear velocity $> 6$; (2) clockwise: tangential velocity $< -1$; (3) c-clockwise: tangential velocity $> 1$; and (4) radial: radial velocity $> 3$. Episodes terminate after 50 steps.

Hopper (HP). HP is a complex planar monopod robot governed by a normalized 11-dimensional state space capturing height, joint angles, and velocities, controlled via a 3-dimensional action space governing the hip, knee, and ankle torques. For the purpose of normalization, we consider the state bounded between the vectors $[0.7, -0.2, -2.7, -2.7, -0.8, -5.0, -5.0, -5.0, -5.0, -5.0, -5.0]$ and $[1.5, 0.2, 0.0, 0.0, 0.8, 5.0, 5.0, 5.0, 5.0, 5.0, 5.0]$. We define four locomotion tasks: (1) forward: $x$-axis velocity $> 1$; (2) backward: $x$-axis velocity $< -1$; (3) standstill: $x$-axis velocity within $[-0.05, 0.05]$; and (4) standard: the default Gymnasium composite reward consisting of a $+1$ healthy survival bonus, a forward velocity term, and a control penalty. The first three tasks use binary rewards.
Episodes terminate if the robot falls or after 1,000 steps. E.3 Hyperparameters Baselines. W e implement the baseline algorithms, PPO, DDPG, and SA C, using the StableBaselines library and employing the same hyperparameters as in T enedini et al. ( 2026 ), specifically: • Mountain Car: – DDPG: Exploration noise standard deviation of 0.75 and 0.65, and a replay buf fer size of 50,000. – SA C: Generalized State Dependent Exploration (gSDE), entropy coef ficient of 0.1, soft update coefficient of 0.01, train frequenc y of 32, and 32 gradient steps per rollout. – PPO: Generalized State Dependent Exploration (gSDE), learning rate of 0.0001, batch size of 256, and 4 optimization epochs per rollout. • Reacher: Hyperparameters are identical to those used in Mountain Car , with the exception of the DDPG exploration noise standard de viation, which is set to 0.5. • Hopper: Standard default library parameters for all algorithms. PGPE. Latent Behavior Optimization is performed using Polic y Gradients with Parameter -based Exploration (PGPE), optimized via Adam. W e maintain a population size of 8 across all en vironments and use an e xponential scheduler for learning-rate decay . T o prevent overshooting high-rew ard regions and becoming trapped in zero-gradient plateaus, we reduce the first moment decay to β 1 = 0 . 1 . Hyperparameters are tailored to specific en vironment objectives: for the Mountain Car (MC) standard and left rew ards, we set the center and standard de viation learning rates to 0 . 11 and 0 . 1 , respecti vely (initial σ = 1 . 0 ). The speed task uses more conserv ativ e rates ( 0 . 02 center , 0 . 01 std), while the height task requires a center rate of 0 . 01 and a reduced initial σ of 0 . 1 . F or Reacher (RC), we utilize a center learning rate of 0 . 1 and a standard de viation learning rate of 0 . 05 , whereas Hopper (HP) is optimized with a center learning rate of 0 . 11 . 
For the Warm Start variation of PGPE, we adopt the hyperparameters previously used for Reacher in the Hopper environment (center LR = 0.1, std LR = 0.05). In contrast, for Mountain Car, we employ a more conservative configuration: a center learning rate of 0.01, an initial standard deviation of 0.1, and a standard deviation learning rate of 0.05. This choice is informed by the observation that initial random sampling often identifies high-reward regions early, necessitating only marginal refinement rather than extensive further optimization. For the warm-start initialization, we sample an initial set of policies with size proportional to the latent space dimensionality: 40, 56, and 80 policies for the 3D, 5D, and 8D spaces, respectively.

Reproducibility. All experimental components are fixed with specific random seeds to ensure reproducibility and facilitate fair comparisons. The seeding strategy for the pipeline is as follows:

• Dataset Generation and Curation: A constant seed of 0 is applied across all stages, including policy generation, particle collection, and score computation.
• Latent Behavior Compression: For the main optimization experiments, ten distinct latent spaces are trained using seeds 0, ..., 9. The ablation studies that compare latent spaces specifically utilize seeds 888 and 999.
• Latent Behavior Optimization: The PGPE optimization scripts are initialized with seed 0, while the baselines are evaluated across ten independent runs each using seeds 0, ..., 9.

Figure 4: Reward distributions comparison in MC, RC, and HP. Legend: All (100%), Top 5% APC, Top 5% OPC. Vertical axis: Frequency (Log Scale). Panels: (a) MC, standard; (b) MC, left; (c) MC, speed; (d) MC, height; (e) RC, speed; (f) RC, clockwise; (g) RC, c-clockwise; (h) RC, radial; (i) HP, forward; (j) HP, backward; (k) HP, standstill; (l) HP, standard.

F Additional Experiments

In this section, we report the full set of experiments from Section 5, including those that could not fit in the main text.

F.1 Policy Dataset Curation

In this experiment, we generate a dataset of 50,000 policies for each environment (MC, RC, and HP).
We then apply a top 5% threshold based on scores generated by both the APC pipeline (obtained via novelty search in the Euclidean space of immediate actions) and the OPC pipeline (obtained via our information-theoretic uniqueness metric in the occupancy space). In Fig. 4, we visualize both dataset distributions compared to the complete (100%) dataset. In Fig. 5, we then compare the distributions of the scores that produced this thresholding. As noted in Section 5.1, the scores generated by the APC pipeline tend to have less structure, which leads to the loss of rare, high-performing behaviors in the thresholded dataset. Conversely, the OPC scores generally favor unique behaviors. Interestingly, for tasks in which achieving good performance is trivial, the scores successfully reduce the probability mass of both the low-performing and the high-performing policies introduced by the random initialization (e.g., the radial task for RC in Fig. 4h).

Figure 5: Correlation between APC (top row) and OPC (bottom row) scores and rewards in MC, RC, and HP. The vertical dashed line indicates the top 5% selection threshold. Axes: Min-Max Normalized Score and Reward Value. Panels: (a) MC, standard; (b) MC, left; (c) MC, speed; (d) MC, height; (e) RC, speed; (f) RC, clockwise; (g) RC, c-clockwise; (h) RC, radial; (i) HP, forward; (j) HP, backward; (k) HP, standstill; (l) HP, standard.

Figure 6: HP, seed 888, 2D. Panel labels: APC score and OPC score (dataset curation), each with APC loss and OPC loss (training objective); tasks: Standard, Forward, Backward, Standstill.

F.2 Latent Behavior Compression

In this ablation study, we first generate a dataset of 50,000 policies for the HP environment. We then curate it to 5% using the scores from both the OPC and APC pipelines. Finally, we train two autoencoders: one with the action-matching objective from the APC pipeline and one with the occupancy-matching objective from the OPC pipeline. In all, we obtain four latent spaces: one obtained by running the full APC pipeline; one obtained by running the full OPC pipeline; one obtained by optimizing the OPC objective on the APC-curated dataset; and, finally, one obtained by optimizing the APC objective on the OPC-curated dataset. We repeat this process with two different latent dimensions: 2D and 3D. We compare the resulting topologies in Figs. 6, 7, 8, and 9.
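The curation and ablation protocol of F.1 and F.2 can be sketched roughly as follows: keep the top 5% of policies under a given score, then enumerate every combination of curation score, reconstruction objective, latent dimension, and ablation seed. The helper names `curate_top_fraction` and `ablation_grid`, the rounding of the kept count, and the tie-breaking rule are hypothetical. The scores themselves (APC novelty or OPC uniqueness) are treated as given inputs and are not computed here.

```python
from itertools import product
from typing import List, Sequence, Tuple


def curate_top_fraction(scores: Sequence[float], fraction: float = 0.05) -> List[int]:
    """Return the sorted indices of the highest-scoring policies.

    Keeps round(fraction * n) policies (at least one). Ties are broken by
    original index, which is an arbitrary, assumed rule.
    """
    n_keep = max(1, round(fraction * len(scores)))
    ranked = sorted(range(len(scores)), key=lambda i: (-scores[i], i))
    return sorted(ranked[:n_keep])


def ablation_grid() -> List[Tuple[str, str, int, int]]:
    """All (curation score, training loss, latent dim, seed) combinations in F.2."""
    curation_scores = ["APC", "OPC"]
    training_losses = ["APC", "OPC"]
    latent_dims = [2, 3]
    seeds = [888, 999]
    return list(product(curation_scores, training_losses, latent_dims, seeds))
```

For a dataset of 50,000 policies, `curate_top_fraction` keeps 2,500 indices, and the grid contains the four curation/objective pairings for each of the two latent dimensions and two seeds.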
F.3 Latent Behavior Optimization

In this experiment, we compare the optimization performance of our pipeline (O-PGPE) against similar methods and other competitive DRL baselines, which include: P-PGPE, i.e., standard PGPE running in the parameter space, SAC, DDPG, and TD3, all utilizing policies of the same shape as the one being compressed by our autoencoder. Additionally, we compare our results with A-PGPE (Latent PGPE on the Action-based Policy Compression manifolds). Both O-PGPE and A-PGPE are tested on the same bottleneck dimensions, namely: k = 1, 2, 3 for MC, and k = 3, 5, 8 for RC and HP. Finally, we evaluate the performance of WS-O-PGPE (Warm Start O-PGPE) with bottleneck size k = 3, 5, and 8.

We present the results in categories: Occupancy-based Policy Compression (Fig. 10), Action-based Policy Compression (Fig. 11), and baselines (Fig. 12).

Figure 7: HP, seed 999, 2D.

Figure 8: HP, seed 888, 3D.

Figure 9: HP, seed 999, 3D.

Figures 7 to 9 use the same panel labels: APC score and OPC score (dataset curation), each with APC loss and OPC loss (training objective); tasks: Standard, Forward, Backward, Standstill.

Figure 10: Performance comparison in MC, RC, and HP for different tasks using Occupancy-based Policy Compression. Legend: O-PGPE 1D, O-PGPE 2D, O-PGPE 3D, O-PGPE 5D, O-PGPE 8D, Best in Dataset, WS-O-PGPE 3D, WS-O-PGPE 5D, WS-O-PGPE 8D. Panels: (a) MC, standard; (b) MC, left; (c) MC, speed; (d) MC, height; (e) RC, speed; (f) RC, clockwise; (g) RC, c-clockwise; (h) RC, radial; (i) HP, forward; (j) HP, backward; (k) HP, standstill; (l) HP, standard.

Figure 11: Performance comparison in MC, RC, and HP for different tasks using Action-based Policy Compression. Legend: A-PGPE 1D, A-PGPE 2D, A-PGPE 3D, A-PGPE 5D, A-PGPE 8D. Panels: (a) MC, standard; (b) MC, left; (c) MC, speed; (d) MC, height; (e) RC, speed; (f) RC, clockwise; (g) RC, c-clockwise; (h) RC, radial.
Figure 12: Performance comparison in MC, RC, and HP for different tasks across all baselines. Legend: DDPG, PPO, SAC, TD3, PGPE. Panels: (a) MC, standard; (b) MC, left; (c) MC, speed; (d) MC, height; (e) RC, speed; (f) RC, clockwise; (g) RC, c-clockwise; (h) RC, radial; (i) HP, forward; (j) HP, backward; (k) HP, standstill; (l) HP, standard.
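The warm-start variant (WS-O-PGPE) compared in F.3 can be pictured roughly as follows: sample a batch of latent codes whose size depends on the latent dimension (40, 56, and 80 for 3D, 5D, and 8D, as stated in E.3), evaluate each one, and start PGPE from the best. Using the best sample as the initial center, sampling from a standard normal distribution, and the names `warm_start_center` and `evaluate` are all assumptions made for illustration; the text does not specify these details.

```python
import random
from typing import Callable, List

# Warm-start sample sizes per latent dimension, as reported in E.3.
WARM_START_SAMPLES = {3: 40, 5: 56, 8: 80}


def warm_start_center(
    latent_dim: int,
    evaluate: Callable[[List[float]], float],
    seed: int = 0,
) -> List[float]:
    """Sample latent codes from N(0, I) and return the one with the highest return.

    `evaluate` is a hypothetical stand-in for decoding a latent code into a
    policy and measuring its episodic return on the task of interest.
    """
    rng = random.Random(seed)
    n_samples = WARM_START_SAMPLES[latent_dim]
    candidates = [
        [rng.gauss(0.0, 1.0) for _ in range(latent_dim)]
        for _ in range(n_samples)
    ]
    return max(candidates, key=evaluate)


# Toy usage: a made-up objective that prefers latent codes close to the origin.
center = warm_start_center(3, evaluate=lambda z: -sum(x * x for x in z))
```

The returned code would then serve as the initial PGPE center, with the smaller initial standard deviation reported for the warm-start configuration reflecting that only marginal refinement is expected.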
