Neural Approximation of Generalized Voronoi Diagrams

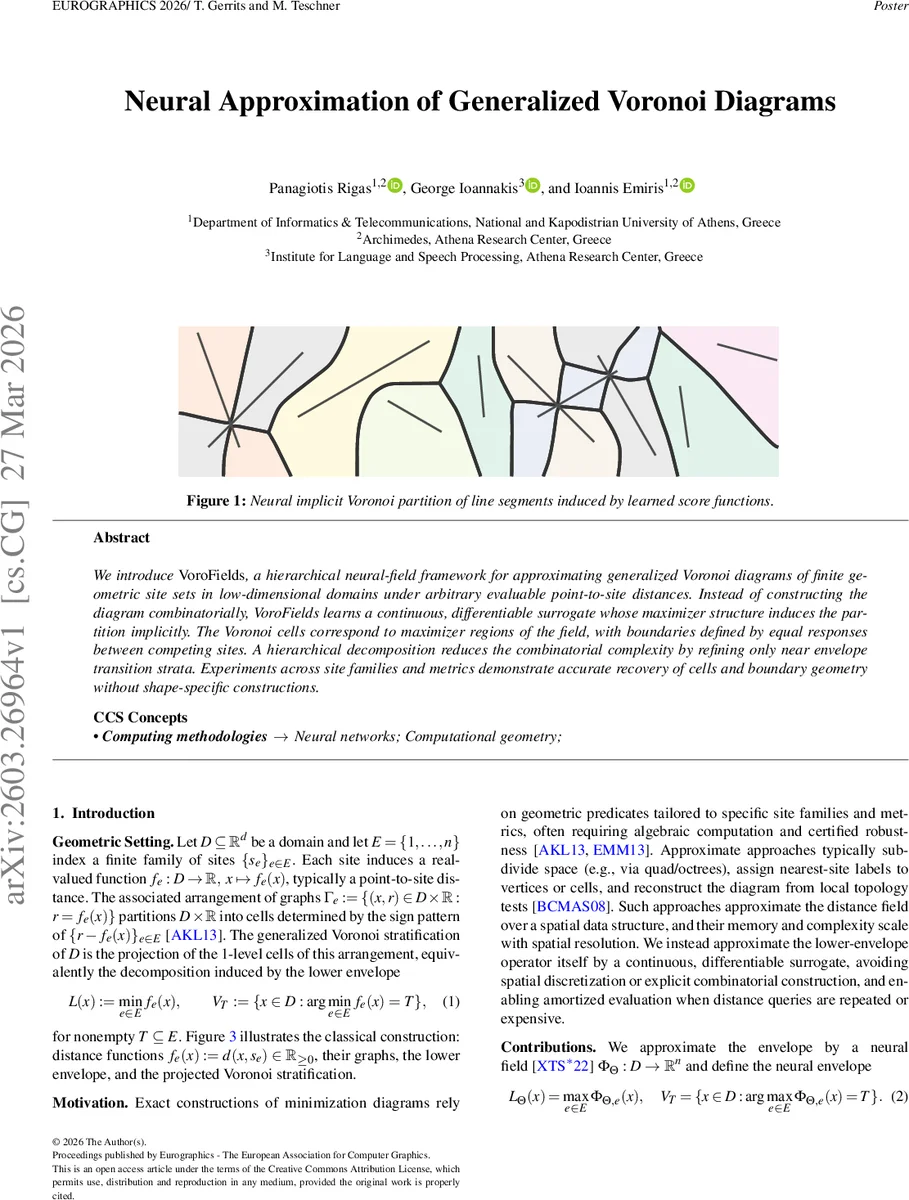

We introduce VoroFields, a hierarchical neural-field framework for approximating generalized Voronoi diagrams of finite geometric site sets in low-dimensional domains under arbitrary evaluable point-to-site distances. Instead of constructing the diagram combinatorially, VoroFields learns a continuous, differentiable surrogate whose maximizer structure induces the partition implicitly. The Voronoi cells correspond to maximizer regions of the field, with boundaries defined by equal responses between competing sites. A hierarchical decomposition reduces the combinatorial complexity by refining only near envelope transition strata. Experiments across site families and metrics demonstrate accurate recovery of cells and boundary geometry without shape-specific constructions.

💡 Research Summary

The paper introduces VoroFields, a hierarchical neural‑field framework designed to approximate generalized Voronoi diagrams for finite sets of geometric sites in low‑dimensional domains under arbitrary evaluable point‑to‑site distance functions. Traditional exact constructions are built from combinatorial predicates tailored to particular site families and metrics, and evaluating these predicates robustly often requires algebraic computation. Approximate methods typically discretize space with quadtrees or octrees, assign nearest‑site labels to cells, and reconstruct the diagram from local topology tests, so their memory and computational costs scale with spatial resolution.

VoroFields replaces the combinatorial lower‑envelope operator $L(x) = \min_{e} f_e(x)$ with a continuous, differentiable neural surrogate $\Phi_\Theta : D \to \mathbb{R}^{|E|}$. For each input point $x$, the network outputs a vector of scores $\Phi_{\Theta,e}(x)$, one per site $e$. The neural envelope is defined as $L_\Theta(x) = \max_{e} \Phi_{\Theta,e}(x)$, and the induced partition assigns $x$ to the site whose score is maximal. Training enforces that $\arg\max_{e} \Phi_{\Theta,e}(x)$ matches the ground‑truth $\arg\min_{e} f_e(x)$ obtained from the exact distance functions, using a cross‑entropy loss over sampled points.
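To make the objective concrete, here is a minimal NumPy sketch, not the authors' code. It assumes point sites with Euclidean distance (any evaluable $f_e$ could be substituted) and uses a hand‑made score matrix in place of a trained network. The names `nearest_site_labels`, `induced_partition`, and `cross_entropy` are hypothetical. It shows that scores equal to scaled negative distances induce exactly the ground‑truth partition, and that the cross‑entropy loss rewards sharper correct scores.

```python
import numpy as np

def nearest_site_labels(points, sites):
    """Ground-truth labels argmin_e f_e(x) plus the full distance matrix.

    Assumption for illustration: sites are points and f_e is Euclidean
    distance; any evaluable point-to-site distance could be swapped in.
    """
    dists = np.linalg.norm(points[:, None, :] - sites[None, :, :], axis=-1)  # (P, |E|)
    return np.argmin(dists, axis=1), dists

def induced_partition(scores):
    """Cell index argmax_e Phi_e(x) and neural envelope max_e Phi_e(x)."""
    return np.argmax(scores, axis=1), np.max(scores, axis=1)

def cross_entropy(scores, labels):
    """Mean softmax cross-entropy of per-site scores against integer labels."""
    shifted = scores - scores.max(axis=1, keepdims=True)  # avoid overflow in exp
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    return float(-log_probs[np.arange(len(labels)), labels].mean())

rng = np.random.default_rng(0)
sites = rng.uniform(0.0, 1.0, size=(5, 2))
points = rng.uniform(0.0, 1.0, size=(1000, 2))
labels, dists = nearest_site_labels(points, sites)

# Hypothetical "ideal" scores: scaled negative distances, so argmax agrees with argmin.
scores = -10.0 * dists
pred, envelope = induced_partition(scores)
print((pred == labels).mean())  # 1.0
print(cross_entropy(scores, labels), cross_entropy(-100.0 * dists, labels))
```

Scaling the scores by 100 instead of 10 leaves the partition unchanged but lowers the loss. This is why the max‑based envelope can represent a min‑based one: only the ordering of the scores determines the cells.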

To scale to large numbers of sites $n$, the authors employ two key strategies. First, a hierarchical decomposition: the global site set $E$ is recursively partitioned via k‑means clustering into subsets $E^{(v)}$ of size $k \ll n$, forming a tree $T$ of depth $h \approx \lceil \log_k n \rceil$. Each node $v$ stores a local neural field $\Phi_\Theta^{(v)}$ that distinguishes only among its own subset of sites. Inference traverses the tree, performing a local maximization at each node until a leaf is reached, which reduces the global $O(n)$ maximization to $O(k \cdot h)$, i.e. $O(k \log_k n)$.
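The tree traversal described above can be sketched as follows, under stated assumptions. Sites are 2D points, the tree is built by plain Lloyd's k‑means, and each learned local field $\Phi_\Theta^{(v)}$ is replaced by a hypothetical oracle. For each child, this oracle returns what a perfectly trained node field would encode: the negated nearest‑site distance among that child's sites. The oracle itself is not cheap. It only stands in for the network so that the descent logic and the number of scores read per query can be checked. All function names are illustrative.

```python
import numpy as np

def kmeans_labels(x, k, iters=20, seed=0):
    """Plain Lloyd's k-means on the rows of x; returns one cluster label per row."""
    rng = np.random.default_rng(seed)
    centroids = x[rng.choice(len(x), size=k, replace=False)].copy()
    for _ in range(iters):
        labels = np.argmin(np.linalg.norm(x[:, None] - centroids[None], axis=-1), axis=1)
        for j in range(k):
            if np.any(labels == j):  # leave empty clusters' centroids unchanged
                centroids[j] = x[labels == j].mean(axis=0)
    return np.argmin(np.linalg.norm(x[:, None] - centroids[None], axis=-1), axis=1)

def build_tree(sites, idx, k):
    """Recursively split the site indices idx into at most k clusters per node."""
    if len(idx) <= k:
        return {"idx": idx, "children": []}
    labels = kmeans_labels(sites[idx], k)
    groups = [idx[labels == j] for j in range(k) if np.any(labels == j)]
    if len(groups) < 2:  # degenerate split: make this node a leaf
        return {"idx": idx, "children": []}
    return {"idx": idx, "children": [build_tree(sites, g, k) for g in groups]}

def oracle_scores(sites, node, x):
    """Hypothetical stand-in for a perfectly trained local field Phi^(v):
    for each child, the negated lower envelope of that child's sites."""
    return np.array([-np.linalg.norm(sites[c["idx"]] - x, axis=1).min()
                     for c in node["children"]])

def query(sites, tree, x):
    """Descend by local argmax; return (site index, number of scores read)."""
    node, reads = tree, 0
    while node["children"]:
        s = oracle_scores(sites, node, x)
        reads += len(s)
        node = node["children"][int(np.argmax(s))]
    leaf_d = np.linalg.norm(sites[node["idx"]] - x, axis=1)
    reads += len(leaf_d)
    return int(node["idx"][np.argmin(leaf_d)]), reads

rng = np.random.default_rng(1)
n, k = 2000, 8
sites = rng.uniform(0.0, 1.0, size=(n, 2))
tree = build_tree(sites, np.arange(n), k)
queries = rng.uniform(0.0, 1.0, size=(200, 2))
hits, max_reads = 0, 0
for q in queries:
    e, reads = query(sites, tree, q)
    hits += int(e == np.argmin(np.linalg.norm(sites - q, axis=1)))
    max_reads = max(max_reads, reads)
print(hits / len(queries), max_reads)
```

For a sense of scale: with $n = 10{,}000$ sites and a hypothetical branching factor $k = 10$, $h = \lceil \log_{10} 10{,}000 \rceil = 4$. A query then reads about $k \cdot h = 40$ scores instead of comparing 10,000 distances.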

Second, fat‑bisector sampling: within a node $v$, Voronoi boundaries correspond to points where two site distance functions are equal and minimal. Rather than sampling uniformly, the method concentrates samples inside an $\varepsilon$‑thickened bisector region $B^{(v)}_\varepsilon = \{\, x \in R^{(v)} \mid \exists\, i \neq j : f_i(x),\, f_j(x) \le \min_e f_e(x) + \varepsilon \,\}$. In practice, in low dimensions, the authors approximate this region using cluster centroids $c_i^{(v)}$ and surrogate distances $\tilde f_i^{(v)}(x) = \lVert x - c_i^{(v)} \rVert$, which cheaply generate candidate boundary points.
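A minimal rejection‑sampling sketch of the surrogate region, assuming a box $[lo, hi]^d$ as a stand‑in for $R^{(v)}$ and uniform candidates. The summary does not say how candidates are generated, so this proposal scheme and the name `fat_bisector_samples` are hypothetical. The filter uses the fact that the condition "some $i \neq j$ with $\tilde f_i, \tilde f_j \le \min_e \tilde f_e + \varepsilon$" holds exactly when the second‑smallest surrogate distance is within $\varepsilon$ of the smallest.

```python
import numpy as np

def fat_bisector_samples(centroids, lo, hi, eps, n_candidates=20000, seed=0):
    """Rejection-sample the eps-thickened bisector region of the surrogate
    distances f~_i(x) = ||x - c_i|| inside the box [lo, hi]^d (a stand-in for R^(v)).
    Requires at least two centroids."""
    rng = np.random.default_rng(seed)
    x = rng.uniform(lo, hi, size=(n_candidates, centroids.shape[1]))
    f = np.linalg.norm(x[:, None, :] - centroids[None, :, :], axis=-1)  # (N, k)
    f_sorted = np.sort(f, axis=1)
    # "Some i != j with f_i, f_j <= min_e f_e + eps" is equivalent to
    # "second-smallest surrogate distance <= smallest + eps".
    keep = f_sorted[:, 1] <= f_sorted[:, 0] + eps
    return x[keep]

centroids = np.array([[0.25, 0.25], [0.75, 0.25], [0.5, 0.8]])
thin = fat_bisector_samples(centroids, 0.0, 1.0, eps=0.02)
thick = fat_bisector_samples(centroids, 0.0, 1.0, eps=0.10)
print(len(thin), len(thick))
```

Because both calls share a seed, they see the same candidates, so the larger $\varepsilon$ keeps a superset. This mirrors the trade‑off noted in the experiments: a wider band covers more of the boundary but spends more samples away from it.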

Experiments cover synthetic datasets of 10k sites per set, including squares, cuboids, ellipses, line segments, and non‑convex polygons, in both 2D and 3D, and evaluate multiple distance metrics (Euclidean, L∞, etc.). Accuracy is reported as Top‑1 and Top‑2 percentages. Results show Top‑1 accuracies above 90% across all configurations, with Top‑2 often exceeding 95%. Increasing ε improves boundary coverage, especially for long line segments, where bisector complexity grows. Errors concentrate near dense bisector intersections and are sensitive to ε and sampling density. Notably, training only on the lower envelope yields representations that preserve higher‑order ordering (Kendall's τ ≈ 0.72, order‑2 ≈ 0.77) on a held‑out subset. This indicates that the network implicitly learns the relative ordering of distance functions beyond the binary nearest‑site classification.
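The reported metrics can be illustrated with standard definitions. The summary does not spell out the exact protocol, such as which sites enter the Kendall's τ computation or what "order‑2" measures. The sketch below therefore assumes the usual Top‑k accuracy (the true nearest site is among the k highest scores) and Kendall's tau‑a between a point's score vector and its negated true distances. The function names and toy numbers are hypothetical.

```python
import numpy as np
from itertools import combinations

def top_k_accuracy(scores, labels, k):
    """Fraction of rows whose ground-truth label is among the k highest scores."""
    top = np.argsort(-scores, axis=1)[:, :k]
    return float(np.mean([labels[i] in top[i] for i in range(len(labels))]))

def kendall_tau(a, b):
    """Kendall's tau-a between two score vectors: (concordant - discordant) / pairs.
    O(m^2) and without tie correction, which is enough for a few sites."""
    pairs = list(combinations(range(len(a)), 2))
    net = sum(np.sign(a[i] - a[j]) * np.sign(b[i] - b[j]) for i, j in pairs)
    return float(net) / len(pairs)

scores = np.array([[3.0, 1.0, 2.0],
                   [0.5, 2.0, 1.0],
                   [1.0, 0.0, 2.0]])
labels = np.array([0, 2, 1])  # hypothetical ground-truth nearest sites
print(top_k_accuracy(scores, labels, 1))  # 0.333... (1 of 3)
print(top_k_accuracy(scores, labels, 2))  # 0.666... (2 of 3)

# Ordering check for one point: learned scores vs. negated true distances.
true_dists = np.array([0.1, 0.4, 0.5])
print(kendall_tau(scores[0], -true_dists))  # 0.333...: one of three pairs is swapped
```

A Top‑1 miss can still be a Top‑2 hit when the correct site ranks second. This typically happens near bisectors, where the two best scores are close.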

The authors argue that VoroFields eliminates the need for explicit spatial discretization, reduces memory footprint, and provides amortized evaluation for repeated distance queries. Its continuous, differentiable nature also opens the door to integration with gradient‑based optimization, inverse problems, and physics‑based simulation pipelines. Suggested future work includes extending the method to higher dimensions, handling dynamic site updates, and exploring unsupervised or semi‑supervised training regimes. Overall, VoroFields offers an alternative to classical combinatorial Voronoi construction that uses neural implicit representations to approximate diagrams scalably and accurately, without metric‑specific constructions.
