GaussianGPT: Towards Autoregressive 3D Gaussian Scene Generation

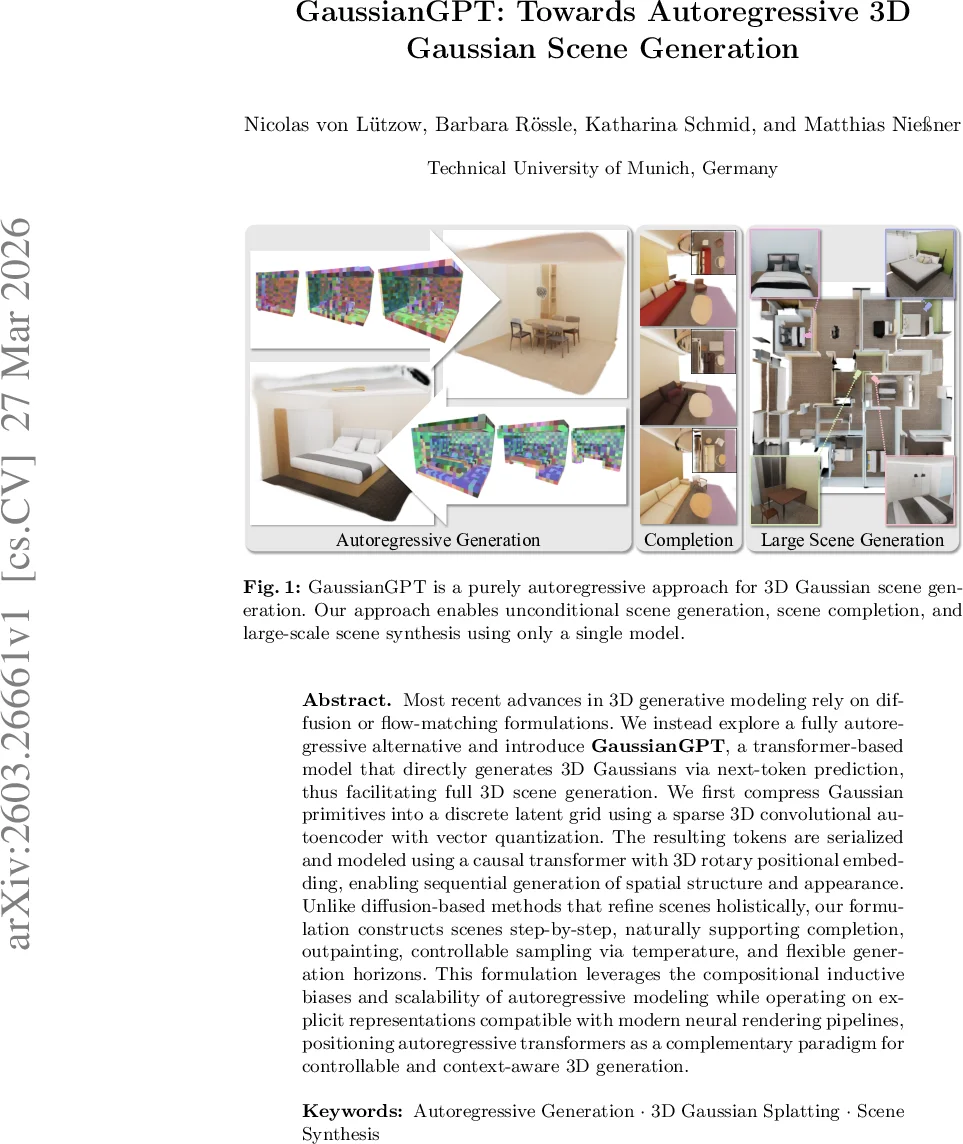

Most recent advances in 3D generative modeling rely on diffusion or flow-matching formulations. We instead explore a fully autoregressive alternative and introduce GaussianGPT, a transformer-based model that directly generates 3D Gaussians via next-token prediction, thus facilitating full 3D scene generation. We first compress Gaussian primitives into a discrete latent grid using a sparse 3D convolutional autoencoder with vector quantization. The resulting tokens are serialized and modeled using a causal transformer with 3D rotary positional embedding, enabling sequential generation of spatial structure and appearance. Unlike diffusion-based methods that refine scenes holistically, our formulation constructs scenes step-by-step, naturally supporting completion, outpainting, controllable sampling via temperature, and flexible generation horizons. This formulation leverages the compositional inductive biases and scalability of autoregressive modeling while operating on explicit representations compatible with modern neural rendering pipelines, positioning autoregressive transformers as a complementary paradigm for controllable and context-aware 3D generation.

💡 Research Summary

The paper “GaussianGPT: Towards Autoregressive 3D Gaussian Scene Generation” presents a novel paradigm for 3D scene synthesis by shifting from the predominant diffusion-based approaches to a fully autoregressive framework. The core idea is to model the generation of a 3D scene as a sequential next-token prediction problem, akin to how large language models generate text, thereby enabling step-by-step, controllable construction of 3D environments.

The methodology is structured around two main pillars: scene tokenization and autoregressive sequence modeling. First, to make complex 3D data tractable for a transformer, the authors compress a scene represented by 3D Gaussian Splatting primitives into a discrete latent grid. This is achieved using a sparse 3D convolutional autoencoder combined with Lookup-Free Vector Quantization (LFQ). The autoencoder downsamples the dense set of Gaussians into a structured grid of latent features, which are then quantized into discrete codebook indices. Each occupied voxel in this grid thus becomes represented by a position (its 3D grid coordinates) and a feature token (its codebook index).

Second, this quantized 3D grid is serialized into a 1D token sequence following a fixed spatial order (e.g., traversing x, y, then z axes). The sequence is composed of interleaved position and feature tokens. A decoder-only causal transformer, architected similarly to GPT models, is then trained to predict this sequence autoregressively. Given a context of previous tokens, the model learns to predict the next position token (where the next scene element will be placed) and then the corresponding feature token (what its visual attributes will be).

A key technical innovation is the use of 3D Rotary Positional Embeddings (3D RoPE). Standard positional embeddings in transformers only consider the 1D order in the sequence, which poorly correlates with actual 3D spatial proximity after serialization. 3D RoPE modifies the attention mechanism to be aware of the relative 3D coordinates between the voxels corresponding to any two tokens. This injects a strong spatial inductive bias, allowing the model to understand geometric relationships and layouts independent of the arbitrary serialization order.

This autoregressive formulation offers several distinct advantages over holistic denoising methods like diffusion models. It naturally supports incremental scene construction and editing tasks such as completion (filling in missing parts given a partial scene) and outpainting (extending a scene beyond its current boundaries). The generation process is highly controllable; sampling “temperature” can adjust the diversity of outputs, and the step-by-step nature allows for human-in-the-loop guidance. Furthermore, by operating on fixed-size local “chunks” of the latent grid, the model can generate large-scale scenes in a scalable, compositional manner. Finally, because the output is decoded back into an explicit 3D Gaussian representation, it remains fully compatible with efficient, real-time neural rendering pipelines like the original 3D Gaussian Splatting.

In summary, GaussianGPT re-frames 3D generation as a sequential prediction task, successfully leveraging the strengths of autoregressive transformers—compositionality, controllability, and flexible conditioning—for the domain of structured 3D scene synthesis. It establishes autoregressive modeling as a powerful and complementary paradigm to diffusion models, particularly for applications requiring precise spatial control and incremental scene assembly.

Comments & Academic Discussion

Loading comments...

Leave a Comment