GaussFusion: Improving 3D Reconstruction in the Wild with A Geometry-Informed Video Generator

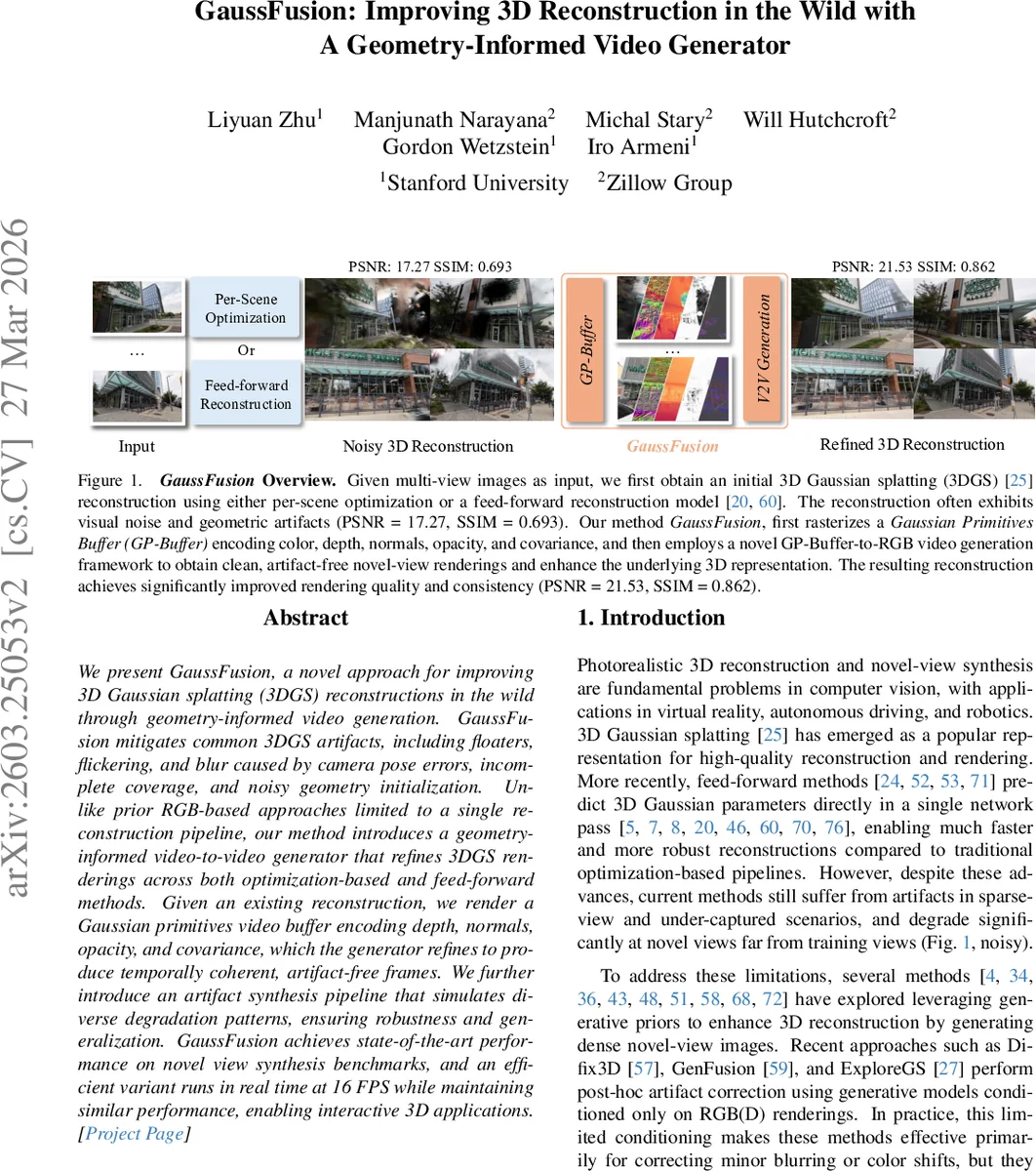

We present GaussFusion, a novel approach for improving 3D Gaussian splatting (3DGS) reconstructions in the wild through geometry-informed video generation. GaussFusion mitigates common 3DGS artifacts, including floaters, flickering, and blur caused by camera pose errors, incomplete coverage, and noisy geometry initialization. Unlike prior RGB-based approaches limited to a single reconstruction pipeline, our method introduces a geometry-informed video-to-video generator that refines 3DGS renderings across both optimization-based and feed-forward methods. Given an existing reconstruction, we render a Gaussian primitive video buffer encoding depth, normals, opacity, and covariance, which the generator refines to produce temporally coherent, artifact-free frames. We further introduce an artifact synthesis pipeline that simulates diverse degradation patterns, ensuring robustness and generalization. GaussFusion achieves state-of-the-art performance on novel-view synthesis benchmarks, and an efficient variant runs in real time at 15 FPS while maintaining similar performance, enabling interactive 3D applications.

💡 Research Summary

GaussFusion tackles persistent visual artifacts in 3D Gaussian splatting (3DGS) by introducing a geometry‑informed video‑to‑video generation pipeline that works across both optimization‑based and feed‑forward reconstruction methods. The core idea is to extract a rich, pixel‑aligned representation from any 3DGS output, called the Gaussian Primitives Buffer (GP‑Buffer). This buffer stores five modalities per pixel: rendered color, opacity, depth, surface normals, and the inverse 2‑D covariance (a measure of local geometric uncertainty). By providing depth, normals, and uncertainty in addition to RGB, the buffer supplies the generative model with explicit geometric cues that are essential for identifying and correcting artifacts such as floating splats, flickering, and blur caused by pose errors or incomplete coverage.

The generative component is a video‑to‑video Diffusion Transformer (DiT) trained with flow matching. A Geometry Adapter (GA) module injects the GP‑Buffer features into the transformer at multiple layers. Each GA block first projects the multi‑modal latent (obtained by encoding each GP‑Buffer channel with a VAE) through a 3D convolution, then refines it with self‑attention and with cross‑attention to optional text prompts. The resulting geometry‑aware feature is added to the main latent stream, guiding the network to predict velocity fields that transform noisy input videos into clean, temporally coherent outputs.
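The velocity‑field idea can be sketched in a few lines. This toy example assumes the common straight‑line (rectified‑flow) path between a noise sample and a clean sample; the summary does not state which path GaussFusion uses. The names `interpolate`, `target_velocity`, and `euler_sample`, and the `geometry` argument standing in for GP‑Buffer features, are hypothetical.

```python
from typing import Callable, List, Sequence


def interpolate(x0: Sequence[float], x1: Sequence[float], t: float) -> List[float]:
    """Point at time t on the straight path from noise x0 (t=0) to data x1 (t=1)."""
    return [(1.0 - t) * a + t * b for a, b in zip(x0, x1)]


def target_velocity(x0: Sequence[float], x1: Sequence[float]) -> List[float]:
    """Regression target for the network: the constant velocity of that path."""
    return [b - a for a, b in zip(x0, x1)]


def euler_sample(
    velocity_fn: Callable[[List[float], float, object], List[float]],
    x0: Sequence[float],
    geometry: object,
    steps: int = 4,
) -> List[float]:
    """Integrate dx/dt = v(x, t, geometry) from t=0 to t=1 with fixed Euler steps."""
    x = list(x0)
    dt = 1.0 / steps
    for i in range(steps):
        t = i * dt
        v = velocity_fn(x, t, geometry)
        x = [xi + dt * vi for xi, vi in zip(x, v)]
    return x


noise = [0.0, 2.0]
clean = [1.0, -1.0]


def oracle(x: List[float], t: float, geometry: object) -> List[float]:
    """Stand-in for a trained network: returns the exact straight-path velocity."""
    return target_velocity(noise, clean)


refined = euler_sample(oracle, noise, geometry=None, steps=4)
```

With an exact velocity the Euler integration lands on the clean sample regardless of the step count, which is one reason few‑step sampling is plausible for straight paths. A real model only approximates the velocity, so fewer steps trade accuracy for speed.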

To ensure robustness, the authors design an artifact synthesis pipeline that programmatically degrades clean 3DGS renderings with a wide range of realistic errors (pose perturbations, depth distortion, normal corruption, opacity mis‑blending, and covariance mis‑estimation). Over 75 000 synthetic video sequences are generated, providing diverse training data that span the degradation patterns of both optimization‑based and feed‑forward pipelines. This extensive augmentation is shown to be critical for the model’s ability to generalize across different reconstruction sources.
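As a rough illustration of programmatic degradation, the sketch below perturbs a camera pose and corrupts a row of depth values. The function names, the Gaussian noise model, and the dropout‑style holes are assumptions chosen for brevity; they are not the authors' actual synthesis pipeline.

```python
import random
from typing import List, Sequence, Tuple


def perturb_pose(
    position: Sequence[float],
    yaw_deg: float,
    sigma_t: float,
    sigma_yaw_deg: float,
    rng: random.Random,
) -> Tuple[Tuple[float, ...], float]:
    """Add Gaussian noise to a camera position and yaw angle (hypothetical model)."""
    noisy_position = tuple(p + rng.gauss(0.0, sigma_t) for p in position)
    noisy_yaw = yaw_deg + rng.gauss(0.0, sigma_yaw_deg)
    return noisy_position, noisy_yaw


def corrupt_depth(
    depth_row: Sequence[float],
    sigma: float,
    dropout_prob: float,
    rng: random.Random,
) -> List[float]:
    """Jitter depth values and randomly zero some out to mimic holes."""
    out = []
    for d in depth_row:
        if rng.random() < dropout_prob:
            out.append(0.0)  # simulated missing geometry
        else:
            out.append(max(0.0, d + rng.gauss(0.0, sigma)))
    return out


rng = random.Random(0)  # fixed seed so degraded samples are reproducible
pose, yaw = perturb_pose((1.0, 2.0, 3.0), 30.0, sigma_t=0.05, sigma_yaw_deg=1.0, rng=rng)
depth = corrupt_depth([1.0, 1.5, 2.0, 2.5], sigma=0.1, dropout_prob=0.25, rng=rng)
```

Seeding the generator keeps each synthetic sequence reproducible, which helps when comparing training runs on the same degraded data.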

Experiments on standard novel‑view synthesis benchmarks demonstrate that GaussFusion consistently outperforms prior post‑hoc refinement methods. Quantitatively, PSNR improves from ~17.3 dB (baseline 3DGS) to ~21.5 dB after refinement, while SSIM rises from 0.69 to 0.86, and LPIPS drops significantly. Visual inspection confirms the removal of floating splats and the stabilization of temporal flicker, even for challenging scenes with sparse views. Ablation studies reveal that each GP‑Buffer modality contributes to performance, with the inverse covariance map being especially valuable for high‑frequency detail recovery. Removing the Geometry Adapter or training without the artifact synthesis data leads to notable degradation, underscoring the importance of both components.
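For readers who want to interpret the PSNR figures, the sketch below uses the standard definition, PSNR = 10·log10(MAX² / MSE), and converts a PSNR gain into the equivalent reduction in mean squared error. The helper names are illustrative, and the only inputs taken from the summary are the approximate 17.3 dB and 21.5 dB values.

```python
import math
from typing import Sequence


def mse(a: Sequence[float], b: Sequence[float]) -> float:
    """Mean squared error between two equally sized pixel sequences."""
    if len(a) != len(b) or not a:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def psnr(a: Sequence[float], b: Sequence[float], max_value: float = 1.0) -> float:
    """Peak signal-to-noise ratio in dB for pixel values in [0, max_value]."""
    err = mse(a, b)
    if err == 0.0:
        return math.inf
    return 10.0 * math.log10(max_value ** 2 / err)


def mse_reduction_factor(psnr_before: float, psnr_after: float) -> float:
    """Factor by which MSE shrinks when PSNR rises from psnr_before to psnr_after."""
    return 10.0 ** ((psnr_after - psnr_before) / 10.0)


example_psnr = psnr([0.0, 0.0], [0.1, 0.1])   # about 20 dB
factor = mse_reduction_factor(17.3, 21.5)     # about 2.63
```

Read this way, the reported gain of roughly 4.2 dB corresponds to a mean squared error about 2.6 times lower than the unrefined 3DGS rendering.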

Efficiency is addressed by a lightweight variant that runs in real time at approximately 15 FPS on a single GPU, making the approach suitable for interactive applications such as AR/VR and robotics. The authors also provide a few‑step fine‑tuning recipe that enables on‑the‑fly refinement during rendering while maintaining similar quality.

In summary, GaussFusion presents a unified, geometry‑aware video generation framework that significantly enhances 3DGS reconstructions in the wild, is agnostic to the underlying reconstruction pipeline, and achieves real‑time performance. Future work may explore multi‑scale GP‑Buffers, texture‑specific enhancement modules, and integration with downstream tasks like scene understanding or navigation.
