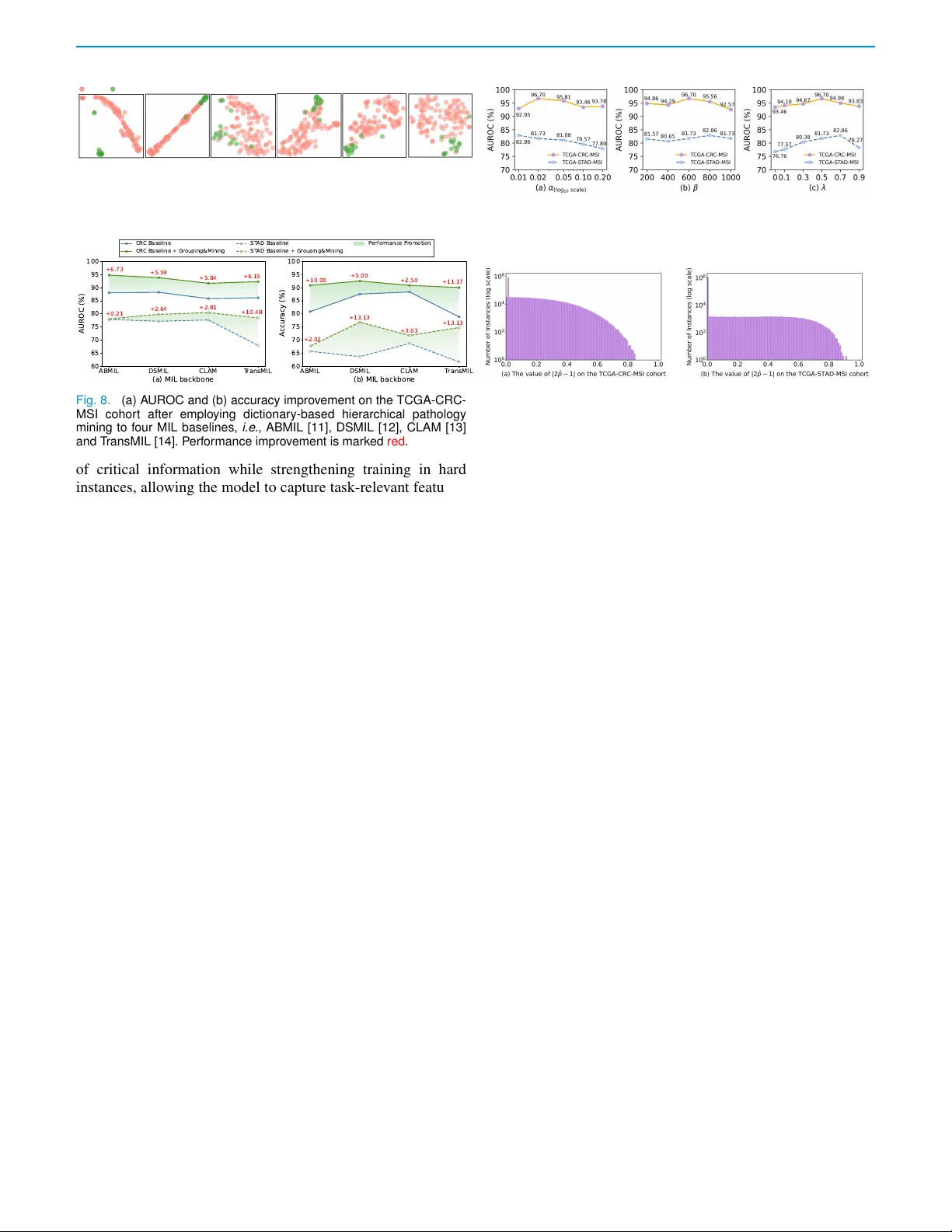

Dictionary-based Pathology Mining with Hard-instance-assisted Classifier Debiasing for Genetic Biomarker Prediction from WSIs

Prediction of genetic biomarkers, e.g., microsatellite instability in colorectal cancer, is crucial for clinical decision-making. However, two primary challenges hamper accurate prediction: (1) It is difficult to construct a pathology-aware representation…

Authors: Ling Zhang, Boxiang Yun, Ting Jin