GroupRAG: Cognitively Inspired Group-Aware Retrieval and Reasoning via Knowledge-Driven Problem Structuring

The performance of language models is commonly limited by insufficient knowledge and constrained reasoning. Prior approaches such as Retrieval-Augmented Generation (RAG) and Chain-of-Thought (CoT) address these issues by incorporating external knowle…

Authors: Xinyi Duan, Yuanrong Tang, Jiangtao Gong

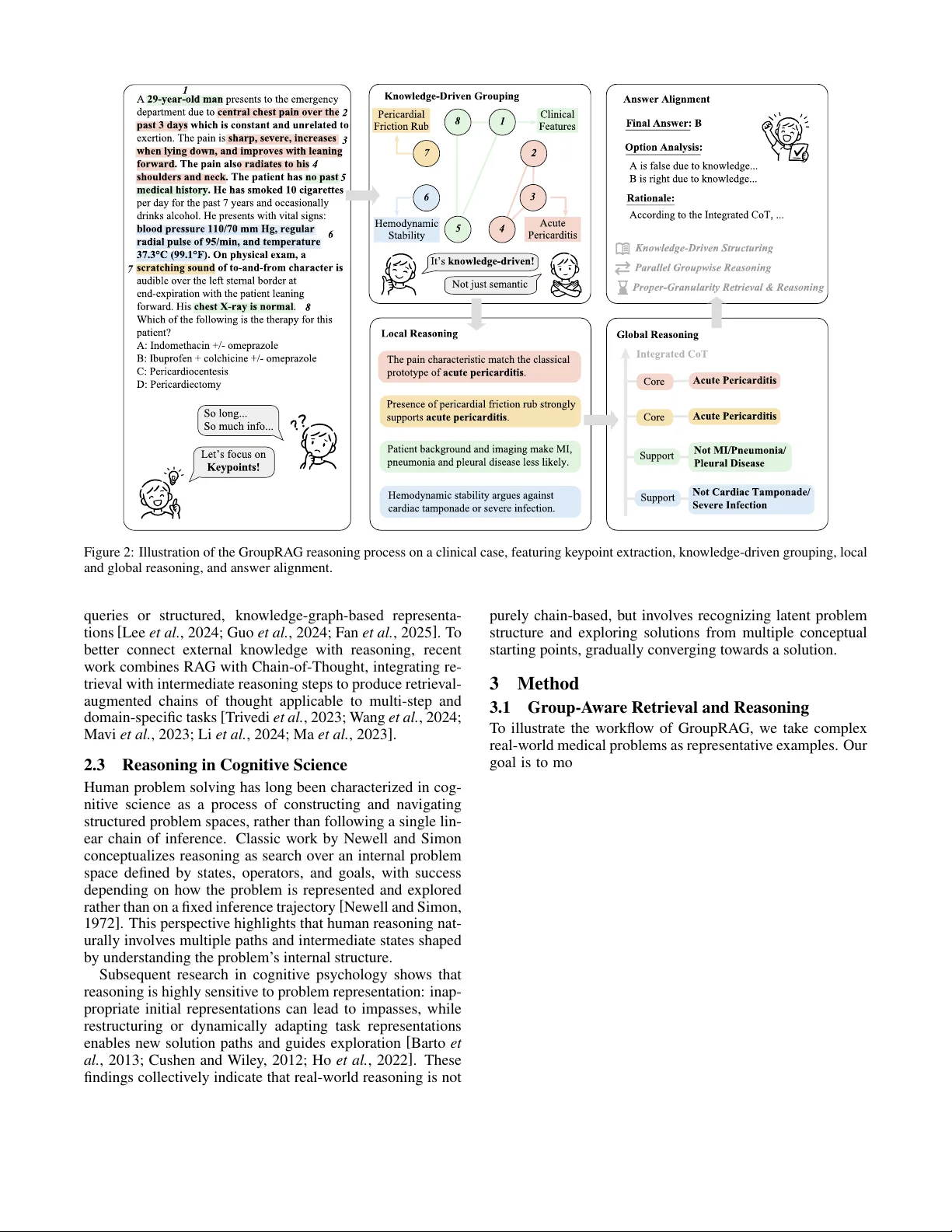

Gr oupRA G: Cognitiv ely Inspir ed Gr oup-A war e Retriev al and Reasoning via Knowledge-Dri ven Pr oblem Structuring Xinyi Duan 1 , Y uanrong T ang 1 and Jiangtao Gong 1 ∗ 1 Tsinghua Uni versity duanxy23@mails.tsinghua.edu.cn, tangxtong2022@gmail.com, gongjiangtao2@gmail.com Abstract The performance of language models is com- monly limited by insufficient knowledge and constrained reasoning. Prior approaches such as Retrie v al-Augmented Generation (RAG) and Chain-of-Thought (CoT) address these issues by incorporating e xternal knowledge or enforcing lin- ear reasoning chains, but often degrade in real- world settings. Inspired by cogniti ve science, which characterizes human problem solving as search o ver structured problem spaces rather than single inference chains, we argue that inadequate awareness of problem structure is a key overlook ed limitation. W e propose GroupRA G, a cogni- tiv ely inspired, group-a ware retrie v al and reasoning framew ork based on knowledge-dri ven keypoint grouping. GroupRA G identifies latent structural groups within a problem and performs retriev al and reasoning from multiple conceptual starting points, enabling fine-grained interaction between the two processes. Experiments on MedQA sho w that GroupRAG outperforms representativ e RA G- and CoT -based baselines. These results suggest that explicitly modeling problem structure, as in- spired by human cognition, is a promising direction for robust retrie v al-augmented reasoning. 1 Introduction Language models hav e achie ved remarkable progress across a wide range of tasks, yet they continue to struggle with com- plex, knowledge-dense questions that in v olve long contexts, multiple information sources, and intricate reasoning require- ments. Such challenges are particularly prominent in real- world scenarios. In medical decision making, for example, relev ant information is often scattered, heterogeneous, and embedded in lengthy , partially noisy descriptions. Prior studies and empirical observ ations suggest that fail- ures on such problems can largely be attributed to two fac- tors: insufficient access to relev ant knowledge and limited ability to reason over that knowledge. T o address these is- sues, two major lines of research have emerged. Retrie v al- ∗ Corresponding author . Real-World Alignment Linear Divergent Convergent Abstract Reasoning Multi-Path Reasoning Information-Dense Reasoning Keypoint Extraction Knowledge -Driven Grouping Initial Reasoning State Retrieval and Reasoning Intermediate Reasoning States Terminal Reasoning State Figure 1: Reasoning Paradigms Comparison. Traditional CoT fol- lows Linear/Di ver gent paths on unstructured sequences. GroupRAG transforms monolithic inputs into a structured problem space, em- ploying a Con v ergent net via k eypoint grouping for real-w orld align- ment. Augmented Generation (RAG) incorporates external infor- mation sources to alle viate the reliance on parametric mem- ory for knowledge-dense tasks [ Lewis et al. , 2020 ] . In par - allel, Chain-of-Thought (CoT) prompting and related distil- lation methods improve reasoning performance by explic- itly modeling intermediate inference steps [ W ei et al. , 2022; Hsieh et al. , 2023 ] . Despite their ef fecti veness, existing RA G- and CoT -based approaches exhibit notable limitations in comple x, real-world settings. In RAG systems, retriev ed chunks often fail to pre- cisely match the information actually required to answer the question, and models may struggle to align, filter , and in- tegrate retrieved content into coherent reasoning [ Izacard et al. , 2023 ] . CoT -based methods, while improving reasoning fluency , remain heavily dependent on the model’ s internal knowledge: when critical facts are missing or misaligned, the resulting reasoning chains may appear coherent but are grounded in incomplete or incorrect premises. Consequently , simply retrieving more information or generating longer rea- soning chains is often insufficient for reliably solving com- plex problems. Recent work has attempted to bridge this gap through ei- ther structured retriev al mechanisms or tighter integration of retriev al and reasoning, such as organizing external knowl- edge using graphs and interleaving retriev al steps within rea- soning traces, respectively [ T ri vedi et al. , 2023; W ang et al. , 2024; Fan et al. , 2025; Guo et al. , 2024 ] . While promising, these approaches often increase system complexity or still op- erate at a coarse granularity , treating each question as a single undifferentiated unit for retrie v al and reasoning. A key insight underlying this work is that the difficulty of complex real-world questions—unlike formal mathemat- ical or symbolic reasoning problems—lies not only in miss- ing knowledge or insufficient reasoning capacity , but in how the pr oblem is internally structur ed and repr esented [ Newell and Simon, 1972; Barto et al. , 2013; Eckstein and Collins, 2021 ] . Cognitive science has long shown that human prob- lem solving is highly sensitive to problem representation: complex tasks are understood and solved by organizing in- formation into structured problem spaces rather than treating them as undifferentiated sequences [ Newell and Simon, 1972; Cushen and W iley , 2012; Ho et al. , 2022 ] . In real-w orld ques- tion answering, inputs are rarely monolithic. F or instance, when a patient describes their condition to a physician, the information is often con veyed through a lengthy , loosely or- ganized narrati ve that interleav es symptoms, medical history , test results, and irrelev ant details. Howe ver , language mod- els are typically forced to process such inputs as a single flat sequence, leading retriev al to operate at an inappropriate granularity . This representational mismatch in turn gives rise to entangled and error-prone reasoning [ Zhang et al. , 2023; Eckstein and Collins, 2021 ] . This perspectiv e motiv ates a different design principle: rather than pushing models to retrie ve more information or to generate increasingly long reasoning chains, we aim to enable them to reason ov er a structured problem space by explic- itly uncovering the latent structure of a question. From this view , effecti ve real-world reasoning resembles human prob- lem solving: it begins by identifying meaningful substruc- tures, proceeds through parallel inference from multiple con- ceptual starting points, and gradually integrates these partial inferences into a coherent conclusion, as illustrated in Fig- ure 1. Accordingly , we focus on identifying key information points within a question and organizing them into meaningful groups that reflect underlying knowledge relations, thereby providing an explicit structural scaf fold for both retrie val and reasoning. Based on this design principle, we propose GroupRA G , a cogniti vely inspired, group-aware retriev al-and-reasoning framew ork based on knowledge-dri ven keypoint grouping. GroupRA G treats grouping as a first-class operation that makes the internal structure of a question explicit, trans- forming unstructured inputs into a set of structured reasoning units. Retriev al and reasoning are then performed at the group lev el and subsequently integrated, allowing the two processes to be temporarily decoupled yet mutually reinforcing: re- triev al provides group-specific knowledge at an appropriate granularity , while reasoning over each group guides the se- lection and integration of relev ant information to ward a final answer . By explicitly discovering the latent grouping structure of knowledge points within a question, our approach reframes complex real-world reasoning as structured exploration over a problem space. This decomposition transforms a mono- lithic reasoning task into a set of lower -complexity , group- lev el subtasks, mirroring how humans organize and navigate complex problem representations in cogniti ve science. As a result, retriev al and reasoning are jointly constrained at an ap- propriate granularity , yielding modular and interpretable in- termediate reasoning traces that are amenable to supervision, distillation, and alignment. • W e introduce a cognitively inspired, group-aware retriev al-and-reasoning framework that explicitly mod- els the internal structure of complex questions by or- ganizing key information points into knowledge-dri ven groups, enabling retrie val and reasoning to operate at an appropriate, structure-aware granularity . • W e reformulate conv entional Chain-of-Thought reason- ing from a single linear chain or div ergent tree into a con vergent reasoning net, in which inference is initi- ated from multiple grouped reasoning roots, augmented with group-specific retriev al, and progressiv ely inte- grated into a coherent global conclusion. • W e demonstrate that GroupRA G consistently outper- forms a wide range of RA G-based and CoT -based meth- ods on knowledge-intensi ve medical question answer- ing, providing empirical evidence that explicit prob- lem structuring—rather than longer reasoning chains alone—is critical for robust real-w orld reasoning. 2 Related W ork 2.1 Chain-of-Thought Chain-of-Thought (CoT) prompting was introduced to elicit explicit multi-step reasoning in large language models by generating intermediate inference steps before a final an- swer , improving performance on reasoning tasks [ W ei et al. , 2022 ] . Subsequent enhancements improve robustness and precision, including methods that sample multiple reason- ing paths, structure reasoning from simple to complex sub- problems, or generate executable representations for struc- tured reasoning [ W ang et al. , 2022; Zhou et al. , 2022; Chen et al. , 2022 ] . Beyond linear chains, recent work adopts richer reasoning structures such as trees, forests, or more complex graphs to explore multiple solution trajecto- ries [ Y ao et al. , 2023; Chen et al. , 2025; Bi et al. , 2024; Pande y et al. , 2025 ] . Despite these advances, most CoT ap- proaches—including those using tree, forest, or more com- plex graph structures—still rely on a single start point, high- lighting the continued reliance on single-start inference paths in current CoT and its extensions. 2.2 Retriev al-A ugmented Generation Retriev al-Augmented Generation (RAG) improv es language models by retrie ving external knowledge, reducing re- liance on parametric memory for knowledge-intensi ve tasks [ Lewis et al. , 2020 ] . Early RA G frame works retriev e unstructured text passages to condition genera- tion [ Izacard et al. , 2023 ] , while more recent work en- hances retriev al relev ance and efficienc y through task-aw are So long... So much info... Let’s focus on Keypoi nts! Acute Pericarditis Hemodynamic Stability Pericardial Friction Rub Clinical Features It’s knowledge-driven! Not just semantic The pain characteristic match the classical prototype of acute pericarditis. Local Reasoni ng Presence of pericardial friction rub strongly supports acute pericarditis. Hemodynamic stability argues against cardiac tamponade or severe infection. Patient background and imaging make MI, pneumonia and pleural disease less likely. Global Reasoni ng Core Support Support Core Acute Peri cardi ti s Acute Peri cardi ti s Not MI/Pneumoni a/ Pleural Di sease Not C ardi ac T amponade/ S e v ere In f ecti on F i nal Ans w er : B O pti on Analysi s : Rati onale : Ans w er Ali gnment A is false due to kno w ledge... B is right due to kno w ledge... According to the Integrated CoT, ... Kno w ledge - Dri v en Groupi ng A 29 - y ear-old m an presents to the emergency department due to central c h est pain over t h e past 3 days wh i c h i s constant and unrelated to e x ertion. The pain is s h arp , severe , increases wh en lyi ng do w n , and i mpro v es w i t h leani ng f or w ard . Th e pai n also radi ates to h i s s h oulders and nec k. Th e pati ent h as no past medi cal h i story . H e h as smo k ed 10 ci garettes per day for the past 7 years and occasionally drinks alcohol. He presents w ith vital signs : blood pressure 110 / 70 mm H g , regular radi al pulse o f 95 /mi n , and temperature 3 7. 3 ° C (99.1° F ). O n p h ysi cal e x am , a scratc h i ng sound o f to - and -f rom c h aracter i s audible over the left sternal border at end - e x piration w ith the patient leaning for w ard. His c h est X -ra y is nor m al. W hich of the follo w ing is the therapy for this patient ? A : Indomethacin +/ - omepra z ole B : Ibuprofen + colchicine +/ - omepra z ole C : Pericardiocentesis D : Pericardiectomy Integrated C o T 8 1 2 3 7 6 5 4 1 2 3 4 5 6 7 8 Knowledge-Driven Structuring Parallel Groupwise Reasoning Proper-Granularity Retrieval & Reasoning Figure 2: Illustration of the GroupRAG reasoning process on a clinical case, featuring keypoint extraction, kno wledge-driven grouping, local and global reasoning, and answer alignment. queries or structured, knowledge-graph-based representa- tions [ Lee et al. , 2024; Guo et al. , 2024; Fan et al. , 2025 ] . T o better connect external kno wledge with reasoning, recent work combines RA G with Chain-of-Thought, integrating re- triev al with intermediate reasoning steps to produce retriev al- augmented chains of thought applicable to multi-step and domain-specific tasks [ T rivedi et al. , 2023; W ang et al. , 2024; Mavi et al. , 2023; Li et al. , 2024; Ma et al. , 2023 ] . 2.3 Reasoning in Cognitive Science Human problem solving has long been characterized in cog- nitiv e science as a process of constructing and navigating structured problem spaces, rather than following a single lin- ear chain of inference. Classic work by Newell and Simon conceptualizes reasoning as search ov er an internal problem space defined by states, operators, and goals, with success depending on how the problem is represented and explored rather than on a fixed inference trajectory [ Newell and Simon, 1972 ] . This perspectiv e highlights that human reasoning nat- urally inv olves multiple paths and intermediate states shaped by understanding the problem’ s internal structure. Subsequent research in cognitiv e psychology shows that reasoning is highly sensiti ve to problem representation: inap- propriate initial representations can lead to impasses, while restructuring or dynamically adapting task representations enables new solution paths and guides exploration [ Barto et al. , 2013; Cushen and W iley , 2012; Ho et al. , 2022 ] . These findings collectively indicate that real-world reasoning is not purely chain-based, but in volves recognizing latent problem structure and exploring solutions from multiple conceptual starting points, gradually con verging to wards a solution. 3 Method 3.1 Group-A ware Retriev al and Reasoning T o illustrate the workflo w of GroupRAG, we take complex real-world medical problems as representati ve examples. Our goal is to model such problems in order to identify multiple starting points for reasoning chains. Inspired by how human students approach complex problems, we first employ a lan- guage model to e xtract k ey information points from the prob- lem. This step, termed Keypoint Extraction , is analogous to ho w students highlight or circle important information in a problem. The extracted keypoints are then organized to achiev e a structured representation of the problem. W e implement a Knowledge-Driv en Grouping strategy , where a fine-tuned model leverages retrie ved external knowledge to group re- lated keypoints. This process resembles how students loop up reference materials to link strongly associated information points. Unlike con ventional semantic-matching-based group- ing, our approach is kno wledge-driven, enabling more mean- ingful organization. After grouping, each group corresponds to a specific knowledge concept or category label. Through Ke ypoint Extraction and Knowledge-Dri ven Grouping, we achiev e information structuring, transforming complex and lengthy problems into ke ypoint groups. Each group is then treated as an independent starting point for reasoning. W e perform groupwise retrie val and reasoning, constrained by problem conditions. This approach narrows retriev al ke ywords, shifting from coarse-grained (problem- lev el) to fine-grained (group-le vel) retrie val, while also limit- ing the reasoning scope to reduce interference from unrelated domains. The outcome is multiple Local Reasoning conclu- sions, each corresponding to a keypoint group. These local reasoning conclusions can be categorized into three types according to their relev ance and contribution to the problem: core conclusions ( Core ), supporting conclu- sions ( Support ), and noise ( Noise ). Building upon this, we utilize a model to identify and select the conclusions cate- gorized as Cor e or Support , and subsequently integrate them into a coherent global Chain-of-Thought (CoT). The CoT ob- tained by fusing multiple local reasoning conclusions consti- tutes the Global Reasoning . Global Reasoning produces a readable and high- confidence reasoning chain. T o align with downstream ev aluation, we perform an Answer Alignment step. Starting from Global Reasoning, the model conducts fine-grained retriev al ov er candidate answer options, outputting the correct choice, option analysis, and rationale. Empirical studies ha ve shown that this step is crucial for small language models, preventing situations where the CoT is correct but the final selected option is wrong. 3.2 System Design Modular Pipeline and Stage-wise T raining Building on the fiv e-stage workflow of GroupRA G— Ke ypoint Extraction, Knowledge-Dri ven Grouping, Local Reasoning, Global Reasoning, and Answer Alignment—this section presents the design details of the system. Each stage has independent inputs and outputs, functioning as sequen- tially connected modules. T o ensure high-quality outputs at each module while reduc- ing deployment and experimental costs, we implement each module using a dedicated, fine-tuned small language model. Concretely , we first run a large training dataset of medical questions through the complete pipeline, recording the inputs and outputs at each of the fiv e modules. These records are then used as hard labels to fine-tune five base models sepa- rately , ef fectiv ely creating experts specialized for each sub- task. Among these modules, the Global Reasoning module is tasked with e v aluating contrib utions of multiple local reason- ing conclusions to answering the question. This process is inherently soft and context-dependent as multiple combina- tions of conclusions can be valid. Supervised fine-tuning with hard labels is thus limited in capturing these nuanced depen- dencies. T o better align the module with this task, we adopt a reinforcement learning (RL) approach, specifically utiliz- ing a policy gradient method to fine-tune the selection policy against a custom-designed re ward function. Global Reasoning Optimization The Global Reasoning module consists of two steps. First, a selection model identifies and selects the local reasoning con- clusions that serv e as Cor e or Support conclusions. Second, a synthesis model combines the selected conclusions into a co- herent global Chain-of-Thought. W e independently fine-tune the models used in each step of Global Reasoning, and further optimize the first-step model with a policy gradient method to enhance the selection precision. The key principle in Global Reasoning is to ensure that all Cor es are fully included, Noises are av oided, and Supports are encouraged. T o quantitatively guide the model tow ards this goal, we design a reward function that captures the se- lection quality of local reasoning conclusions, defined as the W eighted Infer ence F-scor e (WIF) . W eighted Inference F-score (WIF). Let the sets of local reasoning conclusions be C = { C i } (Core) , S = { S j } (Support) , N = { N k } (Noise) . For a model-selected subset of local reasoning P ⊂ C ∪ S ∪ N , we define: Core Recall: R c = | P ∩ C | | C | or 1 ( if | C | = 0) Support Recall: R s = | P ∩ S | | S | or 0 ( if | S | = 0) Noise Recall: R n = | P ∩ N | | N | or 0 ( if | N | = 0) WIF ( P ) = R α c · (1 − R n ) β · (1 + γ R s ) where the hyperparameters are chosen such that α ≥ β ≫ γ , prioritizing coverage of Cores, penalizing selection of Noises, and mildly encouraging inclusion of Supports. In practice, we set α = 2 . 5 , β = 2 , γ = 0 . 5 . If P = ∅ , we assign WIF ( P ) = 0 to discourage the model from skipping all conclusions. P olicy Optimization. W e treat the model as a stochastic pol- icy π θ ( P | x ) that outputs a subset of local reasoning conclu- sions P giv en a problem x . For each problem, we generate K candidate selections (rollouts) { P k } K k =1 and compute the corresponding rew ards R k = WIF ( P k ) . The baseline reward for advantage estimation is the mean re ward ov er rollouts: ¯ R = 1 K K X k =1 R k , ˆ A k = R k − ¯ R std ( { R i } ) + ϵ . W e parameterize the model π θ ( P | x ) as independent Bernoulli distributions over each local reasoning conclusion. For conclusion l i , the model outputs a probability p i = π θ ( l i = 1 | x ) of being selected. A rollout P k is gener- ated by sampling each l i independently according to p i . The log-probability of P k is then computed as log π θ ( P k | x ) = X l i ∈ P k log p i + X l i / ∈ P k log(1 − p i ) , The policy is then updated using a polic y gradient loss: L = − 1 K K X k =1 ˆ A k · log π θ ( P k | x ) . (1) This training procedure ensures that the model learns to se- lect local reasoning conclusions that maximize WIF , produc- ing a coherent global Chain-of-Thought that prioritizes Cor e while av oiding Noise . Answer Global Reasoning Composed Chain of Thought (CoT) Answer Alignment Final Answer Option Analysis Rationale Data Flow RAG Keypoint Extraction Clinical Findings Symptoms Lab Results Medical History Knowledge -Driven Grouping Symptom Groups Patient Context Prior Treatments Local Reasoning Inference Pattern 1 Inference Pattern 2 Inference Pattern 3 Information Structuring Convergent Reasoning Figure 3: An Abstract Overvie w of GroupRA G. 3.3 Retriev al-A ugmented Generation in GroupRA G Retriev al-Augmented Generation (RAG) plays a crucial role in GroupRAG and is integrated into three key modules: Knowledge-Dri ven Grouping, Local Reasoning, and Answer Alignment. Rather than serving as a standalone retriev al com- ponent, RA G is tightly coupled with the groupwise reason- ing structure and operates at dif ferent granularities across the pipeline. In Knowledge-Dri ven Grouping, RA G is applied at the keypoint level. For each extracted keypoint, the model re- triev es external knowledge to ground it in a relev ant kno wl- edge context. When multiple keypoints retrie ve overlap- ping or highly related knowledge, they are more likely to be grouped together , as they are inferred to be associated with the same underlying medical concept. This retriev al-guided grouping enables the model to organize keypoints based on shared knowledge relev ance, rather than surf ace-level seman- tic similarity . In Local Reasoning, RA G operates at the group lev el. Each group of keypoints is treated as a unified query for retriev al, with the objectiv e of identifying knowledge that jointly ex- plains multiple keypoints. This groupwise retriev al is par- ticularly important in knowledge-intensi ve domains such as medicine. For e xample, retrieving information for two symp- toms independently may lead to different candidate diseases, whereas retrie ving their combination may re veal that they are associated symptoms of the same disease. By conditioning retriev al on grouped keypoints, the model is able to perform more precise and context-a ware reasoning. RA G in Answer Alignment is demonstrated in Subsec- tion 3.1. Overall, RA G in GroupRA G is adapted to different stages of the reasoning process, operating at varying granu- larities from the keypoint lev el to the group lev el and the op- tion level. This group-aware integration of retrie val enables GroupRA G to progressively refine both the granularity and relev ance of retrie ved kno wledge, forming a solid foundation for the subsequent reasoning process. 4 Experiments 4.1 Dataset and Model T o e valuate the effecti veness of GroupRAG in address- ing complex real-world reasoning problems, we adopt MedQA [ Jin et al. , 2021 ] , a USMLE-style medical dataset, for both training and ev aluation. MedQA consists of long and information-dense clinical case descriptions that require multi-step reasoning and span a wide range of domains in ba- sic and clinical medicine. From MedQA, we randomly select 2,000 questions as the training set, ensuring balanced cover - age across different medical specialties and question types. Follo wing the procedure described in Subsection 3.2, each training question is sequentially processed by the five mod- ules of GroupRA G, each instantiated with GPT -4o [ OpenAI, 2024 ] , with the module-wise outputs recorded as intermedi- ate supervision signals for training. W e choose LLaMA3.1-8B [ Dubey et al. , 2024 ] as the base model. Using the collected intermediate data, the base model is trained and specialized into five lightweight sub-models, each corresponding to a specific sub-task in the GroupRA G pipeline. For ev aluation, we construct a separate test set of 400 information-dense questions, some of which include distracting or irrele vant details, aiming to assess the robust- ness and reasoning capability of GroupRA G under challeng- ing conditions. 4.2 Evaluation Metrics W e design stage-wise e valuation metrics for the fiv e modules of GroupRA G. Keypoint Extraction. Extraction performance is e valuated using precision, recall, and F1 score. For each question, the keypoints e xtracted by the trained lightweight model are com- pared against a gold standard set of keypoints extracted by GPT -4o. Precision is defined as the proportion of predicted keypoints that correctly match the gold standard keypoints, while recall measures the proportion of gold standard key- points that are successfully recovered. The F1 score is com- puted as the harmonic mean of precision and recall, reflecting the accuracy of ke ypoint extraction. Knowledge-Driv en Grouping . The grouping stage aims to partition the extracted keypoints into groups associated with the same pieces of knowledge, which naturally constitutes a clustering task rather than a classification task. W e adopt the BCubed ev aluation, which is specifically designed for entity-lev el clustering ev aluation [ Bagga and Baldwin, 1998 ] . BCubed Precision measures, for each keypoint, the propor- tion of other keypoints in the same predicted group that also belong to the same gold-standard group generated by GPT - 4o. BCubed Recall measures the proportion of keypoints in the gold-standard group that are correctly placed into the same predicted group. BCubed F1 is computed as the har- monic mean of BCubed Precision and BCubed Recall, and the final score is obtained by av eraging over all ke ypoints. Local and Global Reasoning. F or local reasoning based on each keypoint group, we use GPT -4o to ev aluate the factual and logical correctness of each inference, and then compute the ov erall accurac y o ver all inferences. For global reasoning, we adopt the WIF function defined in Subsection 3.2 to assess the model’ s ability to distinguish Core, Support, and Noise lo- cal conclusions. This ensures that the assembled global rea- soning co vers all essential conclusions while filtering out dis- tracting or irrelev ant inferences. Answer Alignment. T o ev aluate the final output of GroupRA G, we compare the selected answer options with the correct options in the dataset, and calculate the overall accuracy across the test set. This accuracy serves as the pri- mary metric for horizontal comparison with other models and methods. 4.3 Experimental Design T o systematically e valuate the effecti veness of GroupRA G and analyze the contribution of its individual modules, we design three sets of experiments: leave-one-out ablation, pro- gressiv e ablation, and joint comparison across dif ferent mod- els and methods. Leav e-One-Out Ablation. In this setting, we assess the marginal contribution of each module in GroupRA G by re- moving one module at a time while keeping all other compo- nents unchanged. Specifically , for each of the fiv e modules, we either replace the specially trained model with the base model, or remove the RAG component within the module, if it originally contains one. Each ablation variant is ev aluated using the same set of metrics as the complete GroupRA G system. The performance of each variant is then compared against the complete GroupRA G pipeline, enabling a fine- grained analysis of the individual impact of each module. Progr essive Ablation. While leave-one-out ablation fo- cuses on isolated effects, progressi ve ablation is designed to examine the cumulati ve contribution of GroupRA G’ s mod- ules. Starting from the complete GroupRA G system, we pro- gressiv ely replace trained models with the base model and sequentially remov e RAG modules in the order they appear in the pipeline. This process continues until the system de- generates into a baseline configuration composed entirely of Extract F1 Group F1 Local Acc.(%) Global WIF Answer Acc.(%) GroupRA G 0.962 0.802 73.14 1.13 71.75 w/o Ext. T rain 0.951 0.816 70.25 1.06 68.50 w/o Gro. T rain 0.962 0.714 64.52 0.93 63.00 w/o Loc. T rain 0.962 0.802 61.87 0.85 64.25 w/o Glo. T rain 0.962 0.802 73.14 0.69 68.25 w/o Ans. T rain 0.962 0.802 73.14 1.13 67.50 w/o Gro. RA G 0.962 0.706 62.87 0.91 64.50 w/o Loc. RA G 0.962 0.802 59.54 0.86 63.25 w/o Ans. RA G 0.962 0.802 73.14 1.13 67.25 T able 1: Leav e-One-Out Ablation Results. Each v ariant is compared with the full pipeline (first row) to demonstrate the marginal contri- bution of each module. “w/o” indicates removing each component independently from the full pipeline. base models and without any RAG component. Each experi- mental setting is compared with the preceding one, allo wing us to observe ho w performance ev olves as modules are incre- mentally remov ed. Joint Comparison Across Models and Methods. In the final set of experiments, we aim to ev aluate the capability gap of small language models under different reasoning and retriev al paradigms, and to compare their performance with a reference model. T o this end, we conduct a horizontal comparison across dif ferent models and methods, ev aluating LLM, SLM, and trained SLM under CoT prompting, standard RA G, and GroupRAG. Specifically , for the trained SLM set- ting, models are separately fine-tuned under different supervi- sion signals corresponding to each method, such as question- answer pairs, CoT , and the intermediate data of GroupRA G. For the LLM setting, GPT -4o is included as a reference model to provide an approximate upper bound on task performance. For clarity and consistency , we focus on final answer accu- racy as the sole e valuation metric in this comparison. 4.4 Results Leav e-One-Out Ablation. Results are shown in T able 1. Since the fi ve modules are ex ecuted sequentially , ablating a specific module only affects its own ev aluation metric and those of downstream modules, while leaving upstream met- rics unchanged. Compared with the complete GroupRA G system, all ablation v ariants exhibit varying degrees of de gra- dation in final answer accuracy , indicating that each of the fiv e trained modules and their associated RA G components plays a role in the overall performance of GroupRA G. A closer comparison across ablation variants shows that four configurations experience the largest drops in answer accu- racy (approximately 8%), corresponding to the remov al of trained models and RAG components in the Knowledge- Driv en Grouping and Local Reasoning modules. In contrast, ablating the Keypoint Extraction and Global Reasoning mod- ules leads to relati vely smaller decreases in final accuracy (ap- proximately 3%). Finally , when comparing each ablation set- ting with the complete GroupRA G configuration, we observe consistent degradation in downstream e valuation metrics fol- Extract F1 Group F1 Local Acc.(%) Global WIF Answer Acc.(%) Acc. ∆ (%) GroupRA G 0.962 0.802 73.14 1.13 71.75 − Ext. T rain 0.951 0.816 70.25 1.06 68.50 -3.25 − Gro. T rain 0.951 0.724 62.16 0.94 62.75 -5.75 − Gro. RA G 0.951 0.702 61.89 0.88 61.00 -1.75 − Loc. T rain 0.951 0.702 58.57 0.75 56.75 -4.25 − Loc. RA G 0.951 0.702 55.74 0.69 56.00 -0.75 − Glo. T rain 0.951 0.702 55.74 0.55 53.75 -2.25 − Ans. T rain 0.951 0.702 55.74 0.55 52.75 -1.00 − Ans. RA G 0.951 0.702 55.74 0.55 51.00 -1.75 T able 2: Progressive Ablation Results. Each variant is compared with the previous row to demonstrate the cumulati ve ef fect of the integrated modules. “ − ” indicates cumulati ve remo val. Model Method -(%) +CoT Prompting(%) +Naiv e RA G(%) +Group- RA G(%) GPT -4o 89.00 89.75 87.75 85.25 L3.1-8B(base) 48.25 54.50 53.50 61.00 L3.1-8B(trained) 52.75 61.50 58.25 71.75 T able 3: Joint Comparison Across Models and Methods. L3.1-8B refers to LLaMA 3.1-8B. lowing the remo val of upstream modules. Progr essive Ablation. Results are shown in T able 2. As modules are removed sequentially following the pipeline or- der , ev aluation metrics of downstream stages exhibit a grad- ual decline, forming a stage-wise degradation pattern that aligns with the pipeline structure. As fewer modules are re- tained, de gradation in upstream outputs and intermediate rea- soning quality accumulates and results in a monotonic de- crease in final answer accurac y . The final column, denoted as ∆ , reports the accurac y drop of each configuration relati ve to the previous one. Among all transitions, remo ving the trained models of the Grouping and Local Reasoning modules leads to the largest accurac y declines. Joint Comparison Across Models and Methods. Re- sults are shown in T able 3. For the untrained base model LLaMA3.1-8B, CoT prompting and Naive RA G yield accuracy improvements of approximately 5-6%, whereas GroupRA G leads to a substantially larger gain of around 13%. Across all retrie val and reasoning methods, the trained LLaMA3.1-8B outperforms its untrained counter- part. Among these configurations, applying GroupRA G to the trained small language model achieves the highest accu- racy within the small model setting, reaching 71.75%. As a reference, GPT -4o achieves the best performance under both the non-augmented setting (89.00%) and CoT prompt- ing (89.75%), while incorporating Naiv e RA G or GroupRA G results in slight performance degradation. 4.5 Discussion Results of the leav e-one-out ablation study indicate that prop- erly uncovering problem structure and conducting local rea- soning are central to accurate problem solving. In contrast, tasks that are more procedural in nature—for example, ex- tracting information—can be properly handled by untrained small models, and thus ha ve limited impact on the final an- swer accuracy . Results of the progressiv e ablation study demonstrate that the performance gain of GroupRAG arises from the cumula- tiv e synergy between modules. The quality of upstream out- puts directly affects subsequent reasoning, and any upstream degradation is amplified through the pipeline, ultimately im- pacting the final answer . Joint comparison across models and methods indicates that GroupRA G ef fectiv ely compensates for the knowledge and reasoning limitations of small language models, enabling them to answer complex questions more accurately and ro- bustly . In contrast, for large language models with strong inherent knowledge co verage and implicit reasoning capabil- ities (e.g., GPT -4o), introducing GroupRAG may slightly re- duce accurac y . This suggests that e xternal retrie v al and struc- tured reasoning may introduce redundant information or in- terfere with the efficient internal reasoning process of large language models. Overall, this experiment highlights the sig- nificant benefits of GroupRA G for small language models, while pro viding a reasonable explanation for the observ ed ef- fects on large language models. Future work could explore incorporating a multi-agent collaboration mechanism within the GroupRA G frame work. This would replace the current fixed modular reasoning pipeline, allowing dif ferent agents to coordinate dynamically during reasoning and uncov er potential latent structures in the problem. Another direction is to de velop more sophisticated methods for modeling internal problem structures, which could better constrain retrie val and reasoning, thereby im- proving both accuracy and rob ustness across tasks and model scales. 5 Conclusion In this paper, we propose GroupRAG, a cognitiv ely inspired, group-aware retrie val and reasoning framework that explic- itly models the internal structure of complex questions. By reformulating con ventional Chain-of-Thought into a conv er- gent reasoning net, GroupRA G enables inference to proceed from multiple grouped reasoning roots in parallel and eventu- ally conv erge into a coherent global conclusion. GroupRA G allows retriev al and reasoning to be temporarily decoupled yet mutually reinforcing, with retriev al providing knowledge at an appropriate granularity and reasoning guiding the se- lection and integration of relev ant information. Empirical ev aluations on knowledge-intensi ve medical problems vali- date that GroupRAG outperforms representative RA G- and CoT -based methods. Ultimately , this w ork highlights that e x- plicitly modeling problem structure is a promising direction for robust real-world reasoning, beyond the mere extension of reasoning chains. References [ Bagga and Baldwin, 1998 ] Amit Bagga and Breck Baldwin. Entity-based cross-document coreferencing using the vec- tor space model. In COLING 1998 V olume 1: The 17th in- ternational conference on computational linguistics , 1998. [ Barto et al. , 2013 ] Andrew G Barto, George Konidaris, and Christopher V igorito. Behavioral hierarchy: exploration and representation. In Computational and r obotic models of the hierar chical organization of behavior , pages 13–46. Springer , 2013. [ Bi et al. , 2024 ] Zhenni Bi, Kai Han, Chuanjian Liu, Y ehui T ang, and Y unhe W ang. F orest-of-thought: Scaling test- time compute for enhancing llm reasoning. arXiv pr eprint arXiv:2412.09078 , 2024. [ Chen et al. , 2022 ] W enhu Chen, Xueguang Ma, Xin yi W ang, and W illiam W Cohen. Program of thoughts prompting: Disentangling computation from reason- ing for numerical reasoning tasks. arXiv pr eprint arXiv:2211.12588 , 2022. [ Chen et al. , 2025 ] Qiguang Chen, Libo Qin, Jinhao Liu, Dengyun Peng, Jiannan Guan, Peng W ang, Mengkang Hu, Y uhang Zhou, T e Gao, and W anxiang Che. T o- wards reasoning era: A survey of long chain-of-thought for reasoning large language models. arXiv pr eprint arXiv:2503.09567 , 2025. [ Cushen and W iley , 2012 ] Patrick J. Cushen and Jennifer W iley . Cues to solution, restructuring patterns, and reports of insight in creati ve problem solving. Consciousness and Cognition , 21(3):1166–1175, 2012. [ Dubey et al. , 2024 ] Abhimanyu Dube y , Abhina v Jauhri, Abhinav Pandey , Abhishek Kadian, Ahmad Al-Dahle, Aiesha Letman, Akhil Mathur , Alan Schelten, Amy Y ang, Angela Fan, et al. The llama 3 herd of models. arXiv e-prints , pages arXiv–2407, 2024. [ Eckstein and Collins, 2021 ] Maria K Eckstein and Anne GE Collins. How the mind creates structure: Hierarchical learning of action sequences. In Cogsci... annual confer- ence of the cognitive science society . cognitive science so- ciety (us). confer ence , volume 43, page 618, 2021. [ Fan et al. , 2025 ] T ianyu Fan, Jingyuan W ang, Xubin Ren, and Chao Huang. Minirag: T ow ards extremely sim- ple retriev al-augmented generation. arXiv pr eprint arXiv:2501.06713 , 2025. [ Guo et al. , 2024 ] Zirui Guo, Lianghao Xia, Y anhua Y u, T u Ao, and Chao Huang. Lightrag: Simple and fast retrie val-augmented generation. arXiv pr eprint arXiv:2410.05779 , 2024. [ Ho et al. , 2022 ] Mark K Ho, David Abel, Carlos G Correa, Michael L Littman, Jonathan D Cohen, and Thomas L Griffiths. People construct simplified mental representa- tions to plan. Natur e , 606(7912):129–136, 2022. [ Hsieh et al. , 2023 ] Cheng-Y u Hsieh, Chun-Liang Li, Chih- Kuan Y eh, Hootan Nakhost, Y asuhisa Fujii, Alex Ratner , Ranjay Krishna, Chen-Y u Lee, and T omas Pfister . Dis- tilling step-by-step! outperforming lar ger language mod- els with less training data and smaller model sizes. In F indings of the Association for Computational Linguistics: A CL 2023 , pages 8003–8017, 2023. [ Izacard et al. , 2023 ] Gautier Izacard, Patrick Lewis, Maria Lomeli, Lucas Hosseini, Fabio Petroni, Timo Schick, Jane Dwiv edi-Y u, Armand Joulin, Sebastian Riedel, and Edouard Grav e. Atlas: Few-shot learning with retriev al augmented language models. Journal of Machine Learn- ing Researc h , 24(251):1–43, 2023. [ Jin et al. , 2021 ] Di Jin, Eileen Pan, Nassim Oufattole, W ei- Hung W eng, Hanyi Fang, and Peter Szolovits. What dis- ease does this patient ha ve? a large-scale open domain question answering dataset from medical exams. Applied Sciences , 11(14):6421, 2021. [ Lee et al. , 2024 ] Y oonsang Lee, Minsoo Kim, and Seung- won Hwang. Disentangling questions from query generation for task-adaptiv e retriev al. arXiv preprint arXiv:2409.16570 , 2024. [ Lewis et al. , 2020 ] Patrick Lewis, Ethan Perez, Aleksan- dra Piktus, Fabio Petroni, Vladimir Karpukhin, Naman Goyal, Heinrich K ¨ uttler , Mike Lewis, W en-tau Y ih, Tim Rockt ¨ aschel, et al. Retriev al-augmented generation for knowledge-intensi ve nlp tasks. Advances in neural infor- mation pr ocessing systems , 33:9459–9474, 2020. [ Li et al. , 2024 ] Mingchen Li, Huixue Zhou, Han Y ang, and Rui Zhang. Rt: a retrieving and chain-of-thought frame- work for few-shot medical named entity recognition. J our- nal of the American Medical Informatics Association , 31(9):1929–1938, 2024. [ Ma et al. , 2023 ] Xilai Ma, Jing Li, and Min Zhang. Chain of thought with explicit evidence reasoning for few-shot re- lation extraction. arXiv preprint , 2023. [ Mavi et al. , 2023 ] V aibhav Ma vi, Ab ulhair Saparov , and Chen Zhao. Retriev al-augmented chain-of- thought in semi-structured domains. arXiv preprint arXiv:2310.14435 , 2023. [ Newell and Simon, 1972 ] Allen Newell and Herbert A. Si- mon. Human Pr oblem Solving . Prentice-Hall, Englew ood Cliffs, NJ, 1972. [ OpenAI, 2024 ] OpenAI. GPT -4o system card. https:// openai.com/index/gpt- 4o- system- card/, 2024. [ Pande y et al. , 2025 ] T ushar Pandey , Ara Ghukasyan, Ok- tay Goktas, and Santosh Kumar Radha. Adaptive graph of thoughts: T est-time adaptiv e reasoning unify- ing chain, tree, and graph structures. arXiv pr eprint arXiv:2502.05078 , 2025. [ T rivedi et al. , 2023 ] Harsh T rivedi, Niranjan Balasubrama- nian, T ushar Khot, and Ashish Sabharwal. Interleaving retriev al with chain-of-thought reasoning for knowledge- intensiv e multi-step questions. In Pr oceedings of the 61st annual meeting of the association for computational lin- guistics (volume 1: long papers) , pages 10014–10037, 2023. [ W ang et al. , 2022 ] Xuezhi W ang, Jason W ei, Dale Schu- urmans, Quoc Le, Ed Chi, Sharan Narang, Aakanksha Chowdhery , and Denny Zhou. Self-consistency improves chain of thought reasoning in language models. arXiv pr eprint arXiv:2203.11171 , 2022. [ W ang et al. , 2024 ] Zihao W ang, Anji Liu, Haowei Lin, Jiaqi Li, Xiaojian Ma, and Y itao Liang. Rat: Retrie val aug- mented thoughts elicit context-aware reasoning in long- horizon generation. arXiv preprint , 2024. [ W ei et al. , 2022 ] Jason W ei, Xuezhi W ang, Dale Schuur- mans, Maarten Bosma, Fei Xia, Ed Chi, Quoc V Le, Denny Zhou, et al. Chain-of-thought prompting elicits reasoning in large language models. Advances in neural information pr ocessing systems , 35:24824–24837, 2022. [ Y ao et al. , 2023 ] Shunyu Y ao, Dian Y u, Jeffre y Zhao, Izhak Shafran, T om Griffiths, Y uan Cao, and Karthik Narasimhan. T ree of thoughts: Deliberate problem solving with large language models. Advances in neural informa- tion pr ocessing systems , 36:11809–11822, 2023. [ Zhang et al. , 2023 ] Jiajie Zhang, Shulin Cao, T ingjian Zhang, Xin Lv , Juanzi Li, Lei Hou, Jiaxin Shi, and Qi Tian. Reasoning over hierarchical question decomposition tree for explainable question answering. In Pr oceedings of the 61st Annual Meeting of the Association for Computational Linguistics (V olume 1: Long P apers) , pages 14556–14570, 2023. [ Zhou et al. , 2022 ] Denny Zhou, Nathanael Sch ¨ arli, Le Hou, Jason W ei, Nathan Scales, Xuezhi W ang, Dale Schuur- mans, Claire Cui, Olivier Bousquet, Quoc Le, et al. Least- to-most prompting enables complex reasoning in large lan- guage models. arXiv preprint , 2022.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment