The Language of Touch: Translating Vibrations into Text with Dual-Branch Learning

The standardization of vibrotactile data by IEEE P1918.1 workgroup has greatly advanced its applications in virtual reality, human-computer interaction and embodied artificial intelligence. Despite these efforts, the semantic interpretation and under…

Authors: Jin Chen, Yifeng Lin, Chao Zeng

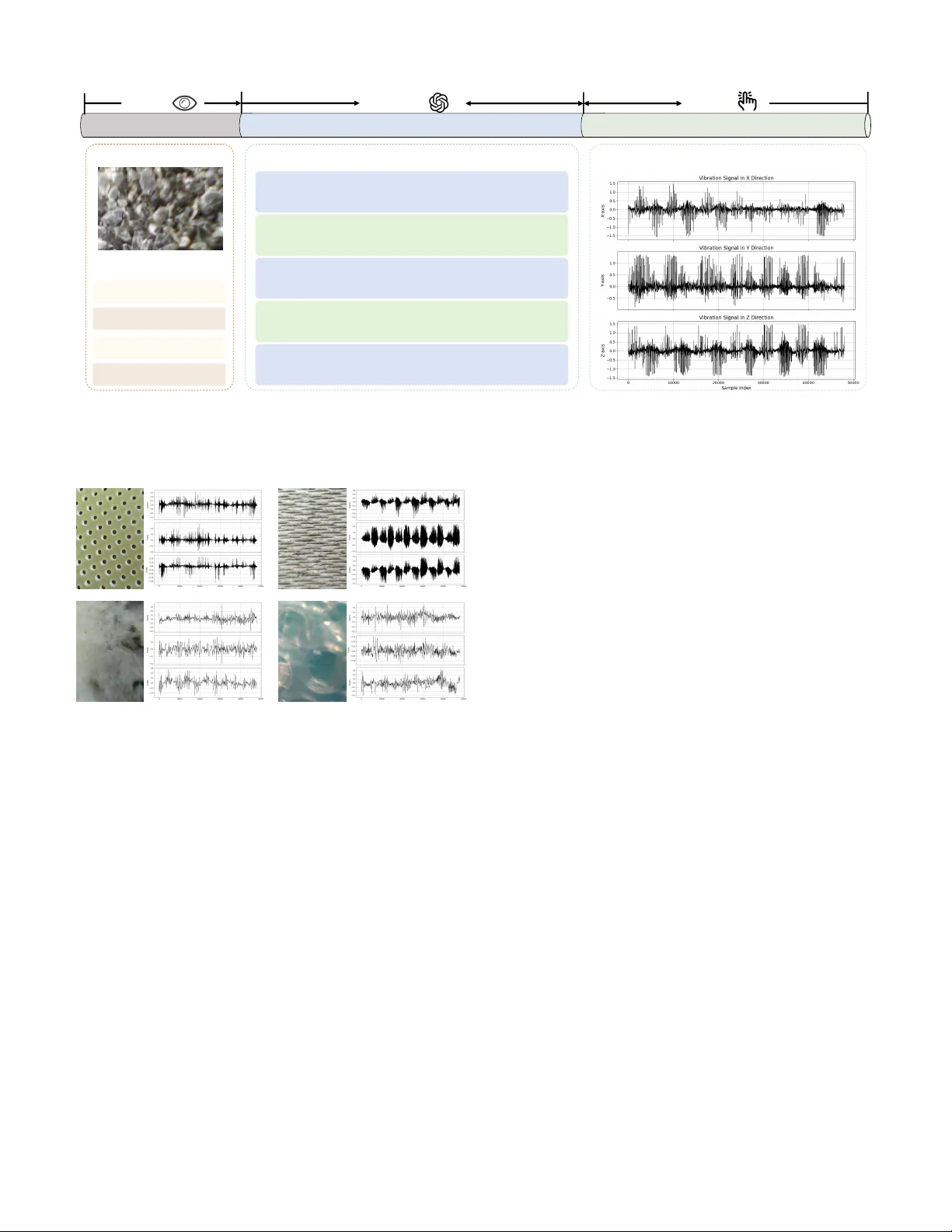

JOURNAL OF L A T E X CLASS FILES, VOL. 14, NO. 8, A UGUST 2021 1 The Language of T ouch: T ranslating V ibrations into T ext with Dual-Branch Learning Jin Chen, Y ifeng Lin, Chao Zeng, Si W u, and T iesong Zhao, Senior Member , IEEE Abstract —The standardization of vibrotactile data by IEEE P1918.1 workgr oup has greatly advanced its applications in vir - tual reality , human-computer interaction and embodied artificial intelligence. Despite these efforts, the semantic inter pretation and understanding of vibrotactile signals remain an unresolved challenge. In this paper , we make the first attempt to ad- dress vibrotactile captioning, i.e. , generating natural language descriptions from vibrotactile signals. W e propose V ibrotactile Periodic-A periodic Captioning (ViP A C), a method designed to handle the intrinsic properties of vibrotactile data, including hybrid periodic-aperiodic structures and the lack of spatial semantics. Specifically , ViP A C employs a dual-branch strategy to disentangle periodic and aperiodic components, combined with a dynamic fusion mechanism that adaptively integrates signal features. It also introduces an orthogonality constraint and weighting regularization to ensure feature complementarity and fusion consistency . Additionally , we construct LMT108-CAP , the first vibrotactile-text pair ed dataset, using GPT -4o to generate five constrained captions per surface image from the popular LMT -108 dataset. Experiments show that ViP A C significantly outperforms the baseline methods adapted from audio and image captioning, achieving superior lexical fidelity and semantic alignment. Index T erms —V ibrotactile Captioning, Haptic P erception, Multimodal Learning, Haptic Signal Processing. I . I N T R O D U C T I O N Haptics plays a critical role in enhancing multimodal in- teraction by complementing visual and auditory modalities. It has been widely applied in industrial automation, telemedicine, hazardous en vironment exploration, and e-commerce. T ypi- cally , haptic signals can be classified into kinesthetic and vibrotactile components [1]: the former in volves motion, force, and torque, while the latter conv eys surface-related properties such as friction, hardness, temperature, and roughness. Until now , vibrotactile signals can be captured by different types of sensors, including visuotactile sensors [2] and triaxial This work is supported by the National Science Foundation of China (Grant No. 62571131) (Corresponding author: T iesong Zhao.) J. Chen and Y . Lin are with the Fujian Ke y Lab for Intelligent Processing and W ireless Transmission of Media Information, Fuzhou University , Fuzhou 350108, China(e-mails: { 231110036, 241120007 } @fzu.edu.cn). T . Zhao is with the Fujian Ke y Lab for Intelligent Processing and W ireless T ransmission of Media Information, Fuzhou University , Fuzhou 350108, China and also with the Fujian Science and T echnology Innov ation Laboratory for Optoelectronic Information of China, Fuzhou 350108, China (e-mails: { t.zhao } @fzu.edu.cn). C. Zeng is with the School of Artificial Intelligence, Hubei University , W uhan, China, and the Ke y Laboratory of Intelligent Sensing System and Security , Hubei University and Ministry of Education, China. (email: chao.zeng@hubu.edu.cn) S. W u is with the School of Computer Science and Engineering, South China University of T echnology . (email: cswusi@scut.edu.cn) Semantic Retrieval Material Inspection & Automated Reports VR Semantic Augmentation in T actile Rendering V ibrotactile Captioning V ibration Signal Natural Language Caption This surface feels coarse and irregular ... Fig. 1. Application scenarios of vibrotactile captioning. accelerometers [3]. Although visuotactile sensors such as Gel- Sight hav e attracted considerable attention from the computer vision community [4], the IEEE P1918.1 W orking Group stan- dardized the representation of vibrotactile signals as multiple 1D time series in 2019 [5]. This step has significantly en- hanced the perception, transmission, reproduction, and cross- platform application of vibrotactile signals [6]. It has also promoted interoperability across tactile devices and enabled system-lev el integration in virtual reality , human–computer interaction, and embodied artificial intelligence applications [5]. Despite their broad applicability , vibrotactile signals are inherently complex and noisy , which makes their interpretation challenging [7]. T o facilitate a deeper understanding of these signals, we adapt the concept of audio-visual captioning and introduce the task of vibr otactile captioning , which translates these 1D signals into structured natural language. Rather than relying on low-le vel features or fixed taxonomies, natural language aligns more closely with human perception and facilitates intuitive understanding. As illustrated in Fig. 1, vibrotactile captioning enables three practical applications: (i) Semantic indexing , where captions support language-based search, retrie val, and reasoning; (ii) Material inspection , where standardized textual summaries assist in quality control and verification within industrial workflows; (iii) Virtual per - ception , where captions of fer semantic guidance to augment texture understanding in virtual reality en vironments with limited haptic resolution. Although Large Language Models (LLMs) have shown strengths in describing signals, their massiv e parameter and computational power requirements also limit their applications in the above scenarios. T o the best of our knowledge, vibrotactile captioning or similar works have not been explored. This might be attributed JOURNAL OF L A T E X CLASS FILES, VOL. 14, NO. 8, A UGUST 2021 2 to data scarcity and technical challenges. Data Scarcity . First, there are few publicly av ailable vibrotactile datasets, and most lack natural language annotations. For example, the LMT haptic texture database [8] offers triaxial acceleration signals but does not provide textual descriptions, reducing its v alue for cross-modal learning. Second, vibrotactile signals often contain relev ant and irrelev ant components, making it difficult to extract clean and meaningful features [9]. Third, human descriptions of tactile sensations vary widely depending on individual perception, expertise, and attention [10], which hin- ders consistent large-scale annotation. T echnical Challenges. First, captioning models dev eloped for image, video, or audio domains rely on structural priors such as spatial layouts, motion continuity , or acoustic regularity . The absence of these priors in vibrotactile signals leads to difficulties in cross- modal transfer . Second, vibrotactile data encodes material surface properties as comple x temporal dynamics that combine periodic patterns ( e.g. , from regular textures) and aperiodic patterns ( e.g. , from irregular surfaces or noise). This hybrid structure poses significant modeling challenges for single- stream encoders. T o address the above issues, we propose V iP A C, a caption- ing framework tailored to vibrotactile signals. T o mitigate data scarcity , we employ GPT -4o to generate textual descriptions of material surface images and pair them with their corresponding vibration signals to construct a cross-modal dataset. T o ov er- come modeling challenges, we design a dual-branch encoder that separately processes periodic and aperiodic components for stable and transient vibrotactile cues, respectively . These features are fused via adapti ve weighting and decoded via a Transformer -based decoder . This design allows V iP AC to effecti vely model complex vibrotactile structures and generate perception-aligned descriptions. Our main contributions are as follows: • W e introduce the task of vibr otactile captioning , which aims to con vert 1D triaxial acceleration signals into structured natural language descriptions that reflect char- acteristics of material surface and haptic signals. This task establishes a new direction for semantic modeling and interpretation of tactile data. • W e propose V iP A C , the first captioning framew ork specif- ically designed for vibrotactile signals. The model em- ploys a dual-branch encoder to separately extract periodic and aperiodic features, and integrates them through an adaptiv e fusion mechanism that captures the hybrid tem- poral structure of tactile inputs. The fused representation is then decoded into natural language using a standard T ransformer decoder . • W e construct LMT108-CAP , a vibrotactile-text paired dataset generated using GPT -4o under controlled lin- guistic constraints. Comprehensi ve experiments on the dataset validate the ef fectiv eness of V iP A C in capturing the temporal structure of tactile signals and producing descriptiv e outputs that align with human interpretations. The remainder of this paper is organized as follows. Section II revie ws related work on vibrotactile datasets and text description tasks. Section III introduces the construction of the proposed LMT108-CAP vibrotactile–text paired dataset. Section IV presents the V iP A C framework, including the dual- branch encoder, dynamic fusion mechanism, and decoding strategy . Section V reports experimental results, ablation stud- ies, and a retrie val demo to validate the ef fectiv eness of the proposed method. Finally , Section VI concludes the paper and outlines directions for future work. I I . R E L AT E D W O R K A. Datasets Existing vibrotactile datasets fall into two categories: visuo- tactile patterns and multiple 1D signals. Significant progress has been made in visuotactile datasets such as TVL [11] and T ouch100k [12], which use vision-based sensors to produce representations compatible with computer vision techniques, thereby facilitating multimodal alignment and captioning. In contrast, multiple 1D vibrotactile datasets aligns with the IEEE P1918.1 standard that adv ocates multiple 1D vibration signals as the canonical format. F or example, the LMT hap- tic texture database [8] provides triaxial acceleration signals without paired natural language descriptions, which restricts its applicability in cross-modal learning. T o date, no public dataset aligns triaxial signals with textual descriptions, posing significant obstacles to model training and e valuation. Moti- vated by the success of LLMs in visuotactile research [11], we extend this paradigm to multiple 1D vibrotactile signals. W e use GPT -4o to generate natural language descriptions for surface images and pair them with corresponding triaxial acceleration signals, resulting in a new dataset that supports tactile semantic modeling and cross-modal learning. B. T ext Description T asks 1) Image-to-T e xt: Image captioning aims to generate de- tailed textual descriptions of visual content by lev eraging the structured spatial semantics present in images, such as object presence, spatial layout, and visual attributes [13]–[15]. These methods perform well in visual domains but cannot be directly applied to vibrotactile data. Unlike images, vibrotactile signals do not contain spatial structure and instead encode material properties through temporal and spectral patterns. This fun- damental difference makes conv entional vision-based feature extractors, such as Con volutional Neural Networks (CNNs), ineffecti ve when applied to multiple 1D tactile signals. 2) V ideo-to-T e xt: V ideo captioning extends image caption- ing into the temporal domain, modeling dynamic content such as motion trajectories, temporal coherence, and scene transitions [16]–[18]. Although vibrotactile signals also e volve ov er time, they capture localized, fine-grained surface inter- actions rather than global scene-le vel dynamics. As a result, video captioning models, which emphasize macroscopic visual motion, fail to represent the subtle frequency-based variations characteristic of tactile vibrations. 3) Audio-to-T e xt: Audio captioning generates textual de- scriptions from acoustic signals, benefiting from well-defined semantic categories such as speech, music, and en vironmental sounds, along with consistent recording conditions [19]–[21]. In contrast, vibrotactile signals exhibit high variability due JOURNAL OF L A T E X CLASS FILES, VOL. 14, NO. 8, A UGUST 2021 3 This material surface is rough-textured with irregular jagged edges and varying sizes of protruding fragments. This material surface has a rough uneven texture with slightly jagged edges. This material surface feels rough with small uneven closely-packed pebbles. This material surface feels coarse with uneven jagged small pebbles closely packed together . This material surface is rough with small irregularly shaped protrusions. Natural language description: Three-axis acceleration signal: Image : Prompt : Sentence pattern Exclude color information Length restriction Deterministic descriptions Source Data Generate T extua l Descriptions Paired with Corr esponding Signals GPT -4o V ision T ouch Fig. 2. Illustration of the vibrotactile-text dataset generation process. Surface images from the LMT -108 dataset are provided as input to GPT -4o, which generates fiv e textual descriptions per image under predefined linguistic constraints. These descriptions are then paired with the corresponding triaxial acceleration signals collected from the same material surfaces, resulting in the final vibrotactile-text dataset. Fig. 3. Examples of material surface images and their corresponding three- axis vibrotactile signals. T op: materials with regular textures exhibit strong periodicity . Bottom: irregular surfaces yield noisy , aperiodic signals. This motiv ates the use of distinct modeling pathways. to scanning speed and contact force, and lack standardized semantic labels. This variability introduces ambiguity and noise, making direct application of audio captioning models ineffecti ve for vibrotactile interpretation. Abov e all, these modality-specific captioning models rely on structural priors that do not hold in the vibrotactile domain. Image models assume spatial semantics, video models empha- size motion continuity , and audio models exploit categorical regularity—none of which apply to tactile vibrations. T o ad- dress this gap, we propose V iP A C, a captioning frame work that models vibrotactile-specific features through separate periodic and aperiodic branches. The model further emplo ys adaptive fusion to accommodate temporal v ariability and mitigate se- mantic ambiguity . This design provides a dedicated solution to the challenges of cross-modal captioning in tactile contexts. I I I . L M T 1 0 8 - C A P D A TA S E T C O N S T R U C T I O N T o ov ercome the lack of publicly a vailable paired vibrotactile-text data, we introduce LMT108-CAP , a novel captioned vibrotactile dataset. This dataset, is based on the LMT -108 Surface-Materials database [22] promoted by the IEEE P1918.1 workgroup. The LMT -108 Surf ace-Materials database contains 108 distinct material surfaces grouped into 10 categories, each recorded with triaxial acceleration, friction, sound, and surface image data. Each surface is measured 20 times, resulting in 2,160 vibrotactile samples represented as 1D triaxial acceleration signals. The dataset is widely recognized and applied for its representati veness. As one of the field’ s most widely used tactile benchmarks, LMT -108 offers representativ e cov erage and thus provides a suitable basis for constructing paired data. W e construct the vibrotactile-text pairs with the follo wing approach, which is inspired by image captioning dataset construction such as Flickr8K [23]. Specifically , we employ GPT -4o to generate fiv e textual descriptions for each material surface image in the LMT -108 dataset. The generation process adheres to four constraints designed to ensure relev ance and consistency with the characteristics of vibrotactile signals: (i) Sentence Pattern : Descriptions start with “This material surface... ” to encourage vibrotactile-focused textual output. (ii) Exclude Color Information : T o align with vibrotactile signal characteristics, which do not capture color . (iii) Length Restriction : Each description contains no more than 15 words. (iv) Deterministic Descriptions : T o prev ent uncertain or imaginativ e associations, ensuring consistenc y and relev ance to vibrotactile properties. Images are used only once to bootstrap the textual side; no visual inputs are used during training or inference, so the model learns signal–semantic correspondences rather than reproducing visual semantics. LMT108-CAP contains surface images, their corresponding triaxial acceleration signals, and GPT -generated constrained captions, as illustrated in Fig. 2. In total, the dataset includes 2,160 samples, each with five captions, yielding 10,800 paired instances following the fiv e-caption protocol of Flickr8K [23]. W e split the data into training and testing subsets using a JOURNAL OF L A T E X CLASS FILES, VOL. 14, NO. 8, A UGUST 2021 4 T riaxial Acceleration Signals Aperiodic Branch Periodic Branch Input One-Dimensional Signals DFT321 Encoder periodicity L aperiodicity L orthogonality L Dynamic Feature Fusion Linear + Softmax Previous T oke ns Output "This material surface..." CE L Cross-Attention Decoder W ord Embedding PosEnc Periodic Branch Aperiodic Branch Mel-Spectrogram Conv+Pool Cosine Sine Activation Function Mel-Spectrogram Learnable Weigh ts LSTM LSTM LSTM ...... ...... Transformer ... ... ... T ransformer Decoder W ord probability distribution per time step. Fig. 4. V iP AC takes triaxial acceleration signals as input and applies DFT321 to obtain 1D vibration data. These signals are processed by a dual-branch encoder that separately models periodic and aperiodic components using F AN-based frequency analysis and Transformer+LSTM-based temporal modeling, respectiv ely . The extracted features are dynamically fused based on estimated periodicity scores, and the fused representation is decoded into natural language using a Transformer decoder . 7:3 ratio, with 1,512 training samples (7,560 captions) and 648 test samples (3,240 captions), and perform corpus-level vocab ulary control by filtering singleton words so that each remaining token appears at least once in both splits [24]. The selected materials span a broad range of textures, pro viding representativ e coverage for training and ev aluating vibrotactile captioning models. T o ensure the reliability of the generated text, we impose strict post-processing constraints. The LMT - 108 dataset has also been widely used in prior work on tactile sensing and surface analysis [25]–[27], further supporting its suitability as a foundation for paired caption construction. I V . P RO P O S E D V I PAC M O D E L The primary innov ation of this work lies in defining and systematically tackling the novel task of vibrotactile caption- ing. V ibrotactile signals, unlike visual or auditory signals, often combine periodic and aperiodic components that arise from structured (periodic) and unstructured (aperiodic) surface interactions [28], as illustrated in Fig. 3. This hybrid structure challenges single-stream models and motiv ates an approach that treats these components explicitly . W e propose V iP A C, a dedicated framework that translates vibration signals into natural language through three stages: (i) dual-branch encoding to extract periodic and aperiodic fea- tures, (ii) adaptiv e fusion guided by signal characteristics, and (iii) sequence generation with a Transformer -based decoder . This design targets both repetitive and transient patterns in vibrotactile signals; the ov erall architecture is sho wn in Fig. 4. In the encoder, periodic and aperiodic components are mod- eled separately to match their distinct statistics—an approach consistent with practices in surface engineering and tactile sensing. Concretely , the periodic branch emplo ys a Fourier Analysis Network (F AN) for frequency-domain processing of stable, repeating patterns, while the aperiodic branch uses an LSTM+T ransformer stack to capture irre gular , long-range tem- poral variation. The two streams are then fused adaptiv ely via a periodicity embedding p i , enabling the model to emphasize the appropriate branch for each input and to form a unified representation for decoding. A. Encoder Design T o simplify the input while preserving perceptually relev ant cues, we apply DFT321 to compress triaxial acceleration into a single 1-D signal. DFT321 is a widely used, standard front end in vibrotactile processing, including work that processes the LMT -108 dataset in the same way [25], and it is consistent with the IEEE P1918.1 recommendations. Landin et al. [29] introduced DFT321 and showed that collapsing triaxial high- frequency vibration into a single magnitude channel causes no noticeable perceptual degradation. Our objective is to generate semantic descriptions of surface properties; the relev ant cues are texture statistics and spectral structure rather than full 3- D force or direction vectors. Direction-inv ariant magnitude fusion therefore remo ves orientation nuisance while preserving the information needed for captioning. The resulting signal t i typically consists of both peri- odic patterns from regular textures and aperiodic fluctuations caused by irregular surfaces or noise: t i = t PER + t APER . (1) Con ventional single-stream encoders fail to adequately cap- ture this hybrid structure: f = f enc ( t i ) . (2) T o address this, we propose a dual-branch encoder that models periodic and aperiodic components independently: f PER = f PER ( t i ) , f APER = f APER ( t i ) . (3) JOURNAL OF L A T E X CLASS FILES, VOL. 14, NO. 8, A UGUST 2021 5 These features are then fused into a unified representation: f = Fuse ( f PER , f APER ) . (4) a) P eriodic Branch: T o capture stable, repetitive patterns that emerge from regular textures, we employ the Fourier Analysis Network (F AN) [30]. F AN is chosen for its effec- tiv eness in extracting dominant frequency components from time-series signals. The transformed output is con v erted into a Mel-Spectrogram and further processed by a conv olution- pooling module to obtain spectral representations: f PER ,i = Con vPool ( Mel ( F AN ( t i ))) . (5) T o enhance the learning of periodic structure, we introduce a periodicity loss computed as the v ariance of peak intervals in the autocorrelation function: L periodicity = V ar (∆ F AN ( t i )) , (6) where ∆ t denotes the lag between autocorrelation peaks. b) Aperiodic Branch: T o model irregular , non-repetitiv e variations commonly observed in natural surf aces, we use a T ransformer encoder combined with a Long-Short T erm Mem- ory (LSTM) layer . The layer captures short-term dynamics, while the T ransformer pro vides long-range temporal modeling. This architecture is selected to address both local variability and global dependencies: f APER ,i = T ransformer ( LSTM ( Mel ( t i ))) . (7) T o regularize the aperiodic features and avoid overly large activ ations, we apply a Mean Squared Error (MSE) style penalty to their magnitude: L aperiodicity = 1 D ∥ f APER ,i ∥ 2 2 . (8) c) F eatur e Decoupling and Fusion: T o ensure that the two branches capture complementary information, we apply an orthogonality loss between periodic and aperiodic features: L orthogonality = ∥⟨ f PER ,i , f APER ,i ⟩∥ 2 . (9) W e compute a scalar fusion weight w i ∈ [0 , 1] based on the periodicity embedding p i via a sigmoid activ ation: w i = σ ( α · ( p i − τ )) , (10) where τ is a learnable threshold and α controls the sharpness of the transition. The final fused representation is: f i = w i · f PER ,i + (1 − w i ) · f APER ,i . (11) B. Decoder Design The decoder transforms the fused tactile feature f i into a natural language description c i = { c 1 , c 2 , ..., c T } in an auto-regressi ve manner . W e adopt a standard Transformer - based decoder [31] consisting of a word embedding layer, a T ransformer block with mask ed self-attention and cross- attention, and a linear projection layer with softmax activ ation. At each time step t , the decoder computes the probability of generating the next token conditioned on the previously generated tokens and the tactile feature: p ( c t | c 1: t − 1 , f i , θ ) = TransDec ( c 1: t − 1 , f i ; θ ) , (12) where θ denotes the decoder parameters. The sequence is trained to minimize the cross-entropy loss between the pre- dicted and reference captions: L CE ( θ ) = − 1 T T X t =1 log p ( c t | c 1: t − 1 , f i , θ ) . (13) During training, we apply the teacher forcing strate gy , where the ground-truth tokens c 1: t − 1 are used as input to predict c t . While the decoder architecture itself is not nov el, it plays a vital role in translating temporal tactile features into coherent, perception-aligned textual descriptions. C. Loss Functions The model is trained using a composite loss function that supervises both the tactile feature encoding and the text generation process. The overall objectiv e is defined as: L total = L CE + λ 1 L periodicity + λ 2 L aperiodicity + λ 3 L orthogonality , (14) where λ 1 , λ 2 , λ 3 are hyperparameters controlling the relativ e importance of each term. During test, V iP A C processes a raw triaxial acceleration sig- nal by computing periodic and aperiodic features, fusing them adaptiv ely , and decoding a textual description conditioned on the fused representation and previously generated tokens. V . E X P E R I M E N T S A. Experimental Setup Compared Methods. T o v alidate the ef fectiv eness of our V iP AC framew ork, we compared it with existing methods from audio captioning tasks, including A CT [31], Kim et al. [32], and Recap [33], as well as image captioning methods adapted for vibrotactile signals via Mel-spectrogram conv er- sion, such as ClipCap [34], V iECap [35] and RCMF [36]. This cross-domain comparison rigorously ev aluates our frame- work’ s ability to translate vibrotactile vibration signals into natural language under di verse methodological paradigms. All methods were trained under the same experimental conditions, including the same training, validation, and test data splits. Although ClipCap [34] was not published through a peer - revie wed venue, it has gained popularity due to its novel approach of injecting CLIP-based visual embeddings as prefix tokens into pretrained language models for image captioning. Evaluation Metrics. W e adopted fiv e widely used metrics to comprehensiv ely e valuate caption quality . BLEU [37] mea- sures n -gram ov erlap using the geometric mean of modified precision with a brevity penalty . R OUGE-L [38] computes an F-score based on the longest common subsequence, reflecting structural alignment. METEOR [39] improves recall-oriented ev aluation by incorporating synonym and stem matching. CIDEr [40] captures semantic relev ance via TF-IDF-weighted cosine similarity of n -grams. SPICE [41] ev aluates semantic content by comparing scene graph tuples (objects, attrib utes, relations). SPIDEr, the arithmetic mean of SPICE and CIDEr , balances semantic accuracy and lexical fidelity . JOURNAL OF L A T E X CLASS FILES, VOL. 14, NO. 8, A UGUST 2021 6 T ABLE I P E RF O R M AN C E C O M P A R I SO N O F DI FF E R EN T CA P T I ON I N G M O DE L S . Model BLEU1 BLEU2 BLEU3 BLEU4 R OUGE-L METEOR CIDEr SPICE SPIDEr A CT (DCASE 2021) 0.7204 0.5619 0.4498 0.3510 0.5931 0.2882 0.3107 0.2454 0.2780 Kim et al. (ICASSP 2023) 0.7365 0.5732 0.4278 0.3086 0.5163 0.2597 0.7428 0.1942 0.4685 Recap (ICASSP 2024) 0.7524 0.5891 0.4415 0.3213 0.5278 0.2714 0.7652 0.2126 0.4889 ClipCap (2021) 0.7343 0.5792 0.4667 0.3748 0.5819 0.2985 0.4737 0.2480 0.4959 V iECap (ICCV 2023) 0.7432 0.5764 0.4573 0.3682 0.5867 0.2946 0.6981 0.2413 0.4697 RCMF (TCSVT 2024) 0.7480 0.5900 0.4520 0.3350 0.5800 0.2750 0.7200 0.2260 0.4730 V iP A C 0.7635 0.6005 0.4782 0.3861 0.6047 0.3094 0.7795 0.2592 0.5194 V iP AC : This material surface has evenly spaced s lightly raised rounded per forations providing a textured feel . V iP AC : This material surface has a smooth text ur e with subtle evenly spac ed linear grooves . V iP AC : This material surface has a slightly rough texture with fine evenly sp aced bumps. V iP AC : This material surface is rough and uneven with small irregular gaps and interwoven texture . GT1 : This material surface has evenly spaced rounded perforati ons with a slightly raised texture at each hole . GT2 : This materia l surface features evenly spaced s lightly raised rounded perforations . GT3 : This materia l surface feels smooth with evenly spaced slightly raised rounded perf orations. GT4 : This materia l surface has evenly spaced slightl y raised rounded perforations. GT5 : This materia l surface is perforated with evenly spaced slightly raised rounded hole s. GT1 : This material surface feels smooth with a faint uniform grid texture underneath. GT2 : This materia l surface is smooth with a fine ma terial pattern of tiny evenly space d dots . GT3 : This materia l surface has a fine evenly spaced g rid-like texture . GT4 : This materia l surface has a fine grid-like text ure subtly raised and evenly spaced . GT5 : This materia l surface has a fine regular patter n with a smooth slightly reflective texture. GT1 : This material surface has a r ough text ure with uniformly distributed sma ll glitter particles. GT2 : This materia l surface is slightly rough with finely distr ibuted raised reflective speckles . GT3 : This materia l surface has fine rough microglitter particles evenly disperse d. GT4 : This materia l surface has fine uneven glitter pa rticles creating a slightly rough texture. GT5 : This materia l surface is rough with small scattered sli ghtly raised irregular speckles. GT1 : This material surface has a textured uneven pattern with no ticeable indentations and raised areas . GT2 : This materia l surface is uneven textured and sli ghtly r ough with m inor creases. GT3 : This materia l surface features an irregular bu mpy texture with slight depressions throughout. GT4 : This materia l surface has a rough uneven texture with sma ll bumps and indentations. GT5 : This materia l surface features an uneven textur e with multiple small indistinct bumps and slight indentation . Fig. 5. Qualitative comparisons between V iP A C generated captions and fi ve GPT -4o reference descriptions for four representati ve materials. Matched phrases are highlighted to emphasize semantic consistency . The selected samples—covering regular perforations, fine grids, rough glitter , and irregular bumps—demonstrate V iP A C’ s ability to produce accurate and diverse textual descriptions directly from vibrotactile signals. T ABLE II A B LAT IO N S T U DY O N M O D E L C O MP O N E NT S . W E EV A L UA T E T H E E FF E C TS OF R EM OV I N G T H E P E RI O D I C B R AN C H , A P E RI O D IC B RA N C H , A N D A DA P T I VE F U SI O N M O D UL E . T H E F U L L M O D EL C ON S I ST E N T L Y A CH I E V ES T HE H IG H E ST P ER F O R MA N C E , C O N FIR M I N G T H A T B OT H B R A N CH E S A N D T H E DY NA M I C W E IG H T I NG M EC H A NI S M C O N T RI B U TE TO T HE E FFE C T IV E N E SS O F V I P AC . V ariant BLEU1 BLEU2 BLEU3 BLEU4 R OUGE-L METEOR CIDEr SPICE SPIDEr Periodic Only 0.6124 0.4893 0.3582 0.2645 0.5012 0.2287 0.4215 0.1683 0.2949 Aperiodic Only 0.6657 0.5328 0.4127 0.3061 0.5237 0.2614 0.5362 0.1947 0.3655 No Fusion 0.6829 0.5535 0.4312 0.3198 0.5379 0.2745 0.5683 0.2054 0.3869 V iP A C (Full) 0.7635 0.6005 0.4782 0.3861 0.6047 0.3094 0.7795 0.2592 0.5194 Experimental Configuration. All experiments were con- ducted on a W indows 10 64-bit workstation equipped with an Intel Core i7-14700KF CPU (3.40 GHz), 32 GB RAM, and an NVIDIA GeForce R TX 4090 D GPU (24 GB). Model dev elopment and training were implemented using Python 3.7.12, PyT orch 2.1.0, and CUD A T oolkit 11.1. B. Experimental Results Qualitative and Quantitati ve Analysis. W e ev aluate V iP AC using standard captioning metrics and compare it against baseline methods adapted from image and audio do- mains. As shown in T able I, V iP A C achieves the highest scores across all metrics, with particularly notable gains in CIDEr and SPICE, indicating improv ed lexical precision and semantic consistency . Fig. 5 illustrates qualitativ e comparisons between generated captions and GPT -4o-generated references for four representativ e material types: regular grid, fine mesh, glittery roughness, and bump y irregularities. V iP A C reliably captures salient tactile features such as smoothness, periodic spacing, and surface irre gularity . Howe ver , challenging textures occa- JOURNAL OF L A T E X CLASS FILES, VOL. 14, NO. 8, A UGUST 2021 7 T ABLE III C O MP L E T E E V A L UATI O N M E T R IC S WH E N A S PE C I FIC M A T ER I A L C A T E G ORY (G 1 TO G9 ) IS E XC L U D ED F RO M T R AI N I N G . E AC H RO W C O RR E S P ON D S T O A M O DE L TR A I N ED WI T H O UT T HE R ES P E C TI V E C A T E G ORY . Excluded Category BLEU1 BLEU2 BLEU3 BLEU4 ROUGE-L METEOR CIDEr SPICE SPIDEr G1 0.6325 0.4674 0.3592 0.2841 0.5035 0.2394 0.4892 0.1553 0.3223 G2 0.7152 0.5483 0.4301 0.3456 0.5658 0.2765 0.6518 0.2169 0.4344 G3 0.6950 0.5298 0.4164 0.3340 0.5536 0.2661 0.6142 0.2007 0.4075 G4 0.6159 0.4563 0.3508 0.2756 0.4927 0.2328 0.4671 0.1475 0.3073 G5 0.7524 0.5891 0.4667 0.3748 0.5931 0.2985 0.7100 0.2480 0.4380 G6 0.6437 0.4779 0.3660 0.2901 0.5124 0.2450 0.5094 0.1625 0.3360 G7 0.7053 0.5385 0.4235 0.3404 0.5597 0.2713 0.6332 0.2074 0.4203 G8 0.5998 0.4427 0.3384 0.2667 0.4793 0.2260 0.4425 0.1394 0.2909 G9 0.5412 0.3906 0.2975 0.2328 0.4351 0.1925 0.3764 0.1152 0.2458 Full Data 0.7635 0.6005 0.4782 0.3861 0.6047 0.3094 0.7795 0.2592 0.5194 T ABLE IV A B LAT IO N O N IN P U T R E P RE S E N T A T I O N W I TH TH E FU L L M E T R IC S UI T E . T R AI N I N G T H RE E IN D E P EN D E NT M OD E L S W I T H O N L Y O N E A X I S ( X /Y / Z ) Y I EL D S C O N SI S T E NT LY W O RS E CA P T IO N S T H A N U S I NG D FT 3 2 1 T O F U SE T RI A X I AL S IG NA L S I N T O O N E C H AN N E L . Input B1 B2 B3 B4 R-L MET CIDEr SPICE SPIDEr X-only 0.6820 0.5220 0.4010 0.3087 0.5450 0.2668 0.5954 0.2130 0.4042 Y -only 0.7090 0.5450 0.4250 0.3370 0.5660 0.2790 0.6512 0.2068 0.4290 Z-only 0.6640 0.5060 0.3890 0.2924 0.5380 0.2583 0.5627 0.2045 0.3836 Mean(X,Y ,Z) 0.6850 0.5240 0.4050 0.3127 0.5500 0.2680 0.6031 0.2081 0.4056 DFT321 0.7635 0.6005 0.4782 0.3861 0.6047 0.3094 0.7795 0.2592 0.5194 Fig. 6. Demo interface for caption-based material retriev al. The left panel displays matched material images; the right panel shows vibrotactile files. Images are used only for visualization. sionally result in lo wer semantic alignment, highlighting the need for improved sensitivity to subtle signal variations. These results collectiv ely demonstrate the effecti veness of V iP A C in producing accurate, fluent, and perception-consistent textual descriptions of vibrotactile signals. Demo of V iP A C Application in Retrieval. T o demonstrate a practical application of vibrotactile captioning, we designed a web-based demo interface that supports text-based retrie val ov er material samples. Users can input a ke yword or sentence to retriev e all generated captions containing the query . The interface displays the corresponding material name and ref- erence image in the left panel and the matched vibrotactile files in the right panel. This proof-of-concept system operates solely on vibrotactile input; no visual data are used during training or inference. Reference images are included only for visualization, helping users interpret the results. As shown in Fig. 6, the interface supports both ke yword and sentence queries and exemplifies the semantic indexing and retriev al scenario introduced earlier . C. Ablation Study Component Ablation. W e conduct ablation experiments to assess the impact of each model component. As shown in T a- ble II, the full V iP A C model achiev es the highest performance across all metrics. Removing the dynamic fusion module and using fix ed weights leads to noticeable degradation, confirming the effecti veness of adapti ve weighting. The aperiodic-only variant performs better than the periodic-only one, indicating that irregular features contain more standalone information. These results validate that periodic and aperiodic features JOURNAL OF L A T E X CLASS FILES, VOL. 14, NO. 8, A UGUST 2021 8 are complementary and that dynamic fusion is essential for modeling hybrid tactile structures. Generalization under Missing Categories. T o assess gen- eralization, we retrain nine models, each with one material category (G1–G9) held out, and e valuate on that category . T able III reports the full metric suite. Across all held-out cate- gories, V iP AC remains stable. The strongest zero-shot transfer appears on G5, followed by G2 and G7; the weakest is G9, suggesting its textures are less represented by the remaining categories. T rends are consistent across lexical and semantic metrics, indicating that periodic/aperiodic cues learned from other groups transfer broadly . The “Full Data” row provides the upper bound when no category is removed. Justifying DFT321 Axis Fusion. T o verify that compress- ing triaxial acceleration into a single magnitude channel via DFT321 is reasonable for captioning, we replace DFT321 with single-axis inputs and train three independent models using only x , only y , or only z signals. All settings are identical to the main model. T able IV reports the full metric suite. DFT321 clearly outperforms any single-axis alternativ e across lexical (BLEU/ROUGE/METEOR) and semantic met- rics (CIDEr/SPICE/SPIDEr). The best single-axis model (Y - only) still trails DFT321 by 4.9 BLEU4 points and 0.090 SPIDEr; the mean of single-axis runs remains notably lower . V I . C O N C L U S I O N This work introduces vibrotactile captioning, which aims to translate multiple 1D triaxial acceleration signals into natural- language descriptions that conv ey material surface characteris- tics. T o enable this task, we construct a paired vibrotactile-text dataset using an LLM and propose a dual-branch architecture that separately models the periodic and aperiodic components of vibrotactile signals. The proposed method, V iP A C, gener- ates accurate and semantically rich captions, as demonstrated by both quantitative metrics and qualitativ e comparisons. W e further dev elop a retriev al demo to sho wcase the usefulness of vibrotactile captioning for caption-based material search. Limitations of this study include the modest dataset size, the lack of large-scale human-authored captions, and the reliance on triaxial acceleration signals only . Future work will expand the dataset with more diverse materials and human-verified descriptions, improv e robustness under real-world conditions, and explore multimodal fusion with additional sensory signals. R E F E R E N C E S [1] R. Hassen and E. Steinbach, “V ibrotactile signal compression based on sparse linear prediction and human tactile sensitivity function, ” in Proc. IEEE W orld Haptics Conf. (WHC) , 2019, pp. 301–306. [2] S. Li, Z. W ang, C. W u, X. Li, S. Luo, B. Fang, F . Sun, X.-P . Zhang, and W . Ding, “When vision meets touch: A contemporary revie w for visuotactile sensors from the signal processing perspective, ” IEEE Journal of Selected T opics in Signal Processing , vol. 18, no. 3, pp. 267– 287, 2024. [3] T . Zhao, Y . Fang, K. W ang, Q. Liu, and Y . Niu, “High efficiency vibrotactile codec based on gate recurrent network, ” IEEE T ransactions on Multimedia , vol. 25, pp. 5043–5052, 2023. [4] Y . Li, J.-Y . Zhu, R. T edrake, and A. T orralba, “Connecting touch and vision via cross-modal prediction, ” in 2019 IEEE/CVF Conference on Computer V ision and P attern Recognition (CVPR) , 2019, pp. 10 601– 10 610. [5] O. Holland, E. Steinbach, R. V . Prasad, Q. Liu, Z. Dawy , A. Aijaz, N. Pappas, K. Chandra, V . S. Rao, S. Oteafy , M. Eid, M. Luden, A. Bhardwaj, X. Liu, J. Sachs, and J. Ara ´ ujo, “The ieee 1918.1 “tactile internet” standards w orking group and its standards, ” Proceedings of the IEEE , vol. 107, no. 2, pp. 256–279, 2019. [6] B. W u and Q. Liu, “Integrating point spread function into taxel-based tactile pattern super resolution, ” IEEE T ransactions on Haptics , v ol. 17, no. 4, pp. 637–649, 2024. [7] C. Bernard, E. Thoret, N. Huloux, and S. Ystad, “The high/low frequency balance driv es tactile perception of noisy vibrations, ” IEEE T ransactions on Haptics , vol. 17, no. 4, pp. 614–624, 2024. [8] M. Strese, L. Bruderm ¨ uller , J. Kirsch, and E. Steinbach, “Haptic Material Analysis and Display Inspired by Human Exploratory Patterns, ” 2019. [9] L. Zou, C. Ge, Z. J. W ang, E. Cretu, and X. Li, “Novel tactile sensor technology and smart tactile sensing systems: A revie w , ” Sensors , vol. 17, no. 11, 2017. [10] G. Pati ˜ no-Lakatos, H. Genevois, and B. Navarret, “From vibrotactile sensation to semiotics. mediations for the experience of music, ” Hybrid , 2019. [Online]. A vailable: https://api.semanticscholar .org/CorpusID: 246393140 [11] L. Fu, G. Datta, H. Huang, W . C.-H. Panitch, J. Drake, J. Ortiz, M. Mukadam, M. Lambeta, R. Calandra, and K. Goldberg, “ A touch, vi- sion, and language dataset for multimodal alignment, ” in Pr oceedings of the 41st International Conference on Mac hine Learning , ser . ICML ’24. JMLR.org, 2025. [12] N. Cheng, J. Xu, C. Guan, J. Gao, W . W ang, Y . Li, F . Meng, J. Zhou, B. Fang, and W . Han, “T ouch100k: A large-scale touch-language-vision dataset for touch-centric multimodal representation, ” p. 103305, 2025. [13] Q. Huang, Y . Liang, J. W ei, Y . Cai, H. Liang, H.-f. Leung, and Q. Li, “Image dif ference captioning with instance-level fine-grained feature representation, ” IEEE T ransactions on Multimedia , vol. 24, pp. 2004– 2017, 2022. [14] M. Al-Qatf, X. W ang, A. Hawbani, A. Abdussalam, and S. H. Alsamhi, “Image captioning with novel topics guidance and retrieval-based topics re-weighting, ” IEEE T ransactions on Multimedia , vol. 25, pp. 5984– 5999, 2023. [15] S. Kornblith, L. Li, Z. W ang, and T . Nguyen, “Guiding image captioning models toward more specific captions, ” in Proceedings of the IEEE/CVF International Confer ence on Computer V ision (ICCV) , October 2023, pp. 15 259–15 269. [16] Y . Zhong, L. W ang, J. Chen, D. Y u, and Y . Li, “Comprehensive image captioning via scene graph decomposition, ” in Computer V ision – ECCV 2020 , A. V edaldi, H. Bischof, T . Brox, and J.-M. Frahm, Eds. Cham: Springer International Publishing, 2020, pp. 211–229. [17] Z. Zhang, Z. Qi, C. Y uan, Y . Shan, B. Li, Y . Deng, and W . Hu, “Open- book video captioning with retriev e-copy-generate network, ” in 2021 IEEE/CVF Conference on Computer V ision and P attern Recognition (CVPR) , 2021, pp. 9832–9841. [18] G. Xu, S. Niu, M. T an, Y . Luo, Q. Du, and Q. W u, “T ow ards accurate text-based image captioning with content diversity exploration, ” in 2021 IEEE/CVF Conference on Computer V ision and P attern Recognition (CVPR) , 2021, pp. 12 632–12 641. [19] X. Mei, X. Liu, J. Sun, M. D. Plumbley , and W . W ang, “T owards generating diverse audio captions via adversarial training, ” IEEE/ACM T rans. Audio, Speech and Lang. Pr oc. , vol. 32, p. 3311–3323, Jun. 2024. [20] S. Deshmukh, B. Elizalde, D. Emmanouilidou, B. Raj, R. Singh, and H. W ang, “Training audio captioning models without audio, ” in 2024 International Conference on Acoustics, Speech, and Signal Processing , April 2024. [21] X. Mei, C. Meng, H. Liu, Q. Kong, T . K o, C. Zhao, M. D. Plumbley , Y . Zou, and W . W ang, “W avcaps: A chatgpt-assisted weakly-labelled audio captioning dataset for audio-language multimodal research, ” IEEE/ACM T ransactions on Audio, Speech, and Language Processing , vol. 32, pp. 3339–3354, 2024. [22] M. Strese, C. Schuwerk, A. Iepure, and E. Steinbach, “Multimodal Feature-Based Surface Material Classification, ” IEEE T ransactions on Haptics , vol. 10, no. 2, pp. 226–239, 2017. [23] M. Hodosh, P . Y oung, and J. Hockenmaier , “Framing image description as a ranking task: data, models and ev aluation metrics, ” J. Artif. Int. Res. , vol. 47, no. 1, p. 853–899, May 2013. [24] K. Drossos, S. Lipping, and T . V irtanen, “Clotho: An audio captioning dataset, ” in Pr oceedings of the IEEE International Confer ence on Acoustics, Speech, and Signal Pr ocessing (ICASSP) . Barcelona, Spain: IEEE, May 2020, pp. 736–740. [25] S. Cai, K. Zhu, Y . Ban, and T . Narumi, “V isual-T actile Cross-Modal Data Generation Using Residue-Fusion GAN With Feature-Matching and Perceptual Losses, ” IEEE Rob . Autom , pp. 7525–7532, Jul. 2021. JOURNAL OF L A T E X CLASS FILES, VOL. 14, NO. 8, A UGUST 2021 9 [26] H. Liu, D. Guo, X. Zhang, W . Zhu, B. F ang, and F . Sun, “T o ward image-to-tactile cross-modal perception for visually impaired people, ” IEEE T rans. Autom. Sci. Eng. , pp. 521–529, Feb . 2021. [27] Y . Ujitoko and Y . Ban, “V ibrotactile Signal Generation from T exture Im- ages or Attributes Using Generative Adversarial Netw ork, ” in Proc. Int. Conf. Hum. Haptic Sens. T ouc h Enabled Comput. Appl. (EuroHaptics) , 2018, pp. 25–36. [28] P . Pawlus, R. Reizer, and M. W ieczorowski, “ A revie w of methods of random surface topography modeling, ” T ribology International , vol. 152, p. 106530, 2020. [29] N. Landin, J. M. Romano, W . McMahan, and K. J. Kuchenbecker , “Dimensional reduction of high-frequency accelerations for haptic ren- dering, ” in Haptics: Generating and P erceiving T angible Sensations , 2010, pp. 79–86. [30] Y . Dong, G. Li, Y . T ao, X. Jiang, K. Zhang, J. Li, J. Su, J. Zhang, and J. Xu, “Fan: Fourier analysis networks, ” 2024. [Online]. A vailable: https://arxiv .org/abs/2410.02675 [31] X. Mei, X. Liu, Q. Huang, M. D. Plumbley , and W . W ang, “ Audio captioning transformer, ” in Pr oceedings of the 6th Detection and Clas- sification of Acoustic Scenes and Events 2021 W orkshop (DCASE2021) , Barcelona, Spain, November 2021, pp. 211–215. [32] M. Kim, K. Sung-Bin, and T .-H. Oh, “Prefix tuning for automated audio captioning, ” in ICASSP 2023-2023 IEEE International Conference on Acoustics, Speech and Signal Pr ocessing (ICASSP) . IEEE, 2023, pp. 1–5. [33] S. Ghosh, S. Kumar, C. K. Reddy Evuru, R. Duraiswami, and D. Manocha, “Recap: Retrie val-augmented audio captioning, ” in ICASSP 2024 - 2024 IEEE International Confer ence on Acoustics, Speech and Signal Pr ocessing (ICASSP) , 2024, pp. 1161–1165. [34] R. Mokady , A. Hertz, and A. H. Bermano, “Clipcap: Clip prefix for image captioning, ” arXiv preprint , 2021. [35] J. Fei, T . W ang, J. Zhang, Z. He, C. W ang, and F . Zheng, “Transfer - able decoding with visual entities for zero-shot image captioning, ” in Pr oceedings of the IEEE/CVF International Conference on Computer V ision (ICCV) , October 2023, pp. 3136–3146. [36] L. W ang, H. Chen, Y . Liu, and Y . Lyu, “Regular constrained multimodal fusion for image captioning, ” IEEE T ransactions on Cir cuits and Systems for V ideo T echnology , vol. 34, no. 11, pp. 11 900–11 913, 2024. [37] K. Papineni, S. Roukos, T . W ard, and W .-J. Zhu, “Bleu: a method for automatic ev aluation of machine translation, ” in Pr oceedings of the 40th Annual Meeting of the Association for Computational Linguistics , P . Isabelle, E. Charniak, and D. Lin, Eds., Jul. 2002, pp. 311–318. [38] C.-Y . Lin and F . J. Och, “ Automatic ev aluation of machine translation quality using longest common subsequence and skip-bigram statistics, ” in Pr oceedings of the 42nd Annual Meeting of the Association for Computational Linguistics (ACL-04) , Barcelona, Spain, Jul. 2004, pp. 605–612. [Online]. A vailable: https://aclanthology .or g/P04- 1077/ [39] M. Denkowski and A. Lavie, “Meteor universal: Language specific translation ev aluation for any target language, ” in Pr oceedings of the Ninth W orkshop on Statistical Machine T r anslation , O. Bojar, C. Buck, C. Federmann, B. Haddow , P . K oehn, C. Monz, M. Post, and L. Specia, Eds., Jun. 2014, pp. 376–380. [40] R. V edantam, C. L. Zitnick, and D. Parikh, “Cider: Consensus-based image description evaluation, ” in 2015 IEEE Conference on Computer V ision and P attern Recognition (CVPR) , 2015, pp. 4566–4575. [41] P . Anderson, B. Fernando, M. Johnson, and S. Gould, “Spice: Semantic propositional image caption ev aluation, ” in Computer V ision – ECCV 2016 , B. Leibe, J. Matas, N. Sebe, and M. W elling, Eds. Cham: Springer International Publishing, 2016, pp. 382–398.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment