Gaussian Joint Embeddings For Self-Supervised Representation Learning

Self-supervised representation learning often relies on deterministic predictive architectures to align context and target views in latent space. While effective in many settings, such methods are limited in genuinely multi-modal inverse problems, wh…
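A toy example can illustrate why a deterministic predictor struggles when the target is genuinely multi-modal. This is a minimal sketch under assumptions that are not taken from the paper: the predictor is trained with squared error, and for a fixed context the target is drawn from two equally likely modes near -1 and +1. Under squared error, the best single point prediction is the mean. Here that mean lands between the modes, in a region where no target actually lies. The helper names (`bimodal_targets`, `mse`) are hypothetical.

```python
import random
import statistics


def bimodal_targets(n, modes=(-1.0, 1.0), noise=0.05, seed=0):
    """Sample n targets from an equal-weight two-mode distribution (hypothetical setup)."""
    rng = random.Random(seed)
    return [rng.choice(modes) + rng.gauss(0.0, noise) for _ in range(n)]


def mse(prediction, targets):
    """Mean squared error of a single point prediction against all targets."""
    return sum((t - prediction) ** 2 for t in targets) / len(targets)


targets = bimodal_targets(10_000)

# For a fixed context, the squared-error-optimal deterministic prediction is the sample mean.
best_point = statistics.fmean(targets)

# Distance from that prediction to the nearest sampled target.
gap = min(abs(t - best_point) for t in targets)

print(f"MSE-optimal point prediction: {best_point:.3f}")
print(f"MSE at that point: {mse(best_point, targets):.3f}")
print(f"MSE at the mode +1: {mse(1.0, targets):.3f}")
print(f"distance to nearest sampled target: {gap:.3f}")
```

The mean achieves a lower squared error than predicting either mode, yet it sits roughly halfway between them, far from every observed target. A probabilistic predictor that outputs a distribution rather than a point avoids committing to such an average.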

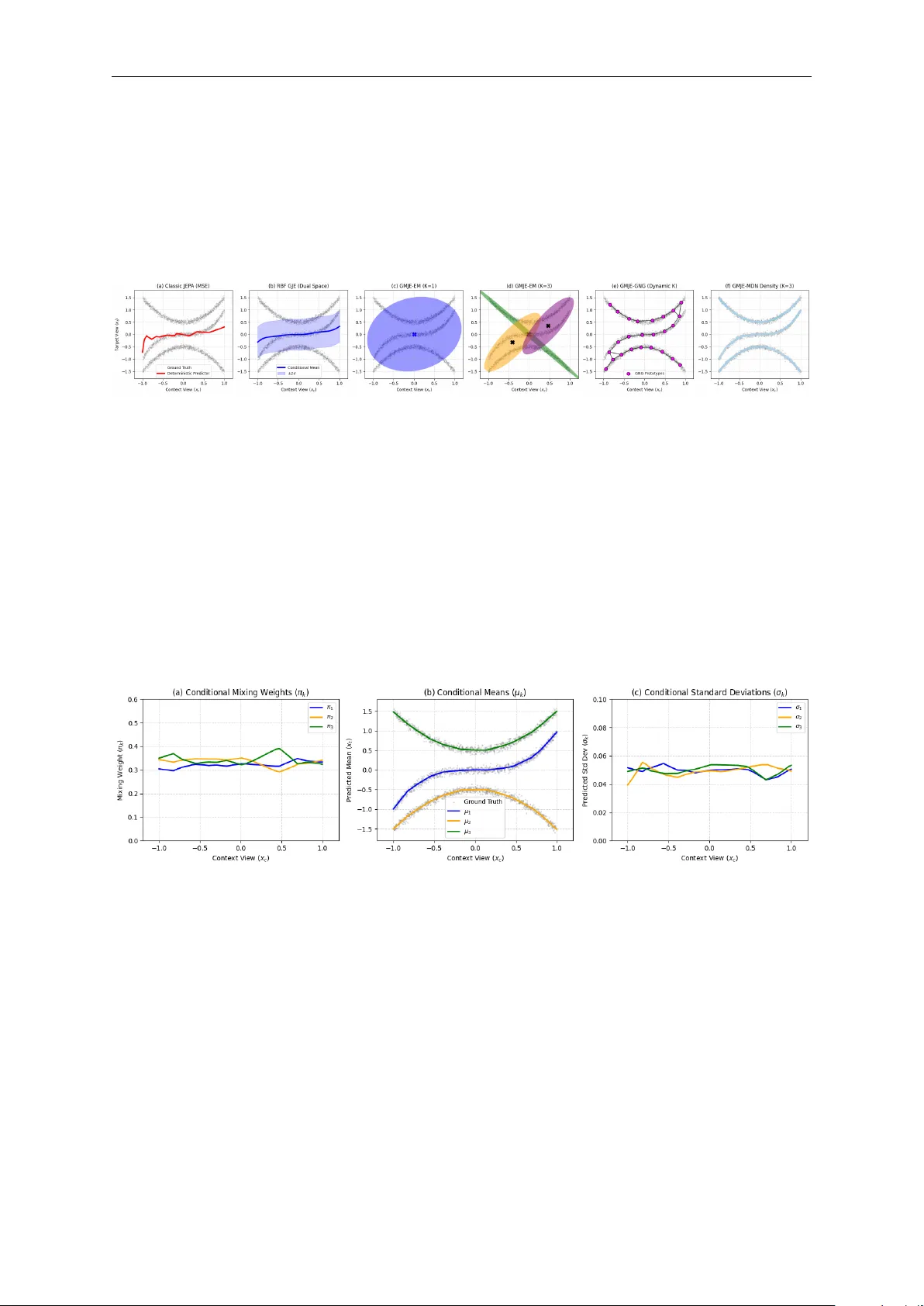

Authors: Yongchao Huang