Drive My Way: Preference Alignment of Vision-Language-Action Model for Personalized Driving

Human driving behavior is inherently personal, which is shaped by long-term habits and influenced by short-term intentions. Individuals differ in how they accelerate, brake, merge, yield, and overtake across diverse situations. However, existing end-…

Authors: Zehao Wang, Huaide Jiang, Shuaiwu Dong

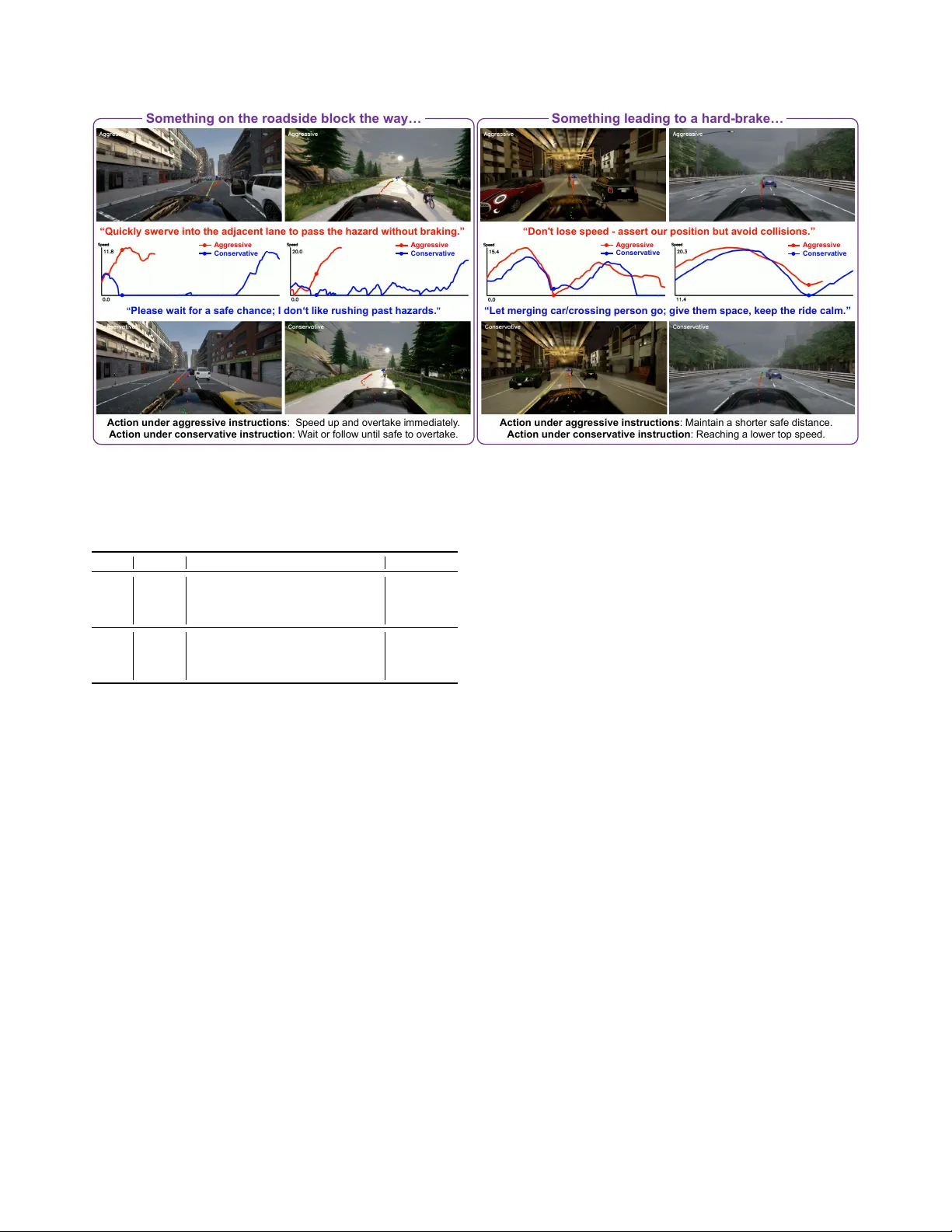

Driv e My W ay: Pr eference Alignment of V ision-Language-Action Model f or P ersonalized Driving Zehao W ang 1 , Huaide Jiang 1 , Shuaiwu Dong 1 , Y uping W ang 1 , 2 , Hang Qiu 1 , Jiachen Li 1 , † 1 Uni versity of California, Ri v erside 2 Uni versity of Michig an Abstract Human driving behavior is inher ently personal, which is shaped by long-term habits and influenced by short-term intentions. Individuals differ in how they accelerate, brak e, mer ge , yield, and overtak e acr oss diverse situations. How- ever , existing end-to-end autonomous driving systems ei- ther optimize for generic objectives or r ely on fixed driving modes, lacking the ability to adapt to individual pr efer ences or interpr et natural langua ge intent. T o addr ess this gap, we pr opose Drive My W ay (DMW), a personalized V ision- Language-Action (VLA) driving frame work that aligns with users’ long-term driving habits and adapts to r eal-time user instructions. DMW learns a user embedding fr om our personalized driving dataset collected acr oss multiple real drivers and conditions the policy on this embedding during planning, while natur al language instructions pr o vide addi- tional short-term guidance. Closed-loop evaluation on the Bench2Drive benchmark demonstr ates that DMW impr o ves style instruction adaptation, and user studies show that its generated behaviors ar e r ecognizable as each driver’ s own style, highlighting personalization as a ke y capability for human-center ed autonomous driving. Our data and code ar e available at https://dmw-cvpr .github .io/ . 1. Introduction End-to-end autonomous dri ving has emerged as a powerful paradigm that directly learns to map raw multi-modal sen- sor inputs to driving trajectories or control actions [ 1 – 4 ]. Recent advances in unified architectures and foundation- model-based frameworks ha ve demonstrated impressive performance in open-loop benchmarks through imitation of expert trajectories [ 5 – 7 ]. Ho we ver , these systems often op- timize for generic safety and ef ficiency objectiv es, ov er - looking the individuality and context-dependent nature of human driving behaviors. In practice, driving is inherently personal; individuals differ in how assertively they accel- erate, brake, or overtake depending on the situation, pur- † Corresponding author . zehao.wang1@email.ucr.edu , jiachen.li@ucr.edu Throttle: 0.5 Brake: 0.0 Steer: - 0. 3 Throttle: 0.0 Brake: 0.8 Steer: 0.0 Current Spe ed: 3 m/s Brake no w , stop u ntil there is no ca r from opposite. 36 - year - old school teacher, 16 years of driving… Maintain spee d , s traddle the center line and take over. Driver A 27 - year - old sales manager, 5 years of dri ving… Dri v e r B Dr i v e My Wa y Figure 1. Driv e My W ay (DMW) achieves end-to-end personal- ized driving via both long-term preference alignment and short- term style instruction adaptation. pose, or emotional state (e.g., commuting, leisure, or emer - gency travel). Existing autonomous driving systems typi- cally provide only a fe w preset modes (e.g., “sport”, “com- fort”, or “eco”) or manual parameter adjustments, which fail to capture subtle and ev olving passenger preferences [ 8 – 10 ]. More importantly , such rigid presets cannot interpret intuitiv e, natural language instructions, such as “I’m tired” or “I’m late for work”. Therefore, achieving long-term, context-adaptive personalization is crucial for enhancing user trust, comfort, and satisfaction [ 11 , 12 ]. Existing research on personalized autonomous dri ving mainly falls into two categories. First, data-driv en meth- ods extract predefined driving styles from human demon- strations and learn style-conditioned policies using behav- ior cloning or in verse reinforcement learning (IRL) [ 11 , 13 ]. While effecti ve at mimicking human-like styles, these approaches require diverse large-scale datasets and scale poorly to a growing population of users with heterogeneous preferences. Surmann et al. [ 14 ] mitigate this limitation through multi-objecti ve reinforcement learning, enabling runtime adjustment of preference weights. Howe ver , such methods cannot handle real-time human interaction via nat- ural language or adapt to user instructions on the fly . Sec- 1 ond, language-dri ven approaches leverage large language models (LLMs) for instruction-based personalization [ 15 ]. For example, T alk2Drive [ 16 ] employs GPT -4 to interpret passenger commands and generate high-level driving de- cisions, reducing takeover rates in simplified intersection and parking scenarios in volving limited traffic participants. Despite promising results, existing language-based meth- ods remain constrained to simple, low-interaction settings. They ha ve not been systematically in vestig ated in comple x, dynamic driving contexts, such as merging, overtaking, or emergenc y braking, where trade-of fs among safety , com- fort, and efficienc y must adapt to dynamic scenes. Fully lev eraging the reasoning and generalization capabilities of foundation models for such context-a ware adaptations re- mains largely unexplored. Additionally , current personal- ization methods based on LLMs [ 16 ] or vision-language models (VLMs) [ 17 ] do not consider a driv er’ s long-term habits or cumulati ve experiences, which are div erse and continuously ev olving. T o address these issues, we propose DMW , a novel V ision-Language-Action (VLA) framew ork that integrates visual observ ations, contextual user profiles, and real-time natural language instructions to generate adaptive, person- alized driving actions, as shown in Fig. 1 . DMW learns user embeddings that encode long-term driving behaviors from a newly collected personalized driving dataset comprising profiles, trajectories, and pri vileged information from thirty driv ers across twenty realistic scenarios in CARLA [ 18 ]. T o enable human interaction and real-time adaptation, we fur- ther apply reinforcement fine-tuning to the driving policy using rewards deriv ed from safety , comfort, and ef ficiency objectiv es, whose weights are dynamically adjusted. Ex- tensiv e closed-loop experiments on Bench2Driv e [ 19 ] sho w that DMW effecti vely adapts to dif ferent style instructions while maintaining safety . Further user studies demonstrate that its driving behavior aligns with individual user prefer - ences and expresses distinct dri ving behaviors. Our main contributions are summarized as follo ws: • W e propose DMW , a novel personalized end-to-end au- tonomous dri ving framework that integrates contextual user embeddings to align policy behaviors with indi vidual driving preferences. DMW also enables real-time human interaction and adaptability through reinforcement fine- tuning conditioned on natural language instructions. • W e construct the first multi-modal personalized dri ving dataset (PDD) collected from thirty real dri vers across div erse traf fic scenarios in CARLA, which provides a valuable dataset for developing and ev aluating human- centered driving models. • W e conduct e xtensiv e ev aluations on the Bench2Drive benchmark [ 19 ], complemented by personalization met- rics and user studies, which demonstrate the effecti veness of DMW in adapting its driving behavior to individual user preferences while maintaining a balanced trade-off among safety , ef ficiency , and comfort. 2. Related W ork Foundation Models for A utonomous Driving. Existing end-to-end autonomous driving models [ 20 – 22 ] still strug- gle in closed-loop benchmarks [ 23 , 24 ] due to reliance on imitation learning and limited generalization [ 25 , 26 ]. Foundation models (LLMs, VLMs) hav e recently emerged [ 27 – 36 ] and existing work (e.g., Driv eVLM [ 37 ], GPT - Driv er [ 38 ], AlphaDriv e [ 39 ]) le verages their world kno wl- edge and semantic reasoning to interpret complex traffic scenarios, producing high-le vel decisions and language ra- tionales for their actions. In parallel, VLA models hav e demonstrated the ability to directly translate raw sensory in- puts and linguistic instructions into fine-grained actions [ 40 , 41 ] or low-le vel control signals through action tokens [ 22 ] or action decoders [ 42 ]. While these approaches have made substantial progress toward language-conditioned dri ving, their capacity for personalization and adaptation to indi vid- ual driving preferences remains lar gely underexplored. Personalization in A utonomous Driving. The pursuit of personalized autonomous driving remains an activ e area of research, with the ke y challenge lying in aligning individual driving preferences. Recent studies like [ 11 ] learn style- conditioned driving policies, but ov erlook fine-grained in- dividual dif ferences. MA VERIC [ 43 ] explores the learn- ing of a latent space of di verse and socially-aware driv- ing behaviors, offering greater flexibility compared to pre- defined style categories. Meanwhile, LLMs have enabled more intuiti ve human-vehicle interaction [ 8 , 15 , 17 , 44 ], al- lowing systems to respond to personalized commands in real time. Recent works [ 16 ] le verage memory modules based on retrie val-augmented generation (RA G) to person- alize decisions by retrieving user-specific context. How- ev er, these approaches rely on e xplicit human feedback and accurate retriev al, producing contexts that are largely de- scriptiv e rather than behavioral and fail to capture implicit, long-term driving tendencies. Moreov er , most of them are ev aluated only in open-loop settings, making it difficult to assess ho w well personalization transfers to closed-loop de- cision making and influences real-time driving beha vior . 3. Personalized Dri ving Dataset T o learn and ev aluate personalized dri ving policies, we con- struct a new Personalized Driving Dataset (PDD) , as illus- trated in Fig. 2 . PDD captures the driving environments and the personal conte xt of human driv ers across div erse, highly interactiv e, safety-critical driving situations. Unlike prior datasets that rely on synthetic instruction-action pairs with limited behavioral variation [ 45 ] or those that infer style la- bels retrospectiv ely using coarse VLM-based reasoning or heuristic labeling [ 11 ], our dataset records human driving behaviors during complex real-time interactions, which al- lows us to study how different individuals make decisions when faced with similar situations. W e recruit thirty dri vers with diverse backgrounds and 2 Driving Data RGB Image Human Action Expert Speed 4 Scenario T ypes 20 Routes 30 Dr ivers Ego Status Surrounding Vehicles Profile Da ta Age Driving Experience Driving Preference … Driving Style A 36 - year - old school teacher with the following driving background: Driving Expe rience: 16 years of driving, t ypically 5 – 10 hours per week. Driving Style : Calm. Driving Purpose s: Commuting to school, weekend hiking trips with family. … Figure 2. An overvie w of the Personal Driving Dataset, which consists of the driving data and structured dri ver profile data. lev els of driving experience. Before data collection, each participant completes a structured questionnaire covering demographic information, dri ving history , and typical dri v- ing purposes such as commuting or leisure travel. These profiles provide a semantic context that connects personal background with long-term dri ving habits. Each participant then performs a standardized set of twenty driving scenar- ios in the CARLA, spanning four scenario types, includ- ing overtaking, merging into traffic, handling intersections, and navigating pedestrian crossings or encountering vehi- cle cut-ins. All drivers operate the v ehicle using a Logitech G-series steering wheel and pedal setup to enable naturalis- tic control inputs. PDD records ego-v ehicle motion states, scene perception of surrounding vehicles, pedestrians, cy- clists, and roadside hazards, as well as traffic context such as signal states, stop signs, route geometry , and speed lim- its. T o support comparison across dri vers and scenarios, we record an expert target speed using PDM-Lite [ 46 ]; the de- viation between human-driv en speed and this target serves as a dense descriptor of behavioral style under v arying con- ditions. Overall, PDD offers a rich and behavior -sensiti ve foundation for modeling personalized driving, which sup- ports preference-conditioned policy learning. 4. Problem F ormulation W e aim to learn a personalized dri ving policy that aligns with a driv er’ s long-term driving behavior while adapting to real-time preference instructions. Let M = { 1 , . . . , M } denote the set of dri vers, where M is the number of driv ers. For each driver m ∈ M , the collected dri ving dataset is D m = ( s m t , a m t ) T m t =1 , where s m t and a m t denote the en vi- ronmental state and the executed action of driver m at time step t , and T m is the trajectory length. The user profile ob- tained is P m , which summarizes the driv er’ s background, habits, and driving e xperience. The complete dataset across all driv ers is D = S m ∈M D m . W e formalize personalized driving as a Mark ov Decision Process (MDP), defined by the tuple ( S , A , O , T , R , γ ) . S represents the state space, which includes en vironmental in- formation. A ⊂ R 3 is the continuous action space consist- ing of throttle, brake, and steering. At time t , the agent ob- serves o t = ( I t , q t , I t , g t , P m ) ∈ O , where I t ∈ R H × W × 3 is the front-view image, q t is the ego-vehicle state, I t is a language instruction expressing short-term preference (e.g., “I’m in a rush”), g t is the navigation target (e.g., route way- points), and P m identifies the context of the activ e dri ver . T denotes the transition in the en vironment. R is the reward function that ev aluates the action. γ is the discount factor . The objective is to learn a driving policy π θ ( a t | o t ) that maximizes the expected cumulative discounted re- ward: max θ E π θ h P T t =0 γ t R ( s t , a t ) i . T o achieve person- alization, the policy must infer the driver’ s long-term be- havioral tendencies from their historical driving data D m and relate these behaviors to their profile P m . During ex- ecution, the policy adapts its decisions based on the speci- fied user embedding z m p , which is encoded from the driv er’ s profile P m , and the current instruction I t , ensuring safe and effecti ve dri ving behavior that reflects each dri ver’ s charac- teristic style and situational preferences. 5. Method W e focus on personalized adaptation of dri ving policies to accommodate different users and their short-term driving preferences. The overall diagram of DMW is provided in Fig. 3 . Giv en the camera observations and na vigation goals, the model fuses the dri ver’ s long-term preference with user instructions to produce adaptiv e, personalized actions. 5.1. VLA Backbone W e employ SimLingo [ 42 ] as our VLA backbone due to its ability to perform language grounding and planning. SimLingo is built on InternVL2-1B [ 47 , 48 ], which inte- grates the InternV iT -300M-448px vision encoder with the Qwen2-0.5B language model, of fering computational effi- ciency compared to larger alternativ es [ 49 ]. Supervised by privile ged expert demonstrations from PDM-Lite [ 46 ] and auxiliary reasoning signals, the backbone predicts tempo- ral and geometric waypoints, which are then conv erted into target speed and steering commands. 5.2. P ersonalization via Reinfor cement Fine-tuning Despite the strong planning capability of the VLA back- bone, imitation learning alone tends to capture generalized behaviors rather than user-specific driving styles. W e em- ploy the Group Relativ e Policy Optimization (GRPO) to further finetune the personalization ability [ 50 ]. By sam- pling a group of outputs and computing normalized group advantages, GRPO inherently promotes policy specializa- tion. T o generate a rich set of candidate actions for the GRPO policy update, we introduce a residual decoder . As shown in Fig. 3 , learnable r esidual query tokens are inte- grated into the language model along with vision, language, navigation, user embedding, and motion query tokens. The resulting residual features are decoded by an MLP followed 3 M oti on P r edi c tor Res i dual Dec oder Dr iv e M y Wa y Q wen 2 - 0.5B User In st r u ct io n “ I' m r eal l y i n a r us h.” “ I feel c ar s i c k .” “ K e e p s t e ad y , go c a r e f u l l y . ” “ I am bei ng l ate to w or k .” “ Let’ s be pati ent and c auti ous .” ... Ins tr uc ti on E nc oder La ngua ge T ok e n V i s i on E nc oder V i s i on T ok e n M ot i on Q ue r y R esi d u al Q u er y ... T ar g et T o ken Nav i gati on E nc oder Long T er m P r efer enc e E nc oder U s e r Em be ddi ng R oute T a r ge t: [50.023, 31.981] Dr iv er P r o f ile A Dr iv in g P r ef er en c e G ende r Dr iv in g E x per i enc e C hoi c e 1 C hoi c e 2 C hoi c e 3 ... ... ... ... … Figure 3. An ov ervie w of the DMW framework with a pretrained VLA backbone. The model takes in front-view camera images, instruc- tions, route target points, and user profile as inputs, while the motion predictor outputs route and speed waypoints, which deriv e the base action (throttle, steer angle). The residual decoder outputs a discrete residual applied to the base to produce the final personalized action. by a categorical action head to produce two discrete residual adjustments: (i) speed change and (ii) steering change. The combined action is then passed through a PID con- troller to produce the final a t = a base t + a ∆ t , where a base t is deriv ed from the predicted waypoints and a ∆ t is the per- sonalized residual. This design allows the policy to pre- serve safe planning while adapting e xpressively to different driv ers and situational intentions among multiple feasible planning trajectories (i.e., different choices of action). 5.3. Long-term Prefer ence Lear ning and Alignment User Embedding Lear ning. T o model the underlying dri v- ing style of dif ferent users and align VLA with personalized preferences, we introduce a long-term preference encoder that learns user embeddings from profile. T o relate the se- mantic profile context with corresponding dri ving behavior , we adopt a contrastive learning mechanism [ 51 ] to learn a shared latent space Z . As illustrated in Fig. 4 , the long- term preference encoder f p ( · ) takes a profile P m of driv er m from the total set of dri vers M and outputs a user embed- ding z m p ∈ Z . f p ( · ) includes a DeBER T aV3 [ 52 ] text pro- cessor followed by a projection head. The route processor f b ( · ) is a temporal encoder with multi-head self-attention that processes a past trajectory windo w of length k at the current timestep t , ξ m t = { ( I m t − k : t , q m t − k : t , a m t − k : t ) } , which consists of sequential front-view camera images I m t − k : t , ego- vehicle states q m t − k : t and driv er’ s actions a m t − k : t from D m in PDD. This outputs a behavior embedding z m b,t ∈ Z that summarizes the driver’ s actual dri ving tendencies. T o align the embeddings from the two encoders, we employ the In- foNCE [ 51 ] contrastiv e objective that encourages z m p and z m b,t from the same driver to be close while pushing embed- dings from different dri vers apart: L m t = − log exp sim( z m p , z m b,t ) /τ P M j =1 exp sim( z j p , z m b,t ) /τ , (1) where z j p is the user embedding of all other drivers, sim( · , · ) denotes the cosine similarity and τ is the temperature. Prefer ence Alignment. After obtaining the user embed- ding z m p , we condition the VLA policy on this embedding and further adapt it through reinforcement fine-tuning. The user embedding acts as a latent personalization prior, guid- ing the policy toward decision patterns consistent with the driv er’ s characteristic style. T o align the policy with indi vidual driving preferences while enhancing behavioral diversity , for each target driver m , we augment trajectories by conditioning the user em- bedding on both their own and another driv er’ s data u ∈ M , u = m . W e want driver m and driver u to behave dif- ferently in order to effecti vely distinguish different driv ers, so for a gi ven driv er m , we always select u who has the least similarity with m regarding user embedding: u = arg min x ∈M sim( z m p , z x p ) . The augmented action ˜ a m t is es- timated by scaling the original human action a m t according to the ratio of their route-lev el action statistics, which cap- ture each dri ver’ s action variability . Let the route-le vel av er- age action of driv er m and u be ¯ a m and ¯ a u respectiv ely , then we calculate the augmented action as: ˜ a m t = ¯ a m ¯ a u · a m t . The rew ard is then formulated as a behavioral similarity between the model’ s sampled action and the reference or augmented action. When the policy is conditioned on the target profile P m : R ( s m t , a t ) = d ( a t , a m t ) . When conditioned on P u : R ( s m t , a t ) = d ( a t , ˜ a m t ) , where d ( · ) measures similarity in action space, encouraging the policy to adapt decisions to each user embedding while learning a smooth manifold of div erse driving styles. 5.4. Human-V ehicle Personalized Interaction Style Instruction. In complex and dynamically ev olving traffic scenarios, effecti ve personalization requires the au- tonomous agent to infer not only the long-term dri ving pref- erence but also the context-adapti ve preferences that vary across situations. T o capture these nuances, we construct a 4 Driving History of Driver B Contrastive Learning Driver B Profile A 27 - year - old sales manager with the following driv ing background: Driving Experience : 5 years of driving, typically more than 20 hours per week. Driving Purposes: … Habits : … Driver A Profile A 36 - year - old school teacher with the following backg round: Driving Experience: 16 years of driving, typically 5 – 10 hours per week. Driving Purposes : … Habits :… Long Term Preference Encoder Driving History of Driver A v t Route Processor Driver Similarity Matrix Negative Pair Positive Pair A B C A B C … … v t Figure 4. The contrastive learning mechanism on the long-term preference encoder and route processor . style instruction set spanning twenty distinct dri ving scenar- ios. These instructions incorporate style intent and scene- specific semantics. Each scenario includes nine stylistic in- structions that cover three dri ving styles expressed at three lev els of directness, following the degrees of implicitness defined in prior work [ 16 ]. This design enables the model to interpret both e xplicit commands and subtle linguistic cues that reflect real-time user intent across diverse con- texts, thereby achie ving context-aware adaptation. Style-A ware Reward Adaptation. T o translate user intents into safe and personalized objectiv es, we design a reward function that integrates driving performance metrics with instruction-dependent style alignment. The overall driving rew ard is formulated as a weighted combination of safety , efficienc y , and comfort terms: R ( s t , a t ) = w s · R safety + w e · R efficienc y + w c · R comfort , (2) where w s , w e , and w c are importance weights, and R safety , R efficienc y , and R comfort denote the corresponding compo- nents. The safety rew ard penalizes risky interactions based on T ime-to-Collision (TTC): R safety = I safety TTC t ≥ β safety , where I safety is the binary indicator enforces a min- imum instruction-dependent safety threshold β safety . The efficienc y reward encourages maintaining a speed consis- tent with the desired style: R efficienc y = exp( − α · | v t − v pref | ) , where v t is the actual speed deri ved from action a t , v pref is the instruction-dependent preferred speed, and α is a penalty coefficient controlling sensitivity to de- viation. The comfort reward ev aluates whether a t re- mains within smoothness limits: R comfort = I comf a steer t | < β lat and | a acceleration t | < β long , where I comf is a binary in- dicator , β lat and β long are instruction-dependent lateral and longitudinal comfort thresholds, respectiv ely . T o encourage personalized interaction with the user and P r ef er en ce A l i g n m en t v i a G R P O F i n e - t u n i n g P r ef er en ce R ew ar d G en er at i o n w i t h L L M “ Le t ’ s be pa t i e nt . ” A c c i de nt on t he S i de 0 . 3 0 . 4 0 . 3 S : 0. 1 E : 0. 9 C : 0. 2 R: 0.29 S : 0. 5 E : 0. 4 C : 0. 5 R: 0.48 S : 1. 0 E : 0. 2 C : 0. 3 R: 0.63 C o m fo r t: 0 . 2 E f fi ci e n cy : 0 . 3 S af e t y : 0 . 5 M oti on P r edi c tor V i s i on E nc oder Q w en2- 0.5B LoRA Res i dual Dec oder Re a s ons Figure 5. The fine-tuning process and re ward generation for short- term instruction alignment. adapt to real-time preferences, we map the rew ard param- eters based on both the instruction and the dri ving con- text. Specifically , for each style command I t , and sce- nario description, this mapping produces a set of weights ( w s , w e , w c ) , a TTC threshold β safety , a desired speed v pref , and comfort thresholds β lat , β long . Instructions associated with an aggressi ve style correspond to a higher w e and v pref to prioritize efficienc y over other metrics. This formula- tion provides a more distinct optimization objective for each style rather than relying on a generic combination. T o enable scalable and reliable weight adjustment, we employ a multi-stage approach illustrated in Fig. 5 . First, we lev erage the reasoning capabilities of advanced LLMs (e.g., GPT -5) to infer rew ard weights and thresholds from the scenario description and the instruction with a style S ∈ { Conservati ve , Neutral , Aggressi ve } , and the inferred parameters are initialized within predefined upper bounds for each style to maintain balanced trade-offs among met- rics. Second, the generated parameters are refined through expert revie w to ensure that the final reward functions re- flect the intended command semantics while preserving safe driving behaviors across diverse traffic conditions. This adaptation bridges the gap between subtle preferences in language and dynamic style rew ards. 6. Experiments W e conduct extensiv e closed-loop experiments to validate the effecti veness of the DMW frame work in both user align- ment and instruction preference adaptation. Our ev aluation is designed to answer three key research questions: • RQ1: Long-term Driving Alignment. Can our policy align with specific driving behavior when conditioned on 5 T able 1. Bench2Drive closed-loop driving metrics with different style instructions. W e compare SimLingo and StyleDrive under different style instructions with our policy fine-tuning with fixed rewards weights (DMW -V anilla) and style-aware rewards weights ( DMW ). Method Style DS SR Efficienc y Comfort Speed Acceleration LC Headw ay TT SimLingo [ 42 ] Aggressiv e 78.56 65.83 247.60 18.61 7.66 5.39 0.75 25.99 25.35 Neutral 78.15 65.85 241.44 24.67 7.37 5.22 0.75 27.81 31.41 Conservati ve 78.18 65.56 238.77 26.99 7.21 5.29 0.70 29.12 33.02 StyleDriv e [ 11 ] Aggressiv e 75.68 60.89 256.71 16.79 7.23 5.59 0.74 24.95 27.76 Neutral 76.26 62.13 249.07 21.35 6.98 5.43 0.66 23.62 29.12 Conservati ve 77.02 61.96 242.18 23.67 6.82 5.39 0.70 27.19 29.98 DMW -V anilla Aggressiv e 82.19 70.97 253.10 15.86 7.86 5.29 0.78 26.46 19.69 Neutral 81.96 70.63 247.77 19.21 7.66 5.17 0.77 26.63 23.16 Conservati ve 81.48 71.05 246.80 21.87 7.75 5.37 0.75 26.90 22.51 DMW Aggressiv e 79.50 67.36 281.56 21.62 7.72 6.01 0.70 26.37 26.93 Neutral 82.03 70.95 244.98 28.67 6.34 5.43 0.61 27.60 40.75 Conservati ve 82.72 71.56 237.06 34.62 6.18 5.26 0.60 30.05 47.38 learned user embeddings? • RQ2: Short-term Instruction Adaptation. Ho w well does the policy align with various styles of real-time lan- guage commands under different scenarios? • RQ3: Driving Perf ormance. Does the introduction of personalization maintain or compromise driving perfor- mance, particularly regarding safety and success rate in complex scenarios? 6.1. Experimental Setup Implementation Details. For user embedding learning, both the long-term preference encoder and the route pro- cessor are trained using the AdamW optimizer [ 53 ] with a weight decay of 1e-3 and a learning rate of 1e-4. After con vergence, the long-term preference encoder is frozen, and we fine-tune the full motion predictor and residual de- coder while adopting the LoRA adapter [ 54 ] for parameter - efficient adaptation of Qwen2-0.5B [ 49 ]. The full model is trained on eight NVIDIA R TX A6000 GPUs with a per- GPU batch size of 8. For GRPO, we generate 4 responses per input for policy gradient updates. Scenarios and Baselines. W e ev aluate closed-loop perfor- mance using Bench2Drive [ 19 ]. Unlike the original ev alu- ation, which continues the episode after a collision, we ter- minate the route immediately once a collision occurs to em- phasize the safety-critical assessment. T o v alidate the effect of personalization, we compare DMW with SimLingo [ 42 ] under v arious styles of instruction, focusing on whether the model can effecti vely adjust its driving tendency accord- ing to user-specific preferences. Additionally , to strengthen comparisons with prior personalization-focused work, we implement a StyleDriv e-like [ 11 ] baseline by mapping each instruction to a style condition (i.e., Aggressi ve, Neutral, Conservati ve) and injecting it into the policy . Meanwhile, we adopt MORL-PD [ 14 ] as a baseline since it conditions the policy on a preference v ector (speed/comfort) at runtime to modulate behavior . By deriving a per-user preference vector from dri ving metrics of test drivers and conditioning a multi-objectiv e RL (MORL) policy , we compare it with DMW for long-term preference alignment. Evaluation Metrics. W e employ metrics that assess both driving performance and stylistic alignment. The Driv- ing Score (DS), Success Rate (SR), Efficiency (Effic.), and Comfort are adopted from Bench2Dri ve [ 19 ]. T o quan- tify the personalization of the model, we measure the mean value of driving metrics, including average Speed in m / s , Acceleration (Acce.) in m / s 2 , Lane Change Counts (LC), Headway in m , and Tra vel Time (TT) in s . W e introduce the Alignment Score (AS) for user studies to e valuate how well the policy aligns with indi vidual driving preferences. User Study for Long-T erm Preference Alignment. T o test whether the driving beha viors of DMW align with each driv er’ s preference. W e introduce an Alignment Score (AS) by first clustering all dri vers using their historical logs, then generating roll-outs condition on each driver’ s profile, and finally calculating the accuracy across test routes where roll-out is correctly classified into their target cluster . Ad- ditionally , we recruit ten e v aluators and ask them to rate the similarity of the driv er’ s o wn logs and corresponding model roll-outs on a 1-10 scale. T o test the zero-shot generalizabil- ity of DMW , we perform preference alignment on 25 drivers from PDD and ev aluate the behavior alignment on both 25 in-distribution (ID) and 5 out-of-distribution (OOD) drivers. For each scenario type, we choose three test routes. 6.2. Main Results Adaptation to Style Instruction. T able 1 presents the closed-loop e valuation results on the Bench2Driv e. The results show that fine-tuning alone already improves both SR and DS, likely due to the ne wly introduced safety re- ward. Furthermore, when conditioned on conservati ve in- structions, DMW achie ves the greatest gains in DS and SR while maintaining comparable ef ficiency , reflecting im- prov ed reliability in cautious driving. Under the aggressiv e instructions, our policy yields a substantial efficiency gain of 18.77% compared to the 3.70% in SimLingo, and 6.00% 6 T able 2. Driving metrics with and without style instructions, for each driver pr ofile with a differ ent long-term prefer ence. Note that for Alignment Scor e (AS) and Ratings, we compute their value regardless of the style since it only measures long-term alignment. Driver Scenario Style DS Speed Efficienc y Acceleration Headway AS Ratings D1 Emergenc y Brake None ↓ Aggressiv e 95.10 → 84.02 8.06 → 9.58 150.94 → 167.12 6.31 → 6.39 18.94 → 17.11 1.00 8.8 D2 98.64 → 96.71 4.61 → 4.97 96.02 → 99.21 4.94 → 5.35 23.10 → 21.18 0.67 8.3 D3 97.88 → 95.93 5.39 → 6.34 109.83 → 121.06 5.63 → 6.16 21.61 → 19.22 1.00 8.0 D4 94.02 → 93.15 7.76 → 8.35 145.22 → 159.56 6.03 → 6.92 21.03 → 18.19 0.67 7.8 D1 Merging None ↓ Conservati ve 90.05 → 94.38 9.32 → 8.76 270.84 → 260.10 5.15 → 4.68 96.44 → 101.16 0.67 8.4 D2 86.94 → 91.72 6.37 → 5.76 196.82 → 175.31 4.29 → 4.16 118.92 → 121.03 1.00 8.1 D3 96.85 → 95.94 6.98 → 6.20 205.41 → 195.77 4.46 → 4.24 111.26 → 114.05 0.67 7.6 D4 97.31 → 97.88 8.78 → 8.63 261.52 → 259.52 5.01 → 4.91 88.76 → 100.93 1.00 8.2 D1 Overtaking None ↓ Neutral 97.56 → 97.94 7.70 → 7.94 220.19 → 223.13 7.28 → 7.37 14.69 → 14.53 1.00 9.0 D2 98.23 → 98.41 5.50 → 5.56 167.40 → 167.80 5.65 → 5.78 22.23 → 22.04 1.00 8.6 D3 97.14 → 96.62 6.34 → 6.13 191.02 → 183.84 6.18 → 6.01 20.79 → 22.19 0.67 7.5 D4 80.61 → 82.19 9.63 → 9.51 271.98 → 265.84 7.11 → 6.82 17.81 → 18.03 1.00 8.1 D1 T raffic Sign None ↓ Conservati ve 90.82 → 96.67 9.08 → 8.49 408.74 → 401.28 7.12 → 6.37 31.44 → 32.52 1.00 8.7 D2 97.92 → 98.35 5.79 → 4.91 193.26 → 183.11 5.72 → 5.51 35.02 → 36.91 1.00 8.2 D3 89.31 → 92.02 6.88 → 5.37 237.05 → 231.46 5.96 → 5.91 34.54 → 36.51 1.00 8.1 D4 95.91 → 97.32 8.91 → 6.74 418.02 → 379.68 6.54 → 5.81 31.19 → 31.77 0.67 7.9 in StyleDrive, with only a 3.89% reduction in DS relativ e to the conservati ve condition. DMW significantly outper- forms SimLingo in style adaptation, which highlights that DMW accurately captures the intended beha viors expressed by the instructions while preserving overall performance. Meanwhile, StyleDriv e induces smaller metric shifts and achiev es a lo wer DS than DMW , likely due to its fixed style condition, which further highlights the benefit of our style- sensitiv e adaptation. The consistent reduction in accelera- tion and lane-change counts under conservati ve ones further validates that DMW maintains distinct stylistic beha viors. T o further illustrate the behavioral diversity induced by different instructions, Fig. 6 visualizes ho w our pol- icy reacts under aggressi ve and conservati ve instructions in safety-critical scenarios. When facing a road blockage, the aggressiv e instruction prefers an immediate ov ertake (red speed curve). Conv ersely , the conservati ve command causes the agent to decelerate and either stop or maintain a lo w following speed until the opposite lane is clear (blue curve). This behavioral diver gence arises from the style- aware weighting in our fine-tuning reward, which dynami- cally adjusts the balance between safety and efficiency ac- cording to the given instruction. In hard-braking scenar- ios, the agent tends to maintain a tighter following distance when objects appear ahead or mer ge into the lane under ag- gressiv e instructions, while the agent slo ws down and yields more noticeably when conditioned on conservati ve ones. This distinction is largely go verned by the style-a ware TTC threshold, which is adapted to be more permissiv e for ag- gressiv e ones and more restrictiv e for conservati ve ones, directly tuning the model’ s safety margins. The complete driving clips are pro vided in the supplementary materials. Long-term Preference Alignment. W e report metrics and user study results for two seen driv ers (D1, D2) and two unseen drivers (D3, D4). T able 2 shows that the pol- icy conditioned on each profile exhibits consistent motion statistics across different scenarios. For instance, Driv ers T able 3. Long-term preference alignment. D: Driv er ID. Alignment Score A verage Ratings Methods ID OOD ID OOD D1 D2 D3 D4 D1 D2 D3 D4 MORL-PD [ 14 ] 0.42 0.58 0.25 0.33 5.1 6.2 3.9 3.5 DMW 0.92 0.92 0.83 0.83 8.7 8.3 7.8 8.0 1 and 4 exhibit higher efficiency and acceleration, reflect- ing a more aggressiv e driving preference, whereas Drivers 2 and 3 show lo wer acceleration and larger headway across all categories, indicating a preference that prioritizes safety and comfort. Furthermore, Driv er 2 shows the most cau- tious style with the lowest acceleration, while Driv er 3, though still conservati ve, exhibits slightly higher speeds and greater acceleration, suggesting a willingness to acceler- ate more readily in scenarios such as Overtaking and Traf- fic Sign. While each driver’ s driving metrics vary across scenarios, the driving behavior remains distinct among the driv ers, suggesting that the policy aligns with individual driv ers’ long-term driving preferences across different sce- narios. Furthermore, T able 3 shows higher AS and ratings for DMW , indicating that more roll-outs are classified into the target cluster where their dri ver belongs, and ev alua- tors can consistently recognize driving beha viors that reflect the corresponding driv er’ s driving habits. While MORL- PD also induces style shifts via its preference-weight condi- tioning, it achie ves a lo wer alignment, especially on unseen driv ers, which suggests that our user embedding better cap- tures semantic context from the profile, leading to stronger generalization. In addition, consistent with T able 1 , language style in- structions can provide an additional adaptation, shifting the driving to wards the short-term preference. 6.3. Ablation Study Adaptive A verage Pooling. W e ablate the masked Adap- tiv e A verage Pooling (AAP) module used in the preference 7 So me t h in g o n t h e r o a d s id e b lo c k t h e w a y … So me t h in g le a d in g t o a h a r d - b r ake… A ct i on under aggr essi ve i nst r uct i ons : S peed up and over t ake im m e d ia t e ly . A ct i on under conser vat i ve i nst r uct i on : W a it o r f o llo w u n t il s a f e t o over t ake . A ct i on under aggr essi ve i nst r uct i ons : M ai nt ai n a shor t er saf e di st ance. A ct i on under conser vat i ve i nst r uct i on : R eachi ng a l ow er t op speed. “Q ui ckl y sw er ve i nt o t he adj acent l ane t o pass t he hazar d w i t ho ut br aki ng. ” “ P l ease w ai t f or a saf e cha nce; I don‘ t l i ke r ushi ng past hazar ds. ” “D on' t l ose speed - asser t our posi t i on but avoi d col l i si ons. ” “Let m er gi ng car / cr ossi ng per son go; gi ve t hem space, keep t he r i de cal m . ” C o n ser v at i v e A g g r essi v e C o n ser v at i v e A g g r essi v e C o n ser v at i v e A g g r essi v e C o n ser v at i v e A g g r essi v e Figure 6. Driving preference under aggressive and conservati ve instructions. Red waypoints denote distance parametrized (every 1 m ) navigation path and green w aypoints denote time parametrized (e very 0 . 25 s ) trajectory . T able 4. A veraged driving metrics using long-term preference encoder with or without Adaptive A verage P ooling (AAP). Driver Encoder DS Speed Effic. Acce. Headway AS Ratings D1 w/o AAP 82.74 4.52 161.38 5.84 50.11 0.67 6.1 D2 88.02 4.21 163.07 5.88 47.56 0.58 5.9 D3 87.65 4.49 133.12 5.81 41.63 0.25 4.6 D4 80.13 4.46 172.92 5.79 38.94 0.50 5.3 D1 w/ AAP 93.38 8.54 262.68 6.46 40.38 0.92 8.7 D2 95.43 5.57 163.38 5.15 49.82 0.92 8.3 D3 95.30 6.40 185.83 5.56 47.05 0.83 7.8 D4 91.96 8.77 274.19 6.17 39.70 0.83 8.0 encoder , which compresses textual profile features into a fixed-length sequence aligned with the temporal features of the route processor . Replacing this module with an unmasked global mean reduces the expressi veness of user embeddings. As shown in T able 4 , driving policy con- ditioned on the user embeddings from the preference en- coder with AAP yields a higher div ersity of motion statis- tics across different user profiles. W e also observe a higher AS, demonstrating that the model can more consistently re- produce each driv er’ s behavioral traits. W e attrib ute the im- prov ement to its ability to preserve semantically important embeddings while mitigating the bias from irrelev ant seg- ments. W e also observe that in denser interaction scenarios, the policy may shift toward conservati ve behavior for safety , which can reduce style expressi veness for neutral driv ers. Style-aware Reward Adaptation. W e examine the effect of fixed versus style-aware adapti ve reward weights on driv- ing performance and beha vioral div ersity . The results are in T able 1 . When the rew ard components are fixed (i.e., w s = 0 . 35 , w e = 0 . 35 , w c = 0 . 30 ), only the style- dependent TTC and comfort thresholds remain to induce stylistic variation. DMW -V anilla achiev es higher overall DS and SR, indicating effecti ve optimization of generic ob- jectiv es. Ho we ver , the inability of dynamic adaptation to efficienc y and comfort weights causes aggressiv e and con- servati ve instructions to exhibit similar driving, rev ealing a reduced sensitivity to style. In contrast, DMW adap- tiv ely adjusts rew ard weights and thresholds based on both context and instruction style, maintaining driving perfor- mance while producing clear behavioral distinctions. Ag- gressiv e instructions lead to higher speeds and shorter head- ways, whereas conserv ativ e ones fav or smoother control and larger safety margins. This demonstrates the necessity of adapti ve weighting to achiev e both reliable and personal- ized driving beha viors. 7. Conclusion W e introduced DMW , a personalized VLA driving frame- work that aligns long-term driver preferences and adapts to short-term style instructions. Through learning user em- beddings from the curated Personalized Dri ving Dataset and conditioning on the end-to-end driving policy , our method captures and aligns with individual drivers’ behav- iors. Extensiv e experiments on Bench2Dri ve demonstrate that DMW achiev es more distinct adaptation than existing baselines. User studies further highlight the potential of per- sonalized VLA systems to bridge the gap to ward human- centered autonomous driving, enabling vehicles that adapt their behavior to indi vidual user preferences. Our approach is currently validated within the CARLA simulator . Future work will focus on bridging the sim-to-real gap by deploy- ing and ev aluating the DMW framework on real vehicles. W e also plan to expand our dataset to encompass a wider div ersity of dri ving behaviors to enable more robust and generalizable preference alignment. 8 Acknowledgment W e gratefully acknowledge Janice Nguyen, Shubham Der- hgawen, Jonathan Setiabudi, Alexander T otah, Prana v Gowrishankar , and Pratheek Sunilkumar for their support in data collection. References [1] Sicong Jiang, Zilin Huang, Kangan Qian, Ziang Luo, T ianze Zhu, Y ang Zhong, Y ihong T ang, Menglin K ong, Y unlong W ang, Siwen Jiao, Hao Y e, Zihao Sheng, Xin Zhao, T uopu W en, Zheng Fu, Sikai Chen, Kun Jiang, Diange Y ang, Seongjin Choi, and Lijun Sun. A surve y on vision-language- action models for autonomous driving. In Pr oceedings of the IEEE/CVF International Confer ence on Computer V ision (ICCV) W orkshops , pages 4524–4536, October 2025. 1 [2] Litian Gong, Fatemeh Bahrani, Y utai Zhou, Amin Banay- eeanzade, Jiachen Li, and Erdem Bıyık. Autofocus-il: Vlm- based saliency maps for data-efficient visual imitation learn- ing without extra human annotations. In International Con- fer ence on Robotics and Automation (ICRA) , 2026. [3] Y uping W ang, Shuo Xing, Cui Can, Renjie Li, Hongyuan Hua, K exin T ian, Zhaobin Mo, Xiangbo Gao, Keshu W u, Sulong Zhou, et al. Generati ve ai for autonomous driving: Frontiers and opportunities. arXiv preprint arXiv:2505.08854 , 2025. [4] Bernard Lange, Masha Itkina, Jiachen Li, and Mykel K ochenderfer . Self-supervised Multi-future Occupancy Forecasting for Autonomous Driving. In Pr oceedings of Robotics: Science and Systems , 2025. 1 [5] Rui Zhao, Y uze Fan, Ziguo Chen, Fei Gao, and Zhenhai Gao. Diffe2e: Rethinking end-to-end driving with a hy- brid diffusion-re gression-classification policy . In The Thirty- Ninth Annual Confer ence on Neural Information Pr ocessing Systems , 2025. 1 [6] Jinning Li, Jiachen Li, Sangjae Bae, and David Isele. Adap- tiv e prediction ensemble: Improving out-of-distrib ution gen- eralization of motion forecasting. IEEE Robotics and Au- tomation Letters , 10(2):1553–1560, 2024. [7] Maneekwan T oyungyernsub, Esen Y el, Jiachen Li, and Mykel J Kochenderfer . Predicting future spatiotemporal oc- cupancy grids with semantics for autonomous dri ving. In 2024 IEEE Intelligent V ehicles Symposium (IV) , pages 2855– 2861. IEEE, 2024. 1 [8] Genghua Kou, Fan Jia, W eixin Mao, Y ingfei Liu, Y ucheng Zhao, Ziheng Zhang, Osamu Y oshie, T iancai W ang, Y ing Li, and Xiangyu Zhang. Padri ver: T ow ards personalized au- tonomous driving. In 2025 International Joint Confer ence on Neural Networks (IJCNN) , pages 1–8, 2025. 1 , 2 [9] Manabu Nakanoya, Junha Im, Hang Qiu, Sachin Katti, Marco Pav one, and Sandeep Chinchali. Personalized fed- erated learning of driver prediction models for autonomous driving, 2021. [10] Jiachen Li, David Isele, Kanghoon Lee, Jinkyoo Park, Kikuo Fujimura, and Mykel J Kochenderfer . Interactive au- tonomous navigation with internal state inference and in- teractivity estimation. IEEE T ransactions on Robotics , 40: 2932–2949, 2024. 1 [11] Ruiyang Hao, Bo wen Jing, Haibao Y u, and Zaiqing Nie. Styledriv e: T owards dri ving-style aware benchmarking of end-to-end autonomous driving. In Pr oceedings of the AAAI Confer ence on Artificial Intelligence , pages 4627– 4635, 2026. 1 , 2 , 6 [12] Udita Ghosh, Dripta S. Raychaudhuri, Jiachen Li, K onstanti- nos Karydis, and Amit K. Roy-Cho wdhury . Reducing oracle feedback with vision-language embeddings for preference- based rl. In IEEE International Confer ence on Robotics and Automation (ICRA) . IEEE, 2026. 1 [13] Anqing Jiang, Y u Gao, Y iru W ang, Zhigang Sun, Shuo W ang, Y uwen Heng, Hao Sun, Shichen T ang, Lijuan Zhu, Jinhao Chai, et al. Irl-vla: Training an vision-language- action policy via re ward world model. arXiv preprint arXiv:2508.06571 , 2025. 1 [14] Hendrik Surmann, Jorge De Heuvel, and Maren Bennewitz. Multi-objectiv e reinforcement learning for adaptable person- alized autonomous driving. In 2025 Eur opean Confer ence on Mobile Robots (ECMR) , pages 1–8. IEEE, 2025. 1 , 6 , 7 [15] Y unsheng Ma, Can Cui, Xu Cao, W enqian Y e, Peiran Liu, Juanwu Lu, Amr Abdelraouf, Rohit Gupta, Kyungtae Han, Aniket Bera, James M. Rehg, and Ziran W ang. Lampilot: An open benchmark dataset for autonomous driving with lan- guage model programs. In IEEE/CVF Conference on Com- puter V ision and P attern Recognition (CVPR) , pages 15141– 15151, 2024. 2 [16] Can Cui, Zichong Y ang, Y upeng Zhou, Y unsheng Ma, Juanwu Lu, Lingxi Li, Y aobin Chen, Jitesh Panchal, and Zi- ran W ang. Personalized autonomous dri ving with large lan- guage models: Field experiments. In 2024 IEEE 27th Inter- national Conference on Intelligent T ransportation Systems (ITSC) , pages 20–27. IEEE, 2024. 2 , 5 [17] Can Cui, Zichong Y ang, Y upeng Zhou, Juntong Peng, Sung- Y eon Park, Cong Zhang, Y unsheng Ma, Xu Cao, W enqian Y e, Y iheng Feng, et al. On-board vision-language mod- els for personalized autonomous vehicle motion control: System design and real-world validation. arXiv preprint arXiv:2411.11913 , 2024. 2 [18] Alexey Dosovitskiy , German Ros, Felipe Codevilla, Antonio Lopez, and Vladlen K oltun. CARLA: An open urban dri ving simulator . In Proceedings of the 1st Annual Conference on Robot Learning , pages 1–16, 2017. 2 [19] Xiaosong Jia, Zhenjie Y ang, Qifeng Li, Zhiyuan Zhang, and Junchi Y an. Bench2driv e: T owards multi-ability bench- marking of closed-loop end-to-end autonomous driving. Ad- vances in Neural Information Processing Systems , 37:819– 844, 2024. 2 , 6 [20] Xiaosong Jia, Y ulu Gao, Li Chen, Junchi Y an, Patrick Langechuan Liu, and Hongyang Li. Driv eadapter: Breaking the coupling barrier of perception and planning in end-to-end autonomous driving. In ICCV , 2023. 2 [21] Xiaosong Jia, Penghao W u, Li Chen, Jiangwei Xie, Conghui He, Junchi Y an, and Hongyang Li. Think twice before driv- ing: T owards scalable decoders for end-to-end autonomous driving. In CVPR , 2023. [22] Zewei Zhou, Tianhui Cai, Seth Z. Zhao, Y un Zhang, Zhiyu Huang, Bolei Zhou, and Jiaqi Ma. Autovla: A vision- language-action model for end-to-end autonomous driving with adaptive reasoning and reinforcement fine-tuning. In 9 Advances in Neural Information Pr ocessing Systems , 2025. 2 [23] Carla autonomous driving leaderboard, 2025. 2 [24] Y uping W ang, Xiangyu Huang, Xiaokang Sun, Mingxuan Y an, Shuo Xing, Zhengzhong T u, and Jiachen Li. Uniocc: A unified benchmark for occupancy forecasting and prediction in autonomous driving. In Proceedings of the IEEE/CVF International Confer ence on Computer V ision , pages 25560– 25570, 2025. 2 [25] Haohan Chi, Huan-ang Gao, Ziming Liu, Jianing Liu, Chenyu Liu, Jinwei Li, Kaisen Y ang, Y angcheng Y u, Zeda W ang, W enyi Li, Leichen W ang, Xingtao Hu, Hao Sun, Hang Zhao, and Hao Zhao. Impromptu vla: Open weights and open data for dri ving vision-language-action models. In Advances in Neural Information Processing Systems, Datasets and Benchmarks T rac k , 2025. 2 [26] Haohong Lin, Y unzhi Zhang, W enhao Ding, Jiajun W u, and Ding Zhao. Model-based policy adaptation for closed-loop end-to-end autonomous driving. In W orkshop on F oundation Models Meet Embodied Agents at CVPR 2025 , 2025. 2 [27] Zhiwen Fan, Jian Zhang, Renjie Li, Junge Zhang, Runjin Chen, Hezhen Hu, Ke vin W ang, Huaizhi Qu, Dilin W ang, Zhicheng Y an, et al. Vlm-3r: V ision-language models aug- mented with instruction-aligned 3d reconstruction. In Pr o- ceedings of the IEEE/CVF Confer ence on Computer V ision and P attern Recognition , 2026. 2 [28] Y uan Gao, Mattia Piccinini, Y uchen Zhang, Dingrui W ang, Korbinian Moller , Roberto Brusnicki, Baha Zarrouki, Alessio Gambi, Jan Frederik T otz, Kai Storms, Stev en Pe- ters, Andrea Stocco, Bassam Alrifaee, Marco Pa vone, and Johannes Betz. Foundation models in autonomous driving: A surve y on scenario generation and scenario analysis. IEEE Open Journal of Intellig ent T ransportation Systems , 2026. [29] Piyush Gupta, Sangjae Bae, Jiachen Li, and David Isele. Scale-plan: Scalable language-enabled task plan- ning for heterogeneous multi-robot teams. arXiv pr eprint arXiv:2603.08814 , 2026. [30] Y unsong Zhou, Linyan Huang, Qingwen Bu, Jia Zeng, T ianyu Li, Hang Qiu, Hongzi Zhu, Minyi Guo, Y u Qiao, and Hongyang Li. Embodied understanding of driving sce- narios. In European Conference on Computer V ision , pages 129–148. Springer , 2024. [31] Xiaopan Zhang, Zejin W ang, Zhixu Li, Jianpeng Y ao, and Jiachen Li. Commcp: Efficient multi-agent coordination via llm-based communication with conformal prediction. In IEEE International Confer ence on Robotics and A utomation (ICRA) . IEEE, 2026. [32] Mingxuan Y an, Y uping W ang, Zechun Liu, and Jiachen Li. Rdd: Retriev al-based demonstration decomposer for planner alignment in long-horizon tasks. In Pr oceedings of the 39th Annual Conference on Neural Information Pr ocessing Sys- tems (NeurIPS) , 2025. [33] Xiaopan Zhang, Hao Qin, Fuquan W ang, Y ue Dong, and Ji- achen Li. Lamma-p: Generalizable multi-agent long-horizon task allocation and planning with lm-driven pddl planner . In 2025 IEEE International Conference on Robotics and Au- tomation (ICRA) , pages 10221–10221. IEEE, 2025. [34] Trishna Chakraborty , Udita Ghosh, Xiaopan Zhang, Fahim Faisal Niloy , Y ue Dong, Jiachen Li, Amit Roy- Chowdhury , and Chengyu Song. Heal: An empirical study on hallucinations in embodied agents driven by large lan- guage models. In F indings of the Association for Com- putational Linguistics: EMNLP 2025 , pages 21226–21243, 2025. [35] Sayak Nag, Udita Ghosh, Calvin-Khang T a, Sarosij Bose, Jiachen Li, and Amit K Roy-Chowdhury . Conformal pre- diction and mllm aided uncertainty quantification in scene graph generation. In Pr oceedings of the IEEE/CVF Con- fer ence on Computer V ision and P attern Recognition , pages 11676–11686, 2025. [36] Zhikai Zhao, Chuanbo Hua, Federico Berto, Kanghoon Lee, Zihan Ma, Jiachen Li, and Jinkyoo Park. Traje vo: Trajectory prediction heuristics design via llm-dri ven evolution. In Pr o- ceedings of the AAAI Conference on Artificial Intelligence (AAAI) , 2026. 2 [37] Xiaoyu Tian, Junru Gu, Bailin Li, Y icheng Liu, Y ang W ang, Zhiyong Zhao, Kun Zhan, Peng Jia, XianPeng Lang, and Hang Zhao. Driv e vlm: The con vergence of autonomous driving and lar ge vision-language models. In Conference on Robot Learning , pages 4698–4726. PMLR, 2025. 2 [38] Jiageng Mao, Y uxi Qian, Junjie Y e, Hang Zhao, and Y ue W ang. Gpt-driver: Learning to drive with gpt. arXiv pr eprint arXiv:2310.01415 , 2023. 2 [39] Bo Jiang, Shaoyu Chen, Qian Zhang, W enyu Liu, and Xing- gang W ang. Alphadrive: Unleashing the power of vlms in autonomous driving via reinforcement learning and reason- ing. arXiv pr eprint arXiv:2503.07608 , 2025. 2 [40] Haoyu Fu, Diankun Zhang, Zongchuang Zhao, Jianfeng Cui, Dingkang Liang, Chong Zhang, Dingyuan Zhang, Hongwei Xie, Bing W ang, and Xiang Bai. Orion: A holistic end-to- end autonomous driving framework by vision-language in- structed action generation. In Proceedings of the IEEE/CVF International Confer ence on Computer V ision (ICCV) , pages 24823–24834, October 2025. 2 [41] Shuang Zeng, Xinyuan Chang, Mengwei Xie, Xinran Liu, Y ifan Bai, Zheng Pan, Mu Xu, and Xing W ei. Futuresight- driv e: Thinking visually with spatio-temporal cot for au- tonomous driving. In Advances in Neural Information Pro- cessing Systems , 2025. 2 [42] Katrin Renz, Long Chen, Elahe Arani, and Oleg Sinavski. Simlingo: V ision-only closed-loop autonomous dri ving with language-action alignment. In Pr oceedings of the Computer V ision and P attern Recognition Conference , pages 11993– 12003, 2025. 2 , 3 , 6 , 1 [43] Mariah L Schrum, Emily Sumner , Matthew C Gombolay , and Andrew Best. Maveric: A data-driv en approach to personalized autonomous driving. IEEE T ransactions on Robotics , 40:1952–1965, 2024. 2 [44] Xu Han, Xianda Chen, Zhenghan Cai, Pinlong Cai, Meixin Zhu, and Xiaowen Chu. From words to wheels: Automated style-customized policy generation for autonomous driving. arXiv pr eprint arXiv:2409.11694 , 2024. 2 [45] Ziye Qin, Siyan Li, Chuheng W ei, Guoyuan W u, Matthew J Barth, Amr Abdelraouf, Rohit Gupta, and Kyungtae Han. In vestigating personalized driving behaviors in dilemma zones: Analysis and prediction of stop-or-go decisions. IEEE Robotics and Automation Letter s , 2025. 2 [46] Chonghao Sima, Katrin Renz, Kashyap Chitta, Li Chen, Hanxue Zhang, Chengen Xie, Jens Beißwenger , Ping Luo, Andreas Geiger , and Hongyang Li. Drivelm: Driving with 10 graph visual question answering. In European Confer ence on Computer V ision , 2024. 3 [47] Zhe Chen, W eiyun W ang, Hao T ian, Shenglong Y e, Zhang- wei Gao, Erfei Cui, W enwen T ong, Kongzhi Hu, Jiapeng Luo, Zheng Ma, et al. How far are we to gpt-4v? closing the gap to commercial multimodal models with open-source suites. Science China Information Sciences , 67(12):220101, 2024. 3 [48] Zhe Chen, Jiannan Wu, W enhai W ang, W eijie Su, Guo Chen, Sen Xing, Muyan Zhong, Qinglong Zhang, Xizhou Zhu, Lewei Lu, et al. Internvl: Scaling up vision foundation mod- els and aligning for generic visual-linguistic tasks. In Pr o- ceedings of the IEEE/CVF Confer ence on Computer V ision and P attern Recognition , pages 24185–24198, 2024. 3 [49] An Y ang, Baosong Y ang, Binyuan Hui, Bo Zheng, Bowen Y u, Chang Zhou, Chengpeng Li, Chengyuan Li, Dayiheng Liu, Fei Huang, et al. Qwen2 technical report. eprint arXiv: 2407.10671 , 2024. 3 , 6 [50] Zhihong Shao, Peiyi W ang, Qihao Zhu, Runxin Xu, Junxiao Song, Xiao Bi, Haowei Zhang, Mingchuan Zhang, YK Li, Y ang Wu, et al. Deepseekmath: Pushing the limits of math- ematical reasoning in open language models. arXiv preprint arXiv:2402.03300 , 2024. 3 [51] Aaron van den Oord, Y azhe Li, and Oriol V inyals. Repre- sentation learning with contrastiv e predictiv e coding. arXiv pr eprint arXiv:1807.03748 , 2018. 4 [52] Pengcheng He, Jianfeng Gao, and W eizhu Chen. De- BER T a v3: Improving deBER T a using ELECTRA-style pre- training with gradient-disentangled embedding sharing. In The Eleventh International Conference on Learning Repre- sentations , 2023. 4 [53] Ilya Loshchilov and Frank Hutter . Decoupled weight de- cay regularization. In International Conference on Learning Repr esentations (ICLR) , 2019. 6 [54] Edward J Hu, Y elong Shen, Phillip W allis, Zeyuan Allen- Zhu, Y uanzhi Li, Shean W ang, Lu W ang, W eizhu Chen, et al. Lora: Low-rank adaptation of lar ge language models. ICLR , 1(2):3, 2022. 6 11 Driv e My W ay: Pr eference Alignment of V ision-Language-Action Model f or Personalized Driving Supplementary Material A. Additional Quantitative Results T o further validate whether DMW produces behaviors con- sistent with human e xpectations, we report the detailed user study results on both long-term preference alignment and short-term adaptation to style instructions. A.1. Long-term Prefer ence Alignment Building on the results across scenario types in T able 2 , the av eraged metrics in T able 5 further show clear differences in driv er-specific behavior . Dri vers with higher av erage speeds exhibit consistent behavior across scenarios, whereas more cautious drivers tend to maintain larger headways and lower accelerations. These trends are consistent with the cross- scenario patterns observed in T able 2 , suggesting that the policy aligns with persistent behavioral traits beyond indi- vidual routes. A.2. Adaptation on Style Instruction Beyond long-term preferences, users may also express short-term intentions via style instructions depending on sit- uational context. T o complement objectiv e metrics in T able 1 , and further e v aluate whether the policy adapts to these in- structions, we conduct a user study in which human e valua- tors rate trajectories generated under different style prompts for the same scenario. Each ev aluator is shown short video clips rendered under conservative , neutral , and aggr essive instructions and ask ed to judge whether the resulting behav- ior matches the intended style. Evaluators score each trajectory on a 0-10 scale accord- ing to three criteria: (i) ho w well the behavior follows the instruction, (ii) efficienc y , comfort, and smoothness of driv e, and (iii) perceiv ed safety . As shown in T able 6 , across four representati ve scenario types, both StyleDri ve [ 11 ] and DMW outperform SimLingo baseline [ 42 ], indicating the benefit of style-aware driving adaptation. StyleDri ve [ 11 ] demonstrates improved alignment with short-term style in- structions compared to SimLingo, but still f alls short of DMW . In contrast, DMW consistently achieves the high- est ratings across all styles and scenarios. Evaluators con- sistently observe that under aggressiv e instructions, DMW produces higher speeds, shorter follo wing distances, and more decisive accelerations, while conserv ative instructions yield smoother control profiles and larger safety margins. These results confirm that the proposed policy not only re- sponds to explicit style instructions, but does so in a more sensitiv e manner . T able 5. Driving metrics across all scenario types. Driver DS Speed Effic. Acce. Headway AS Ratings D1 93.38 8.54 262.68 6.46 40.38 0.92 8.7 D2 95.43 5.57 163.38 5.15 49.82 0.92 8.3 D3 95.30 6.40 185.83 5.56 47.05 0.83 7.8 D4 91.96 8.77 274.19 6.17 39.70 0.83 8.0 D5 92.58 7.48 229.93 5.58 39.08 0.83 7.9 D6 97.71 6.16 190.74 6.08 42.41 0.75 7.4 D7 96.11 7.16 218.25 6.36 40.12 0.83 7.9 D8 95.28 5.82 188.27 5.54 43.95 0.92 8.2 D9 96.16 5.60 178.97 5.84 45.07 0.67 7.0 D10 94.20 8.38 258.28 6.89 40.26 1.00 8.6 A.3. Additional Qualitative Results Fig. 7 visualizes how DMW responds to aggressive and conservati ve instructions across safety-critical scenarios. In the lost-of-control scenario, where the ego-v ehicle risks los- ing control due to the bad road conditions, the aggressive in- struction prioritizes efficienc y: it maintains a higher speed and attempts to pass the unstable area quickly . In contrast, the conservati ve instruction leads the agent to maintain a smoother trajectory , and preserve vehicle stability . This di- ver gence highlights how the policy adapts its balance be- tween efficienc y and comfort based on the giv en instruction. A similar pattern emerges in the oncoming-v ehicle intru- sion scenario. When another vehicle inv ades the ego lane, the aggressi ve instruction causes the agent to accelerate and ex ecute a decisi ve rightward maneuver at relati vely high speed. Meanwhile, the conservati ve instruction prompts early caution: the agent reduces speed, yields space sooner , and performs a safer av oidance. In another scenario in volving a parked vehicle blocking the lane, the agent must decide when to safely overtake. Un- der an aggressiv e instruction, corresponding to personal re- quirements such as “I’m in a hurry” or “I’m running late”. The policy seeks the earliest viable gap and initiates the ov ertake quickly to reduce waiting time. In contrast, under a conservati ve instruction, the agent remains patient, yield- ing until the oncoming lane is fully clear , especially under low-visibility conditions. In the scenario where the agent needs to make a left turn at an unsignalized junction, under the aggressiv e instruc- tion, the agent initiates the turn earlier once it identifies a tighter and feasible opening, minimizing delay . In con- trast, the conserv ati ve instruction causes the agent to wait patiently for a safer gap before turning. This cautious be- havior reflects the emphasis on safety and low-risk in com- plex intersection negotiations. T ogether , these examples il- 1 T able 6. User study ratings (0-10) e valuating how well trajectories match intended instructions. Fiv e e valuators (E1-E5) rate trajecto- ries from SimLingo [ 42 ], StyleDriv e [ 11 ], and DMW . Scenario Model Style E1 E2 E3 E4 E5 Emergenc y Brake SimLingo [ 42 ] Conservati ve 7.4 6.7 6.4 7.3 6.5 Neutral 7.0 7.3 7.5 7.0 7.1 Aggressiv e 6.5 7.6 7.2 6.6 7.4 StyleDriv e [ 11 ] Conservati ve 8.2 7.5 7.3 8.0 7.2 Neutral 7.8 8.0 8.2 7.6 7.9 Aggressiv e 7.2 8.4 7.9 7.1 8.2 DMW Conservati ve 9.0 8.1 8.0 8.8 7.9 Neutral 8.4 8.6 9.1 8.1 8.5 Aggressiv e 7.8 9.2 8.3 7.6 9.0 Merging SimLingo [ 42 ] Conservati ve 7.2 6.4 6.3 7.2 6.3 Neutral 6.8 7.0 7.3 6.7 6.8 Aggressiv e 6.2 7.5 6.9 6.1 7.3 StyleDriv e [ 11 ] Conservati ve 8.0 7.3 7.2 7.9 7.1 Neutral 7.6 7.8 8.1 7.4 7.7 Aggressiv e 7.0 8.3 7.6 6.9 8.1 DMW Conservati ve 8.9 7.9 7.7 8.7 7.6 Neutral 8.2 8.4 9.0 8.0 8.3 Aggressiv e 7.5 9.1 8.1 7.4 8.9 Overtaking SimLingo [ 42 ] Conservati ve 7.3 6.3 6.2 7.2 6.2 Neutral 6.9 7.1 7.4 6.8 6.9 Aggressiv e 6.1 7.5 7.0 6.0 7.3 StyleDriv e [ 11 ] Conservati ve 8.1 7.2 7.1 8.0 7.0 Neutral 7.7 7.9 8.2 7.5 7.8 Aggressiv e 6.9 8.4 7.7 6.8 8.2 DMW Conservati ve 9.1 7.7 7.5 8.8 7.6 Neutral 8.3 8.5 9.0 8.0 8.4 Aggressiv e 7.4 9.3 8.2 7.2 9.2 T raffic Sign SimLingo [ 42 ] Conservati ve 7.4 7.0 6.9 7.3 6.8 Neutral 7.1 7.3 7.5 7.0 7.1 Aggressiv e 6.8 7.6 7.2 6.7 7.4 StyleDriv e [ 11 ] Conservati ve 8.3 7.8 7.6 8.1 7.7 Neutral 7.9 8.1 8.3 7.7 8.0 Aggressiv e 7.4 8.6 8.0 7.2 8.4 DMW Conservati ve 8.9 8.3 8.1 8.6 8.2 Neutral 8.5 8.7 8.8 8.2 8.6 Aggressiv e 8.0 9.0 8.4 7.8 8.9 lustrate DMW’ s ability to adapt in real-time according to short-term style instruction. B. Personalized Dri ving Dataset W e pro vide a more detailed description of the collected per - sonalized driving datasets from thirty real dri vers. B.1. Scenarios In T own 12, we collect twenty routes that cov er a di- verse set of representati ve dri ving scenarios under vary- ing weather and illumination conditions. The scenarios are summarized below (descriptions are taken from https : //leaderboard.carla.org/scenarios/ ): • Accident / ParkedObstacle / ConstructionObstacle : B ad road conditions / Opposit e vehicle invades Action under aggressive instructions : Mainta ins higher speeds throu ghout. Action under conservative instruction : R eacts with caution. “Let’s move fast and maintain speed.” “Let’s keep it easy and smooth.” Parked obstacle / T urn at non - signali zed junction Action under aggressive instructions : Overtake at the earliest possible opening. Action under conservative instruction : Wait until a larger safe margin. “I’m okay with taking tighter gaps today . I’m running late.” “Better to let others go first, patience will keep us safest here.” Figure 7. Driving preference under aggressi ve and conserva- tiv e instructions. Red waypoints denote distance parametrized (ev ery 1 m ) na vigation path and green waypoints denote time parametrized (ev ery 0 . 25 s ) trajectory . An obstacle (e.g., a construction zone, an accident, or a parked vehicle) is blocking the ego lane. The ego vehicle must change lanes into traffic moving in the same direc- tion to bypass the obstacle. • SignalizedJunctionLeftT urn / NonSignalizedJunction- LeftT urn : The ego vehicle performs an unprotected left turn at an intersection (can occur at both signalized and unsignalized intersections). • CrossingBicycleFlow : The ego vehicle must ex ecute a turn at an intersection while yielding to bicycles crossing perpendicular to its path. • StaticCutIn : Another vehicle cuts into the ego lane from a queue of stationary traffic. It must decelerate, brake, or change lanes to av oid a collision. 2 • NonSignalizedJunctionRightT urn / SignalizedJunc- tionRightT urn / V anillaNonSignalizedT urn : The ego vehicle makes a right turn at an intersection while yield- ing to crossing traffic. • InterurbanActorFlow : The ego vehicle leav es the in- terurban road by turning left, crossing a fast traf fic flow . • BlockedIntersection : While performing a maneuver , the ego vehicle encounters a stopped vehicle on the road and must perform an emergency brake or an av oidance ma- neuver . • HazardAtSideLane : A slow-mo ving hazard (e.g., bicy- cle) partially obstructs the ego vehicle’ s lane. The ego vehicle must either brake or carefully bypass the hazard (bypassing on the lane with traffic in the same direction). • ParkingCutIn : A parked vehicle exits a parallel parking space into the ego vehicle’ s path. The ego vehicle must slow down to allow the parked vehicle to merge into traf- fic. • V ehicleOpensDoorT woW ays : The ego vehicle needs to av oid a parked vehicle with its door opening into the lane. • DynamicObjectCrossing : A pedestrian suddenly emerges from behind a parked vehicle and enters the lane. The ego vehicle must brake or take ev asiv e action to av oid hitting the pedestrian. • EnterActorFlow : A flow of cars runs a red light in front of the ego when it enters the junction, forcing it to react (interrupting the flow or merging into the flow). These v e- hicles are ’ special’ ones, such as police cars, ambulances, or firetrucks. • HighwayExit : The ego vehicle must cross a lane of mo v- ing traffic to e xit the highway at an off-ramp. • ControlLoss : The ego vehicle loses control due to bad conditions on the road and it must recover , coming back to its original lane. • MergerIntoSlowT raffic : The ego-vehicle merge into a slow traf fic on the off-ramp when e xiting the highway . B.2. A uxiliary Information T o enable reliable and interpretable dri ving preference anal- ysis, we extract en vironmental information and motion statistics from driving logs. Concretely , we record data at 5 Hz , including: • Camera Sensor . A forward-facing RGB camera with a resolution of 1024 × 512 a nd a wide 110 ◦ field of view serves as the primary visual sensor . • Ego-state and Control Signals. W e store full ego- vehicle kinematics, including linear acceleration, angular velocity , speed, and the world-frame pose ( location , rotation ). Human control commands, including throt- tle, brake, steering, gear, hand-brake status, and rev erse flag, together with the speed limit and lane-lev el attributes such as lane ID, lane type, lane width, and whether the ego v ehicle is currently inside a junction. • Surrounding Agents. W e store a detailed description of the leading vehicle ( front vehicle info : ID, type, 3D position, velocity , speed, and color), along with all nearby dynamic agents in other vehicles and walkers . For each surrounding actor , we log its trans- form, velocity , bounding-box extent, and other metadata. Nearby traffic lights and stop signs are also recorded, cap- turing both their spatial relation to the ego and whether they are currently influencing the e go vehicle. • Expert Supervision. Each timestep is paired with privile ged control from the PDM-Lite expert, includ- ing throttle , brake , steer , and the corresponding target speed . • Route Geometry . W e additionally provide route infor- mation in the form of two upcoming waypoints along the global route, transformed into the ego frame as target point and target point next . All actor-le vel annotations are saved as JSON files syn- chronized with the image index. 3

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment