Training the Knowledge Base through Evidence Distillation and Write-Back Enrichment

The knowledge base in a retrieval-augmented generation (RAG) system is typically assembled once and never revised, even though the facts a query requires are often fragmented across documents and buried in irrelevant content. We argue that the knowle…

Authors: Yuxing Lu, Xukai Zhao, Wei Wu, Jinzhuo Wang

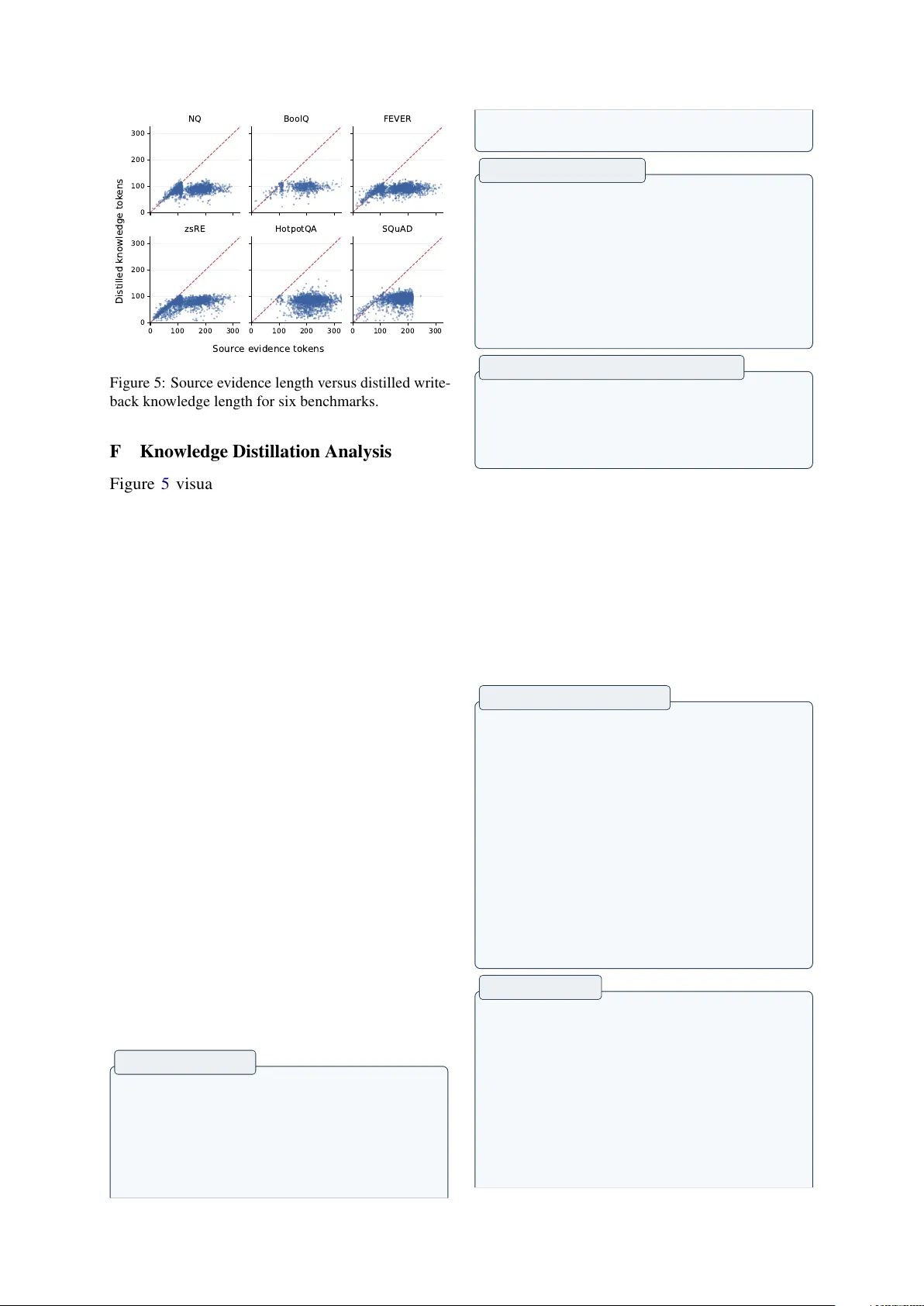

WriteBack-RAG: Training the Knowledge Base through Evidence Distillation and Write-Back Enrichment

Yuxing Lu♠♡, Xukai Zhao♣, Wei Wu♠, Jinzhuo Wang*♠
♠Peking University, ♡Georgia Institute of Technology, ♣Tsinghua University

Abstract

The knowledge base in a retrieval-augmented generation (RAG) system is typically assembled once and never revised, even though the facts a query requires are often fragmented across documents and buried in irrelevant content. We argue that the knowledge base should be treated as a trainable component and propose WriteBack-RAG, a framework that uses labeled examples to identify where retrieval succeeds, isolate the relevant documents, and distill them into compact knowledge units that are indexed alongside the original corpus. Because the method modifies only the corpus, it can be applied once as an offline preprocessing step and combined with any RAG pipeline. Across four RAG methods, six benchmarks, and two LLM backbones, WriteBack-RAG improves every evaluated setting, with gains averaging +2.14%. Cross-method transfer experiments further show that the distilled knowledge benefits RAG pipelines other than the one used to produce it, confirming that the improvement resides in the corpus itself.

1 Introduction

Retrieval-augmented generation (RAG) systems consist of three core components: a retriever, a generator, and a knowledge base (KB) (Hu and Lu, 2024; Fan et al., 2024). Modern RAG research has devoted substantial effort to optimizing the first two: training better retrievers (Shi et al., 2024), teaching generators when and how to use retrieved evidence (Asai et al., 2023; Jiang et al., 2023b), and designing tighter retriever-generator integration (Izacard et al., 2023).
The knowledge base, by contrast, is treated as a fixed input: assembled once from raw document collections such as Wikipedia dumps, textbooks, or web crawls, and never updated in response to downstream task signals.

[Figure 1: Standard RAG retrieves fragmented evidence from raw documents. WriteBack-RAG distills useful evidence into compact write-back documents that improve future retrieval and generation.]

*Corresponding author.

Knowledge bases are composed of raw documents, so the granularity at which knowledge is stored is dictated by document boundaries. However, the knowledge a query requires rarely aligns with these boundaries: the relevant facts are typically distributed across multiple documents (fragmentation), while each document contains substantial content irrelevant to the query (noise). As a result, the retriever surfaces partially relevant documents, but the context the generator receives is both incomplete and diluted.

By observing how a RAG system interacts with the corpus on labeled data, that is, which samples benefit from retrieval and which documents contribute to the generation, we can identify where knowledge is fragmented and should be rewritten and fused. This provides a natural supervision signal for optimizing the KB.

This observation motivates a new concept we call knowledge base training: optimizing the KB itself using supervision from labeled examples, analogous to how model parameters are updated through training data (Appendix A). We instantiate this idea in WriteBack-RAG, a framework that learns from retrieval patterns on training data to improve the knowledge base. Concretely, a two-stage gating mechanism analyzes retrieval behavior to identify which training samples benefit from retrieval and which retrieved documents contribute to better generation.
An LLM-based distiller then fuses and compresses the selected evidence into compact, self-contained knowledge units that are permanently indexed alongside the original corpus. Because WriteBack-RAG augments only the KB, not the retriever or generator, it enhances any RAG pipeline as an orthogonal optimization step, with a one-time offline cost and no additional inference-time overhead.

Our contributions are:

1. We propose treating the knowledge base as a trainable component of RAG systems and introduce WriteBack-RAG, a framework that learns from retrieval patterns on labeled data to restructure and enrich the KB through gated evidence distillation and persistent write-back.
2. We provide extensive empirical validation across four RAG methods (Naive Retrieval, RePlug, Self-RAG, FLARE), six benchmarks (NQ, BoolQ, FEVER, zsRE, HotpotQA, SQuAD), and two LLM backbones (Llama-3.1-8B, Gemma-3-12B), showing consistent improvements in all settings.
3. We present detailed analyses of write-back knowledge properties, including compression statistics, retrieval dynamics, and generalization behavior, providing insight into when and why WriteBack-RAG improves performance.

2 Related Works

Retrieval and Generation Strategies. The standard RAG pipeline retrieves top-$K$ documents and conditions generation on them (Lu et al., 2025; Guu et al., 2020; Borgeaud et al., 2022). A large body of work has improved this pipeline from both sides. On the retrieval side, RePlug (Shi et al., 2024) ensembles generation probabilities over documents for better passage weighting, and HyDE (Gao et al., 2023) generates hypothetical documents to improve query representations. On the generation side, Self-RAG (Asai et al., 2023) introduces reflection tokens for adaptive retrieval decisions, FLARE (Jiang et al., 2023b) triggers retrieval when generation confidence drops, and Atlas (Izacard et al., 2023) jointly trains the retriever and generator. These methods share a common assumption: the knowledge base is a fixed input. They optimize how to search it and how to consume its outputs, but the content and organization of the KB itself are never modified. WriteBack-RAG addresses this independent dimension.

Improving Retrieved Context at Inference Time. A separate line of work aims to improve the quality of the context the generator sees, rather than the retrieval or generation mechanism. RECOMP (Xu et al., 2023) trains extractive and abstractive compressors to shorten retrieved documents. FILCO (Wang et al., 2023) learns to select useful spans within documents. LLMLingua (Jiang et al., 2023a) uses perplexity-based token pruning to compress prompts. GenRead (Yu et al., 2022) bypasses retrieval entirely, prompting the LLM to generate its own context. RAGate (Wang et al., 2025) gates external retrieval according to whether the required knowledge is already available within the model. All of these operate per query at inference time: they produce ephemeral, compressed or generated context that is consumed once and discarded. This means the cost scales linearly with the number of test queries, and knowledge gained from one query never benefits another. WriteBack-RAG inverts this paradigm: it distills and fuses evidence once during an offline phase, producing persistent knowledge units that benefit all future queries at zero inference-time cost.

Knowledge Base Optimization. The idea of directly modifying the knowledge source to improve downstream performance has been explored in two distinct settings, neither of which addresses the RAG corpus. In traditional NLP, knowledge base construction methods extract structured triples from text (Dong et al., 2015; Martinez-Rodriguez et al., 2018), but these produce symbolic KBs rather than retrieval-ready documents. In the model editing literature, methods like ROME (Meng et al., 2022a) and MEMIT (Meng et al., 2022b) update factual associations by modifying model parameters, effectively "editing the KB" that lives inside the network weights. However, these parametric edits are brittle at scale and entangled with the model's other capabilities. WriteBack-RAG pursues a non-parametric alternative: rather than editing model weights, it edits the external corpus that the model retrieves from. This is more modular (the enriched KB works with any retriever and generator), more interpretable (write-back units are readable text), and more scalable (adding documents does not risk degrading the model). To our knowledge, WriteBack-RAG is the first framework to treat the RAG knowledge base as a trainable component that is systematically optimized using downstream task signals.

[Figure 2: The WriteBack-RAG pipeline. During training (top), a two-stage gating mechanism identifies examples where retrieval helps and selects contributing documents. An LLM distiller fuses the selected evidence into a compact knowledge unit, which is indexed into a separate write-back corpus. During testing (bottom), the retriever searches the combined knowledge source with no changes to the retriever or generator.]

3 Problem Formulation

A RAG system consists of three components: a retriever $R$, a generator $G$, and a knowledge base $\mathcal{K} = \{d_1, d_2, \dots, d_{|\mathcal{K}|}\}$ containing $|\mathcal{K}|$ documents. Given a query $q$, the retriever returns a set of top-$K$ documents:

$$\mathcal{D}_q = R(q, \mathcal{K}) = \{d_{(1)}, d_{(2)}, \dots, d_{(K)}\} \tag{1}$$

and the generator produces an answer conditioned on both the query and the retrieved documents:

$$\hat{a} = G(q, \mathcal{D}_q) \tag{2}$$

The quality of the answer is measured by a task-specific metric $\mathcal{M}(q, a \mid G, \mathcal{D}_q)$, where $a$ is the reference answer.

Existing work optimizes $R$ and $G$ while treating $\mathcal{K}$ as fixed. We instead propose to optimize $\mathcal{K}$ while keeping $R$ and $G$ unchanged. Given a set of labeled training examples $\mathcal{D}_{\text{train}} = \{(q_i, a_i)\}_{i=1}^{N}$, the KB training objective is to find a write-back corpus $\mathcal{K}_{\text{wb}}$ such that the augmented KB $\mathcal{K}' = \mathcal{K} \cup \mathcal{K}_{\text{wb}}$ maximizes downstream performance:

$$\mathcal{K}_{\text{wb}}^{*} = \arg\max_{\mathcal{K}_{\text{wb}}} \sum_{(q, a) \in \mathcal{D}_{\text{test}}} \mathcal{M}\big(q, a \mid G, R(q, \mathcal{K} \cup \mathcal{K}_{\text{wb}})\big) \tag{3}$$

At test time, retrieval operates over the combined index:

$$\mathcal{D}'_q = R(q, \mathcal{K}') = \text{Top-}K\big(R(q, \mathcal{K}) \cup R(q, \mathcal{K}_{\text{wb}})\big) \tag{4}$$

4 Methods

4.1 Overview

WriteBack-RAG instantiates the KB training objective (Eq. 3) by learning from how a RAG system interacts with the corpus on labeled data. The key insight is that retrieval patterns on training examples reveal where the KB's knowledge organization is deficient (where relevant facts are fragmented across documents or buried in noise), and this signal can be used to systematically restructure the KB.

As shown in Figure 2, WriteBack-RAG operates in two phases. During the training phase, a two-stage gating mechanism first selects training examples where retrieval genuinely helps (utility gate, §4.2) and then identifies which retrieved documents carry useful knowledge (document gate, §4.3).
The selected evidence is fused and compressed into a single knowledge unit via LLM-based distillation (§4.4) and indexed into a separate write-back corpus (§4.5). During the test phase, the retriever searches the combined knowledge source $\mathcal{K}' = \mathcal{K} \cup \mathcal{K}_{\text{wb}}$ with no changes to the retriever or generator. The full pipeline is given in Algorithm 1.

Algorithm 1: WriteBack-RAG KB Training

    Require: D_train, R, G, K, M, τ_δ, τ_s, τ_doc
    Ensure:  Trained KB K′ = K ∪ K_wb

    K_wb ← ∅
    for each (q_i, a_i) ∈ D_train do
        s_i^nr ← M(q_i, a_i | G)
        D_i ← R(q_i, K);  s_i^rag ← M(q_i, a_i | G, D_i)
        δ_i ← s_i^rag − s_i^nr
        if δ_i > τ_δ and s_i^rag > τ_s then          // Utility Gate passed
            D*_i ← ∅
            for each d_j ∈ D_i do
                s_{i,j} ← M(q_i, a_i | G, d_j)
                if s_{i,j} − s_i^nr > τ_doc then
                    D*_i ← D*_i ∪ {d_j}              // Document Gate
                end if
            end for
            if D*_i = ∅ then
                D*_i ← Top-n_min(D_i)
            end if
            k_i ← F(q_i, D*_i)                       // Distillation
            K_wb ← K_wb ∪ {k_i}                      // Write-Back
        end if
    end for
    Index K_wb; set K′ ← K ∪ K_wb

Both gating stages rely on two reference scores computed for each training example $(q_i, a_i)$. The no-retrieval score measures what the generator can answer from parametric knowledge alone:

$$s^{\text{nr}}_i = \mathcal{M}(q_i, a_i \mid G) \tag{5}$$

The RAG score measures performance with retrieval from the original KB:

$$s^{\text{rag}}_i = \mathcal{M}\big(q_i, a_i \mid G, R(q_i, \mathcal{K})\big) \tag{6}$$

The gap $\delta_i = s^{\text{rag}}_i - s^{\text{nr}}_i$ quantifies the retrieval benefit for each example and drives all gating decisions.

The backbone RAG method (e.g., Naive Retrieval, RePlug, Self-RAG, FLARE) is used consistently for computing these scores, for distillation, and for final evaluation. WriteBack-RAG is an orthogonal optimization step that works on top of any backbone without modifying it.
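
The training loop of Algorithm 1 can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the callables `retrieve`, `score`, and `distill` are hypothetical stand-ins for $R(\cdot, \mathcal{K})$, $\mathcal{M}(\cdot \mid G, \cdot)$, and the distiller $F$, and the default thresholds are the values reported in Section 5.2.

```python
from typing import Callable, List, Sequence, Tuple

Doc = str
# Hypothetical interfaces (assumptions for illustration only):
#   retrieve(q)        -> ranked list of documents, standing in for R(q, K)
#   score(q, a, docs)  -> float, standing in for M(q, a | G, docs); docs=[] means no retrieval
#   distill(q, docs)   -> str, standing in for the LLM distiller F (no gold answer passed)
RetrieveFn = Callable[[str], List[Doc]]
ScoreFn = Callable[[str, str, Sequence[Doc]], float]
DistillFn = Callable[[str, Sequence[Doc]], Doc]


def train_kb(
    train_set: Sequence[Tuple[str, str]],
    retrieve: RetrieveFn,
    score: ScoreFn,
    distill: DistillFn,
    tau_delta: float = 0.01,
    tau_s: float = 0.1,
    tau_doc: float = 0.01,
    n_min: int = 2,
) -> List[Doc]:
    """Build the write-back corpus K_wb from labeled (query, answer) pairs."""
    write_back: List[Doc] = []
    for q, a in train_set:
        s_nr = score(q, a, [])        # no-retrieval score (Eq. 5)
        docs = retrieve(q)
        s_rag = score(q, a, docs)     # RAG score (Eq. 6)
        # Utility gate: retrieval must help and the RAG answer must be good enough.
        if not (s_rag - s_nr > tau_delta and s_rag > tau_s):
            continue
        # Document gate: keep documents that beat the no-retrieval score on their own.
        kept = [d for d in docs if score(q, a, [d]) - s_nr > tau_doc]
        if not kept:
            kept = docs[:n_min]       # fallback: top-n_min by retrieval rank
        write_back.append(distill(q, kept))  # distillation, then write-back
    return write_back
```

In this sketch each selected example costs one scoring call per retrieved document plus one distillation call, which matches the cost breakdown given in Section 5.2.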

4.2 Utility Gate

The utility gate operates at the sample level, selecting training examples where retrieved knowledge makes a genuine difference. If the generator can already answer correctly without retrieval, or if retrieval does not improve the answer, there is no useful signal for KB training. WriteBack-RAG retains a training example $(q_i, a_i)$ if and only if:

$$\delta_i > \tau_\delta \quad \text{and} \quad s^{\text{rag}}_i > \tau_s \tag{7}$$

The margin threshold $\tau_\delta$ ensures retrieval provides non-negligible improvement, and the quality threshold $\tau_s$ ensures the retrieval-augmented answer is actually correct. Their conjunction guards against two failure modes: high gain but low absolute quality (retrieval improves a wrong answer to a slightly less wrong one), or high quality already achievable without retrieval. We denote the set of examples passing the utility gate as $\mathcal{D}_{\text{util}} \subseteq \mathcal{D}_{\text{train}}$.

4.3 Document Gate

The document gate operates at the document level within each utility-approved example. Among the $K$ retrieved documents, not all carry useful knowledge: some are noisy, tangential, or distracting. The document gate isolates the specific documents that contribute to the improved answer.

For each retrieved document $d_j$, WriteBack-RAG measures its standalone contribution:

$$s^{\text{doc}}_{i,j} = \mathcal{M}(q_i, a_i \mid G, d_j) \tag{8}$$

A document passes if it provides information beyond the generator's parametric knowledge:

$$\mathcal{D}^{*}_i = \{\, d_j \in \mathcal{D}_i \mid s^{\text{doc}}_{i,j} - s^{\text{nr}}_i > \tau_{\text{doc}} \,\} \tag{9}$$

If no documents pass ($\mathcal{D}^{*}_i = \emptyset$), we retain the top-$n_{\min}$ by retrieval rank as a fallback. Removing weak evidence before distillation ensures the resulting knowledge units are focused and more likely to generalize beyond the original training query.
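
To make the two gates concrete, consider a small worked example. The scores below are invented for illustration and are not taken from the paper's data; the thresholds are the defaults reported in Section 5.2 ($\tau_\delta = 0.01$, $\tau_s = 0.1$, $\tau_{\text{doc}} = 0.01$, $n_{\min} = 2$).

$$
\begin{aligned}
\text{(a)}\quad & s^{\text{nr}} = 0.40,\ s^{\text{rag}} = 0.405 && \Rightarrow\ \delta = 0.005 \le \tau_\delta && \text{rejected: negligible gain} \\
\text{(b)}\quad & s^{\text{nr}} = 0.02,\ s^{\text{rag}} = 0.08 && \Rightarrow\ \delta = 0.06 > \tau_\delta,\ \ s^{\text{rag}} \le \tau_s && \text{rejected: low absolute quality} \\
\text{(c)}\quad & s^{\text{nr}} = 0,\ s^{\text{rag}} = 1 && \Rightarrow\ \delta = 1 > \tau_\delta,\ \ s^{\text{rag}} > \tau_s && \text{retained}
\end{aligned}
$$

For the retained example (c), suppose the five standalone document scores are $(s^{\text{doc}}_{1}, \dots, s^{\text{doc}}_{5}) = (1, 0, 0, 1, 0)$. Then

$$
\mathcal{D}^{*} = \{\, d_j \mid s^{\text{doc}}_{j} - 0 > 0.01 \,\} = \{ d_1, d_4 \}.
$$

If instead every standalone score were $0$, no document would pass and the fallback would keep the top-$n_{\min}$ documents by rank, $\mathcal{D}^{*} = \{ d_1, d_2 \}$.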

4.4 Distillation

Given a training query $q_i$ and its gated evidence $\mathcal{D}^{*}_i$, an LLM-based distiller $F$ synthesizes a single knowledge unit:

$$k_i = F(q_i, \mathcal{D}^{*}_i) \tag{10}$$

The distiller takes multiple gated documents as input and produces a single compact passage as output. Its core operation is fusion: merging correlated knowledge that is scattered across separate documents, i.e., information that is related but separated by document boundaries in the original KB, into one coherent unit. At the same time, it compresses away redundant or tangential content within each source document, producing a denser passage. The training query $q_i$ serves only as a locator that identifies which documents should be fused; the resulting knowledge unit is written in a topic-level, encyclopedic style so that it can be retrieved by diverse future queries, not just the original one (full prompt in Appendix G).

The goal is that a single distilled unit is at least as useful as the full multi-document evidence it was derived from:

$$\mathcal{M}(q, a \mid G, k_i) \ge \mathcal{M}(q, a \mid G, \mathcal{D}^{*}_i) \tag{11}$$

4.5 Write-Back

The distilled knowledge units are collected into a separate write-back corpus:

$$\mathcal{K}_{\text{wb}} = \{\, k_i \mid (q_i, a_i) \in \mathcal{D}_{\text{util}} \,\} \tag{12}$$

A retrieval index is built for $\mathcal{K}_{\text{wb}}$ using the same retriever encoder. At inference time, the retriever searches $\mathcal{K}$ and $\mathcal{K}_{\text{wb}}$ independently and merges the results into a single top-$K$ set (Eq. 4). The trained knowledge base is $\mathcal{K}' = \mathcal{K} \cup \mathcal{K}_{\text{wb}}$.

We store write-back knowledge in a separate index rather than merging it into the original KB for three reasons: (1) the original corpus is kept clean and unmodified, avoiding any risk of corrupting existing retrieval quality; (2) the write-back index can be updated, replaced, or rolled back independently without rebuilding the base index; and (3) it introduces no additional storage overhead beyond the distilled documents themselves.
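
The merge step of Eq. 4 can be illustrated with a small, dependency-free sketch. The paper states that the two indices are searched independently and merged into a single top-$K$ set, but it does not spell out the merge rule. The sketch below assumes that both indices share one encoder, so inner-product scores are directly comparable, and merges by score. The names `search` and `merged_top_k` are hypothetical, and the brute-force search stands in for a real vector index.

```python
import heapq
from typing import List, Sequence, Tuple

Vector = Sequence[float]
Hit = Tuple[float, str, int]  # (similarity, index name, row id)


def search(vectors: Sequence[Vector], query: Vector, k: int, name: str) -> List[Hit]:
    """Brute-force inner-product search over one index; best hits first."""
    scored = [
        (sum(x * y for x, y in zip(vec, query)), name, row)
        for row, vec in enumerate(vectors)
    ]
    return heapq.nlargest(k, scored, key=lambda hit: hit[0])


def merged_top_k(
    base: Sequence[Vector],
    write_back: Sequence[Vector],
    query: Vector,
    k: int = 5,
) -> List[Hit]:
    """Search both indices independently, then keep the k best hits overall.

    Assumption: scores from the two indices are comparable because the same
    encoder produced all vectors.
    """
    candidates = search(base, query, k, "base") + search(write_back, query, k, "wb")
    return heapq.nlargest(k, candidates, key=lambda hit: hit[0])
```

Because the base index is never modified in this design, dropping the write-back corpus amounts to calling `search` on the base vectors alone, which reflects the rollback property listed as reason (2) above.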

Because WriteBack-RAG augments only the KB, not the retriever or generator, it enhances any RAG pipeline as an orthogonal optimization step (see Appendix C for a detailed discussion).

5 Experiments

5.1 Datasets

We evaluate on six benchmarks from the FlashRAG collection (Jin et al., 2025): Natural Questions (NQ) (Kwiatkowski et al., 2019), BoolQ (Clark et al., 2019), FEVER (Thorne et al., 2018), zsRE (Levy et al., 2017), HotpotQA (Yang et al., 2018), and SQuAD (Rajpurkar et al., 2016). We use the preprocessed benchmark releases provided by FlashRAG and adopt the FlashRAG-provided Wikipedia corpus as the external knowledge source for retrieval.

These datasets cover a diverse set of knowledge-intensive tasks. NQ evaluates open-domain question answering over Wikipedia; BoolQ evaluates naturally occurring yes/no question answering; FEVER evaluates evidence-based fact verification; zsRE evaluates slot filling / relation extraction formulated as question answering; HotpotQA evaluates multi-hop question answering that requires aggregating evidence across multiple documents; and SQuAD evaluates extractive question answering. Table 1 summarizes the datasets and evaluation metrics used in the main paper, while Appendix Table 5 provides detailed task descriptions and split statistics. Following our evaluation setup, we report Accuracy on NQ, BoolQ, zsRE, and HotpotQA; F1 on FEVER; and Exact Match (EM) on SQuAD.

Table 1: Main evaluation datasets. Detailed descriptions and split statistics are given in Appendix Table 5.

| Dataset | Task | Metric | Train | Test |
|---|---|---|---|---|
| NQ | Open-domain QA | Acc | 79,168 | 3,610 |
| BoolQ | Yes/No QA | Acc | 9,427 | 3,270 |
| FEVER | Fact verification | F1 | 104,966 | 10,444 |
| zsRE | Slot filling | Acc | 147,909 | 3,724 |
| HotpotQA | Multi-hop QA | Acc | 90,447 | 7,405 |
| SQuAD | Extractive QA | EM | 87,599 | 10,570 |

5.2 Implementation Details

We use E5-base-v2 (Wang et al., 2022) as the retriever with $K = 5$ documents; the same encoder is used to index both $\mathcal{K}$ and $\mathcal{K}_{\text{wb}}$. In each configuration, the same LLM (Llama-3.1-8B (Grattafiori et al., 2024) or Gemma-3-12B (Team et al., 2024)) serves as both the generator $G$ and the distiller $F$; the distiller operates only during the training phase with a task-specific prompt (Appendix G).

For gating, we set $\tau_s = 0.1$ and $\tau_\delta = 0.01$ (any strict improvement suffices, i.e., $\delta_i > 0$). The document gate uses $\tau_{\text{doc}} = 0.01$ with $n_{\min} = 2$ fallback documents. Threshold sensitivity is analyzed in Section 6.5. Notably, the distiller does not receive the gold answer, so there is no direct answer leakage into the write-back corpus (Appendix B). $\mathcal{K}_{\text{wb}}$ is stored as a separate FAISS index (Douze et al., 2025); at inference time, both indices are searched independently and results are merged into a single top-$K$ set. Full hyperparameters are given in Appendix Table 6.

The training phase has three cost components: baseline scoring ($2N$ generator calls), document gating (up to $|\mathcal{D}_{\text{util}}| \times K$ calls), and distillation ($|\mathcal{D}_{\text{util}}|$ calls). For NQ ($N = 79{,}168$, $|\mathcal{D}_{\text{util}}| = 12{,}295$, $K = 5$), this totals approximately 220K generator calls, completing in 0.5 hours on two H200 GPUs. This is a one-time offline cost; at inference time, write-back adds zero overhead beyond a marginally larger retrieval index.

Table 2: Main results across six benchmarks, four RAG methods, and two LLMs. "+ WB" denotes the method directly above it with the write-back corpus added. Numbers in parentheses show absolute gains over the corresponding retrieval baseline.

| LLM | Method | NQ (Acc) | BoolQ (Acc) | FEVER (F1) | zsRE (Acc) | HotpotQA (Acc) | SQuAD (EM) |
|---|---|---|---|---|---|---|---|
| Gemma-3-12B | No Retrieval | 30.61 | 63.39 | 34.24 | 17.29 | 24.28 | 54.36 |
| | Naive RAG | 31.44 | 80.85 | 32.77 | 22.34 | 41.13 | 60.89 |
| | + WB | 34.82 (+3.38) | 83.12 (+2.27) | 37.89 (+5.12) | 22.82 (+0.48) | 41.99 (+0.86) | 61.24 (+0.35) |
| | RePlug | 31.39 | 80.92 | 32.73 | 22.34 | 41.13 | 60.25 |
| | + WB | 34.63 (+3.24) | 83.52 (+2.60) | 37.92 (+5.19) | 22.77 (+0.43) | 41.97 (+0.84) | 61.39 (+1.14) |
| | Self-RAG | 34.77 | 77.25 | 29.61 | 19.68 | 27.49 | 61.73 |
| | + WB | 36.53 (+1.76) | 79.81 (+2.56) | 32.08 (+2.47) | 20.03 (+0.35) | 28.73 (+1.24) | 63.22 (+1.49) |
| | FLARE | 38.50 | 84.22 | 46.25 | 21.18 | 29.82 | 60.55 |
| | + WB | 41.23 (+2.73) | 84.73 (+0.51) | 51.31 (+5.06) | 21.64 (+0.46) | 30.18 (+0.36) | 61.78 (+1.23) |
| Llama-3.1-8B | No Retrieval | 29.17 | 64.82 | 33.13 | 16.43 | 23.90 | 55.18 |
| | Naive RAG | 32.15 | 82.43 | 34.08 | 21.80 | 42.33 | 62.14 |
| | + WB | 35.84 (+3.69) | 84.95 (+2.52) | 39.89 (+5.81) | 22.49 (+0.69) | 43.56 (+1.23) | 63.23 (+1.09) |
| | RePlug | 31.92 | 82.51 | 33.96 | 21.78 | 42.12 | 61.83 |
| | + WB | 35.53 (+3.61) | 85.45 (+2.94) | 39.60 (+5.64) | 22.37 (+0.59) | 43.12 (+1.00) | 63.42 (+1.59) |
| | Self-RAG | 35.18 | 79.20 | 31.45 | 19.94 | 29.33 | 63.58 |
| | + WB | 37.48 (+2.30) | 82.13 (+2.93) | 34.77 (+3.32) | 20.69 (+0.75) | 31.04 (+1.71) | 65.45 (+1.87) |
| | FLARE | 39.42 | 85.61 | 48.18 | 21.50 | 31.25 | 62.29 |
| | + WB | 42.83 (+3.41) | 86.45 (+0.84) | 53.90 (+5.72) | 22.27 (+0.77) | 32.12 (+0.87) | 64.16 (+1.87) |

6 Results

We organize the analysis around five research questions: whether KB training improves downstream accuracy (RQ1), what the write-back corpus looks like in practice (RQ2), where the retained evidence sits in the retrieval ranking (RQ3), whether write-back knowledge transfers across RAG methods (RQ4), and how sensitive the pipeline is to its main hyperparameters (RQ5).

6.1 RQ1: Overall Performance

Table 2 reports results for all 48 settings (4 RAG methods × 6 datasets × 2 LLMs). WriteBack-RAG improves every setting, with an average absolute gain of +2.14% (prompts and examples can be found in Appendices G and H).
The effect is not driven by any particular backbone or model scale: averaged over datasets and LLMs, Naive RAG gains +2.29%, RePlug +2.40%, Self-RAG +1.90%, and FLARE +1.99%; averaged over methods and datasets, Gemma-3-12B gains +1.92% and Llama-3.1-8B gains +2.36%.

The size of the improvement varies across tasks in a way that aligns with the nature of the knowledge demand. FEVER (+4.79%) and NQ (+3.01%) benefit most, as both require locating specific factual evidence that is often scattered across Wikipedia passages, exactly the scenario where fusing and compressing evidence should help. BoolQ (+2.15%) also sees clear gains despite its short-answer format. Improvements on zsRE (+0.56%), HotpotQA (+1.01%), and SQuAD (+1.33%) are smaller but uniformly positive. We note that even the smallest gains are achieved at zero inference-time cost: the only change is a slightly larger retrieval index.

Two observations deserve emphasis. First, the gains on Self-RAG and FLARE show that KB training is complementary to adaptive retrieval strategies, not redundant with them: these methods already decide when and whether to retrieve, yet still benefit from a better-organized corpus. Second, write-back helps even when retrieval itself hurts: on FEVER with Gemma-3-12B, Naive RAG (32.77%) underperforms the no-retrieval baseline (34.24%), yet adding write-back raises F1 to 37.89%, well above both. This suggests that distilled documents can partially compensate for noisy retrieval by placing more focused evidence within reach of the retriever.

6.2 RQ2: Selection and Compression

Table 3 shows the write-back construction process under Gemma-3-12B + Naive RAG (we use Naive RAG as the reference setting throughout the analysis to isolate the effect of write-back from retrieval strategy differences; see Appendix I).

Table 3: Training-time write-back construction statistics. Selected Rate is the fraction of training examples written back to the KB. Retained Docs is the average number of retained documents after document filtering. Source Tokens and Distilled Tokens denote the average source and distilled token counts for selected examples. Compression is computed as source tokens divided by distilled tokens. Fallback Rate is the fraction of selected examples for which no document passed the document gate and the top-$n_{\min}$ fallback was used.

| Dataset | Selected Rate | Retained Docs | Source Tokens | Distilled Tokens | Compression | Fallback Rate |
|---|---|---|---|---|---|---|
| NQ | 14.0% | 1.77 | 183.4 | 87.0 | 2.15× | 5.9% |
| BoolQ | 6.3% | 2.79 | 288.2 | 92.7 | 3.21× | 29.2% |
| FEVER | 9.1% | 2.37 | 243.0 | 85.6 | 2.88× | 13.5% |
| zsRE | 11.6% | 2.11 | 216.0 | 71.6 | 3.51× | 5.3% |
| HotpotQA | 49.3% | 4.76 | 489.2 | 79.8 | 6.79× | 1.2% |
| SQuAD | 48.1% | 1.97 | 203.2 | 87.9 | 2.55× | 96.2% |

The utility gate selects vastly different fractions of training data depending on the task: only 6-14% for NQ, BoolQ, FEVER, and zsRE, but nearly half for HotpotQA (49.3%) and SQuAD (48.1%). The gap reflects how much each task depends on retrieval beyond the model's parametric knowledge. HotpotQA, by design, requires cross-document reasoning that the generator cannot perform alone, so a large share of examples exhibit a positive retrieval benefit. SQuAD's high selection rate has a different explanation: its fallback rate of 96.2% indicates that for extractive QA, where the answer typically resides in a single passage, individual documents rarely surpass the no-retrieval baseline on their own. In such cases the fallback mechanism ensures that distillation still receives a compact evidence bundle, and the downstream gains on SQuAD (+0.35% to +1.87% across settings) confirm that write-back remains effective even when the document gate defers to fallback.

After document filtering, the evidence bundles are compact: roughly 2 documents on average for most tasks, and 4.76 for HotpotQA.
The distiller compresses these bundles by 2.15-6.79×, producing write-back units of 72-93 tokens. The strongest compression occurs on HotpotQA, where multi-document bundles averaging 489 tokens are reduced to 80-token units. Appendix Figure 5 and Appendix F confirm this pattern: across all tasks, the majority of points fall below the identity line. The spread within each panel indicates that the distiller adapts its compression to the input length rather than producing fixed-length outputs.

6.3 RQ3: Evidence Rank Distribution

To further understand how the document gate selects useful evidence, we analyze the retrieval-rank distribution of retained documents. Figure 3 shows, for each dataset, the fraction of retained documents originating from each rank among the top-5 retrieved results.

[Figure 3: Retrieval-rank distribution of retained documents. Each panel shows the fraction of retained documents among the retrieved documents. Panels: NQ, BoolQ, FEVER, zsRE, HotpotQA, SQuAD. x-axis: retrieval rank (1 to 5); y-axis: fraction of retained documents.]

For NQ, BoolQ, FEVER, and zsRE, the distribution is clearly top-heavy: rank-1 and rank-2 documents account for the largest share, with a steady decline toward rank-5. This indicates that the retriever already places useful evidence near the top for these tasks; the document gate's primary role is to filter out the lower-ranked noise rather than to rescue useful documents from deep in the list.

HotpotQA and SQuAD illustrate two different non-standard patterns. HotpotQA is nearly flat across ranks 1 to 5, indicating that useful evidence is distributed broadly across the retrieved set rather than concentrated in the top few documents. This is consistent with its multi-hop nature: answering requires combining facts from multiple passages regardless of their retrieval score.
SQuAD is almost entirely concentrated on ranks 1 and 2, which directly reflects its high fallback rate (96.2%, Table 3): the fallback mechanism defaults to the top-$n_{\min}$ documents, so the rank profile here illustrates fallback behavior rather than document-gate selectivity.

[Figure 4: Cross-writeback robustness. Same-WB uses write-back knowledge from the same RAG method, while Cross-WB uses write-back knowledge from the other method. Numbers above the bars denote absolute gains over the No-WB (retrieval-only) baseline. Panels: NQ, BoolQ. x-axis: evaluation method (Naive RAG, RePlug); y-axis: accuracy (%). Bar labels (Same-WB / Cross-WB): NQ Naive RAG +3.38 / +3.82; NQ RePlug +3.24 / +3.49; BoolQ Naive RAG +2.26 / +2.38; BoolQ RePlug +2.60 / +2.57.]

6.4 RQ4: Transfer and Reuse

A key question is whether write-back knowledge is specific to the RAG method that produced it, or whether it behaves as a reusable improvement to the knowledge source itself. Figure 4 addresses this with a cross-writeback experiment between Naive RAG and RePlug. Same-WB evaluates a method using its own write-back corpus; Cross-WB evaluates it using the corpus distilled by the other method.

Across all four evaluation settings, both Same-WB and Cross-WB outperform the corresponding no-write-back baseline. Same-WB yields gains of +2.26% to +3.38%, while Cross-WB yields +2.38% to +3.82%. The gap between the two never exceeds 0.44% in either direction, and in three of four cases Cross-WB is marginally better. If the distilled documents were encoding artifacts of a specific decoding policy rather than genuine improvements to the knowledge source, performance should degrade noticeably under cross-method reuse. Instead, the write-back corpus produced by one method is essentially interchangeable with that of another, indicating that WriteBack-RAG improves the corpus itself rather than fitting to a particular pipeline.

6.5 RQ5: Component Ablations

Table 4 ablates three controls of the write-back pipeline on NQ (Naive RAG baseline: 31.44% Acc). Every write-back configuration outperforms this baseline, with gains ranging from +1.75 to +3.45 points, so the method does not depend on precise hyperparameter tuning.

Table 4: Ablation study on the utility gate threshold $\tau_s$, document gate threshold $\tau_{\text{doc}}$, and fallback size $n_{\min}$. † marks the default configuration in the main experiments. No-WB retrieval baseline on NQ: 31.44 Acc.

| Utility gate τ_s | 0 | 0.05 | 0.10† | 0.15 | 0.20 |
|---|---|---|---|---|---|
| NQ Acc | 34.78 | 34.76 | 34.82 | 34.71 | 34.66 |
| Document gate τ_doc | 0 | 0.01† | 0.03 | 0.05 | 0.10 |
| NQ Acc | 34.89 | 34.82 | 34.79 | 33.85 | 33.76 |
| Fallback n_min | 1 | 2† | 3 | 4 | 5 |
| NQ Acc | 33.19 | 34.82 | 34.71 | 33.60 | 34.28 |

The utility gate is the least sensitive: varying $\tau_s$ from 0 to 0.20 changes accuracy by only 0.16 points (34.66%-34.82%), indicating that its role is simply to exclude clearly uninformative examples.

The document gate has a larger effect. Light filtering performs best: $\tau_{\text{doc}} = 0$ yields 34.89% and the default $\tau_{\text{doc}} = 0.01$ yields 34.82%, but raising the threshold to 0.05 or 0.10 drops accuracy to 33.85% and 33.76%. This suggests that aggressive standalone contribution tests discard documents that are individually weak but become useful after fusion, consistent with the evidence patterns observed in RQ2 and RQ3.

The fallback size $n_{\min}$ has the strongest effect. A single fallback document (33.19%) is insufficient since one passage rarely provides enough material for a good rewrite. Performance peaks at the default $n_{\min} = 2$ (34.82%) and declines for both smaller and larger bundles, suggesting a trade-off between having enough material for distillation and avoiding the reintroduction of noise. Larger fallback sizes also increase the offline distillation cost, as the distiller must process more source tokens per example, making $n_{\min} = 2$ a practical choice that balances accuracy and efficiency.
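
The sensitivity figures quoted above follow directly from Table 4, and a few lines of Python make the arithmetic explicit. The numbers are copied from the table; the helper name `summarize` and the dictionary layout are illustrative choices, not part of the paper.

```python
BASELINE = 31.44  # No-WB Naive RAG accuracy on NQ (Table 4)

# NQ accuracy for each ablated setting, copied from Table 4.
ABLATIONS = {
    "tau_s": {0.0: 34.78, 0.05: 34.76, 0.10: 34.82, 0.15: 34.71, 0.20: 34.66},
    "tau_doc": {0.0: 34.89, 0.01: 34.82, 0.03: 34.79, 0.05: 33.85, 0.10: 33.76},
    "n_min": {1: 33.19, 2: 34.82, 3: 34.71, 4: 33.60, 5: 34.28},
}


def summarize(ablations, baseline):
    """Per control: smallest and largest gain over the baseline, and the accuracy spread."""
    summary = {}
    for control, accuracies in ablations.items():
        values = list(accuracies.values())
        summary[control] = {
            "min_gain": round(min(values) - baseline, 2),
            "max_gain": round(max(values) - baseline, 2),
            "spread": round(max(values) - min(values), 2),
        }
    return summary


if __name__ == "__main__":
    for control, stats in summarize(ABLATIONS, BASELINE).items():
        print(control, stats)
```

The spread column gives the ordering used in the text: the utility gate varies least (0.16 points), the document gate more (1.13 points), and the fallback size most (1.63 points).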
7 Conclusion

We proposed WriteBack-RAG, a framework that treats the knowledge base as a trainable component of RAG systems. By observing which training examples benefit from retrieval and which documents contribute, WriteBack-RAG distills scattered evidence into compact knowledge units that are indexed alongside the original corpus. The approach modifies only the KB and is therefore compatible with any retriever and generator. Experiments across four RAG methods, six benchmarks, and two LLM backbones show that write-back consistently improves downstream performance, with an average gain of +2.14%. Cross-method transfer experiments confirm that the distilled knowledge is a property of the corpus, not of the pipeline that produced it. These results establish WriteBack-RAG as a viable method for improving RAG, complementary to advances in retrieval and generation.

8 Limitations

WriteBack-RAG has several limitations. It relies on labeled training examples, so its effectiveness in low-label or unsupervised settings remains unclear (although the labels could be replaced by LLM-as-a-Judge signals). The quality of the auxiliary corpus also depends on the quality of the underlying LLM: unsupported abstractions or hallucinated details may be written back and later retrieved. In addition, our experiments are limited to public Wikipedia-based benchmarks, leaving domain transfer, multilingual settings, and continuously updated corpora for future work. Finally, WriteBack-RAG introduces a nontrivial offline cost and currently studies only additive write-back, without deletion, deduplication, or contradiction resolution.

Ethical Consideration

Because WriteBack-RAG writes distilled knowledge back into a retrievable corpus, errors or biases in the distillation process may persist and affect future queries.
We mitigate direct answer leakage by not exposing the gold answer during distillation, and we store write-back knowledge in a separate index to support inspection and rollback. However, the method still inherits biases from both the source corpus and the LLM used for distillation. Our experiments use public benchmark releases and a public Wikipedia corpus, but applying the method to proprietary or user-generated data would require additional safeguards for privacy, access control, and sensitive-content filtering. The method also incurs additional offline computation, which should be weighed against its downstream benefits.

References

Akari Asai, Zeqiu Wu, Yizhong Wang, Avirup Sil, and Hannaneh Hajishirzi. 2023. Self-rag: Learning to retrieve, generate, and critique through self-reflection. In The Twelfth International Conference on Learning Representations.

Sebastian Borgeaud, Arthur Mensch, Jordan Hoffmann, Trevor Cai, Eliza Rutherford, Katie Millican, George Bm Van Den Driessche, Jean-Baptiste Lespiau, Bogdan Damoc, Aidan Clark, and 1 others. 2022. Improving language models by retrieving from trillions of tokens. In International conference on machine learning, pages 2206–2240. PMLR.

Christopher Clark, Kenton Lee, Ming-Wei Chang, Tom Kwiatkowski, Michael Collins, and Kristina Toutanova. 2019. Boolq: Exploring the surprising difficulty of natural yes/no questions. In Proceedings of the 2019 conference of the north American chapter of the association for computational linguistics: Human language technologies, volume 1 (long and short papers), pages 2924–2936.

Xin Luna Dong, Evgeniy Gabrilovich, Kevin Murphy, Van Dang, Wilko Horn, Camillo Lugaresi, Shaohua Sun, and Wei Zhang. 2015. Knowledge-based trust: Estimating the trustworthiness of web sources. arXiv preprint arXiv:1502.03519.
Matthijs Douze, Alexandr Guzhva, Chengqi Deng, Jeff Johnson, Gergely Szilvasy, Pierre-Emmanuel Mazaré, Maria Lomeli, Lucas Hosseini, and Hervé Jégou. 2025. The faiss library. IEEE Transactions on Big Data.

Wenqi Fan, Yujuan Ding, Liangbo Ning, Shijie Wang, Hengyun Li, Dawei Yin, Tat-Seng Chua, and Qing Li. 2024. A survey on rag meeting llms: Towards retrieval-augmented large language models. In Proceedings of the 30th ACM SIGKDD conference on knowledge discovery and data mining, pages 6491–6501.

Luyu Gao, Xueguang Ma, Jimmy Lin, and Jamie Callan. 2023. Precise zero-shot dense retrieval without relevance labels. In Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 1762–1777.

Aaron Grattafiori, Abhimanyu Dubey, Abhinav Jauhri, Abhinav Pandey, Abhishek Kadian, Ahmad Al-Dahle, Aiesha Letman, Akhil Mathur, Alan Schelten, Alex Vaughan, and 1 others. 2024. The llama 3 herd of models. arXiv preprint arXiv:2407.21783.

Kelvin Guu, Kenton Lee, Zora Tung, Panupong Pasupat, and Mingwei Chang. 2020. Retrieval augmented language model pre-training. In International conference on machine learning, pages 3929–3938. PMLR.

Yucheng Hu and Yuxing Lu. 2024. Rag and rau: A survey on retrieval-augmented language model in natural language processing. arXiv preprint arXiv:2404.19543.

Gautier Izacard, Patrick Lewis, Maria Lomeli, Lucas Hosseini, Fabio Petroni, Timo Schick, Jane Dwivedi-Yu, Armand Joulin, Sebastian Riedel, and Edouard Grave. 2023. Atlas: Few-shot learning with retrieval augmented language models. Journal of Machine Learning Research, 24(251):1–43.

Huiqiang Jiang, Qianhui Wu, Chin-Yew Lin, Yuqing Yang, and Lili Qiu. 2023a. Llmlingua: Compressing prompts for accelerated inference of large language models. In Proceedings of the 2023 conference on empirical methods in natural language processing, pages 13358–13376.
Zhengbao Jiang, Frank F Xu, Luyu Gao, Zhiqing Sun, Qian Liu, Jane Dwivedi-Yu, Yiming Yang, Jamie Callan, and Graham Neubig. 2023b. Active retrieval augmented generation. In Proceedings of the 2023 conference on empirical methods in natural language processing, pages 7969–7992.

Jiajie Jin, Yutao Zhu, Zhicheng Dou, Guanting Dong, Xinyu Yang, Chenghao Zhang, Tong Zhao, Zhao Yang, and Ji-Rong Wen. 2025. Flashrag: A modular toolkit for efficient retrieval-augmented generation research. In Companion Proceedings of the ACM on Web Conference 2025, pages 737–740.

Tom Kwiatkowski, Jennimaria Palomaki, Olivia Redfield, Michael Collins, Ankur Parikh, Chris Alberti, Danielle Epstein, Illia Polosukhin, Jacob Devlin, Kenton Lee, and 1 others. 2019. Natural questions: a benchmark for question answering research. Transactions of the Association for Computational Linguistics, 7:453–466.

Omer Levy, Minjoon Seo, Eunsol Choi, and Luke Zettlemoyer. 2017. Zero-shot relation extraction via reading comprehension. In Proceedings of the 21st Conference on Computational Natural Language Learning (CoNLL 2017), pages 333–342.

Yuxing Lu, Gecheng Fu, Wei Wu, Xukai Zhao, Goi Sin Yee, and Jinzhuo Wang. 2025. Towards doctor-like reasoning: Medical rag fusing knowledge with patient analogy through textual gradients. In The Thirty-ninth Annual Conference on Neural Information Processing Systems.

Jose L Martinez-Rodriguez, Ivan López-Arévalo, and Ana B Rios-Alvarado. 2018. Openie-based approach for knowledge graph construction from text. Expert Systems with Applications, 113:339–355.

Kevin Meng, David Bau, Alex Andonian, and Yonatan Belinkov. 2022a. Locating and editing factual associations in gpt. Advances in neural information processing systems, 35:17359–17372.

Kevin Meng, Arnab Sen Sharma, Alex Andonian, Yonatan Belinkov, and David Bau. 2022b. Mass-editing memory in a transformer. arXiv preprint arXiv:2210.07229.
Pranav Rajpurkar, Jian Zhang, Konstantin Lopyrev, and Percy Liang. 2016. Squad: 100,000+ questions for machine comprehension of text. In Proceedings of the 2016 conference on empirical methods in natural language processing, pages 2383–2392.

Weijia Shi, Sewon Min, Michihiro Yasunaga, Minjoon Seo, Richard James, Mike Lewis, Luke Zettlemoyer, and Wen-tau Yih. 2024. Replug: Retrieval-augmented black-box language models. In Proceedings of the 2024 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies (Volume 1: Long Papers), pages 8371–8384.

Gemma Team, Thomas Mesnard, Cassidy Hardin, Robert Dadashi, Surya Bhupatiraju, Shreya Pathak, Laurent Sifre, Morgane Rivière, Mihir Sanjay Kale, Juliette Love, and 1 others. 2024. Gemma: Open models based on gemini research and technology. arXiv preprint arXiv:2403.08295.

James Thorne, Andreas Vlachos, Christos Christodoulopoulos, and Arpit Mittal. 2018. Fever: a large-scale dataset for fact extraction and verification. In Proceedings of the 2018 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Volume 1 (Long Papers), pages 809–819.

Liang Wang, Nan Yang, Xiaolong Huang, Binxing Jiao, Linjun Yang, Daxin Jiang, Rangan Majumder, and Furu Wei. 2022. Text embeddings by weakly-supervised contrastive pre-training. arXiv preprint arXiv:2212.03533.

Xi Wang, Procheta Sen, Ruizhe Li, and Emine Yilmaz. 2025. Adaptive retrieval-augmented generation for conversational systems. In Findings of the Association for Computational Linguistics: NAACL 2025, pages 491–503.

Zhiruo Wang, Jun Araki, Zhengbao Jiang, Md Rizwan Parvez, and Graham Neubig. 2023. Learning to filter context for retrieval-augmented generation. arXiv preprint arXiv:2311.08377.

Fangyuan Xu, Weijia Shi, and Eunsol Choi. 2023.
Recomp: Improving retrieval-augmented lms with context compression and selective augmentation. In The Twelfth International Conference on Learning Representations.

Zhilin Yang, Peng Qi, Saizheng Zhang, Yoshua Bengio, William Cohen, Ruslan Salakhutdinov, and Christopher D Manning. 2018. Hotpotqa: A dataset for diverse, explainable multi-hop question answering. In Proceedings of the 2018 conference on empirical methods in natural language processing, pages 2369–2380.

Wenhao Yu, Dan Iter, Shuohang Wang, Yichong Xu, Mingxuan Ju, Soumya Sanyal, Chenguang Zhu, Michael Zeng, and Meng Jiang. 2022. Generate rather than retrieve: Large language models are strong context generators. arXiv preprint arXiv:2209.10063.

A On the Use of "KB Training"

The implementation of WriteBack-RAG is a corpus augmentation and distillation pipeline, not gradient-based optimization over KB parameters. We adopt the term "training" because the process is supervised (driven by labeled examples), task-informed (guided by downstream retrieval performance signals), and persistent (the KB is modified once and benefits all future queries). In this sense the KB undergoes a transformation analogous to how model parameters are shaped by training data, even though the mechanism is distillation rather than gradient descent.

More concretely, the analogy rests on three structural parallels. First, training data acts as supervision: just as labeled examples define a loss signal for model parameters, the labeled set $D_{\text{train}}$ provides the signal that drives the utility gate and document gate. Second, the process is iterative over data: the pipeline loops over training examples, accumulating write-back knowledge one unit at a time, analogous to how parameter updates accumulate over mini-batches.
Third, the result is a persistent artifact: the enriched KB $K'$ is produced once and reused for all future inference, just as trained model weights are. We acknowledge that no gradient computation is involved, and the term "training" is used in this broader, process-level sense rather than in the narrow sense of stochastic optimization.

B WriteBack-RAG Prevents Answer Leakage

Although the distiller never receives the gold answer $a_i$, the utility gate selects examples where retrieval produces a correct answer, and the document gate retains documents that contributed to that answer. The distiller therefore receives an evidence bundle implicitly conditioned on correctness, raising the question of whether the method simply smuggles answers into the corpus. We argue that it does not. The selected documents $D_i^*$ are passages already present in the original KB: the distiller has no access to information beyond what the retriever already surfaces, and its prompt instructs it to produce a general-purpose encyclopedic passage rather than to answer the question (Appendix G). Any answer-relevant content in a write-back document was already retrievable from the original corpus; the distiller merely reorganizes it into a more compact and retrieval-friendly format.

More fundamentally, the improvement must generalize to unseen queries to affect test-time performance, because write-back documents compete with the entire original corpus and are ranked solely by embedding similarity to the test query. A document narrowly tailored to a single training question would not rank highly for semantically different test queries and would simply be ignored by the retriever.
The cross-writeback experiment (RQ4, Figure 4) provides direct evidence of this generalization: write-back corpora produced by one RAG method transfer to another with negligible performance difference, ruling out pipeline-specific artifacts or memorized answer patterns. Together with the consistent gains across all 48 settings in Table 2, these results indicate that the benefit stems from improved knowledge organization rather than indirect answer leakage.

C WriteBack-RAG as a General Method for RAG

A natural question is why WriteBack-RAG can improve RAG methods with very different retrieval and generation strategies without any method-specific modification.

The four RAG backbones we evaluate differ substantially in how they use retrieved documents. Naive RAG concatenates the top-$K$ passages into a single prompt. RePlug ensembles generation probabilities across documents, weighting each passage by its retrieval score. Self-RAG introduces reflection tokens that let the generator decide, per step, whether to retrieve and which passages to trust. FLARE monitors generation confidence token by token and triggers retrieval only when uncertainty exceeds a threshold. Despite these differences, all four methods share a common dependency: the quality of the documents present in the retrieval index. A document that is more focused, less noisy, and better aligned with the knowledge a query requires will be ranked higher by the retriever and will be more useful to the generator, regardless of how the generator consumes it.

WriteBack-RAG operates entirely at this shared layer. It does not modify the retrieval algorithm, the generation prompt, or the decoding strategy. It adds distilled documents to the index, and the existing retriever decides whether to surface them.
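The index-level behavior described here can be illustrated with a minimal sketch. It assumes that write-back documents sit in their own vector store, that both stores are searched with the same query embedding, and that the two candidate lists are merged purely by similarity score. The function names, the inner-product scorer, and the toy vectors are illustrative assumptions, not the paper's implementation.

```python
from typing import List, Sequence, Tuple

Vector = Sequence[float]


def dot(a: Vector, b: Vector) -> float:
    """Inner-product similarity between two embedding vectors."""
    return sum(x * y for x, y in zip(a, b))


def top_k(query: Vector, vectors: Sequence[Vector], k: int) -> List[Tuple[float, int]]:
    """Return the k best (score, index) pairs from one store."""
    scored = [(dot(query, v), i) for i, v in enumerate(vectors)]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:k]


def merged_retrieve(
    query: Vector,
    kb_vectors: Sequence[Vector],
    wb_vectors: Sequence[Vector],
    k: int = 5,
) -> List[Tuple[str, int, float]]:
    """Search the original and write-back stores, then keep the k best overall."""
    candidates = [("kb", i, s) for s, i in top_k(query, kb_vectors, k)]
    candidates += [("wb", i, s) for s, i in top_k(query, wb_vectors, k)]
    candidates.sort(key=lambda c: c[2], reverse=True)
    return candidates[:k]


# Toy example: one write-back vector matches the query better than any original passage.
query = [1.0, 0.0]
kb_vectors = [[0.6, 0.8], [0.0, 1.0]]
wb_vectors = [[1.0, 0.0], [-1.0, 0.0]]
result = merged_retrieve(query, kb_vectors, wb_vectors, k=2)
print(result)
```

In this toy setup the well-matched write-back vector ranks first, while the poorly matched one never enters the merged list, which mirrors the claim that write-back documents surface only when the retriever scores them highly.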
If a write-back document is more relevant than the original passages it was derived from, it will naturally rise in the ranking; if not, it will be ignored (Figure 1). This means the method cannot degrade retrieval quality for queries unrelated to the training set, because irrelevant write-back documents simply remain unretrieved.

The empirical results in Table 2 confirm this reasoning. The gains are positive across all four backbones, and the cross-writeback experiment (Figure 4) shows that write-back corpora are interchangeable across methods. Together, these observations support treating WriteBack-RAG as a corpus-level preprocessing step that is independent of the choice of RAG pipeline: it can be applied once and reused with any downstream method.

| Dataset | Task | Description | Train | Test | Metric |
|---|---|---|---|---|---|
| NQ | Open-domain QA | Real user questions answered using retrieved Wikipedia evidence. | 79,168 | 3,610 | Acc |
| BoolQ | Yes or No QA | Naturally occurring Yes or No questions paired with supporting documents. | 9,427 | 3,270 | Acc |
| FEVER | Fact verification | Claim verification against Wikipedia evidence with SUPPORTS, REFUTES, and NOT ENOUGH INFO labels. | 104,966 | 10,444 | F1 |
| zsRE | Slot filling | Relation extraction framed as answering natural-language relation queries over factual knowledge. | 147,909 | 3,724 | Acc |
| HotpotQA | Multi-hop QA | Question answering that requires aggregating evidence across multiple Wikipedia documents. | 90,447 | 7,405 | Acc |
| SQuAD | Extractive QA | Reading comprehension where the answer is extracted as a text span from the provided passage. | 87,599 | 10,570 | EM |

Table 5: Detailed dataset statistics used in our experiments, following the FlashRAG benchmark release (Jin et al., 2025). All datasets use the FlashRAG-provided Wikipedia corpus (wiki18_100w) as external knowledge.

D Datasets

We evaluate on six benchmarks from the FlashRAG collection (Jin et al.
, 2025) and use the FlashRAG-provided Wikipedia corpus (wiki18_100w) as the external knowledge source for retrieval in all experiments. These datasets span open-domain question answering, fact verification, slot filling, multi-hop reasoning, and extractive question answering. This breadth is important for our study because WriteBack-RAG is designed to improve the knowledge base itself rather than a single task-specific generation strategy. Table 5 reports the task type, a short description, the train and test split sizes, and the evaluation metric used in our experiments. We follow the FlashRAG benchmark release for preprocessing and split construction.

E Hyperparameters

Our implementation uses one shared retriever encoder for both the original corpus and the write-back corpus, and uses the same backbone LLM as both the generator and the distiller. The key design choices are therefore concentrated in retrieval depth, gating thresholds, and distillation settings. Table 6 summarizes the full configuration used in the main experiments.

| Group | Hyperparameter | Value |
|---|---|---|
| Retrieval | Retriever | E5-base-v2 |
| Retrieval | Retrieval top-$K$ | 5 |
| Retrieval | Write-back retrieval top-$K$ | 5 |
| Retrieval | Merged retrieval top-$K$ | 5 |
| Gating | Utility gate threshold $\tau_s$ | 0.10 |
| Gating | Utility gate margin $\tau_\delta$ | 0.01 |
| Gating | Document gate margin $\tau_{\text{doc}}$ | 0.01 |
| Gating | Document gate fallback $n_{\min}$ | 2 |
| Distillation | Distiller model | Same as generator |
| Distillation | Use gold answer in distillation | No |
| Distillation | Extractive pre-selection | Enabled |
| Distillation | Max selected evidence sentences | 8 |
| Distillation | Fallback selected sentences | 6 |
| Distillation | Max new tokens | 128 |
| Distillation | Temperature | 0.0 |
| Indexing | Write-back storage mode | Separate FAISS index |
| Indexing | Incremental indexing | Enabled |

Table 6: Full hyperparameter settings used in the main experiments.

The utility gate uses a minimum absolute retrieval score threshold $\tau_s$ together with a positive improvement margin $\tau_\delta$ so that write-back is triggered only when retrieval is both useful and sufficiently correct.
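A minimal sketch of a utility gate of this kind, using the default values from Table 6. It assumes the gate compares a with-retrieval score `s_rag` against `tau_s` and the improvement over the no-retrieval score `s_nr` against `tau_delta`; the exact comparison operators and the function name are assumptions made for illustration and are not confirmed by the text.

```python
def utility_gate(
    s_rag: float,
    s_nr: float,
    tau_s: float = 0.10,
    tau_delta: float = 0.01,
) -> bool:
    """Decide whether a training example should trigger write-back.

    s_rag: task score of the answer produced with retrieval.
    s_nr:  task score of the answer produced without retrieval.
    Assumption: the example passes when the retrieval score reaches tau_s
    and the improvement over no retrieval strictly exceeds tau_delta.
    """
    delta = s_rag - s_nr
    return s_rag >= tau_s and delta > tau_delta


# Toy checks with made-up scores.
helps = utility_gate(s_rag=1.0, s_nr=0.0)          # retrieval fixes the answer
no_gain = utility_gate(s_rag=1.0, s_nr=1.0)        # correct either way, nothing to write back
too_weak = utility_gate(s_rag=0.05, s_nr=0.0)      # retrieval score below the absolute threshold
print(helps, no_gain, too_weak)
```

Separating the absolute threshold from the improvement margin keeps the two conditions in the sentence above distinct: "sufficiently correct" is checked by `tau_s` and "useful" by `tau_delta`.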
The document gate uses a small positive margin $\tau_{\text{doc}}$ and a fallback mechanism with $n_{\min} = 2$ so that distillation still receives a compact evidence bundle even when no single retrieved document is individually strong enough under the standalone contribution test.

[Figure 5: scatter panels for NQ, BoolQ, FEVER, zsRE, HotpotQA, and SQuAD. x-axis: source evidence tokens; y-axis: distilled knowledge tokens; both axes run from 0 to 300.]

Figure 5: Source evidence length versus distilled write-back knowledge length for six benchmarks.

F Knowledge Distillation Analysis

Figure 5 visualizes the relationship between extracted source evidence length and distilled write-back knowledge length across six datasets. Overall, most points fall below the identity line, showing that the write-back module usually produces a shorter distilled note than the source evidence from which it is derived. This trend is consistent across all datasets, confirming that the module generally performs compression rather than direct copying. The figure specifically reflects compression from retrieved evidence into write-back knowledge, rather than general prompt shortening. The broad spread within each panel also indicates that the rewrite module performs adaptive compression, producing shorter or longer notes depending on the amount and structure of the available evidence.

G Prompt Templates

We use task-specific prompts for retrieval-based inference. For no-retrieval baselines, we derive matched prompts by removing the document block from the corresponding retrieval prompt and removing evidence-dependent wording to preserve prompt parity as closely as possible.

Below we show representative task prompts together with the extractive evidence prompt and the rewrite prompt used in the write-back pipeline.

BoolQ task prompt
Decide whether the answer to the question is true or false using the provided evidence. Output exactly one word: True or False.
Do not output yes or no, labels, or any explanation. The following are given documents. {reference}

HotpotQA task prompt
Answer the multi-hop question using the provided evidence. Output only the final answer. If the question is yes or no, output exactly yes or no in lowercase. Otherwise output only the shortest answer phrase. The following are given documents. {reference}

Representative no-retrieval task prompt
Answer the factoid question from your own knowledge. Output only the short final answer phrase or entity name. Do not output a sentence or explanation.

The next two prompts correspond to the write-back stage. The first extracts answer-relevant evidence sentences from retrieved passages, and the second rewrites the selected evidence into a compact retrieval-oriented document that is later indexed into the auxiliary write-back corpus. Because the distilled document must remain reusable for future queries, the rewrite stage is conditioned on the question and supporting evidence only and does not expose the gold answer.

Extractive evidence prompt
System: Extract only answer-relevant evidence sentences from retrieved passages. Do not paraphrase. Keep exact sentence text.
User: Question: {question} Retrieved passages: {formatted_reference} Select up to {extractive_max_sentences} evidence sentences. Output one sentence per line using this format only: [Doc ] where Doc index starts from 1.

Rewrite prompt
System: You are writing a high-utility retrieval document for future QA. Use only facts supported by the provided knowledge.
User: Question: {question} Supporting knowledge: {evidence_text} Write one merged document in the same style as the original evidence corpus.
Quality requirements:
1) Add concise supporting facts that improve retrieval recall: key entities, aliases, dates, numbers, and locations when supported.
2) Reuse important terms from the question and evidence; include alternative names only if supported.
3) Keep it factual and compact; do not add unsupported claims.
Output format (exactly two parts, no labels): <knowledge paragraph(s)>
Do not output prefixes like 'Title:' or 'Knowledge:'.

H Representative Write-Back Examples

We next present representative training instances that were rewritten and added to the write-back corpus. Each example includes the original question, the model outputs with and without retrieval, the utility signal used for selection, the extracted evidence sentences, and the final distilled document written back to the auxiliary corpus. For clarity, we report the reference answer in the qualitative examples below as part of analysis, but the distillation prompt itself does not receive the gold answer.

To keep the appendix readable in ACL double-column format, each example is displayed in a single breakable outer box, while the evidence and distilled text are shown as compact monospaced blocks inside the same box.

Binary verification example. This example shows a case where retrieval supplies historical and geopolitical context that is not reliably recovered in the no-retrieval setting.

Example 1: BoolQ
Question: do iran and afghanistan speak the same language
Gold answer: ["True"]
Original RAG prediction: True
No-retrieval prediction: False
Utility scores: $s_{\text{rag}} = 1.0$, $s_{\text{nr}} = 0.0$, $\Delta = 1.0$
Retained document indices: [3]

Extractive evidence.
[Doc 1] Afghanistan-Iran relations Afghanistan-Iran relations were established in 1935 during King Zahir Shah's reign and the Pahlavi dynasty of Persia.
[Doc 1] Mujahideen, Afghan refugees, and Taliban), as well as Iran's water dispute, the growing influence of the United States in Afghanistan.
[Doc 1] Afghanistan and Iran share the same underlying language, Persian, but speak different dialects.
[Doc 1] When the Safavid dynasty was founded in Persia, part of what is now Afghanistan was ruled by the Khanate of Bukhara and Babur from Kabulistan.
[Doc 1] They have been negatively affected by the 1979 Iranian Revolution and issues related to the 1978-present Afghan conflicts.

Distilled knowledge.
Afghanistan-Iran relations Afghanistan-Iran relations were established in 1935 during King Zahir Shah's reign and the Pahlavi dynasty of Persia. Afghanistan shares a relatively long history with Iran (called Persia in the West before 1935). When the Safavid dynasty was founded in Persia, part of what is now Afghanistan was ruled by the Khanate of Bukhara and Babur from Kabulistan. They have been negatively affected by the 1979 Iranian Revolution and issues related to the 1978-present Afghan conflicts (i.e. Mujahideen, Afghan refugees, ...

Multi-hop QA example. This case illustrates how the extracted evidence brings together lexical cues and supporting chemical context that help recover the correct answer.

Example 2: HotpotQA
Question: Cadmium Chloride is slightly soluble in this chemical, it is also called what?
Gold answer: ["alcohol"]
Original RAG prediction: alcohol
No-retrieval prediction: water
Utility scores: $s_{\text{rag}} = 1.0$, $s_{\text{nr}} = 0.0$, $\Delta = 1.0$
Retained document indices: [0, 1, 2, 3, 4]

Extractive evidence.
[Doc 2] It is a hygroscopic solid that is highly soluble in water and slightly soluble in alcohol.
[Doc 1] Cadmium tetrafluoroborate may be prepared from the reaction between an aqueous solution of fluoroboric acid and cadmium carbonate or cadmium oxide: It is also possible to prepare Cadmium tetrafluoroborate through an oxidation reduction reaction implementing nitrosyl tetrafluoroborate: Cadmium tetrafluoroborate is an ionic compound formed from the two, ionic species Cd and BF.
[Doc 3] Cadmium hydride Cadmium hydride (systematically named cadmium dihydride) is an inorganic compound with the chemical formula.
[Doc 5] It forms by treating cadmium oxide with acetic acid: Cadmium acetate is used for glazing ceramics and pottery; in electroplating baths, in dyeing and printing textiles; and as an analytic reagent for sulfur, selenium and tellurium.
[Doc 1] At room temperature it forms colorless, odorless crystals which are soluble in polar solvents such as water or ethanol.
[Doc 2] Cadmium chloride Cadmium chloride is a white crystalline compound of cadmium and chlorine, with the formula CdCl.
[Doc 2] The crystal structure of cadmium chloride (described below), composed of two dimensional layers of ions, is a reference for describing other crystal structures.
[Doc 4] Operation LAC (Large Area Coverage) was a United States Army Chemical Corps operation in 1957 and 1958 which dispersed microscopic zinc cadmium sulfide (ZnCdS) particles over much of the United States.

Distilled knowledge.
Cadmium Chloride Cadmium chloride is a white crystalline compound of cadmium and chlorine, with the formula CdCl. It is a hygroscopic solid that is highly soluble in water and slightly soluble in alcohol. The crystal structure of cadmium chloride, composed of two dimensional layers of ions, is a reference for describing other crystal structures. At room temperature it forms colorless, odorless crystals which are soluble in polar solvents such as water or ethanol. Cadmium tetrafluoroborate may be prepared from the reaction between an aqueous solution of fluoroboric acid and cadmium carbonate or cadmium oxide. It is also possible to prepare Cadmium tetrafluoroborate ...

Short answer factoid QA example. This example shows a case where the rewritten document consolidates salient series-level facts into a compact document that is easy to retrieve later.

Example 3: NQ
Question: big little lies season 2 how many episodes
Gold answer: ["seven"]
Original RAG prediction: seven
No-retrieval prediction: NAN
Utility scores: $s_{\text{rag}} = 1.0$, $s_{\text{nr}} = 0.0$, $\Delta = 1.0$
Retained document indices: [0, 2]

Extractive evidence.
[Doc 1] All seven episodes are being written by Kelley
[Doc 2] Kelley, the series' seven episode first season was directed by Jean Marc Vallee.
[Doc 2] Big Little Lies (TV series) Big Little Lies is an American drama television series, based on the novel of the same name by Liane Moriarty, that premiered on February 19, 2017, on HBO.
[Doc 2] "Big Little Lies" stars Nicole Kidman, Reese Witherspoon and Shailene Woodley and tells the story of three emotionally
[Doc 1] Despite originally being billed as a miniseries, HBO renewed the series for a second season.
[Doc 1] Production on the second season began in March 2018 and is set to premiere in 2019.
[Doc 2] The first season was released on Blu ray and DVD on August 1, 2017.

Distilled knowledge.
Big Little Lies (TV series) Big Little Lies is an American drama television series, based on the novel of the same name by Liane Moriarty, that premiered on February 19, 2017, on HBO. "Big Little Lies" stars Nicole Kidman, Reese Witherspoon and Shailene Woodley and tells the story of three emotionally complex women. The first season, consisting of seven episodes, was directed by Jean Marc Vallee and released on Blu ray and DVD on August 1, 2017. Despite originally being billed as a miniseries, HBO renewed the series for a second ...

I Rationale for Using Naive RAG for Analysis

We mainly adopt naive RAG as the reference baseline during analysis because it offers the most controlled setting for identifying the source of improvement. The central question of WriteBack-RAG is not whether a more sophisticated retrieval pipeline can improve results, but whether our write-back mechanism can provide additional gains by distilling retrieved evidence into reusable knowledge.
Using naive RAG as the primary comparison point reduces confounding factors and makes attribution clearer: performance differences can be interpreted more directly as arising from our method, rather than from auxiliary changes in retrieval, reranking, or prompt engineering. In contrast, if the main comparison were conducted only against stronger RAG variants, it would be difficult to disentangle whether the improvement came from the RAG system itself or from the proposed method layered on top of it. For this reason, naive RAG serves as the fairest baseline for measuring the incremental value of our approach.

</div>


<!-- ── Related Papers Section ── -->

<section id="related-papers-section" style="margin-top:60px; border-top:2px solid #eee; padding-top:40px;">

<h3 style="font-size:1.4rem; font-weight:800; color:#1a1a1a; margin-bottom:24px; display:flex; align-items:center; gap:10px;">

<svg width="22" height="22" viewBox="0 0 24 24" fill="none" stroke="#0366d6" stroke-width="2"><path d="M4 19.5A2.5 2.5 0 0 1 6.5 17H20"/><path d="M6.5 2H20v20H6.5A2.5 2.5 0 0 1 4 19.5v-15A2.5 2.5 0 0 1 6.5 2z"/></svg>

Related Papers

</h3>

<div id="related-papers-list" style="display:grid; grid-template-columns:repeat(auto-fill,minmax(260px,1fr)); gap:20px;">

<p style="color:#aaa; font-style:italic; font-size:0.9rem;">Loading...</p>

</div>

</section>

<!-- ── Comment Section ── -->

<section class="comments-section" style="margin-top: 80px; border-top: 3px solid #0366d6; padding-top: 50px;">

<h3 style="font-size: 1.6rem; font-weight: 800; color: #1a1a1a; margin-bottom: 35px; display: flex; align-items: center; gap: 12px;">

<svg width="28" height="28" viewBox="0 0 24 24" fill="none" stroke="#0366d6" stroke-width="2.5"><path d="M21 15a2 2 0 0 1-2 2H7l-4 4V5a2 2 0 0 1 2-2h14a2 2 0 0 1 2 2z"/></svg>

Comments & Academic Discussion

</h3>

<div id="comments-list" style="margin-bottom: 50px;">

<p style="color: #999; font-style: italic;">Loading comments...</p>

</div>

<div class="comment-form-wrap" style="background: #fdfdfd; padding: 35px; border-radius: 16px; border: 1px solid #e1e4e8; box-shadow: 0 4px 12px rgba(0,0,0,0.03);">

<h4 id="reply-title" style="margin-top: 0; margin-bottom: 20px; font-size: 1.2rem; font-weight: 800; color: #333;">Leave a Comment</h4>

<form id="comment-form" onsubmit="submitComment(event)">

<input type="hidden" id="parent-id" value="">

<div style="display: grid; grid-template-columns: 1fr 1fr; gap: 20px; margin-bottom: 20px;">

<input type="text" id="comment-author" placeholder="Your Name" required style="padding: 14px; border: 1px solid #ddd; border-radius: 8px; font-size: 1rem; outline: none; transition: border-color 0.2s;" onfocus="this.style.borderColor='#0366d6'" onblur="this.style.borderColor='#ddd'">

<input type="text" id="comment-website" placeholder="Website" style="display:none !important;" tabindex="-1" autocomplete="off">

</div>

<textarea id="comment-content" rows="5" placeholder="Share your insights or questions about this paper..." required style="width: 100%; padding: 14px; border: 1px solid #ddd; border-radius: 8px; margin-bottom: 20px; resize: vertical; box-sizing: border-box; font-size: 1rem; outline: none; transition: border-color 0.2s;" onfocus="this.style.borderColor='#0366d6'" onblur="this.style.borderColor='#ddd'"></textarea>

<div style="display: flex; justify-content: space-between; align-items: center;">

<button type="button" id="cancel-reply" onclick="resetReply()" style="display: none; background: #fff; border: 1px solid #d73a49; color: #d73a49; padding: 10px 20px; border-radius: 8px; font-weight: bold; cursor: pointer; transition: all 0.2s;">Cancel Reply </button>

<button type="submit" style="background: #0366d6; color: white; border: none; padding: 14px 40px; border-radius: 8px; font-weight: 800; cursor: pointer; font-size: 1rem; transition: background 0.2s; margin-left: auto;" onmouseover="this.style.background='#0056b3'" onmouseout="this.style.background='#0366d6'">Post Comment</button>

</div>

</form>

</div>

</section>

<script>

const arxivId = "2603.25737";

const apiUrl = "/api/comments/" + arxivId;

const pageLang = "en";

// ── Related Papers ────────────────────────────────────────

(function loadRelatedPapers() {

const container = document.getElementById('related-papers-list');

if (!container || !arxivId) return;

fetch('/api/related/' + encodeURIComponent(arxivId) + '?lang=' + pageLang)

.then(r => r.json())

.then(papers => {

if (!papers || papers.length === 0) {

document.getElementById('related-papers-section').style.display = 'none';

return;

}

container.innerHTML = papers.map(p => `

<a href="${p.url}" style="display:block; text-decoration:none; color:inherit; background:#fff; border:1px solid #e8e8e8; border-radius:10px; padding:16px; transition:box-shadow 0.2s;" onmouseover="this.style.boxShadow='0 4px 16px rgba(3,102,214,0.12)'" onmouseout="this.style.boxShadow='none'">

${p.image_url ? `<div style="aspect-ratio:16/9; overflow:hidden; border-radius:6px; margin-bottom:10px; background:#f5f5f5;"><img src="${p.image_url}" style="width:100%;height:100%;object-fit:cover;" loading="lazy" onerror="this.parentElement.style.display='none'"></div>` : ''}

<div style="font-size:0.72rem; color:#888; margin-bottom:5px;">${p.arxiv_id} · ${p.date_str}</div>

<div style="font-size:0.92rem; font-weight:700; color:#1a1a1a; line-height:1.4; display:-webkit-box; -webkit-line-clamp:2; -webkit-box-orient:vertical; overflow:hidden;">${p.title}</div>

</a>

`).join('');

})

.catch(() => {

document.getElementById('related-papers-section').style.display = 'none';

});

})();

function loadComments() {

fetch(apiUrl)

.then(res => res.json())

.then(data => {

const list = document.getElementById('comments-list');

if (!data || data.length === 0) {

list.innerHTML = `<div style="text-align:center; padding:40px; background:#fcfcfc; border-radius:12px; border:1px dashed #ddd; color:#999;">No comments yet. Be the first to share your thoughts!</div>`;

return;

}

// Group replies under parents

const top = data.filter(c => !c.parent_id);

const byParent = {};

data.filter(c => c.parent_id).forEach(c => {

byParent[c.parent_id] = byParent[c.parent_id] || [];

byParent[c.parent_id].push(c);

});

top.forEach(c => { c.replies = byParent[c.id] || []; });

list.innerHTML = top.map(renderComment).join('');

})

.catch(e => console.error('loadComments error:', e));

}

function renderComment(c) {

return `

<div class="comment-item" style="margin-bottom: 30px; border-left: 4px solid #0366d6; padding: 15px 25px; background: #fff; border-radius: 0 12px 12px 0; box-shadow: 0 2px 8px rgba(0,0,0,0.02);">

<div style="display: flex; justify-content: space-between; align-items: center; margin-bottom: 12px;">

<span style="font-weight: 800; color: #1a1a1a; font-size: 1.05rem;">${c.author}</span>

<span style="font-size: 0.85rem; color: #bbb;">${c.created_at ? c.created_at.slice(0,10) : ''}</span>

</div>

<p style="margin: 0; color: #4a4a4a; line-height: 1.7; font-size: 1.05rem; white-space: pre-wrap;">${c.content}</p>

<div style="margin-top: 15px;">

<button onclick="setReply(${c.id}, '${c.author.replace(/'/g, "\\'")}')" style="background:none; border:none; color:#0366d6; font-size:0.9rem; padding:0; cursor:pointer; font-weight:bold; display:flex; align-items:center; gap:5px;">

<svg width="14" height="14" viewBox="0 0 24 24" fill="none" stroke="currentColor" stroke-width="2.5"><path d="M15 10l-5 5 5 5"/><path d="M4 4v7a4 4 0 0 0 4 4h12"/></svg>

Reply

</button>

</div>

${c.replies && c.replies.length > 0 ? `<div style="margin-top: 25px; margin-left: 30px; border-top: 1px solid #f0f0f0; padding-top: 25px;">${c.replies.map(renderComment).join('')}</div>` : ''}

</div>

`;

}

function setReply(id, name) {

document.getElementById('parent-id').value = id;

document.getElementById('reply-title').innerText = `Reply to ${name}`;

document.getElementById('cancel-reply').style.display = 'inline-block';

document.getElementById('comment-content').focus();

document.getElementById('comment-form').scrollIntoView({ behavior: 'smooth', block: 'center' });

}

function resetReply() {

document.getElementById('parent-id').value = "";

document.getElementById('reply-title').innerText = "Leave a Comment";

document.getElementById('cancel-reply').style.display = 'none';

}

function submitComment(e) {

e.preventDefault();

const author = document.getElementById('comment-author').value;

const content = document.getElementById('comment-content').value;

const parent_id = document.getElementById('parent-id').value || null;

const website = document.getElementById('comment-website').value;

if (website) return; // honeypot

fetch(apiUrl, {

method: 'POST',

headers: { 'Content-Type': 'application/json' },

body: JSON.stringify({ author, content, parent_id })

}).then(res => {

if (res.ok) {

document.getElementById('comment-content').value = "";

resetReply();

loadComments();

alert("Comment posted successfully.");

} else {

alert("Error posting comment. Please try again.");

}

});

}

document.addEventListener('DOMContentLoaded', loadComments);

</script>

<script>

document.addEventListener("DOMContentLoaded", function() {

const arxivId = "2603.25737";

const container = document.getElementById('paper-content-container');

const loadingStatus = document.getElementById('loading-status');

let currentPage = 1;

let totalPages = 0;

const yearStr = "2026";

const monthStr = "03";

const paths = [

`/koineu_html/${yearStr}/${monthStr}/${arxivId}/index.html`

];

function updateNav() {

const navHtml = `

<div style="display: flex; align-items: center; gap: 15px; background: #fff; padding: 8px 25px; border-radius: 30px; border: 1px solid #ddd; box-shadow: 0 2px 8px rgba(0,0,0,0.05);">

<button onclick="movePage(-1)" ${currentPage === 1 ? 'disabled' : ''} style="border:0; background:none; cursor:pointer; font-weight:bold; color:${currentPage === 1 ? '#ccc' : '#0366d6'}">◀ Prev</button>

<span style="font-family: monospace; font-weight:bold; color:#333;">PAGE ${currentPage} / ${totalPages}</span>

<button onclick="movePage(1)" ${currentPage === totalPages ? 'disabled' : ''} style="border:0; background:none; cursor:pointer; font-weight:bold; color:${currentPage === totalPages ? '#ccc' : '#0366d6'}">Next ▶</button>

</div>

`;

document.getElementById('nav-top').innerHTML = navHtml;

document.getElementById('nav-bottom').innerHTML = navHtml;

}

window.movePage = function(delta) {

const next = currentPage + delta;

if (next >= 1 && next <= totalPages) {

document.getElementById('pf' + currentPage.toString(16)).style.display = 'none';

document.getElementById('pf' + next.toString(16)).style.display = 'block';

currentPage = next;

updateNav();

window.scrollTo({ top: document.getElementById('paper-viewer-anchor').offsetTop - 20, behavior: 'smooth' });

}

};

function tryLoad(idx) {

if (idx >= paths.length) {

loadingStatus.innerHTML = "<p>Original content is being processed. Available soon.</p>";

return;

}

const url = paths[idx];

const baseUrl = url.replace('index.html', '');

fetch(url).then(r => { if(!r.ok) throw new Error(); return r.text(); }).then(html => {

const parser = new DOMParser();

const doc = parser.parseFromString(html, 'text/html');

const pageContainer = doc.getElementById('page-container') || doc.body;

const styles = doc.querySelectorAll('style');

styles.forEach(s => {

const newStyle = document.createElement('style');

newStyle.textContent = s.textContent.replace(/url\((?!http|data|["']?\/)/g, `url(${baseUrl}`);

document.head.appendChild(newStyle);

});

if (pageContainer) {

const pages = pageContainer.querySelectorAll('.pf');

totalPages = pages.length || 1;

pageContainer.style.cssText = "position:relative !important; top:0 !important; left:0 !important; width:100% !important; display:block !important;";

pages.forEach((p, i) => {

p.style.display = (i === 0) ? 'block' : 'none';

p.style.cssText += "position:relative !important; margin:0 auto !important; max-width:100% !important; background:white !important; box-shadow:0 0 15px rgba(0,0,0,0.1) !important;";

p.querySelectorAll('img').forEach(img => {

const src = img.getAttribute('src');

if (src && !src.startsWith('http') && !src.startsWith('/')) img.src = baseUrl + src;

img.onerror = function() { this.style.display = 'none'; };

});

});

container.innerHTML = "";

container.appendChild(pageContainer);

updateNav();

}

}).catch(() => tryLoad(idx + 1));

}

tryLoad(0);

});

</script>

<script>

document.addEventListener("DOMContentLoaded", function() {

const arxivId = "2603.25737";

const lang = "en";

// Record View & Update Count

fetch(`/api/view/${arxivId}?lang=${lang}`, { method: 'POST' })

.then(res => res.json())

.then(data => {

if (data.status === 'success' && data.view_count !== undefined) {

const viewEl = document.getElementById('post-view-number');

if (viewEl) viewEl.innerText = Number(data.view_count).toLocaleString();

}

})

.catch(e => console.error(e));

// Load Sidebar Data

fetch(`/api/sidebar-data?lang=${lang}`)

.then(res => res.json())

.then(data => {

// Popular Posts

const popContainer = document.getElementById('dynamic-sidebar-popular');

if (data.popular_posts && data.popular_posts.length > 0) {

let popHtml = `<h4 class="sidebar-section-title">${lang === 'kr' ? '인기 게시물' : 'Popular Posts'}</h4>`;

data.popular_posts.forEach(p => {

popHtml += `

<a href="${p.url}" class="sidebar-post">

<div class="sidebar-post__img">

<img src="${p.image_url}" onerror="this.src='/images/placeholder.jpg'" alt="${p.title}" loading="lazy" />

</div>

<span class="sidebar-post__title">${p.title}</span>

</a>

`;

});

popContainer.innerHTML = popHtml;

} else {

popContainer.style.display = 'none';

}

// Recent Comments

const commentContainer = document.getElementById('dynamic-sidebar-comments');

if (data.recent_comments && data.recent_comments.length > 0) {

let cHtml = `<h4 class="sidebar-section-title">${lang === 'kr' ? '최근 댓글' : 'Recent Comments'}</h4><div style="display:flex; flex-direction:column; gap:15px;">`;

data.recent_comments.forEach(c => {

cHtml += `

<a href="${c.url}#comments-list" style="text-decoration:none; background:#f8f9fa; padding:12px; border-radius:8px; display:block; border:1px solid #eee; transition:background 0.2s;" onmouseover="this.style.background='#f0f7ff'" onmouseout="this.style.background='#f8f9fa'">

<div style="font-size:0.85rem; color:#666; margin-bottom:5px;"><strong>${c.author}</strong> on <span style="color:#0366d6;">${c.post_title}</span></div>