PixelSmile: Toward Fine-Grained Facial Expression Editing

Fine-grained facial expression editing has long been limited by intrinsic semantic overlap. To address this, we construct the Flex Facial Expression (FFE) dataset with continuous affective annotations and establish FFE-Bench to evaluate structural co…

Authors: Jiabin Hua, Hengyuan Xu, Aojie Li

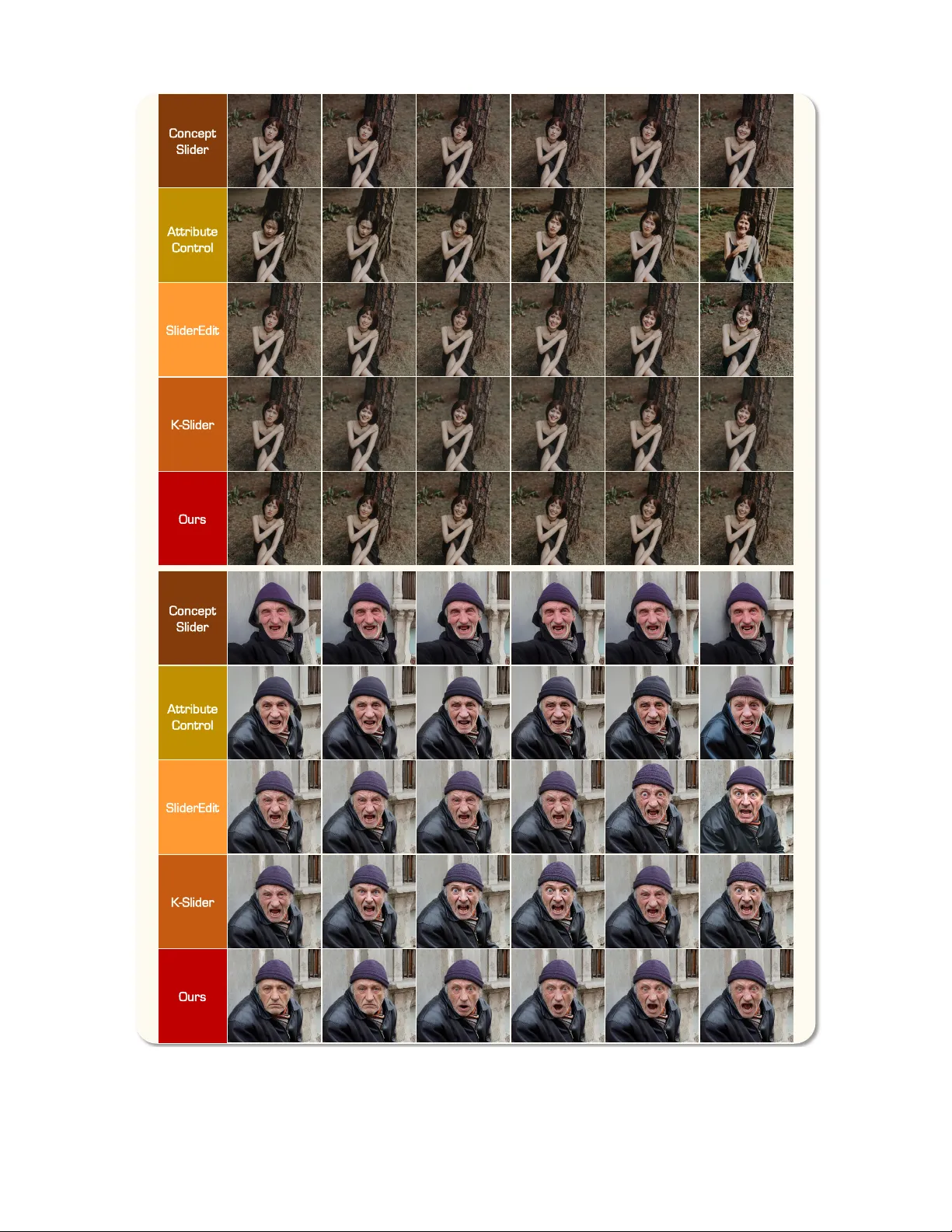

PixelSmile: T oward Fine-Grained F acial Expr ession Editing Jiabin Hua 1 , 2 , ∗ Hengyuan Xu 1 , 2 , ∗ Aojie Li 2 , † W ei Cheng 2 Gang Y u 2 , ‡ Xingjun Ma 1 , ‡ Y u-Gang Jiang 1 1 Fudan Uni versity 2 StepFun Project P age Code Model Benchmark Demo R e a l H u m a n A n i me H a ppy H appy S ad D i s g us t A n g r y F e ar S u r p r i s e d A n x i o u s Sleep y C o n t e m p t S hy C o n f u s e d c o n f i d e n t Ⅰ . F in e - G r a i n ed E x p r es s i o n E d i t i n g Ⅱ . E x t en d ed E x p r es s i o n Ⅲ . E x p r es s i o n B l e n d i n g Figure 1. Overview of PixelSmile . It enables 1) continuous and precise control of facial expression intensity across real-world and anime domains, 2) editing across 12 distinct expression cate gories, and 3) seamless blending of multiple expressions. Abstract F ine-grained facial expr ession editing has long been limited by intrinsic semantic o verlap. T o addr ess this, we construct the Flex F acial Expression (FFE) dataset with continuous affective annotations and establish FFE-Bench to evalu- ate structural confusion, editing accuracy , linear contr ol- lability , and the trade-of f between expr ession editing and identity preservation. W e pr opose PixelSmile , a diffusion ∗ Equal contribution. † Project lead. ‡ Corresponding authors. frame work that disentangles expr ession semantics via fully symmetric joint training. PixelSmile combines intensity su- pervision with contrastive learning to pr oduce str onger and mor e distinguishable e xpressions, achie ving pr ecise and stable linear e xpression contr ol thr ough te xtual latent in- terpolation. Extensive experiments demonstrate that Pix- elSmile ac hieves superior disentanglement and r obust iden- tity preservation, confirming its effectiveness for continu- ous, contr ollable, and fine-grained expr ession editing, while naturally supporting smooth e xpression blending . 1. Introduction Recent adv ances in diffusion-based image editing mod- els [ 46 , 73 ] and identity-consistent generation tech- niques [ 27 , 34 , 74 ] hav e significantly impro ved the ability to manipulate personal portraits using natural language. De- spite this progress, fine-grained facial expression editing re- mains a challenging problem. Current models can generate clearly distinct expressions, such as happy versus sad, but struggle to delineate highly correlated, semantically over - lapping e xpression pairs, such as fear versus surprise or anger versus disgust. Most existing methods rely on dis- crete expression categories, forcing inherently continuous human expressions into rigid class boundaries. As a result, these formulations fail to capture subtle expression bound- aries, leading to structured cross-category confusion, lim- ited control over expression intensity , and degraded identity consistency during editing. T o better understand this limitation, we analyze the se- mantic structure of facial expressions. As illustrated in Fig. 2 , facial expressions lie on a continuous semantic man- ifold where semantically adjacent emotions naturally over - lap. This overlap manifests as systematic confusion across multiple stakeholders: human annotators, classifiers, and generativ e models often fail to uniquely distinguish se- mantically adjacent expressions like fear versus surprise or anger versus disgust. When generati ve models are trained using discrete and potentially conflicting labels from such ambiguous samples, they are forced to learn entangled rep- resentations in the latent space. Consequently , this struc- tural entanglement prevents precise control, resulting in un- intended e xpression leakage, where editing one emotion in- advertently triggers the characteristics of another or even degrades identity consistenc y . Addressing this challenge requires a new supervision paradigm for facial expression editing models. Con ven- tional datasets often represent facial expressions using rigid one-hot labels, which fail to capture the nuanced structure of human af fect and propagate semantic entanglement into the generativ e pipeline. T o address this limitation, we in- troduce a new supervision paradigm based on continuous affecti ve annotations. Specifically , we construct the Flex Facial Expression (FFE) dataset, which replaces discrete labels with continuous 12-dimensional affecti ve score dis- tributions. Based on this dataset, we further establish FFE- Bench to ev aluate structural confusion, editing accuracy , linear controllability , and the trade-off between expression editing and identity preservation. By providing div erse ex- pressions within the same identity and continuous affec- tiv e ground truth across both real and anime domains, FFE breaks the one-hot supervision bottleneck, allo wing models to learn the fine-grained boundaries of the e xpression mani- fold rather than disjoint categories, and enabling systematic ev aluation of controllable expression editing. Building upon this data-centric foundation, we propose PixelSmile , a diffusion-based editing framework that dis- entangles expression semantics. Our framework introduces a fully symmetric joint training paradigm to contrast con- fusing expression pairs identified in our analysis. Com- bined with a flow-matching-based textual latent interpola- tion mechanism, PixelSmile enables precise and linearly controllable expression intensity at inference time without requiring reference images. Through the synergy between continuous affecti ve supervision and symmetric learning, PixelSmile achie ves robust and controllable editing while preserving identity fidelity . In summary , our contrib utions are threefold: • Systematic Analysis of Semantic Overlap. W e rev eal and formalize the structured semantic ov erlap between fa- cial expressions, demonstrating that structured semantic ov erlap, rather than purely classification error , is a pri- mary cause of failures in both recognition and generative editing tasks. • Dataset and Benchmark. W e construct the FFE dataset—a large-scale, cross-domain collection featuring 12 expression categories with continuous af fective anno- tations—and establish FFE-Bench, a multi-dimensional ev aluation environment specifically designed to e valuate structural confusion, expression editing accuracy , linear controllability , and the trade-of f between expression edit- ing and identity preservation. • PixelSmile Framework. W e propose a novel diffusion- based framework utilizing fully symmetric joint training and textual latent interpolation. This design ef fectively disentangles overlapping emotions and enables disentan- gled and linearly controllable expression editing. 2. Related W ork Facial Expression Editing. Facial expression editing aims to modify facial expressions while preserving iden- tity . Early approaches relied on conditional GANs [ 24 ], formulating the task as multi-domain image-to-image trans- lation [ 10 , 11 , 15 , 45 , 59 ]. Subsequent works explored disentangled latent manipulation within StyleGAN-based architectures [ 28 , 36 , 37 , 64 , 65 , 80 ] to identify seman- tic directions for continuous expression control. Another line of research incorporates explicit facial priors, such as Action Units or 3DMM parameters, to enable structured, interpretable manipulation. For instance, MagicFace [ 71 ] lev erages such priors to guide dif fusion models, while other works [ 13 , 16 , 22 , 33 , 59 ] explore similar structural constraints. Despite facilitating discrete expression trans- fers, these methods often struggle with fine-grained control, identity consistency , and generalization. More recently , diffusion models [ 30 ] have significantly adv anced image generation and editing quality [ 4 , 29 , 49 , 82 ]. Further- more, lar ge-scale multimodal pretraining has fueled signifi- S ur pr i s e d F e ar A n g ry D i s g u s t S h a r e d : W i d e E y es , Op en M o u t h S h a r e d : F r o w n , N e g a t i ve Af f e c t F e a r : 0. 95 Su r p r i s e d ! H u man M o d el R e c o g n i t i o n C o n f us i o n E x p r es s i o n S em a n t i c O v er l a p E d i t i n g C o n f us i o n A n gr y D i sg u st A n g ry D i s g u s t F e ar S ur pr i s e d F F E D a t a s e t { “ i d ” : ‘ 0 0 1 1 3 6 ’ , “ c a t e g o r y ” : ‘ a n g r y ’ , “ s c o r e s ” : { “ h a p p y ” : 0 . 8 5 , “ a n g r y ” : 0 . 0 , “ s a d ” : 0 . 1 , “ s u r p r i s e d ” : 0 . 0 2 , “ f e a r ” : 0 . 0 , “ d i s g u s t ” : 0 . 0 , “ a n x i o u s ” : 0 . 0 1 , “ c o n f i d e n t ” : 0 . 5 5 , “ c o n t e m p t ” : 0 . 0 1 , “ c o n f u s e d ” : 0 . 0 , “ s h y ” : 0 . 0 2 , “ s l e e p y ” : 0 . 0 , } , “ b o x ” : [ 5 3 . 2 1 , … ] } D a t a S t r uc t ur e A n g r y R e al H u m an S a m pl e s A n i me S a m pl e s Ke y Ob s e r v a t i o n Ou r C o n tr i b u ti o n P i xe lS m ile Figure 2. Observation of Expr ession Semantic Overlap. Inherent expression ov erlap causes systematic confusion across human annota- tors, recognition models, and generati ve models (top). W e resolve this via the FFE dataset (bottom left) and Pix elSmile frame work (bottom right), utilizing continuous supervision and symmetric training for disentangled editing. cant advancements in general-purpose editing. Large-scale foundation models, such as GPT -Image [ 54 ], Nano Banana Pro [ 25 ], Qwen-Image [ 73 ], and LongCat-Image [ 68 ], now demonstrate remarkable zero-shot fle xibility and editing ca- pabilities [ 5 , 39 , 46 ]. Continuously Controlled Generation. Prior works achiev e continuous editing by leveraging interpolatable subspaces within generati ve models. ConceptSlider [ 20 ] interpolates LoRA weights, while subsequent methods [ 3 , 7 , 12 , 21 , 23 , 26 , 29 , 32 , 35 , 63 , 67 , 77 , 85 ] manipulate text embeddings or modulation features to achie ve grad- ual semantic variation. More recently , SliderEdit [ 81 ], K ontinuous-Konte xt [ 57 ], and concurrent works [ 72 , 75 , 79 ] extend continuous control to editing models built upon FLUX.1 Konte xt [ 40 ]. Despite smoother transitions via re- duced strength or pix el interpolation, these methods remain constrained by entangled latent spaces, leading to semantic ambiguity and identity drift at large magnitudes. By dis- entangling latent expression semantics, our structured for- mulation achieves fine-grained linear control and identity preservation across di verse manipulation strengths. Facial Expression Datasets and Benchmarks. High- quality datasets and reliable benchmarks are essential for facial expression analysis. Early controlled datasets [ 41 , 47 , 48 , 78 ] provide same-identity multi-expression samples for precise comparison but lack diversity , while large-scale in- the-wild datasets [ 2 , 42 , 50 , 76 , 83 ] enhance generalization but lack paired expressions for the same identity , hinder - ing identity-expression disentanglement in generative edit- ing. Recent ef forts extend to video and multimodal settings. While video-based datasets [ 51 , 60 , 84 ] focus on temporal or cross-modal dynamics, the MEAD dataset [ 69 ] provides expressions with three distinct intensity levels, moving be- yond purely categorical labels but still falling short of fine- grained, continuous control and structured disentanglement in static editing contexts. Alongside these, benchmarks such as F-Bench [ 44 ] and SEED [ 87 ] ev aluate facial gen- eration using visual metrics and human preference. Ho w- ev er , standard metrics (e.g., CLIP , SSIM, LPIPS) capture ov erall quality b ut offer limited insight into disentanglement and continuous control. T o address these gaps, we propose FFE and FFE-Bench. By providing same-identity pairs with continuous affecti ve annotations, our approach enables rig- orous e valuation of fine-grained, linearly controllable, and disentangled expression editing. 3. Dataset and Benchmark T o facilitate fine-grained and linearly controllable f acial ex- pression editing, we construct the FFE dataset and estab- lish FFE-Bench, a dedicated ev aluation benchmark. Ex- isting datasets often lack same-identity expression div er- sity or pro vide only discrete expression labels, which limits the ev aluation of controllable e xpression manipulation. Our dataset addresses these limitations by providing large-scale same-identity expression variations with continuous affec- tiv e annotations, enabling systematic analysis of e xpression disentanglement and editing controllability . F l o w Ma t c h i n g L o ss 1 2 f ear , f ear , s u rp + s u rp , s u rp , f ear G T ( S u r p r i s e ) G T ( F e a r ) G e n ( F e a r ) G T ( F e a r ) G T ( S u r p r i s e ) G e n ( S u r p r i se ) S o ur c e I m a ge G e n er a t ed S u r p r i se G e n er a t ed F e ar 1 . H e ’ s S u r p r is e d ( 0 . 8 ) 2 . H e is in F e a r ( 0 . 6 ) GT F e ar (0 . 6 ) GT S u rp ri s e ( 0. 8 ) S o urce 1 − c o s , An g r i e r N e ut r a l I n f er en c e S t age N e u t r al P r o mp t Te x t E n co d e r T a r ge t P r o mp t Te x t E n co d e r P i x e l S m i l e I n t e r po l at e d E m b e ddi n g = + ⋅ ( t ar g et − ) C o n t r a s ti v e L o s s I D L o ss T e x t E nc od e r Di T B l o c ks Lo R A P i x el S m i l e V AE T r a i n i n g S t a g e A r cF a ce Figure 3. Framework Overview . (1) Inference Stage . W e interpolate between the neutral and target expression embeddings in textual latent space using a controllable coefficient α , enabling continuous adjustment of expression intensity . (2) T raining Stage . W e adopt a joint fully symmetric training frame work. Specifically , we sample a source image P src and a confusing expression pair ( P a , P b ) to construct a triplet. W e first treat P a as the positiv e and P b as the negati ve to compute a joint loss, and then swap their roles to compute it again, yielding a symmetric training objective. The joint loss consists of three components: a Flow-Matching loss for intensity alignment, a contrastiv e loss for expression separation, and an identity preservation loss to maintain subject consistenc y . 3.1. The FFE Dataset FFE is constructed through a four-stage collect–compose– generate–annotate pipeline designed to ensure expression div ersity , cross-domain cov erage, and reliable annotations. The final dataset contains 60,000 images across real and anime domains, supporting both photorealistic and stylized facial expression editing. Base Identity Collection. W e first curate a set of high- quality base identities from two domains: (1) Real do- main : approximately 6,000 real-world portraits are col- lected from public portrait datasets [ 1 , 66 ], covering di- verse demographics and scene compositions, including both close-up and full-body images; (2) Anime domain : to enable cross-domain e valuation, we collect stylized portraits from 207 anime productions covering 629 characters, from which around 6,000 high-quality images are retained after quality filtering and automated face detection. For both domains, automated face detection follo wed by manual verification is applied to ensure identity clarity and image quality . These images form the identity backbone of FFE dataset. Expression Prompt Composition. T o obtain fine-grained expression variations, we construct a structured prompt li- brary for 12 target expressions. The taxonomy consists of six basic emotions [ 19 ] and six extended emotions (Con- fused, Contempt, Confident, Shy , Sleepy , Anxious). Rather than relying solely on abstract expression labels, each ex- pression is decomposed into facial attribute components (e.g., mouth shape, e yebrow mo vement, and e ye openness). Candidate attribute combinations are automatically gener- ated and filtered with a vision-language model to remove anatomically inconsistent or semantically conflicting de- scriptions, resulting in a validated library of fine-grained expression prompts. Controlled Expr ession Generation. For each base iden- tity , multiple target expressions with v arying intensities are synthesized using a state-of-the-art image editing model, Nano Banana Pro . W e adopt a dual-part prompt design that specifies both the global expression category and localized facial attributes, improving controllability and reducing am- biguity between semantically similar expressions. This pro- cess produces approximately 60,000 images in total (30,000 per domain), providing rich identity-preserving expression variations across di verse conditions. Continuous Annotation and Quality Filtering. Departing from con ventional one-hot expression labels, each image is annotated with a 12-dimensional continuous score vector v ∈ [0 , 1] 12 . The scores are predicted by a vision-language model, Gemini 3 Pro , which estimates the intensity of each expression category . A subset of samples is verified by hu- man annotators to ensure reliability . This representation captures semantic overlap between facial expressions (e.g., fear and surprise), providing a faithful approximation of the affecti ve manifold. W e further perform consistency checks and manual spot verification to remov e ambiguous or low- confidence samples. The resulting dataset provides same- identity expression v ariations with continuous soft labels, enabling fine-grained e valuation of expression disentangle- ment and controllable facial expression editing. 3.2. The FFE-Bench Benchmark Motiv ated by the intrinsic semantic entanglement among fa- cial expressions, which leads to structured cross-category confusion, we design a unified benchmark to ev aluate fa- cial expression editing from four complementary aspects: structural confusion, the trade-off between expression edit- ing and identity preserv ation, control linearity , and expres- sion editing accuracy . All expression classifications and in- tensity scores are predicted by Gemini 3 Pro. Mean Structural Confusion Rate (mSCR). T o quantify structured confusion between semantically similar expres- sions, we define the directed confusion rate C i → j and the bidirectional confusion rate (BCR) as follows: C i → j = 1 N i N i X k =1 1 ( ˆ y ( i ) k = j ) , (1) BCR( i, j ) = 1 2 ( C i → j + C j → i ) , (2) where N i denotes the number of samples edited to ward class i , and ˆ y ( i ) k is the predicted dominant expression. The mSCR is computed by averaging BCR( i, j ) over predefined confusing pairs (e.g., Fear–Surprise and Angry–Disgust). A lower mSCR indicates reduced cross-category confusion and improv ed semantic disentanglement. Harmonic Editing Score (HES). Facial expression edit- ing requires both accurate expression transfer and identity preservation. W e define the Harmonic Editing Score as HES = 2 × S E × S ID S E + S ID , (3) where S E denotes the VLM-based target expression score, and S ID is the cosine similarity between source and edited faces. Identity similarity is computed as the average cosine similarity from three face recognition models (including Ar- cFace [ 14 ], AdaFace [ 38 ], FaceNet [ 62 ]) for rob ustness. High HES is achiev ed only when both expression strength and identity fidelity are preserved. Control Linearity Score (CLS). T o ev aluate continuous controllability , we feed uniformly spaced intensity coeffi- cients α ∈ [0 , α max ] during inference and compute the Pearson correlation between α and the VLM-predicted in- tensity scores. Higher CLS indicates more linear and pre- dictable expression control. Expression Editing Accuracy (Acc). W e report the pro- portion of generated images whose predicted dominant ex- pression matches the target instruction. This metric mea- sures ov erall categorical editing success. 4. Method W e present PixelSmile, a framew ork for fine-grained facial expression editing. As illustrated in Fig. 3 , our method 0.1 0.2 0.3 0.4 0.5 0.6 0.7 Expression Score 0.4 0.5 0.6 0.7 0.8 0.9 ID Sim Narrower ID Impairment Wider Expression Range Ours (Full) KSlider SliderEdit Ours(w/o CL) 0.0 3.0 0.0 3.0 0.0 3.0 0.0 3.0 Figure 4. Quantitative Evaluation of Linear Control Methods . Comparison of the trade-off between ID similarity and e xpression score across dif ferent models. PixelSmile achie ves an optimal bal- ance, providing a wider expression manipulation range while pre- serving identity fidelity . builds upon a pretrained Multi-Modal Diffusion T rans- former (MMDiT) [ 58 ] with LoRA adaptation [ 31 ]. T o ad- dress intrinsic semantic entanglement and enable continu- ous intensity control, we introduce two key components: (1) a Flow-Matching-based textual interpolation mecha- nism [ 43 ] for smooth expression strength control; and (2) a Fully Symmetric Joint Training frame work with a symmet- ric contrasti ve objectiv e to reduce cross-category confusion while preserving identity and background consistency . 4.1. T extual Latent Interpolation for Continuous Editing Existing expression editing approaches typically rely on discrete labels or coarse reference signals [ 73 ], which lim- its fine-grained control over expression intensity . Instead, we perform linear interpolation in the textual latent space to enable continuous and smooth expression manipulation. T extual Latent Interpolation. Gi ven a neutral prompt P neu and a target expression prompt P tgt , the frozen MMDiT text encoder maps them to embeddings e neu and e tgt , respectiv ely . W e define the residual direction ∆ e = e tgt − e neu , (4) which captures the semantic shift from neutral to the target expression. A continuous conditioning embedding is then con- structed as e cond ( α ) = e neu + α · ∆ e, α ∈ [0 , 1] . (5) When α = 0 , the conditioning corresponds to neutral e x- pression; when α = 1 , it reco vers the full target expression. Intermediate v alues of α yield smoothly varying e xpression intensities. Importantly , the same direction also supports T able 1. Quantitative Evaluation of General Editing Models . Best, second best, and third best results are indicated by , , and respectiv ely . Method mSCR ↓ Acc-6 ↑ Acc-12 ↑ ID Sim ↑ Closed Seedream [ 5 ] 0.3725 0.5294 0.3737 0.7221 Nano Banana Pro [ 25 ] 0.1754 0.8431 0.6200 0.7107 GPT -Image [ 54 ] 0.1107 0.8039 0.6300 0.5056 Open FLUX-Klein [ 39 ] 0.2850 0.4510 0.3310 0.4146 LongCat [ 68 ] 0.1754 0.6275 0.4100 0.6036 Qwen-Edit [ 73 ] 0.2625 0.4510 0.2900 0.6938 Ours(w/o training) 0.2400 0.5294 0.3500 0.6769 Ours 0.0550 0.8627 0.6000 0.6522 T able 2. Quantitative Evaluation of Linear Control Models . Best, second best, and third best results are indicated by , , and respectiv ely . Method CLS-6 ↑ CLS-12 ↑ ID Sim ↑ HES ↑ SAEdit [ 35 ] -0.0183 0.0007 - - ConceptSlider [ 20 ] 0.3161 ∗ - 0.6250 0.3656 AttributeControl [ 3 ] 0.2856 ∗ - 0.3609 0.2712 K-Slider [ 57 ] -0.0459 -0.0634 0.7974 0.3272 SliderEdit [ 81 ] 0.5599 0.5217 0.7414 0.3441 Ours(w/o training) 0.6892 0.5217 0.6769 0.4086 Ours 0.8078 0.7305 0.6522 0.4723 ∗ Evaluated on CLS-2 ( happy , surprised ). extrapolation: at inference time, α > 1 enables stronger e x- pression transfer while maintaining structural consistency . Score-Super vised Flow Matching. T o enforce consistency between textual interpolation and visual intensity , we intro- duce score supervision during Flow Matching (FM) train- ing. Each training image is associated with a ground-truth intensity coefficient α gt ∈ [0 , 1] , deriv ed from the continu- ous expression annotations. During LoRA fine-tuning, we set α = α gt and use e cond ( α ) as the conditioning input to the dual-stream attention blocks. The score-supervised ve- locity loss is defined as L edit FM = E t,x 0 ,x 1 h v θ ( x t , t, e cond ( α )) − ( x 1 − x 0 ) 2 2 i , (6) where x 0 denotes the source image latent and x 1 denotes the edited tar get latent. This objecti ve e xplicitly couples the in- terpolation coefficient with the corresponding visual trans- formation. At inference, continuous control is achiev ed by varying α , without requiring reference images. 4.2. Fully Symmetric Joint T raining for Disentan- glement As stated in Sec. 1 and illustrated in Fig. 2 , facial e xpres- sions lie on a continuous and highly ov erlapping seman- tic manifold. For example, Surprise and F ear share simi- lar arousal and facial cues, leading to structural confusion near class boundaries when trained with discrete supervi- sion only . Inspired by contrastive learning and the idea of symmetric learning [ 70 ], we introduce a Fully Symmetric Joint Training framew ork with a symmetric contrastiv e ob- jectiv e in the feature space. Symmetric Construction. Gi ven a pair of semantically ov erlapping expressions, ( E a , E b ) , defined based on the confusion patterns observ ed in the FFE dataset, and an in- put image, the model performs two parallel generations, G a and G b , conditioned on prompts corresponding to E a and E b , respecti vely . For G a , the ground-truth image with ex- pression E a , denoted as P a , serves as the positive, while the image with e xpression E b , denoted as P b , is treated as a hard negativ e; the roles are reversed for G b . This symmetric design a voids directional bias and enforces consistent sepa- ration between confusing expressions. Symmetric Contrastive Loss. All images are encoded us- ing a frozen CLIP image encoder to capture expression se- mantics. The symmetric loss is defined as L SC = 1 2 [ T ( G a , P a , P b ) + T ( G b , P b , P a )] , (7) where T pulls the generated sample to ward its target while pushing it away from the confusing e xpression. W e in vestigate three realizations of T , including hinge- based [ 62 ], log-ratio [ 52 ], and InfoNCE-style [ 53 ] formu- lations. In practice, we primarily adopt the InfoNCE-style objectiv e due to its stable optimization. Detailed formula- tions and ablations are provided in the Appendix A . 4.3. Identity Preser vation Strong intensity extrapolation ( α > 1 ) or contrastive forces may degrade identity consistency . T o stabilize biometric features, we introduce an identity preserv ation loss based on a pretrained face recognition model. Specifically , we adopt ArcFace [ 14 ] as a frozen identity encoder Φ arc ( · ) . For generated images G a , G b and their corresponding ground truths P a , P b , the identity loss is defined as L ID = 1 2 X i ∈{ a,b } [1 − cos(Φ arc ( G i ) , Φ arc ( P i ))] , (8) This term enforces identity consistency while allowing expression v ariation. 4.4. Overall T raining Objective W e fine-tune the LoRA parameters of the frozen MMDiT under a symmetric dual-branch training scheme, where a pair of confusing expressions ( a, b ) is optimized jointly for the same subject. The overall objecti ve is defined as L total = 1 2 L a FM + L b FM + λ sc L SC + λ id L ID , (9) where λ sc and λ id control the trade-off between disentan- glement and identity preservation. This symmetric formu- lation jointly enforces continuous intensity control, expres- sion separation, and identity consistency . A n g r y Di sg u st A n g r y Di sg u st F e ar S ur pr i s e d F e ar S ur pr i s e d N an o B an an a P ro G PT - I m age 1. 5 S e e dr e am - 4. 5 F LU X . 2 K le i n L o n gC at - I m ag e - E d it Qw e n - Im a g e - Ed i t - 2 51 1 O urs Figure 5. Qualitative Comparison with General Editing Models. PixelSmile produces clearer e xpression changes while preserving facial identity , whereas e xisting editing models either weaken expression editing or degrade identity consistenc y . 5. Experiment 5.1. Experimental Setup W e implement PixelSmile based on Qwen-Image-Edit- 2511. T o handle the distinct stylistic distributions of real- world and anime domains, we train tw o independent LoRA adapters for each. Following prior work [ 6 , 88 ], for con- trastiv e supervision, we adopt CLIP-V iT -L/14 [ 61 ] for the real domain and DanbooruCLIP [ 55 ] for anime. Identity preservation is enforced using a pretrained ArcFace (an- telopev2) model for the real domain. Additional implemen- tation details are provided in Appendix B . Baselines. T o ensure a comprehensi ve and fair ev aluation, we divide baselines into two groups according to their pri- mary strengths in facial expression editing: general editing models, which are strong in ov erall e xpression editing qual- ity , and linear control models, which are designed for con- tinuous and predictable intensity control. C onc e p t S lid e r A ttr i b u t e C ont r o l S l i de r E di t K - S lid e r O urs C onc e p t S lid e r A ttr i b u t e C ont r o l S l i de r E di t K - S lid e r O urs Figure 6. Qualitative Comparison with Linear Control Models. PixelSmile achie ves smooth and monotonic expression transitions while preserving facial identity , whereas existing control methods either produce unstable responses or sacrifice identity consistency . The figure illustrates two representati ve expressions: happy (top row) and surprised (bottom ro w). Group 1: General Editing Models. This group repre- sents the strongest general-purpose text-guided image edit- ing systems. W e include three closed-source commercial systems: Nano Banana Pro, GPT -Image-1.5 (GPT -Image), Seedream-4.5 (Seedream), and three open-source mod- els: Qwen-Image-Edit-2511 (Qwen-Edit), FLUX.2 Klein (FLUX-Klein), and LongCat-Image-Edit (LongCat). In the following, we refer to each model by the abbreviated name in parentheses. Although these models do not provide explicit mechanisms for fine-grained linear control, their strong generativ e priors make them competitive in ov erall expression editing quality . W e therefore use them to ev al- uate expression editing accuracy and the ability to resolve structural confusion between semantically ov erlapping ex- pressions. Group 2: Linear Control Models. This group fo- cuses on continuous attrib ute manipulation in latent space. W e compare with recent control-oriented editing models including K ontinuous-Konte xt (K-Slider), SliderEdit, and SAEdit. W e also include earlier latent control approaches ConceptSlider and AttributeControl, using their officially recommended in version strategies for real-image editing. While these earlier methods pioneered latent attribute con- trol, they are often limited by narrow predefined attribute categories and information loss introduced by in version. W e therefore treat them as reference baselines rather than pri- mary competitors in multi-category quantitati ve e valuation. w / o I D L o ss O u rs s r c Figure 7. Ablation on identity loss. Without ID loss, large ex- pression intensities cause identity drift in hairstyle and skin tex- ture. Our full method preserves identity consistently . Evaluation Metrics. W e adopt the benchmark protocol de- fined in Sec. 3.2 . For Group 1, we ev aluate editing accurac y and e xpression disentanglement using Acc-6, Acc-12, and mSCR. For Group 2, we e valuate linear intensity control and identity fidelity using CLS-6, CLS-12, and HES. 5.2. Quantitative Ev aluation W e quantitativ ely compare PixelSmile with both general editing and linear control models in T able 1 and T able 2 . Evaluation with General Editing Models. As shown in T able 1 , we e valuate baselines on editing accuracy , struc- tural confusion, and identity fidelity . For the six basic e x- pressions, PixelSmile achie ves the highest editing accuracy (0.8627), surpassing Nano Banana Pro (0.8431) and GPT - Image (0.8039). On the twelve e xtended expressions, our method remains among the best-performing models. This partially reflects the bias of the VLM scoring model (Gem- ini 3 Pro), which is highly reliable on basic expressions but less consistent on extended expression categories. More im- portantly , PixelSmile achiev es the lo west structural confu- sion rate (0.0550), significantly outperforming GPT -Image (0.1107) and Nano Banana Pro (0.1754), while most other models e xceed 0.2000. A v alue approaching 0.5 indicates that the model tends to collapse the confusing expression pair into a single expression, reflecting poor disentangle- ment of ov erlapping expressions. In terms of identity fi- delity , empirical observations in [ 74 ] suggest that realistic facial expression editing typically yields ID similarity val- ues around 0.6–0.7. Scores above 0.8 often indicate rigid “copy-paste” behavior , while scores below 0.5 imply se- vere identity distortion. Some baselines fall into these ex- tremes: Seedream maintains high ID similarity but suf fers from large structural confusion due to limited edits, whereas FLUX-Klein drops below 0.5, significantly degrading iden- tity consistency . In contrast, Pix elSmile produces strong e x- pressions while maintaining identity similarity within the natural range, achieving a better balance between expres- sion strength and identity preservation. Evaluation with Linear Control Models. PixelSmile demonstrates robust and consistent linear controllability across all metrics. ConceptSlider and Attrib uteControl are limited to editing only two expression attributes (happy and surprised) and produce weak editing effects; therefore, we report them as reference baselines with partial met- rics (e.g., CLS-2) rather than full-category comparisons. SAEdit is a text-to-image method that does not explicitly support identity-preserving editing; therefore, we include it only for quantitati ve reference and do not provide detailed qualitativ e analysis. As sho wn in T able 2 and Figure 4 , simply applying textual embedding interpolation to Qwen- Edit (zero-shot) already yields competitiv e controllability (CLS-6 0.6892, HES 0.4086), outperforming existing con- trol baselines. W ith the proposed symmetric joint training, w / o C o n t ra st iv e L o s s w / o S y m m e t ric a l f r a m e w o r k O ur s Figure 8. Ablation on symmetric contrastiv e lear ning. Both w/o Contrastive Loss and w/o Symmetric Frame work suf fer from e xpression confusion, while our full method achiev es precise expression disentanglement. The upper three rows show angry and disgust, and the lo wer three rows sho w fear and surprised. PixelSmile further improves performance and achie ves the best results across all benchmarks (CLS-6 0.8078, CLS-12 0.7305, and HES 0.4723), indicating that explicitly mod- eling expression semantics is critical for stable and fine- grained controllability . Figure 4 further re veals the limi- tations of existing methods. Although K-Slider and Slid- erEdit maintain high av erage ID similarity , this is largely because low editing intensities produce negligible changes, yielding ID similarity v alues close to 1.0. Specifically , K- Slider exhibits neg ative CLS scores and irregular intensity fluctuations that nev er exceed ∼ 0.3, failing to establish lin- ear controllability . SliderEdit shows increasing expression intensity but forces a rapid drop in ID similarity (down to ∼ 0.4) once expression scores approach 0.5, indicating a trade-of f between editing strength and identity preserva- tion. In contrast, PixelSmile achieves a monotonic response across a wide intensity range (expression scores reaching ∼ 0.8) while maintaining identity similarity within the natu- ral 0.6–0.7 interval, ef fectiv ely balancing controllability and fidelity . This beha vior demonstrates that our method not only improves average performance but also ensures stable and predictable control across the intensity spectrum. T able 3. Ablation Study . Best, second best, and third best results are indicated by , , and respectively . Ablation mSCR ↓ A CC-6 ↑ ACC-12 ↑ CLS-6 ↑ CLS-12 ↑ HES ↑ ID Sim ↑ Loss w/o Contrastiv e Loss 0.2725 0.6471 0.5889 0.6978 0.5889 0.4500 0.7018 w/o ID Loss 0.0550 0.8824 0.6500 0.8215 0.6874 0.4451 0.5749 w/o Sym. Frame. 0.1350 0.7843 0.4700 0.7939 0.6488 0.4253 0.6402 Constraint w/ Log-Ratio Constraint 0.1750 0.8039 0.5300 0.7917 0.6546 0.4933 0.6943 w/ Hinge Constraint 0.0950 0.8824 0.6600 0.7997 0.7228 0.4758 0.6280 Data MEAD 0.2125 0.7647 0.4700 0.7047 0.6187 0.4235 0.5735 Ours Full Setting 0.0550 0.8627 0.6000 0.8078 0.7305 0.4723 0.6522 5.3. Qualitative Comparison W e qualitativ ely compare PixelSmile with both general editing models and linear control baselines, as illustrated in Figure 5 and Figure 6 . Comparison with General Editing Models. As sho wn in Figure 5 , existing general editing models struggle to simul- taneously achieve clear expression editing and strong iden- tity preserv ation. Several models, including Nano Banana Pro, Qwen-Edit, Seedream, and LongCat, preserve iden- tity well b ut produce only weak expression changes, of- ten resulting in barely noticeable edits. In contrast, GPT - Image generates more visible expression differences but introduces moderate identity drift. FLUX-Klein performs the worst in both aspects, sho wing weak expression editing while sev erely degrading identity consistency . Compared with these methods, PixelSmile produces clear and recog- nizable expression changes while maintaining stable facial identity , achieving the best balance between semantic edit- ing and identity preservation. Comparison with Linear Control Models. Figure 6 com- pares continuous expression control across different meth- ods. An ideal method should produce expression inten- sity that increases monotonically with the control param- eter while preserving identity consistency . W e first analyze the relatively simple expression Happy . ConceptSlider and AttributeControl show limited linear response but quickly degrade identity as editing strength increases. SliderEdit exhibits a step-like behavior: expressions remain nearly un- changed for most control v alues and suddenly increase at higher strengths, accompanied by significant identity degra- dation. K-Slider shows unstable beha vior, where expres- sion changes hav e little correlation with the control param- eter . When mo ving to the more challenging e xpression Sur - prised , the linear response of these methods further dete- riorates and identity preservation becomes worse. In con- trast, PixelSmile maintains a stable monotonic increase in expression intensity while preserving identity across the en- tire control range. Even for more difficult expressions such as Disgust , our method continues to produce clear and con- trollable expression changes. 5.4. Ablation Study T o v alidate the necessity of each component in PixelSmile, we conduct comprehensiv e ablation e xperiments, with quantitativ e results summarized in T able 3 . Overall, the re- sults rev eal an inherent trade-off between expression editing capability and identity preservation: stronger editing often leads to identity degradation, while excessiv e identity con- straints suppress effecti ve e xpression transfer . Ablation on Loss Framework. W e first analyze the roles of the identity loss and the contrastive loss. Removing the identity loss improves expression editing and disentangle- ment but significantly de grades identity consistency . The model tends to modify facial attributes such as hairstyle or skin texture to match the tar get e xpression, especially at large editing intensities, leading to clear identity drift and inconsistent facial appearance across edits. As illustrated in Fig. 7 , the full model maintains stable identity while the variant without ID loss shows noticeable identity changes, confirming the importance of identity supervision for pre- serving subject consistency . 1000 3000 5000 7000 9000 Steps 0.06 0.08 0.10 0.12 0.14 mSCR w/o Sym. frame. (mSCR) Ours (mSCR) w/o Sym. frame. (Loss) Ours (Loss) 0.35 0.40 0.45 0.50 0.55 0.60 Train Loss Figure 9. T raining dynamics of symmetric contrastive learn- ing. The asymmetric variant reduces loss faster in early train- ing but leads to higher structural confusion, while the symmetric framew ork achieves lo wer and more stable mSCR. Con versely , removing the contrastiv e loss yields the highest identity similarity but leads to the weakest edit- ing accuracy and the highest structural confusion. Without the contrasti ve objective, the model collapses to ward recon- structing the source image instead of performing meaning- ful expression edits. As sho wn in Fig. 8 , the model without contrastiv e supervision fails to separate semantically sim- ilar expressions, resulting in severe expression confusion. These results demonstrate that the two losses play comple- mentary roles: identity loss stabilizes facial identity , while contrastiv e loss enhances expression disentanglement. Ablation on Symmetric Framework. W e further com- pare the proposed symmetric training design with an asym- metric v ariant that applies contrastive supervision to only one branch. As sho wn in Fig. 8 , removing the symmet- ric structure again leads to noticeable expression confusion. From the training dynamics in Fig. 9 , the asymmetric model shows faster initial loss reduction but con verges to worse solutions with lower editing accuracy and higher confusion rates. In contrast, the symmetric design acts as a structural regularizer: although it slows early conv ergence, the bidi- rectional constraints stabilize optimization and lead to bet- ter disentangled representations. Ablation on T riplet Formulations. W e also compare three triplet formulations: Log-r atio , Hinge , and InfoNCE . Log- ratio fav ors identity preserv ation but weakens e xpression editing, while Hinge maximizes editing strength at the cost of identity consistency . InfoNCE achieves the best balance between expression disentanglement and identity fidelity , and is therefore adopted as the default formulation. Ablation on Dataset. Finally , we ev aluate the impact of training data by training the same architecture on the widely used MEAD dataset [ 69 ], with preprocessing details pro- vided in Appendix C . The MEAD-trained model consis- tently underperforms our full model across all metrics. This gap is mainly due to MEAD’ s limited identity di versity and discrete intensity annotations, which restrict fine-grained expression modeling and semantic disentanglement. In con- trast, our FFE provides richer identity v ariation and continu- ous soft-label supervision, enabling more precise and robust expression editing in the wild. 5.5. User Study W e conducted a user study with 2,400 images and 10 trained annotators who ranked three continuous editing methods on expression continuity and identity consistency . Mean scores (continuity , identity) are: PixelSmile (4.48, 3.80); K-Slider (1.36, 4.06); SliderEdit (3.16, 1.14). As illustrated in Fig- ure 10 , human judgments are consistent with the machine- based ev aluation. Overall, PixelSmile achie ves the best balance, attaining the highest continuity while maintaining strong identity preservation. 5.6. Expression Blend Human facial beha vior often in v olves compound expres- sions [ 17 , 18 , 56 ]. T o explore whether such compositional- ity emerges in the learned representation, we perform pair- wise linear interpolation among six basic expressions, pro- ducing 15 zero-shot combinations. As shown in Fig. 12 in the Appendix, several pairs generate perceptually coherent compound expressions, suggesting that the learned emo- tion manifold is continuous and compositional. Ho wev er, some combinations collapse into a single dominant expres- sion (e.g., Fear+Surprise) or produce unstable results due to physiological conflicts (e.g., Angry+Happy). Overall, 9 out of 15 combinations form plausible compound expressions, indicating that the learned representation supports linear composition while respecting implicit facial constraints and capturing meaningful compositional structure. 1 2 3 4 5 Continuity 1 2 3 4 5 Identity Consistency Ours K-Slider SliderEdit Figure 10. User study results. W e show the trade-off between identity preservation and continuity of editing, annotated by hu- man annotators. The size of the points indicates the HES scores of human annotators. 6. Conclusion In this paper , we present PixelSmile, a framework for ad- dressing semantic entanglement in facial expression edit- ing. By shifting from discrete supervision to the continuous expression manifold defined by FFE and ev aluated through FFE-Bench, our approach enables precise and linearly con- trollable editing via symmetric joint training. Extensiv e ex- periments demonstrate ef fectiveness of PixelSmile in four dimensions: structural confusion, expression accuracy , lin- ear controllability , and identity preserv ation. Overall, this work establishes a standardized frame work for fine-grained facial expression editing and adv ances research toward con- tinuous and compositional facial af fect manipulation. Ethics Statement All data in FFE is collected from publicly a vailable sources and used in compliance with their respectiv e licenses and terms of use. The real-world subset is derived from existing public datasets (e.g., Human Images Dataset [ 66 ] and Mat- ting Human Dataset [ 1 ], both distrib uted under the MIT Li- cense), while the anime subset consists of stylized fictional characters from publicly av ailable media. W e do not collect or use any priv ate or login-restricted data. Facial expres- sion editing is a dual-use technology that may pose risks, such as misuse in identity-related scenarios. Our work fo- cuses on expression manipulation and is intended for non- commercial academic research. W e do not aim to alter iden- tity or enable decepti ve applications. T o mitigate potential risks, no personal metadata is retained, and the dataset is curated to exclude offensi ve content. W e encourage respon- sible use of the dataset and models in compliance with ap- plicable laws, re gulations, and ethical guidelines. References [1] aisegmentcn. Matting human datasets. https : / / github . com / aisegmentcn / matting _ human _ datasets , 2020. Accessed: 2026-03-03. 4 , 13 [2] Emad Barsoum, Cha Zhang, Cristian Canton Ferrer , and Zhengyou Zhang. Training deep networks for facial expres- sion recognition with crowd-sourced label distrib ution. In ICMI , 2016. 3 [3] Stefan Andreas Baumann, Felix Krause, Michael Neumayr , Nick Stracke, Melvin Sevi, V incent T ao Hu, and Bj ¨ orn Om- mer . Continuous, subject-specific attribute control in t2i models by identifying semantic directions. In CVPR , 2025. 3 , 6 [4] T im Brooks, Aleksander Holynski, and Alex ei A Efros. In- structpix2pix: Learning to follo w image editing instructions. In CVPR , 2023. 2 [5] ByteDance. Seedream 4.5: Advanced ai image genera- tion model. https : / / seed . bytedance . com / en / seedream4_5 , 2025. Accessed: 2026-03. 3 , 6 [6] Jingjing Chang, Y ixiao Fang, Peng Xing, Shuhan W u, W ei Cheng, Rui W ang, Xianfang Zeng, Gang Y u, and Hai-Bao Chen. Oneig-bench: Omni-dimensional nuanced ev alua- tion for image generation. arXiv pr eprint arXiv:2506.07977 , 2025. 7 [7] T a Y ing Cheng, Prafull Sharma, Mark Boss, and V arun Jam- pani. Marble: Material recomposition and blending in clip- space. In CVPR , 2025. 3 [8] W ei Cheng, Su Xu, Jingtan Piao, Chen Qian, W ayne W u, Kwan-Y ee Lin, and Hongsheng Li. Generalizable neural performer: Learning robust radiance fields for human novel view synthesis. arXiv preprint , 2022. 16 [9] W ei Cheng, Ruixiang Chen, Siming Fan, W anqi Y in, K eyu Chen, Zhongang Cai, Jingbo W ang, Y ang Gao, Zhengming Y u, Zhengyu Lin, et al. Dna-rendering: A diverse neural actor repository for high-fidelity human-centric rendering. In ICCV , 2023. 16 [10] Y unjey Choi, Minje Choi, Munyoung Kim, Jung-W oo Ha, Sunghun Kim, and Jaegul Choo. Star gan: Unified genera- tiv e adv ersarial networks for multi-domain image-to-image translation. In CVPR , 2018. 2 [11] Y unjey Choi, Y oungjung Uh, Jaejun Y oo, and Jung-W oo Ha. Stargan v2: Div erse image synthesis for multiple domains. In CVPR , 2020. 2 [12] Y usuf Dalva, Kav ana V enkatesh, and Pinar Y anardag. Fluxs- pace: Disentangled semantic editing in rectified flow trans- formers. arXiv pr eprint arXiv:2412.09611 , 2024. 3 [13] Radek Dan ˇ e ˇ cek, Michael J Black, and T imo Bolkart. Emoca: Emotion driven monocular face capture and animation. In CVPR , 2022. 2 [14] Jiankang Deng, Jia Guo, Niannan Xue, and Stefanos Zafeiriou. Arcface: Additiv e angular margin loss for deep face recognition. In CVPR , 2019. 5 , 6 [15] Hui Ding, K umar Sricharan, and Rama Chellappa. Exprgan: Facial e xpression editing with controllable expression inten- sity . In AAAI , 2018. 2 [16] Zheng Ding, Xuaner Zhang, Zhihao Xia, Lars Jebe, Zhuowen Tu, and Xiuming Zhang. Diffusionrig: Learning personalized priors for facial appearance editing. In CVPR , 2023. 2 [17] Shichuan Du and Aleix M Martinez. Compound facial ex- pressions of emotion: from basic research to clinical appli- cations. Dialogues in clinical neur oscience , 2015. 12 [18] Shichuan Du, Y ong T ao, and Aleix M Martinez. Compound facial expressions of emotion. PNAS , 2014. 12 [19] Paul Ekman. An argument for basic emotions. Cognition & emotion , 1992. 4 [20] Rohit Gandikota, Joanna Materzy ´ nska, Tingrui Zhou, Anto- nio T orralba, and Da vid Bau. Concept sliders: Lora adaptors for precise control in dif fusion models. In ECCV , 2024. 3 , 6 [21] Rohit Gandikota, Zongze W u, Richard Zhang, David Bau, Eli Shechtman, and Nick K olkin. Sliderspace: Decomposing the visual capabilities of dif fusion models. In ICCV , 2025. 3 [22] Nicola Garau, Niccolo Bisagno, Piotr Br ´ odka, and Nicola Conci. Deca: Deep viewpoint-equi variant human pose esti- mation using capsule autoencoders. In ICCV , 2021. 2 [23] Daniel Garibi, Shahar Y adin, Roni Paiss, Omer T ov , Shiran Zada, Ariel Ephrat, T omer Michaeli, Inbar Mosseri, and T ali Dekel. T oken verse: V ersatile multi-concept personalization in token modulation space. T OG , 2025. 3 [24] Ian J Goodfello w , Jean Pouget-Abadie, Mehdi Mirza, Bing Xu, David W arde-Farley , Sherjil Ozair , Aaron Courville, and Y oshua Bengio. Generati ve adv ersarial nets. NeurIPS , 2014. 2 [25] Google. Nano banana pro: High-fidelity ai im- age generation and editing model. https : / / www.androidcentral.com /apps- software/ ai/ googles- nano - banana- pro- and- more , 2025. Ac- cessed: 2026-03. 3 , 6 [26] Julia Guerrero-V iu, Milos Hasan, Arthur Roullier , Midhun Harikumar , Y iwei Hu, Paul Guerrero, Diego Gutierrez, Be- len Masia, and V alentin Deschaintre. T exsliders: Dif fusion- based texture editing in clip space. In SIGGRAPH , 2024. 3 [27] Zinan Guo, Y anze W u, Chen Zhuowei, Peng Zhang, Qian He, et al. Pulid: Pure and lightning id customization via contrastiv e alignment. NeurIPS , 2024. 2 [28] Erik H ¨ ark ¨ onen, Aaron Hertzmann, Jaakko Lehtinen, and Sylvain Paris. Ganspace: Discovering interpretable gan con- trols. NeurIPS , 2020. 2 [29] Amir Hertz, Ron Mokady , Jay T enenbaum, Kfir Aberman, Y ael Pritch, and Daniel Cohen-Or . Prompt-to-prompt im- age editing with cross attention control. arXiv pr eprint arXiv:2208.01626 , 2022. 2 , 3 [30] Jonathan Ho, Ajay Jain, and Pieter Abbeel. Denoising diffu- sion probabilistic models. NeurIPS , 2020. 2 [31] Edward J Hu, Y elong Shen, Phillip W allis, Zeyuan Allen- Zhu, Y uanzhi Li, Shean W ang, Liang W ang, W eizhu Chen, et al. Lora: Low-rank adaptation of large language models. ICLR , 2022. 5 [32] Rahul Jain, Amit Goel, Koichiro Niinuma, and Aakar Gupta. Adaptiv esliders: User-aligned semantic slider-based editing of text-to-image model output. In CHI , 2025. 3 [33] W ooseok Jang, Y oungjun Hong, Geonho Cha, and Seungry- ong Kim. Controlface: Harnessing facial parametric control for face rigging. In CVPR , 2025. 2 [34] Liming Jiang, Qing Y an, Y umin Jia, Zichuan Liu, Hao Kang, and Xin Lu. Infiniteyou: Flexible photo recrafting while pre- serving your identity . In ICCV , 2025. 2 [35] Ronen Kamenetsky , Sara Dorfman, Daniel Garibi, Roni Paiss, Or Patashnik, and Daniel Cohen-Or . Saedit: T oken- lev el control for continuous image editing via sparse autoen- coder . arXiv pr eprint arXiv:2510.05081 , 2025. 3 , 6 [36] T ero Karras, Samuli Laine, and T imo Aila. A style-based generator architecture for generativ e adversarial networks. In CVPR , 2019. 2 [37] T ero Karras, Samuli Laine, Miika Aittala, Janne Hellsten, Jaakko Lehtinen, and Timo Aila. Analyzing and improving the image quality of stylegan. In CVPR , 2020. 2 [38] Minchul Kim, Anil K Jain, and Xiaoming Liu. Adaface: Quality adaptiv e mar gin for face recognition. In CVPR , 2022. 5 [39] Black Forest Labs. FLUX.2: Frontier V isual Intelligence. https://bfl.ai/blog/flux- 2 , 2025. 3 , 6 [40] Black Forest Labs, Stephen Batifol, Andreas Blattmann, Frederic Boesel, Saksham Consul, Cyril Diagne, Tim Dock- horn, Jack English, Zion English, Patrick Esser , et al. Flux. 1 kontext: Flow matching for in-context image generation and editing in latent space. arXiv preprint , 2025. 3 [41] Oli ver Langner , Ron Dotsch, Gijsbert Bijlstra, Daniel HJ W igboldus, Skyler T Hawk, and AD V an Knippenberg. Pre- sentation and v alidation of the radboud faces database. Cog- nition and Emotion , 2010. 3 [42] Shan Li, W eihong Deng, and JunPing Du. Reliable crowd- sourcing and deep locality-preserving learning for expres- sion recognition in the wild. In CVPR , 2017. 3 [43] Y aron Lipman, Ricky TQ Chen, Heli Ben-Hamu, Maximil- ian Nickel, and Matt Le. Flow matching for generati ve mod- eling. arXiv pr eprint arXiv:2210.02747 , 2022. 5 [44] Lu Liu, Huiyu Duan, Qiang Hu, Liu Y ang, Chunlei Cai, T ianxiao Y e, Huayu Liu, Xiaoyun Zhang, and Guangtao Zhai. F-bench: Rethinking human preference ev aluation metrics for benchmarking face generation, customization, and restoration. In ICCV , 2025. 3 [45] Ming Liu, Y ukang Ding, Min Xia, Xiao Liu, Errui Ding, W angmeng Zuo, and Shilei W en. Stgan: A unified selec- tiv e transfer network for arbitrary image attribute editing. In CVPR , 2019. 2 [46] Shiyu Liu, Y ucheng Han, Peng Xing, Fukun Y in, Rui W ang, W ei Cheng, Jiaqi Liao, Y ingming W ang, Honghao Fu, Chun- rui Han, et al. Step1x-edit: A practical framework for general image editing. arXiv pr eprint arXiv:2504.17761 , 2025. 2 , 3 [47] Patrick Lucey , Jeffre y F Cohn, T akeo Kanade, Jason Saragih, Zara Ambadar , and Iain Matthews. The extended cohn- kanade dataset (ck+): A complete dataset for action unit and emotion-specified expression. In 2010 ieee computer soci- ety conference on computer vision and pattern r ecognition- workshops , 2010. 3 [48] Daniel Lundqvist, Anders Flykt, and Arne ¨ Ohman. Karolin- ska directed emotional faces. Cognition and Emotion , 1998. 3 [49] Chenlin Meng, Y utong He, Y ang Song, Jiaming Song, Jia- jun W u, Jun-Y an Zhu, and Stefano Ermon. Sdedit: Guided image synthesis and editing with stochastic differential equa- tions. arXiv pr eprint arXiv:2108.01073 , 2021. 2 [50] Ali Mollahosseini, Behzad Hasani, and Mohammad H Ma- hoor . Affectnet: A database for facial expression, valence, and arousal computing in the wild. IEEE transactions on affective computing , 2017. 3 [51] Arsha Nagrani, Joon Son Chung, W eidi Xie, and Andre w Zisserman. V oxceleb: Large-scale speaker verification in the wild. Computer Speech & Langua ge , 2020. 3 [52] Hyun Oh Song, Y u Xiang, Stefanie Jegelka, and Silvio Sav arese. Deep metric learning via lifted structured feature embedding. In CVPR , 2016. 6 [53] Aaron van den Oord, Y azhe Li, and Oriol V in yals. Repre- sentation learning with contrastive predictive coding. arXiv pr eprint arXiv:1807.03748 , 2018. 6 [54] OpenAI. Introducing gpt-image-1.5. https://openai . com / index / new - chatgpt - images - is - here/ , 2025. Accessed: 2026-03. 3 , 6 [55] OysterQA Q. Danbooruclip. https : / / huggingface . co / OysterQAQ / DanbooruCLIP , 2023. Accessed: 2023-05-18. 7 [56] Dongwei Pan, Long Zhuo, Jingtan Piao, Huiwen Luo, W ei Cheng, Y uxin W ang, Siming Fan, Shengqi Liu, Lei Y ang, Bo Dai, et al. Renderme-360: A large digital asset library and benchmarks towards high-fidelity head avatars. NeurIPS , 2023. 12 , 16 [57] Rishubh P arihar, Or Patashnik, Daniil Ostashev , R V enkatesh Babu, Daniel Cohen-Or , and Kuan-Chieh W ang. Kontinuous kontext: Continuous strength control for instruction-based image editing. arXiv pr eprint arXiv:2510.08532 , 2025. 3 , 6 [58] W illiam Peebles and Saining Xie. Scalable diffusion models with transformers. In ICCV , 2023. 5 [59] Albert Pumarola, Antonio Agudo, Aleix M Martinez, Al- berto Sanfeliu, and Francesc Moreno-Noguer . Ganimation: Anatomically-aware facial animation from a single image. In ECCV , 2018. 2 [60] Zongyang Qiu, Bingyuan W ang, Xingbei Chen, Y ingqing He, and Zeyu W ang. Emovid: A multimodal emotion video dataset for emotion-centric video understanding and genera- tion. arXiv pr eprint arXiv:2511.11002 , 2025. 3 [61] Alec Radford, Jong W ook Kim, Chris Hallacy , Aditya Ramesh, Gabriel Goh, Sandhini Agarwal, Girish Sastry , Amanda Askell, Pamela Mishkin, Jack Clark, et al. Learn- ing transferable visual models from natural language super- vision. In ICLR , 2021. 7 [62] Florian Schroff, Dmitry Kalenichenko, and James Philbin. Facenet: A unified embedding for face recognition and clus- tering. In CVPR , 2015. 5 , 6 [63] Prafull Sharma, V arun Jampani, Y uanzhen Li, Xuhui Jia, Dmitry Lagun, Fredo Durand, Bill Freeman, and Mark Matthews. Alchemist: Parametric control of material prop- erties with diffusion models. In CVPR , 2024. 3 [64] Y ujun Shen and Bolei Zhou. Closed-form factorization of latent semantics in gans. In CVPR , 2021. 2 [65] Y ujun Shen, Jinjin Gu, Xiaoou T ang, and Bolei Zhou. Inter- preting the latent space of gans for semantic face editing. In CVPR , 2020. 2 [66] snmahsa. Human images dataset (men and women). https:/ /www.kaggle .com/ datasets/snmahsa / human - images - dataset - men - and - women , 2021. Accessed: 2026-03-03. 4 , 13 [67] Deepak Sridhar and Nuno V asconcelos. Prompt sliders for fine-grained control, editing and erasing of concepts in dif- fusion models. In ECCV , 2024. 3 [68] Meituan LongCat T eam, Hanghang Ma, Haoxian T an, Jiale Huang, Junqiang W u, Jun-Y an He, Lishuai Gao, Songlin Xiao, Xiaoming W ei, Xiaoqi Ma, Xunliang Cai, Y ayong Guan, and Jie Hu. Longcat-image technical report. arXiv pr eprint arXiv:2512.07584 , 2025. 3 , 6 [69] Kaisiyuan W ang, Qianyi W u, Linsen Song, Zhuoqian Y ang, W ayne Wu, Chen Qian, Ran He, Y u Qiao, and Chen Change Loy . Mead: A large-scale audio-visual dataset for emotional talking-face generation. In ECCV , 2020. 3 , 12 , 16 [70] Y isen W ang, Xingjun Ma, Zaiyi Chen, Y uan Luo, Jinfeng Y i, and James Bailey . Symmetric cross entropy for rob ust learning with noisy labels. In ICCV , 2019. 6 [71] Mengting W ei, T uomas V aranka, Xingxun Jiang, Huai-Qian Khor , and Guoying Zhao. Magicface: High-fidelity facial expression editing with action-unit control. arXiv pr eprint arXiv:2501.02260 , 2025. 2 [72] Alon W olf, Chen Katzir , Kfir Aberman, and Or Patashnik. Continuous control of editing models via adaptiv e-origin guidance. arXiv pr eprint arXiv:2602.03826 , 2026. 3 [73] Chenfei W u, Jiahao Li, Jingren Zhou, Jun yang Lin, Kaiyuan Gao, Kun Y an, Sheng-ming Y in, Shuai Bai, Xiao Xu, Y ilei Chen, et al. Qwen-image technical report. arXiv pr eprint arXiv:2508.02324 , 2025. 2 , 3 , 5 , 6 [74] Hengyuan Xu, W ei Cheng, Peng Xing, Y ixiao Fang, Shuhan W u, Rui W ang, Xianfang Zeng, Daxin Jiang, Gang Y u, Xingjun Ma, et al. W ithanyone: T ow ards control- lable and id consistent image generation. arXiv preprint arXiv:2510.14975 , 2025. 2 , 9 [75] Zhenyu Xu, Xiaoqi Shen, Haotian Nan, and Xinyu Zhang. Numerikontrol: Adding numeric control to dif fusion trans- formers for instruction-based image editing. arXiv pr eprint arXiv:2511.23105 , 2025. 3 [76] Y uqi Y ang, Dongliang Chang, Y uanchen Fang, Y i-Zhe SonG, Zhanyu Ma, and Jun Guo. Controllable-continuous color editing in diffusion model via color mapping. arXiv pr eprint arXiv:2509.13756 , 2025. 3 [77] W eixin Y e, Hongguang Zhu, W ei W ang, Y ahui Liu, and Mengyu W ang. All-in-one slider for attribute manipulation in diffusion models. arXiv pr eprint arXiv:2508.19195 , 2025. 3 [78] Lijun Y in, Xiaozhou W ei, Y i Sun, Jun W ang, and Matthe w J Rosato. A 3d facial expression database for facial behavior research. In 7th international conference on automatic face and gestur e r ecognition (FGR06) , 2006. 3 [79] Xingxi Y in, Jingfeng Zhang, Y ue Deng, Zhi Li, Y icheng Li, and Y in Zhang. Instructattribute: Fine-grained ob- ject attributes editing with instruction. arXiv preprint arXiv:2505.00751 , 2025. 3 [80] O ˘ guz Kaan Y ¨ uksel, Enis Simsar , Ezgi G ¨ ulperi Er, and Pinar Y anardag. Latentclr: A contrastive learning approach for unsupervised discov ery of interpretable directions. In ICCV , 2021. 2 [81] Arman Zarei, Samyadeep Basu, Mobina Pournemat, Sayan Nag, Ryan Rossi, and Soheil Feizi. Slideredit: Continuous image editing with fine-grained instruction control. arXiv pr eprint arXiv:2511.09715 , 2025. 3 , 6 [82] Lvmin Zhang, An yi Rao, and Maneesh Agraw ala. Adding conditional control to text-to-image dif fusion models. In ICCV , 2023. 2 [83] Zhanpeng Zhang, Ping Luo, Chen Change Loy , and Xiaoou T ang. From facial expression recognition to interpersonal relation prediction. IJCV , 2018. 3 [84] Zhicheng Zhang, W eicheng W ang, Y ongjie Zhu, W enyu Qin, Pengfei W an, Di Zhang, and Jufeng Y ang. V idemo: Affecti ve-tree reasoning for emotion-centric video founda- tion models. arXiv pr eprint arXiv:2511.02712 , 2025. 3 [85] W eizhi Zhong, Huan Y ang, Zheng Liu, Huiguo He, Zijian He, Xuesong Niu, Di Zhang, and Guanbin Li. Mod-adapter: T uning-free and versatile multi-concept personalization via modulation adapter . arXiv pr eprint arXiv:2505.18612 , 2025. 3 [86] Hao Zhu, W ayne W u, W entao Zhu, Liming Jiang, Siwei T ang, Li Zhang, Ziwei Liu, and Chen Change Loy . Celebv- hq: A large-scale video facial attributes dataset. In ECCV , 2022. 16 [87] Y ule Zhu, Ping Liu, Zhedong Zheng, and W ei Liu. Seed: A benchmark dataset for sequential facial attribute editing with diffusion models. arXiv preprint , 2025. 3 [88] Cailin Zhuang, Ailin Huang, Y aoqi Hu, Jingwei W u, W ei Cheng, Jiaqi Liao, Hongyuan W ang, Xinyao Liao, W ei- wei Cai, Hengyuan Xu, et al. V istorybench: Comprehen- siv e benchmark suite for story visualization. arXiv pr eprint arXiv:2505.24862 , 2025. 7 A ppendix A. Details of the Symmetric Contrastive Loss A.1. T riplet Constraint Formulations In this section, we provide detailed formulations of the triplet constraint function T ( G, P , N ) used in Sec. 4 . All features are extracted using a frozen CLIP image encoder to represent expression semantics and are ℓ 2 -normalized be- fore distance computation. For brevity , we denote d G,P = d ( G, P ) and d G,N = d ( G, N ) as cosine distances, and s G,P = sim( G, P ) and s G,N = sim( G, N ) as cosine simi- larities. Hinge-based Formulation. The margin-based objective is T hinge ( G, P , N ) = max 0 , d G,P − d G,N + m , (10) where m is a fixed mar gin. Log-Ratio Formulation. W e adopt a smooth distance- ratio objectiv e: T ratio ( G, P , N ) = log d G,P + ϵ d G,N + ϵ , (11) where ϵ is a small constant for numerical stability . InfoNCE-style Formulation. The probabilistic con- trastiv e objectiv e is T nce ( G, P , N ) = − log exp( s G,P /τ ) P x ∈{ P,N } exp( s G,x /τ ) , (12) where τ is a temperature parameter . A.2. Implementation Details Unless otherwise specified, we use the InfoNCE-style for- mulation with temperature τ = 0 . 07 . For the hinge-based variant, the margin is set to m = 0 . 2 . For the log-ratio formulation, we set ϵ = 10 − 6 for numerical stability . All variants are e valuated under identical training schedules. B. Details of Experiment T o ensure reproducibility and clarity , we provide additional implementation details for PixelSmile. Training is con- ducted on 4 NVIDIA H200 GPUs. LoRA Configuration. W e apply LoRA to major attention and MLP components of the dif fusion transformer . Key hy- perparameters are: rank = 64, α = 128, and dropout = 0. T raining Hyper parameters. The models are optimized for 100 epochs using the AdamW optimizer with β 1 = 0 . 9 , β 2 = 0 . 999 , weight decay = 0.001, and ϵ = 1 e − 8 . The learning rate is set to 1 e − 4 with cosine scheduling and 500 warmup steps. Mixed precision (bf16) is enabled to stabi- lize training. For the loss weights, we set λ SC = 1 . 0 (In- foNCE mode, symmetric) and λ ID = 0 . 1 . The batch size per GPU is 4 with gradient accumulation steps = 1. C. Details of Dataset Ablation C.1. Dataset Overview Among human-centric dataset [ 8 , 9 , 56 , 69 , 86 ], we choose MEAD [ 69 ] to ablate on effecti veness of proposed dataset. The MEAD dataset [ 69 ] contains 7 discrete facial expres- sions captured from multi-vie w video sequences, with three intensity levels (low , medium, high). For our ablation, we only use the front-view subset and map its three intensity lev els to continuous v alues 0.5,0.75,1.0 to match the input range of PixelSmile. C.2. Prepr ocessing and T riplet Construction Since MEAD pro vides video sequences, we uniformly sam- ple frames to obtain independent images. From these sam- pled frames, we construct triplets ( P a , P b , I orig ) in the same manner as for FFE to train the symmetric contrastive framew ork. Each triplet consists of: • I orig 1: the source frame. • P a , P b 2: two frames of the same subject with distinct expressions. Finally , we construct triplet data pairs from the same identities to conduct the symmetric contrasti ve training un- der our default configuration. D. Additional Qualitative Results This section provides additional qualitati ve results for Pix- elSmile. W e present more examples of linear expression editing across multiple e xpression categories, as well as ad- ditional expression blending results obtained through inter- polation in the learned expression space. D.1. Additional Linear Expr ession Editing Results Figure 11 presents additional linear editing results for the remaining ten expressions across both real and anime do- mains. As the control parameter increases, the expression intensity changes smoothly while the facial identity remains consistent. D.2. Expr ession Blend Results Figure 12 shows the examples of expression blending ob- tained through pairwise interpolation between basic expres- sions. A n x i o us C ont e m p t Dis g u s t F e ar S ad An g r y C o nf i d e nt C onf u s e d S hy S l e ep y Figure 11. Additional linear expression editing results. W e show the remaining ten expressions across both real and anime domains. The top row shows results on real images, while the bottom row shows results on anime images. Expression intensity increases from left to right for each expression. H a ppy S urp r i s e d B l e nd e d B l e nd e d B l e nd e d B l e nd e d B l e nd e d B l e nd e d H a ppy S ad H a ppy Dis g u s t H a ppy F e ar F e ar Dis g u s t S ad S ad Figure 12. Expression Blending Results. V isualizing compositional facial e xpressions generated by smoothly blending multiple emotional categories in Pix elSmile. E. Additional Dataset Details This section provides supplementary details of FFE, includ- ing annotation and scoring prompts, together with addi- tional dataset statistics. E.1. Annotation and Scoring Prompts W e provide the prompt templates used in our annotation pipeline and expression scoring procedure. T able 4 and T able 5 present the prompts used for statistical annotation of the human and anime subsets, respectiv ely . These two templates are designed to extract structured semantic at- tributes for dataset analysis and are both based on Qwen3- VL-235B-A22B . T able 6 shows the prompt used to assign expression intensity scores to images, which is based on Gemini 3 Pro . E.2. Dataset Statistics W e present the statistical analysis of FFE in Fig. 13 and Fig. 14 , cov ering cate gorical distrib utions and textual de- scription patterns across the real-world and anime domains. From Fig. 13 , the real-world subset is diverse b ut im- balanced, dominated by young adults (53.5%), with chil- dren, teens, and seniors forming smaller proportions. Sim- ilar trends are observed in other attributes, where female samples are more frequent and light-to-medium skin tones constitute the majority , indicating that the dataset inherits non-uniform demographic characteristics. This bias reflects common patterns in portrait-centric internet images and in- troduces challenges for expression modeling. In contrast, the anime subset exhibits broader stylistic div ersity , with CG and 2D anime each accounting for about 44%, along with additional styles such as chibi, manga, and sketch. Compared with the real-w orld subset, the anime subset also shows a flatter age distribution. Ho wev er, it contains more unknown labels in attributes like gender and age, suggesting that stylized characters are inherently more ambiguous un- der real-world categorization schemes, which increases the difficulty of consistent annotation and e valuation. Fig. 14 further rev eals domain-specific textual patterns. The real-world subset emphasizes natural appearance cues such as clothing, hairstyle, and facial details, while the anime subset contains more stylized and visually distinc- tiv e descriptions. This dif ference highlights that expres- sion editing in FFE in v olves both visual transformation and domain-dependent semantic interpretation, requiring mod- els to generalize across heterogeneous distributions. Over - all, these statistics indicate that FFE combines substan- tial di versity with realistic biases, making it a challenging and representati ve benchmark for fine-grained, di verse, and real-world facial e xpression editing. 0% 10% 20% 30% 40% 50% 60% Percentage (%) Y oung Adult Adult Middle Aged Child T een Senior Unknown 53.5% 19.7% 12.4% 5.6% 5.0% 3.6% 0.1% (a) Age distribution in the real-world domain 0% 10% 20% 30% 40% 50% Percentage (%) Cg Anime 2D Anime Chibi Manga Unknown Sk etch Other 44.7% 44.1% 4.0% 2.7% 2.4% 1.2% 1.0% (b) Style distribution in the anime domain Figure 13. Statistical distributions of annotated data in FFE. The results provide insights into the underlying data characteristics across real-world and anime domains. T able 4. Human Dataset Annotation Prompt T emplate. Human Dataset Annotation Prompt System Context Y ou are an image annotation assistant. For a single person image, output strict JSON only . Requirements • Describe only visible facts; do not infer identity , story , or intent. • categorical values must be selected from the provided enums. • All three fields in descriptions must be present. • Write all description sentences in English, each 8–25 words. JSON Schema { "categorical": { "gender": "male/female/androgynous/unknown", "age_group": "child/teen/young_adult/adult/middle_aged/senior/unknown", "skin_tone": "very_light/light/medium/dark/very_dark/unknown", "expression": "neutral/happy/sad/angry/surprised/fear/disgust/other/unknown" }, "descriptions": { "appearance_sentence": "One sentence about clothing and accessories.", "action_sentence": "One sentence about action or pose.", "background_sentence": "One sentence about scene/background." } } (a) Real-world appearance descriptions (b) Anime-style appearance descriptions Figure 14. V isualization of appearance-related textual descriptions in FFE. The visualizations highlight the distribution and diversity of annotations across real-world and anime domains. T able 5. Anime Dataset Annotation Prompt T emplate. Anime Dataset Annotation Prompt System Context Y ou are an anime image annotation assistant. For a single character image, output strict JSON only . Requirements • Describe only visible facts; do not infer identity , story , or intent. • categorical values must be selected from the provided enums. • All three fields in descriptions must be present. • Write all description sentences in English, each 8–25 words. JSON Schema { "categorical": { "gender": "male/female/androgynous/unknown", "age_group": "child/teen/young_adult/adult/middle_aged/senior/unknown", "expression": "neutral/happy/sad/angry/surprised/fear/disgust/other/unknown", "anime_style": "2d_anime/chibi/manga/sketch/cg_anime/other/unknown" }, "descriptions": { "appearance_sentence": "One sentence about clothing and accessories.", "action_sentence": "One sentence about action or pose.", "background_sentence": "One sentence about scene/background." } } T able 6. F acial Expression Scoring Prompt T emplate. The same prompt is applied to both human and anime domains, highlighting the domain-agnostic nature of our scoring pipeline. Facial Expr ession Scoring Prompt Role and T ask Definition Y ou are an expert AI specialized in analyzing facial expressions in both photorealistic and anime-style images. Y our task is to analyze the input image and determine the intensity score for specific expression cate gories. T arget Emotion Definitions Analyze the image based on the follo wing visual definitions. Crucially , pay close attention to the distinctions between similar expressions (e.g., Fear vs. Surprise, Anger vs. Disgust). • Happiness: Faintly smiling, cheerful, ecstatic; corners of the mouth raised or laughing. • Sadness: Somber, sorro wful, dev astated; frowning with do wncast eyes or tears. • Anger: Annoyed, angry , furious; furro wed brows and glaring eyes. • Fear: W ary , frightened, terrified; knitted brows and pupil constriction. • Surprise: T aken aback, amazed, stunned; e yes wide open or jaw dropped. • Disgust: Distasteful, repulsed, gagging; wrinkling the nose or raising the upper lip. • Embarrassment: A shy smile, blushing cheeks, av oiding eye contact, looking down, or covering the face with hands. • Confidence: Self-assured smile, corners of the mouth raised, sharp and firm gaze, slightly smug. • Confusion: Knitted brows, looking aside or rolling the eyes, head tilted in deep thought. • Dro wsiness: Heavy drooping e yelids, yawning, lethargic or lo w-energy look. • Contempt: Asymmetrical smirk (one corner raised), sneering, looking down on someone. • Nervousness: V isible sweat drops, tense facial muscles, uneasy or restless g aze. Output Format Requir ements • Return format: Output a standard JSON object. • K eys: Use the e xact emotion labels provided above. • V alues: The intensity score (a float between 0.00 and 1.00). • Constraint: Do not e xplain your reasoning. Output only the JSON object. Do not include Markdo wn formatting such as ‘‘‘json ... ‘‘‘ . Output Example { "Happiness": 0.90, "Surprise": 0.75, "Anger": 0.05, ... }

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment