Back to Basics: Revisiting ASR in the Age of Voice Agents

Automatic speech recognition (ASR) systems have achieved near-human accuracy on curated benchmarks, yet still fail in real-world voice agents under conditions that current evaluations do not systematically cover. Without diagnostic tools that isolate…

Authors: Geeyang Tay, Wentao Ma, Jaewon Lee

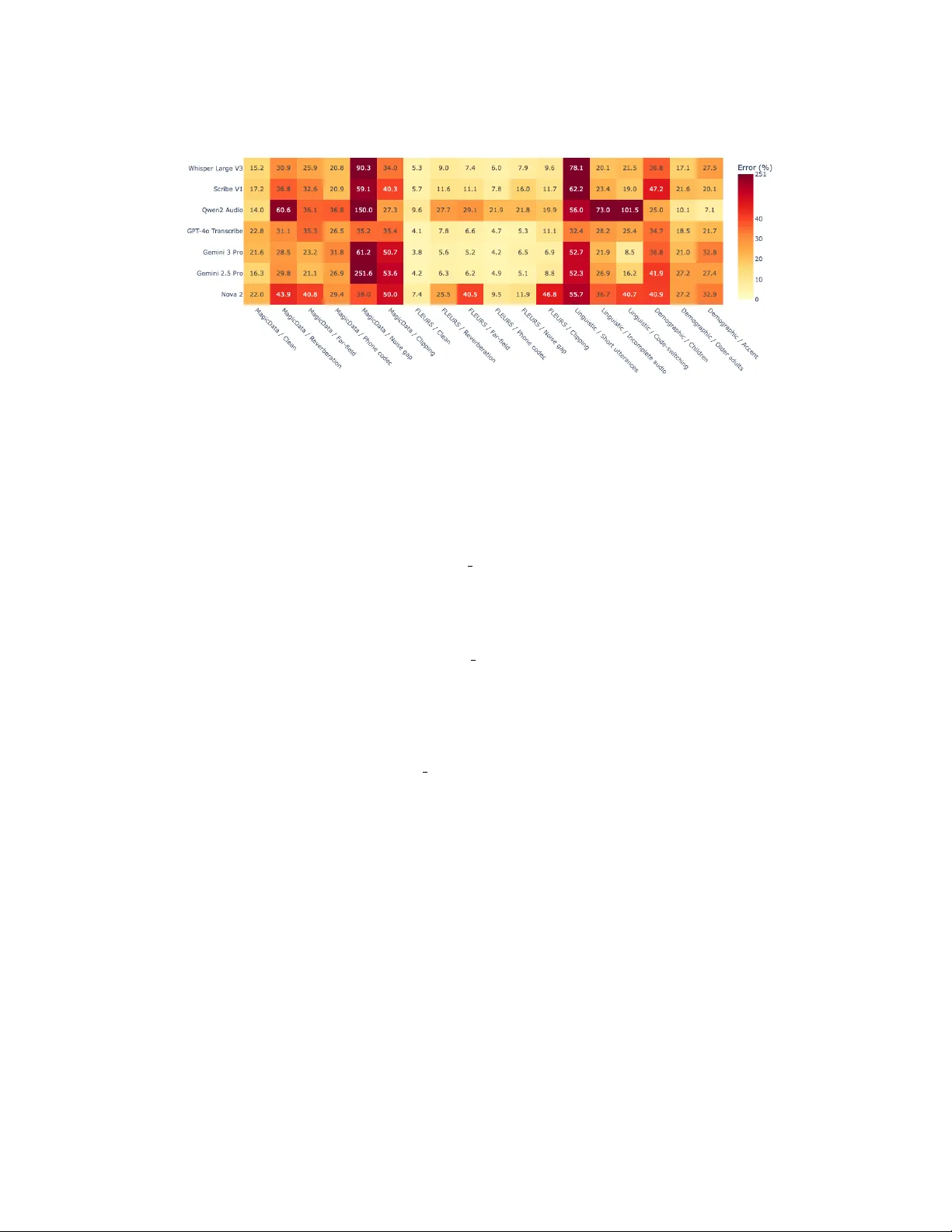

B A C K T O B A S I C S : R E V I S I T I N G A S R I N T H E A G E O F V O I C E A G E N T S Geeyang T ay † , W entao Ma † ∗ , Jaewon Lee, Y uzhi T ang, Daniel Lee, W eisu Y in, Dongming Shen, Silin Meng, Y i Zhu, Mu Li, Alex Smola Boson AI A B S T R AC T Automatic speech recognition (ASR) systems hav e achiev ed near-human accurac y on curated benchmarks, yet still fail in real-world v oice agents under conditions that current e valuations do not systematically co ver . W ithout diagnostic tools that isolate specific failure factors, practitioners cannot anticipate which condi- tions, in which languages, will cause what degree of degradation. W e introduce W ildASR, a multilingual (four-language) diagnostic benchmark sourced entirely from real human speech that factorizes ASR robustness along three axes: en viron- mental degradation, demographic shift, and linguistic diversity . Evaluating seven widely used ASR systems, we find severe and unev en performance degradation, and model rob ustness does not transfer across languages or conditions. Critically , models often hallucinate plausible but unspoken content under partial or de graded inputs, creating concrete safety risks for downstream agent behavior . Our results demonstrate that tar geted, factor-isolated e valuation is essential for understand- ing and improving ASR reliability in production systems. Besides the benchmark itself, we also present three analytical tools that practitioners can use to guide deployment decisions. https://huggingface.co/datasets/bosonai/WildASR https://github.com/boson- ai/WildASR- public 1 I N T RO D U C T I O N The field of Automatic Speech Recognition (ASR) has witnessed a decade of unprecedented progress, driv en largely by the scaling of neural architectures and the av ailability of massiv e datasets. The declaration of “human parity” by (Amodei et al., 2016; Xiong et al., 2017) mark ed a pi votal mo- ment, and this progress has been further accelerated by (Zhang et al., 2020; Radford et al., 2022; Pratap et al., 2023) which leverage hundreds of thousands of hours of web-scraped audio to achie ve remarkable performance across di verse languages. Contemporary systems now routinely obtain word error rates (WER) lower than 5% on curated benchmarks (Panayoto v et al., 2015; Ardila et al., 2020). This rapid advancement raises the question: Is multilingual ASR a solved pr oblem? V oice agents, AI systems capable of engaging in spoken dialogue with users, hav e been rapidly proliferating in the past few years (Shi et al., 2025; Zeng et al., 2024; Arora et al., 2025). As voice emerges as a dominant interface modality , these agents must contend with a wide spectrum of out- of-distribution (OOD) conditions: telephony compression, overlapping speech, regional accents, disfluencies, and code-switching. F or the well performed ASR system, when they are deployed in real-world v oice agents, failures still occur (Chen et al., 2024; Jain et al., 2025; Xu et al., 2025). Moreov er , voice agents do not merely use ASR outputs as passiv e transcription, but rely on them to trigger downstream tools, retrie ve context, and execute actions. Under OOD conditions, tran- scription errors are not merely cosmetic, as can be seen in Figure 1. Y et existing ASR ev aluations predominantly test on in-domain data and report aggregate word error rate (WER) (Panayotov et al., 2015; Ardila et al., 2020; Shah et al., 2024; W ang et al., 2025a; Sakshi et al., 2024), obscuring which specific acoustic or linguistic factors drive failures. As a result, current ASR benchmarks cannot ∗ Corresponding to: wentao@boson.ai. † Equal contribution. 1 Figure 1: Multilingual ASR rob ustness under real-w orld distribution shifts in WildASR. W e ev aluate sev en ASR systems across four languages and aggregate performance ov er three OOD di- mensions. The horizontal line denotes the in-distrib ution clean-set model-a verage reference (5.7%), defined as the average error rate on the FLEURS test set across all models and languages. The sharp and unev en degradation across OOD conditions shows that human-parity performance on in- distribution data does not reliably transfer to real-w orld settings. answer whether robustness to one perturbation transfers across languages, en vironments, or con ver - sational settings. This creates a diagnostic gap : practitioners have no systematic way to identify wher e (en vironment), who (demographics), and what (linguistic phenomena) drives ASR failures in their specific deployment. T o close this diagnostic gap, we introduce WildASR , a multilingual (four-language) benchmark that provides systematic, factor-isolated ev aluation of ASR robustness under real-world OOD conditions. Our contributions are threefold: • WildASR: a diagnostic benchmark for real-world OOD shifts W e introduce a multi- lingual (four-language), multi-dimensional benchmark whose source audio comes entirely from real human speech rather than TTS-generated data. T o systematically isolate failure modes, we decompose robustness into three ax es: En vironmental Degradation (the where), Demographic Shift (the who), and Linguistic Div ersity (the what). • Í Thorough evaluation under a unified protocol W e benchmark seven state-of-the-art systems (including proprietary and open-source models) under a unified protocol. W e re- port both standard metrics and factor -isolated degradations, re vealing that robustness does not transfer reliably , and performance rankings fluctuate wildly across languages. • û Diagnostic analyses as deployment decision tools Moving beyond average WER, we characterize specific deployment risks and present analytical tools that practitioners can directly apply: a P90 Elbow analysis to identify instability thresholds under increasing distortion, prompt sensitivity profiling to quantify variance from instruction phrasing, and hallucination error rate to expose semantic fabrications in linguistic edge cases. 2 R E L A T E D W O R K ASR Modern ASR systems ha ve adv anced rapidly due to self-supervised learning and lar ge-scale multilingual training. Models (Baevski et al., 2020; Gulati et al., 2020; Radford et al., 2022) hav e achiev ed near -human accuracy on widely used benchmarks (P anayotov et al., 2015; Hernandez et al., 2018; Ardila et al., 2020; Pratap et al., 2020; 2023; Conneau et al., 2022). These datasets have played a critical role in driving progress by standardizing e valuation and enabling fair comparison. Howe ver , these ASR benchmarks largely reflect in-distrib ution conditions, resulting in saturated performance and limited insight into system behavior under realistic deployment shifts (K oenecke et al., 2024; Bara ´ nski et al., 2025; Frieske & Shi, 2024). T o address this gap, sev eral works study ASR robustness under specific perturbations such as additi ve noise, re verberation, accented speech, or domain mismatch (Shah et al., 2024; W ang et al., 2025b), demonstrating substantial degradation under adverse conditions and motivating robustness-oriented training. While valuable, these ev al- uations typically focus on a limited set of languages or datasets and often rely on TTS-generated 2 speech to construct test samples. Ho wev er , synthetic speech lacks the authentic paralinguistic phe- nomena present in real human recordings (Liao et al., 2025; Li et al., 2024), such as hesitations, disfluencies, and unstable articulation, and can substantially underestimate failure rates (see Sec- tion 5 for empirical evidence). Preserving real human speech sources is therefore critical for valid robustness e valuation. In contrast, our WildASR sources all audio from real human speech and ap- plies controlled augmentations to enable factorized e valuation across multiple perturbation axes and languages, enabling systematic analysis of ASR failure modes and rob ustness trade-offs. A udioLLM Recent work has e xplored integrating speech understanding with large language mod- els (T ang et al., 2024; Chu et al., 2023; Ghosh et al., 2024; Rubenstein et al., 2023; Huang et al., 2023; Elev enLabs, 2025; OpenAI, 2025a; Chu et al., 2024; Deepgram, 2025), giving rise to Audi- oLLMs that combine pretrained audio encoders with text-centric LLM backbones for unified speech recognition, translation, and audio reasoning, or e ven with other modalities (Comanici et al., 2025; Google Deepmind, 2025). Parallel efforts have explored end-to-end speech-to-speech (S2S) sys- tems (D ´ efossez et al., 2024; Google Research, 2025; OpenAI, 2025b; Roy et al., 2026; W u et al., 2025), which reduce latency and preserv e paralinguistic cues. T o ev aluate such models, benchmarks (W ang et al., 2025a; Chen et al., 2024; Sakshi et al., 2024) emphasize multimodal audio understanding and reasoning rather than transcription accuracy alone. More efforts (Cheng et al., 2025; Liu et al., 2025b; Zhang et al., 2025; K oudounas et al., 2025; Zhang et al., 2024; Liu et al., 2025a) try to highlight hallucination and instability in audio-language systems(T ang et al., 2024; Kuan & Lee, 2024; Atwany et al., 2025; W ang et al., 2025c). Howe ver , these benchmarks often ev aluate task success, implicitly assuming that upstream ASR outputs are sufficiently reliable. In contrast, our W ildASR focuses specifically on the trustworthiness of ASR as a foundational component in such systems. By exposing substantial transcription failures under realistic conditions, W ildASR highlights a critical gap between benchmark ASR performance and the reliability required for safe downstream decision-making. 3 W I L D A S R Real-world v oice agents encounter a long tail of acoustic and linguistic conditions that curated benchmarks rarely cov er , and these conditions can trigger not just higher error rates but outright hal- lucinations. Rather than optimizing for average-case accuracy , we construct a diagnostic benchmark that (i) reflects real voice-chat usage, (ii) isolates concrete OOD factors, and (iii) enables per-factor analysis. W e organize these factors into three dimensions: en vir onmental de gradation (the wher e), demographic shift (the who), and linguistic diver sity (the what) . T o operationalize these failure modes, we construct WildASR . W e first describe our curation pipeline, then detail each dimension. An ov erview is presented in T able 1. 3 . 1 C U R A T I O N P I P E L I N E The design of W ildASR follows a real source , contr olled perturbation principle: all source audio originates from real human speech to preserve authentic paralinguistic phenomena (e.g., hesitations, disfluencies, and articulatory variation) that TTS systems fail to reproduce; controlled augmentations are then applied post-hoc to isolate specific acoustic factors without introducing synthetic artifacts. The benchmark covers four languages: English (EN), Chinese (ZH), Japanese (J A), and Korean (K O), with three distinct data splits corresponding to our three OOD dimensions. The curation pipeline consists of sev en stages: DC (Data Collection), SF (Speaker Filtering), QF (Quality Filtering), NR (Audio Normalization), AA (Acoustic Augmentation), MT (Manual Trun- cation & Transcript Alignment), and MV (Manual V erification). Not all steps apply to e very subset; the rightmost column of T able 1 indicates which steps were applied to each subcategory . Detailed descriptions of each step are provided in Appendix C. 3 . 2 E N V I R O N M E N T A L D E G R A DA T I O N V oice agents operate on user-generated audio recorded in uncontrolled conditions that are often far from the distrib utions represented in standard ASR training and e valuation. T o isolate en vironment- driv en acoustic shifts while keeping the linguistic content fixed, we apply fiv e controlled, transcript- preserving augmentations to each utterance. 3 T able 1: Overview of the proposed WildASR. Each OOD dimension is decomposed into e xplicitly defined subcategories. For each subcategory , we report the cov ered languages, the number of sam- ples per language, the av erage utterance duration, and the curation steps applied (defined in §3.1). Detailed data sources are listed in Appendix D. Categories Languages #Samples A vg Duration (s) Curation Steps En vironmental degradation Rev erberation EN/ZH/J A/KO 2841/3735/2850/2046 10.0/12.9/12.5/10.4 DC → QF → NR → AA → MV Far-field EN/ZH/J A/KO 2841/3735/2850/2046 10.0/13.0/12.5/10.4 DC → QF → NR → AA → MV Phone codec EN/ZH/J A/KO 1894/2490/1900/1364 7.5/10.4/10.0/7.9 DC → QF → NR → AA → MV Noise gap EN/ZH/J A/KO 3788/4980/3800/2728 8.6/11.6/11.2/9.1 DC → QF → NR → AA → MV Clipping EN/ZH/J A/KO 947/1245/950/682 7.5/10.4/10.0/7.9 DC → QF → NR → AA → MV Demographic shift Children EN/ZH 300/1000 4.06/3.02 DC → SF → QF → NR → MV Older adults EN/ZH 300/1000 5.93/1.95 DC → SF → QF → NR → MV Accent EN/ZH 1000/1000 3.48/5.69 DC → SF → QF → NR → MV Linguistic diversity Short utterances EN/ZH/J A/KO 318/367/467/255 1.2/0.7/1.1/1.0 DC → QF → NR → MV Incomplete audio EN/ZH/J A/KO 2345/2517/195/396 3.9/2.1/1.9/2.6 DC → QF → NR → MT → MV Code-switching (EN)+ZH/J A/KO 700/700/700 8.6/11.7/11.5 DC → QF → NR → MV Reverberation Re verberation is one of the most common factors that reduces indoor audio quality . W e simulate room acoustics using the image-source method (Scheibler et al., 2018), which intro- duces temporal smearing from reflections. W e parameterize sev erity by the re verberation time R T 60 (i.e., the time for the sound energy to decay by 60 dB). T o be specific, we vary R T 60 across three distinct lev els (0 . 4 / 0 . 8 / 1 . 6 s ) to cov er mild to strong rev erberation. Far -field Distinct from simple reverberation (which relies on room absorption), far -field audio is characterized by a low direct-to-rev erberant ratio (Haeb-Umbach et al., 2020). It creates a smear- ing ef fect where reflections overwhelm the direct path, se verely de grading the intelligibility of short phonemes (e.g., consonants). T o isolate this effect, we fix the room acoustics ( R T 60 ) using a simu- lated room geometry , and vary only the source-microphone distance to (4 / 8 / 16 m ) . Phone Codec Real-w orld voice agents frequently encounter narro wband telephone audio rather than wideband, studio-like recordings. T o simulate legacy communication channels, we process audio through two standard codecs: GSM (representing classic mobile telephony) and G.711 µ - law (representing standard landline/V oIP infrastructure). Both operations in volv e do wnsampling the input to 8 kHz, applying the codec’ s quantization artifacts, and resampling back to 16 kHz, testing the model’ s ability to recover phonemes from band-limited representations. Noise gap Hallucinations are often associated with long non-speech spans within an utterance (Koe- necke et al., 2024). T o probe this failure mode, we inject synthetic stationary noise between con- tiguous speech fragments, increasing the non-vocal duration while preserving the original lexical content. Specifically , we vary the density and duration of these insertions: 3 or 5 gaps of either 0 . 2 s or 0 . 4 s duration, leading to ( N gap , ∆ t ) ∈ { (3 , 0 . 2) , (5 , 0 . 2) , (3 , 0 . 4) , (5 , 0 . 4) } . This stresses the model’ s endpointing mechanisms without introducing confounding linguistic complexity . Clipping Clipping occurs when input gain saturates the recording hardware (e.g., loud speech or background music), clamping the wav eform against a maximum limit. W e model this by setting a per-utterance clipping threshold such that the top 40% of signal amplitude values are flattened, fol- lowed by RMS rescaling to recover loudness. This introduces harsh non-linear harmonic distortion that standard noise-robustness techniques often f ail to model. W e establish a high-quality base corpus by sampling utterances from two complementary sources: the widely adopted FLEURS (Conneau et al., 2022) test split, which provides read speech, and a few con versational datasets from MagicData (Zhou et al., 2025; MagicData, 2024; 2025), which captures spontaneous speech. Both sources cover all four tar get languages. W e discard unintelligible samples, and apply fiv e controlled perturbations to enable factor-isolated analysis. 3 . 3 D E M O G R A P H I C S H I F T Standard ASR training and ev aluation corpora are dominated by working-age adults speaking rela- tiv ely standard varieties. This mismatch constitutes both a fairness concern and a product risk, par- ticularly salient for two fast-gro wing use cases: children’ s education and geriatric care. T o bridge 4 Figure 2: Error heatmap f or seven ASR models on W ildASR. Each cell visualizes error rate (WER for EN and CER for CJK), with lighter colors indicating lower error . This patchy landscape rev eals that ASR systems still exhibit large performance degradation and une ven robustness gaps. this gap, we curate three sub-corpora that represent critical user groups for which current systems often fail. Children Recognition of child speech is uniquely challenging due to higher fundamental frequenc y , irregular prosody , frequent disfluencies, and ev olving linguistic patterns that defy adult-trained model assumptions. In W ildASR, we source English data from Zenodo’ s children speech record- ing (Kennedy et al., 2016) and T omRoma/Child Speech (T omRoma, 2024); Chinese data from the B AAI/ChildMandarin (Zhou et al., 2024) targeting children aged 3–5. W e perform rigorous filtering to exclude samples with poor signal-to-noise ratios and manually v alidate transcripts for accuracy . Older adults Elderly speech is affected by presbyphonia, causing reduced vocal intensity , breathi- ness, hoarseness, tremors, and slower articulation that degrade ASR performance. W e sample En- glish speakers aged 50+ from MushanW/GLOBE V3 (W ang et al., 2024) and Chinese elderly speech from ev an0617/seniortalk (Chen et al., 2025). Given that elderly speakers may exhibit confounding factors such as dialect variations, we perform manual filtering to select samples where age-related acoustic degradation is the dominant feature, minimizing the influence of other v ariables. Accent While nati ve accents are well-represented, global deployment requires rob ustness to second language accents, which introduce phonemic substitutions and stress shifts. English accented sam- ples are drawn from MushanW/GLOBE V2 (W ang et al., 2024) with diverse non-nativ e accents, while Chinese samples from T winkStart/KeSpeech (Shi et al., 2026) focusing on regional Mandarin varieties, e xcluding mutually unintelligible dialects like Cantonese. Note that the demographic shift subset only cov ers English and Chinese at this moment, as high- quality child and elderly speech resources are scarce for the other languages. W e balance data quality and acquisition difficulty to ensure reproducibility of our W ildASR benchmark. 3 . 4 L I N G U I S T I C D I V E R S I T Y While acoustic robustness focuses on signal quality , semantic robustness targets linguistic phenom- ena and structural edge cases that occur frequently in spontaneous dialogue but are systematically underrepresented in standard training corpora. In this w ork, we identify three specific failure modes where the model’ s reliance on learned probabilities becomes a liability . Short utterances Real-world dialogue relies hea vily on backchannels (e.g., “hmm” “right”), phatic greeting (e.g., “how are you, ” “what’ s up?”) and terse commands (e.g., “stop, ” “next”). These are critical for natural turn-taking and latency management in voice agents. Howe ver , current models suffer from such short utterance, leading to wrong transcriptions and hallucinations in most cases. Here we select utterances containing fewer than 6 words (or 6 characters for CJK languages) from Y OD AS (Li et al., 2023) for all four languages. Incomplete audio In streaming voice applications, users are frequently cut of f by aggressive voice activity detection (V AD), network latency , or interruptions. Howe ver , ASR models are typically 5 T able 2: Impact of envir onmental degradations on multilingual ASR performance. A verage error rates across seven ASR models under controlled acoustic perturbations. Results are reported as MagicData / FLEURS. ∆ denotes the absolute increase in error rates relativ e to the clean condition. Bold highlights the largest de gradation magnitude per language and dataset. Perturbations EN ZH J A KO WER (%) ∆ CER (%) ∆ CER (%) ∆ CER (%) ∆ Original 19.9/4.1 –/– 14.6/7.8 –/– 19.7/5.1 –/– 19.5/5.9 –/– Rev erberation 31.9/9.5 +12.0/+5.3 25.7/13.0 +11.1/+5.2 45.3/15.5 +25.5/ +10.4 46.6/15.5 +27.0/+9.6 Far-field 26.0/15.8 +6.1/ +11.7 23.1/12.3 +8.5/+4.5 33.7/13.5 +13.9/+8.4 40.1/19.1 +20.6/ +13.2 Phone (G.711) 20.5/10.4 +0.6/+6.3 16.9/8.6 +2.3/+0.8 29.1/6.7 +9.4/+1.6 24.8/8.8 +5.3/+2.9 Phone (GSM) 22.9/5.2 +3.0/+1.1 25.0/9.4 +10.4/+1.6 33.8/10.5 +14.1/+5.4 47.6/7.9 +28.0/+2.0 Noise gap 87.6/6.7 +67.7 /+2.5 24.9/13.2 +10.3/+5.4 138.7/10.0 +118.9 /+5.0 140.5/12.8 +121.0 /+6.8 Clipping 30.6/15.6 +10.7/+11.5 37.3/17.9 +22.7 / +10.1 52.0/13.6 +32.3/+8.5 46.6/18.5 +27.0/+12.5 trained on complete, well-formed sentences. When presented with a cut-of f word, model may fill in a likely continuation based on language priors, producing fluent completions that were nev er spok en - a direct pathway to hallucination. This hallucinated completion is dangerous for agents ex ecuting API calls, where “delete” vs. “delete all” requires precise transcription of the actual audio. Gi ven selected utterances from Y OD AS (Li et al., 2023), we manually edit wa veforms to truncate speech mid-sentence or mid-word while referencing the truncated transcript as the ground truth. Code-switching Code-switching is frequent in multilingual communities and is a common interac- tion pattern for voice agents. Most ASR models rely on an initial Language Identification token to condition generation. Code-switching breaks this “one-utterance-one-language” assumption. Mod- els often force the transcribed output into a single script, resulting in phonetic transliteration errors (i.e., foreign terms are mapped to nonsensical homophones in the primary language) or simply drop- ping the secondary language content. Here we sample the data directly from SwitchLingua (Xie et al., 2025) and perform light-weight filtering to remov e samples without rich multilingual mixes. 4 E X P E R I M E N T S In this w ork, we e v aluate a total of 7 state-of-the-art ASR models on the proposed W ildASR, co ver - ing both proprietary and open-source models. Specifically , we include Whisper Large V3 (Radford et al., 2022), GPT -4o Transcribe (OpenAI, 2025a), Gemini 2.5 Pro (Comanici et al., 2025), Gem- ini 3 Pro (Google Deepmind, 2025), Qwen2-Audio (Chu et al., 2024), Nov a 2 (Deepgram, 2025) and Scribe V1 (Elev enLabs, 2025). Details of inference protocol are included in Appendix A. Due to space constraints, results in the main text are presented in aggregated form to facilitate cross- condition analysis; full per-model breakdowns for each subset and language are provided in Ap- pendix G. 4 . 1 M U L T I L I N G UA L A S R I S N OT S O L V E D T o hav e an ov erall understanding of models’ ASR performance on W ildASR, we conduct a system- atic ev aluation and present the general results in Figure 2. Each cell aggregates error across a vailable languages. It rev eals a patchy landscape where each model shows pockets of strong performance alongside sev ere failures, indicating that ASR systems still exhibit large performance degradation and unev en robustness gaps across realistic OOD conditions. Furthermore, robustness does not uniformly transfer across en vironmental, semantic, and demo- graphic shifts. For instance, Gemini 3 Pro achiev es low error on FLEURS/Clean (3.8%) but degrades sharply on MagicData/Noise gap (61.2%) and Linguistic/Short utterances (52.7%). These patterns are common in Figure 2, making extrapolation from one setting to another unreliable, which indi- cates models can e xcel on standard benchmarks yet f ail drastically under real-w orld conditions. This validates the importance of our benchmark in rev ealing weaknesses masked by aggregate metrics. W e next present detailed findings across three dimensions to systematically analyze robustness of multilingual ASR. 4 . 2 E N V I R O N M E N T A L D E G R A DA T I O N S U B S E T 6 0.2 0.4 0.6 0.8 1.0 1.2 1.4 1.6 1.8 R T60 (s) 0 10 20 30 40 WER / CER (%) baseline clean WER (%) WER (%) P90 WER (%) P90 elbow 0.2 0.4 0.6 0.8 1.0 1.2 1.4 1.6 1.8 R T60 (s) 0 5 10 15 20 25 30 35 40 WER / CER (%) baseline clean WER (%) WER (%) P90 WER (%) P90 elbow Figure 3: ASR error dynamics under increasing reverberation for Qwen2- Audio on FLEURS (top: English, bot- tom: Chinese). In T able 2, we report the results separately on FLEURS and MagicData to ha ve a holistic understanding of the impact from en vironmental degradations on both read speech and sponta- neous conv ersational speech. For each dataset and language, we average the resulting WER/CER across models for each perturbation type, and additionally report paired degradations as ∆ WER/ ∆ CER relativ e to the original (clean) condition. W e observe that all acoustic perturbations result in positive error increases across both corpora, indicating that each per- turbation category introduces measurable performance degra- dation. Overall, the av erage degradation is often larger on MagicData than on FLEURS, likely because con versational speech exhibits greater variability and poses more challenges than read speech. Notably , noise gap is the most detrimen- tal perturbation for con versational speech, increasing the error rate on MagicData by +67.7% (EN) and +10.3% (ZH). W e also find that degradation patterns are highly non- uniform across languages and recording settings. For exam- ple, on MagicData, noise gap increases ZH CER by +10.3%, yet increases J A and KO CER by +118.9% and +121.0%, re- spectiv ely . T ogether , these discrepancies indicate that robustness measured in one language or recording setting can substantially mispredict behavior in another . In addition, we analyze performance as a function of distortion strength, using reverberation as an example with Qwen2-Audio on FLEURS, shown in Figure 3. The blue solid curve shows corpus- lev el WER at each distortion level, with the blue dotted line denoting the clean baseline; the shaded band indicates ± 1 standard de viation of utterance-lev el WER. The orange dashed curve reports the P90 (90th percentile) WER, capturing tail behavior , and the vertical orange dashed line marks the P90 elbow point. As distortion sev erity increases, corpus-level WER grows gradually , while the error distribution widens substantially : the P90 curve rises faster than the mean and variabil- ity across utterances increases. This pattern indicates the emergence of sev ere outliers ev en when av erage performance remains acceptable, a critical concern for voice-agent deployment where tail failures strongly af fect user experience. T o quantify the onset of instability , we define the P90 elbo w as the distortion level at which the P90 curve exhibits accelerated growth, computed using knee- detection methods. This elbow provides a practical instability threshold for deployment decisions, such as bounding allow able distortion or triggering abstention. 4 . 3 D E M O G R A P H I C S H I F T S U B S E T T able 3: ASR performance under demo- graphic shift. English remains relativ ely ro- bust, while Chinese and child speech exhibit substantially higher error rates. Accent Children Older Model ZH EN ZH EN ZH EN Nov a 2 59.2 6.6 54.4 27.4 51.6 2.9 GPT -4o Transcribe 40.7 2.6 39.9 29.4 36.0 1.1 Gemini 2.5 Pro 49.9 5.0 58.6 25.1 52.6 1.8 Gemini 3 Pro 62.5 3.0 55.3 18.2 41.4 0.7 Qwen2-Audio 7.5 6.8 23.4 26.7 18.6 1.5 Scribe V1 37.9 2.2 65.1 29.3 42.3 0.8 Whisper Large V3 51.0 4.1 52.0 21.7 34.0 0.2 T able 3 reports performance of sev en models un- der demographic shift. Across models, rob ustness is consistently higher for English than for Chinese: WERs for Accent and Older speech in English re- main in the low single digits, whereas Chinese exhibits substantially higher error rates. Notably , child speech in English remains challenging f or all models , with the lo west observed error still at 18.2 WER, indicating a deployment-critical failure mode giv en the prev alence of child and family use cases. For Chinese, Qwen2-Audio shows the lo west error across all three demographic conditions, likely reflecting broader co verage in its Chinese training data. W e further analyze prompt sensitivity in multilingual ASR by ev aluating Gemini 2.5 Pro with ten paraphrased prompts on the three demographic OOD subsets in both languages. The prompts are listed in Appendix E. All prompts express the same instruction: transcribe the speech in the target language and output only the transcript, but differ in wording and style. For each prompt, we com- 7 T able 4: ASR performance and hallucination behavior under linguistic diversity . W e can see that short and truncated inputs induce high error and frequent hallucinations, rev ealing semantic failures not captured by lexical metrics alone (EN not applicable for code-switching). WER/CER/MER (%) HER (%) Model Category ZH EN J A K O ZH EN J A K O Nov a 2 code-switch 33.7 - 32.0 56.4 68.4 - 58.1 71.9 short 57.6 43.2 56.8 65.3 52.6 36.3 46.9 59.6 incomplete 35.0 13.5 37.2 61.0 38.1 7.8 37.4 56.8 Gemini 2.5 Pro code-switch 20.7 - 9.8 18.2 7.0 - 9.4 19.1 short 40.6 64.4 48.6 55.6 30.5 35.4 28.1 34.1 incomplete 31.9 15.3 37.1 23.5 31.6 10.5 32.8 11.1 Gemini 3 Pro code-switch 7.2 - 9.0 9.4 3.7 - 6.3 11.9 short 33.9 73.9 55.4 47.8 15.5 27.3 31.2 23.5 incomplete 21.7 10.6 36.7 18.5 16.9 6.7 25.6 14.1 GPT -4o Transcribe code-switch 21.9 - 24.4 29.9 12.0 - 17.9 36.9 short 26.9 38.7 37.3 26.9 21.5 20.5 21.9 21.2 incomplete 25.4 38.1 26.6 22.9 22.3 12.4 26.1 12.6 Qwen2-Audio code-switch 12.3 - 80.5 211.7 8.9 - 35.6 85.7 short 21.4 40.7 59.2 102.6 14.7 23.3 40.6 73.3 incomplete 20.5 13.0 224.4 34.2 14.7 6.1 21.5 37.6 Scribe V1 code-switch 10.2 - 22.8 23.9 7.6 - 20.1 31.7 short 38.3 57.2 94.9 58.3 30.0 38.5 50.0 32.6 incomplete 25.5 12.9 36.4 18.8 30.5 10.9 38.5 15.7 Whisper Large V3 code-switch 12.0 - 22.8 29.6 10.9 - 23.6 38.0 short 41.6 39.8 154.1 92.0 31.6 21.4 9.4 22.0 incomplete 24.0 12.2 26.7 17.7 21.0 7.7 19.5 12.9 pute corpus-lev el error rates and visualize their distribution, along with mean and standard deviation, in Figure 4. Figure 4: Prompt sensitivity of Gemini 2.5 Pro on demographic subsets across ten paraphrased prompts (EN/ZH). Results sho w that ASR performance could be highly sensitive to prompt phrasing, particularly in Chinese . Across Chinese subsets, the standard deviation across prompts reaches σ = 13 . 7% (Ac- cent), σ = 46 . 1% (Children), and σ = 8 . 3% (Older), whereas English exhibits minimal v ariation ( σ ≤ 0 . 6% across all conditions). These findings demonstrate that ev en for basic transcription, para- phrased instructions can materially affect model be- havior , especially in non-English settings. As the optimal prompt is rarely known in advance in real- world deployments, prompt choice alone can induce substantial performance de gradation. This motiv ates ev aluating ASR systems not only by mean error un- der a single prompt, but also by prompt robustness, e.g., variance across a controlled prompt set as a first-class stability metric. On the other hand, the profiling methodology itself is reusable: practitioners can apply the same controlled prompt set to any ne w model or language to assess prompt stability before deployment. 4 . 4 L I N G U I S T I C D I V E R S I T Y S U B S E T In this section, we evaluate models across three challenging linguistic scenarios: short utterances, incomplete audio and code-switching. T o understand hallucination behavior , we compute Hallucina- tion Error Rate (HER) (Atwany et al., 2025) to assess semantic-le vel errors beyond le xical metrics. Detailed results can be seen in T able 4. Across all languages, short utterances consistently induce high error rates , reaching 38.7%–73.9% even in English. The reason might be threefold: (i) short segments contain limited acoustic evidence and are more sensitive to V AD errors; (ii) decoder- 8 only models with strong language priors may over -generate plausible continuations when context is scarce; and (iii) many training pipelines do wnweight or remov e very short clips, reducing coverage of these patterns entirely . W e further observe insertion-dominated auto-completion failures , with WER/CER exceeding 100%, indicating that models generate substantial hallucinated content rather than transcribing faith- fully . For example, Qwen2-Audio reaches 102.6% CER on KO short utterances, 211.7% MER on K O code-switching, and 224.4% on J A incomplete audio. These f ailures suggest a tendency to “complete” truncated or ambiguous inputs instead of producing conservati ve transcriptions. Finally , HER rev eals semantic failures that lexical metrics alone obscure . Discrepancies be- tween WER/CER and HER highlight cases where surface-le vel transcription appears reasonable despite se vere meaning distortion. For instance, Nov a 2 on ZH code-switching exhibits 33.7% MER but 68.4% HER, indicating substantial semantic fabrication. Such meaning-altering hallucinations, e.g., negation introduced by a single insertion (“no I can” → “no I can’t”) pose significant risks in high-stakes applications. Joint analysis of WER and HER therefore enables a more faithful charac- terization of ASR reliability by distinguishing benign lexical errors from critical semantic failures. This joint e valuation protocol can be readily applied to any ASR system to surface semantic risks that WER alone would miss. Overall, incomplete audio is relatively manageable for EN, while code-switching is substantially harder for J A/KO mixed with English. Another observation is, proprietary models tend to perform better (more robust) than open-source models on our linguistic diversity subset, e.g., occurrences where error rates beyond 80 happen mostly in Qwen2-Audio and Whisper Lar ge V3. 5 F U RT H E R D I S C U S S I O N Is WildASR intrinsically difficult for humans? Although WildASR induces sev ere failures in state-of-the-art ASR systems, the underlying speech remains largely intelligible to human listeners. W e conducted a human evaluation in which samples wer e r eviewed in randomized and anonymized or der by independent annotators. The resulting averag e err or rate was 4.7%, consistent with estab- lished estimates of human-level transcription performance. This gap confirms that W ildASR does not derive its difficulty from ambiguity or poor signal quality , b ut rather exposes modeling limi- tations under realistic long-tail conditions. The disparity between human and model performance highlights substantial headroom for improving rob ustness in deployed ASR systems. Why real speech sources matter for robustness evaluation? A central design choice of W ildASR is that all source audio originates from real human speech, rather than being generated by text-to- speech systems. T o assess the impact of this choice, we compared r eal child speech with synthetic counterparts generated fr om identical transcripts using Qwen3-TTS (Hu et al., 2026). T ake Whisper Lar ge V3 as an example, it achie ved near -ceiling performance on synthetic audio (3.7%), but error rates incr eased dramatically on real child speech (21.7%) for English (shown in table 3). Quali- tativ e inspection reveals that synthetic samples capture coarse acoustic cues (e.g., pitch) but fail to reproduce authentic paralinguistic phenomena such as hesitations and unstable articulation. This discrepancy suggests that e valuations relying on synthetic data can underestimate failure rates. Real speech remains essential for rev ealing robustness gaps that directly affect v oice-agent reliability . What is the “gr ound truth” f or multilingual ASR? Although ASR is often treated as a well- defined transcription task, its notion of ground truth is inherently use-case and culture dependent. Decisions such as whether to preserve filler words or partial utterances vary across languages and conv ersational norms, and can materially affect downstream interpretation. In some settings, these phenomena conv ey pragmatic meaning, while in others they are routinely normalized. While W ildASR adopts a fixed transcription target for consistenc y , our observations highlight the need for multilingual benchmarks that account for culturally specific transcription norms and e valuate how different normalization choices impact rob ustness, hallucination behavior , and downstream utility . Is ASR obsolete in the era of speech-to-speech systems? Recent progress in large multimodal and S2S models has motivated the vie w that explicit transcription may become unnecessary , as end-to-end systems can directly operate on acoustic representations while preserving paralinguistic cues. Indeed, modern voice agents can often sustain fluent con versations even when retrospectiv e transcripts contain recognition errors or hallucinated phrases. Howe ver , our results argue that this 9 does not diminish the importance of ASR; rather, it reframes its role. Explicit ASR provides a transparent, auditable, and inspectable interface that is critical for deb ugging, compliance, retriev al, indexing, and structured tool use. Moreov er , while S2S systems may tolerate minor errors in benign conditions, our findings on severe hallucinations under OOD inputs suggest that uninterpretable end-to-end failures may be harder to detect and correct. From this perspectiv e, robust ASR should be vie wed not as a legac y component, but as a stabilizing input layer and safety guardrail for next- generation voice agents. Future work should study hybrid architectures that dynamically combine explicit transcription with audio-nati ve reasoning, rather than treating them as mutually exclusi ve. 6 C O N C L U S I O N A N D F U T U R E W O R K This work introduces WildASR, a multilingual benchmark designed to stress-test ASR systems un- der a diverse set of OOD conditions spanning acoustic environments ( wher e ), demographic charac- teristics ( who ), and linguistic phenomena ( what ). Across all ev aluated models, our results reveal a fragmented robustness landscape: strong performance on in-domain benchmarks does not reliably transfer across domains, demographics, or interaction settings, and failure modes often manifest as sev ere semantic distortions rather than gradual degradation. These findings carry broader implica- tions beyond ASR as a standalone task since voice interfaces and con versational agents become an increasingly prominent mode of human–AI interaction. Due to the scope of constructing a multilingual benchmark from real human speech sources and the resources required for large-scale ev aluation, W ildASR naturally has limitations that point to promising future directions: From diagnosis to mitigation. Our current work focuses on identifying and characterizing failure modes, including hallucination behavior , rather than resolving them. The factor -isolated structure of W ildASR directly rev eals which OOD conditions cause the largest degradation for each model and language, providing a natural starting point for targeted data augmentation, fine-tuning, or adaptation strategies. In particular , our hallucination analysis moti vates the de velopment of hallucination-aware decoding and abstention mechanisms that withhold transcription when confidence is low . Broader language, condition, and sample coverage. The current benchmark co vers four lan- guages and a defined set of OOD f actors, lea ving out low-resource languages and conditions such as multi-speaker ov erlap and real-time streaming artifacts. Additionally , certain subsets, particularly the demographic split, ha ve limited sample sizes due to the scarcity of publicly av ailable real speech data for specific populations. As a diagnostic benchmark designed to e xpose failure modes and iden- tify broad degradation patterns, these sizes are sufficient for our analytical goals, but expanding both language coverage and per -condition sample sizes would further strengthen the diagnostic cov erage. Finer -grained reporting and broader model coverage. Due to space constraints, results in the main text are presented in aggregated form to support cross-condition analysis and narrativ e clar- ity; full per-model breakdowns are provided in the appendix. W e also welcome future work that ev aluates additional models on WildASR to broaden the comparati ve landscape. More broadly , W ildASR highlights the need for benchmarks grounded in real human speech sources and realistic usage patterns, as synthetic or narrowly curated ev aluations risk obscuring failure modes that matter most in deployment. W e hope this work serves as a foundation for dev eloping ASR systems that are not only accurate, but dependable in real-w orld voice agents. 10 R E F E R E N C E S Dario Amodei, Sundaram Ananthanarayanan, Rishita Anubhai, Jingliang Bai, Eric Battenberg, Carl Case, Jared Casper, Bryan Catanzaro, Qiang Cheng, Guoliang Chen, Jie Chen, Jingdong Chen, Zhijie Chen, Mike Chrzanowski, Adam Coates, Greg Diamos, Ke Ding, Niandong Du, Erich Elsen, Jesse Engel, W eiwei Fang, Linxi Fan, Christopher Fougner , Liang Gao, Caixia Gong, A wni Hannun, T ony Han, Lappi Johannes, Bing Jiang, Cai Ju, Billy Jun, Patrick LeGresle y , Libby Lin, Junjie Liu, Y ang Liu, W eigao Li, Xiangang Li, Dongpeng Ma, Sharan Narang, Andre w Ng, Sherjil Ozair , Y iping Peng, Ryan Prenger , Sheng Qian, Zongfeng Quan, Jonathan Raiman, V inay Rao, Sanjeev Satheesh, David Seetapun, Shubho Sengupta, Kavya Srinet, Anuroop Sriram, Haiyuan T ang, Liliang T ang, Chong W ang, Jidong W ang, Kaifu W ang, Y i W ang, Zhijian W ang, Zhiqian W ang, Shuang W u, Likai W ei, Bo Xiao, W en Xie, Y an Xie, Dani Y ogatama, Bin Y uan, Jun Zhan, and Zhen yao Zhu. Deep Speech 2 : End-to-End Speech Recognition in English and Mandarin. In ICML , 2016. Rosana Ardila, Megan Branson, Kelly Davis, Michael Henretty , Michael Kohler , Josh Meyer , Reuben Morais, Lindsay Saunders, Francis M. T yers, and Gregor W eber . Common voice: A massiv ely multilingual speech corpus. In Proceedings of LREC , 2020. URL https: //arxiv.org/abs/1912.06670 . Siddhant Arora, Haidar Khan, Kai Sun, Xin Luna Dong, Sajal Choudhary , Seungwhan Moon, Xinyuan Zhang, Adithya Sagar , Surya T eja Appini, Kaushik Patnaik, et al. Stream rag: Instant and accurate spoken dialogue systems with streaming tool usage. arXiv pr eprint arXiv:2510.02044 , 2025. Hanin Atw any , Abdul W aheed, Rita Singh, Monojit Choudhury , and Bhiksha Raj. Lost in transcrip- tion, found in distribution shift: Demystifying hallucination in speech foundation models. arXiv pr eprint arXiv:2502.12414 , 2025. Alex ei Baevski, Y uhao Zhou, Abdelrahman Mohamed, and Michael Auli. wa v2vec 2.0: A Frame- work for Self-Supervised Learning of Speech Representations. In Advances in Neural Information Pr ocessing Systems (NeurIPS) , 2020. URL . Mateusz Bara ´ nski, Jan Jasi ´ nski, Julitta Bartolewska, Stanisław Kacprzak, Marcin W itko wski, and K onrad Ko walczyk. Inv estigation of Whisper ASR Hallucinations Induced by Non-Speech Au- dio. In ICASSP 2025 - 2025 IEEE International Confer ence on Acoustics, Speech and Signal Pr ocessing (ICASSP) , pp. 1–5, 2025. doi: 10.1109/ICASSP49660.2025.10890105. Y ang Chen, Hui W ang, Shiyao W ang, Junyang Chen, Jiabei He, Jiaming Zhou, Xi Y ang, Y equan W ang, Y onghua Lin, and Y ong Qin. SeniorT alk: A Chinese Con versation Dataset with Rich Annotations for Super-Aged Seniors, 2025. Y iming Chen, Xianghu Y ue, Chen Zhang, Xiaoxue Gao, Robby T . T an, and Haizhou Li. V oiceBench: Benchmarking LLM-Based V oice Assistants. arXiv preprint , 2024. URL . Xize Cheng, Dongjie Fu, Chenyuhao W en, Shannon Y u, Zehan W ang, Shengpeng Ji, Siddhant Arora, T ao Jin, Shinji W atanabe, and Zhou Zhao. AHa-Bench: Benchmarking Audio Hallu- cinations in Large Audio-Language Models. In The Thirty-ninth Annual Conference on Neu- ral Information Pr ocessing Systems Datasets and Benchmarks T rack , 2025. URL https: //openreview.net/forum?id=vCej5sO61x . Y unfei Chu, Jin Xu, Xiaohuan Zhou, Qian Y ang, Shiliang Zhang, Zhijie Y an, Chang Zhou, and Jingren Zhou. Qwen-Audio: Advancing Audio-Language Models, 2023. URL https: //arxiv.org/abs/2311.07919 . Y unfei Chu, Jin Xu, Qian Y ang, Haojie W ei, Xipin W ei, Zhifang Guo, Y ichong Leng, Y uanjun Lv , Jinzheng He, Junyang Lin, Chang Zhou, and Jingren Zhou. Qwen2-Audio T echnical Report. arXiv preprint arXiv:2407.10759 , 2024. Gheorghe Comanici et al. Gemini 2.5: Pushing the Frontier with Advanced Reasoning, Multimodal- ity , Long Context, and Next Generation Agentic Capabilities. arXiv preprint , 2025. 11 Alexis Conneau, Min Ma, Simran Khanuja, Y u Zhang, V era Axelrod, Siddharth Dalmia, Jason Riesa, Clara Rivera, and Ankur Bapna. FLEURS: Few-shot Learning Evaluation of Univ ersal Representations of Speech. arXiv preprint , 2022. URL https://arxiv. org/abs/2205.12446 . Deepgram, 2025. URL https://deepgram.com/learn/ nova- 2- speech- to- text- api . Alexandre D ´ efossez, Laurent Mazar ´ e, Manu Orsini, Am ´ elie Royer , Patrick P ´ erez, Herv ´ e J ´ egou, Edouard Grave, and Neil Zeghidour . Moshi: a Speech-T ext Foundation Model for Real-Time Dialogue. arXiv preprint , 2024. Elev enLabs, 2025. URL https://elevenlabs.io/blog/meet- scribe . Rita Frieske and Bertram E. Shi. Hallucinations in Neural Automatic Speech Recognition: Iden- tifying Errors and Hallucinatory Models, 2024. URL 01572 . Sreyan Ghosh, Sonal Kumar , Ashish Seth, Chandra Kiran Reddy Evuru, Utkarsh T yagi, S Sakshi, Oriol Nieto, Ramani Duraiswami, and Dinesh Manocha. GAMA: A General Audio Model for Audio Understanding. arXiv pr eprint arXiv:2406.11768 , 2024. URL abs/2406.11768 . Google Deepmind, 2025. URL https://deepmind.google/models/gemini/pro/ . Google Research. Gemini liv e: Real-time multimodal con versational ai, 2025. T echnical report. Anmol Gulati, James Qin, Chung-Cheng Chiu, Niki Parmar , Y u Zhang, Jiahui Y u, W ei Han, Shibo W ang, Zhengdong Zhang, Y onghui W u, and Ruoming Pang. Conformer: Con volution-augmented T ransformer for Speech Recognition. In Proceedings of Interspeech , 2020. URL https:// arxiv.org/abs/2005.08100 . Reinhold Haeb-Umbach, Jahn Heymann, Lukas Drude, Shinji W atanabe, Marc Delcroix, and T o- mohiro Nakatani. Far -Field Automatic Speech Recognition. Pr oceedings of the IEEE , 2020. Franc ¸ ois Hernandez, V incent Nguyen, Sahar Ghannay , Natalia T omashenko, and Y annick Est ` eve. TED-LIUM 3: T wice as Much Data and Corpus Repartition for Experiments on Speaker Adapta- tion. In Pr oceedings of Interspeech , 2018. URL . Hangrui Hu, Xinfa Zhu, Ting He, Dake Guo, Bin Zhang, Xiong W ang, Zhifang Guo, Ziyue Jiang, Hongkun Hao, Zishan Guo, Xinyu Zhang, Pei Zhang, Baosong Y ang, Jin Xu, Jingren Zhou, and Junyang Lin. Qwen3-TTS T echnical Report. arXiv pr eprint arXiv:2601.15621 , 2026. URL https://arxiv.org/abs/2601.15621 . Rongjie Huang, Mingze Li, Dongchao Y ang, Jiatong Shi, Xuankai Chang, Zhenhui Y e, Y uning W u, Zhiqing Hong, Jia wei Huang, Jinglin Liu, Y i Ren, Zhou Zhao, and Shinji W atanabe. Audio- GPT : Understanding and Generating Speech, Music, Sound, and T alking Head. arXiv pr eprint arXiv:2304.12995 , 2023. URL . Dhruv Jain, Harshit Shukla, Gautam Rajee v , Ashish Kulkarni, Chandra Khatri, and Shubham Agarwal. V oiceagentbench: Are voice assistants ready for agentic tasks? arXiv preprint arXiv:2510.07978 , 2025. James Kennedy , Sev erin Lemaignan, Caroline Montassier, Pauline Lav alade, Bahar Irfan, Fo- tios Papadopoulos, Emmanuel Senft, and T ony Belpaeme. Children speech recording (english, spontaneous speech + pre-defined sentences). Human-Robot Interaction (HRI), 2016. URL https://doi.org/10.5281/zenodo.200495 . Allison K oenecke, Anna Seo Gyeong Choi, Katelyn X. Mei, Hilke Schellmann, and Mona Sloane. Careless Whisper: Speech-to-T ext Hallucination Harms. In Pr oceedings of the 2024 ACM Con- fer ence on F airness, Accountability , and T ranspar ency , 2024. 12 Alkis K oudounas, Moreno La Quatra, Manuel Giollo, Sabato Marco Siniscalchi, and Elena Baralis. Hallucination Benchmark for Speech Foundation Models. In Pr oceedings of Interspeech , 2025. URL . Under revie w . Chun-Y i. Kuan and Hung-yi Lee. Can Large Audio-Language Models Truly Hear? T ackling Hallu- cinations with Multi-T ask Assessment and Stepwise Audio Reasoning. In Pr oceedings of ICASSP , 2024. URL . W eiqin Li, Peiji Y ang, Y icheng Zhong, Y ixuan Zhou, Zhisheng W ang, Zhiyong W u, Xixin W u, and Helen Meng. Spontaneous style text-to-speech synthesis with controllable spontaneous behaviors based on language models. arXiv preprint , 2024. Xinjian Li, Shinnosuk e T akamichi, T akaaki Saeki, W illiam Chen, Sayaka Shiota, and Shinji W atan- abe. Y odas: Y outube-Oriented Dataset for Audio and Speech. In IEEE Automatic Speech Recog- nition and Understanding W orkshop (ASRU) , 2023. Huan Liao, Qink e Ni, Y uancheng W ang, Y iheng Lu, Haoyue Zhan, Pengyuan Xie, Qiang Zhang, and Zhizheng W u. Nvspeech: An integrated and scalable pipeline for human-like speech modeling with paralinguistic vocalizations. arXiv pr eprint arXiv:2508.04195 , 2025. Heyang Liu, Y uhao W ang, Ziyang Cheng, Ronghua W u, Qunshan Gu, Y anfeng W ang, and Y u W ang. V ocalBench: Benchmarking the V ocal Con versational Abilities for Speech Interaction Models. arXiv preprint arXiv:2505.15727 , 2025a. Hongcheng Liu, Y ixuan Hou, Heyang Liu, Y uhao W ang, Y anfeng W ang, and Y u W ang. V ocalBench- DF: A Benchmark for Evaluating Speech LLM Robustness to Disfluency . arXiv preprint arXiv:2510.15406 , 2025b. MagicData. ASR-KCSC: A K orean Con versational Speech Corpus, 2024. URL https:// magichub.com/datasets/korean- conversational- speech- corpus/ . MagicData. Japanese Duplex Con versation T raining Dataset, 2025. URL https://magichub. com/datasets/japanese- duplex- conversation- training- dataset/ . OpenAI, 2025a. URL https://openai.com/index/ introducing- our- next- generation- audio- models/ . OpenAI. GPT -Realtime: Low-Latenc y Speech-to-Speech Models, 2025b. T echnical report. V assil Panayotov , Guoguo Chen, Daniel Pove y , and Sanjeev Khudanpur . LibriSpeech: An ASR Corpus Based on Public Domain Audio Books. In Pr oceedings of ICASSP , 2015. URL https: //ieeexplore.ieee.org/document/7178964 . V ineel Pratap, Qiantong Xu, Anuroop Sriram, Gabriel Synnaev e, and Ronan Collobert. MLS: A Large-Scale Multilingual Dataset for Speech Research. In Pr oceedings of Interspeech , 2020. URL . V ineel Pratap, Andros Tjandra, Bo wen Shi, Paden T omasello, Arun Babu, Sayani Kundu, Ali Elkahky , Zhaoheng Ni, Apoorv Vyas, Maryam Fazel-Zarandi, Alexei Baevski, Y ossi Adi, Xi- aohui Zhang, W ei-Ning Hsu, Alexis Conneau, and Michael Auli. Scaling Speech T echnology to 1,000+ Languages. arXiv preprint , 2023. Alec Radford, Jong W ook Kim, T ao Xu, Greg Brockman, Christine McLeav ey , and Ilya Sutskev er . Robust Speech Recognition via Large-Scale W eak Supervision. arXiv pr eprint arXiv:2212.04356 , 2022. Rajarshi Roy , Jonathan Raiman, Sang-gil Lee, T eodor-Dumitru Ene, Robert Kirby , Sungwon Kim, Jaehyeon Kim, and Bryan Catanzaro. PersonaPlex: V oice and Role Control for Full Duple x Con- versational Speech Models. 2026. URL https://github.com/NVIDIA/personaplex . 13 Paul K. Rubenstein, Chulayuth Asawaroengchai, Duc Dung Nguyen, Ankur Bapna, Zal ´ an Bor - sos, F ´ elix de Chaumont Quitry, Peter Chen, Dalia El Badawy, W ei Han, Eugene Kharitono v , Hannah Muckenhirn, Dirk Padfield, James Qin, Danny Rozenberg, T ara Sainath, Johan Schalk- wyk, Matt Sharifi, Michelle T admor Ramanovich, Marco T agliasacchi, Alexandru Tudor , Mi- hajlo V elimirovi ´ c, Damien V incent, Jiahui Y u, Y ongqiang W ang, V icky Zayats, Neil Zeghi- dour , Y u Zhang, Zhishuai Zhang, Lukas Zilka, and Christian Frank. AudioP aLM: A Large Language Model That Can Speak and Listen. arXiv preprint , 2023. URL https://arxiv.org/abs/2306.12925 . S Sakshi, Utkarsh T yagi, Sonal Kumar , Ashish Seth, Ramaneswaran Selvakumar , Oriol Nieto, Ra- mani Duraiswami, Sre yan Ghosh, and Dinesh Manocha. MMA U: A Massiv e Multi-T ask Au- dio Understanding and Reasoning Benchmark. arXiv preprint , 2024. URL https://arxiv.org/abs/2410.19168 . Robin Scheibler , Eric Bezzam, and Iv an Dokmani ´ c. Pyroomacoustics: A Python Package for Audio Room Simulation and Array Processing Algorithms. In 2018 IEEE International Confer ence on Acoustics, Speech and Signal Pr ocessing (ICASSP) , pp. 351–355, 2018. doi: 10.1109/ICASSP . 2018.8461310. Muhammad A Shah, David Solans Noguero, Mikko A Heikkila, Bhiksha Raj, and Nicolas K ourtel- lis. Speech robust bench: A robustness benchmark for speech recognition. arXiv pr eprint arXiv:2403.07937 , 2024. Qundong Shi, Jie Zhou, Biyuan Lin, Junbo Cui, Guoyang Zeng, Y ixuan Zhou, Ziyang W ang, Xin Liu, Zhen Luo, Y udong W ang, and Zhiyuan Liu. UltraEval-Audio: A Unified Framew ork for Comprehensiv e Evaluation of Audio Foundation Models. arXiv preprint , 2026. Y emin Shi, Y u Shu, Siwei Dong, Guangyi Liu, Jaward Sesay , Jingwen Li, and Zhiting Hu. V oila: V oice-language foundation models for real-time autonomous interaction and voice role-play . arXiv preprint arXiv:2505.02707 , 2025. Changli T ang, W enyi Y u, Guangzhi Sun, Xianzhao Chen, T ian T an, W ei Li, Lu Lu, Zejun Ma, and Chao Zhang. SALMONN: T owards Generic Hearing Abilities for Large Language Models. In Pr oceedings of ICLR , 2024. URL . T omRoma. Child Speech dataset Whisper . https://huggingface.co/datasets/ TomRoma/Child_Speech_dataset_Whisper , 2024. Accessed: 2026-01-23. Bin W ang, Xunlong Zou, Geyu Lin, Shuo Sun, Zhuohan Liu, W enyu Zhang, Zhengyuan Liu, AiT i A w , and Nancy F . Chen. Audiobench: A universal benchmark for audio large language models. In Pr oceedings of the 2025 Conference of the Nations of the Americas Chapter of the Associa- tion for Computational Linguistics: Human Language T echnologies (NAA CL) , pp. 4297–4316. Association for Computational Linguistics, 2025a. He W ang, Linhan Ma, Dake Guo, Xiong W ang, Lei Xie, Jin Xu, and Junyang Lin. Contextasr- bench: A massi ve contextual speech recognition benchmark. arXiv preprint , 2025b. W enbin W ang, Y ang Song, and Sanjay Jha. Globe: A high-quality english corpus with global accents for zero-shot speaker adapti ve te xt-to-speech, 2024. Y ingzhi W ang, Anas Alhmoud, Saad Alsahly , Muhammad Alqurishi, and Mirco Rav anelli. Calm- Whisper: Reduce Whisper Hallucination On Non-Speech By Calming Crazy Heads Down. In Interspeech 2025 , pp. 3414–3418, 2025c. doi: 10.21437/Interspeech.2025- 201. Boyong W u, Chao Y an, Chen Hu, Cheng Y i, Chengli Feng, Fei Tian, Feiyu Shen, Gang Y u, Haoyang Zhang, Jingbei Li, Mingrui Chen, Peng Liu, W ang Y ou, Xiangyu T ony Zhang, Xingyuan Li, Xuerui Y ang, Y ayue Deng, Y echang Huang, Y uxin Li, Y uxin Zhang, Zhao Y ou, Brian Li, Changyi W an, Hanpeng Hu, Jiangjie Zhen, Siyu Chen, Song Y uan, Xuelin Zhang, Y imin Jiang, Y u Zhou, Y uxiang Y ang, Bingxin Li, Buyun Ma, Changhe Song, Dongqing Pang, Guoqiang Hu, Haiyang Sun, Kang An, Na W ang, Shuli Gao, W ei Ji, W en Li, W en Sun, Xuan W en, Y ong Ren, Y uankai Ma, Y ufan Lu, Bin W ang, Bo Li, Changxin Miao, Che Liu, Chen Xu, Dapeng Shi, Dingyuan Hu, 14 Donghang W u, Enle Liu, Guanzhe Huang, Gulin Y an, Han Zhang, Hao Nie, Haonan Jia, Hongyu Zhou, Jianjian Sun, Jiaoren W u, Jie W u, Jie Y ang, Jin Y ang, Junzhe Lin, Kaixiang Li, Lei Y ang, Liying Shi, Li Zhou, Longlong Gu, Ming Li, Mingliang Li, Mingxiao Li, Nan W u, Qi Han, Qinyuan T an, Shaoliang Pang, Shengjie Fan, Siqi Liu, T iancheng Cao, W anying Lu, W enqing He, W uxun Xie, Xu Zhao, Xueqi Li, Y anbo Y u, Y ang Y ang, Y i Liu, Y ifan Lu, Y ilei W ang, Y uanhao Ding, Y uanwei Liang, Y uanwei Lu, Y uchu Luo, Y uhe Y in, Y umeng Zhan, Y uxiang Zhang, Zidong Y ang, Zixin Zhang, Binxing Jiao, Daxin Jiang, Heung-Y eung Shum, Jiansheng Chen, Jing Li, Xiangyu Zhang, and Y ibo Zhu. Step-Audio 2 T echnical Report, 2025. URL https://arxiv.org/abs/2507.16632 . Peng Xie, Xingyuan Liu, Tsz W ai Chan, Y equan Bie, Y angqiu Song, Y ang W ang, Hao Chen, and Kani Chen. SwitchLingua: The First Lar ge-Scale Multilingual and Multi-Ethnic Code-Switching Dataset. arXiv preprint , 2025. W ayne Xiong, Jasha Droppo, Xuedong Huang, Frank Seide, Michael L. Seltzer , Andreas Stolcke, Dong Y u, and Geoffre y Zweig. T ow ard Human Parity in Con versational Speech Recognition. IEEE/A CM T ransactions on A udio, Speec h, and Languag e Processing , 2017. Pengyu Xu, Shijia Li, Ao Sun, Feng Zhang, Y ahan Li, Bo W u, Zhanyu Ma, Jiguo Li, Jun Xu, Jiuchong Gao, et al. V oiceagentev al: A dual-dimensional benchmark for expert-lev el intelligent voice-agent ev aluation of xbench’ s professional-aligned series. arXiv pr eprint arXiv:2510.21244 , 2025. Aohan Zeng, Zhengxiao Du, Mingdao Liu, K edong W ang, Shengmin Jiang, Lei Zhao, Y uxiao Dong, and Jie T ang. Glm-4-voice: T ow ards intelligent and human-like end-to-end spoken chatbot. arXiv pr eprint arXiv:2412.02612 , 2024. Jian Zhang, Linhao Zhang, Bokai Lei, Chuhan W u, W ei Jia, and Xiao Zhou. W ildSpeech-Bench: Benchmarking Audio LLMs in Natural Speech Con versation. arXiv pr eprint arXiv:2506.21875 , 2025. Jiawei Zhang, T ianyu P ang, Chao Du, Y i Ren, Bo Li, and Min Lin. Benchmarking lar ge multimodal models against common corruptions. arXiv pr eprint arXiv:2401.11943 , 2024. Y u Zhang, James Qin, Daniel S. Park, W ei Han, Chung-Cheng Chiu, Ruoming P ang, Quoc V . Le, and Y onghui W u. Pushing the Limits of Semi-Supervised Learning for Automatic Speech Recognition. arXiv preprint , 2020. Jiaming Zhou, Shiyao W ang, Shiwan Zhao, Jiabei He, Haoqin Sun, Hui W ang, Cheng Liu, Aobo K ong, Y ujie Guo, and Y ong Qin. ChildMandarin: A Comprehensive Mandarin Speech Dataset for Y oung Children Aged 3-5. arXiv pr eprint arXiv:2409.18584 , 2024. Zhitong Zhou, Qingqing Zhang, Lei Luo, Jiechen Liu, and Ruohua Zhou. Open-Source Full- Duplex Con versational Datasets for Natural and Interactive Speech Synthesis. arXiv preprint arXiv:2509.04093 , 2025. 15 A U N I FI E D I N F E R E N C E P RO T O C O L All systems are ev aluated under a unified inference protocol unless specified otherwise. W e ev aluate each model on all subsets listed in T able 1, and report performance independently for each factor . W e report corpus-le vel WER for English (EN) and CER for Chinese/Japanese/K orean (ZH/J A/K O). For the code-switching subset, we report Mix ed Error Rate (MER): each transcript is tokenized into a mixed sequence where Latin/English spans are word-tokenized (after normalization) and CJK scripts are character-tokenized, and we compute WER over the mixed token stream at the corpus lev el. Hyperparameter settings can be seen in T able5. Inference setting V alue T emperature 0.2 T op- p 0.9 Max new tok ens 2048 Language conditioning Prompt ( { language name } ) and/or SDK language code T able 5: Unified inference setting for ASR benchmarking. Audio inputs are resampled to 16kHz. The default instruction is: ‘Please transcribe the audio in { language name } . Do not add any additional text that is not in the speech content.’ B A C C E N T D I S T R I B U T I O N The accent distribution in W ildASR is visualized in Figure 5. For English (Figure 5 left), the dataset encompasses a div erse range of accents including Canadian (12.4%), Australian (12.1%), German (11.5%), etc. This distribution ensures comprehensiv e coverage of non-nati ve English accents. For Chinese (Figure 5 right), the dataset focuses on regional Mandarin varieties with representation from Zhongyuan (29.6%), Ji Lu (21.9%), Jiang Huai (20.3%), etc, capturing the phonological diversity across different Mandarin-speaking re gions while maintaining mutual intelligibility . 12.4% 12.1% 11.5% 9.8% 9.6% 9.5% 8.9% 8.2% 7.7% 4.7% 5.6% Canadian Australian Ger man NewZealand SouthAsia US F ilipino England Irish Scottish Other 29.6% 21.9% 20.3% 14.3% 11.6% 2.3% Zhongyuan Ji-L u Jiang-Huai Mandarin Jiao -Liao Other Figure 5: Accent Distribution in W ildASR. Left: English. Right: Chinese. C C U R A T I O N P I P E L I N E D E TA I L S W e describe each stage of the curation pipeline introduced in §3.1: DC (Data Collection). W e source raw audio from publicly available speech corpora with verified transcripts across our target languages. Source datasets for each subcategory are listed in T able 6. SF (Speaker Filtering). For demographic subsets, we verify speaker metadata (age, accent, nativ e language) against dataset annotations and discard samples with ambiguous or missing labels. For older adults, we filter to select samples where age-related acoustic degradation is the dominant feature, minimizing confounding factors such as dialect v ariation. 16 QF (Quality Filtering). W e discard unintelligible or corrupted recordings and remove samples with poor signal-to-noise ratios. F or children speech, we perform rigorous filtering to exclude low-SNR samples. For code-switching, we remo ve samples without substanti ve multilingual mixing. NR (A udio Normalization). All audio is resampled to 16 kHz mono with loudness normalization to ensure consistent input conditions across sources. AA (Acoustic A ugmentation). For the en vironmental degradation subset, we apply fiv e controlled, transcript-preserving perturbations (reverberation, far -field, phone codec, noise gap, clipping) at multiple calibrated sev erity levels, as detailed in §3.2. MT (Manual T runcation & T ranscript Alignment). For incomplete audio, we manually edit wa veforms to truncate speech mid-sentence or mid-w ord, and use the truncated transcript as the ground truth, as described in §3.4. MV (Manual V erification). W e manually validate transcript correctness across all subsets. For children and older adult speech, transcripts are manually revie wed for accuracy . D D A T A S O U R C E S T able 6 lists the source datasets used for each subcategory of W ildASR. T able 6: Source datasets f or each WildASR subcategory . Subcategory Sources En vironmental degradation FLEURS, MagicData Children Zenodo, Child Speech, ChildMandarin Older adults GLOBE V3, seniortalk Accent GLOBE V2, KeSpeech Short utterances YOD AS Incomplete audio Y OD AS Code-switching SwitchLingua E P RO M P T S E N S I T I V I T Y W e run prompt-sensitivity experiments in two languages (English and Chinese) using Gemini 2.5 Pro to measure how much the model’ s transcripts change when the instruction wording changes. W e ev aluate on demographic slices (Accent, Children, Older Adult). For fairness, we express the same transcription request using 10 paraphrased prompt variants in each language. For each audio sample, we test all 10 variants in the sample’ s original language. The English prompt variants are shown in T able 7. F Q U A L I T A T I V E FA I L U R E PA T T E R N S T able 8 shows representativ e errors made by dif ferent models on the English subset, across se veral dataset slices. W e highlight the incorrect parts of the model prediction in bold . W e observe sev eral recurring failure types: • Non-transcription outputs (e.g., producing tokens such as [noise] instead of words) • Full hallucinations (e.g., “ah yeah” → “I’m with breast”; “who identified” → “I don’t know if I can. ”) • A uto-Completion beyond the audio (e.g., continuing “so Putin took the” with inv ented content) • Refusals (e.g., responding with apologies or capability disclaimers instead of transcribing) • Phonetically similar substitutions (e.g. ‘searching” → “shouting”) 17 T able 7: English prompt variants used for prompt-sensitivity evaluation. All prompts share the same intent— transcribe the audio into { english } and output only the transcript —but dif fer in wording and style. ID English prompt variant p01 English transcription only . Return the spoken words as text—nothing else. p02 T ask: produce a verbatim english transcript of the audio. Output transcript text only; omit all other content. p03 Perform speech-to-text for this audio in english. Respond with plain transcript text only (no labels or commentary). p04 Please write the english transcript of what you hear . Reply with only the transcription. p05 Create the most f aithful english transcript possible. Output ONL Y the transcript (no summaries, notes, or formatting). p06 In english, transcribe the audio. Provide only the transcript. p07 Y ou are an ASR engine. Emit the english transcript as raw text only; do not add headers, punctuation notes, or extra lines. p08 Return exactly one thing: the english transcription of the audio. No preamble, no JSON, no quotes— just the transcript. p09 Write the spoken content as a english transcript. Do not include timestamps, speaker tags, explanations, or any non-transcript te xt. p10 Provide the english transcript of the audio. Y our entire response should be the transcript text and nothing more. Reporting these qualitative errors is important because it reveals failure modes that are not well captured by WER/CER. These errors can be semantically plausible yet not present in the audio, which can introduce significant risks for downstream systems that rely on accurate transcripts. T able 8: Representati ve English failure cases from W ildASR. Rows are grouped by OOD dimen- sion. W e highlight the erroneous or safety-relev ant portion in bold Subset Ground T ruth Model Prediction En vironmental degradation Noise gap “a car bomb detonated at police headquar- ters in gaziantep turkey yesterday morn- ing killed two police of ficers and injured more than twenty other people” “a car bomb detonated at police headquar- ters in geyve tepe turkey yesterday morn- ing killing two police officers and injuring more than a hundred other people” phone codec “the center of tibetan meditation is the deity yoga through the visualization of various deities the energy channels are cleaned the chakras are acti vated and the enlightenment consciousness is created” “ [noise] [sigh] [sigh] [sigh] [sigh] [sigh] [sigh] [sigh] . . . ” clipping “ah yeah. ” “ I’m with breast . ” Demographic shift child “he was searching for him e verywhere” “ they were shouting for him every- where” Linguistic diversity incomplete audio “who identified” “ I don’t know if I can. ” short utterance “Y eah” “ I’m sorry , b ut I cannot listen to audio files. I can only process and generate text . . . ” short utterance “so Putin took the” “So Putin took the measur e of the W est and he decided that he could handle whatever we thr ew at him . ” 18 G D E T A I L E D P E R - M O D E L R E S U LT S This appendix pro vides full per -model results for each subset and language, complementing the aggregated tables in the main te xt. T able 9: Nova 2 — Environmental degradation on FLEURS. ∆ : absolute change relative to original. Augmentation ZH EN J A K O Perturbation Method CER (%) ∆ WER (%) ∆ CER (%) ∆ CER (%) ∆ Original – 10.1 – 6.0 – 7.0 – 6.5 – Clipping – 42.6 +32.5 60.4 +54.4 32.0 +25.0 51.9 +45.4 Far -field – 25.6 +15.5 71.0 +65.0 19.5 +12.5 45.9 +39.4 Phone G.711 12.6 +2.5 6.6 +0.6 8.8 +1.8 6.6 +0.1 GSM 14.5 +4.4 7.9 +1.9 11.6 +4.6 7.7 +1.2 Noise gap – 16.8 +6.7 8.2 +2.2 11.9 +4.9 10.7 +4.2 Rev erberation – 23.3 +13.2 31.0 +24.9 17.1 +10.1 30.8 +24.3 T able 10: Gemini 2.5 Pro — En vironmental degradation on FLEURS. ∆ : absolute change relati ve to original. Augmentation ZH EN J A K O Perturbation Method CER (%) ∆ WER (%) ∆ CER (%) ∆ CER (%) ∆ Original – 6.7 – 3.6 – 2.7 – 4.0 – Clipping – 13.6 +6.9 7.9 +4.3 6.3 +3.6 7.4 +3.4 Far -field – 9.6 +2.9 6.5 +2.9 4.2 +1.5 4.5 +0.6 Phone G.711 7.0 +0.3 3.5 -0.1 3.2 +0.5 4.0 +0.0 GSM 7.9 +1.2 4.2 +0.6 5.4 +2.7 4.1 +0.1 Noise gap – 8.2 +1.5 4.2 +0.6 3.5 +0.8 4.4 +0.4 Rev erberation – 9.2 +2.5 4.7 +1.1 6.4 +3.7 5.2 +1.2 T able 11: Gemini 3 Pr o — En vironmental degradation on FLEURS. ∆ : absolute change relati ve to original. Augmentation ZH EN J A K O Perturbation Method CER (%) ∆ WER (%) ∆ CER (%) ∆ CER (%) ∆ Original – 6.1 – 2.8 – 2.7 – 3.8 – Clipping – 10.8 +4.8 5.7 +2.9 5.8 +3.1 5.4 +1.6 Far -field – 7.7 +1.6 5.1 +2.3 3.7 +1.1 4.4 +0.6 Phone G.711 6.2 +0.1 2.9 +0.1 2.8 +0.2 3.8 +0.0 GSM 6.8 +0.8 3.5 +0.6 4.2 +1.6 3.8 +0.1 Noise gap – 11.9 +5.9 3.1 +0.3 6.6 +4.0 4.2 +0.5 Rev erberation – 7.9 +1.9 4.1 +1.2 5.6 +2.9 4.9 +1.1 19 T able 12: GPT -4o T ranscribe — En vironmental de gradation on FLEURS. ∆ : absolute change relativ e to original. Augmentation ZH EN J A K O Perturbation Method CER (%) ∆ WER (%) ∆ CER (%) ∆ CER (%) ∆ Original – 6.4 – 2.8 – 3.0 – 4.0 – Clipping – 17.4 +11.0 8.8 +5.9 7.9 +4.9 10.2 +6.2 Far -field – 8.3 +1.9 7.5 +4.7 5.5 +2.5 5.1 +1.1 Phone G.711 6.7 +0.3 2.9 +0.0 3.7 +0.7 4.0 +0.1 GSM 7.3 +0.9 3.1 +0.3 5.8 +2.8 4.3 +0.3 Noise gap – 7.6 +1.2 4.5 +1.6 4.5 +1.5 4.9 +0.9 Rev erberation – 10.0 +3.6 5.1 +2.3 9.7 +6.7 6.3 +2.4 T able 13: Qwen2-A udio — Environmental degradation on FLEURS. ∆ : absolute change relativ e to original. Augmentation ZH EN J A KO Perturbation Method CER (%) ∆ WER (%) ∆ CER (%) ∆ CER (%) ∆ Original – 9.1 – 5.8 – 10.9 – 12.7 – Clipping – 11.6 +2.5 12.6 +6.8 19.6 +8.7 35.8 +23.1 Far -field – 10.1 +1.0 8.9 +4.1 40.2 +29.3 57.0 +44.3 Phone G.711 8.8 -0.3 48.3 +42.5 15.3 +4.4 30.9 +18.2 GSM 9.4 +0.3 8.8 +3.0 30.4 +29.5 23.3 +10.6 Noise gap – 16.6 +7.5 9.8 +4.0 20.7 +9.8 40.0 +27.3 Rev erberation – 11.1 +2.0 9.5 +3.7 46.2 +35.3 44.1 +21.4 T able 14: Scribe V1 — En vironmental degradation on FLEURS. ∆ : absolute change relative to original. Augmentation ZH EN J A KO Perturbation Method CER (%) ∆ WER (%) ∆ CER (%) ∆ CER (%) ∆ Original – 8.7 – 3.6 – 4.8 – 5.6 – Clipping – 15.7 +7.1 5.6 +2.0 15.9 +11.1 9.6 +4.1 Far -field – 14.0 +5.4 5.5 +1.9 14.5 +9.7 10.6 +5.1 Phone G.711 11.0 +2.4 4.2 +0.6 8.0 +3.2 6.4 +0.8 GSM 11.5 +2.8 4.3 +0.7 10.1 +5.3 6.9 +1.3 Noise gap – 20.3 +11.6 11.3 +7.7 14.8 +10.0 17.8 +12.3 Rev erberation – 16.5 +7.8 5.1 +1.5 14.7 +9.9 9.9 +4.4 T able 15: Whisper Lar ge V3 — En vironmental de gradation on FLEURS. ∆ : absolute change relativ e to original. Augmentation ZH EN J A K O Perturbation Method CER (%) ∆ WER (%) ∆ CER (%) ∆ CER (%) ∆ Original – 7.5 – 4.2 – 4.6 – 5.0 – Clipping – 13.5 +5.9 8.5 +4.3 7.5 +3.0 9.0 +4.0 Far -field – 10.8 +3.3 6.3 +2.0 6.6 +2.1 6.1 +1.1 Phone G.711 7.8 +0.3 4.8 +0.6 5.0 +0.5 5.9 +0.9 GSM 8.7 +1.1 4.9 +0.7 5.6 +1.1 5.2 +0.3 Noise gap – 10.8 +3.3 5.5 +1.3 8.3 +3.8 7.1 +2.2 Rev erberation – 13.1 +5.5 6.8 +2.6 9.0 +4.5 7.1 +2.2 20

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment