R-C2: Cycle-Consistent Reinforcement Learning Improves Multimodal Reasoning

Robust perception and reasoning require consistency across sensory modalities. Yet current multimodal models often violate this principle, yielding contradictory predictions for visual and textual representations of the same concept. Rather than mask…

Authors: Zirui Zhang, Haoyu Dong, Kexin Pei

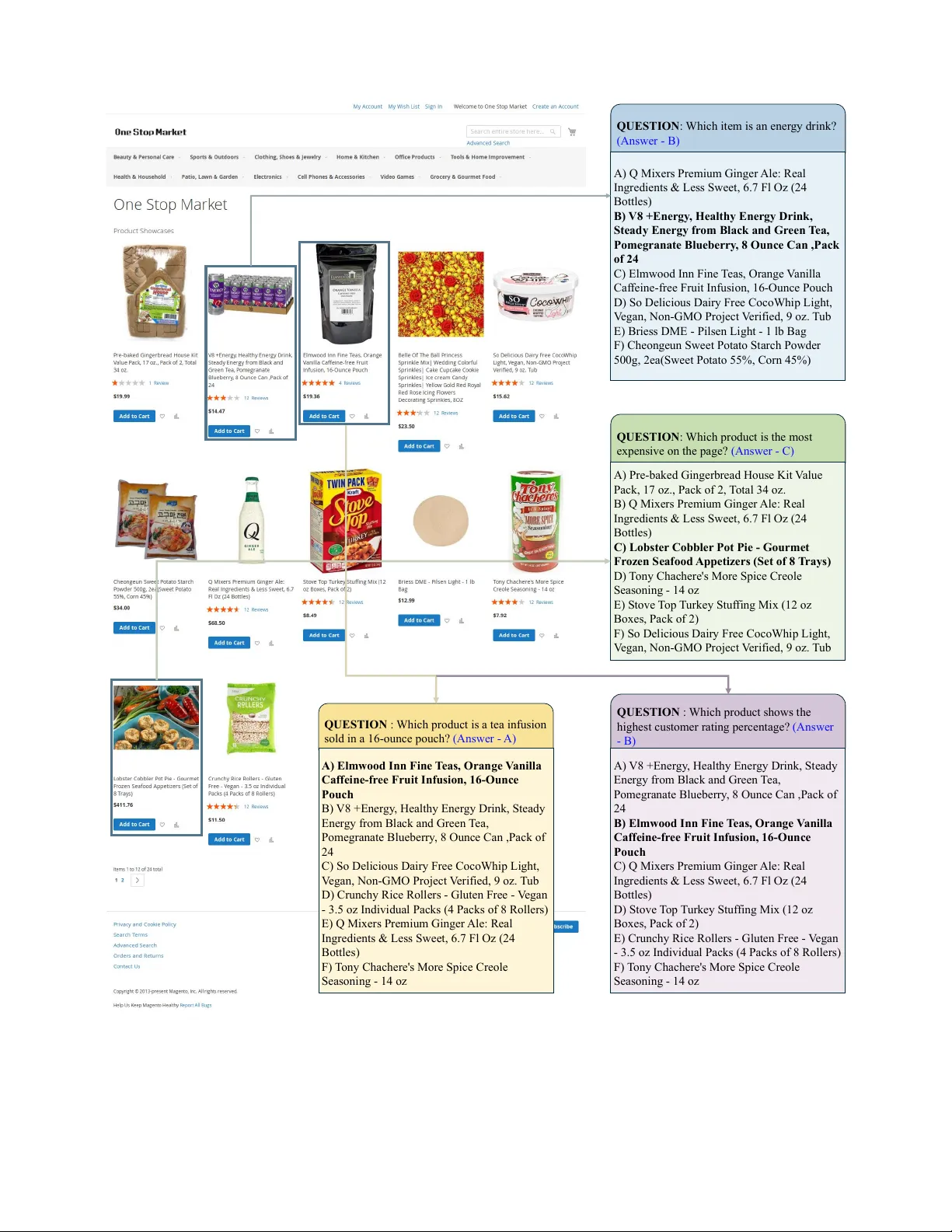

R - C 2 : Cycle-Consistent Reinf or cement Learning Impr ov es Multimodal Reasoning Zirui Zhang 1 Haoyu Dong 2 K exin Pei 3 Chengzhi Mao 1 1 Rutgers Uni versity 2 Columbia Uni versity 3 Uni versity of Chicago https://zirui00.github.io/RC2-Project-Page/ Abstract Robust per ception and r easoning r equir es consistency acr oss sensory modalities. Y et, current multimodal models often violate this principle, yielding contradictory pr edic- tions for visual versus textual repr esentations of the same concept. Rather than masking these failur es with standard voting mechanisms—which amplify systematic biases—we demonstrate that cr oss-modal inconsistency pr ovides a rich, natural signal for learning. W e intr oduce R - C 2 , a reinfor ce- ment learning framework that resolves internal conflicts by enfor cing cr oss-modal cycle consistency . By r equiring a model to perform backwar d infer ence, switches modalities, and reliably r econstruct the answer via forward infer ence, we establish a dense, label-fr ee r ewar d. This cyclic con- straint for ces the model to autonomously align its r epr esen- tations. Optimizing for this structur e mitigates modality- specific error s and impr oves reasoning accuracy by up to 7.6 points. Our r esults suggest that advanced r easoning emer ges not just fr om scaling data, but fr om enforcing a structurally consistent understanding of the world. 1. Introduction Multimodal Lar ge Language Models (MLLMs) suf fer from a fundamental modality g ap [ 57 ], often contradicting them- selves on visual versus te xt vie ws of the same input content. As shown in Figure 1 , we show that an MLLM can yield dif- ferent answers for an identical webpage when presented as a screenshot v ersus its raw HTML source. As MLLMs are widely deployed in domains such as multimodal document understanding [ 30 , 31 ], web UI na vigation [ 18 ], and agentic systems [ 32 , 48 , 51 ], this lack of robustness and consistency can be a critical failure. Most prior work attempts to improve reasoning through large-scale fine-tuning on meticulously curated datasets, which are expensi v e to construct and inherently limited in their ability to scale model performance [ 45 ]. Rein- forcement learning (RL) [ 33 , 34 ] of fers an alternati ve, b ut BHPC Blaze by Beverly Hills PoloClub, 6 oz Body spray for Men. 12 Reviews $14.37 Add to Cart Bath & Body Works TeakwoodMen's Deodorizing Body spray 3.7Oz. 1 Review $14.95 Add to Cart xxx $14.37 Add to Cart xxx $14.95 Add to Cart Home Elements F12 QUESTION : Which product is a body spray under $15 and shows 12 Reviews? MLLM ANSWER : Bath & Body Works Men’s Deodorizing Body Spray ANSWER : BHPC Blaze by Beverly Hills Polo Club Website Snapshot HTML Figure 1. Gap in Multimodal Reasoning. Multimodal large language models (MLLMs) frequently fail the test of modal- in variance. For example, they produce conflicting answers for the same webpage when presented as a screenshot versus its raw HTML source. W e introduce a cycle-consistency framew ork that directly tar gets this modality gap, lev eraging the inconsistency it- self as a signal to jointly improv e reasoning and alignment. hinges on reliable reward signals; unlike math [ 47 , 54 ] or code [ 24 , 37 ], complex multimodal answers are rarely v eri- fiable. W ithout annotated query-answer pairs, recent self- improv ement methods use majority voting [ 16 , 43 , 44 ]. Howe v er , these voting mechanisms suffer from inherent limitations. As illustrated in Figure 2 , the problem is com- pounded in multimodal settings: when visual and textual predictions disagree—an e xtremely common scenario—the consensus becomes unstable and arbitrary . This lea ves the underlying conflict unresolved and, in “majority-is-wrong” cases, actually amplifies the error . Our key insight is that this cross-modal inconsistency is not a failure but a powerful, untapped resource for self- rew ard learning. Instead of relying on flawed voting, we introduce cross-modal cycle consistency ( R - C 2 ), a frame- work that reframes this gap as a self-supervised re ward sig- nal. R - C 2 starts from a candidate answer . Then it performs backward inference to propose a query that would elicit that 1 !! ! ! Projection Screens !! !!!!!! QUESTIO N : According to the breadcrumbs, which speci fic subcategory page are you viewing? (Answer - C) A) Sports & Outdoors ! ! ! ! B) Office Products !!! C) Projection Screens ! ! ! D) T elevision & V ideo C C C D D D Rollout 1 Rollout 2 Rollout 3 IMAGE TEXT C D V ote QUESTIO N : Between the two 60-capsule items, which is cheaper? (Answer - B) A) Dcore Olena Natural DHT Blocker 60 Capsules for Hair Loss !!!!!!!! B) ALPHA BEARD Growth V itamins ... - 60 Capsules ! C) AICHUN BEAUTY Beard Oil ... 30ml A C B B A A Rollout 1 Rollout 2 Rollout 3 Random A IMAGE TEXT V ote Figure 2. Failure of multimodal voting. Left: Consistent Conflict — both text and image modalities produce self-consistent predictions ( mode-stable ) but disagree with each other, and only one modality aligns with the ground truth. Right: Unstable Recovery — within a single modality , some rollouts yield the correct answer , but the majority vote remains wrong, reflecting intra-modal instability . Using multimodal voting can amplify biases or lose correct signals. answer , then switches modalities to perform forward infer- ence and reconstruct the original answer , as shown in Fig- ure 3 . This cycle serves as a dense, label-free re ward that forces the model to resolve its own internal multimodal con- flicts, thus improving the reasoning capability . Extensiv e experiments, complemented by a div erse suite of case-study visualizations that rev eal modality con- flicts and how R - C 2 resolves them, demonstrate that R - C 2 significantly improves multimodal reasoning capabil- ities without human annotations. In 3B and 8B multi- modal LLMs, our method improves performance by up to 7.6 points on major multimodal benchmarks, includ- ing ScienceQA [ 26 ], ChartQA [ 29 ], InfoVQA [ 31 ], Math- V ista [ 27 ], A-OKVQA [ 35 ], and V isual W eb Arena [ 18 ]. Moreov er , our method greatly increases the consistenc y of cross-modal prediction. W e further study the conditions un- der which our cross-modal cycle consistency approach pro- vides the greatest benefit, offering insights into the nature of the modality gap in state-of-the-art models. 2. Related W ork Multimodal Large Language Models. V ision–language LLMs [ 23 , 25 , 39 , 50 ] extend Language Models (LMs) [ 1 – 3 , 40 ] with a vision encoder that maps images into the token (or embedding) space of the LM. In most systems, the vi- sion encoder is pretrained separately and then frozen [ 39 ], and the supervision av ailable for image–text is far sparser than for text-only corpora. These factors contribute to a modality gap : the same query can yield dif ferent answers depending on whether relev ant information is provided as text or embedded in an image. Recent studies begin to char- acterize this gap [ 38 , 52 ], but practical methods to close it remain limited. A prev ailing strategy is to synthesize additional image–text QA pairs by first captioning images and then prompting an LM to generate query–answer pairs from the captions [ 5 , 6 , 10 , 15 , 23 , 42 , 56 ]; howe ver , such pipelines often require nontri vial human curation and can propagate caption biases [ 11 ]. In contrast, we le verage inci- dental structure in naturally occurring multimodal data to improv e understanding and reasoning without relying on large v olumes of manually curated synthetic QA. Reward Modeling. Reinforcement learning from human feedback (RLHF) and its extensions hav e been central to aligning LLMs with human preferences [ 14 , 33 ]. For verifiable domains such as mathematics and code [ 19 ], outcome-based rew ards are sufficient because correctness can be objectiv ely measured. Beyond outcome-only super- vision [ 36 ], recent work explores rew arding intermediate reasoning steps [ 12 , 46 , 49 ], often pairing process super- vision with learned reward models that estimate the quality of partial solutions rather than final answers [ 21 , 22 , 28 , 47 ]. Although these methods are effecti ve in domains with ver- ifiable feedback, they remain brittle in multimodal reason- ing, where step-level ev aluation is ambiguous, and learned rew ard models can inherit modality-specific biases. This leads to r ewar d misspecification —overfitting to superficial textual or visual cues rather than genuine semantic correct- ness. Label-Free Reinfor cement Learning and Self-Ev olution. T o mitigate reliance on human or synthetic labels, recent trends pursue label-fr ee self-improvement paradigms, in- cluding label-free RL [ 16 ], self-play [ 41 ], self-instruct [ 45 ], and self-training or self-refinement [ 13 , 17 , 58 ]. These methods iteratively generate and refine their own data us- ing internal feedback, sometimes leveraging consistenc y or confidence as rew ard signals [ 22 , 55 , 59 ]. More adv anced framew orks extend this idea to weak-to-strong learning [ 4 , 20 ] and self-critique mechanisms [ 7 , 8 , 53 ], where models bootstrap improvement from self-generated critics. How- ev er , many systems still equate consensus with correctness, optimizing for agreement through majority voting [ 9 ]. This 2 Candidate Answer ( a orig ) Query from T ext V iew Query from Image View T ext Observe ( x T ) Image Observe ( x I ) Reconstructed Answer ( a tt ) Reconstructed Answer ( a ti ) Reconstructed Answer ( a it ) Reconstructed Answer ( a ii ) Multimodal LLM Multimodal LLM T ext Observe ( x T ) Image Observe ( x I ) T ext Observe ( x T ) Image Observe ( x I ) ( q T ) ( q I ) Figure 3. Overview of multimodal cycle consistency . Starting from a potential answer candidate a orig , the model performs backwar d infer ence to reconstruct two latent queries, ˆ q T from the text view x T and ˆ q I from the image view x I . Each reconstructed query is then used for forward infer ence across both modalities, resulting in four reconstructed answers { a tt , a ti , a it , a ii } generated via the paths T → T , T → I , I → T , and I → I . Cycle consistency is measured by whether the reconstructed answers remain consistent with the original a orig , forming a full 4-way cross-modal reasoning cycle. risks amplifying systematic biases, a phenomenon often de- scribed as a “majority-is-wrong” failure. In multimodal LLMs, this issue is exacerbated by cr oss-modality incon- sistency [ 57 ], where visual and textual predictions diver ge ev en when grounded in the same underlying content. Our method av oids voting through a c ycle consistency . 3. Method Our goal is to improv e the multimodal reasoning capabili- ties of MLLM. W e frame this as a reinforcement learning (RL) problem, where the key challenge is to acquire a re- ward signal without human labels. W e first revie w self- rew arding methods based on consensus voting, sho wing how they fail in single-modal settings and how this failure is compounded in multimodal contexts. W e then introduce our solution, R - C 2 , a self-supervised rew ard framework that replaces flawed consensus with cross-modal cycle consis- tency . 3.1. The F ailure of Consensus-Based Rewards W e define our MLLM as a model F θ that produces an an- swer ˆ a given a multimodal input x and a text query q : ˆ a = F θ ( x , q ) . The input x can be visual-only x I (e.g., a screenshot), text-only x T (e.g., HTML), or an interleaved mix x M . T o improv e MLLM’ s reasoning capability , we follow the standard practice and optimize the model with reinforce- ment learning, a GRPO objectiv e [ 36 ]. At each step, F θ generates an answer for the query; a rew ard model assigns a scalar score, and we update θ to increase the lik elihood of the sampled answer when the reward is high and decrease it when the rew ard is low . This aligns F θ tow ards responses that consistently earn a higher reward. Formally , we will maximize this objectiv e: L GRPO = E h log π θ ( ˆ a i | x i , q i ) · ˆ A ( ˆ a i , a i ) i where A (ˆ a ) is the advantage, which is calculated via ˆ A ( ˆ a i , a i ) = r i − mean( r ) std( r ) , (1) where the advantage is calculated on the basis of its rela- tiv e normalized v alue in the batch. This paradigm, howe ver , hinges on a reliable reward function r i = R ( ˆ a i , a i ) that re- quires a ground-truth answer a or an external verifier , both of which can be expensi ve to scale for many multimodal tasks. Single-Modal V oting and “Majority-is-Wrong”. T o re- mov e the need for labels, recent “self-improv ement” meth- ods like R-zero [ 16 ] generate their own re wards without la- beled query-answer pairs. For a gi ven te xt query , the model generates k candidate answers { a j } k j =1 . Then it applies ma- jority voting to select the most plausible answer a ′ , which serves as a pseudo-label. a ′ = mo de( { a j } k j =1 ) . It calculates the reward as r i = R ( ˆ a i , a ′ ) . The model is then trained to increase the probability of producing this majority-voted answer . This approach suf fers from a fundamental “majority-is- wrong” failure. If the model has a systematic bias, the ma- jority of its answers will be incorrect. V oting will select an incorrect pseudo-label, and the RL objective will simply re- inforce the model’ s own mistakes, leading to a collapse in performance. Compounded Failur e: Multimodal V oting. T o extend voting to multimodal reasoning, we aggregate predictions from both the image and text modalities. For each query , the model answers k I times from image vie ws and k T times 3 …HOW TO CHOOSE \n THE RIGHT RACQUET CONTROL \n Who needs a control frame?... TWEENER \n Who needs a tweener frame?... POWER \n Who needs a power frame?... Candidate Answer: ['control, tweener , power'] ! —> T ext Query: What are the thr ee types of tennis racquet frames? ! —> Image Query: What are the three types of frames? InfoVQA InfoVQA …Number of ! most gold medals in one Olympics \n (BEIJING, 2008) Michael Phelps \n Swimmer , USA… Candidate Answer: ['michael phelps'] ! —> T ext Query: Who won the most gold medals in Beijing Olympics? ! —> Image Query: Who won the most medals at 2008 Summer Olympics? …candy on a tray .", "Desserts are on white paper liners on a silver platter .", "There is a piece of fluted paper , similar to… Candidate Answer: ['wrapping'] ! —> T ext Query: What tool does the pastry chef use to place the almonds on top of the chocolates? ! —> Image Query: What is the object being used to wrap the gift? ! A-OKVQA DocVQA This document appears to be an article or report from ! ITC Limited , focusing on their brands ! and their impact on the nation. … Candidate Answer: [‘ITC Limited’] ! —> T ext Query: Which company is the document discussing? ! —> Image Query: What is the name of the company? ChartQA ! …U.S. favorability in Russia\":… \"2011\": 57, \n \"2013\": 52, \"2015\": 23 \n } \n },\n \"Russia favorability in U.S.\":... Candidate Answer: ['Y es'] ! —> T ext Query: Did US favorability in Russia decrease fr om 2013 to 2015? ! —> Image Query: Did US favorability toward Russia in 2015 fall below 25%? ScienceQA U.S. map… 3 states highlighted: Arizona, Louisiana, & W est V irginia … \"W est Virginia\", \n \"count\": 1 … n \”color\”: \"lightgreen\" Candidate Answer: [‘West V irginia’] ! —> T ext Query: Which state is labeled with a green arr ow pointing to its northeastern border in the image? ! —> Image Query: Which U.S. state is the location of the highest elevation point shown in the map? ScienceQA \"Sample A and Sample B\"... \"Mass of each particle: 28 u\", \"A verage particle speed: 1300m/s\" \"Mass of each particle: 44 u\" \"A verage particle speed: 1300m/s\". .. Candidate Answer: [’B’] ! —> T ext Query: Which sample has particles with a greater mass, given that both samples have the same average particle speed? ! —> Image Query: Which sample showed a higher concentration of compound X after 24 hours of incubation? VWA …ALPHA BEARD Gr owth Vitamins | Beard ! and Hair Growth Supplement for Men (60 Capsules) … =“price”>$21.95

\n !!!!!!!!!  !!!!!!!!! Gaerit 16:9 Projection Scr een, Foldable HD Movies Screen !!!!!!!!

!!!!!!!!! Gaerit 16:9 Projection Scr een, Foldable HD Movies Screen !!!!!!!!

!!!!! ! $60.29

\n !!!!!!!!!! \n !!!!!!!!!  ! !!!!!!! 16FT Inflatable Projector Movie TV Screen for Backyard !!!!!!!!

! !!!!!!! 16FT Inflatable Projector Movie TV Screen for Backyard !!!!!!!!

!! $179.99

\n !!!!!!!!!

Comments & Academic Discussion

Loading comments...

Leave a Comment