Out of Sight but Not Out of Mind: Hybrid Memory for Dynamic Video World Models

Video world models have shown immense potential in simulating the physical world, yet existing memory mechanisms primarily treat environments as static canvases. When dynamic subjects hide out of sight and later re-emerge, current methods often strug…

Authors: Kaijin Chen, Dingkang Liang, Xin Zhou

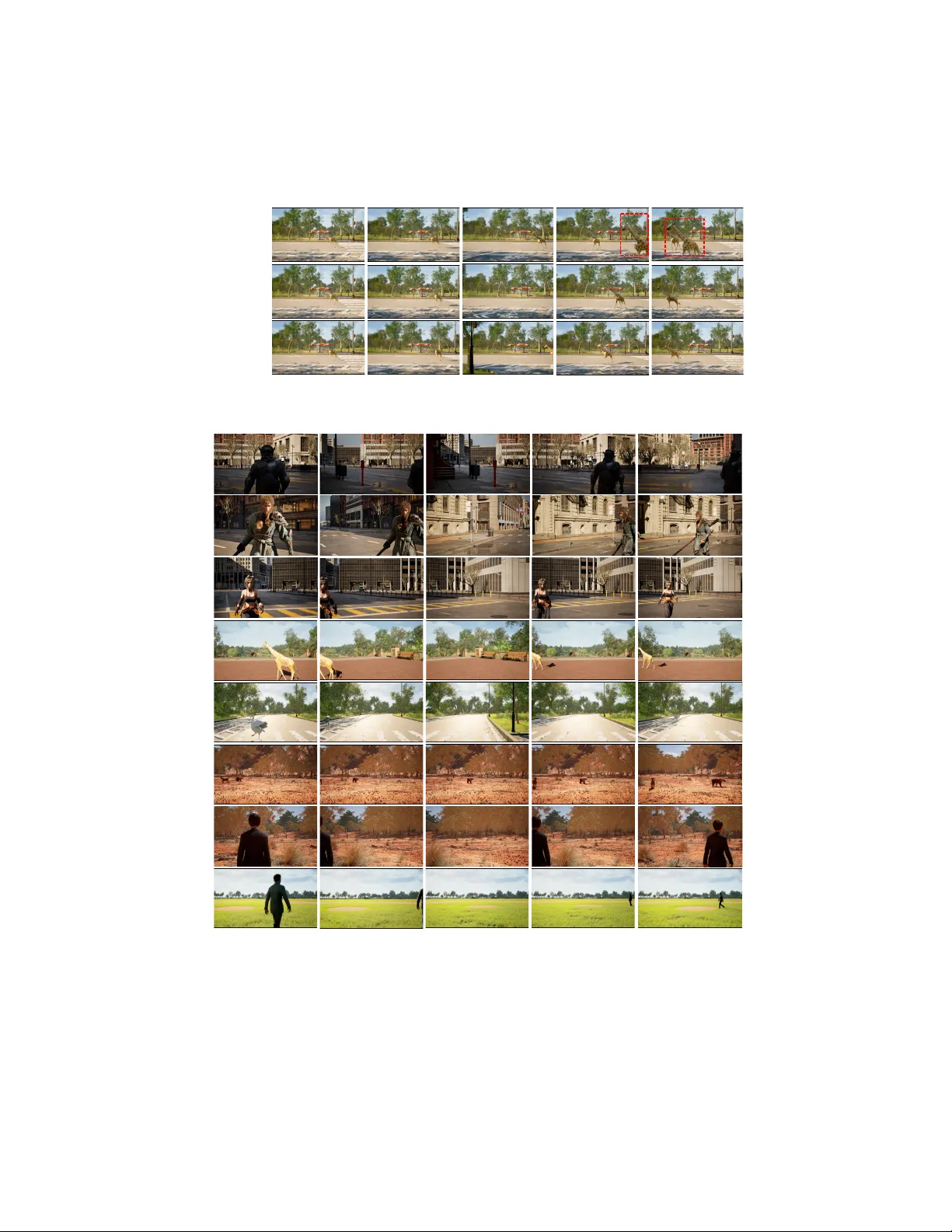

Kaijin Chen¹, Dingkang Liang¹, Xin Zhou¹, Yikang Ding², Xiaoqiang Liu², Pengfei Wan², and Xiang Bai¹

¹ Huazhong University of Science and Technology
² Kling Team, Kuaishou Technology

{kjchen, dkliang}@hust.edu.cn

Project Page: Hybrid-Memory-in-Video-World-Models

Work done during an internship at Kling Team, Kuaishou Technology.

Fig. 1: Hybrid Memory demands the model to maintain static consistency in backgrounds, while simultaneously preserving the motion and appearance consistency of dynamic subjects during out-of-view intervals.

Abstract. Video world models have shown immense potential in simulating the physical world, yet existing memory mechanisms primarily treat environments as static canvases. When dynamic subjects hide out of sight and later re-emerge, current methods often struggle, leading to frozen, distorted, or vanishing subjects. To address this, we introduce Hybrid Memory, a novel paradigm requiring models to simultaneously act as precise archivists for static backgrounds and vigilant trackers for dynamic subjects, ensuring motion continuity during out-of-view intervals. To facilitate research in this direction, we construct HM-World, the first large-scale video dataset dedicated to hybrid memory. It features 59K high-fidelity clips with decoupled camera and subject trajectories, encompassing 17 diverse scenes, 49 distinct subjects, and meticulously designed exit-entry events to rigorously evaluate hybrid coherence.
Furthermore, we propose HyDRA, a specialized memory architecture that compresses memory into tokens and utilizes a spatiotemporal relevance-driven retrieval mechanism. By selectively attending to relevant motion cues, HyDRA effectively preserves the identity and motion of hidden subjects. Extensive experiments on HM-World demonstrate that our method significantly outperforms state-of-the-art approaches in both dynamic subject consistency and overall generation quality.

Keywords: World Models · Spatiotemporal Consistency · Memory

1 Introduction

World Models [1–4] have recently garnered significant research attention for their ability to generate high-fidelity environments that align with the real world. These models have demonstrated immense potential across diverse downstream domains, including autonomous driving [5, 6] and embodied intelligence [7, 8]. The latest advancements in video generation [9–11] further validate the feasibility of modeling the physical world. Crucially, memory mechanisms have emerged as a critical frontier in advancing world models, as memory capacity dictates the spatial and temporal consistency of generated content. Specifically, it is the cognitive anchor that allows the model to retain historical context during viewpoint shifts or long-term extrapolation. Without robust memory, a simulated world quickly unravels into disconnected, chaotic frames.

While recent studies [15–18, 28] have enhanced memory capacity through advanced retrieval [15–17] and compression [28] techniques, they share a common blind spot: treating the world as a static canvas. They excel at memorizing and reconstructing motionless environments, but the physical world is a bustling, dynamic stage populated by subjects (e.g., walking pedestrians, running animals) governed by their independent motion logic.
When dynamic subjects hide outside the camera's field of view, these models lose track of them, often rendering the returning subjects as frozen statues or distorted phantoms, or simply letting them vanish into thin air. To bridge this gap, we introduce a novel memory paradigm: Hybrid Memory, which requires the model to simultaneously perform precise memorization and viewpoint reconstruction of static backgrounds, while continuously seeking and predicting the motion of dynamic subjects. As illustrated in Fig. 1, when a subject hides out of view, the model must not only remember its appearance but also mentally predict its unseen trajectory, ensuring both visual coherence and motion consistency when it re-enters the frame.

To investigate and validate this new hybrid memory paradigm, constructing a specialized dataset and designing corresponding memory mechanisms are imperative. In this work, we introduce HM-World, the first large-scale video dataset purpose-built to train and evaluate Hybrid Memory capabilities. HM-World possesses two core properties: 1) meticulously designed shots with dynamic subjects exiting and entering the frame, and 2) highly diverse scenarios, subjects, and motion patterns. Comprising 59K video clips, the dataset deliberately decouples camera trajectories from subject movements, creating countless natural instances where subjects slip into the unseen margins before re-emerging. Furthermore, HM-World exhibits exceptional diversity, encompassing 17 distinctively styled scenes, 49 different subjects (including humans of various appearances and multiple animal species), 10 motion paths for subjects, and 28 types of camera trajectories.

Based on the proposed dataset HM-World, we evaluate existing methods and observe that they tend to either immobilize moving objects or distort dynamic content, lacking the hybrid memory capacity to track unseen motion. To equip models with this capacity, we propose HyDRA (Hybrid Dynamic Retrieval Attention), a memory approach designed to seek the hidden subjects and preserve dynamic consistency. HyDRA employs a Memory Tokenizer that compresses memory latents into tokens with richer information. When a subject is poised to re-enter the frame, HyDRA utilizes a spatiotemporal relevance-driven retrieval mechanism to actively scan these tokens, pulling the most crucial motion and appearance cues into the current denoising process. This allows the model to effectively rediscover the hidden subject, seamlessly picking up its trajectory where it left off. Extensive experiments on HM-World demonstrate that HyDRA significantly outperforms state-of-the-art approaches in preserving dynamic subject consistency and overall generation quality. Ablation studies further verify the robustness of our design. We hope our dataset and method can offer a fresh perspective for the community.

Our main contributions can be summarized as follows:
1) We identify the limitations of existing static-centric memory mechanisms and propose Hybrid Memory, a novel paradigm that requires models to simultaneously maintain spatial consistency for static backgrounds and motion continuity for dynamic subjects, especially during out-of-view intervals.
2) We introduce HM-World, the first large-scale video dataset dedicated to hybrid memory research. Featuring 59K clips with diverse scenes, subjects, and motion patterns, it provides a rigorous benchmark for evaluating spatiotemporal coherence in complex, dynamic environments.
3) We propose HyDRA, a specialized memory architecture that utilizes a spatiotemporal relevance-driven retrieval mechanism with memory tokens. By attending to relevant motion cues, HyDRA effectively seeks and rediscovers hidden subjects and preserves their identity and motion, significantly outperforming existing state-of-the-art methods.

2 Related Works

2.1 Video World Models

Recent advances in video generation models [9–11, 42, 43] have demonstrated their potential in modeling the real world and synthesizing high-fidelity clips, increasingly serving as the foundation for world models. Building on this progress, multiple video world models have been introduced [2, 3, 14, 26, 27, 44, 47]. GameGen-X [26] explores interactive video world models within game-like environments. Yume [3] further increases the length of generated videos through autoregressive generation. Matrix-Game 2 [2] constructs a large-scale dataset based on GTA-V and Unreal Engine 5 [19] and incorporates autoregressive denoising [43] to achieve controllability and visual quality comparable to video games. RELIC [27] focuses on static scene consistency and distills long-video generation with replayed back-propagation, enabling stable, long-duration generation. WorldPlay [14] leverages large-scale, high-quality data and a context-forcing technique to deliver both exceptional visual quality and consistency while supporting real-time generation.

Despite significant progress, video world models continue to confront several challenges, with generation consistency being a prominent one. Current models still struggle to maintain both static and dynamic consistency across generated sequences.
This issue is particularly pronounced during long-duration generation and under camera motion, where models frequently lose track of previously generated content or contextual input, leading to inconsistent outputs. Our work aims to tackle this challenge from the perspective of hybrid memory, enabling spatiotemporally consistent generation.

2.2 Memory in Video Generation

Existing memory approaches primarily focus on processing the context and optimizing the interaction and propagation of contextual information during the generation process. VMem [16] employs a 3D surfel-indexed memory structure to retrieve context, while Context-as-Memory [15] adopts Field-of-View (FOV) overlap. WorldMem [17] combines FOV-based retrieval for an external memory bank with Diffusion Forcing [29] on Minecraft data. Memory Forcing [18] further incorporates temporal memory to balance exploration and consistency. Similarly, WorldPlay [14] enhances long-term generation consistency through a context-forcing approach. Inspired by FramePack [30], MemoryPack [28] introduces an updatable semantic pack throughout the generation process, retaining semantically relevant memory. In parallel, RELIC [27] applies uniform spatial down-sampling to compress context memory.

Existing studies have achieved notable results. However, most of these methods are designed for static scenes [15, 16, 27] or relatively simple dynamic environments [17, 18, 28], and have not been specifically optimized for complex dynamic scenes involving moving subjects and dynamic elements. Although Genie 3 [50] demonstrates remarkable dynamic consistency, it is a closed-source model with technical details remaining undisclosed. This research gap persists in both dataset construction and method design.
To address this, our work focuses on hybrid memory in complex dynamic scenes, tackling the challenge from both methodological and dataset perspectives.

3 HM-World: Dataset

To address the research gap in hybrid memory, we conduct an in-depth analysis of its definition and inherent challenges for current video world models in Sec. 3.1. Building upon this analysis, we introduce HM-World, a large-scale dataset constructed for Hybrid Memory in Video World Models, and detail its characteristics in Sec. 3.2.

Fig. 2: Instances of exit-entry camera motion.

3.1 Hybrid Memory

Memory refers to the model's ability to retain information from inputs or generated content, ensuring consistency throughout the generation process. Static memory ensures the consistency of immobile elements (e.g., buildings, roads), and is typically evaluated by assessing whether a scene looks identical when the camera returns to a previous pose [15]. Hybrid memory, however, demands a far more sophisticated cognitive leap. It requires the model to simultaneously anchor the static background while tracking the dynamic subjects (e.g., pedestrians, running dogs). As illustrated in Fig. 2, when a subject exits and re-enters the frame, hybrid memory dictates that it must not only retain its original visual identity but also reappear at a plausible location with a consistent motion state.

Achieving hybrid memory is challenging for several reasons: 1) Need for spatiotemporal decoupling. Unlike static memory, which merely maps camera poses to a fixed 3D space, hybrid memory forces the model to independently untangle the camera's ego-motion from the subject's independent trajectory. 2) Out-of-view extrapolation.
Once a subject steps off-stage, the model loses direct visual evidence and must implicitly simulate the subject's movement in the latent space. 3) Feature entanglement. In standard diffusion latents, static background features and subject features are heavily coupled. Retrieving historical context without isolating the dynamic cues often causes the subjects to freeze into the background or distort unnaturally.

To conquer these complex dynamics and bridge the research gap, a dedicated testing ground is essential. As natural videos with perfectly captured, unoccluded exit-and-re-entry events are remarkably scarce, we constructed HM-World, a dataset explicitly tailored for hybrid memory.

Fig. 3: Construction Procedure of HM-World. We combine (a) 3D scenes, (b) subjects, (c) subject trajectories, and (d) camera trajectories to render data containing dynamics in Unreal Engine 5.

3.2 Dataset Characteristics

Since videos with exit-entry events are rarely found on the Internet, we construct the dataset by implementing a data rendering pipeline within Unreal Engine 5 [19]. As depicted in Fig. 3, our data generation process is structured along four dimensions: scenes, subjects, subject trajectories, and camera trajectories. We first collect 17 stylistically diverse scenes to serve as the environmental background. Then, 49 distinct subjects, encompassing people of varied appearances and animals of multiple species, are combined into groups of 1 to 3. Each combination is procedurally placed within a scene. Furthermore, each subject is associated with its own motion animation and follows a randomly selected trajectory from a set of 10 predefined paths.

To guarantee a rich density of exit-entry events, we meticulously designed the camera motions.
Moving beyond simple unidirectional tracking, our camera trajectories incorporate deliberate back-and-forth camera motions, as illustrated in Fig. 2, to actively induce hide-and-reappear dynamics. For instance, a leftward pan followed by a rightward pan typically causes a captured subject to leave and re-enter the frame. Following this principle, we designed 28 distinct camera trajectories. Additionally, each camera movement is assigned multiple initial positions, further enhancing the diversity of camera motion sequences.

After procedurally combining elements from all four dimensions and filtering out clips that lack exit-entry events, we obtain a final collection of 59,225 high-fidelity video clips. Every sample is comprehensively annotated with the rendered video, a descriptive caption generated by MiniCPM-V [40], corresponding camera poses, per-frame positions of all subjects, and precise timestamps marking each subject's exit from and entry into the frame.

Table 1: The comparison between existing datasets and the HM-World dataset. "Dynamic Subject" means including moving subjects, "Subject Exit-Enter" refers to containing exit-entry events in clips, and "Subject Pose" denotes including annotated 3D poses of subjects.

| Dataset | Reference | Dynamic Subject | Subject Exit-Enter | Subject Pose | Camera Movable | Total Num. |
|---|---|---|---|---|---|---|
| WorldScore [35] | ICCV 25 | ✓ | ✗ | ✗ | ✓ | 3K |
| Context-As-Memory [15] | SIGGRAPH Asia 25 | ✗ | ✗ | ✗ | ✓ | 10K |
| Multi-Cam Video [22] | ICCV 25 | ✓ | ✗ | ✗ | ✓ | 136K |
| 360°-Motion [33] | ICLR 25 | ✓ | ✗ | ✓ | ✗ | 5.4K |
| HM-World (ours) | - | ✓ | ✓ | ✓ | ✓ | 59K |

Tab. 1 highlights the comparison between HM-World and existing datasets. Specifically, the Context-as-Memory dataset only contains static scenes. WorldScore includes numerous real-world scenes with certain dynamic elements, but its scale is limited to only 3K. Multi-Cam Video features dynamic subjects, but they only perform actions in place.
360°-Motion contains moving subjects, but the camera remains static, and the subjects are always within the field of view. In contrast, our HM-World not only features rich, dynamic subjects and complex camera trajectories, but also includes specific in-and-out-of-frame events for hybrid memory.

4 Hybrid Dynamic Retrieval Attention

Given a sequence of context frames $X_{ctx} \in \mathbb{R}^{N \times C \times H \times W}$ and a full camera trajectory $P = \{P_{ctx}, P_{tgt}\}$ spanning both historical and future timestamps, our goal is to predict the target frames $X_{tgt} \in \mathbb{R}^{M \times C \times H \times W}$. Unlike static scene generation, the context frames $X_{ctx}$ feature dynamic subjects governed by their independent motion. As the camera viewpoint shifts according to $P_{tgt}$ (e.g., panning or rotation), these subjects frequently hide and re-enter the camera's field of view. To synthesize high-fidelity future frames $X_{tgt}$, the model must preserve the static background while seeking the moving subjects to maintain their appearance and motion consistency. To achieve this, we introduce HyDRA (Hybrid Dynamic Retrieval Attention), a memory method designed to decouple and preserve consistency of dynamic subjects.

4.1 Base Architecture and Camera Injection

Overall Architecture. As depicted in Fig. 4, our approach is built upon a full-sequence video diffusion model, comprising a causal 3D VAE [31] and a Diffusion Transformer (DiT) [12]. Each DiT block integrates dynamic retrieval attention, a projector, cross-attention, and a feedforward network (FFN). The diffusion timestep is encoded via a Multi-Layer Perceptron (MLP) to modulate the DiT blocks. The model follows Flow Matching [32]. Given a sequence of video frames $x$, the 3D VAE encodes it into a video latent $z_0 \in \mathbb{R}^{C \times f \times h \times w}$, compressing both temporal and spatial dimensions.
During the training phase, the noised latent $z_t$ at timestep $t$ is obtained through linear interpolation between $z_0$ and Gaussian noise $z_1 \sim \mathcal{N}(0, I)$. The model $u$ learns to predict the ground-truth velocity $v_t = z_0 - z_1$ at timestep $t \in [0, 1]$, with the loss function defined as:

$$\mathcal{L}_{\theta} = \mathbb{E}_{z_0, z_1, t} \left\lVert u(z_t, t; \theta) - v_t \right\rVert^2, \tag{1}$$

where $\theta$ represents the model parameters. During the inference phase, randomly sampled Gaussian noise is progressively denoised to yield a clean latent, which is then decoded by the 3D VAE Decoder to reconstruct the video sequence.

Fig. 4: Model architecture.

Camera Injection. To enable precise spatial control of generated content, we inject camera trajectories into the model as an explicit condition. Suppose the camera pose sequence of length $f$ is denoted as $P = \{(R_i, t_i)\}_{i=1}^{f}$, where $R_i \in \mathbb{R}^{3 \times 3}$ and $t_i \in \mathbb{R}^{3}$ represent the rotation matrix and the translation vector for the $i$-th frame, respectively. We flatten and concatenate these parameters to form a unified camera condition $c_{cam} \in \mathbb{R}^{f \times 12}$. Following ReCamMaster [22], we employ a camera encoder $E_{cam}(\cdot)$, implemented as an MLP layer, to encode $c_{cam}$. The encoded camera features are then broadcast spatially and added element-wise to the latent features.
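
The camera conditioning described above can be illustrated with a small NumPy sketch. It is not the authors' implementation: the two-layer MLP, the ReLU activation, the hidden width, and the channels-last layout `(f, h, w, C)` are all assumptions chosen for illustration. Only the flattening of each $(R_i, t_i)$ into 12 values and the broadcast-and-add step come from the text.

```python
import numpy as np

def camera_condition(rotations, translations):
    """Flatten per-frame (R_i, t_i) into c_cam of shape (f, 12)."""
    f = rotations.shape[0]
    return np.concatenate(
        [rotations.reshape(f, 9), translations.reshape(f, 3)], axis=1
    )

def camera_encoder(c_cam, w1, b1, w2, b2):
    """Hypothetical two-layer MLP standing in for E_cam: (f, 12) -> (f, C)."""
    hidden = np.maximum(c_cam @ w1 + b1, 0.0)  # ReLU is an assumption
    return hidden @ w2 + b2

def inject_camera(h_in, cam_feat):
    """Broadcast (f, C) camera features over (h, w) and add them element-wise."""
    return h_in + cam_feat[:, None, None, :]

rng = np.random.default_rng(0)
f, h, w, C, hidden_dim = 4, 2, 3, 8, 16        # toy sizes, not from the paper
rotations = np.tile(np.eye(3), (f, 1, 1))      # identity rotations, (f, 3, 3)
translations = rng.normal(size=(f, 3))         # per-frame translations, (f, 3)
c_cam = camera_condition(rotations, translations)

w1, b1 = rng.normal(size=(12, hidden_dim)), np.zeros(hidden_dim)
w2, b2 = rng.normal(size=(hidden_dim, C)), np.zeros(C)
h_in = rng.normal(size=(f, h, w, C))           # latent features, channels last
h_out = inject_camera(h_in, camera_encoder(c_cam, w1, b1, w2, b2))
print(c_cam.shape, h_out.shape)
```

Because the camera feature is indexed only by frame, every spatial position in a frame receives the same offset, which is what "broadcast spatially" amounts to in this sketch.
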
Formally, let $H_{in}$ be the sequence features fed into the DiT blocks. The camera-injected feature $H_{out}$ is formulated as:

$$H_{out} = H_{in} + E_{cam}(c_{cam}), \tag{2}$$

where $E_{cam}(c_{cam})$ is projected to match the exact channel dimension of $H_{in}$.

4.2 Memory Tokenization for Retrieval

In our framework, the encoded memory latents, denoted as $Z_{mem}$, serve as the primary representation of memory. A naive approach to memory utilization would involve injecting the entire $Z_{mem}$ into the generation process. However, this not only incurs computational overhead but also floods the model with irrelevant noise. Such noise can easily mislead the model's reasoning pathways, ultimately resulting in spatially and temporally inconsistent generation. Therefore, a retrieval mechanism is essential to filter the memory and accurately recall the hidden subject outside the current frame.

Nevertheless, performing retrieval directly on the latent representation could be sub-optimal. Under our proposed hybrid memory paradigm, the task involves highly dynamic subjects and complex spatial relationships driven by camera movements. Direct retrieval from raw, uncoupled latents can lack the expressiveness needed to fully capture the underlying motion of dynamic subjects and the associated camera transformations, potentially undermining spatiotemporal consistency in the generated content.

Fig. 5: Overview of HyDRA. (a) Memory Tokenization Module. (b) Dynamic retrieval attention computes relevance between the target query and memory tokens to retrieve the top-k relevant tokens, enabling the model to recall associated hybrid memory.

To overcome this limitation, we introduce a 3D-convolution-based memory tokenizer, designed to process both spatial and temporal dimensions simultaneously. We argue that facilitating spatiotemporal interaction on the latents yields memory tokens with much deeper, motion-aware representations. This enriched representation is crucial for optimizing the retrieval process and ensuring consistent generation, which is validated by our extensive empirical experiments.

Specifically, the Memory Tokenizer $T_{mem}$ processes the latents $Z_{mem}$ into compact memory tokens $M$. By employing 3D convolutions, the tokenizer expands the spatiotemporal receptive field to capture long-duration motion information. Formally, this transformation is defined as:

$$M = T_{mem}(Z_{mem}), \quad M \in \mathbb{R}^{C' \times f'_{mem} \times h \times w}, \tag{3}$$

where $f'_{mem}$ represents the temporal dimension, and $h \times w$ denotes the downsampled spatial resolution. By compressing the raw latents into dense, spatiotemporally aware memory tokens $M$, the model effectively filters out irrelevant context while preserving the essential motion and appearance cues. These refined tokens $M$ then serve as the foundation for our dynamic retrieval attention module, which will be detailed in the following section.

4.3 Dynamic Retrieval Attention

As discussed in Sec. 4.2, indiscriminately injecting all historical context degrades video consistency and inflates computational cost. To tackle this, a retrieval mechanism is imperative for optimizing the information flow.
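
Before turning to the retrieval step, the tokenization of Sec. 4.2 can be made concrete with a minimal NumPy sketch of a single 3D convolution. It assumes the stride equals the kernel size, so the convolution reduces to a linear map over non-overlapping spatiotemporal blocks. The source states only that the tokenizer is 3D-convolution-based, so the stride, the single-layer design, and the toy sizes below are assumptions rather than the authors' configuration.

```python
import numpy as np

def memory_tokenizer(z_mem, weight, bias):
    """Non-overlapping 3D convolution (stride == kernel, an assumption).

    z_mem:  (C, f, H, W) memory latents
    weight: (C_out, C, kt, kh, kw) convolution kernel
    bias:   (C_out,)
    returns (C_out, f // kt, H // kh, W // kw) memory tokens
    """
    c_in, f, H, W = z_mem.shape
    c_out, _, kt, kh, kw = weight.shape
    assert f % kt == 0 and H % kh == 0 and W % kw == 0
    # Split every axis into (block index, offset inside the block).
    patches = z_mem.reshape(c_in, f // kt, kt, H // kh, kh, W // kw, kw)
    # Contract input channels and the three in-block offsets with the kernel.
    tokens = np.einsum("ctahbwd,ocabd->othw", patches, weight)
    return tokens + bias[:, None, None, None]

rng = np.random.default_rng(0)
z_mem = rng.normal(size=(3, 4, 8, 8))          # toy latents: C=3, f=4, 8x8
weight = rng.normal(size=(5, 3, 2, 4, 4))      # C'=5 with a 2x4x4 kernel
bias = np.zeros(5)
tokens = memory_tokenizer(z_mem, weight, bias)
print(tokens.shape)                            # (5, 2, 2, 2)
```

Each output token mixes information from several latent frames and a spatial neighborhood, which is the sense in which the tokens are spatiotemporally aware. With a temporal kernel extent of 1, the same code would treat every latent frame independently.
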
Building upon the principles of attention [37], we propose Dynamic Retrieval Attention, a spatiotemporally informed retrieval method and memory mechanism that directly replaces the standard 3D self-attention layers within the base model.

Given the denoising target latents $Z_{tgt} \in \mathbb{R}^{C' \times f_{tgt} \times H' \times W'}$ and the memory tokens $M \in \mathbb{R}^{C' \times f'_{mem} \times h \times w}$, we first project them into their respective Query, Key, and Value. Concretely, the target latents are projected into queries $Q$, while the memory tokens are projected into keys $K_{mem}$ and values $V_{mem}$.

To perform dynamic retrieval, we process the query set $q_i$ corresponding to each target latent $i \in \{1, \ldots, f_{tgt}\}$ sequentially. Because $q_i$ and $K_{mem}$ operate at different spatial resolutions, we first apply spatial pooling to downsample $q_i$ into $\tilde{q}_i \in \mathbb{R}^{C' \times h \times w}$, aligning it with the memory tokens. We then compute a spatiotemporal affinity metric between the downsampled query $\tilde{q}_i$ and each temporal slice of the memory key $k_{mem,j}$ (where $j \in \{1, \ldots, f'_{mem}\}$). Since they share the same spatial resolution and channel dimension, the affinity $S_{i,j}$ is calculated by summing the channel-wise inner products over all spatial positions:

$$S_{i,j} = \frac{1}{\sqrt{d}} \sum_{y=1}^{h} \sum_{x=1}^{w} \left\langle \tilde{q}_i(x, y),\, k_{mem,j}(x, y) \right\rangle, \tag{4}$$

where $\langle \cdot, \cdot \rangle$ denotes the channel-wise inner product, and $d$ is the channel dimension for scaling.

The affinity metric effectively quantifies the spatiotemporal correspondence between the current target latent and each memory token. Based on these affinities, we employ a Top-K selection strategy to filter the memory tokens, isolating the subset of memory that exhibits the strongest correlation with $q_i$:

$$I_i = \mathrm{TopK}(S_i, K), \quad K_{sel} = \{k_{mem,j} \mid j \in I_i\}, \quad V_{sel} = \{v_{mem,j} \mid j \in I_i\}, \tag{5}$$

where $I_i$ represents the indices of the $K$ most relevant memory tokens.
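
The affinity computation of Eq. (4) and the Top-K selection of Eq. (5) can be sketched for a single target latent as follows. This is a minimal NumPy illustration under stated assumptions: the text says only "spatial pooling", so average pooling is an assumption, and the function names and toy tensor sizes are hypothetical.

```python
import numpy as np

def spatial_pool(q, h, w):
    """Average-pool a (C, H, W) query to (C, h, w); H and W must be multiples of h and w."""
    C, H, W = q.shape
    return q.reshape(C, h, H // h, w, W // w).mean(axis=(2, 4))

def affinity(q_pooled, k_mem):
    """Eq. (4): sum of channel-wise inner products over space, scaled by 1/sqrt(d).

    q_pooled: (C, h, w); k_mem: (C, f_mem, h, w); returns (f_mem,).
    """
    d = q_pooled.shape[0]
    return np.einsum("chw,cjhw->j", q_pooled, k_mem) / np.sqrt(d)

def top_k_indices(scores, k):
    """Eq. (5): indices of the k largest affinities, highest first."""
    return np.argsort(-scores, kind="stable")[:k]

rng = np.random.default_rng(0)
q_i = rng.normal(size=(4, 8, 8))               # one target query, C'=4, 8x8
q_tilde = spatial_pool(q_i, 2, 2)              # aligned with 2x2 memory tokens

k_mem = np.zeros((4, 5, 2, 2))                 # five temporal memory slices
v_mem = rng.normal(size=(4, 5, 2, 2))
k_mem[:, 3] = q_tilde                          # slice 3 matches the query exactly
k_mem[:, 1] = 0.5 * q_tilde                    # slice 1 matches it partially

scores = affinity(q_tilde, k_mem)
selected = top_k_indices(scores, 2)
k_sel, v_sel = k_mem[:, selected], v_mem[:, selected]
print(selected, k_sel.shape)
```

In this toy setup the two slices constructed to correlate with the query are the ones retrieved, and the remaining slices never reach the attention computation. Note that the selection operates on whole temporal slices rather than on individual spatial positions, following the indexing over $j$ in Eq. (4).
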

While retrieving historical memory is crucial for long-term consistency, maintaining local denoising stability is equally important. To preserve the structural integrity of the original self-attention, we always include the queries' own local temporal window in the attention computation. Let $K_{loc}$ and $V_{loc}$ denote the keys and values derived from the adjacent latents within a local window $W_i$ centered around frame $i$ in the target sequence. We first flatten these local features and the retrieved memory features, then concatenate them to form the final keys $K'_i = [K_{sel}, K_{loc}]$ and values $V'_i = [V_{sel}, V_{loc}]$. Finally, after flattening the query $q_i$, the dynamic retrieval attention for the $i$-th latent is computed using the standard attention formulation:

$$\mathrm{Attention}(q_i, K'_i, V'_i) = \mathrm{Softmax}\!\left(\frac{q_i (K'_i)^{T}}{\sqrt{d}}\right) V'_i. \tag{6}$$

By iterating this process across all queries in the denoising sequence, the model selectively attends to the most pertinent motion and appearance cues of the out-of-sight subjects. Extensive experiments validate that this method successfully tracks hidden subjects, preserves spatiotemporal consistency, and substantially decreases the computational burden.

Table 2: Quantitative comparison with other methods.

| Method | Reference | PSNR | SSIM | LPIPS | DSC_ctx | DSC_GT | Subj. Cons. | Bg. Cons. |
|---|---|---|---|---|---|---|---|---|
| Baseline | - | 18.696 | 0.517 | 0.356 | 0.812 | 0.837 | 0.903 | 0.925 |
| DFoT [20] | ICML 25 | 17.693 | 0.482 | 0.410 | 0.803 | 0.826 | 0.893 | 0.913 |
| Context-as-Memory [15] | SIGGRAPH Asia 25 | 18.921 | 0.530 | 0.342 | 0.816 | 0.839 | 0.911 | 0.922 |
| HyDRA (ours) | - | 20.357 | 0.606 | 0.289 | 0.827 | 0.849 | 0.926 | 0.932 |

5 Experiments

5.1 Experiment Setup

Implementation Details. We build our method on Wan2.1-T2V-1.3B [9]. The model encodes 77 context frames and temporally downsamples them by a factor of 4 via a 3D VAE.
For our proposed modules, the memory tokenizer employs a 3D convolution with a kernel size of 2 × 4 × 4. In the Dynamic Retrieval Attention, the retrieval token length is set to 10, and the local window for the denoising latent is 5. We train our model on the proposed HM-World dataset for 10K iterations using 32 GPUs, with a total batch size of 32.

Evaluation Protocol. To evaluate our method, we construct a test set comprising 1000 video samples randomly selected from the HM-World dataset, including scenes and subjects that are unseen during training to assess generalization. Our evaluation metrics span three categories: 1) General Memory Capacity. PSNR, SSIM, and LPIPS analyze pixel-wise differences across frames to measure overall reconstruction fidelity. 2) Frame-level Consistency. We adopt Subject Consistency and Background Consistency from VBench [38] to measure frame-level coherence. 3) Dynamic Subject Consistency (DSC). To isolate and evaluate the motion and appearance consistency of moving subjects, especially in re-entering events, we propose a new metric, DSC (Dynamic Subject Consistency). Specifically, we utilize bounding boxes of moving subjects, which are obtained via YOLOv11 [41], to crop the subject regions from the predicted video, the GT video, and the context video. We then extract semantic features from these cropped regions using a pretrained CLIP [39] model. After spatial alignment and temporal normalization, we calculate the feature similarities to yield two scores, $\mathrm{DSC}_{ctx}$ and $\mathrm{DSC}_{GT}$, formulated as:

$$\mathrm{DSC}_{GT} = \mathrm{sim}(F_{pred}, F_{gt}), \quad \mathrm{DSC}_{ctx} = \mathrm{sim}(F_{pred}, F_{ctx}), \tag{7}$$

where $\mathrm{sim}(\cdot, \cdot)$ refers to the spatially averaged cosine similarity across the feature channels, and $F_{pred}$, $F_{gt}$, and $F_{ctx}$ denote subject features from the predicted video, GT video, and context video, respectively.
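
The similarity in Eq. (7) can be sketched as below. This is a minimal NumPy illustration, assuming the subject features have already been extracted (by YOLOv11 cropping and a CLIP encoder in the paper) and aligned to a common shape. The detection, feature extraction, spatial alignment, and temporal normalization steps are not reproduced, and the function names and toy feature arrays are hypothetical.

```python
import numpy as np

def averaged_cosine_similarity(feat_a, feat_b, eps=1e-8):
    """Cosine similarity over the last (channel) axis, averaged over all other axes."""
    dot = np.sum(feat_a * feat_b, axis=-1)
    norms = np.linalg.norm(feat_a, axis=-1) * np.linalg.norm(feat_b, axis=-1)
    return float(np.mean(dot / (norms + eps)))

def dsc_scores(f_pred, f_gt, f_ctx):
    """Eq. (7): compare predicted subject features with GT and context features."""
    return {
        "DSC_GT": averaged_cosine_similarity(f_pred, f_gt),
        "DSC_ctx": averaged_cosine_similarity(f_pred, f_ctx),
    }

rng = np.random.default_rng(0)
f_gt = rng.normal(size=(6, 16))                     # placeholder features: 6 positions, 16 channels
f_ctx = f_gt + 0.5 * rng.normal(size=(6, 16))       # context features, loosely related
f_pred = f_gt + 0.1 * rng.normal(size=(6, 16))      # prediction close to the ground truth
print(dsc_scores(f_pred, f_gt, f_ctx))
```

Both scores lie in $[-1, 1]$, and a prediction whose subject features match the reference exactly scores 1.
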
$\mathrm{DSC}_{GT}$ evaluates motion and appearance fidelity against the ground truth, while $\mathrm{DSC}_{ctx}$ evaluates against historical context.

5.2 Main Results

In this section, we evaluate the performance of our proposed method against a baseline and state-of-the-art approaches, including DFoT [20] and Context-as-Memory [15]. The baseline is built upon a Wan2.1-T2V-1.3B model equipped with a camera encoder, which directly concatenates the context latents and the noisy latents as the input of the DiT. For fair comparisons, these models are trained on our dataset, strictly adhering to the same training configurations used for our approach. Furthermore, we include a zero-shot evaluation of WorldPlay [14], a cutting-edge commercial model known for its exceptional consistency. The comparison results are summarized in Tab. 2, Tab. 3 and Fig. 6.

Table 3: Quantitative comparison against the state-of-the-art commercial model.

| Method | PSNR | SSIM | LPIPS | DSC_ctx | DSC_GT | Subject Consistency | Background Consistency |
|---|---|---|---|---|---|---|---|
| WorldPlay [14] | 14.855 | 0.355 | 0.500 | 0.822 | 0.832 | 0.910 | 0.925 |
| HyDRA (ours) | 20.357 | 0.606 | 0.289 | 0.827 | 0.849 | 0.926 | 0.932 |

Quantitative Comparison. As shown in Tab. 2, HyDRA consistently outperforms competing approaches across all evaluation metrics. Compared to the baseline, our model achieves significant improvements, lifting PSNR from 18.696 to 20.357 and SSIM from 0.517 to 0.606. This demonstrates that HyDRA achieves superior reconstruction accuracy for future frames. Crucially, our method attains the highest $\mathrm{DSC}_{ctx}$ and $\mathrm{DSC}_{GT}$ scores of 0.827 and 0.849, respectively, proving its robust capability to track subjects and maintain their appearance and motion consistency, both in aligning with historical context and predicting future states.
The Subject Consistency of 0.926 and Background Consistency of 0.932 further corroborate that it successfully anchors the static stage while preserving overall visual coherence. While DFoT relies on a neighbor context window, yielding a PSNR of 17.693, and Context-as-Memory utilizes FOV-based context filtering, yielding 18.921, our method surpasses them both, likely because we leverage retrieval over richer token representations and fuse spatiotemporal relationships via dynamic retrieval attention. Tab. 3 presents the comparison with the zero-shot performance of WorldPlay. Our method surpasses WorldPlay across all metrics, with a notable PSNR gap of 5.502. Although WorldPlay exhibits lower performance on GT-referenced metrics (e.g., PSNR of 14.855, $\mathrm{DSC}_{\mathrm{GT}}$ of 0.832) due to the domain distribution gap and lack of specific finetuning, it demonstrates remarkable robustness on context-referenced metrics by achieving a $\mathrm{DSC}_{\mathrm{ctx}}$ of 0.822. This observation not only confirms that extensively trained models possess fair hybrid consistency but also indirectly validates the rationality of our proposed DSC metrics in reflecting dynamic subject consistency. Ultimately, these results highlight the capabilities of our model, demonstrating its superiority even over established commercial models.

Qualitative Comparison. We present a qualitative comparison in Fig. 6. In the case of complex exit-and-entry events, the baseline and Context-as-Memory exhibit severe subject distortion and motion incoherence. DFoT fails to maintain subject integrity, leading to complete vanishing. While WorldPlay manages to preserve the subject's appearance consistency, it suffers from stuttering movements and unnatural actions. In contrast, our method successfully maintains hybrid consistency, preserving both the subject's identity and motion coherence after the subject re-enters the frame.
Due to space limitations, more generation results are provided in the supplementary materials.

Fig. 6: Qualitative comparison with other methods. The green boxes in the figure represent consistently generated subjects, while the red boxes stand for failure cases. [Panel labels: Context Frame, GT, Baseline, DFoT, Context-as-Memory, WorldPlay, HyDRA (ours); Frames 10, 20, 40, 60; annotations: Exit then Re-enter, Incoherent Motion.]

5.3 Ablation Study

In this section, we conduct comprehensive ablation studies to validate the effectiveness of the core components in our method.

Kernel Size of Memory Tokenizer. We first evaluate the impact of different kernel sizes in the memory tokenizer, with the results summarized in Tab. 4. The kernel size is denoted as T × H × W, representing the temporal, height, and width dimensions, respectively. The results indicate that our model exhibits strong robustness to variations in the spatial dimensions. The performance differences among the spatial settings are marginal, as transitioning from the optimal 4 × 4 configuration to 2 × 2 or 8 × 8 results in a minor PSNR decrease of only 0.244 and 0.127, respectively. In contrast, when the temporal dimension is reduced to 1, we observe a significant performance drop of 1.281 in PSNR, 0.008 in $\mathrm{DSC}_{\mathrm{GT}}$, and 0.014 in Subject Consistency, which demonstrates the necessity of temporal interaction within the tokenizer for capturing long-term dynamic information.

Table 4: Kernel Size of Memory Tokenizer.

| T | H × W | PSNR | SSIM | LPIPS | $\mathrm{DSC}_{\mathrm{ctx}}$ | $\mathrm{DSC}_{\mathrm{GT}}$ | Subject Consistency | Background Consistency |
|---|---|---|---|---|---|---|---|---|
| 2 | 2 × 2 | 20.113 | 0.599 | 0.299 | 0.820 | 0.843 | 0.919 | 0.929 |
| 2 | 4 × 4 | 20.357 | 0.606 | 0.289 | 0.827 | 0.849 | 0.926 | 0.932 |
| 2 | 8 × 8 | 20.230 | 0.610 | 0.292 | 0.822 | 0.843 | 0.923 | 0.927 |
| 1 | 4 × 4 | 19.076 | 0.554 | 0.337 | 0.819 | 0.841 | 0.912 | 0.925 |

Number of Retrieved Tokens. We investigate the effect of the retrieved memory token length in Tab. 5.
Retrieving only 5 tokens yields suboptimal performance with a PSNR of 19.309, indicating that an overly restricted token count leads to severe information loss. Conversely, increasing the number to 10 and 15 generates better and more stable results, with negligible differences between the two. This suggests that a moderate number of tokens is sufficient to provide the necessary spatiotemporal information without introducing redundant noise.

Table 5: Number of retrieved tokens.

| Setting | PSNR | SSIM | LPIPS | $\mathrm{DSC}_{\mathrm{ctx}}$ | $\mathrm{DSC}_{\mathrm{GT}}$ | Subject Consistency | Background Consistency |
|---|---|---|---|---|---|---|---|
| 5 | 19.309 | 0.566 | 0.339 | 0.817 | 0.836 | 0.913 | 0.927 |
| 10 | 20.357 | 0.606 | 0.289 | 0.827 | 0.849 | 0.926 | 0.932 |
| 15 | 20.333 | 0.612 | 0.291 | 0.828 | 0.842 | 0.925 | 0.935 |

Token Retrieval Approaches. We ablate the token retrieval mechanism by comparing our dynamic affinity retrieval with FOV overlap retrieval in Tab. 6. Since a single memory token in our architecture aggregates information from multiple frames with varying camera poses, we average the camera poses of the source frames to represent the token's pose. We then follow Context-as-Memory [15] to calculate the FOV overlap between the token and the target frame to perform retrieval. Experimental results demonstrate that our method outperforms the FOV-based approach across all metrics, notably improving Subject Consistency from 0.908 to 0.926. This superiority stems from leveraging QK interactions to assess fine-grained spatiotemporal relevance, whereas the FOV-based approach relies solely on static geometry overlap.

Table 6: Approaches to retrieve tokens.

| Method | PSNR | SSIM | LPIPS | $\mathrm{DSC}_{\mathrm{ctx}}$ | $\mathrm{DSC}_{\mathrm{GT}}$ | Subject Consistency | Background Consistency |
|---|---|---|---|---|---|---|---|
| FOV Overlap | 19.776 | 0.586 | 0.300 | 0.820 | 0.844 | 0.908 | 0.930 |
| Dynamic Affinity | 20.357 | 0.606 | 0.289 | 0.827 | 0.849 | 0.926 | 0.932 |
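To make the idea of affinity-based retrieval concrete, the following is a rough sketch of a top-k selection driven by query-key similarity. The text only states that QK interactions are used to assess relevance; the scaled dot product, the mean pooling over queries, the top-k rule, and the name `retrieve_memory_tokens` are all assumptions made for illustration, not the authors' implementation.

```python
import numpy as np

def retrieve_memory_tokens(queries, memory_keys, k=10):
    """Select the k memory tokens with the highest affinity to the queries.

    queries:     (Nq, D) query vectors from the latent being denoised.
    memory_keys: (Nm, D) key vectors, one per memory token.
    Returns (indices, scores): sorted indices of the selected tokens and the
    per-token affinity scores of shape (Nm,).
    """
    if k < 1:
        raise ValueError("k must be at least 1")
    dim = queries.shape[-1]
    # Scaled dot-product affinity between every query and every memory key.
    affinity = queries @ memory_keys.T / np.sqrt(dim)  # (Nq, Nm)
    # Assumption: pool over queries by averaging to get one score per token.
    scores = affinity.mean(axis=0)                     # (Nm,)
    k = min(k, memory_keys.shape[0])
    top_k = np.argpartition(-scores, k - 1)[:k]        # unordered top-k
    return np.sort(top_k), scores

# Toy example: three memory keys are aligned with the queries, the rest are
# near-zero noise, so the aligned ones should be retrieved.
rng = np.random.default_rng(0)
direction = np.zeros(8)
direction[0] = 1.0
queries = np.tile(direction, (4, 1))
memory_keys = 0.01 * rng.normal(size=(50, 8))
memory_keys[[3, 17, 42]] = 5.0 * direction
selected, scores = retrieve_memory_tokens(queries, memory_keys, k=3)
```

Because the scores depend on the current queries, the selected set can change whenever the queries change, unlike a selection computed once from camera geometry.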
6 Conclusion

In this paper, we introduce the novel paradigm of Hybrid Memory, challenging models to simultaneously maintain static background consistency and dynamic subject coherence, particularly during complex exit-and-re-entry events. To systematically facilitate research in this field, we construct HM-World, the first large-scale video dataset dedicated to hybrid memory, featuring highly diverse scenarios and complex dynamic processes. To tackle the challenge of hybrid memory, we propose HyDRA, an advanced memory architecture specifically designed to effectively extract and retrieve motion and appearance cues for consistent generation. Extensive experiments demonstrate that HyDRA significantly outperforms existing methods. We hope that the hybrid memory paradigm, alongside the HM-World dataset and the HyDRA framework, will inspire new research and provide a solid foundation for advancing video world models.

Limitations and Future Work. Despite the promising results, our work still presents certain limitations. Specifically, HyDRA's performance in maintaining consistent generation tends to degrade in highly complex scenes involving three or more subjects or severe occlusions. In future work, we plan to explore more advanced and robust memory mechanisms to handle intricate multi-subject dynamics and scale our approach to unconstrained real-world environments.

Acknowledgements

We express our sincere gratitude to Jichao Wang, Xiaole Xiong, Siyuan Luo, Mengyuan Li, Boyu Zheng, and Yike Yin from Kuaishou Technology for their invaluable assistance in developing the HM-World dataset.

References

1. Y. Cui, H. Chen, H. Deng, X. Huang, X. Li, J. Liu, Y. Liu, Z. Luo, J. Wang, W. Wang et al., “Emu3.5: Native multimodal models are world learners,” arXiv preprint arXiv:2510.26583, 2025.
2. X. He, C. Peng, Z. Liu, B. Wang, Y. Zhang, Q.
Cui, F. Kang, B. Jiang, M. An, Y. Ren et al., “Matrix-game 2.0: An open-source real-time and streaming interactive world model,” arXiv preprint arXiv:2508.13009, 2025.
3. X. Mao, S. Lin, Z. Li, C. Li, W. Peng, T. He, J. Pang, M. Chi, Y. Qiao, and K. Zhang, “Yume: An interactive world generation model,” arXiv preprint arXiv:2507.17744, 2025.
4. D. Ye, F. Zhou, J. Lv, J. Ma, J. Zhang, J. Lv, J. Li, M. Deng, M. Yang, Q. Fu et al., “Yan: Foundational interactive video generation,” arXiv preprint arXiv:2508.08601, 2025.
5. S. Gao, J. Yang, L. Chen, K. Chitta, Y. Qiu, A. Geiger, J. Zhang, and H. Li, “Vista: A generalizable driving world model with high fidelity and versatile controllability,” in NeurIPS, 2024.
6. X. Zhou, D. Liang, S. Tu, X. Chen, Y. Ding, D. Zhang, F. Tan, H. Zhao, and X. Bai, “Hermes: A unified self-driving world model for simultaneous 3d scene understanding and generation,” in ICCV, 2025.
7. X. Wang, L. Liu, Y. Cao, R. Wu, W. Qin, D. Wang, W. Sui, and Z. Su, “Embodiedgen: Towards a generative 3d world engine for embodied intelligence,” arXiv preprint arXiv:2506.10600, 2025.
8. Y. Jiang, S. Chen, S. Huang, L. Chen, P. Zhou, Y. Liao, X. He, C. Liu, H. Li, M. Yao et al., “Enerverse-ac: Envisioning embodied environments with action condition,” arXiv preprint arXiv:2505.09723, 2025.
9. T. Wan, A. Wang, B. Ai, B. Wen, C. Mao, C.-W. Xie, D. Chen, F. Yu, H. Zhao, J. Yang et al., “Wan: Open and advanced large-scale video generative models,” arXiv preprint arXiv:2503.20314, 2025.
10. W. Kong, Q. Tian, Z. Zhang, R. Min, Z. Dai, J. Zhou, J. Xiong, X. Li, B. Wu, J. Zhang et al., “Hunyuanvideo: A systematic framework for large video generative models,” arXiv preprint arXiv:2412.03603, 2024.
11. Z. Yang, J. Teng, W. Zheng, M. Ding, S. Huang, J. Xu, Y. Yang, W. Hong, X. Zhang, G. Feng et al.
, “Cogvideox: Text-to-video diffusion models with an expert transformer,” arXiv preprint arXiv:2408.06072, 2024.
12. W. Peebles and S. Xie, “Scalable diffusion models with transformers,” in ICCV, 2023.
13. J. Song, C. Meng, and S. Ermon, “Denoising diffusion implicit models,” arXiv preprint arXiv:2010.02502, 2020.
14. W. Sun, H. Zhang, H. Wang, J. Wu, Z. Wang, Z. Wang, Y. Wang, J. Zhang, T. Wang, and C. Guo, “Worldplay: Towards long-term geometric consistency for real-time interactive world modeling,” arXiv preprint arXiv:2512.14614, 2025.
15. J. Yu, J. Bai, Y. Qin, Q. Liu, X. Wang, P. Wan, D. Zhang, and X. Liu, “Context as memory: Scene-consistent interactive long video generation with memory retrieval,” in ACM SIGGRAPH Asia, 2025.
16. R. Li, P. Torr, A. Vedaldi, and T. Jakab, “Vmem: Consistent interactive video scene generation with surfel-indexed view memory,” in ICCV, 2025.
17. Z. Xiao, L. Yushi, Y. Zhou, W. Ouyang, S. Yang, Y. Zeng, and X. Pan, “Worldmem: Long-term consistent world simulation with memory,” in NeurIPS, 2025.
18. J. Huang, X. Hu, B. Han, S. Shi, Z. Tian, T. He, and L. Jiang, “Memory forcing: Spatio-temporal memory for consistent scene generation on minecraft,” arXiv preprint arXiv:2510.03198, 2025.
19. Epic Games, “Unreal engine 5,” https://www.unrealengine.com/en-US/unreal-engine-5, 2022, accessed: 2025-10-22.
20. K. Song, B. Chen, M. Simchowitz, Y. Du, R. Tedrake, and V. Sitzmann, “History-guided video diffusion,” in ICML, 2025.
21. S. Bahmani, I. Skorokhodov, A. Siarohin, W. Menapace, G. Qian, M. Vasilkovsky, H.-Y. Lee, C. Wang, J. Zou, A. Tagliasacchi et al., “Vd3d: Taming large video diffusion transformers for 3d camera control,” arXiv preprint arXiv:2407.12781, 2024.
22. J. Bai, M. Xia, X. Fu, X. Wang, L. Mu, J. Cao, Z. Liu, H. Hu, X. Bai, P. Wan et al.
, “Recammaster: Camera-controlled generative rendering from a single video,” in ICCV, 2025.
23. J. Yu, Y. Qin, X. Wang, P. Wan, D. Zhang, and X. Liu, “Gamefactory: Creating new games with generative interactive videos,” in ICCV, 2025.
24. J. Tang, J. Liu, J. Li, L. Wu, H. Yang, P. Zhao, S. Gong, X. Yuan, S. Shao, and Q. Lu, “Hunyuan-gamecraft-2: Instruction-following interactive game world model,” arXiv preprint arXiv:2511.23429, 2025.
25. J. Zhou, H. Gao, V. Voleti, A. Vasishta, C.-H. Yao, M. Boss, P. Torr, C. Rupprecht, and V. Jampani, “Stable virtual camera: Generative view synthesis with diffusion models,” in ICCV, 2025.
26. H. Che, X. He, Q. Liu, C. Jin, and H. Chen, “Gamegen-x: Interactive open-world game video generation,” in ICLR, 2025.
27. Y. Hong, Y. Mei, C. Ge, Y. Xu, Y. Zhou, S. Bi, Y. Hold-Geoffroy, M. Roberts, M. Fisher, E. Shechtman et al., “Relic: Interactive video world model with long-horizon memory,” arXiv preprint arXiv:2512.04040, 2025.
28. X. Wu, G. Zhang, Z. Xu, Y. Zhou, Q. Lu, and X. He, “Pack and force your memory: Long-form and consistent video generation,” arXiv preprint arXiv:2510.01784, 2025.
29. B. Chen, D. Martí Monsó, Y. Du, M. Simchowitz, R. Tedrake, and V. Sitzmann, “Diffusion forcing: Next-token prediction meets full-sequence diffusion,” in NeurIPS, 2024.
30. L. Zhang, S. Cai, M. Li, G. Wetzstein, and M. Agrawala, “Frame context packing and drift prevention in next-frame-prediction video diffusion models,” in NeurIPS, 2025.
31. D. P. Kingma and M. Welling, “Auto-encoding variational bayes,” arXiv preprint arXiv:1312.6114, 2013.
32. Y. Lipman, R. T. Chen, H. Ben-Hamu, M. Nickel, and M. Le, “Flow matching for generative modeling,” arXiv preprint arXiv:2210.02747, 2022.
33. F. Xiao, X. Liu, X. Wang, S. Peng, M. Xia, X. Shi, Z. Yuan, P. Wan, D. Zhang, and D.
Lin, “3dtrajmaster: Mastering 3d trajectory for multi-entity motion in video generation,” in ICLR, 2024.
34. Y.-C. Chou, X. Wang, Y. Li, J. Wang, H. Liu, C. Xie, A. Yuille, and J. Xiao, “Captain safari: A world engine,” arXiv preprint arXiv:2511.22815, 2025.
35. H. Duan, H.-X. Yu, S. Chen, L. Fei-Fei, and J. Wu, “Worldscore: A unified evaluation benchmark for world generation,” in ICCV, 2025.
36. Z. Li, C. Li, X. Mao, S. Lin, M. Li, S. Zhao, Z. Xu, X. Li, Y. Feng, J. Sun et al., “Sekai: A video dataset towards world exploration,” arXiv preprint arXiv:2506.15675, 2025.
37. A. Vaswani, N. Shazeer, N. Parmar, J. Uszkoreit, L. Jones, A. N. Gomez, Ł. Kaiser, and I. Polosukhin, “Attention is all you need,” in NeurIPS, 2017.
38. Z. Huang, Y. He, J. Yu, F. Zhang, C. Si, Y. Jiang, Y. Zhang, T. Wu, Q. Jin, N. Chanpaisit et al., “Vbench: Comprehensive benchmark suite for video generative models,” in CVPR, 2024.
39. A. Radford, J. W. Kim, C. Hallacy, A. Ramesh, G. Goh, S. Agarwal, G. Sastry, A. Askell, P. Mishkin, J. Clark et al., “Learning transferable visual models from natural language supervision,” in ICML, 2021.
40. Y. Yao, T. Yu, A. Zhang, C. Wang, J. Cui, H. Zhu, T. Cai, H. Li, W. Zhao, Z. He et al., “Minicpm-v: A gpt-4v level mllm on your phone,” arXiv preprint arXiv:2408.01800, 2024.
41. R. Khanam and M. Hussain, “Yolov11: An overview of the key architectural enhancements,” arXiv preprint arXiv:2410.17725, 2024.
42. Z. Zheng, X. Peng, T. Yang, C. Shen, S. Li, H. Liu, Y. Zhou, T. Li, and Y. You, “Open-sora: Democratizing efficient video production for all,” arXiv preprint arXiv:2412.20404, 2024.
43. X. Huang, Z. Li, G. He, M. Zhou, and E. Shechtman, “Self forcing: Bridging the train-test gap in autoregressive video diffusion,” in NeurIPS, 2025.
44. A. Bar, G. Zhou, D. Tran, T. Darrell, and Y. LeCun, “Navigation world models,” in CVPR, 2025.
45. H. He, Y. Xu, Y. Guo, G. Wetzstein, B. Dai, H.
Li, and C. Yang, “Cameractrl: Enabling camera control for text-to-video generation,” arXiv preprint arXiv:2404.02101, 2024.
46. X. Kong, S. Liu, X. Lyu, M. Taher, X. Qi, and A. J. Davison, “Eschernet: A generative model for scalable view synthesis,” in CVPR, 2024.
47. J. Li, J. Tang, Z. Xu, L. Wu, Y. Zhou, S. Shao, T. Yu, Z. Cao, and Q. Lu, “Hunyuan-gamecraft: High-dynamic interactive game video generation with hybrid history condition,” arXiv preprint arXiv:2506.17201, 2025.
48. T. Miyato, B. Jaeger, M. Welling, and A. Geiger, “Gta: A geometry-aware attention mechanism for multi-view transformers,” arXiv preprint arXiv:2310.10375, 2023.
49. W. Sun, S. Chen, F. Liu, Z. Chen, Y. Duan, J. Zhu, J. Zhang, and Y. Wang, “Dimensionx: Create any 3d and 4d scenes from a single image with decoupled video diffusion,” in CVPR, 2025.
50. P. J. Ball, J. Bauer, F. Belletti, B. Brownfield, A. Ephrat, S. Fruchter, A. Gupta, K. Holsheimer, A. Holynski, J. Hron, C. Kaplanis, M. Limont, M. McGill, Y. Oliveira, J. Parker-Holder, F. Perbet, G. Scully, J. Shar, S. Spencer, O. Tov, R. Villegas, E. Wang, J. Yung, C. Baetu, J. Berbel, D. Bridson, J. Bruce, G. Buttimore, S. Chakera, B. Chandra, P. Collins, A. Cullum, B. Damoc, V. Dasagi, M. Gazeau, C. Gbadamosi, W. Han, E. Hirst, A. Kachra, L. Kerley, K. Kjems, E. Knoepfel, V. Koriakin, J. Lo, C. Lu, Z. Mehring, A. Moufarek, H. Nandwani, V. Oliveira, F. Pardo, J. Park, A. Pierson, B. Poole, H. Ran, T. Salimans, M. Sanchez, I. Saprykin, A. Shen, S. Sidhwani, D. Smith, J. Stanton, H. Tomlinson, D. Vijaykumar, L. Wang, P. Wingfield, N. Wong, K. Xu, C. Yew, N. Young, V. Zubov, D. Eck, D. Erhan, K. Kavukcuoglu, D. Hassabis, Z. Gharamani, R. Hadsell, A. van den Oord, I. Mosseri, A. Bolton, S. Singh, and T. Rocktäschel, “Genie 3: A new frontier for world models,” 2025. [Online].
Available: https://deepmind.google/models/genie/

Out of Sight but Not Out of Mind: Hybrid Memory for Dynamic Video World Models
Supplementary Material

This file provides additional information about our work, mainly additional generation results and ablation studies.

Fig. 1: The results generated by HyDRA.

A Qualitative Analysis

A.1 Generation Results

Fig. 1 shows HyDRA's generation results across multiple scenes, subjects, and trajectories. HyDRA effectively memorizes both background and subjects in complex dynamic scenarios with exit-entry events, maintaining appearance and motion consistency.

A.2 Open-Domain Results

We collect open-domain videos featuring subject motion from the Internet and apply back-and-forth camera movements for inference. The results in Fig. 2 demonstrate that even in entirely unseen scenes, HyDRA exhibits good hybrid memory capacity.

Fig. 2: Open-domain results of HyDRA.

B More Ablation Studies

In this section, we further conduct comprehensive ablation analyses on our proposed method and core designs.

Fig. 3: Qualitative comparison between retrieval methods. The upper part displays frames selected by different methods, while the lower part shows the generation results. Selected frames are the source frames of the selected tokens. [Panel labels: FOV Overlap, Dynamic Affinity; Context Frames 10 and 20; Frames 20, 40, 60.]

Analysis of Retrieval Approaches. We first compare our dynamic-affinity-based retrieval method with the traditional Field of View (FOV) overlap filtering approach. As illustrated in Fig. 3, during a long camera movement involving complex exit-and-re-entry events, the FOV-based method merely selects the nearest camera poses corresponding to the re-entry clip.
Consequently, it mistakenly retrieves empty shots, leading to a severe loss of critical appearance information and inconsistent generation. In contrast, our dynamic affinity approach filters memory tokens based on feature-level correlations. It successfully retrieves keyframes containing rich subject details, thereby maintaining the appearance and motion consistency of the subject after re-entry. Furthermore, we investigate the distribution of the retrieved tokens across different filtering strategies in Fig. 4. The FOV overlap method relies on static 3D geometric calculations, meaning the selected memory tokens remain fixed throughout the entire inference stage. In contrast, our dynamic affinity method computes feature-level correlations dynamically. As a result, it adaptively selects different tokens at different timesteps and across different DiT layers. This dynamic mechanism grants the model a broader memory receptive field and superior flexibility during the generation process.

Fig. 4: Distribution comparison of different retrieval methods. The x-axis and y-axis represent the token index and DiT layers, respectively. The bubble size and color reflect the selection frequency of each token during the entire denoising process. (a) The FOV overlap method yields a fixed token selection. (b) Our dynamic affinity method exhibits a diverse retrieval distribution, enabling the perception of richer memory contexts.

Fig. 5: Qualitative comparison between different kernel sizes of the Memory Tokenizer. The red bounding boxes annotate the inconsistent region. [Panel labels: kernel sizes (T × H × W) 2 × 4 × 4, 2 × 8 × 8, 2 × 16 × 16, 1 × 4 × 4; Frames 1, 15, 30, 45, 60.]

Ablation on Kernel Size of Memory Tokenizer.
We provide further qualitative ablation results regarding the kernel size of the Memory Tokenizer. As shown in Fig. 5, when the temporal dimension of the kernel size is set to 2, the generated results maintain spatiotemporal consistency due to effective temporal interaction. However, when the temporal kernel size is reduced to 1 (i.e., no temporal interaction during tokenization), noticeable inconsistencies emerge in the generated subjects. These qualitative observations further corroborate the quantitative ablation results presented in the main paper.

Ablation on Number of Retrieved Tokens. We qualitatively ablate the number of retrieved tokens. As depicted in Fig. 6, restricting the token length to 5 results in a substantial loss of context information, which misleads the model into generating severe artifacts (e.g., hallucinating two giraffes instead of one). In contrast, other settings with an adequate number of retrieved tokens successfully maintain subject consistency and physical plausibility.

Fig. 6: Qualitative comparison between the number of retrieved tokens. The red bounding boxes annotate the inconsistent region. [Panel labels: 5, 10, 15 retrieved tokens; Frames 1, 15, 30, 45, 60.]

Fig. 7: Additional examples of HM-World dataset.

C Additional Examples from the HM-World Dataset

To further illustrate the challenges present in the proposed HM-World dataset, we provide additional examples in Fig. 7.
