On Neural Scaling Laws for Weather Emulation through Continual Training

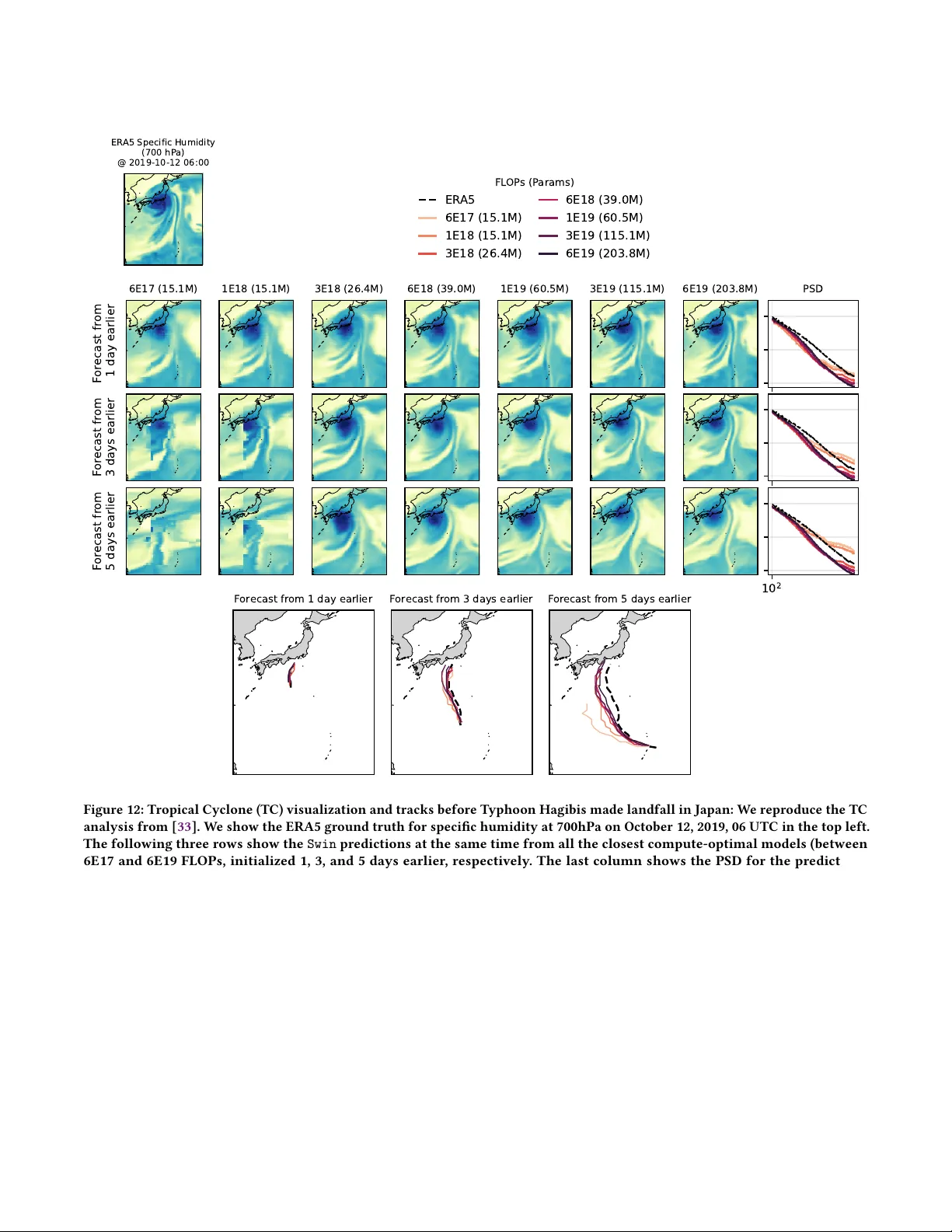

Neural scaling laws, which in some domains can predict the performance of large neural networks as a function of model, data, and compute scale, are the cornerstone of building foundation models in Natural Language Processing and Computer Vision. We …

Authors: Shashank Subramanian, Alexander Kiefer