Advances in Exact and Approximate Group Closeness Centrality Maximization

In the NP-hard \textsc{Group Closeness Centrality Maximization} problem, the input is a graph $G = (V,E)$ and a positive integer $k$, and the task is to find a set $S \subseteq V$ of size $k$ that maximizes the reciprocal of group farness $f(S) = \su…

Authors: Christian Schulz, Jakob Ternes, Henning Woydt

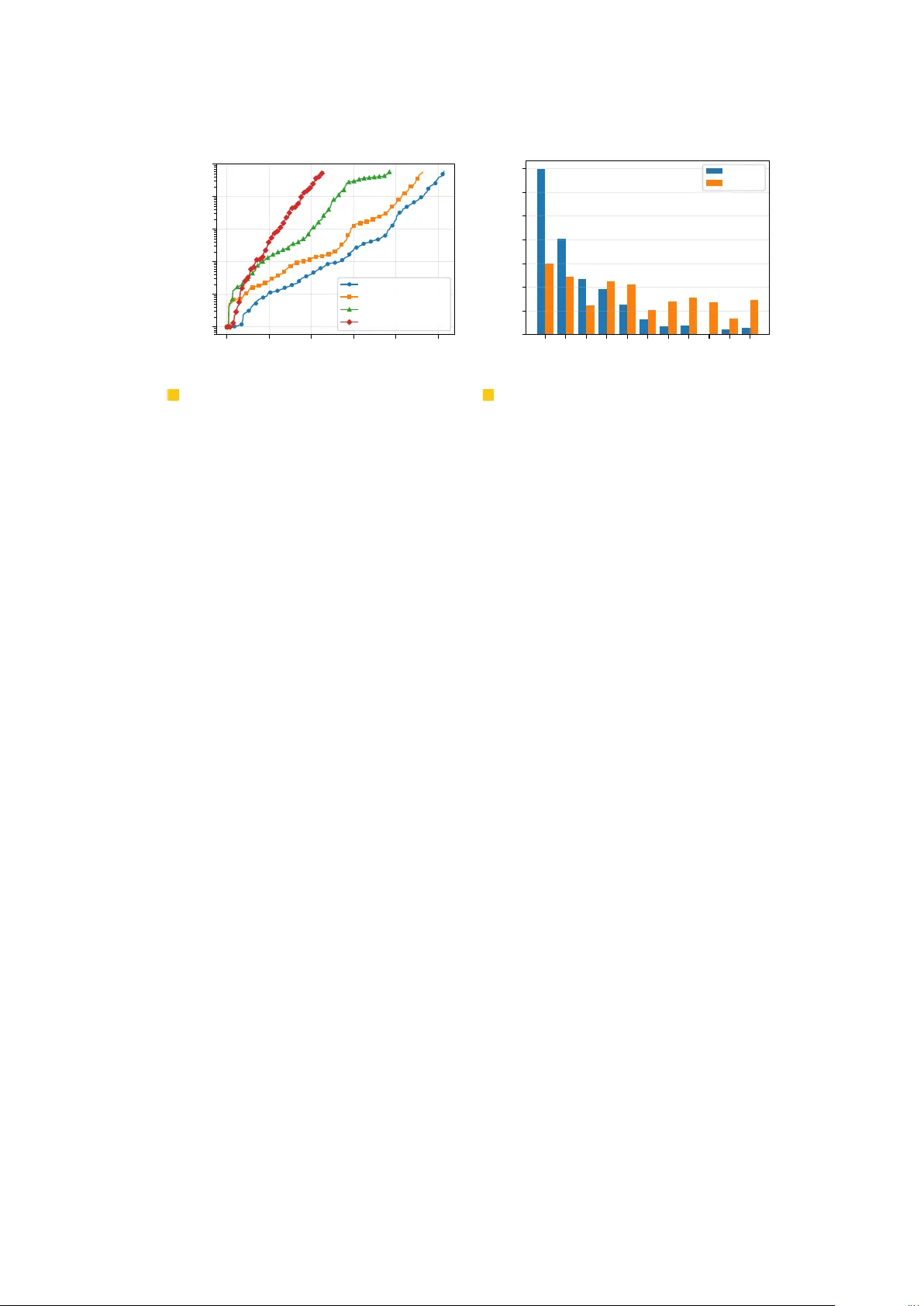

Christian Schulz, Heidelberg University, Faculty of Mathematics and Computer Science, Germany
Jakob Ternes¹, Heidelberg University, Faculty of Mathematics and Computer Science, Germany
Henning Woydt, Heidelberg University, Faculty of Mathematics and Computer Science, Germany

Abstract

In the NP-hard Group Closeness Centrality Maximization problem, the input is a graph $G = (V, E)$ and a positive integer $k$, and the task is to find a set $S \subseteq V$ of size $k$ that maximizes the reciprocal of group farness $f(S) = \sum_{v \in V} \min_{s \in S} \mathrm{dist}(v, s)$. A widely used greedy algorithm with a previously unknown approximation guarantee may produce arbitrarily poor approximations. To efficiently obtain solutions with quality guarantees, known exact and approximation algorithms are revised. The state-of-the-art exact algorithm iteratively solves ILPs of increasing size until the ILP at hand can represent an optimal solution. In this work, we propose two new techniques to further improve the algorithm. The first technique reduces the size of the ILPs, while the second technique aims to minimize the number of needed iterations. Our improvements yield a speedup by a factor of 3.6 over the next best exact algorithm and can achieve speedups of up to a factor of 22.3. Furthermore, we add reduction techniques to a 1/5-approximation algorithm and show that these adaptations do not compromise its approximation guarantee. The improved algorithm achieves mean speedups of 1.4 and a maximum speedup of up to 2.9.
2012 ACM Subject Classification: Mathematics of computing → Graph algorithms
Keywords and phrases: Group Closeness Centrality, Exact Algorithms, Approximation Algorithms
Funding: Henning Woydt: Supported by the Deutsche Forschungsgemeinschaft (DFG, German Research Foundation) – DFG SCHU 2567/6-1
Acknowledgements: The results of this work are based on the corresponding author's thesis [24].

¹ Corresponding author.

1 Introduction

A crucial task in the analysis of networks is the identification of vertices or groups of vertices that are, in some sense, important. Centrality measures quantify importance by assigning a numeric value to a vertex or a group of vertices. They are frequently used in the analysis of social networks [9], biological networks [25, 10], and electrical power grids [1], and in facility location problems [15], among many other applications. Various measures have been proposed to quantify the centrality of an individual vertex in a network. For instance, closeness centrality measures how far a node is from the other nodes in the graph, betweenness centrality measures how often a node lies on a shortest path between other nodes, and degree centrality simply captures the number of neighbors. As noted by Everett and Borgatti [12], these measures fall short of identifying vertices that are collectively central as a group, which led them to propose generalizations of the aforementioned measures to groups of vertices. Indeed, it has been shown that the overlap between the top-$k$ nodes with the greatest individual closeness centrality and groups of size $k$ with high group closeness centrality is relatively small [8].

Naturally, the question arises of how to efficiently find groups of a given size $k$ such that a certain group centrality measure is maximized.
In the NP-hard [11, 22] group closeness centrality maximization (GCCM) problem, a positive integer $k$ and an undirected connected graph $G = (V, E)$ are given, and the task is to find a group $S \subseteq V$ of size $k$ that maximizes $c(S) := \frac{|V| - k}{\sum_{v \in V} \min_{s \in S} \mathrm{dist}(v, s)}$. There are two prominent ways to solve the problem exactly. On the one hand, branch-and-bound algorithms specifically designed for this problem are used. These algorithms exhaustively explore a search tree containing all possible solutions. To speed up the computation, many pruning techniques are used to cut off large parts of the search space. Such methods have been explored by Rajbhandari et al. [18], Staus et al. [22] and Woydt et al. [26]. On the other hand, ILP solvers can be used to solve GCCM. Since ILPs can capture a vast range of problems, a huge amount of research and engineering has flowed into ILP solvers. This has made ILP solvers an incredibly powerful tool for a large class of optimization problems, including GCCM. Bergamini et al. [8] were the first to propose an ILP for this problem; it has a quadratic number of variables and constraints. Staus et al. [22] then improved on the proposed formulation and reduced the variable and constraint count to $O(n \cdot \mathrm{diam}(G))$. Additionally, they proposed an iterative approach that starts by solving smaller ILPs and enlarges them only if necessary. Experimental evaluations in [22, 26] show that ILP solvers currently outperform the branch-and-bound approach.

When solution quality is not paramount, one may decide to use a heuristic or approximation algorithm. Chen et al. [11] considered a simple greedy algorithm that starts with the empty set and iteratively adds the vertex that improves the score the most, until $k$ elements have been added. They claimed that the algorithm has a $(1 - 1/e)$-approximation guarantee.
The running time of the algorithm was greatly improved by Bergamini et al. [8], who observed very high empirical solution quality when compared to optimal solutions. However, they also showed that the $(1 - 1/e)$-approximation guarantee was erroneously assumed. Angriman et al. [2] later presented an algorithm with a $1/5$-approximation ratio that is based on a local search algorithm for the related $k$-median problem [5]. The algorithm may start with any initial solution, which is then refined using a swap-based local search procedure. Vertices inside the solution are swapped with vertices outside of the solution if the swap improves the objective. If no more objective-improving swaps can be made, the algorithm terminates.

Our Contribution. We develop improvements for group closeness centrality maximization, both in the exact and in the approximate case. In the exact case, we further refine the state-of-the-art iterative ILP approach proposed by Staus et al. [22]. We propose a data reduction technique that aims to reduce the number of decision variables used in the ILP. Additionally, we propose a technique to reduce the number of ILPs to be solved. For some instances, these improvements lead to a speedup of $22.37\times$, while the overall speedup is $3.65\times$. In the approximate case, we improve the swap-based local search algorithm of Angriman et al. [2]. We show that the search space of vertices can be restricted to a set that is guaranteed to contain an optimum solution, without impacting the approximation guarantee. By applying this technique, mean speedups of a factor of 1.4 are achieved. Finally, we show that the greedy algorithm proposed by Chen et al. [11] does not have an approximation guarantee for GCCM.

2 Preliminaries

Let $G = (V, E)$ be a graph with vertex set $V$ and edge set $E$.
Throughout this work, we assume that the graph is undirected, unweighted, connected, and does not have any self-loops. Two vertices $u, v \in V$ are adjacent if $\{u, v\} \in E$. The neighborhood $N(v)$ of a vertex $v$ contains all vertices adjacent to $v$. The degree of a vertex is the size of its neighborhood, i.e., $\deg(v) = |N(v)|$. The maximum degree of a graph $G$ is $\Delta(G) = \max_{v \in V} \deg(v)$. The closed neighborhood $N[v] = N(v) \cup \{v\}$ of a vertex $v$ additionally includes $v$. A vertex $v$ dominates a vertex $u$ if $N[u] \subseteq N[v]$. The distance $\mathrm{dist}(u, v)$ between two vertices $u$ and $v$ is the length of a shortest path between $u$ and $v$. The distance between $u$ and a set of vertices $S$ is the minimum distance from $u$ to any vertex in $S$, i.e., $\mathrm{dist}(u, S) := \min_{v \in S} \mathrm{dist}(u, v)$. The eccentricity of a vertex $v$ is the maximum distance from $v$ to any other vertex $u$, i.e., $\mathrm{ecc}(v) = \max_{u \in V} \mathrm{dist}(u, v)$. The diameter of a graph $G$ is the maximum eccentricity over all its vertices, i.e., $\mathrm{diam}(G) = \max_{v \in V} \mathrm{ecc}(v)$. A vertex $w \in V$ is called a cut vertex if $G - w$ has more components than $G$. The closeness centrality measure [6, 7, 21] (see Eq. 1) scores each individual vertex $v \in V$, while the group closeness centrality measure [12] (see Eq. 2) generalizes this score to sets $S \subseteq V$ of vertices.

$$c(v) := \frac{|V| - 1}{\sum_{u \in V} \mathrm{dist}(u, v)} \tag{1}$$

$$c(S) := \frac{|V| - |S|}{\sum_{u \in V} \mathrm{dist}(u, S)} \tag{2}$$

Note that other definitions instead use $n$ or $1$ as the numerator. Regardless of the exact definition of group closeness centrality, all variants share the same denominator and, for $|S| = k$, have a constant numerator, so one may equivalently minimize the group farness $f(S) := \sum_{u \in V} \mathrm{dist}(u, S)$. In the literature, varying names are used to refer to this problem. Some authors refer to the problem itself as group closeness centrality [22], while others refer to it as group closeness maximization [8].
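To make Eq. (2) and the group farness concrete, the following minimal Python sketch computes $\mathrm{dist}(u, S)$ for all $u$ with a single multi-source BFS and derives $f(S)$ and $c(S)$ from it. It assumes the graph is given as a hypothetical adjacency dictionary mapping each vertex to its neighbors; the function names are illustrative and not taken from the paper.

```python
from collections import deque


def distances_to_group(adj, group):
    """Multi-source BFS: returns dist(u, S) for every vertex u of an unweighted graph."""
    dist = {s: 0 for s in group}
    queue = deque(dist)
    while queue:
        u = queue.popleft()
        for w in adj[u]:
            if w not in dist:  # first visit = distance to the nearest group member
                dist[w] = dist[u] + 1
                queue.append(w)
    return dist


def group_farness(adj, group):
    """f(S) = sum over u in V of dist(u, S); assumes G is connected."""
    return sum(distances_to_group(adj, group).values())


def group_closeness(adj, group):
    """c(S) = (|V| - |S|) / f(S) as in Eq. (2); assumes S is non-empty and S != V."""
    return (len(adj) - len(group)) / group_farness(adj, group)


# Path 0 - 1 - 2 - 3 - 4
path = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2, 4], 4: [3]}
print(group_farness(path, {1, 3}))    # 3
print(group_closeness(path, {1, 3}))  # 1.0
```

For a single vertex, `group_closeness(path, {v})` coincides with Eq. (1), since then $|S| = 1$.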
We refer to the problem as group closeness centrality maximization (GCCM) to differentiate the problem from the function. Let $U$ be a universe of items and $2^U$ be its power set. Let $f : 2^U \to \mathbb{R}$ be a set function. The marginal gain of $e \in U$ at $A \subseteq U$ is defined as $\Delta(e \mid A) = f(A \cup \{e\}) - f(A)$. A set function $f$ is called supermodular if for all $A \subseteq B \subseteq U$ and $e \in U \setminus B$ it holds that $\Delta(e \mid A) \le \Delta(e \mid B)$. The group farness function is supermodular [8]. Similarly, a set function $f$ is called submodular if, under the same conditions, $\Delta(e \mid A) \ge \Delta(e \mid B)$.

3 Related Work

3.1 Exact Algorithms

To solve GCCM exactly, recent research has focused on branch-and-bound algorithms [22, 26] or ILP solvers [8, 22]. Branch-and-bound algorithms explore the space of possible solutions $S \subseteq V$ with $|S| = k$ by traversing a Set-Enumeration Tree [20]. Let $T$ be a node of the search tree with a working set $S_T = \{s_1, \ldots, s_i\}$, i.e., the current subset of the solution, and a candidate set $C_T = \{c_1, \ldots, c_m\}$, i.e., the elements that can be added to $S_T$. A node has $m$ children, where the $i$-th child has the candidate set $C_T \setminus \{c_1, \ldots, c_i\}$ and the working set $S_T \cup \{c_i\}$. A node $T$ with a working set of size $k$ has no children at all, and the root $R$ of the tree has the candidate set $C_R = V$ and the working set $S_R = \emptyset$. The leaves of this tree hold all sets $S \subseteq V$ of size $k$ exactly once, and therefore exploring the tree is guaranteed to find the optimal set $S^*$ that maximizes group closeness centrality.

This search tree has $O\big(\binom{n}{k}\big)$ leaf nodes; however, Staus et al. [22] and Woydt et al. [26] present methods to avoid exploring many leaf nodes in most cases. Let $S'$ be the best set the branch-and-bound algorithm has found so far, and let $T$ be the node the algorithm is currently exploring.
The core idea is to calculate a lower bound $b \le f(S_T \cup C^*_T)$, where $C^*_T = \arg\min_{C' \subseteq C_T,\ |C'| = k - |S_T|} f(S_T \cup C')$. That is, $C^*_T$ is the best possible subset of size $k' = k - |S_T|$ of the remaining candidates $C_T$, and $b$ underestimates the best possible farness in the subtree of the current node $T$. If $f(S') \le b$, i.e., even this lower bound is not better than the best solution found so far, then no better solution exists in the subtree of $T$, and it does not have to be explored further; the subtree can be pruned from the search space. For example, a simple lower bound takes the $k'$ candidates $c_i$ whose marginal gains $\Delta(c_i \mid S_T)$ are largest in magnitude, i.e., that reduce the farness the most. By supermodularity, adding these (non-positive) marginal gains to the current score $f(S_T)$ yields a value no greater than $f(S_T \cup C^*_T)$. Additionally, instead of pruning complete subtrees of the search tree, they also propose a method to prune individual vertices from the candidate set. This reduces the number of children, and therefore the size of the search tree to be explored. Both of these methods are based on the supermodularity of group farness.

Bergamini et al. [8] were the first to propose an ILP formulation for GCCM. For every vertex $v \in V$, a binary variable $y_v \in \{0, 1\}$ indicates whether the vertex is selected as part of the solution set $S^*$ ($y_v = 1$) or not ($y_v = 0$). Furthermore, they define binary variables $x_{u,v} \in \{0, 1\}$ for each vertex pair $(u, v) \in V \times V$. These variables model the assignment of vertices to their closest selected vertex. More precisely, a vertex $u$ is said to be assigned to a vertex $v \in S^*$ if $\mathrm{dist}(u, v) = \mathrm{dist}(u, S^*)$. If $v \in S^*$ and $u$ is assigned to $v$, then $x_{u,v} = 1$; otherwise, $x_{u,v} = 0$. The ILP that minimizes group farness is then written as

$$
\begin{aligned}
\text{minimize} \quad & \sum_{u \in V} \sum_{v \in V} \mathrm{dist}(u, v)\, x_{u,v} \\
\text{subject to} \quad & \sum_{v \in V} y_v = k && (3)\\
& \sum_{v \in V} x_{u,v} = 1 && \forall u \in V \quad (4)\\
& x_{u,v} \le y_v && \forall u, v \in V. \quad (5)
\end{aligned}
$$

Constraint (3) ensures that exactly $k$ vertices are selected for the solution set $S^*$. Constraint (4) guarantees that every vertex $u \in V$ is assigned to exactly one vertex $v$. Together with Constraint (5), which enforces that assignments are only possible to vertices that belong to the solution (i.e., $x_{u,v} \le y_v$), this ensures that each vertex is assigned to exactly one selected vertex in $S^*$. The objective function sums the distances $\mathrm{dist}(u, v)$ weighted by the assignment variables $x_{u,v}$. Consequently, the distance $\mathrm{dist}(u, v)$ contributes to the objective only if vertex $u$ is assigned to vertex $v$. Since every vertex $u$ must be assigned to exactly one vertex in $S^*$, and an optimal solution of the ILP assigns it to a closest one, the objective effectively sums the distances from each vertex to its closest selected vertex. Therefore, minimizing the objective corresponds to minimizing the group farness of the selected set. The ILP has $O(n^2)$ variables and constraints.

Staus et al. [22] proposed further ILP formulations that all aim to reduce the number of variables and constraints needed. The idea behind all of their new formulations is to avoid creating the variables $x_{u,v}$. After all, the information to which vertex $v$ a vertex $u$ is assigned is not inherently relevant; only the underlying distance to $S^*$ is. Therefore, the variables $x_{u,v}$ are removed from the ILP, and instead variables $x_{u,i} \in \{0, 1\}$ are added with $u \in V$ and $i \in \{0, \ldots, \mathrm{diam}(G)\}$. If vertex $u$ has distance $i$ to the optimal solution $S^*$, then $x_{u,i} = 1$; otherwise, $x_{u,i} = 0$. The new ILP formulation then reads as follows:

$$
\begin{aligned}
\text{minimize} \quad & \sum_{u \in V} \sum_{i \in \{0, \ldots, \mathrm{diam}(G)\}} i \cdot x_{u,i} \\
\text{subject to} \quad & \sum_{v \in V} x_{v,0} = k && (6)\\
& \sum_{i \in \{0, \ldots, \mathrm{diam}(G)\}} x_{v,i} = 1 && \forall v \in V \quad (7)\\
& x_{v,i} \le \sum_{w \in V :\, \mathrm{dist}(v, w) = i} x_{w,0} && \forall v \in V,\ \forall i \in \{0, \ldots, \mathrm{diam}(G)\}. \quad (8)
\end{aligned}
$$

Constraints (6) and (7) encode that the solution has exactly $k$ vertices and that each vertex has exactly one active distance to $S^*$. Constraint (8) ensures that the active distance from $v$ to $S^*$ is chosen correctly, i.e., $x_{v,i}$ can only be 1 if there exists at least one $w \in S^*$ ($x_{w,0} = 1$) with $\mathrm{dist}(v, w) = i$. This ILP has $O(n \cdot \mathrm{diam}(G))$ variables and constraints, making it inherently well suited for networks with a small diameter, such as social networks. The ILP can be further reduced in size by removing unnecessary variables. Instead of using $i \in \{0, \ldots, \mathrm{diam}(G)\}$ for each vertex, one can use $i \in \{0, \ldots, \mathrm{ecc}(v)\}$ for each individual vertex $v$, since $\mathrm{dist}(v, S^*) \le \mathrm{ecc}(v)$. However, Staus et al. [22] noticed that many variables $x_{v,i}$ are still not relevant for the ILP. For example, if a solution with $x_{v,i} = 1$ was determined, then, in hindsight, all variables $x_{v,j}$ with $j > i$ were not relevant for the ILP. An ILP without those variables would have determined the same solution in less time. This led them to design an iterative algorithm called ILPind [22], which is the state-of-the-art algorithm to solve GCCM exactly. The idea of the algorithm is to start with a small formulation of the ILP, which might not be able to represent the optimal solution; in that case, the ILP is iteratively enlarged until it can. Instead of creating all variables $x_{v,i}$ with $i \in \{0, \ldots, \mathrm{ecc}(v)\}$, they initially set $d(v) = 2$ and only create the variables with $i \in \{0, \ldots, d(v)\}$. The variables $x_{v,i}$ with $i < d(v)$ keep their original meaning; however, the variables $x_{v,d(v)}$ change their meaning. If $x_{v,d(v)} = 1$, then $\mathrm{dist}(v, S^*) \ge d(v)$, i.e., the distance from $v$ to $S^*$ may be greater than $d(v)$, and therefore it is not guaranteed that the current $S^*$ is optimal.
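The iterative scheme of ILPind can be illustrated without an ILP solver. In the following Python sketch, the solver is replaced, purely for illustration, by a brute-force search over all $k$-subsets that minimizes the truncated objective $\sum_{v} \min\{\mathrm{dist}(v, S), d(v)\}$; this is the value the truncated ILP assigns to a fixed set $S$ when $x_{v,d(v)}$ only encodes $\mathrm{dist}(v, S) \ge d(v)$. The sufficiency test and the increment of $d(v)$ by one, capped at $\mathrm{ecc}(v)$, follow the rule of Staus et al. [22]; the names, the brute force, the initial cap of $d(v)$ at $\mathrm{ecc}(v)$, and the tie-breaking are assumptions, and the sketch is only meant for tiny graphs.

```python
from collections import deque
from itertools import combinations


def bfs(adj, sources):
    """Multi-source BFS distances from the set `sources` to every vertex."""
    dist = {s: 0 for s in sources}
    queue = deque(dist)
    while queue:
        u = queue.popleft()
        for w in adj[u]:
            if w not in dist:
                dist[w] = dist[u] + 1
                queue.append(w)
    return dist


def solve_truncated(adj, k, d):
    """Brute-force stand-in for the ILP solver (tiny graphs only).

    For a fixed S, the truncated ILP pays min(dist(v, S), d(v)) per vertex,
    because x_{v,d(v)} = 1 only states dist(v, S) >= d(v).
    """
    best, best_cost = None, float("inf")
    for group in combinations(sorted(adj), k):
        dist = bfs(adj, group)
        cost = sum(min(dist[v], d[v]) for v in adj)
        if cost < best_cost:
            best, best_cost = set(group), cost
    return best


def ilp_ind(adj, k, start=2):
    """Enlarge d(v) until the truncated problem is sufficient; returns (S, iterations)."""
    ecc = {v: max(bfs(adj, [v]).values()) for v in adj}
    d = {v: min(start, ecc[v]) for v in adj}
    iterations = 0
    while True:
        iterations += 1
        group = solve_truncated(adj, k, d)
        dist = bfs(adj, group)
        # x_{v,d(v)} = 1 exactly when dist(v, S) >= d(v); conclusive only if d(v) = ecc(v)
        insufficient = [v for v in adj if dist[v] >= d[v] and d[v] < ecc[v]]
        if not insufficient:
            return group, iterations
        for v in insufficient:
            d[v] = min(d[v] + 1, ecc[v])  # increase by one, capped at ecc(v)


path = {v: [w for w in (v - 1, v + 1) if 0 <= w < 7] for v in range(7)}
print(ilp_ind(path, 1))  # ({3}, 5)
```

On this 7-vertex path, five rounds are needed before the truncated problem becomes sufficient, which hints at why starting from $d(v) = 2$ can be costly on graphs with a large diameter.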
If the ILP is solved and there is an $x_{v,d(v)} = 1$ with $d(v) < \mathrm{ecc}(v)$, then the ILP is called insufficient and another iteration is needed². For each vertex $v$ with $x_{v,d(v)} = 1$, the value $d(v)$ is increased by 1, capped at $\mathrm{ecc}(v)$. The ILP is solved with the new $d(v)$-values, and this is repeated until eventually a sufficient ILP is encountered. While it is not inherently clear that this approach is advantageous compared to solving the larger ILP only once, the experimental evaluation in [22] shows that it is indeed significantly faster. Another improvement they utilize is the use of dominating vertices. Recall that a vertex $v$ is dominated by a vertex $u$ if $N[v] \subseteq N[u]$. Staus et al. [22] show that a set of dominated vertices $D$ can be removed from the search space, i.e., there exists an optimal solution $S^*$ in $V \setminus D$. More precisely, every vertex $v \in V$ must either be in $V \setminus D$ or be dominated by a vertex in $V \setminus D$. Let $D \subseteq V$ be the set of all vertices removed from the search space according to this rule. Then, for all vertices $v \in D$, the ILP does not need the variables $x_{v,0}$. Note that $D$ is chosen such that $V \setminus D$ contains at least $k$ vertices.

² Note that this notion of sufficiency is relative to the solution found. If several optimal solutions exist, the ILP might be sufficient with respect to one solution but insufficient with respect to another.

3.2 Heuristic and Approximate Algorithms

Due to the NP-hardness of GCCM, many authors have proposed heuristic algorithms. Chen et al. [11] proposed a simple greedy heuristic, which we call Greedy. Essentially, Greedy starts with the empty set $S_0 = \emptyset$ and iteratively adds the vertex that yields the greatest marginal gain in group closeness centrality, i.e., $S_{i+1} = S_i \cup \{v\}$ with $v = \arg\max_{v' \in V \setminus S_i} \Delta(v' \mid S_i)$. The final solution is $S_k$.
In its simplest form, Greedy first computes the $O(n^2)$ distance matrix in $O(n(n + m))$ time using a breadth-first search (BFS) from each vertex. Determining $v$ then takes $O(n^2)$ time in each of the $k$ iterations. In their work, Chen et al. [11] claimed that Greedy has an approximation ratio of $(1 - 1/e)$ by using a well-known result for submodular set functions [17]. Bergamini et al. [8] later pointed out that Chen et al. [11] erroneously assumed that group closeness centrality is submodular; thus, the approximation claim could not be supported by their proof strategy. Consequently, the question of whether GCCM can be approximated was considered unsettled [2]. We later show that Greedy does not have an approximation guarantee. However, Bergamini et al. [8] still observed very high-quality solutions in experiments and proposed an improved greedy algorithm called Greedy++. It computes the same solution as Greedy in less time and space. They avoid creating the distance matrix and only keep a vector $d$ that stores the distance from each vertex to the current set $S_i$. To compute $\Delta(v' \mid S_i)$, they run a single-source shortest paths (SSSP) algorithm from $v'$ and compare the resulting distances to $d$. The memory requirement is reduced to $O(n)$, but the total running time increases to $O(kn(n + m))$, since each SSSP is implemented via a BFS. While the reduced memory requirement allows larger graphs to be processed, this running time is impractical, so they developed further improvements. These improvements do not reduce the theoretical running time but greatly reduce the running time in practice. For example, not every BFS needs to be run until completion. Once a vertex $v$ is encountered that can be reached faster from the current solution $S_i$ than from $v'$, the unexplored neighbors of $v$ can also be reached faster from $S_i$ than via $v$. Then, the BFS does not need to explore further from $v$.
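A minimal Python sketch of Greedy that evaluates marginal gains with the pruned BFS described above. It keeps only the vector $d$ of distances to the current set $S_i$; before the first vertex is chosen, $d$ is initialized with the sentinel value $n$, which exceeds every distance, so the first choice is simply a vertex of minimum farness. The sentinel, the tie-breaking, and all names are assumptions of this sketch, and the lazy, supermodularity-based re-evaluation of Greedy++ is not included.

```python
from collections import deque


def pruned_bfs(adj, d, source, update=False):
    """BFS from `source` that stops expanding at vertices the current group
    already reaches at least as fast (d[u] <= t). Returns the total farness
    reduction; with update=True, d is lowered in place to the new distances."""
    gain = 0
    seen = {source}
    queue = deque([(source, 0)])
    while queue:
        u, t = queue.popleft()
        if t >= d[u]:
            continue  # S_i is at least as close: do not expand from u
        gain += d[u] - t
        if update:
            d[u] = t
        for w in adj[u]:
            if w not in seen:
                seen.add(w)
                queue.append((w, t + 1))
    return gain


def greedy(adj, k):
    """Returns the chosen vertices (in order) and their group farness f(S_k)."""
    n = len(adj)
    d = {u: n for u in adj}  # sentinel larger than any distance ("not reached yet")
    group = []
    for _ in range(k):
        candidates = [v for v in adj if v not in group]
        best = max(candidates, key=lambda v: pruned_bfs(adj, d, v))
        pruned_bfs(adj, d, best, update=True)
        group.append(best)
    return group, sum(d.values())


path = {v: [w for w in (v - 1, v + 1) if 0 <= w < 7] for v in range(7)}
print(greedy(path, 1))  # ([3], 12)
print(greedy(path, 2))  # ([3, 0], 8)
```

On the 7-vertex path with $k = 2$, the sketch returns farness 8, whereas $\{1, 4\}$ attains farness 6, a small illustration that Greedy need not be optimal.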
Another improvement is the exploitation of supermodularity. In essence, this often allows them to skip reevaluating a vertex in iteration $i$ if it would have yielded a poor marginal gain in iteration $i - 1$.

Angriman et al. [4] proposed two local search algorithms with no known approximation guarantee. Their first algorithm, LocalSwap, starts by picking an initial solution $S$ uniformly at random. Then, it swaps vertices $v \in S$ with their neighbors $u \in N(v)$ if the group farness is expected to decrease. Instead of querying $f((S \setminus \{v\}) \cup \{u\})$ for each possible swap, they heuristically determine good pairs of vertices and keep auxiliary data structures to speed up the evaluation of $f$. If no swap is found, the algorithm terminates and returns the current set. Their second algorithm, GrowShrink, lifts the neighborhood restriction, as the previous algorithm may get stuck in local optima: swaps can now be performed between any pair of vertices. Again, an initial solution is picked uniformly at random at the start. Instead of swapping vertices directly, GrowShrink operates in two distinct phases, the grow-phase and the shrink-phase. During the grow-phase, the algorithm may add multiple vertices and therefore temporarily violate the constraint $|S| \le k$. In the shrink-phase, the algorithm then discards vertices such that the cardinality constraint is fulfilled again. The idea is that the grow-phase adds many beneficial vertices, while the shrink-phase discards the least beneficial ones.

In a later work, Angriman et al. [2] propose an algorithm with a $1/5$-approximation guarantee, thus settling the approximability question of GCCM. The local search algorithm is based on an algorithm by Arya et al. [5] for the related $k$-median problem. It starts with any solution $S$ and then performs swaps $(S \setminus \{u\}) \cup \{v\}$ with $u \in S$ and $v \in V \setminus S$ if the swap is beneficial. Once no possible swap improves the objective anymore, the algorithm terminates. They show that the result of Arya et al. [5] applies, i.e., for the output set $S$ it holds that $c(S) \ge \frac{1}{5} c(S^*)$ (i.e., $f(S) \le 5 f(S^*)$), with $S^*$ being an optimal solution. Since the approximation guarantee does not depend on the initial solution, different algorithms can be chosen to obtain the initial solution. Angriman et al. propose two algorithms, Greedy-LS-C and GS-LS-C, where the first uses the solution provided by the Greedy algorithm and the second utilizes the GrowShrink algorithm. Interestingly, the solution qualities of Greedy-LS-C and GS-LS-C are very similar. Finally, Rajbhandari et al. [18] proposed an anytime algorithm called Presto that finds heuristic solutions and, when run until termination, exact solutions.

4 Grover

The main contribution of this work is our exact algorithm called Grover³. It improves on ILPind from Staus et al. [22] by reducing the number of iterations and the number of variables in the ILP. The former is accomplished by first estimating a good initial solution and subsequently using that solution to fine-tune the number of variables used in the ILP. The latter is achieved by new reduction rules that allow for the removal of variables.

Recap. Recall that the algorithm ILPind from Staus et al. [22] starts by solving the ILP with variables $x_{v,i}$ for $v \in V$ and $i \in \{0, \ldots, d(v)\}$, where $d(v) = 2$. This way, solutions with $\mathrm{dist}(v, S^*) \le 1$, or with $\mathrm{dist}(v, S^*) = 2$ and $\mathrm{ecc}(v) = 2$, can be represented by the ILP, i.e., the ILP is sufficient for such solutions. For instance, if a dominating set of size $k$ exists, then this set is a solution that can be represented by the initial ILP.
After the model is optimized with the current values $\{d(v)\}_{v \in V}$, we may find that $x_{v,d(v)} = 1$ for some vertices $v$, indicating that $\mathrm{dist}(v, S^*) \ge d(v)$. If, in addition, $\mathrm{ecc}(v) > d(v)$, then we cannot rule out the possibility that $\mathrm{dist}(v, S^*) > d(v)$. For such vertices $v$, the value $d(v)$ needs to be incremented to make sure that a possible solution $S^*$ with $\mathrm{dist}(v, S^*) > d(v)$ can be represented by the ILP model. This process repeats until eventually a sufficient ILP is obtained.

4.1 Reducing Iterations

Consider a graph with a large diameter and a relatively small value of $k$. Since ILPind initially sets $d(v) = 2$ for all vertices, it is highly unlikely that the first ILP can represent any optimal solution. Similarly, the ILP will likely remain insufficient for the next few iterations, so much time is spent iterating towards the final sufficient ILP. On the other hand, setting $d(v) = \mathrm{ecc}(v)$ instantly yields a sufficient ILP. However, finding an optimal solution of this ILP is computationally expensive due to the large number of variables. Our idea to avoid both of these extremes is to use an estimate $2 \le \tilde{d}(v) \le \mathrm{ecc}(v)$ for each vertex $v$. The closer these estimates are to the values required by an optimal solution, the fewer iterations and unnecessary decision variables are needed. If indeed $\tilde{d}(v) = \mathrm{dist}(v, S^*) + 1$ and the optimal solution $S^*$ is unique, then the ILP would be sufficient in the first iteration with as few variables as possible. Note that if multiple optimal solutions exist, then additional iterations might be necessary to verify that no other solution is better than $S^*$. While an optimal solution $S^*$ and the distances $\mathrm{dist}(v, S^*)$ are not known a priori, a heuristic solution $\tilde{S}$ can be computed efficiently.
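The following Python sketch shows how a heuristic solution $\tilde{S}$ could be turned into initial values $\tilde{d}(v) = \max\{\mathrm{dist}(v, \tilde{S}) + 1, 2\}$. To obtain $\tilde{S}$, it uses a plain first-improvement version of the single-swap local search discussed in Section 3.2 and recomputes $f$ from scratch for every candidate swap; it is not GS-LS-C, and the names, the swap order, and the absence of any speed-up are assumptions made for brevity.

```python
from collections import deque


def bfs(adj, sources):
    """Multi-source BFS distances dist(v, sources) for all vertices v."""
    dist = {s: 0 for s in sources}
    queue = deque(dist)
    while queue:
        u = queue.popleft()
        for w in adj[u]:
            if w not in dist:
                dist[w] = dist[u] + 1
                queue.append(w)
    return dist


def farness(adj, group):
    return sum(bfs(adj, group).values())


def swap_local_search(adj, initial):
    """Apply improving swaps S - {u} + {v} until no single swap lowers f(S)."""
    group = set(initial)
    current = farness(adj, group)
    improved = True
    while improved:
        improved = False
        for u in sorted(group):
            for v in sorted(set(adj) - group):
                candidate = (group - {u}) | {v}
                value = farness(adj, candidate)
                if value < current:  # first improvement: accept and rescan
                    group, current, improved = candidate, value, True
                    break
            if improved:
                break
    return group


def initial_estimates(adj, heuristic_group):
    """d~(v) = max{dist(v, S~) + 1, 2} for the first ILP iteration."""
    dist = bfs(adj, heuristic_group)
    return {v: max(dist[v] + 1, 2) for v in adj}


path = {v: [w for w in (v - 1, v + 1) if 0 <= w < 7] for v in range(7)}
s_tilde = swap_local_search(path, {0})
print(s_tilde)                           # {3}
print(initial_estimates(path, s_tilde))  # {0: 4, 1: 3, 2: 2, 3: 2, 4: 2, 5: 3, 6: 4}
```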
Also recall that [2, 3, 8] showed that the solution quality of many heuristic algorithms is very high on real-world graphs. So $\mathrm{dist}(v, \tilde{S})$ may be a good estimate of $\mathrm{dist}(v, S^*)$ and can be used to determine $\tilde{d}(v)$. Adding this technique is relatively straightforward: derive the initial solution $\tilde{S}$ using any algorithm and then set $\tilde{d}(v) = \max\{\mathrm{dist}(v, \tilde{S}) + 1, 2\}$ for the first iteration. If the ILP is sufficient, then $S^* = \{v \in V \mid x_{v,0} = 1\}$; otherwise, for each $v$ with $x_{v,\tilde{d}(v)} = 1 \land \mathrm{ecc}(v) > \tilde{d}(v)$, the value $\tilde{d}(v)$ must be incremented. This might still be necessary for several iterations, depending on how close the estimate $\tilde{d}$ is to the final, sufficient values. To obtain the initial solution, we utilize GS-LS-C from Angriman et al. [3], as it gives an approximation guarantee for GCCM. However, any heuristic or approximation algorithm can be used to compute $\tilde{S}$.

³ Group closeness centrality maximization solver.

4.2 Absorbed Vertices

Staus et al. [22] showed that many vertices do not need to be included in the search space. A set $D \subseteq V$ of vertices can be removed from the search space if for each $v \in D$ there exists a vertex $u \in V \setminus D$ that dominates $v$. Intuitively, domination means that choosing $v$ in a solution can never lead to a better objective value than choosing $u$, since $u$ is at least as close to all vertices in the graph. Any algorithm can then restrict the search space to $V \setminus D$ (assuming at least $k$ vertices remain) and is still guaranteed to find an optimal solution. For the ILP, this is expressed by removing the variables $x_{v,0}$ for each $v \in D$. However, the variables $x_{v,1}, \ldots, x_{v,d(v)}$ are still created, since they are needed to compute the objective function. Our second improvement aims to extend this data reduction technique to also remove these decision variables.
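Computing a set $D$ of dominated vertices can be sketched in Python as follows: closed neighborhoods are sorted once, and for every edge a two-pointer scan checks whether $N[v] \subseteq N[u]$. Because two adjacent vertices with identical closed neighborhoods dominate each other, the sketch assumes integer vertex ids and removes only the larger id of such twins, so every removed vertex remains dominated by some kept vertex. It does not enforce the requirement that $V \setminus D$ contains at least $k$ vertices, and the function names are illustrative.

```python
def is_sorted_subset(a, b):
    """Two-pointer scan: True if every element of sorted list a occurs in sorted list b."""
    j = 0
    for x in a:
        while j < len(b) and b[j] < x:
            j += 1
        if j == len(b) or b[j] != x:
            return False
        j += 1
    return True


def dominated_vertices(adj):
    """Return D: vertices v with a neighbor u such that N[v] is a subset of N[u].

    For twins (N[v] == N[u]), only the vertex with the larger id is removed.
    """
    closed = {v: sorted(set(adj[v]) | {v}) for v in adj}
    removed = set()
    for v in adj:
        for u in adj[v]:  # a dominating vertex must be adjacent to v
            if is_sorted_subset(closed[v], closed[u]) and (
                len(closed[v]) < len(closed[u]) or v > u
            ):
                removed.add(v)
                break
    return removed


triangle_with_tail = {0: [1, 2], 1: [0, 2], 2: [0, 1, 3], 3: [2]}
print(sorted(dominated_vertices(triangle_with_tail)))  # [0, 1, 3]
```

Here vertex 2 dominates all other vertices, so it is the only vertex left in the search space.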
For example, consider a vertex $v$ with $\deg(v) = 1$, and let $u$ be its neighbor. Since $v$ is dominated by $u$ and hence not part of the search space, all shortest paths from $v$ to $S^*$ must pass through $u$, and we can deduce $\mathrm{dist}(v, S^*) = \mathrm{dist}(u, S^*) + 1$. Let $x_{u,i} = 1$ in the solved ILP. Since $v$ is one edge apart from $u$, we also know that $x_{v,i+1} = 1$ in the solved ILP. Since we are able to deduce the assignment of $x_{v,i+1}$ from $x_{u,i}$, we can remove it from the ILP. However, removing the variable alters the objective function of the ILP, so we additionally need to adjust the cost associated with $x_{u,i}$ such that the same function is still computed. The variable $x_{u,i}$ now also has to capture the distance to $v$, so instead of a cost of $i$, a cost of $i + (i + 1) = 2i + 1$ is now associated with the variable. In this case, we say that vertex $u$ absorbs vertex $v$. In general, a vertex $u$ may absorb $\alpha(u) \in \mathbb{N}$ neighbors. In that case, $u$ can capture the cost of all of these vertices by associating the cost $\alpha(u)(i + 1) + i$ with $x_{u,i}$ in the objective function. We denote by $A \subseteq V$ the set of all absorbed vertices in $G$.

There are even more cases in which a vertex $u$ can absorb its neighbors. Let $u$ be a cut vertex, i.e., $G$ is a connected graph but $G - u$ has at least two connected components. If there is a component $C = \{w_1, \ldots, w_\ell\} \subseteq D$ of $G - u$ such that all its vertices are dominated by $u$, then $u$ can absorb $w_1, \ldots, w_\ell$. In that case, each shortest path from $w_1, \ldots, w_\ell$ to $S^*$ must pass through $u$, hence $\mathrm{dist}(w_j, S^*) = \mathrm{dist}(u, S^*) + 1$, and the same argument as above applies. Figure 1 shows multiple cases where vertices can and cannot be absorbed. A proof that this search space reduction is correct is given in the next section.

Runtime Analysis. The dominated vertices can be determined by first sorting the neighborhoods of all vertices in $\sum_{v \in V} \deg(v) \log \deg(v) \in O(m \Delta(G))$ time.
Then, for each of the $m$ pairs of adjacent vertices, a linear scan of the sorted neighborhoods reveals whether one dominates the other. This takes at most $O(m \Delta(G))$ time. To compute the set of absorbed vertices $A$, Tarjan's [23] $O(n + m)$ biconnectivity algorithm is first used to identify cut vertices. Afterwards, it is determined for each cut vertex whether it dominates a complete component. Since the vertex has at most $\Delta(G)$ neighbors and the domination relation between all neighboring vertices has already been computed, this costs at most $O(\Delta(G))$ time per vertex. For at most $n$ cut vertices, this takes at most $O(n \Delta(G))$ time. In total, a running time of $O(m \Delta(G))$ is required.

Figure 1: Absorbed vertices. In cases (a) and (b), the red vertices can be absorbed by their neighboring blue vertex. In case (b), any combination of the dashed edges results in the blue vertex absorbing its neighbors. In case (c), no vertex can be absorbed.

4.3 The Complete Algorithm

Grover starts by determining the set of dominated vertices $D$ and the set of absorbed vertices $A \subseteq D$. Afterwards, GS-LS-C [3] is used to compute an initial solution $\tilde{S}$. Based on this estimate, $\tilde{d}(v) = \max\{\mathrm{dist}(v, \tilde{S}) + 1, 2\}$ is determined for each vertex $v$ for the first iteration of the reduced ILP. Our reduced ILP then reads as follows:

$$
\begin{aligned}
\text{minimize} \quad & \sum_{v \in V \setminus A} \sum_{i \in \{0, \ldots, \tilde{d}(v)\}} x_{v,i} \cdot \big(\alpha(v)(i + 1) + i\big) \\
\text{subject to} \quad & \sum_{v \in V \setminus D} x_{v,0} = k && (9)\\
& \sum_{i \in \{0, \ldots, \tilde{d}(v)\}} x_{v,i} = 1 && \forall v \in V \setminus A \quad (10)\\
& x_{v,i} \le \sum_{w \in V \setminus D :\, \mathrm{dist}(v, w) = i} x_{w,0} && \forall v \in V \setminus A,\ \forall i \in \{0, \ldots, \tilde{d}(v)\}. \quad (11)
\end{aligned}
$$

Note that in the worst case, the reduced ILP still has the same number of variables and constraints as ILPind, namely $O(n \cdot \mathrm{diam}(G))$.
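To check the cost term $\alpha(v)(i + 1) + i$ of the reduced objective, the following Python sketch handles only the simplest absorption case, a degree-one vertex whose neighbor has a larger degree; the cut-vertex case and the ILP itself are omitted. For a set $S$ that avoids absorbed vertices, the reduced objective evaluated at $i = \mathrm{dist}(v, S)$ should coincide with the group farness $f(S)$. The function names and the example graph are illustrative assumptions.

```python
from collections import deque


def bfs(adj, sources):
    """Multi-source BFS distances dist(v, sources) for all vertices v."""
    dist = {s: 0 for s in sources}
    queue = deque(dist)
    while queue:
        u = queue.popleft()
        for w in adj[u]:
            if w not in dist:
                dist[w] = dist[u] + 1
                queue.append(w)
    return dist


def absorb_leaves(adj):
    """Degree-one case only: a leaf v whose neighbor u has degree > 1 is absorbed by u.

    Returns the absorbed set A and alpha(u), the number of vertices absorbed by u.
    """
    absorbed = set()
    alpha = {v: 0 for v in adj}
    for v in adj:
        if len(adj[v]) == 1:
            u = next(iter(adj[v]))
            if len(adj[u]) > 1:
                absorbed.add(v)
                alpha[u] += 1
    return absorbed, alpha


def reduced_objective(adj, group, absorbed, alpha):
    """Sum over v in V minus A of alpha(v) * (i + 1) + i, with i = dist(v, S)."""
    dist = bfs(adj, group)
    return sum(alpha[v] * (dist[v] + 1) + dist[v] for v in adj if v not in absorbed)


# Star with centre 0 and leaves 1-4, plus a tail 0 - 5 - 6
g = {0: [1, 2, 3, 4, 5], 1: [0], 2: [0], 3: [0], 4: [0], 5: [0, 6], 6: [5]}
A, alpha = absorb_leaves(g)
for S in ([0], [5], [0, 5]):
    print(S, reduced_objective(g, S, A, alpha), sum(bfs(g, S).values()))
# [0] 7 7
# [5] 10 10
# [0, 5] 5 5
```

Only vertices 0 and 5 keep distance variables here, yet the reduced objective reproduces the group farness for every tested $S$.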
If, after solving the ILP, we encounter $x_{v,\tilde d(v)} = 1 \land \mathrm{ecc}(v) > \tilde d(v)$ for any $v \in V \setminus A$, then the ILP may be insufficient to represent an optimal solution. In this case, we increase $\tilde d(v) \leftarrow \tilde d(v) + 1$ for all vertices that did not pass the check and solve the ILP again with the new $\tilde d(v)$-values. This is repeated until the ILP is sufficient; then $S^* = \{v \in V \setminus D \mid x_{v,0} = 1\}$ can easily be extracted.

For high-level pseudocode of the full algorithm, see Algorithm 1. Lines 2 and 3 handle the case $k = 1$, where the Greedy algorithm returns the exact solution. In Line 4, the dominated and absorbed vertex sets are determined, and in Line 5, the heuristic solution is computed using GS-LS-C [3]. This solution is used to estimate $\tilde d(v)$ for each vertex $v$ in Lines 6 and 7. The main work of the algorithm occurs in the loop from Lines 8 to 16. In Line 9, the reduced ILP is solved, and Lines 10 to 14 check whether the ILP is sufficient: for each vertex $v \in V \setminus A$, it is checked whether the ILP is insufficient for $v$, and if so, the corresponding $\tilde d(v)$-value is incremented (Line 14). Only when all vertices pass the check does the algorithm return the solution in Line 16.

Algorithm 1 Grover
1: function Solve($G$: Graph, $k$: $\mathbb{N}$)
2:   if $k = 1$ then
3:     return solution of Greedy with $k = 1$   ▷ Greedy is exact for $k = 1$
4:   compute $D$, $A$ and $\{\alpha(v)\}_{v \in V \setminus A}$
5:   compute approximate solution $\tilde S$   ▷ Using GS-LS-C
6:   for $v \in V \setminus A$ do
7:     $\tilde d(v) \leftarrow \max\{2, \mathrm{dist}(v, \tilde S) + 1\}$   ▷ See Section 4.1
8:   while true do
9:     solve reduced ILP using $D$, $A$, $\{\tilde d(v)\}_{v \in V \setminus A}$ and $\{\alpha(v)\}_{v \in V \setminus A}$   ▷ See Section 4.2
10:    ilpSufficient ← true
11:    for $v \in V \setminus A$ do   ▷ Check if the ILP is sufficient
12:      if $x_{v,\tilde d(v)} = 1$ and $\tilde d(v) < \mathrm{ecc}(v)$ then   ▷ Is $\tilde d(v)$ insufficient for $v$?
13:        ilpSufficient ← false
14:        $\tilde d(v) \leftarrow \tilde d(v) + 1$
15:    if ilpSufficient then
16:      return $\{v \in V \setminus D \mid x_{v,0} = 1\}$

Proof of Correctness. To prove the correctness of our algorithm, we show that the new ILP formulation indeed finds an optimal set $S^*$. We accomplish this by showing that the new ILP formulation and the ILPind formulation (see Section 3.1) optimize the same function. Additionally, we show that the variable assignment of one ILP can be inferred from the variable assignment of the other. Since ILPind computes an optimal set $S^*$ (see [22] for a proof), so does our algorithm. Note that reducing the number of iterations by estimating the initial $\tilde d(v)$-values does not affect the correctness guarantee.

▶ Theorem 1. Let a reduced, optimized ILP be sufficient, i.e., after optimizing the ILP, for every $v \in V \setminus A$ either $x_{v,\tilde d(v)} = 0$, or $x_{v,\tilde d(v)} = 1$ and $\tilde d(v) \ge \mathrm{ecc}(v)$. Then $S^* = \{v \in V \setminus D \mid x_{v,0} = 1\}$ is a globally optimal solution.

Proof. Let $\{\tilde x_{v,i} \mid 0 \le i \le \tilde d(v)\}_{v \in V \setminus A}$ be the variables of the reduced model for the values $\{\tilde d(v)\}_{v \in V \setminus A}$ of the last iteration. By the definition of our algorithm, these variables correspond to an optimal solution of the ILP and are sufficient, i.e., for each $v \in V \setminus A$, we have $\tilde x_{v,\tilde d(v)} = 0$, or both $\tilde x_{v,\tilde d(v)} = 1$ and $\mathrm{ecc}(v) \le \tilde d(v)$. Consider the original ILP model used in ILPind, for which we introduce variables $\{x_{v,i} \mid 0 \le i \le d(v)\}_{v \in V}$ with

$$d(v) = \begin{cases} \tilde d(v) & v \in V \setminus A \\ \tilde d(\rho(v)) + 1 & v \in A \end{cases} \tag{12}$$

where $\rho(v)$ denotes the vertex that absorbed $v$. We assign

$$x_{v,i} = \begin{cases} 0 & v \in A,\ i = 0 \\ \tilde x_{\rho(v),\, i-1} & v \in A,\ i \ge 1 \\ \tilde x_{v,i} & v \in V \setminus A \end{cases} \qquad \text{for } 0 \le i \le d(v). \tag{13}$$

This construction yields a feasible solution of the original ILP model (which is also sufficient by construction). It remains to show that this variable assignment corresponds to an optimal solution.
First, note that by the reduction rules, the identity

$$\sum_{v \in V \setminus A} \sum_{i \in \{0, \dots, \tilde d(v)\}} \alpha(v) \cdot (i+1) \cdot \tilde x_{v,i} = \sum_{v \in A} \sum_{i \in \{0, \dots, \tilde d(\rho(v))\}} (i+1) \cdot \tilde x_{\rho(v),i} \tag{14}$$

holds. Roughly speaking, this identity expresses that the absorbed cost can be counted either by considering the absorbing vertices or by considering the absorbed vertices. For the objective values $\tilde f^*$ of the solution of the reduced ILP and $f^*$ of the ILP used in ILPind, we then get

$$\begin{aligned}
\tilde f^* &= \sum_{v \in V \setminus A} \sum_{i \in \{0, \dots, \tilde d(v)\}} \bigl(\alpha(v) \cdot (i+1) + i\bigr) \cdot \tilde x_{v,i} \\
&= \sum_{v \in V \setminus A} \sum_{i \in \{0, \dots, \tilde d(v)\}} i \cdot \tilde x_{v,i} + \sum_{v \in V \setminus A} \sum_{i \in \{0, \dots, \tilde d(v)\}} \alpha(v) \cdot (i+1) \cdot \tilde x_{v,i} \\
&\overset{(14)}{=} \sum_{v \in V \setminus A} \sum_{i \in \{0, \dots, \tilde d(v)\}} i \cdot \tilde x_{v,i} + \sum_{v \in A} \sum_{i \in \{0, \dots, \tilde d(\rho(v))\}} (i+1) \cdot \tilde x_{\rho(v),i} \\
&\overset{(\star)}{=} \sum_{v \in V \setminus A} \sum_{i \in \{0, \dots, d(v)\}} i \cdot x_{v,i} + \sum_{v \in A} \sum_{i \in \{0, \dots, d(v)\}} i \cdot x_{v,i} \\
&= \sum_{v \in V} \sum_{i \in \{0, \dots, d(v)\}} i \cdot x_{v,i} = f^*
\end{aligned} \tag{15}$$

where $(\star)$ follows by applying (12) and (13) together with an index shift. Hence, the constructed solution of the original ILP used in ILPind has the same objective value $f^* = \tilde f^*$ as the solution of the reduced ILP. However, we have yet to show that no strictly better solution of the original ILP exists. Therefore, assume for a contradiction that the constructed solution of the original model is not optimal, i.e., there is a feasible assignment of the variables of the original model with objective value $f' < f^*$. We refer to the corresponding variable assignment as $\{\hat x_{v,i} \mid 0 \le i \le d(v)\}_{v \in V}$. We argue that from this better solution, we can construct a better solution of the reduced ILP, contradicting its optimality. Indeed, using $\tilde x_{v,i} = \hat x_{v,i}$ for all $v \in V \setminus A$ and $0 \le i \le d(v)$ as an assignment for the reduced ILP, we obtain a feasible solution of the reduced ILP.
Applying the same rearrangements as in (15), we conclude that this solution has objective value $f' < \tilde f^*$, a contradiction to the optimality of the solution of the reduced ILP. ◀

5 Approximation Results

In this section, we present two results concerning the approximation of GCCM. The first one is the use of dominating vertices in GS-LS-C [3], a 1/5-approximation algorithm. The second result is a proof that the Greedy algorithm does not have an approximation guarantee.

Dominating Vertices in GS-LS-C. Due to the NP-hardness of GCCM, heuristic and approximation algorithms are the only practical approach for graphs with millions of vertices. Angriman et al. [2] present an algorithm based on the 5-approximation for the $k$-median problem by Arya et al. [5]. They show that the algorithm translates to a 5-approximation of group farness and therefore a 1/5-approximation for GCCM. The algorithm performs objective-improving vertex swaps: as long as there exist vertices $s \in S$ and $o \in V \setminus S$ such that $f((S \setminus \{s\}) \cup \{o\}) < f(S)$, the solution is updated to $(S \setminus \{s\}) \cup \{o\}$. When no improving swap exists, the algorithm terminates and the approximation guarantee holds.

Figure 2: Sketch of a graph $G_r$ of the family $(G_r)_{r \in \mathbb{N}}$ used to prove that Greedy does not have an approximation guarantee.

Angriman et al. [2] already restrict the search space by not considering swaps with degree-1 vertices. This optimization is possible because using the neighbor of a degree-1 vertex instead of the vertex itself never worsens the solution quality. The same idea can be extended to a set $D$ of dominated vertices, where for every $v \in D$ there is a vertex $u \in V \setminus D$ such that $v$ is dominated by $u$, i.e., $N[v] \subseteq N[u]$. Then, there exists an optimal solution $S^* \subseteq V \setminus D$, as shown by Staus et al. [22].
Therefore, the proof of the approximation guarantee by Arya et al. [5] remains applicable when the search space is restricted to swaps with vertices in $V \setminus D$: the analysis only uses the absence of improving swaps that exchange a vertex of the current solution for a vertex of an optimal solution, and such an optimal solution can be chosen inside $V \setminus D$.

Greedy Does Not Approximate. As mentioned in the related work, Chen et al. [11] presented the Greedy algorithm, which starts from the empty set $S_0 = \emptyset$ and iteratively adds the vertex that improves the score the most. That is, $S_i = S_{i-1} \cup \{v_i\}$ with $v_i = \arg\max_{w \in V \setminus S_{i-1}} c(S_{i-1} \cup \{w\})$. They wrongly assumed that $c(\cdot)$ is submodular and therefore claimed that a result of Nemhauser et al. [17] for submodular functions holds, which asserts that the greedy strategy yields a $(1 - 1/e)$-approximation. However, Bergamini et al. [8] showed that $c(\cdot)$ is not submodular, and therefore the approximation guarantee cannot be concluded from the result of Nemhauser et al. [17]. Note that the lack of submodularity does not necessarily mean that the greedy strategy performs poorly. Bergamini et al. [8] observed very high empirical approximation factors, never lower than 0.97, in experiments on real-world graphs. In conclusion, while Greedy performs well in practice, whether it has an approximation guarantee was left open. Here, we show that Greedy can produce solutions whose scores differ from the optimal score by an arbitrarily large ratio. Therefore, Greedy does not have an approximation guarantee.

▶ Theorem 2. Greedy does not have an approximation guarantee.

Proof. We show the claim by constructing a family of graphs $(G_r)_{r \in \mathbb{N}}$ for which Greedy may produce arbitrarily poor approximations for $k = 2$. A graph $G_r$ of the family contains a path $P$ with end vertices $e_1$ and $e_2$. $P$ has an odd number of vertices (precisely $2r - 1$) and therefore has a central vertex $c$. Attached to each of $e_1$ and $e_2$ are $r^2$ leaves (degree-1 vertices).
Figure 2 shows an illustration of such a graph. Let us denote by $S^G_r$ and $S^*_r$ the solution produced by Greedy and an optimal solution for $G_r$ with $k = 2$, respectively. Recall that $f(S) = \sum_{v \in V(G)} \mathrm{dist}(v, S)$ denotes the group farness of $S$. Now consider the solution of Greedy. The algorithm first chooses the vertex $c$, as it is the most central one. Afterwards, the vertices $e_1$ and $e_2$ are equally well suited to be chosen; w.l.o.g., we assume that the algorithm chooses $e_1$ and obtain $S^G_r = \{c, e_1\}$. The $r^2$ leaves attached to $e_2$ each contribute a cost of $r$ to $f(S^G_r)$. Therefore, we get $f(S^G_r) \ge r^3$. On the other hand, consider the solution $S_e := \{e_1, e_2\}$. Clearly, $f(S^*_r) \le f(S_e)$. We argue that $f(S_e)$ is upper bounded by a quadratic expression in $r$, and thus $f(S^*_r)$ is as well. Indeed, the leaves attached to $e_1$ and $e_2$ incur a total cost of $2r^2$ to $f(S_e)$. It remains to consider the cost incurred by the vertices in $P$, which is upper bounded by $2\sum_{i=1}^{r-1} i = r^2 - r$. Therefore, overall $f(S^*_r) \le f(S_e) \le 3r^2 - r$, and hence we get

$$\frac{f(S^G_r)}{f(S^*_r)} \ge \frac{r^3}{3r^2 - r} \to \infty \quad \text{for } r \to \infty,$$

and similarly $c(S^*_r)/c(S^G_r) \to \infty$ for $r \to \infty$, showing the claim. ◀

The proof is, of course, independent of the numerator $x$ used to define $c(S) = x/f(S)$. Note that while we assumed $k = 2$, similar families of graphs can be constructed for any integer constant $k > 2$: let a connected component of $G_r - c$ be called a flower. By starting with $c$ and attaching $k$ flowers to $c$, we obtain graphs to which the same argument applies. Since $c$ is always chosen as the first vertex, there is at least one flower from which no vertex is chosen, which incurs a cost in $\Omega(r^3)$. An optimal strategy chooses the vertices $e_i$, $i = 1, \dots, k$, and therefore only incurs a cost in $O(r^2)$.

Gong et al. [13] analyze the simple greedy algorithm for non-negative monotone set functions with generic submodularity ratio $\gamma$, a scalar measuring how close a function is to being submodular, where $\gamma \in [0, 1]$ and $\gamma = 1$ if and only if the function is submodular. While Bergamini et al. [8] already showed that $c(\cdot)$ is not submodular, i.e., $\gamma < 1$, our result implies the following stronger statement.

▶ Corollary 3. The group closeness centrality function $c(\cdot)$ can have a submodularity ratio $\gamma$ arbitrarily close to zero.

Proof. Let $g$ be a non-negative monotone set function with generic submodularity ratio $\gamma$. Let $S^G$ be the set computed by the simple greedy algorithm with $k \in \mathbb{N}$. Gong et al. [13] show that the simple greedy algorithm obtains the approximation guarantee $g(S^G) \ge (1 - e^{-\gamma})\, g(S^*)$, where $S^*$ denotes the optimal solution. By Theorem 2, in the expression $c(S^G) = \alpha \cdot c(S^*)$, the factor $\alpha \in \mathbb{R}$ may get arbitrarily close to zero. Since $\alpha \ge 1 - e^{-\gamma}$, this implies the same for $\gamma$. ◀

6 Experimental Evaluation

We experimentally evaluate our techniques to assess their impact on real-world graphs. First, we evaluate each technique on its own (Section 6.1) before comparing Grover to current state-of-the-art solvers (Section 6.2). In Section 6.3, we evaluate the impact of using dominating vertices in GS-LS-C [2] to restrict the search space.

Data and Experimental Setup. To evaluate our algorithms, we use a superset of the 32 graphs used by Staus et al. [22]. These are taken from KONECT [16] and the Network Data Repository [19] and comprise social, collaboration, biological, economic, informational, brain, and miscellaneous networks of varying size and structural properties. They range from small graphs with about 50 vertices to graphs with more than 40 000 vertices. See Table 2 in the Appendix for a complete list with more detailed statistics.
The experiments are performed on an Intel(R) Xeon(R) Silver 4216 CPU @ 2.10GHz with 92 GiB RAM, running Ubuntu 24.04.3. All experiments are performed serially, using a single thread. Note that Grover simply runs Greedy for $k = 1$ and hence solves these instances very quickly. For a fair comparison, we therefore evaluate each algorithm on each graph with $k \in \{2, \dots, 20\}$. For each graph-$k$ instance, the algorithm has 10 minutes to compute an optimal solution. We record how many instances are solved and how much time this takes, excluding I/O. Each instance is run five times, and the resulting times are averaged.

Solvers. We compare our algorithm Grover⁴ against ILPind [22], DVind [22] and SubModST [26]. ILPind is the current state-of-the-art algorithm for GCCM, and we modified it to also use Gurobi 12.0.1 [14]. DVind is the fastest explicit branch-and-bound algorithm for GCCM (see Section 3), and it also uses dominating vertices to initially reduce the search space. Both ILPind and DVind are implemented in Kotlin and run using Java OpenJDK 21.0.8. SubModST is, like DVind, a branch-and-bound algorithm, but it employs more pruning techniques and optimizations to speed up the search. However, SubModST is a solver for general submodular function maximization and does not utilize dominating vertices. It is implemented in C++17 and compiled using gcc 15.1.0 with -O3 optimization. Our algorithm Grover is implemented in C++20 and compiled like SubModST. In the approximate setting, we compare against GS-LS-C [3], which is implemented in the C++ library NetworKit [3]. It is likewise compiled with the highest optimization level, and we modified its source code to incorporate the dominating vertices.

Addressing Implementation Differences.
Since the implementations of Grover and ILPind differ, for example in the programming language used, a proper comparison has to take these differences into account. In the following sections, the algorithm Base refers to our re-implementation of ILPind. Grover is built on top of Base by extending it with our improvements. When comparing Base to ILPind using the sum of running times over all instances solved by both algorithms, Base achieves a modest 1.17× speedup. We use this measure rather than the geometric mean of speedups to minimize the skew caused by JVM warm-up (among other effects of implementation differences) on instances that are solved quickly. Consequently, to evaluate the effect of our algorithmic techniques, we primarily compare Grover directly against Base. This comparison ensures that any observed speedup can be attributed to our algorithmic improvements rather than to implementation details.

6.1 Absorbed Vertices and Improved $d(v)$

In this section, we evaluate the effect of absorbing vertices and of initially approximating the $d(v)$-values. Recall that absorbing vertices is a data reduction technique that allows us to remove variables and corresponding constraints from the ILPs. Approximating the $d(v)$-values increases the size of the first ILP to be solved; however, it should result in fewer iterations of the algorithm overall and therefore improve the running time. Additionally, we compare the running time of Grover when it is initialized by GS-LS-C [3] and when it is initialized with an optimal solution. This comparison provides insight into how much further speedup one can expect from better initial solutions.

First, we compare the effect of absorbing vertices (AV) and of approximating the $d(v)$-values. The Base algorithm only uses dominating vertices and the default initialization $d(v) = 2$. Base is in essence ILPind from Staus et al. [22].
We then compare against the algorithm Base + AV, which additionally enables absorbing vertices but keeps $d(v) = 2$. The algorithm Base + $\tilde d(v)$ approximates the $d(v)$-values but does not enable absorbing vertices. Finally, Grover enables absorbing vertices and approximates the $d(v)$-values. Figure 3 shows the speedup of each configuration over Base. For each instance, the speedup is determined, and these values are sorted in increasing order. Note that these speedups are determined on the 473 instances that every configuration solved. Base + AV, Base + $\tilde d(v)$ and Grover solve 481, 506 and 514 instances, respectively. Table 3 in the Appendix shows a more detailed view for each algorithm and each graph.

⁴ Available at § /Grover

Figure 3: [Plot: x-axis #Instances, y-axis speedup over Base (log scale); series Grover, Base + AV, Base + $\tilde d(v)$, Base.] Speedup of different optimizations over Base. Base uses dominating vertices and $d(v) = 2$. Absorbing vertices (AV) and $\tilde d(v)$ are evaluated separately. Grover uses both.

Figure 4: [Plot: x-axis #Instances, y-axis speedup of opt Grover over Grover.] Speedup of Grover initialized with an optimal solution (opt Grover) compared to the default initialization with GS-LS-C [3]. Speedups are determined per instance and then sorted.

Base is overall the slowest configuration, as the other configurations have mean speedups of 1.55×, 1.39× and 1.94×, respectively. Note, however, that these mean speedups are skewed by many easy-to-solve instances, for which the overhead of computing GS-LS-C has a detrimental effect on the total running time. This can be seen for Base + $\tilde d(v)$ and Grover in Figure 3. Therefore, we also measure the speedup as the ratio of the total times needed to solve all 473 instances and simply refer to this measure as speedup, while we refer to the geometric mean of the per-instance speedups as mean speedup.
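The difference between the two measures can be illustrated with a small Python sketch on hypothetical running times (not measured data). A fixed overhead on easy instances pulls the geometric mean down even when the total running time improves substantially. The function names are our own, and, as an assumption mirroring the restriction to commonly solved instances, only instances present in both dictionaries are compared.

```python
import math


def total_time_speedup(base_times, new_times):
    """'Speedup': ratio of summed running times over commonly solved instances."""
    common = base_times.keys() & new_times.keys()
    return sum(base_times[i] for i in common) / sum(new_times[i] for i in common)


def mean_speedup(base_times, new_times):
    """'Mean speedup': geometric mean of the per-instance ratios base / new."""
    common = base_times.keys() & new_times.keys()
    log_sum = sum(math.log(base_times[i] / new_times[i]) for i in common)
    return math.exp(log_sum / len(common))


if __name__ == "__main__":
    # Hypothetical times in seconds: two easy instances on which a fixed
    # preprocessing overhead makes the new variant slower, and one hard instance.
    base = {"easy-1": 0.1, "easy-2": 0.1, "hard": 100.0}
    new = {"easy-1": 0.2, "easy-2": 0.2, "hard": 25.0}
    print(round(total_time_speedup(base, new), 2))  # 3.94
    print(round(mean_speedup(base, new), 2))        # 1.0
```

Here the new variant is 4× faster on the only instance that dominates the total time, yet its mean speedup is 1.0 because the two easy instances are each 2× slower. This is the skew the total-time measure is meant to avoid.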
Relative to Base, Base + AV achieves a speedup of 1.68×, while Base + $\tilde d(v)$ achieves a speedup of 2.25×. Grover achieves a speedup of 3.21×. For harder instances that require more time, our improvements have a greater effect in reducing the running time. While Grover has the largest speedup, it is not the fastest configuration on every instance. In total, 514 instances were solved by at least one configuration. Of these, Grover is the fastest on 289, Base + AV on 83, Base on 102, and Base + $\tilde d(v)$ on 40 instances. For larger instances, Grover is mostly the fastest algorithm; for smaller instances, however, there is no clear choice.

Secondly, we compare Grover when it is initialized with an approximate solution and with an optimal solution. Grover uses GS-LS-C [3] to compute an approximate initial solution, from which the $\tilde d(v)$-values are determined. While this improves the running time, it is unclear how important the quality of the initial approximation is. We therefore also kickstart Grover with an optimal solution and determine the $\tilde d(v)$-values from it. A large difference between the running times would indicate that a better approximation would indeed help to improve the running time of Grover. If the differences are small, this indicates that the approximation is already of high quality, implying that further improvements would not significantly speed up Grover. Note that initializing Grover with an optimal solution means that the algorithm essentially only has to verify whether the solution is indeed optimal. However, this does not imply that only one ILP iteration is necessary. While the ILP is sufficient for the optimal input solution, it may not be sufficient for another optimal solution.
To verify that the other solution is indeed not strictly better, the algorithm may need to enlarge the ILP and the corresponding $\tilde d(v)$-values. Overall, this could even result in longer running times than with the approximate initialization. For this experiment, only instances where Grover finishes within 10 minutes are used. Note that we exclude the time Grover spends running GS-LS-C, since opt Grover has no additional cost for obtaining the optimal solution. Therefore, only the time spent solving the ILPs is measured. Figure 4 shows the corresponding speedup, while Table 3 in the Appendix shows a more detailed view. The speedups are computed per instance and sorted afterwards. Of the 514 instances, 286 are solved faster with an optimal solution, while 228 are solved slower. The smallest speedup is 0.68× for ca-netscience and $k = 15$; however, the mean speedup over all slower instances is 0.97×, so the loss in time is negligible. Overall, the speedup is 1.14×, while the mean speedup is 1.16×. For 38 instances (7.3%), a speedup greater than 2× is achieved. A speedup greater than 3× is achieved for 6 instances, and a speedup greater than 4× for only 1 instance.

Figure 5: [Plot: x-axis #Instances, y-axis time in seconds (log scale); legend Grover (514 solved), ILPind (464 solved), DVind (387 solved), SubModST (228 solved).] Number of solved instances for Grover (ours), ILPind [22], DVind [22] and SubModST [26]. Grover solves the most instances in the least amount of time.

Figure 6: [Histogram: x-axis #Iterations (1 to 10, >10), y-axis #Instances; series Grover, ILPind.] Distribution of the number of iterations that Grover (ours) and ILPind [22] need to solve all instances. Grover solves more instances in fewer iterations.
For many instances, initialization with an optimal solution does not greatly reduce the running time, indicating that the approximate initialization with GS-LS-C [3] is already of high quality.

6.2 Comparison to SOTA

We compare our algorithm Grover against ILPind [22], DVind [22] and SubModST [26]. Figure 5 shows the number of instances each algorithm was able to solve. Table 1 in the Appendix holds more detailed running times for each algorithm and graph. While SubModST is very fast on the first few instances, it is overall the slowest algorithm and only solves 228 instances. DVind, the other explicit branch-and-bound algorithm, performs better, as it solves 387 instances. This is likely due to its additional use of dominating vertices, which SubModST does not utilize. The iterative ILP algorithms ILPind and Grover perform best. ILPind solves 464 instances overall, while Grover solves 514 out of the 646 possible instances. Grover has a speedup of 3.65× compared to ILPind and a maximum speedup of 22.37×. Grover is slower on only 8 of the 464 instances solved by both solvers. On these, Grover's worst speedup is 0.57×, while the mean speedup on those 8 instances is 0.75×. Note that Grover spends more than 95% of its time solving the ILPs.

Figure 6 shows how many ILP iterations Grover and ILPind need to solve the instances. This plot only includes the 464 instances that both Grover and ILPind are able to solve. Grover solves considerably more of these instances within few iterations than ILPind. About 38% of the instances are solved by Grover within one iteration, while ILPind only solves about 16% within one iteration. For more than 50% of the instances, ILPind needs 5 or more iterations, while Grover needs 5 or more iterations for only about 17% of all instances. When comparing the algorithms per instance, Grover needs on average 39.47% fewer iterations than ILPind. The greatest absolute reduction occurs for inf-power and $k = 6$, where ILPind needs 19 iterations while Grover requires six. Note that there is also one instance, econ-mahindas and $k = 20$, where ILPind requires fewer iterations, namely three compared to four for Grover. However, every instance solved by ILPind is also solved by Grover.

Figure 7 shows the number of iterations for each graph and $k = 5$. Each tick on the x-axis represents one graph; the graphs are ordered first by their diameter and, if equal, by their number of vertices. For visual clarity, only their diameter is shown. For graphs with smaller diameter ($\le 12$), Grover either uses the same number of iterations as ILPind or completes within one iteration. Only for arenas-jazz and econ-mahindas does Grover require two and three iterations, respectively. For graphs with a larger diameter ($> 12$), Grover almost always halves the number of required iterations. This indicates that approximating the $d(v)$-values is important for graphs with a large diameter.

Figure 7: [Plot: x-axis one tick per graph, labeled by graph diameter (3 to 46); y-axis #Iterations; series Grover, ILPind.] Comparison of the number of iterations of ILPind [22] and Grover for $k = 5$. Each tick on the x-axis represents a graph. The graphs are ordered first by diameter and then by $n$.

Figure 8: [Plot: x-axis #Instances, y-axis speedup; series $k = 10$, $k = 50$, $k = 100$.] Running-time ratio of GS-LS-C [2] without and with dominating vertices. A value greater than 1 means that using dominating vertices improves the running time.

6.3 Approximation

We test the values $k \in \{10, 50, 100\}$ on each graph with more than 100 vertices.
Each graph-$k$ instance is run five times, and the required time and achieved group farness are averaged across the five runs. Figure 8 shows the resulting speedups. For $k = 10$, the speedups vary the most: the minimum recorded speedup is 0.69×, while the maximum speedup is 2.3×. The mean speedup is 1.33×. For some instances, a slowdown occurs because the dominated vertices first have to be determined. For smaller instances, which can already be solved rather quickly, this additional running time is detrimental. This effect vanishes once GS-LS-C takes more time per instance, i.e., as $n$ increases or for $k = 50$ and $k = 100$. The minimum speedups for these values of $k$ are 0.85× and 0.90×, respectively. The mean speedups are 1.44× and 1.51×, while the maximum speedups reach 2.85× and 2.94×, respectively. The solution quality remains largely unaffected. For $k = 10$, the greatest increase in group farness occurs for email-univ, where it increases from 2046 to 2049.2, an increase of about 0.15%. For $k = 50$ and $k = 100$, the greatest relative increases are similar, namely 0.27% and 0.01%, respectively. They occur for the graphs inf-power and email-univ. On the other hand, there are also no large quality improvements. For $k = 10$, no instance was solved with a strictly better group farness, while for $k = 50$ and $k = 100$ the relative improvement is never greater than 0.27%. Overall, restricting the search space in GS-LS-C [2] with dominating vertices improves the running time without noticeably altering the solution quality.

7 Conclusion and Future Work

Group closeness centrality maximization asks for a set of $k$ vertices such that the sum of the distances from all vertices of the graph to this set is minimized. In this work, we presented two improvements to the state-of-the-art exact algorithm ILPind [22].
One is based on a data reduction technique to reduce the num b er of decision v ariables, while the other reduced the num b er of ILPs to b e solved. Overall, these improv ements lead to a sp eedup of 3 . 65 × and achiev e maxim um sp eedups of up to 22 . 37 × . F or the appro ximate case, w e show ed that a known reduction tec hnique for the exact case can be applied to a sw ap-based approximation algorithm without impacting the 1 / 5 -appro ximation guarantee. Our experiments indicate that using the reduction sp eeds up the appro ximation algorithm b y 1 . 4 × . Additionally , we show ed that a widely used greedy algorithm do es not hav e an approximation guarantee. In future work, w e explore if our proposed tec hniques can b e applied to more general problems, such as the w eigh ted version of the problem studied here. References 1 Isaiah G. Adeba yo and Y anxia Sun. A nov el approach of closeness cen tralit y measure for v oltage stability analysis in an electric p ow er grid. International Journal of Emer ging Ele ctric Power Systems , 21(3):20200013, 2020. doi:10.1515/ijeeps- 2020- 0013 . 2 Eugenio Angriman, R uben Beck er, Gianlorenzo D’Angelo, Hugo Gilb ert, Alexander v an der Grin ten, and Henning Mey erhenke. Group-Harmonic and Group-Closeness Maximization - Appro ximation and Engineering. In Martin F arach-Colton and Sabine Storandt, editors, Pr o c ee dings of the 23r d Symp osium on Algorithm Engine ering and Exp eriments, ALENEX 2021, V irtual Confer enc e, January 10-11, 2021 , pages 154–168. SIAM, 2021. doi:10.1137/1. 9781611976472.12 . 3 Eugenio Angriman, Alexander v an der Grinten, Mic hael Hamann, Henning Meyerhenk e, and Man uel Pensc huc k. Algorithms for Large-scale Net work Analysis and the Net worKit Toolkit. In Hannah Bast, Claudius K orzen, Ulrich Meyer, and Man uel Pensc huc k, editors, A lgorithms for Big Data - DF G Priority Pr o gr am 1736 , volume 13201 of L e ctur e Notes in Computer Scienc e , pages 3–20. Springer, 2022. 
doi:10.1007/978- 3- 031- 21534- 6_1 . 4 Eugenio Angriman, Alexander v an der Grin ten, and Henning Mey erhenke. Local Search for Group Closeness Maximization on Big Graphs. In Chaitany a K. Baru, Jun Huan, Latifur Khan, Xiaohua Hu, Rona y Ak, Y uanyuan Tian, Roger S. Barga, Carlo Zaniolo, Kisung Lee, and Y anfang (F ann y) Y e, editors, 2019 IEEE International Confer ence on Big Data (IEEE BigData), L os A ngeles, CA, USA, De c emb er 9-12, 2019 , pages 711–720. IEEE, 2019. doi:10.1109/BIGDATA47090.2019.9006206 . 5 Vija y Ary a, Nav een Garg, Rohit Khandekar, Adam Meyerson, Kamesh Munagala, and Vina y aka Pandit. Lo cal Search Heuristics for k-Median and Facility Lo cation Problems. SIAM J. Comput. , 33(3):544–562, 2004. doi:10.1137/S0097539702416402 . 6 Alex Ba v elas. A mathematical mo del for group structures. Applie d A nthr opolo gy , 7(3):16–30, 1948. URL: http://www.jstor.org/stable/44135428 . C. Schulz, J. T ernes and H. W oydt 19 7 Murra y A. Beauc hamp. An improv ed index of centralit y . Behavior al Scienc e , 10(2):161–163, 1965. doi:10.1002/bs.3830100205 . 8 Elisab etta Bergamini, T any a Gonser, and Henning Mey erhenk e. Scaling up Group Closeness Maximization. CoRR , abs/1710.01144, 2017. , doi:10.48550/arXiv.1710. 01144 . 9 Stephen P . Borgatti, Martin G. Everett, and Jeffrey C. Johnson. A nalyzing so cial networks . SA GE, 2013. 10 Eric Chea and Dennis R. Liv esa y . How accurate and statistically robust are catalytic site predictions based on closeness cen trality? BMC Bioinform. , 8, 2007. doi:10.1186/ 1471- 2105- 8- 153 . 11 Chen Chen, W ei W ang, and Xiaoy ang W ang. Efficient Maxim um Closeness Centralit y Group Iden tification. In Muhammad Aamir Cheema, W enjie Zhang, and Lijun Chang, editors, Datab ases The ory and Applic ations - 27th A ustr alasian Datab ase Confer enc e, ADC 2016, Sydney, NSW, A ustr alia, Septemb er 28-29, 2016, Pr o ce e dings , v olume 9877 of L e ctur e Notes in Computer Scienc e , pages 43–55. Springer, 2016. 
doi:10.1007/978-3-319-46922-5_4.

[12] M. G. Everett and S. P. Borgatti. The centrality of groups and classes. The Journal of Mathematical Sociology, 23(3):181–201, 1999. doi:10.1080/0022250X.1999.9990219.

[13] Suning Gong, Qingqin Nong, Tao Sun, Qizhi Fang, Ding-Zhu Du, and Xiaoyu Shao. Maximize a monotone function with a generic submodularity ratio. Theor. Comput. Sci., 853:16–24, 2021. doi:10.1016/J.TCS.2020.05.018.

[14] Gurobi Optimization, LLC. Gurobi Optimizer Reference Manual, 2025. URL: https://www.gurobi.com.

[15] Dirk Koschützki, Katharina Anna Lehmann, Leon Peeters, Stefan Richter, Dagmar Tenfelde-Podehl, and Oliver Zlotowski. Centrality Indices, pages 16–61. Springer Berlin Heidelberg, Berlin, Heidelberg, 2005. doi:10.1007/978-3-540-31955-9_3.

[16] Jérôme Kunegis. KONECT – The Koblenz Network Collection. In Proc. Int. Conf. on World Wide Web Companion, pages 1343–1350, 2013. URL: http://dl.acm.org/citation.cfm?id=2488173.

[17] George L. Nemhauser, Laurence A. Wolsey, and Marshall L. Fisher. An analysis of approximations for maximizing submodular set functions - I. Math. Program., 14(1):265–294, 1978. doi:10.1007/BF01588971.

[18] Baibhav Rajbhandari, Paul Olsen Jr., Jeremy Birnbaum, and Jeong-Hyon Hwang. PRESTO: Fast and Effective Group Closeness Maximization. IEEE Trans. Knowl. Data Eng., 35(6):6209–6223, 2023. doi:10.1109/TKDE.2022.3178925.

[19] Ryan A. Rossi and Nesreen K. Ahmed. The Network Data Repository with Interactive Graph Analytics and Visualization. In Blai Bonet and Sven Koenig, editors, Proceedings of the Twenty-Ninth AAAI Conference on Artificial Intelligence, January 25-30, 2015, Austin, Texas, USA, pages 4292–4293. AAAI Press, 2015. doi:10.1609/AAAI.V29I1.9277.

[20] Ron Rymon. Search through Systematic Set Enumeration. In Bernhard Nebel, Charles Rich, and William R.
Swartout, editors, Proceedings of the 3rd International Conference on Principles of Knowledge Representation and Reasoning (KR’92). Cambridge, MA, USA, October 25-29, 1992, pages 539–550. Morgan Kaufmann, 1992.

[21] Gert Sabidussi. The centrality index of a graph. Psychometrika, 31(4):581–603, 1966. doi:10.1007/BF02289527.

[22] Luca Pascal Staus, Christian Komusiewicz, Nils Morawietz, and Frank Sommer. Exact Algorithms for Group Closeness Centrality. In Jonathan W. Berry, David B. Shmoys, Lenore Cowen, and Uwe Naumann, editors, SIAM Conference on Applied and Computational Discrete Algorithms, ACDA 2023, Seattle, WA, USA, May 31 - June 2, 2023, pages 1–12. SIAM, 2023. doi:10.1137/1.9781611977714.1.

[23] Robert Endre Tarjan. Depth-first search and linear graph algorithms. SIAM J. Comput., 1(2):146–160, 1972. doi:10.1137/0201010.

[24] Jakob Ternes. Algorithms for Group Closeness Centrality Maximization, December 2025. doi:10.5281/zenodo.18293375.

[25] Martijn P. van den Heuvel and Olaf Sporns. Network hubs in the human brain. Trends in Cognitive Sciences, 17(12):683–696, 2013. doi:10.1016/j.tics.2013.09.012.

[26] Henning Martin Woydt, Christian Komusiewicz, and Frank Sommer. SubModST: A Fast Generic Solver for Submodular Maximization with Size Constraints. In Timothy M. Chan, Johannes Fischer, John Iacono, and Grzegorz Herman, editors, 32nd Annual European Symposium on Algorithms, ESA 2024, Royal Holloway, London, United Kingdom, September 2-4, 2024, volume 308 of LIPIcs, pages 102:1–102:18. Schloss Dagstuhl - Leibniz-Zentrum für Informatik, 2024. doi:10.4230/LIPIcs.ESA.2024.102.

Appendix

Table 1 Per-graph comparison of SubModST [26], DVind [22], ILPind [22] and Grover (ours).
For each graph, #Solved reports the number of k values for which an algorithm solved the instance, and Σ Seconds is the cumulative runtime over all successfully solved instances. Note that different algorithms may solve different subsets of k values. Bold entries indicate the algorithm that solved the most instances and, in case of a tie, achieved the lowest total runtime.

| Graph | SubModST #Solved | SubModST Σ Seconds | DVind #Solved | DVind Σ Seconds | ILPind #Solved | ILPind Σ Seconds | Grover #Solved | Grover Σ Seconds |
|---|---|---|---|---|---|---|---|---|
| contiguous-usa | 19 | 737.35 | 19 | 19.09 | 19 | 1.45 | 19 | 0.18 |
| brain_1 | 19 | 110.89 | 19 | 23.51 | 19 | 1.93 | 19 | 0.21 |
| arenas-jazz | 12 | 261.39 | 14 | 678.52 | 19 | 3.82 | 19 | 0.63 |
| ca-netscience | 12 | 899.63 | 19 | 8.39 | 19 | 4.67 | 19 | 1.03 |
| robot24c1_mat5 | 8 | 475.52 | 6 | 163.96 | 19 | 15.60 | 19 | 7.20 |
| tortoise | 9 | 325.62 | 18 | 1194.34 | 19 | 30.27 | 19 | 9.77 |
| econ-beause | 8 | 657.02 | 19 | 9.35 | 19 | 9.74 | 19 | 1.20 |
| bio-diseasome | 11 | 808.09 | 19 | 37.80 | 19 | 9.28 | 19 | 2.47 |
| soc-wiki-Vote | 11 | 582.12 | 19 | 897.86 | 19 | 29.54 | 19 | 3.45 |
| ca-CSphd | 6 | 360.66 | 19 | 43.00 | 19 | 64.20 | 19 | 6.93 |
| email-univ | 9 | 282.53 | 8 | 600.03 | 19 | 1580.24 | 19 | 698.38 |
| econ-mahindas | 9 | 489.03 | 9 | 1235.96 | 19 | 59.13 | 19 | 14.12 |
| bio-yeast | 9 | 246.89 | 18 | 1414.46 | 19 | 173.75 | 19 | 40.10 |
| comsol | 2 | 45.50 | 13 | 233.12 | 19 | 33.33 | 19 | 24.88 |
| medulla_1 | 19 | 41.25 | 19 | 120.37 | 19 | 24.75 | 19 | 3.78 |
| heart2 | 2 | 252.99 | 10 | 212.30 | 19 | 33.35 | 19 | 16.66 |
| econ-orani678 | 12 | 1494.00 | 10 | 652.86 | 19 | 68.09 | 19 | 16.49 |
| inf-openflights | 10 | 544.23 | 9 | 1070.32 | 19 | 333.10 | 19 | 53.83 |
| ca-GrQc | 6 | 357.37 | 8 | 756.79 | 19 | 4482.46 | 19 | 1409.26 |
| inf-power | 2 | 91.74 | 12 | 5160.91 | 16 | 3048.41 | 18 | 1490.94 |
| ca-Erdos992 | 6 | 422.38 | 10 | 606.08 | 19 | 865.37 | 19 | 265.08 |
| soc-advogato | 10 | 1267.51 | 12 | 1882.45 | 19 | 489.18 | 19 | 94.97 |
| bio-dmela | 7 | 1532.54 | 4 | 1670.15 | 2 | 1094.27 | 12 | 2582.48 |
| ia-escorts-dynamic | 6 | 2483.43 | - | - | 9 | 4267.95 | 19 | 1915.29 |
| ca-HepPh | 4 | 1944.25 | 3 | 1693.44 | - | - | 5 | 1627.93 |
| soc-anybeat | - | - | 19 | 7603.85 | 19 | 659.06 | 19 | 125.06 |
| econ-poli-large | - | - | 16 | 4605.45 | 19 | 416.15 | 19 | 72.00 |
| ca-AstroPh | - | - | - | - | - | - | - | - |
| ca-CondMat | - | - | - | - | - | - | 4 | 1727.81 |
| ca-cit-HepTh | - | - | - | - | - | - | 17 | 4769.13 |
| fb-pages-media | - | - | - | - | - | - | 2 | 1088.01 |
| soc-gemsec-RO | - | - | - | - | - | - | - | - |

Table 2 Graphs used in the experimental evaluation. #dom and #abs denote the numbers of dominated and absorbed vertices, respectively; dens is the graph density and diam its diameter.

| Graph | n | m | dens | diam | #dom | #abs |
|---|---|---|---|---|---|---|
| contiguous-usa | 49 | 107 | 0.091 | 11 | 13 | 1 |
| brain_1 | 65 | 730 | 0.351 | 3 | 17 | 0 |
| arenas-jazz | 198 | 2 742 | 0.141 | 6 | 93 | 5 |
| ca-netscience | 379 | 914 | 0.013 | 17 | 306 | 128 |
| robot24c1_mat5 | 404 | 14 260 | 0.175 | 3 | 10 | 0 |
| tortoise-fi | 496 | 984 | 0.008 | 21 | 232 | 140 |
| econ-beause | 507 | 39 427 | 0.307 | 3 | 490 | 1 |
| bio-diseasome | 516 | 1 188 | 0.009 | 15 | 379 | 204 |
| soc-wiki-Vote | 889 | 2 914 | 0.007 | 13 | 239 | 193 |
| ca-CSphd | 1 025 | 1 043 | 0.002 | 28 | 697 | 693 |
| email-univ | 1 133 | 5 451 | 0.008 | 8 | 232 | 153 |
| econ-mahindas | 1 258 | 7 513 | 0.009 | 8 | 4 | 0 |
| bio-yeast | 1 458 | 1 948 | 0.002 | 19 | 800 | 735 |
| comsol | 1 500 | 48 119 | 0.043 | 12 | 1 485 | 0 |
| medulla_1 | 1 770 | 8 905 | 0.005 | 6 | 836 | 392 |
| heart2 | 2 339 | 340 229 | 0.124 | 6 | 2 327 | 0 |
| econ-orani678 | 2 529 | 86 768 | 0.027 | 5 | 42 | 0 |
| inf-openflights | 2 905 | 15 645 | 0.004 | 14 | 1 783 | 806 |
| ca-GrQc | 4 158 | 13 422 | 0.001 | 17 | 2 712 | 1 171 |
| inf-power | 4 941 | 6 594 | <0.001 | 46 | 1 487 | 1 278 |
| ca-Erdos992 | 4 991 | 7 428 | <0.001 | 14 | 3 861 | 3 516 |
| soc-advogato | 5 054 | 39 374 | 0.003 | 9 | 1 721 | 1 080 |
| bio-dmela | 7 393 | 25 569 | <0.001 | 11 | 2 042 | 2 005 |
| ia-escorts-dynamic | 10 106 | 39 016 | <0.001 | 10 | 1 857 | 1 838 |
| ca-HepPh | 11 204 | 117 619 | 0.002 | 13 | 7 014 | 1 904 |
| soc-anybeat | 12 645 | 49 132 | <0.001 | 10 | 8 430 | 6 3205 |
| econ-poli-large | 15 575 | 17 468 | <0.001 | 15 | 12 970 | 12 51 |
| ca-AstroPh | 17 903 | 196 972 | 0.001 | 14 | 10 462 | 1 701 |
| ca-CondMat | 21 363 | 91 286 | <0.001 | 15 | 14 282 | 3 386 |
| ca-cit-HepTh | 22 721 | 2 444 642 | 0.009 | 9 | 10 536 | 317 |
| fb-pages-media | 27 917 | 205 964 | <0.001 | 15 | 6 330 | 2 364 |
| soc-gemsec-RO | 41 773 | 125 826 | <0.001 | 19 | 6 626 | 5 490 |

Table 3 Per-graph comparison of Grover and its different configurations. Bold entries indicate the configuration that solved the most instances and, in case of a tie, achieved the lowest total runtime. The opt. Grover configuration is not included in this comparison.

| Graph | Base #Solved | Base Σ Seconds | Base + AV #Solved | Base + AV Σ Seconds | Base + $e_d(v)$ #Solved | Base + $e_d(v)$ Σ Seconds | Grover #Solved | Grover Σ Seconds | opt. Grover #Solved | opt. Grover Σ Seconds |
|---|---|---|---|---|---|---|---|---|---|---|
| contiguous-usa | 19 | 0.18 | 19 | 0.19 | 19 | 0.18 | 19 | 0.18 | 19 | 0.16 |
| brain_1 | 19 | 0.18 | 19 | 0.18 | 19 | 0.20 | 19 | 0.21 | 19 | 0.21 |
| arenas-jazz | 19 | 0.58 | 19 | 0.52 | 19 | 0.53 | 19 | 0.63 | 19 | 0.40 |
| ca-netscience | 19 | 1.67 | 19 | 1.08 | 19 | 1.34 | 19 | 1.03 | 19 | 0.79 |
| robot24c1_mat5 | 19 | 5.55 | 19 | 5.57 | 19 | 7.33 | 19 | 7.20 | 19 | 2.99 |
| tortoise | 19 | 22.84 | 19 | 13.91 | 19 | 15.55 | 19 | 9.77 | 19 | 7.65 |
| econ-beause | 19 | 0.59 | 19 | 0.62 | 19 | 1.15 | 19 | 1.20 | 19 | 0.70 |
| bio-diseasome | 19 | 5.02 | 19 | 2.44 | 19 | 3.89 | 19 | 2.47 | 19 | 1.68 |
| soc-wiki-Vote | 19 | 17.31 | 19 | 8.22 | 19 | 8.06 | 19 | 3.45 | 19 | 2.76 |
| ca-CSphd | 19 | 43.84 | 19 | 13.42 | 19 | 25.26 | 19 | 6.93 | 19 | 6.15 |
| email-univ | 19 | 1596.33 | 19 | 736.96 | 19 | 1285.73 | 19 | 698.38 | 19 | 365.96 |
| econ-mahindas | 19 | 21.32 | 19 | 21.32 | 19 | 13.95 | 19 | 14.12 | 19 | 8.51 |
| bio-yeast | 19 | 150.92 | 19 | 79.70 | 19 | 70.81 | 19 | 40.10 | 19 | 32.03 |
| comsol | 19 | 20.76 | 19 | 20.80 | 19 | 25.50 | 19 | 24.88 | 19 | 10.14 |
| medulla_1 | 19 | 9.99 | 19 | 5.27 | 19 | 6.29 | 19 | 3.78 | 19 | 2.39 |
| heart2 | 19 | 6.41 | 19 | 6.73 | 19 | 16.47 | 19 | 16.66 | 19 | 6.10 |
| econ-orani678 | 19 | 16.14 | 19 | 16.24 | 19 | 16.44 | 19 | 16.49 | 19 | 10.22 |
| inf-openflights | 19 | 248.31 | 19 | 165.27 | 19 | 66.68 | 19 | 53.83 | 19 | 39.03 |
| ca-GrQc | 19 | 4205.63 | 19 | 2528.51 | 19 | 1903.46 | 19 | 1409.26 | 19 | 1002.81 |
| inf-power | 16 | 2614.84 | 17 | 2195.14 | 17 | 1819.69 | 18 | 1490.94 | 18 | 1367.21 |
| ca-Erdos992 | 19 | 956.03 | 19 | 419.74 | 19 | 574.49 | 19 | 265.08 | 19 | 224.66 |
| soc-advogato | 19 | 280.71 | 19 | 165.65 | 19 | 114.31 | 19 | 94.97 | 19 | 86.78 |
| bio-dmela | 2 | 1058.13 | 8 | 3491.01 | 11 | 2891.56 | 12 | 2582.48 | 13 | 2289.61 |
| ia-escorts-dynamic | 18 | 7058.83 | 19 | 4815.43 | 19 | 2035.28 | 19 | 1915.29 | 19 | 1841.93 |
| ca-HepPh | - | - | - | - | 3 | 828.59 | 5 | 1627.93 | 5 | 1362.08 |
| soc-anybeat | 19 | 496.25 | 19 | 286.42 | 19 | 161.94 | 19 | 125.06 | 19 | 103.91 |
| econ-poli-large | 19 | 340.90 | 19 | 89.17 | 19 | 226.82 | 19 | 72.00 | 19 | 41.11 |
| ca-AstroPh | - | - | - | - | - | - | - | - | - | - |
| ca-CondMat | - | - | - | - | 2 | 1084.54 | 4 | 1727.81 | 4 | 1688.73 |
| ca-cit-HepTh | - | - | - | - | 17 | 5801.30 | 17 | 4769.13 | 18 | 4096.97 |
| fb-pages-media | - | - | - | - | - | - | 2 | 1088.01 | 2 | 1034.82 |
| soc-gemsec-RO | - | - | - | - | - | - | - | - | - | - |
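
Σ Seconds sums runtimes only over the instances an algorithm actually solved, so per-graph runtime ratios are directly comparable only when both configurations solved the same values of k. The following minimal Python sketch computes such ratios for a handful of rows of Table 3 (Base versus Grover, both solving all 19 values of k) and summarizes them with a geometric mean. The row selection, the names `TABLE3_SECONDS`, `speedup` and `geometric_mean`, and the use of a geometric mean are assumptions for illustration. The aggregation behind the speedups reported in the conclusion is not specified in this section, so the sketch does not attempt to reproduce those figures.

```python
import math

# Σ Seconds (Base, Grover) copied from Table 3 for graphs on which both
# configurations solved all 19 values of k. The selection is illustrative only.
TABLE3_SECONDS: dict[str, tuple[float, float]] = {
    "tortoise": (22.84, 9.77),
    "ca-CSphd": (43.84, 6.93),
    "email-univ": (1596.33, 698.38),
    "ca-GrQc": (4205.63, 1409.26),
    "soc-anybeat": (496.25, 125.06),
}


def speedup(baseline_seconds: float, improved_seconds: float) -> float:
    """Runtime ratio; a value above 1 means the improved variant is faster."""
    return baseline_seconds / improved_seconds


def geometric_mean(values: list[float]) -> float:
    """Geometric mean, a common (assumed here) way to average multiplicative ratios."""
    return math.exp(sum(math.log(v) for v in values) / len(values))


if __name__ == "__main__":
    ratios = {g: speedup(b, o) for g, (b, o) in TABLE3_SECONDS.items()}
    for graph, ratio in ratios.items():
        print(f"{graph:12s} {ratio:5.2f}x")
    print(f"geometric mean over these rows: {geometric_mean(list(ratios.values())):.2f}x")
```

Running the script prints one ratio per graph, for example about 2.34× for tortoise (22.84 s versus 9.77 s).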

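The conclusion notes that a widely used greedy algorithm has no approximation guarantee. To make the objective and that greedy rule concrete, the following minimal Python sketch computes the group farness $f(S) = \sum_{v \in V} \min_{s \in S} \mathrm{dist}(v, s)$ with a single multi-source BFS. Minimizing $f(S)$ is equivalent to maximizing its reciprocal. The sketch then runs a naive greedy that, in each of k rounds, adds the vertex that lowers $f$ the most. It assumes an unweighted, connected, undirected graph stored as adjacency lists. The names `group_farness`, `greedy_group` and `optimal_group` are hypothetical, and the code does not reflect the ILP-based or swap-based algorithms evaluated in the tables above.

```python
from collections import deque
from itertools import combinations

# Hypothetical representation: unweighted, undirected, connected graph given
# as adjacency lists keyed by vertex id.
Graph = dict[int, list[int]]


def group_farness(adj: Graph, group: set[int]) -> int:
    """f(S) = sum over v of min_{s in S} dist(v, s), via one multi-source BFS."""
    if not group:
        raise ValueError("group must be non-empty")
    dist = {s: 0 for s in group}  # every group vertex is at distance 0
    queue = deque(group)
    while queue:
        u = queue.popleft()
        for w in adj[u]:
            if w not in dist:  # first visit = distance to the nearest group vertex
                dist[w] = dist[u] + 1
                queue.append(w)
    if len(dist) != len(adj):
        raise ValueError("graph is not connected")
    return sum(dist.values())


def greedy_group(adj: Graph, k: int) -> set[int]:
    """Naive greedy: in each of k rounds, add the vertex that lowers f(S) most."""
    group: set[int] = set()
    for _ in range(k):
        best = min(
            (v for v in adj if v not in group),
            key=lambda v: group_farness(adj, group | {v}),
        )
        group.add(best)
    return group


def optimal_group(adj: Graph, k: int) -> set[int]:
    """Brute force over all k-subsets; only feasible on tiny graphs."""
    best = min(combinations(adj, k), key=lambda c: group_farness(adj, set(c)))
    return set(best)


if __name__ == "__main__":
    path = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2, 4], 4: [3]}  # path 0-1-2-3-4
    g = greedy_group(path, 2)
    o = optimal_group(path, 2)
    print(sorted(g), group_farness(path, g))  # [0, 2] 4
    print(sorted(o), group_farness(path, o))  # [0, 3] 3
```

On the path 0-1-2-3-4 with k = 2, the greedy rule first takes the center vertex 2 (farness 6). After that, every possible addition yields farness 4, whereas {0, 3} or {1, 3} reaches farness 3. This tiny instance only shows that greedy can be suboptimal. It is not the construction by which the paper shows that greedy can be arbitrarily poor.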