Efficient Encrypted Computation in Convolutional Spiking Neural Networks with TFHE

With the rapid advancement of AI technology, we have seen more and more concerns on data privacy, leading to some cutting-edge research on machine learning with encrypted computation. Fully Homomorphic Encryption (FHE) is a crucial technology for pri…

Authors: Longfei Guo, Pengbo Li, Ting Gao

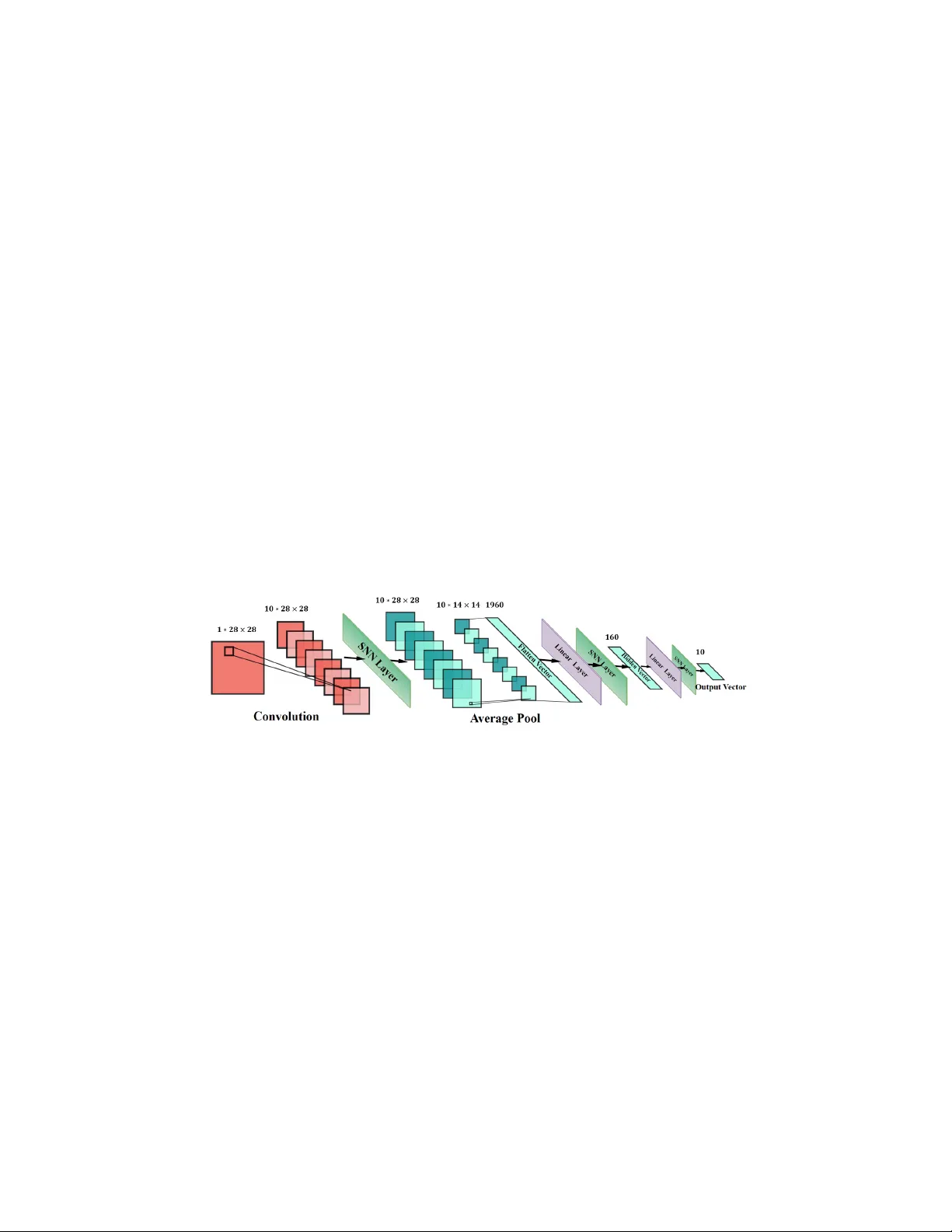

Longfei Guo$^{1,3}$, Pengbo Li$^{2,3}$, Ting Gao$^{2,3,4,*}$, Yonghai Zhong$^{2,3}$, Haojie Fan$^{2,3}$, Jinqiao Duan$^{5,6}$

$^1$ School of Cyber Science and Engineering, Huazhong University of Science and Technology, Wuhan, China
$^2$ School of Mathematics and Statistics, Huazhong University of Science and Technology, Wuhan, China
$^3$ Center for Mathematical Science, Huazhong University of Science and Technology, Wuhan, China
$^4$ Steklov-Wuhan Institute for Mathematical Exploration, Huazhong University of Science and Technology, China
$^5$ Department of Mathematics and Department of Physics, Great Bay University, Dongguan, China
$^6$ Guangdong Provincial Key Laboratory of Mathematical and Neural Dynamical Systems, Dongguan, China

Abstract. With the rapid advancement of AI technology, we have seen more and more concerns on data privacy, leading to some cutting-edge research on machine learning with encrypted computation. Fully Homomorphic Encryption (FHE) is a crucial technology for privacy-preserving computation, while it struggles with continuous non-polynomial functions, as it operates on discrete integers and supports only addition and multiplication. Spiking Neural Networks (SNNs), which use discrete spike signals, naturally complement FHE's characteristics. In this paper, we introduce FHE-DiCSNN, a framework built on the TFHE scheme, utilizing the discrete nature of SNNs for secure and efficient computations. By leveraging bootstrapping techniques, we successfully implement Leaky Integrate-and-Fire (LIF) neuron models on ciphertexts, allowing SNNs of arbitrary depth. Our framework is adaptable to other spiking neuron models, offering a novel approach to homomorphic evaluation of SNNs.
Additionally, we integrate convolutional methods inspired by CNNs to enhance accuracy and reduce the simulation time associated with random encoding. Parallel computation techniques further accelerate bootstrapping operations. Experimental results on the MNIST and FashionMNIST datasets validate the effectiveness of FHE-DiCSNN, with an accuracy loss of about 3% or less compared to plaintext and computation times of under 1 second per prediction. We also apply the model to real medical image classification problems and analyze the parameter optimization and selection.

Keywords: Privacy-preserving · Fully homomorphic encryption · SNNs · image classification

1 Introduction

Privacy-Preserved AI. In recent years, privacy preservation has garnered significant attention in the field of machine learning. Fully Homomorphic Encryption (FHE) has become one of the most suitable tools for privacy-preserving machine learning (PPML) due to its strong cryptographic security and its ability to perform algebraic operations directly on the ciphertext. The foundation of FHE was established in 2009, when Gentry introduced the first fully homomorphic encryption scheme [11] capable of evaluating arbitrary circuits. His pioneering work not only proposed the FHE scheme but also outlined a method for constructing a comprehensive FHE scheme from a model with limited yet sufficient homomorphic evaluation capacity. Inspired by Gentry's contributions, second-generation schemes such as BGV [4] and FV [10] were proposed. The evolution of FHE schemes continued with third-generation schemes such as FHEW [9], TFHE [7], and Gao [5], which provide very efficient bootstrapping operations and make unlimited homomorphic computation possible.
The CKKS scheme [6] has attracted considerable interest as a suitable tool for PPML implementation, given its natural handling of encrypted real numbers. However, existing FHE frameworks only support arithmetic operations such as addition and multiplication, whereas widely used activation functions such as ReLU and sigmoid are non-arithmetic. Traditional prediction frameworks that approximate non-arithmetic functions with arithmetic ones are very inefficient. In a distinct study [2], the authors developed the FHE-DiNN framework, a discrete neural network framework based on the TFHE scheme. Unlike traditional neural networks, FHE-DiNN discretizes network weights into integers and uses the sign function as the activation function. The sign function is computed through bootstrapping on ciphertexts, which also refreshes the noise of each neuron's output and thereby allows the network to extend computations to any depth. Although FHE-DiNN offers high computational speed, it compromises model prediction accuracy. Given the close resemblance between the sign function and the output of Spiking Neural Network (SNN) neurons, this work provides a compelling basis for investigating efficient homomorphic evaluation of SNNs in the context of PPML.

CNNs and SNNs. Convolutional Neural Networks (CNNs) are powerful tools in computer vision, known for their high accuracy and automated feature extraction [8]. CNNs rely on three key concepts (local receptive fields, weight sharing, and spatial subsampling), implemented through convolutional and pooling layers. These components eliminate the need for manual feature extraction and speed up training, making CNNs ideal for visual recognition tasks [24].
In recent years, CNNs have been widely used in image classification [23], Natural Language Processing (NLP) [3], object detection [17], and video classification [1], driving significant progress in deep learning.

Spiking Neural Networks (SNNs), the third generation of neural networks [20], operate more like biological systems. Unlike Artificial Neural Networks (ANNs), SNNs process information in both space and time, capturing the temporal dynamics of biological neurons. Neurophysiologists have developed various neuron models for SNNs, such as the Hodgkin-Huxley (H-H) model [14], the leaky integrate-and-fire (LIF) model [25], the Izhikevich model [15], and the spike response model (SRM) [16]. These models use spikes or "action potentials" to communicate information, closely mimicking brain activity. This time-based processing makes SNNs more effective for handling time-series data compared to traditional neural networks.

Convolutional Spiking Neural Networks (CSNNs) combine CNNs' spatial feature extraction with SNNs' temporal processing, enhancing computational efficiency and accuracy. Zhou et al. [30] developed a sophisticated SNN architecture using the VGG16 model and GoogleNet. Zhang et al. [27] created a deep CSNN with two convolutional and two hidden layers, using a ReL-PSP-based spiking neuron model and temporal backpropagation. Other CSNNs range from conversions of standard CNNs to architectures that apply backpropagation using rate coding or multi-peak neuron strategies [29].

In this paper, we propose the FHE-DiCSNN framework. Based on the efficient TFHE scheme, this framework combines the discrete characteristics of the CSNN model and efficient computation to realize the accurate prediction of ciphertext neural networks.
Our contributions are summarized as follows:

– Proposed FHE-Fire and FHE-Reset functions based on bootstrapping: we introduce FHE-Fire and FHE-Reset functions to enable LIF neuron computations on ciphertext, providing a novel solution for privacy preservation in SNNs.
– We enhance the framework by optimizing encoding methods and bootstrapping computations, ensuring no noise accumulation in deep network extensions while improving computation accuracy and efficiency.
– Extensive experiments on public datasets and a real-world medical dataset demonstrate the feasibility of efficient ciphertext computations, laying a foundation for practical applications of homomorphic encryption techniques.

2 Preliminary Knowledge

This section provides an overview of bootstrapping in the TFHE scheme and background knowledge on SNNs.

2.1 TFHE scheme

Notations. Let $\mathbb{Z}_p = \left\{-\frac{p}{2}+1, \dots, \frac{p}{2}\right\}$ denote a finite ring defined over the set of integers. The message space for homomorphic encryption is defined within this finite ring $\mathbb{Z}_p$. Consider $N = 2^k$ and the cyclotomic polynomial $X^N + 1$; then

$$R_{q,N} \triangleq R/qR \equiv \mathbb{Z}_q[X]/(X^N+1) \equiv \mathbb{Z}[X]/(X^N+1,\, q).$$

Similarly, we can define the polynomial ring $R_{p,N}$.

FHEW-like Cryptosystem

– LWE (Learning With Errors). We revisit the encryption form of LWE [22], which is employed to encrypt a message $m \in \mathbb{Z}_p$ as

$$LWE_{\mathbf{s}}(m) = (\mathbf{a}, b) = \left(\mathbf{a},\ \langle \mathbf{a}, \mathbf{s}\rangle + e + \frac{q}{p}\, m\right) \bmod q,$$

where $\mathbf{a} \in \mathbb{Z}_q^n$, $b \in \mathbb{Z}_q$, and the keys are vectors $\mathbf{s} \in \mathbb{Z}_q^n$.
– RLWE (Ring Learning With Errors) [19]. An RLWE ciphertext of a message $m(X) \in R_{p,N}$ can be obtained as follows:

$$RLWE_{s}(m(X)) = (a(X), b(X)), \quad \text{where } b(X) = a(X)\cdot s(X) + e(X) + \frac{q}{p}\, m(X),$$

where $a(X) \leftarrow R_{q,N}$ is chosen uniformly at random, and $e(X) \leftarrow \chi_\sigma^n$ is selected from a discrete Gaussian distribution with parameter $\sigma$.
– GSW [12]. Given a plaintext $m \in \mathbb{Z}_p$, the plaintext $m$ is embedded into a power of a polynomial to obtain $X^m \in R_{p,N}$, which is then encrypted as $RGSW(X^m)$. RGSW enables efficient computation of homomorphic multiplication, denoted as $\diamond$, while effectively controlling noise growth:

$$RGSW(X^{m_0}) \diamond RGSW(X^{m_1}) = RGSW(X^{m_0+m_1}),$$
$$RLWE(X^{m_0}) \diamond RGSW(X^{m_1}) = RLWE(X^{m_0+m_1}).$$

Programmable Bootstrapping. TFHE/FHEW bootstrapping is a core algorithm in FHE that enables the computation of any function $g$ with the properties $g: \mathbb{Z}_p \to \mathbb{Z}_p$ and $g\left(v + \frac{p}{2}\right) = -g(v)$. This function $g$, often referred to as the program function in bootstrapping, defines the transformation applied during the bootstrapping process. Given an LWE ciphertext $LWE_{\mathbf{s}}(m) = (\mathbf{a}, b)$, where $m \in \mathbb{Z}_p$, $\mathbf{a} \in \mathbb{Z}_p^N$, and $b \in \mathbb{Z}_p$, it is possible to bootstrap it into $LWE_{\mathbf{s}}(g(m))$ with refreshed ciphertext noise. This process effectively reduces noise accumulation, ensuring the ciphertext remains suitable for further homomorphic computations. Sample extraction converts the RLWE ciphertext into an LWE ciphertext:

$$RLWE_{\mathbf{z}} \xrightarrow{\ \text{Sample Extraction}\ } LWE_{\mathbf{z}}(g(m)) = (\mathbf{a}, b_0),$$

where $\mathbf{a} = (a_0, \dots, a_{N-1})$ is the coefficient vector of $a(X)$, and $b_0$ is the coefficient of $b(X)$. Given a program function $g$, bootstrapping is a process that takes an LWE ciphertext $LWE_{\mathbf{s}}(m)$ as input and outputs $LWE_{\mathbf{s}}(g(m))$ under the original secret key $\mathbf{s}$:

$$\text{bootstrapping}(LWE_{\mathbf{s}}(m)) = LWE_{\mathbf{s}}(g(m)).$$

2.2 Leaky Integrate-and-Fire Neuron Model and SNNs

Neurophysiologists have developed several models to describe the dynamic behavior of neuronal membrane potentials, crucial for understanding the fundamental properties of SNNs. Among these, the LIF model [26] is widely used due to its simplicity and effectiveness in capturing essential neuronal dynamics, such as leakage, accumulation, and threshold excitation.
The LIF model is expressed as:

$$\tau \frac{dV}{dt} = V_{rest} - V + RI, \tag{1}$$

where $\tau$ represents the membrane time constant, $V_{rest}$ is the resting potential, $R$ is the membrane impedance, and $I$ is the input current. This model can be discretized as:

$$\begin{aligned}
H[t] &= V[t-1] + \frac{1}{\tau}\left(-(V[t-1] - V_{reset}) + I[t]\right),\\
S[t] &= \mathrm{Fire}(H[t] - V_{th}),\\
V[t] &= \mathrm{Reset}(H[t]) = \begin{cases} V_{reset}, & \text{if } H[t] \ge V_{th},\\ H[t], & \text{if } V_{reset} \le H[t] \le V_{th},\\ V_{reset}, & \text{if } H[t] \le V_{reset}, \end{cases}
\end{aligned} \tag{2}$$

where $H[t]$ represents the membrane potential, $S[t]$ the spike function, and $V_{th}$ the firing threshold. The $\mathrm{Fire}(\cdot)$ function is a step function. The input current $I[t]$ is the weighted sum of external inputs, such as those from pre-synaptic neurons or image pixels, denoted as $I[t] = \sum_j \omega_j x_j[t]$. These inputs can be processed using either convolutional or fully connected layers in a neural network.

Training SNNs is challenging due to the non-differentiability of spikes [28]. Traditional backpropagation cannot be directly applied, leading to alternative training methods like ANN-to-SNN conversion, unsupervised Spike-Timing-Dependent Plasticity (STDP), and gradient surrogate methods. We adopt a gradient surrogate approach, using continuous functions to approximate the derivative of spikes for backpropagation.

To handle diverse input patterns, different encoding methods are used, such as rate coding, temporal coding, and population coding [13]. In this study, Poisson encoding was used to convert input data into spike trains using a Poisson process. For a time interval $\Delta t$ divided into $T$ steps, a random matrix $M_t$ with values in $[0, 255]$ is generated. At each time step $t$, the normalized pixel matrix $X_o$ is compared with $M_t$ to determine spike occurrences. The final spike encoding $X$ is:

$$X(i,j) = \begin{cases} 0, & \text{if } X_o(i,j) \le M_t(i,j),\\ 1, & \text{if } X_o(i,j) > M_t(i,j), \end{cases}$$

where $i$ and $j$ are pixel coordinates.
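The comparison rule above can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions: the pixel matrix is taken to hold 8-bit intensities on the same $[0, 255]$ scale as $M_t$, the thresholds are drawn uniformly, and the names `poisson_encode` and `firing_rate` as well as the toy image are hypothetical, not taken from the paper's implementation.

```python
import random


def poisson_encode(image, num_steps, seed=0):
    """Encode an 8-bit grayscale image as num_steps binary spike frames."""
    rng = random.Random(seed)
    frames = []
    for _ in range(num_steps):
        # Draw a fresh random threshold M_t(i, j) in [0, 255] for every pixel;
        # a pixel fires only when its intensity is strictly greater.
        frame = [[1 if pixel > rng.randint(0, 255) else 0 for pixel in row]
                 for row in image]
        frames.append(frame)
    return frames


def firing_rate(frames, i, j):
    """Fraction of time steps in which pixel (i, j) emitted a spike."""
    return sum(frame[i][j] for frame in frames) / len(frames)


image = [[0, 64], [128, 255]]  # toy 2x2 image, made up for this sketch
frames = poisson_encode(image, num_steps=2000)
rates = [[firing_rate(frames, i, j) for j in range(2)] for i in range(2)]
```

Under these assumptions the firing rate of a pixel approaches its intensity divided by 256, but only when averaged over many time steps, so a faithful encoding needs a long simulation.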
This encoding aligns with a Poisson distribution, effectively adapting input stimuli for SNNs.

3 Proposed Methods

3.1 Convolutional Spiking Neural Networks

In SNNs, Poisson coding is a simple stochastic coding technique used to convert continuous signals, such as images, into spike signals that are suitable as network inputs. However, Poisson coding itself lacks the ability to extract image features. Due to its stochastic nature, it requires a large number of spike samples to encode the complete information, which in turn increases the computational cost and time overhead.

The Convolutional Spiking Neural Network (CSNN) is a neural network model that combines CNNs and SNNs and replaces Poisson coding. In a CSNN, the previously mentioned LIF model or other spiking models are used to simulate the electrical activity of the neurons, forming the spiking activation layer of the network, while the convolutional layer is used to extract image features and encode them as spike signals. By utilising the spatial feature extraction capability of CNNs and the spiking properties of SNNs, CSNNs benefit from both convolutional operations and discrete spiking. A visualisation of the CSNN is shown in Fig. 1.

Fig. 1. Schematic visualisation of the CSNN network. For the network, we use a convolutional layer with kernel_size = 3, stride = 1, padding = 1, and an average pooling layer with kernel_size = 2, stride = 2, padding = 0.

3.2 Discretized CSNN

A CSNN relies on real numbers for computation, and the outputs of neurons and network weights are represented as continuous values. However, homomorphic encryption algorithms require the computation to be defined over a ring of integers. Therefore, the outputs and weights of the neural network must be discretised into integers.
In CSNNs, the neuron outputs already consist of discrete-valued spike signals, so only the weights need to be discretised. From this perspective, CSNNs are more suitable for homomorphic computation than conventional neural networks.

We utilize fixed-precision real numbers and apply suitable scaling to convert the weights into integers, effectively discretizing CSNNs into DiCSNNs (Discretized Convolutional Spiking Neural Networks). Denote this discretization method as the following function:

$$\hat{x} \triangleq \mathrm{Discret}(x, \theta) = \lfloor x \cdot \theta \rceil,$$

where $\theta \in \mathbb{Z}$ is referred to as the scaling factor, and $\lfloor \cdot \rceil$ represents rounding to the nearest integer. The discretized result of $x$ is denoted as $\hat{x}$. Within the encryption process, all relevant numerical values are defined on the finite ring $\mathbb{Z}_p$. Hence, it is imperative to carefully monitor the numerical fluctuations throughout the computation to prevent reductions modulo $p$, as such reductions could give rise to unanticipated errors in the computational outcomes.

Despite scaling and discretizing the weights, several challenges remain in achieving a fully homomorphic encryption-compatible CSNN (FHE-CSNN):

1) The original equations of LIF neurons (Eq. (2)) and the average pooling layer involve division operations, which are incompatible with homomorphic computation. Alternative approaches are necessary to eliminate explicit division while maintaining FHE compatibility.

2) The Fire function, representing the step function in LIF neurons, depends on the bootstrap function $g$ in TFHE. This function must satisfy the condition $g(x) = -g\left(\frac{p}{2} + x\right)$. The Sign function meets this requirement and is worth considering as a substitute for the Fire function.

To address these challenges, we introduce Theorem 1, which explains the iterative process of discrete LIF neurons.

Theorem 1. Under the given conditions $V_{reset} = 0$ and $V_{th} = 1$, Eq. (2) can be discretized into the following equivalent form with a discretization scaling factor $\theta$:

$$\begin{aligned}
\hat{H}[t] &= \hat{V}[t-1] + \hat{I}[t],\\
2S[t] &= \mathrm{Sign}\left(\hat{H}[t] - \hat{V}^{\tau}_{th}\right) + 1,\\
\hat{V}[t] &= \mathrm{Reset}(\hat{H}[t]) = \begin{cases} \hat{V}_{reset}, & \text{if } \hat{H}[t] \ge \hat{V}^{\tau}_{th};\\ \frac{\tau-1}{\tau}\hat{H}[t], & \text{if } \hat{V}_{reset} \le \hat{H}[t] < \hat{V}^{\tau}_{th};\\ \hat{V}_{reset}, & \text{if } \hat{H}[t] \le \hat{V}_{reset}. \end{cases}
\end{aligned} \tag{3}$$

Here, the hat symbol represents the discretized values, and $\hat{V}^{\tau}_{th} = \theta \cdot \tau$. Moreover, $\hat{I}[t] = \sum_j \hat{\omega}_j S_j[t]$.

Proof. We multiply both sides of the equations of the LIF model (Eq. (2)) by $\tau$ and substitute the Sign function for the Fire function, which yields the following equations:

$$\begin{aligned}
\tau H[t] &= (\tau - 1)V[t-1] + I[t],\\
2S[t] &= \mathrm{Sign}\left(\tau H[t] - V^{\tau}_{th}\right) + 1,\\
(\tau - 1)V[t] &= \mathrm{Reset}(\tau H[t]) = \begin{cases} 0, & \text{if } \tau H[t] \ge V^{\tau}_{th};\\ \frac{\tau-1}{\tau}(\tau H[t]), & \text{if } 0 \le \tau H[t] \le V^{\tau}_{th};\\ 0, & \text{if } \tau H[t] \le 0. \end{cases}
\end{aligned}$$

Then, we treat $\tau H[t]$ and $(\tau - 1)V[t-1]$ as separate iteration objects. Therefore, we can rewrite $\tau H[t]$ as $H[t]$ without ambiguity, and likewise $(\tau - 1)V[t-1]$ as $V[t-1]$. Finally, by multiplying by the corresponding discretization factor $\theta$, we obtain Eq. (3). Note that since the division operation has been moved to the Reset function, rounding is applied during discretization.

In Eq. (3), the Leaky Integrate-and-Fire (LIF) model degenerates into the Integrate-and-Fire (IF) model when $V^{\tau}_{th} = 1$ and $\tau = \infty$. To facilitate further discussions, we will refer to this set of equations as the LIF (IF) function:

$$2S[t] = LIF(I[t]), \qquad 2S[t] = IF(I[t]). \tag{4}$$

In Eq. (4), the LIF (IF) function outputs twice the spike signal. If left unaddressed, the next Spiking Activation Layer would receive twice the input. To tackle this issue, we propose a multi-level discretization method that can also resolve the division problem in average pooling. Suppose the input signal is given by

$$I[t] = \sum_j \omega_j S_j[t], \tag{5}$$

where $\omega_j$ represents the weight parameter.
For the input to the next layer after the LIF neuron, the original single-spike input can be restored by adjusting the scaling factor from $\theta$ to $\theta/2$ as follows:

$$\hat{I}[t] = \sum_j \mathrm{Discret}\left(\omega_j, \frac{\theta}{2}\right) \cdot 2S_j[t] \approx \theta \cdot I[t]. \tag{6}$$

For the division involved in the pooling layer, the scaling factor is adjusted from $\theta$ to $\theta/n$ as follows:

$$\hat{I}[t] = \sum_j \mathrm{Discret}\left(\omega_j, \frac{\theta}{n}\right) \cdot \sum_k S_k[t] \approx \theta \cdot I[t], \tag{7}$$

where $n$ represents the divisor in the average pooling layer.

By leveraging Theorem 1 and the multi-level discretization method, we can effectively address both the discretization and division challenges, ensuring smooth computation of CSNNs on ciphertexts.

3.3 Homomorphic Evaluation of DiCSNNs

In the framework of FHE-DiCSNN, homomorphic encryption computation is reduced to two main processes: the ciphertext computation for WeightSum and the ciphertext computation for the LIF neuron model.

Ciphertext Computation for WeightSum. In this process, user data such as input images are encrypted, while model parameters like weights remain publicly accessible on the server side. Essentially, FHE-WeightSum represents the dot product between the plaintext weight vector and the ciphertext vector of the input layer. This computation is described as:

$$\sum_j \hat{\omega}_j\, LWE(x_j) = LWE\left(\sum_j \hat{\omega}_j x_j\right).$$

This equation holds under the following conditions:

1) $\sum_j \hat{\omega}_j x_j \in \left[-\frac{p}{2}, \frac{p}{2}\right]$: this is satisfied by choosing a sufficiently large information space $\mathbb{Z}_p$.

2) The noise remains within permissible bounds: this requires selecting an appropriate initial noise $\sigma$ to ensure that the weighted noise $\sum_j |\hat{\omega}_j \cdot \sigma|$ does not exceed the boundary.

Ciphertext Computation for LIF Neurons. The Fire and Reset functions in Eq. (3) are non-polynomial functions, necessitating the use of the programmable bootstrapping method described in Section 2.1. For the FHE-Fire function, we define $g(m)$ as:

$$g(m) = \begin{cases} 1, & \text{if } m \in \left[0, \frac{p}{2}\right);\\ -1, & \text{if } m \in \left[-\frac{p}{2}, 0\right). \end{cases}$$
The ciphertext computation for FHE-Fire is then given by:

$$\begin{aligned}
2\,\text{FHE-Fire}(LWE(m)) &= \text{bootstrap}(LWE(m)) + 1\\
&= \begin{cases} LWE(2), & \text{if } m \in \left[0, \frac{p}{2}\right);\\ LWE(0), & \text{if } m \in \left[-\frac{p}{2}, 0\right) \end{cases}\\
&= LWE(\mathrm{Sign}(m) + 1) = LWE(2 \cdot \mathrm{Spike}).
\end{aligned} \tag{8}$$

For the FHE-Reset function, which applies the leak factor $\frac{\tau-1}{\tau}$ of the Reset function in Eq. (3), we define $g(m)$ as:

$$g(m) \triangleq \begin{cases} 0, & \text{if } m \in \left[\hat{V}_{th}, \frac{p}{2}\right);\\ \frac{\tau-1}{\tau}\, m, & \text{if } m \in \left[0, \hat{V}_{th}\right);\\ 0, & \text{if } m \in \left[\hat{V}_{th} - \frac{p}{2}, 0\right);\\ \frac{p}{2} - \frac{\tau-1}{\tau}\, m, & \text{if } m \in \left[-\frac{p}{2}, \hat{V}_{th} - \frac{p}{2}\right). \end{cases}$$

Both functions produce the desired encrypted results. However, if $m$ falls within the interval $\left[-\frac{p}{2}, \hat{V}_{th} - \frac{p}{2}\right)$, the FHE-Reset function may yield incorrect results. To prevent this, it is essential to ensure that $\hat{H}[t]$ does not enter this range. The following theorem demonstrates that this condition is easily satisfied.

Theorem 2. If $M \triangleq \hat{V}_{th} + \max_t(|\hat{I}[t]|)$ and $M \le \frac{p}{2}$, then $\hat{H}[t] \in \left[\hat{V}_{th} - \frac{p}{2}, \frac{p}{2}\right)$.

Proof.

$$\max(\hat{H}[t]) = \max(\hat{V}[t-1] + \hat{I}[t]) \le \frac{\tau-1}{\tau}\hat{V}_{th} + \max_t(|\hat{I}[t]|) < M \le \frac{p}{2} \tag{9}$$

$$\min(\hat{H}[t]) = \min(\hat{V}[t-1] + \hat{I}[t]) \ge -\max_t(|\hat{I}[t]|) \ge -\frac{p}{2} + \hat{V}_{th} \ge -\frac{p}{2} \tag{10}$$

The above theorem states that as long as $M \le \frac{p}{2}$, the maxima and minima of $\hat{H}[t]$ fall within the interval $\left[\hat{V}_{th} - \frac{p}{2}, \frac{p}{2}\right)$. It can be easily shown that the maximum value generated during CSNN computation must occur in the variable $\hat{H}[t]$. This result not only validates the correctness of the FHE-Reset function but also facilitates the estimation of the maximum value within the information space. Furthermore, it provides a practical guideline for selecting the parameter $p$ for the information space.

Additionally, the bootstrapping procedure refreshes the ciphertext noise, ensuring that subsequent layers of the CSNN inherit the same initial noise level. This capability enables the network to extend to arbitrary depths without concerns about noise accumulation.
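To make the role of the two program functions concrete, the sketch below emulates one discretized LIF neuron of Eq. (3) on plaintext integers reduced into a centered message space. It performs no encryption and calls no TFHE library; it only mirrors what the bootstrapped functions are meant to compute. Several choices are assumptions made for illustration: the centered range $[-p/2, p/2)$, round-half-up rounding of the leak term $\frac{\tau-1}{\tau}\hat{H}[t]$, the values $\theta = 20$ and $\tau = 2$, the input currents, and all function names.

```python
P = 2 ** 14          # message-space modulus (the value reported in Section 4.1)
THETA, TAU = 20, 2   # illustrative scaling factor and time constant
V_TH = THETA * TAU   # discretized threshold, theta * tau as in Theorem 1


def center(x, p=P):
    """Reduce x modulo p into the centered range [-p/2, p/2)."""
    r = x % p
    return r - p if r >= p // 2 else r


def g_fire(m):
    """Program function of FHE-Fire: +1 on [0, p/2), -1 on [-p/2, 0)."""
    return 1 if m >= 0 else -1


def g_reset(m, v_th=V_TH, tau=TAU):
    """Program function of FHE-Reset, restricted to the safe range of Theorem 2."""
    if 0 <= m < v_th:
        # Leak by (tau - 1) / tau, rounded half up with integer arithmetic.
        return (2 * (tau - 1) * m + tau) // (2 * tau)
    return 0  # the neuron fired (m >= v_th) or dropped below V_reset = 0


def lif_step(v_prev, current):
    """One time step of Eq. (3) on integers standing in for ciphertexts."""
    h = center(v_prev + current)            # H[t] = V[t-1] + I[t]
    two_s = g_fire(center(h - V_TH)) + 1    # 2S[t] = Sign(H[t] - V_th) + 1
    return two_s, g_reset(h)


currents = [30, 30, -50, 45]  # made-up discretized input currents
v, spikes, potentials = 0, [], []
for current in currents:
    two_s, v = lif_step(v, current)
    spikes.append(two_s)
    potentials.append(v)

# Negacyclic condition g(v + p/2) = -g(v) required by programmable bootstrapping.
negacyclic = all(g_fire(center(m + P // 2)) == -g_fire(m)
                 for m in range(-P // 2, P // 2))
```

With these inputs the neuron emits the doubled spike values `[0, 2, 0, 2]`, which is why the next layer's weights are discretized with $\theta/2$ in Eq. (6). The exhaustive loop confirms that the sign function satisfies the negacyclic condition over the whole message space.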
4 Experiments

In this section, we empirically evaluate FHE-DiCSNN in terms of time efficiency and accuracy. First, we theoretically analyse the maximum value arising in FHE-DiCSNN, as well as how the required size of the information space and the maximum noise growth depend on the discretisation factor $\theta$. Second, we experimentally evaluate the actual accuracy and time efficiency of FHE-DiCSNN under different combinations of the attenuation coefficient $\tau$ and the discretisation coefficient $\theta$ on different datasets.

4.1 Experimental Setup

Datasets. We use three image datasets in our experiments:

1) MNIST. This dataset includes 70,000 28×28 grayscale images of handwritten digits (0-9). It is split into 60,000 training and 10,000 testing images, with the goal of correctly classifying each handwritten digit.

2) FashionMNIST. This dataset contains 70,000 28×28 grayscale images across 10 clothing categories (e.g., T-shirts, trousers). It is divided into 60,000 training and 10,000 testing images, with the task of accurately identifying the clothing category in each image.

3) Brain Tumor Classification Dataset (Tumor). This preprocessed dataset consists of 7,023 28×28 grayscale brain tumor images, classified into four categories: glioma, meningioma, no tumor, and pituitary. It is split into 5,712 training and 1,311 testing images, with the aim of determining the presence of a brain tumor based on brain imaging data.

Baselines. The performance comparison is made between the plaintext CSNN (Convolutional Spiking Neural Network) shown in Fig. 1, trained on plaintext data and evaluated on a plaintext test set, and FHE-DiCSNN (Fully Homomorphic Encryption Discretized Convolutional Spiking Neural Network), evaluated on encrypted data.

Implementation Details.
We trained the model using the Adam optimizer with a batch size of 256, an initial learning rate of 0.001, and simulations over 4 time steps. The privacy-preserving framework was implemented in C++ using the TFHE library$^7$ for homomorphic encryption. During encrypted inference, the LWE parameters were set with a ciphertext dimension of 128, a TLWE polynomial size of $2^{15}$, and a plaintext message space of $2^{14}$. We tested various combinations of the attenuation parameter $\tau$ and the discretization scaling factor $\theta$.

4.2 Experimental Results

In our experiments, image data were encrypted as LWE ciphertexts. The encrypted images were multiplied by the discretized weights, and the resulting weighted sums were passed through the Spiking Activation Layer. Within this layer, the LIF neuron model was applied to the ciphertext by performing the FHE-Fire and FHE-Reset operations. Bootstrapping was optimized using FFT and parallel computing. This encrypted forward pass was repeated $T$ times, with the resulting outputs accumulated to generate classification scores. After completing these steps, the ciphertext was decrypted, and the class with the highest score was selected as the final classification result. We evaluated various combinations of the decay parameter $\tau$ and scaling factor $\theta$, with the outcomes detailed in Table 1 and Table 2.

The experimental results indicate that both the scaling factor $\theta$ and the decay coefficient $\tau$ have a significant impact on the accuracy of ciphertext predictions. Specifically, larger $\theta$ values tend to improve prediction precision, because the weights are discretized more finely. However, it is crucial to ensure that the choice of $\theta$ matches the size of the information space. Otherwise, an excessively large $\theta$ could lead to data overflow, negatively affecting accuracy.
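Theorem 2 turns this overflow risk into a simple check: the scaled maximum $M \approx \theta \cdot (V_{th} + \max_t |I[t]|)$ must not exceed $p/2$. The sketch below works through that arithmetic for the message space $p = 2^{14}$ stated in the implementation details. The plaintext magnitude of 100 is a hypothetical figure picked only to show the calculation, not a measurement, and the function names are likewise illustrative. Because $M$ is only approximately $\theta$ times the plaintext bound (weights are rounded), the result should be read as a rough guide.

```python
import math

P = 2 ** 14  # plaintext message space from the implementation details


def max_safe_theta(p, real_bound):
    """Largest integer theta with theta * real_bound <= p / 2 (Theorem 2)."""
    return math.floor(p / (2 * real_bound))


def fits_message_space(theta, p, real_bound):
    """True when the scaled maximum M = theta * real_bound stays within p / 2."""
    return theta * real_bound <= p / 2


# Hypothetical plaintext magnitude of V_th + max_t |I[t]|, chosen for illustration.
assumed_bound = 100.0
theta_limit = max_safe_theta(P, assumed_bound)
checks = {theta: fits_message_space(theta, P, assumed_bound)
          for theta in (20, 60, 100)}
```

Under this assumed magnitude, any $\theta$ up to 81 keeps intermediate values inside the message space, while $\theta = 100$ would wrap around modulo $p$.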
As shown in Table 1 and Table 2, FHE-DiCSNN achieves maximum accuracies of 98.00% and 87.29% on the MNIST and FashionMNIST datasets, respectively. Compared with the corresponding plaintext predictions of 98.74% and 90.35%, the accuracy losses are about 0.7 and 3.1 percentage points for the two datasets.

$^7$ https://github.com/tfhe/tfhe

Table 1. Different θ and τ parameters on the MNIST dataset (%)

| | τ = 2.0 | τ = 3.0 | τ = 4.0 | τ = ∞ (IF) |
|---|---|---|---|---|
| θ = 20 | 97.48 | 97.60 | 97.10 | 97.06 |
| θ = 30 | 97.66 | 97.50 | 97.60 | 97.23 |
| θ = 40 | 97.60 | 97.87 | 98.00 | 97.32 |
| θ = 50 | 97.69 | 97.90 | 97.30 | 97.15 |
| θ = 60 | 97.72 | 97.70 | 98.00 | 97.25 |
| θ = 100 | 97.68 | 97.55 | 96.90 | 96.38 |
| Plaintext | 98.78 | 98.88 | 98.74 | 98.75 |

Table 2. Different θ and τ parameters on the FashionMNIST dataset (%)

| | τ = 2.0 | τ = 3.0 | τ = 4.0 | τ = ∞ (IF) |
|---|---|---|---|---|
| θ = 20 | 86.38 | 85.24 | 86.47 | 82.38 |
| θ = 30 | 86.65 | 86.37 | 86.97 | 83.20 |
| θ = 40 | 87.20 | 86.85 | 85.82 | 83.38 |
| θ = 50 | 87.04 | 86.64 | 79.52 | 83.27 |
| θ = 60 | 87.29 | 81.69 | 77.21 | 82.67 |
| θ = 100 | 83.11 | 74.72 | 74.45 | 81.76 |
| Plaintext | 90.35 | 90.05 | 89.99 | 90.63 |

This demonstrates that the FHE-DiCSNN model can handle ciphertext data effectively while maintaining high prediction accuracy, validating its reliability.

In addition to the public datasets mentioned above, we also tested our framework on a medical brain tumor dataset. In the medical field, patient data often carries privacy concerns, making it crucial to perform preliminary diagnostics without exposing sensitive patient information. As shown in Table 3, our FHE-DiCSNN framework achieved an accuracy of up to 87.95% on encrypted Tumor data, a reduction of only 5.4 percentage points compared to the plaintext result.

4.3 Time Consumption

The simulation time $T$, which can be interpreted as the number of cycles required to process a single image, plays a crucial role in determining the time efficiency of the network. A larger $T$ increases the total number of cycles and consequently the number of bootstrapping operations.
In Poisson coding, the inherent randomness demands a sufficiently large $T$ to achieve stable experimental results, which significantly raises the time consumption. In contrast, using a convolutional layer-based spike coding approach enables more stable feature extraction, reducing the simulation time to just 4 cycles. This ensures that Leaky Integrate-and-Fire (LIF) neurons accumulate enough membrane potential to generate spikes effectively.

Bootstrapping, known to be the most time-consuming step in Fully Homomorphic Encryption (FHE), primarily takes place during the computation of LIF neurons in FHE-DiCSNN. Therefore, the number of bootstrapping operations can also serve as a straightforward estimate of the total time consumption. Table 4 compares the CSNN defined in Fig. 1 with a Poisson-encoded SNN of equivalent dimensions.

Table 3. Different θ and τ parameters on the Tumor dataset (%)

| | τ = 2.0 | τ = 3.0 | τ = 4.0 |
|---|---|---|---|
| θ = 20 | 81.46 | 87.95 | 83.75 |
| θ = 30 | 80.42 | 87.87 | 86.50 |
| θ = 40 | 80.78 | 87.19 | 86.04 |
| θ = 50 | 80.01 | 87.41 | 85.97 |
| θ = 60 | 81.79 | 87.53 | 85.66 |
| θ = 100 | 81.62 | 84.75 | 83.60 |
| Plaintext | 93.04 | 93.35 | 93.89 |

Table 4. $T_1$ and $T_2$ represent the simulation time required for the Poisson-encoded SNN and the CSNN, respectively, to achieve their respective peak accuracy. Typically, $T_1$ falls within the range [20, 100], while $T_2$ falls within the range [2, 4].

| | Poisson-encoded SNN [18] | CSNN |
|---|---|---|
| Bootstrapping operations | (784 × 2 + 2 × 160 + 2 × 10) × $T_1$ | (7840 × 2 + 2 × 160 + 2 × 10) × $T_2$ |
| Spiking Activation Layers | 5 × $T_1$ | 6 × $T_2$ |

Table 5. Comparison of the run times of the two models

| | Multi-threading | Without multi-threading |
|---|---|---|
| DiSNN [18] | 2.62 s | 8.32 s |
| Ours | 0.34 s | 8.29 s |

If we do not consider parallel computing, the number of bootstrapping operations can be used as a simple estimate of the network's time consumption. In this case, the time consumption of both Poisson-encoded SNNs and CSNNs will be very large.
However, since the bootstrapping of Spiking Activation Layers and Poisson encoding can be performed in parallel, the time consumption will be proportional to the number of corresponding layers. In the case of parallel computing, CSNN exhibits a time efficiency that is 10 times higher than that of Poisson-encoded SNN. Table 5 presents a comparison of the run times between the two models.

4.4 Parameters Analysis

This section discusses the selection of FHE parameters, focusing on the message space $\mathbb{Z}_p$. Here, p serves as the modulus, ensuring operations remain within $\mathbb{Z}_p$. To prevent overflow during subtraction and maintain correctness, Theorem 2 offers a criterion for the maximum allowable value. As long as

$$\hat{V}_{th} + \max_t\big(|\hat{I}[t]|\big) \approx \theta \cdot \big(V_{th} + \max_t(|I[t]|)\big) = \theta \cdot \Big(V_{th} + \sum_j \omega_j S_j[t]\Big) \le \frac{p}{2} \qquad (11)$$

is satisfied, the value of the intermediate variable will not exceed the message space $\mathbb{Z}_p$. The formula indicates that $\hat{I}$ is proportional to the discretization parameter θ. We estimated the true maximum value of $V_{th} + \sum_j \omega_j S_j[t]$ on the MNIST dataset, and the findings are summarized in Table 6.

A technique from DiNN [2] dynamically adjusts the message space to minimize computational cost and control noise growth, which we also adopt by selecting a smaller plaintext space. Accurate estimation of noise growth is also crucial. Noise grows only during WeightSum operations. For a single WeightSum, the noise increases from the initial value σ to:

$$\sigma \sum_j |\hat{w}_j| \approx \theta \cdot \sigma \sum_j |w_j|. \qquad (12)$$

Eq. (12) shows that the maximum noise growth can be precomputed since the weights are known. Experimental results are summarized in Table 7.

Table 6. Spiking Activation Layer Results for Different Thresholds τ. Each entry is the estimated value of $V_{th} + \max_t(|I[t]|)$.

| | Spiking Layer1 | Spiking Layer2 | Spiking Layer3 |
|---|---|---|---|
| τ = 2 | 29.60 | 70.00 | 24.90 |
| τ = 3 | 36.00 | 86.00 | 32.10 |
| τ = 4 | 85.2 | 175.00 | 51.70 |
| τ = ∞ (IF) | 29.80 | 31.10 | 20.60 |

Table 7. Based on the noise estimation mentioned above, the quantity $\max \sum_j \lvert \omega_j \rvert$ can be employed to estimate the growth of noise in DiCSNNs for various θ.

| | τ = 2 | τ = 3 | τ = 4 | τ = ∞ (IF) |
|---|---|---|---|---|
| $\max \sum_j \lvert \omega_j \rvert$ | 17.42 | 18.87 | 10.24 | 11.89 |

The discussion shows that the message space size and the noise upper bound are both proportional to θ. The experimental results provide scaling factors for estimating these bounds at different θ, allowing the selection of suitable FHE parameters or the use of standard sets like STD128 [21].

5 Conclusion

This paper introduces the FHE-DiCSNN framework, which is built upon the efficient TFHE scheme and incorporates convolutional operations from CNN. The framework leverages the discrete nature of SNNs to achieve exceptional prediction accuracy and time efficiency in the ciphertext domain. The homomorphic computation of LIF neurons can be extended to other SNN models, offering a novel solution for privacy protection in third-generation neural networks. Furthermore, by replacing Poisson encoding with convolutional methods, it improves accuracy and mitigates the issue of excessive simulation time caused by randomness. Parallelizing the bootstrapping computation through engineering techniques significantly enhances computational efficiency. Additionally, we provide upper bounds on the maximum value of homomorphic encryption and the growth of noise, supported by experimental results and theoretical analysis, which guide the selection of suitable homomorphic encryption parameters and validate the advantages of the FHE-DiCSNN framework.

There are also promising avenues for future research:

1. Exploring homomorphic computation of non-linear spiking neuron models, such as QIF and EIF.
2. Investigating alternative encoding methods to completely alleviate simulation time concerns for SNNs.
3. Exploring intriguing extensions, such as combining SNNs with RNNs or reinforcement learning and homomorphically evaluating these AI algorithms.

6 Acknowledgements

This work was supported by the National Natural Science Foundation of China (12401233), NSFC International Creative Research Team (W2541005), National Key Research and Development Program of China (2021ZD0201300), Guangdong-Dongguan Joint Research Fund (2023A1515140016), Interdisciplinary Research Program of HUST (2023JCYJ012), Guangdong Provincial Key Laboratory of Mathematical and Neural Dynamical Systems (2024B1212010004), Guangdong Major Project of Basic Research (2025B0303000003), and Hubei Key Laboratory of Engineering Modeling and Scientific Computing.

References

1. Ballas, N., Yao, L., Pal, C., Courville, A.: Delving deeper into convolutional networks for learning video representations. arXiv preprint arXiv:1511.06432 (2015)
2. Bourse, F., Minelli, M., Minihold, M., Paillier, P.: Fast homomorphic evaluation of deep discretized neural networks. In: Advances in Cryptology–CRYPTO 2018: 38th Annual International Cryptology Conference, Santa Barbara, CA, USA, August 19–23, 2018, Proceedings, Part III 38. pp. 483–512. Springer (2018)
3. Bradbury, J., Merity, S., Xiong, C., Socher, R.: Quasi-recurrent neural networks. arXiv preprint arXiv:1611.01576 (2016)
4. Brakerski, Z., Gentry, C., Vaikuntanathan, V.: (Leveled) fully homomorphic encryption without bootstrapping. ACM Transactions on Computation Theory (TOCT) 6(3), 1–36 (2014)
5. Case, B.M., Gao, S., Hu, G., Xu, Q.: Fully homomorphic encryption with k-bit arithmetic operations. Cryptology ePrint Archive (2019)
6. Cheon, J.H., Han, K., Kim, A., Kim, M., Song, Y.: A full RNS variant of approximate homomorphic encryption. In: Selected Areas in Cryptography–SAC 2018: 25th International Conference, Calgary, AB, Canada, August 15–17, 2018, Revised Selected Papers 25. pp. 347–368. Springer (2019)
7. Chillotti, I., Gama, N., Georgieva, M., Izabachène, M.: TFHE: fast fully homomorphic encryption over the torus. Journal of Cryptology 33(1), 34–91 (2020)
8. Dhillon, A., Verma, G.K.: Convolutional neural network: a review of models, methodologies and applications to object detection. Progress in Artificial Intelligence 9(2), 85–112 (2020)
9. Ducas, L., Micciancio, D.: FHEW: bootstrapping homomorphic encryption in less than a second. In: Annual international conference on the theory and applications of cryptographic techniques. pp. 617–640. Springer (2015)
10. Fan, J., Vercauteren, F.: Somewhat practical fully homomorphic encryption. Cryptology ePrint Archive (2012)
11. Gentry, C.: Computing arbitrary functions of encrypted data. Communications of the ACM 53(3), 97–105 (2010)
12. Gentry, C., Sahai, A., Waters, B.: Homomorphic encryption from learning with errors: Conceptually-simpler, asymptotically-faster, attribute-based. In: Advances in Cryptology–CRYPTO 2013: 33rd Annual Cryptology Conference, Santa Barbara, CA, USA, August 18–22, 2013. Proceedings, Part I. pp. 75–92. Springer (2013)
13. Georgopoulos, A.P., Schwartz, A.B., Kettner, R.E.: Neuronal population coding of movement direction. Science 233(4771), 1416–1419 (1986)
14. Hodgkin, A.L., Huxley, A.F.: A quantitative description of membrane current and its application to conduction and excitation in nerve. The Journal of physiology 117(4), 500–44 (1952)
15. Izhikevich, E.M.: Simple model of spiking neurons. IEEE transactions on neural networks 14(6), 1569–72 (2003)
16. Jolivet, R., J., T., Gerstner, W.: The spike response model: a framework to predict neuronal spike trains. In: Kaynak, O., Alpaydin, E., Oja, E., Xu, L. (eds.) Artificial Neural Networks and Neural Information Processing–ICANN/ICONIP 2003. Lecture Notes in Computer Science, Berlin, Heidelberg
17. Kim, K.H., Hong, S., Roh, B., Cheon, Y., Park, M.: PVANET: Deep but lightweight neural networks for real-time object detection. arXiv preprint (2016)
18. Li, P., Gao, T., Huang, H., Cheng, J., Gao, S., Zeng, Z., Duan, J.: Privacy preserving discretized spiking neural network with TFHE. In: 2023 International Conference on Neuromorphic Computing (ICNC). pp. 404–413 (2023). https://doi.org/10.1109/ICNC59488.2023.10462898
19. Lyubashevsky, V., Peikert, C., Regev, O.: On ideal lattices and learning with errors over rings. In: Advances in Cryptology–EUROCRYPT 2010: 29th Annual International Conference on the Theory and Applications of Cryptographic Techniques, French Riviera, May 30–June 3, 2010. Proceedings 29. pp. 1–23. Springer (2010)
20. Maass, W.: Networks of spiking neurons: the third generation of neural network models. Electron. Colloquium Comput. Complex. TR96 (1996)
21. Micciancio, D., Polyakov, Y.: Bootstrapping in FHEW-like cryptosystems. In: Proceedings of the 9th on Workshop on Encrypted Computing & Applied Homomorphic Cryptography. pp. 17–28 (2021)
22. Regev, O.: On lattices, learning with errors, random linear codes, and cryptography. Journal of the ACM (JACM) 56(6), 1–40 (2009)
23. Simonyan, K., Zisserman, A.: Very deep convolutional networks for large-scale image recognition. arXiv preprint arXiv:1409.1556 (2014)
24. Voulodimos, A., Doulamis, N., Doulamis, A., Protopapadakis, E., et al.: Deep learning for computer vision: A brief review. Computational intelligence and neuroscience 2018 (2018)
25. Wu, Y., Deng, L., Li, G., Zhu, J., Shi, L.: Spatio-temporal backpropagation for training high-performance spiking neural networks. Frontiers in Neuroscience 12 (2017)
26. Wu, Y., Deng, L., Li, G., Zhu, J., Shi, L.: Spatio-temporal backpropagation for training high-performance spiking neural networks. Frontiers in neuroscience 12, 331 (2018)
27. Zhang, M., Wang, J., Wu, J., Belatreche, A., Amornpaisannon, B., Zhang, Z., Miriyala, V.P.K., Qu, H., Chua, Y., Carlson, T.E., et al.: Rectified linear postsynaptic potential function for backpropagation in deep spiking neural networks. IEEE transactions on neural networks and learning systems 33(5), 1947–1958 (2021)
28. Zhang, T., Zeng, Y., Zhao, D., Shi, M.: A plasticity-centric approach to train the non-differential spiking neural networks. In: Proceedings of the AAAI conference on artificial intelligence. vol. 32 (2018)
29. Zhang, W., Li, P.: Temporal spike sequence learning via backpropagation for deep spiking neural networks. Advances in Neural Information Processing Systems 33, 12022–12033 (2020)
30. Zhou, S., Li, X., Chen, Y., Chandrasekaran, S.T., Sanyal, A.: Temporal-coded deep spiking neural network with easy training and robust performance. In: Proceedings of the AAAI conference on artificial intelligence. vol. 35, pp. 11143–11151 (2021)
