Limits of Imagery Reasoning in Frontier LLM Models

Large Language Models (LLMs) have demonstrated impressive reasoning capabilities, yet they struggle with spatial tasks that require mental simulation, such as mental rotation. This paper investigates whether equipping an LLM with an external ``Imager…

Authors: Sergio Y. Hayashi, Nina S. T. Hirata

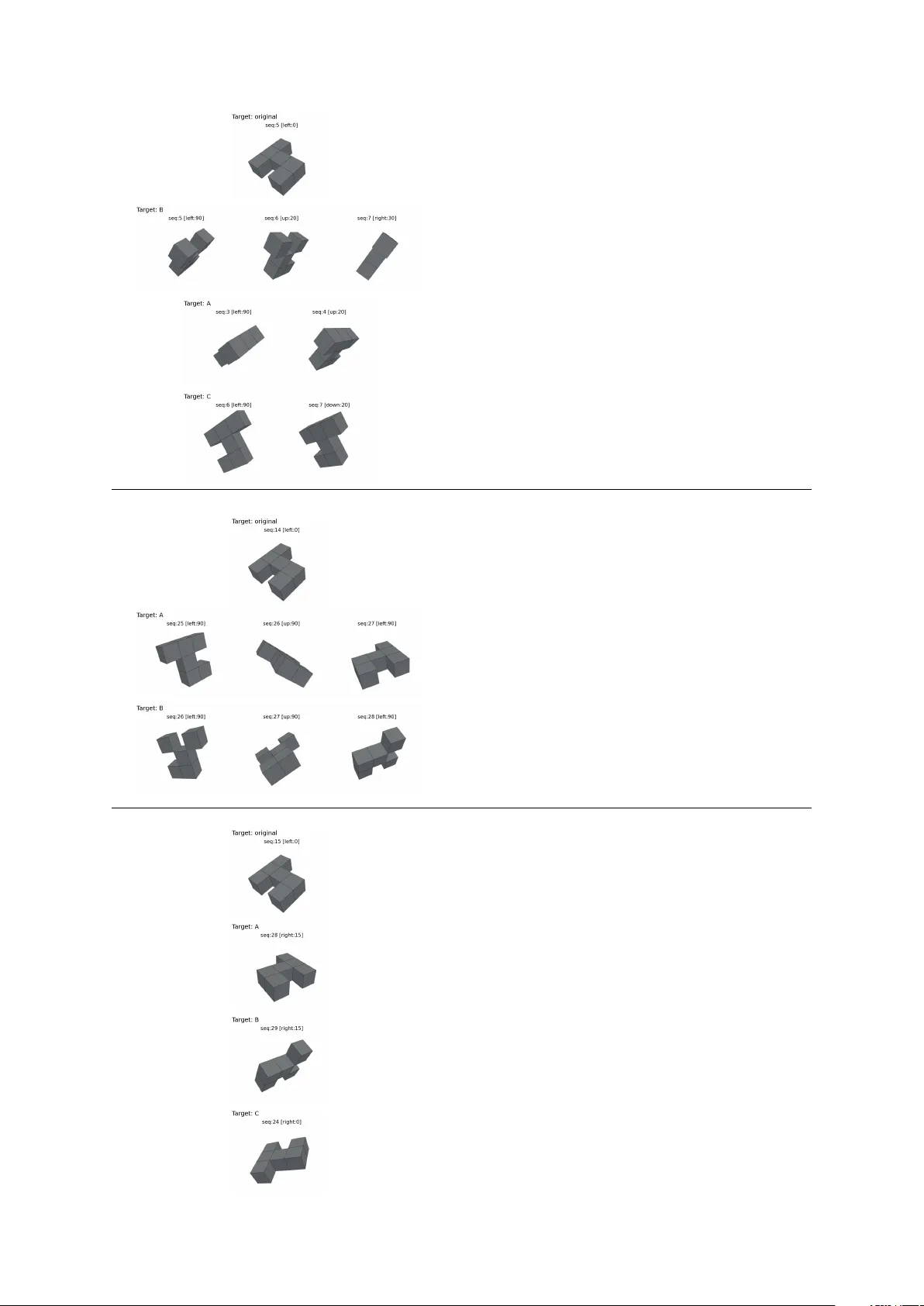

Sergio Y. Hayashi and Nina S. T. Hirata
Institute of Mathematics and Statistics, University of São Paulo, Brazil
March 31, 2026

Abstract

Large Language Models (LLMs) have demonstrated impressive reasoning capabilities, yet they struggle with spatial tasks that require mental simulation, such as mental rotation. This paper investigates whether equipping an LLM with an external “Imagery Module” (a tool capable of rendering and rotating 3D models) can bridge this gap, functioning as a “cognitive prosthetic.” We conducted experiments using a dual-module architecture in which a reasoning module (an MLLM) interacts with an imagery module (Python/PyVista) on 3D model rotation tasks. Performance was lower than expected, with accuracy reaching at most 62.5%. Further investigation suggests that even when the burden of maintaining and manipulating a holistic 3D state is outsourced, the system still fails. This reveals that current frontier models lack the foundational visual-spatial primitives required to interface with imagery. Specifically, they lack: (1) the low-level sensitivity to extract spatial signals such as (a) depth, (b) motion, and (c) short-horizon dynamic prediction; and (2) the capacity to reason contemplatively over images, dynamically shifting visual focus and balancing imagery with symbolic and associative information.

Project page: https://github.com/sergiohayashi/imagery--3d-rotation

Contents

1 Introduction
2 The Human Mental Imagery System and Motivation
2.1 Imagery Reasoning
2.2 Motivation
3 Methodology: The Prosthetic Imagery Architecture
3.1 System Overview
3.2 Model Selection
3.3 Baseline
4 Experiments and Results
4.1 Condition 1: Reset Enabled
4.2 Condition 2: Incremental Rotation with a 360° Search Hint
4.3 Condition 3: Incremental Rotation Only
4.4 Prompt Invariance and Cognitive Limits
4.5 Model ablation study
4.6 Isolating Core Spatial Capabilities
4.6.1 Identify small rotation
4.6.2 VGGT
4.6.3 Predict the after-rotation state
5 Discussion
5.1 Functional imagery deficit (metaphor: ‘functional aphantasia’)
6 Conclusion
A Prompts
B Resolution sample

1 Introduction

Human reasoning relies heavily on mental imagery (Shepard and Metzler, 1971; Kunda, 2018), the ability to visualize and manipulate objects in the “mind’s eye” (Kosslyn and Pylyshyn, 1994). Theories such as Paivio’s Dual-Coding Theory (Paivio, 1986) and Kosslyn’s Perceptual-Anticipation Theory (Kosslyn, 1980) suggest that this capability is distinct from verbal reasoning. While Multimodal Large Language Models (MLLMs) process images, they treat them as sequences of tokens, lacking the holistic, transformable nature of human mental images (Vaswani et al., 2017; Kosslyn, 1980).

This paper explores the hypothesis that current LLMs lack an internal mechanism for visual state simulation, a limitation we refer to as functional “aphantasia”¹ (as an analogy for illustration purposes).
While an external tool can theoretically supply the persistent 3D structural representation, our experiments show that LLMs still fail to utilize it. Concretely, we argue that the LLM side lacks the basic perceptual primitives needed to close the reasoning loop. We operationalize these missing primitives as deficits in (1) extracting and tracking low-level spatial signals (e.g., depth, movement, short-horizon dynamic prediction), and (2) engaging in contemplative, attention-driven visual reasoning rather than defaulting to symbolic, word-based associations.

2 The Human Mental Imagery System and Motivation

The study of human mental models has a long history; these theories can help us understand the missing capabilities of LLMs when human cognition is used as a blueprint.

2.1 Imagery Reasoning

For over 2000 years, it was believed that the human mind functioned in a “perceptual” manner, based on mental images. However, between the 1950s and 1970s (Fodor, 1975), influenced largely by linguists, the cognitive revolution took hold, and the prevailing view became that the mind is predominantly “computational” or symbolic; that is, it thinks via sequential symbol manipulation (Pylyshyn, 1973).

Nevertheless, the view that human reasoning includes an imagery component persists. In this model, alongside sequential symbolic elements, there exists a representation of an “abstract image,” which possesses a structural, holistic, and hierarchical nature. This component accompanies, shapes, and supports the sequential processing (and is bidirectionally linked to it).

¹ We use ‘functional aphantasia’ strictly as a metaphorical label for the operational deficits defined here; we make no claims about human cognition or clinical conditions.
Recent evidence suggests that current LLMs exhibit behavior analogous to a verbal reasoning module, corresponding to the left hemisphere of the brain, while simultaneously displaying deficiencies in characteristics associated with the right hemisphere (spatial/holistic processing), thus renewing interest in examining LLMs through this lens.

According to Paivio’s Dual Coding Theory (Paivio, 1986), human cognition operates through two distinct systems: a verbal one, based on language (logogens), and a nonverbal one, based on mental images (imagens). Kosslyn (1980) argues for the so-called Depictive Model of Imagery, which views images as closely related to visual perception. Barsalou’s Perceptual Symbol Systems (PSS) posits that images can be shaped and simulated to support cognition (Barsalou, 1999). Similar theories have been proposed by Lake et al. (2017) and Johnson-Laird (1989). Experiments such as those by Shepard and Metzler (1971) helped reinforce the theory that people think visually, specifically by performing mental rotation of 3D images to solve matching problems (response times increase linearly with the angular disparity between the objects).

One characteristic of this model is role separation and the existence of bidirectional communication. Although the specific nature of this communication, in terms of exactly what information it carries, remains unclear, it is reasonable to assert that the imagery component provides visual information that supports reasoning. The imagery module is not static, but dynamic; it is shaped by the reasoning of the logogen and works collaboratively. It is not, for instance, merely a passive representation like an unchanging photograph.

Compared with computational image models, mental imagery has several evident differences.
– Low resolution: The human brain does not represent the real world in high resolution, but rather in low resolution, more like a silhouette that supports dynamic abstraction.
– Manipulation: The human brain can manipulate an image or 3D object by performing operations such as rotation and translation.
– Focus and attention: The human brain can mentally shift focus and attend to specific parts of the mental image when necessary. Although not as precise as externally directed attention (such as eye movements during reading), the human brain can do this internally as well.
– Abstraction: The brain can create abstract images that have no counterpart in the real world, as seen in accounts such as Einstein’s (Kunda, 2018) regarding problem-solving.
– Memory: The human brain can retain certain stages of image transformation in working memory, creating the sensation of holding a short, low-resolution, video-like sequence.

Based on this premise and through this lens, we can draw a comparison with what is currently observed in LLMs. Recent studies have demonstrated interesting results, suggesting that imagery theory may provide indications on how to improve LLMs.

We know that Transformer-based LLMs are inherently sequential and autoregressive, lacking an explicit, holistic structured representation useful for imagery, though this architectural trait alone does not inherently preclude visual reasoning capabilities. However, recent studies have shown that strong parallels exist. Cai et al. (2025) show that even the most recent models, with reasoning capabilities that beat human-level performance in many cognitive tests, fall significantly short in visual tasks that would be trivial for a human, such as 3D rotation. Another study (Lei et al., 2025) shows that activation patterns resemble signals from the brain’s left hemisphere, although it also cites a slight resemblance to the right side.
A recent study (Wang et al., 2024) demonstrated that when a model is trained with images, it performs better even on text-only problems that involve no images. In other words, images aid the model’s overall encoding, demonstrating that the Transformer also benefits from training on images.

Some studies have tried to overcome these limitations in the context of the Transformer itself, maintaining its sequential, token-based nature, by forcing the inclusion of intermediate visual representations, which helps in solving problems of a visual nature. Attempts include using chain-of-thought prompting, encouraging the model to generate image tokens in many forms (Rose et al., 2023; Li et al., 2025; Xu et al., 2025; Yang et al., 2025), or attempting to modify part of the model to generate images as latent representations in downstream layers (Yang et al., 2025). Some approaches rely on external tools, for example, those that provide image or spatial data (Huang et al., 2025), feedback from external representations (Wang et al., 2025b), which uses tags like [x1,y1,x2,y2], or external world models (Ha and Schmidhuber, 2018; Wu et al., 2025b). Others have tried to improve image representation by providing images at different scales (Shi et al., 2024a) or modifying the architecture slightly such that image attention is preserved when integrated with text models (Zhang et al., 2024). Huang et al. (2025) implement the idea of providing visual information through an external perceiver agent, paired with a small purely textual LLM, thereby creating a multimodal model. None of these models represents the imagery module as an independent module with bidirectional communication and image manipulation capabilities.

Other models have gone further.
Instead of treating images as patches and tokens, as was done in the original Transformer integration, they represent them using differentiable elements, such as Gaussian Splatting (Kerbl et al., 2023; Shi et al., 2024b; Wu et al., 2025a). This facilitates the model’s ability to manipulate data consistently, performing transformations, for example. While the model handles images more natively, it is difficult to believe that humans, when thinking with images, think in this way, with high-precision images and calculated 3D transformations. For humans, imagery in reasoning may more closely resemble a “visual sketchpad” (Baddeley and Hitch, 1974).

The study by Su et al. (2025) effectively summarizes the current consensus in this area, envisioning three progressive stages toward full imagery capability: (1) Tool-Driven Visual Exploration, (2) Programmatic Visual Manipulation, and (3) Intrinsic Visual Imagination. As the authors note, realizing this final stage requires a critical innovation: the architectural integration of generative and reasoning capabilities within a single, unified model. Our work aligns with stage (2) of this proposal.

2.2 Motivation

Based on these observations, we ask: what happens if we externally provide a tool that carries the burden of the hard work (namely, sustaining the image representation and enabling manipulation) to support the reasoning part? For this, we propose a compact system that incorporates an artificial imagery module and supports communication in a highly simplified setting. We then evaluate whether and to what extent this model improves performance; if it does not, we analyze the remaining limitations as steps toward genuine imagery-based reasoning.
3 Methodology: The Prosthetic Imagery Architecture

To simulate the interaction between verbal and visual systems, we designed a dual-module agentic loop (Figure 1) and evaluated it on the SpatialViz 3D rotation benchmark (Wang et al., 2025c). The task is a standard mental rotation problem: given one original object and three alternatives (A, B, C), the model must determine which alternative cannot be obtained from the original by rotation alone (Shepard and Metzler, 1971; Wang et al., 2025c). This task is notoriously easy for humans, yet recent frontier models achieve very low scores, presenting a performance gap from human baselines of more than 45% (Cai et al., 2025).

The objects in the dataset are composed of uniform cubes, with no more than 2 levels in height and no more than 5 in width or depth (relative to axes). Thus, they have simple structures, and the views (rotated states) are such that they present no ambiguity or aliasing. Even a child can solve the problem without much difficulty, yet frontier models often fail.

Figure 1: Schematic of the visual feedback loop. The LLM functions as the Reasoning Module, issuing rotation commands to the stateful Imagery Module. The imagery module processes these commands and returns a 2D snapshot of the object from the updated viewpoint.

3.1 System Overview

The system consists of two components.

Reasoning Module. A frontier multimodal LLM (primarily GPT-5.2 in the experiments reported here) acts as the agent that conducts the reasoning process and makes decisions. The model does not manipulate 3D geometry directly; it relies on the imagery module.

Imagery Module. This module is responsible for the imagery component of the process. It maintains a persistent 3D state for each object and executes manipulations requested by the reasoning module.
It renders a 2D projection from the current camera angle, simulating human visual perception.

The problem-solving process is iterative: the reasoning module issues commands and “sees” the result from the updated angle. The imagery module is implemented in Python using PyVista. The flow is controlled by an external loop until the task is resolved.

Pose calibration / initialization. For each object in the problem, we performed a one-time manual camera-pose calibration to align the PyVista-rendered object with the viewpoint shown in the benchmark prompt. Concretely, we interactively adjusted rotation parameters (yaw/pitch/roll in camera space, i.e., relative to the current view) until the rendered snapshot visually matched the reference image. We then recorded the resulting rotation parameters and hard-coded them into the dataset construction script. During evaluation, the imagery module always starts from these fixed calibrated poses; no per-model or per-run adjustment is performed.

The rotation commands are defined in camera space as orbit-like movements around the object’s center. The direction and magnitude are defined relative to the current state, using intuitive terms like left/right and up/down. For example, “left:30” rotates the object left by 30 degrees relative to the current view, analogous to turning it in your hands while your viewpoint remains fixed. There is no explicit axis definition, keeping it as close as possible to how humans think. We do not typically think in terms of x, y, and z axes when rotating an object in our hands. Multiple commands for a single target are allowed, generating a grid of snapshots after each rotation step, which can provide a sense of continuous movement.

In each turn the reasoning module is provided with its memory (including the sequence of commands issued so far), the images generated in the last iteration, and the original problem statement.
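The camera-space command scheme described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the authors' implementation: the axis layout (x right, y up, z toward the viewer), the sign chosen for each direction, the exact command grammar, and the names `COMMANDS`, `parse_command`, `ImageryState`, and `same_orientation` are all hypothetical. Rendering (done with PyVista in the paper) is left out; only the persistent orientation state is modeled.

```python
import math

# Assumed camera axes: x points right, y points up, z points toward the viewer.
# The sign attached to each direction is an illustrative assumption.
COMMANDS = {
    "left": ("y", -1.0),
    "right": ("y", 1.0),
    "up": ("x", -1.0),
    "down": ("x", 1.0),
    "rotate:cw": ("z", -1.0),
    "rotate:ccw": ("z", 1.0),
}

IDENTITY = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]


def axis_rotation(axis, degrees):
    """Return the 3x3 matrix for a right-handed rotation about x, y, or z."""
    c, s = math.cos(math.radians(degrees)), math.sin(math.radians(degrees))
    if axis == "x":
        return [[1.0, 0.0, 0.0], [0.0, c, -s], [0.0, s, c]]
    if axis == "y":
        return [[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]]
    if axis == "z":
        return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]
    raise ValueError(f"unknown axis: {axis!r}")


def matmul(a, b):
    """Multiply two 3x3 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]


def same_orientation(a, b, tol=1e-9):
    """Compare two orientation matrices entry by entry."""
    return all(abs(a[i][j] - b[i][j]) < tol for i in range(3) for j in range(3))


def parse_command(text):
    """Parse 'left:30' or 'rotate:ccw:35' into (axis, signed_degrees)."""
    name, _, angle = text.strip().lower().rpartition(":")
    if name not in COMMANDS:
        raise ValueError(f"malformed rotation command: {text!r}")
    axis, sign = COMMANDS[name]
    return axis, sign * float(angle)


class ImageryState:
    """Persistent orientation of one object, starting from a calibrated pose."""

    def __init__(self, calibrated_pose=None):
        self.initial = calibrated_pose or IDENTITY
        self.orientation = self.initial

    def apply(self, command):
        axis, degrees = parse_command(command)
        # Pre-multiplying rotates about the fixed camera axes, so each
        # command is interpreted relative to the current view.
        self.orientation = matmul(axis_rotation(axis, degrees), self.orientation)
        return self.orientation

    def reset(self):
        self.orientation = self.initial
```

Under these assumptions, `ImageryState().apply("left:30")` followed by `apply("right:30")` returns to the starting pose, while swapping the order of `"left:90"` and `"up:90"` gives a different orientation, which is why the module has to track state across turns rather than sum angles per direction.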
The system guarantees that all objects (whether as a result of a rotation command or in their current state) are provided at least once in each iteration.

The system is highly sensitive to prompt design. We arrived at the final prompt (Appendix A) iteratively by observing failure modes and refining instructions until the LLM reliably engaged with the imagery module. This included explaining the iterative process, defining the command set, and establishing minimum incentives, such as requiring a minimum number of iterations.

Although prompting strategies can affect system performance, they cannot overcome the model’s intrinsic limitations, nor do they qualitatively alter the core findings discussed in this paper.

3.2 Model Selection

Preliminary tests suggested GPT-5.2 performed best compared to other models. For reasons of cost, runtime, and reproducibility, our main experiments were conducted using GPT-5.2 (model GPT-5.2-2025-12-11).

We emphasize that the main qualitative behaviors observed in this paper were not unique to a single model. Across all frontier systems, we observed the same underlying pattern. Therefore, because our focus is more on qualitative analysis, we utilized GPT-5.2 as a representative baseline for current frontier MLLMs.

3.3 Baseline

According to the original SpatialViz study, the highest frontier-model performance on this task was 36.25% (Claude-3.7-Sonnet), while human performance was approximately 79.16% (Wang et al., 2025c). More recent studies (Cai et al., 2025) indicate that this gap persists even for newer models such as GPT-5 and Gemini-2.5-Pro. We also conducted a local, single-turn re-evaluation of current frontier models. As shown in Table 1, GPT-5.2 (solving the problem directly without special prompting or tools) reaches 50.0%, establishing a stronger baseline than previously reported systems, but still leaving a large gap to human spatial reasoning.
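For reference, the ground truth behind these accuracy figures is a purely geometric property of the cube stacks described at the start of Section 3. The sketch below decides it exactly, assuming each stack is available as a set of integer cube coordinates. That representation is hypothetical: the benchmark supplies rendered images, and none of the names here (`ROTATIONS`, `normalize`, `same_up_to_rotation`, `odd_ones_out`) come from the paper. It enumerates the 24 axis-aligned rotations and compares translation-normalized coordinate sets.

```python
from itertools import permutations, product


def _proper_rotations():
    """Enumerate the 24 axis-aligned rotations as (axis permutation, signs)."""
    rotations = []
    for perm in permutations(range(3)):
        inversions = sum(perm[i] > perm[j]
                         for i in range(3) for j in range(i + 1, 3))
        parity = -1 if inversions % 2 else 1
        for signs in product((1, -1), repeat=3):
            # Determinant +1 keeps rotations and discards mirror reflections.
            if parity * signs[0] * signs[1] * signs[2] == 1:
                rotations.append((perm, signs))
    return rotations


ROTATIONS = _proper_rotations()


def normalize(cubes):
    """Translate a stack so that its minimum corner sits at the origin."""
    mins = [min(c[i] for c in cubes) for i in range(3)]
    return frozenset(tuple(c[i] - mins[i] for i in range(3)) for c in cubes)


def rotate(cubes, rotation):
    """Apply one signed axis permutation to every cube coordinate."""
    perm, signs = rotation
    return {tuple(signs[i] * c[perm[i]] for i in range(3)) for c in cubes}


def same_up_to_rotation(a, b):
    """True if stack b can be obtained from stack a by rotation alone."""
    target = normalize(b)
    return any(normalize(rotate(a, r)) == target for r in ROTATIONS)


def odd_ones_out(original, options):
    """Return the labels of options that no rotation of the original produces."""
    return [label for label, cubes in options.items()
            if not same_up_to_rotation(original, cubes)]
```

As a usage example, a four-cube "twist" such as `{(0, 0, 0), (1, 0, 0), (1, 1, 0), (1, 1, 1)}` matches its own 90-degree turn but not its mirror image, so a mirrored option would be reported as the odd one out. Whether the benchmark's distractors are mirror images or differ in some other way is not stated in the text; the mirror case is only an illustration.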
4 Experiments and Results

For ablation purposes and to highlight behavioral characteristics, we performed experiments under different conditions.

4.1 Condition 1: Reset Enabled

This setup serves as an ablation. Here, the model was allowed to use a reset operation to revert objects to canonical viewpoints. Because the 3D objects were constructed in exactly the same coordinate space, resetting two matching objects brings them to exactly the same canonical view.

Under this condition, the task is reduced to simple 2D image comparison, bypassing the need for dynamic rotation. In the best prompt variant, the model was explicitly encouraged to use the reset command (requesting a top view and horizontal view), and accuracy reached up to 97.5% (85–97.5% across prompt variants), suggesting that identifying the odd one out was not fundamentally difficult and could be resolved in an unambiguous manner once perfectly aligned.

4.2 Condition 2: Incremental Rotation with a 360° Search Hint

Here, we instructed the model to trigger a sequence of rotations to obtain a full 360° trajectory view, providing examples of both short (6 steps) and long (20 steps) sequences. This approach theoretically allowed the model to simply search for a matching view and conclude immediately, bypassing the need to plan incremental rotations.

Although this strategy could bypass the need for spatial planning and fall back to pure image matching, surprisingly, the model achieved only 52.5% accuracy (measured in a single run). Despite making the task mechanically much easier, performance remained moderate.

Qualitative analysis suggested that the model often struggled to focus on a specific matching frame when presented with a long grid sequence of snapshot images. Instead of identifying a matching frame directly, it frequently reverted to verbal inference about the object’s structure.
Furthermore, although a 360° orbit provides many facets, it is not guaranteed to hit the exact target angle; nonetheless, the primary bottleneck appeared to be the visual comparison process itself rather than the absence of corresponding viewpoints.

4.3 Condition 3: Incremental Rotation Only

This is our main experimental setup. The prompt was crafted to explain the mechanics of the iterative process, how to work with the tool, and the command definitions, without turning it into a step-by-step tutorial. We explicitly mentioned that using the tool makes it possible to reach the exact same angle as the target view (original). We also required at least 5 iterations, because otherwise the system tended to answer immediately relying solely on zero-shot reasoning. The full prompt is available in Appendix A.

In this setup, we obtained a score ranging from 50% to 62.5% (across 10 runs, with the model and prompt frozen). An example of the resolution thread is presented in Appendix B.

Qualitative analysis showed that the model indeed used the imagery module (avoiding immediate answers), but treated it primarily as an aid for static object inspection. At no point did we observe the model actively confirming it had achieved a geometric match through continuous rotation. This behavior was consistent across all experiments and all evaluated models. For illustration, the model’s typical rationale resembled the following:

“From rotations, original appears to be 5 cubes: a 2×2 top plate (4 cubes) with one extra cube attached below one corner (making a ’foot’). Option B visually matches this: 2x2 plate with a single cube sticking out from a side and one cube above? need verify carefully. Option A looks like a 1x4 bar with one cube on top (still 5) arranged differently (not 2x2). Option C is a 3-cube vertical column with a 2-cube horizontal arm at top and 1 cube at bottom side (total 5) but adjacency differs. Next: rotate original to see if can form A or B; also rotate B/A/C to check for 2x2 plate invariant. We’ll do additional rotations focusing on revealing 2x2 face on each.”

Figure 2: SpatialViz 3D Rotation sample problem. The question is: “The left image shows the original cube stack made of equal-sized small cubes. Which of the options on the right cannot be obtained by rotating the original cube stack? Please answer from options A, B or C.”

4.4 Prompt Invariance and Cognitive Limits

LLMs are highly sensitive to prompting. We conducted extensive prompt engineering to rule out deficiencies stemming solely from poor prompts, although we did not attempt to find the absolute maximum possible accuracy through overfitting. Variations tested included:

• Setting a minimum number of iterations (otherwise, the model answers immediately without relying on rotations).
• Instructing the model to use the imagery module to perform continuous rotations and attempt a direct geometric match to the target, prioritizing this over structural deduction.
• Step-by-step resolution: first grasping the overall structure, then focusing on specific aspects or alternative objects.
• Tracing the partial solution by classifying each alternative (e.g., ‘solution candidate’, ‘no match’).
• Estimating the rotational distance before emitting rotation instructions.

None of these variations yielded meaningful improvements beyond the 62.5% ceiling.

Table 1: Evaluation of frontier models on the SpatialViz 3D rotation task (3DR), compared to human baselines. The bottom section details our experimental phases isolating spatial reasoning mechanisms, revealing that unrestricted manipulation yields artificially high performance (97.5%) through 2D matching rather than true 3D spatial simulation.

| SpatialViz - 3DR: Evaluated Entity | Accuracy (%) |
|---|---|
| Human Performance (Wang et al., 2025c) | 79.16 |
| Baseline Models | |
| Llama-4-Maverick-17B-128E-Instruct (Wang et al., 2025c) | 40.00 |
| Claude-3.7-Sonnet (Wang et al., 2025c) | 36.25 |
| Baseline Models - Our Re-evaluation | |
| GPT-5.2 | 50.00 |
| Gemini-3.5-Pro | 40.00 |
| Claude-3.7-Sonnet (20250219) | 37.50 |
| Claude-Opus-4.5 (20251101) | 37.50 |
| Grok-4.1-Fast-Reasoning | 37.50 |
| Qwen3-VL-30B-A3B-Instruct | 20.00 |
| Experiment results | |
| Ablation condition 1: With reset (canonical views) | 85 – 97.5 |
| Ablation condition 2: With 360° view hint | 52.5 |
| Normal condition: Incremental rotation, minimal prompt | 55 – 62.5 |

4.5 Model ablation study

We conducted an ablation study across different frontier LLMs under strictly identical conditions. gpt-5.2 demonstrated the most reliable tool-use behavior, leading us to optimize and freeze our prompt design based on its responses, achieving 50.0%–62.5% in the final experiments. Interestingly, gpt-5.4 performed slightly worse under the same conditions, yielding 45.0% to 52.0% across 3 runs. Other models struggled significantly to maintain the rigorous, multi-step agentic loop required for the task: gemini-3-pro-preview failed to complete the benchmark even after 12 hours of execution, gemini-3-flash-preview suffered from severe hallucinations forcing us to halt execution, and grok-4.1-fast-reasoning could not follow the output syntax (e.g., outputting cw instead of the required rotate:cw, or simply “0”). Consequently, we utilized gpt-5.2 as our primary experimental vehicle due to its unmatched operational stability in this specific tool-use environment.

Figure 3: The models were asked to detect the rotation (direction and angle) required to transform the left image into the right image. The correct answer is “left:30”. All tested models answered incorrectly: GPT-5.2 predicted “right:90”, GPT-5.1 predicted “right:45”, and Gemini-3-Flash predicted “rotate:ccw:35,left:45,up:20”. Here, “ccw” means counterclockwise rotation.

4.6 Isolating Core Spatial Capabilities

4.6.1 Identify small rotation

To understand the lower-than-expected performance, we isolated the fundamental unit of spatial reasoning: detecting rotation. We presented the models (GPT-5.2, Gemini 3 Pro) with two images of the same object (from the SpatialViz dataset), where the second was rotated by a small angle in a specific direction (yaw, pitch, or roll). We then asked: “Estimate the rotation direction and angle.”

In a test with three rotation axes and the rotation magnitude fixed at 15°, the results for GPT-5.2 are summarized in Table 2. It correctly predicted only one condition (Right 15°). The failure modes reveal a profound inability to infer motion from two sequential images. For instance, when the object was rotated left, the model predicted right. Crucially, in the down rotation scenario, the model incorrectly predicted up, justifying its answer by noting that “the top part is visible”, conflating static feature visibility with the direction of motion. Similarly, planar rotations (clockwise and counterclockwise) yielded unrelated directional predictions (e.g., predicting “right” or “up”). A more detailed explanation of how the rotation was generated (in camera space) did not improve these results.

Figure 4: VGGT correctly recognized the rotation direction and angle.
Translation (Direction): [-0.3297236 -0.01104623 0.07304744]
Rotation (Degrees): [-0.90105244 18.20889242 1.1002316]
Pitch (X): -0.90°
Yaw (Y): 18.21°
Roll (Z): 1.10°

4.6.2 VGGT

Regarding this specific capability, certain specialized vision transformers, such as VGGT (Wang et al., 2025a), are explicitly trained to recover camera extrinsics. In the example shown in Figure 3, VGGT correctly output a yaw of 18.21° (within a margin of error of less than 2 degrees). See Figure 4.

To systematically evaluate VGGT’s robustness, we generated a controlled dataset using the SpatialViz 3D models by applying incremental camera orbits in three intuitive directions, yaw (left/right), pitch (up/down), and roll (clockwise), from 0° to 360° in 30° steps. VGGT’s prediction correctness was verified by re-applying the inferred rotations (Euler angles) in PyVista and manually checking visual alignment with ground-truth snapshots. Results showed perfect matching for all yaw angles and accurate predictions for pitch up to ±90° (and 330°), but it failed on extreme pitch angles (120°–270°) and most roll angles beyond ±30°. These failures manifested as axis-reversed interpretations due to the model’s training bias toward upright real-world poses. Manual validation was straightforward, as the match was either visually perfect or mirrored across an axis (which we considered a failure).

We further tested VGGT on the full 3D rotation dataset (40 problems; 80 image pairs moving from the “original” view to the alternatives, specifically the 2 out of 3 that are mathematically matchable). In this setting, VGGT’s results are impressive given that the rotational disparities are often large. The model predicts a direct transformation matrix (Euler angles), which fundamentally differs from the trial-and-error simulation humans might use.
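The pitch/yaw/roll readout reported for Figure 4 can be related to a pair of camera orientations with a short calculation. The sketch below is only an illustration under stated assumptions: it takes two 3x3 orientation matrices, forms the relative rotation, and decomposes it as Rz(roll) · Ry(yaw) · Rx(pitch). The X/Y/Z labelling follows the Figure 4 output, but the composition order, the sign conventions, and all function names are assumptions; the text does not specify how the VGGT output was post-processed.

```python
import math


def rot_x(degrees):
    """Right-handed rotation about the x axis (labelled pitch here)."""
    c, s = math.cos(math.radians(degrees)), math.sin(math.radians(degrees))
    return [[1.0, 0.0, 0.0], [0.0, c, -s], [0.0, s, c]]


def rot_y(degrees):
    """Right-handed rotation about the y axis (labelled yaw here)."""
    c, s = math.cos(math.radians(degrees)), math.sin(math.radians(degrees))
    return [[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]]


def rot_z(degrees):
    """Right-handed rotation about the z axis (labelled roll here)."""
    c, s = math.cos(math.radians(degrees)), math.sin(math.radians(degrees))
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]


def matmul(a, b):
    """Multiply two 3x3 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]


def transpose(a):
    """Transpose a 3x3 matrix; for a rotation this is also its inverse."""
    return [[a[j][i] for j in range(3)] for i in range(3)]


def relative_rotation(pose_a, pose_b):
    """Rotation that takes orientation pose_a to orientation pose_b."""
    return matmul(pose_b, transpose(pose_a))


def euler_degrees(r):
    """Decompose r = Rz(roll) @ Ry(yaw) @ Rx(pitch) into (pitch, yaw, roll).

    The triple is unique only while |yaw| < 90 degrees; beyond that the
    same matrix admits a second set of angles.
    """
    pitch = math.degrees(math.atan2(r[2][1], r[2][2]))
    yaw = math.degrees(math.asin(max(-1.0, min(1.0, -r[2][0]))))
    roll = math.degrees(math.atan2(r[1][0], r[0][0]))
    return pitch, yaw, roll
```

For instance, two views that differ by a pure rotation about y, such as `rot_y(10.0)` and `rot_y(28.21)`, decompose to a yaw of about 18.21° with pitch and roll near zero. Re-applying the recovered angles with `rot_z`, `rot_y`, and `rot_x` reproduces the relative rotation, which mirrors the re-application check described above.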
Considering that VGGT is a Transformer-based model, this result provides strong evidence that the Transformer architecture is not inherently a bottleneck for learning geometric extraction. The limitations observed in current generalist MLLMs likely stem from training strategies rather than architecture; specifically, generalist models lack the supervised training on camera extrinsics found in datasets like Co3D. However, we must also note that MLLMs are primarily text-centric reasoning systems, operating very differently from specialized models like VGGT.

4.6.3 Predict the after-rotation state

Beyond the capability to perceive rotation (identifying direction and angle), spatial reasoning requires the model to conceptually visualize the resulting state when a rotation is applied, for example, simulating a small rotation in an arbitrary direction in order to issue the correct rotation instruction. To investigate this capability, we asked four frontier image-generation models (gpt-5.2, gemini-3-pro-image-preview, gemini-3.1-flash-image-preview, grok-imagine-image) to generate a post-rotation image given an initial object image. All models failed completely (Figure 5); they simply output the original image unchanged. Prompt variations did not alter this outcome.

Figure 5: The models were asked to generate an image rotated by 30 degrees to the left (camera space). None of the models were able to do so correctly, or even produce a close approximation.

Table 2: Performance of GPT-5.2 on 15-degree rotations across different axes. The model fails to generalize directionality, relying instead on spurious visual cues.
| True Direction | Angle | Model Prediction | Outcome |
|---|---|---|---|
| Left | 15° | Right | ✗ |
| Right | 15° | Right | ✓ |
| Up | 15° | Right (30°) | ✗ |
| Down | 15° | Up (reason: top visible) | ✗ |
| Clockwise | 15° | Right (30°) | ✗ |
| Counter-Clockwise | 15° | Up | ✗ |

5 Discussion

5.1 Functional imagery deficit (metaphor: ‘functional aphantasia’)

We use “functional aphantasia” strictly as a conceptual metaphor to encapsulate several measurable computational deficits observed in our experiments. Even when equipped with an external tool that performs spatial rotations and prompted to engage in imagery-based thinking, the model remains unable to effectively “simulate” rotation to solve the problem.

Crucially, our architecture successfully outsources the maintenance of the persistent 3D model to the programmatic module. Therefore, the failure of the system highlights that holding a 3D state is only half the battle. To actually use this state, the LLM must possess basic visual-spatial primitives to interpret the generated snapshots. We identify the lack of these foundational primitives as the core cause of the imagery deficit:

Insensitivity to movement: When provided with two images, the model consistently fails to perceive them as a sequence of snapshots of an object in motion. It treats them as isolated, unrelated frames, even when explicitly prompted to view them as a human naturally would, recognizing the same object and “imagining” its continuous movement. The results also suggest that the model lacks the computational equivalent of “stereo matching” (Yang et al., 2025) to extract spatial information within and between images. In humans, binocular vision provides depth cues that make 3D structure self-evident, allowing us to solve spatial matching problems by mentally simulating rotation step by step. LLMs, lacking this spatial intuition, treat rotation primarily as abrupt 2D changes. Specialized solutions like VGGT (Wang et al., 2025a) show promise, achieving ~51% accuracy on our 3D dataset with near-perfect yaw recovery, though they remain limited by dataset biases regarding large pitch and roll angles. Consequently, a more realistic path forward is to explicitly model spatial reasoning via pose tracking and projection, a capability that our results indicate is currently missing in general-purpose LLMs.

Inability to predict dynamic states: The model cannot “imagine” or generate the state of an object after a rotation, as demonstrated by our simple experiment with frontier image models (Figure 5). In principle, an MLLM could employ a generate-and-test strategy (synthesizing intermediate imagery and iteratively analyzing the results), but this is currently hindered by severely limited reliability in visual-spatial prediction.

Symbolic-associative reasoning bias: Previous research (Hayashi and Hirata, 2022) suggests that autoregressive models exhibit a strong bias toward textual sequences, driven by the higher predictability of text compared to non-sequential image tokens. As a result, these models default to symbolic inference, often disregarding nuanced visual cues. The model-generated rationales in our experiments indicate that MLLMs do not attend to images in a focused, human-like manner; instead, they reason by grasping a superficial gist of an image and advancing their logic purely through symbolic links. For instance, the model struggles to deliberately inspect a specific region of an image or to “contemplate” (i.e., dynamically hover its attention over) visual features, which is characteristic of human visual engagement. Its reasoning is ultimately grounded in shallow perceptual observations rather than a robust structural understanding.
In our experiments, this bias proved robust against intervention: even with explicit prompting designed to force visual-spatial matching, the model persistently reverted to verbal reasoning about object composition rather than engaging with visual geometric features.

6 Conclusion

This study provides evidence that current frontier LLMs exhibit what we metaphorically call “functional aphantasia”: they do not reliably engage in imagery-like reasoning even when provided with an external tool capable of rendering and rotating 3D objects.

Across our experiments, the external Imagery Module functions as a capable “scanner” (generating relevant views on command), but it remains an insufficient “cognitive prosthetic” when paired with today's LLM Reasoning Modules. By providing the persistent 3D representation externally, we isolated what is truly missing on the LLM side: the basic perceptual primitives. The core limitation is not the absence of a rendered 3D state, but the model's fundamental inability to process the visual-spatial primitives required to interface with that state.

In this study, we identified two central barriers. First, the model lacks the foundational primitives for spatial and visual processing, specifically a severe insensitivity to (i) depth (extracting 3D structure from 2D projections), (ii) movement (interpreting two images as temporally related snapshots), and (iii) dynamic prediction (anticipating the subsequent view after a geometric transformation). Without these building blocks, the LLM cannot guide the imagery module. Second, the model lacks a contemplative, attention-driven mode of reasoning over images; instead, it defaults to symbolic, word-based associations that frequently override spatial evidence.

Accordingly, future work should move beyond merely attaching LLMs to rendering engines or adding parallel imagery branches.
Instead, research must focus on building foundational architectures that intrinsically support spatial awareness, continuous state-tracking, and contemplative visual attention.

References

Baddeley, A. D. and Hitch, G. (1974). Working memory. Volume 8 of Psychology of Learning and Motivation, pages 47–89. Academic Press.

Barsalou, L. W. (1999). Perceptual symbol systems. Behavioral and Brain Sciences, 22(4):577–660.

Cai, Z. et al. (2025). Has GPT-5 achieved spatial intelligence? An empirical study. arXiv preprint arXiv:2508.13142.

Fodor, J. A. (1975). The language of thought, volume 5. Harvard University Press.

Ha, D. and Schmidhuber, J. (2018). World models. arXiv preprint arXiv:1803.10122.

Hayashi, S. Y. and Hirata, N. S. (2022). Understanding attention-based encoder-decoder networks: a case study with chess scoresheet recognition. In 2022 26th International Conference on Pattern Recognition (ICPR), pages 1586–1592. IEEE.

Huang, J. Y., Zhang, S., Liu, Q., Qin, G., Zhu, T., Naumann, T., Chen, M., and Poon, H. (2025). Be my eyes: Extending large language models to new modalities through multi-agent collaboration. arXiv preprint arXiv:2511.19417.

Johnson-Laird, P. N. (1989). Mental models.

Kerbl, B., Kopanas, G., Leimkühler, T., and Drettakis, G. (2023). 3D Gaussian splatting for real-time radiance field rendering. ACM Transactions on Graphics, 42(4):1–14.

Kosslyn, S. M. (1980). Image and mind. Harvard University Press.

Kosslyn, S. M. and Pylyshyn, Z. (1994). Image and brain: The resolution of the imagery debate. Nature, 372(6503):289–289.

Kunda, M. (2018). Visual mental imagery: A view from artificial intelligence. Cortex, 105:155–172.

Lake, B. M., Ullman, T. D., Tenenbaum, J. B., and Gershman, S. J. (2017). Building machines that learn and think like people. Behavioral and Brain Sciences, 40:e253.

Lei, Y., Ge, X., Zhang, Y., Yang, Y., and Ma, B. (2025). Do large language models think like the brain? Sentence-level evidence from fMRI and hierarchical embeddings. arXiv preprint arXiv:2505.22563.

Li, C., Wu, W., Zhang, H., Xia, Y., Mao, S., Dong, L., Vulić, I., and Wei, F. (2025). Imagine while reasoning in space: Multimodal visualization-of-thought. arXiv preprint arXiv:2501.07542.

Paivio, A. (1986). Mental representations: A dual coding approach. Oxford University Press.

Pylyshyn, Z. W. (1973). What the mind's eye tells the mind's brain: A critique of mental imagery. Psychological Bulletin, 80(1):1.

Rose, D., Himakunthala, V., Ouyang, A., He, R., Mei, A., Lu, Y., Saxon, M., Sonar, C., Mirza, D., and Wang, W. Y. (2023). Visual chain of thought: Bridging logical gaps with multimodal infillings. arXiv preprint arXiv:2305.02317.

Shepard, R. N. and Metzler, J. (1971). Mental rotation of three-dimensional objects. Science, 171(3972):701–703.

Shi, B., Wu, Z., Mao, M., Wang, X., and Darrell, T. (2024a). When do we not need larger vision models? In European Conference on Computer Vision, pages 444–462. Springer.

Shi, Y., Wu, H., Xu, Z., and Pan, X. (2024b). Language embedded 3D Gaussians for open-vocabulary scene understanding. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 2667–2677.

Su, Z., Xia, P., Guo, H., Liu, Z., Ma, Y., Qu, X., Liu, J., Li, Y., Zeng, K., Yang, Z., et al. (2025). Thinking with images for multimodal reasoning: Foundations, methods, and future frontiers. arXiv preprint arXiv:2506.23918.

Vaswani, A. et al. (2017). Attention is all you need. In Advances in Neural Information Processing Systems, pages 5998–6008.

Wang, J., Chen, M., Karaev, N., Vedaldi, A., Rupprecht, C., and Novotny, D. (2025a). VGGT: Visual geometry grounded transformer. In Proceedings of the Computer Vision and Pattern Recognition Conference, pages 5294–5306.
Wang, J., Kang, Z., Wang, H., Jiang, H., Li, J., Wu, B., Wang, Y., Ran, J., Liang, X., Feng, C., et al. (2025b). VGR: Visual grounded reasoning. arXiv preprint arXiv:2506.11991.

Wang, J., Ming, Y., Shi, Z., Vineet, V., Wang, X., Li, S., and Joshi, N. (2024). Is a picture worth a thousand words? Delving into spatial reasoning for vision language models. Advances in Neural Information Processing Systems, 37:75392–75421.

Wang, S., Pei, M., Sun, L., Deng, C., Shao, K., Tian, Z., Zhang, H., and Wang, J. (2025c). SpatialViz-Bench: An MLLM benchmark for spatial visualization. arXiv preprint arXiv:2507.07610.

Wu, T. et al. (2025a). Video world models with long-term spatial memory. arXiv preprint arXiv:2506.05284.

Wu, T., Yang, S., Po, R., Xu, Y., Liu, Z., Lin, D., and Wetzstein, G. (2025b). Video world models with long-term spatial memory.

Xu, Y., Li, C., Zhou, H., Wan, X., Zhang, C., Korhonen, A., and Vulić, I. (2025). Visual planning: Let's think only with images. arXiv preprint arXiv:2505.11409.

Yang, Z., Yu, X., Chen, D., Shen, M., and Gan, C. (2025). Machine mental imagery: Empower multimodal reasoning with latent visual tokens. arXiv preprint arXiv:2506.17218.

Zhang, Y.-K., Lu, S., Li, Y., Ma, Y., Chen, Q.-G., Xu, Z., Luo, W., Zhang, K., Zhan, D.-C., and Ye, H.-J. (2024). Wings: Learning multimodal LLMs without text-only forgetting. Advances in Neural Information Processing Systems, 37:31828–31853.

A Prompts

This is the frozen version of the prompt.

# TASK AND ITERATIVE PROCESS

Your task is to solve a 3D model rotation problem. The problem includes an image with 4 figures, and the following statement: `The left image shows the original cube stack made of equal-sized small cubes. Which of the options on the right cannot be obtained by rotating the original cube stack? Please answer from options A, B or C.`

# IMAGE MODULE

To solve this problem, you will work together with a tool called the imagery module. The imagery module holds a 3D representation of the problem objects and perform rotation operations on your behalf, and generate snapshots (images) corresponding to the current state (i.e., camera angle). The state of each object is maintained throughout the entire process. The initial state (camera angle) of each object corresponds to the image in the problem statement.

The problem asks whether one object can have the same view as the other through rotation. The imagery module helps solve the problem by actually performing the rotation and providing the view after-rotation, enabling a try-rotate and check loop process, you don't need to "imagine" it. You can request a direct rotation to a desired final target state or do it incrementally, in a loop rotate-verify until get the desired view of conclude that is not possible. It is like take the objects in you hands, and play if around checking visually if you have a match.

The problem asks to rotate the original to match the alternative, but for the problems presented here, it is equivalent rotate the alternative to match the original. The imagery module allows rotate only the alternatives.

Working with the imagery module is an iterative process, controlled externally. It works in TURNS between you and the imagery module. On your turn, do the analysis based on the provided images, and generate rotation instructions to the imagery module. Then, the imagery module, on its turn, will apply these rotations and return the snapshot images of the objects in the new state. Then it is your turn, and so on.

Rotations commands are defined in camera space (relative to the current view), simulating the inverse of camera movement. Intuitively, this matches the view of manipulating an object in your hands: the object spins around its center while the camera (your viewpoint) remains fixed.

Possible commands are:
- `left:value` (object is rotated to left)
- `right:value` (object is rotated to right)
- `up:value` (object is rotated up)
- `down:value` (object is rotated down)
- `rotate:cw:value` (object rotates clockwise in the image plane)
- `rotate:ccw:value` (object rotates counterclockwise in the image plane)

`value` refers to the rotation angle in degrees. Angle 0 is also valid and can be used to get a snapshot of the current state.

# OUTPUT

Return your response in JSON format, following the format below:

```json
{
  "memory": {
    "rationale": "your justification up to this point",
    "partial_conclusion": {
      "A": "unknown" | "probably_not_the_answer" | "probably_the_odd_one",
      "B": "unknown" | "probably_not_the_answer" | "probably_the_odd_one",
      "C": "unknown" | "probably_not_the_answer" | "probably_the_odd_one"
    }
  },
  "iteration_number": 1,
  "commands": [
    {
      "target": "A" | "B" | "C",
      "rotation_sequence": "right:15,right:15,up:10"
    }
  ],
  "final_answer": null
}
```

Details of the output fields:
- `memory`: Generate your rationale and partial conclusion to help trace your reasoning process. This block will be provided as context in future turns during the iteration, so it will serve as your memory throughout the iterative process.
- `commands`: Rotation instructions for the imagery module. You can generate for one or more targets. Rotation sequence can have one or more commands, separated by comma. Each command generates a snapshot image of after rotation view, and will be combined in a grid image, per target, having the effect of a sequence showing the object rotating incrementally.
- `final_answer`: The answer for the problem, if you have a conclusion. Otherwise, leave as null.
- `iteration_number`: Iteration counter. Start with 1 and increment this number each turn.

Enclose the JSON object in ```json and ```.

*IMPORTANT* In you turn, generate exactly one JSON output and FINISH. DON'T simulate the iteration or the imagery module turn. It is handled externally.

# CONVERSATION CONTEXT

The conversation context, in each turn, will contain the following content:
- The text and image from the problem statement.
- All the previous output you have generated.
- The images generated by the imagery module, from the last iteration only.
- The `original` object snapshot to help comparison.

# STRATEGY

- Perform at least 5 iterations before giving the final answer.

B Resolution sample

Question: The left image shows the original cube stack made of equal-sized small cubes. Which of the options on the right cannot be obtained by rotating the original cube stack? Please answer from options A, B or C.

Iteration 1: Need determine which option shape is not a rotation of original. Use imagery module to rotate each option to see if can match original view. Start by checking overall cube count/adjacency: original appears to be 5 cubes: a 2x2 platform of 4 cubes with one extra cube attached below/front? plus one cube stacked on back-right? Options show similar counts but unclear; must verify via rotations. First iteration: request snapshots of A,B,C without rotation (0 deg) to compare, then begin rotating likely candidates.

Iteration 2: From snapshots: Original looks like 6 cubes: a 2x2 flat plate (4 cubes) plus one extra cube extending out on one side (making a 3-long arm) and one cube stacked on the far corner (height 2 at one corner). Option A appears to be a 5-cube shape (a 4-long bar with one cube stacked on second position) or possibly 6 but arrangement seems different; need rotate to verify adjacency. Option B looks closer to original: a 2x2 base with one cube stacked and one cube protruding. Option C is a vertical column of 3 with a 3-long top arm and one bottom cube offset; seems like 6 cubes but very different; likely not a rotation if original has only one cube at height 2 and no height-3 column. Need rotations to confirm matches: try rotate B to match original first; also rotate A and C to see if any can match original silhouette.
Iteration 3: After rotations, C clearly has a 3-cube vertical column (height 3) with a 3-long top bar, which original does not (original max height seems 2). So C is very likely impossible. A and B rotations produced confusing edge-on views; need more systematic: try to match original's footprint: looks like a 2x2 square with one extra cube extending from one side (making a 3-long row) and one cube stacked on the far corner of the 2x2 (height 2). We'll attempt to rotate B to show a 2x2 base + one protrusion + one stacked. Also rotate A to see if it can ever show 2x2 base; currently A seems like a long bar with a step, maybe not matching either. Next: rotate B around to get clearer top-down-ish view; rotate A similarly; keep C but likely odd

Iteration 12: Latest snapshots show original is a flat (all cubes coplanar) 6-cube polycube. Option A after rotations (seq27) becomes a flat 6-cube footprint that visually matches the original's ‘4-long bar with a 2-cube perpendicular arm’ arrangement, so A is obtainable. Option B in all shown views remains non-coplanar (has cubes at two heights: a cube stacked above the bar and another below), which cannot result from rotating a coplanar original (rigid rotations preserve coplanarity). Therefore B is the only option that cannot be obtained by rotation.

Iteration 13: Comparing last snapshots: Original is a flat (single-layer) 6-cube polycube with a 4-cube bar and a 2-cube perpendicular arm. Option A is also flat and matches this footprint under rotation. Option C is flat too and could be another rotation of the same footprint. Option B is clearly non-coplanar (has cubes at different heights / stacked), which cannot be produced by rotating a coplanar original because rigid rotations preserve coplanarity. Therefore B cannot be obtained by rotating the original.

Answer: B

In this sample (which is representative of all observed cases), the model never demonstrates controlled rotation to bring the silhouette closer to the original. It fails to gauge angular distance, even when very close, often rotating in the opposite direction and moving further away. The chosen rotations frequently appear arbitrary, highlighting an inability to predict the post-rotation state. Furthermore, the generated rationale is almost entirely devoted to inferring the static structure of the object rather than planning spatial transformations.
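The rotation command grammar listed in Appendix A (`left`, `right`, `up`, `down`, `rotate:cw`, `rotate:ccw`, each followed by an angle in degrees) lends itself to a small parser on the imagery-module side. The sketch below is an illustration only and not the project's implementation: the function name `parse_rotation_sequence` and the choice to return `(command, degrees)` tuples are assumptions, and it deliberately stops short of mapping commands to rotation axes or signs, since that convention is not spelled out in the prompt.

```python
# Hypothetical parser for the "rotation_sequence" field of the prompt's
# JSON output; the name and return shape are illustrative assumptions.
VALID_COMMANDS = {"left", "right", "up", "down", "rotate:cw", "rotate:ccw"}


def parse_rotation_sequence(sequence):
    """Split a comma-separated sequence into (command, degrees) tuples.

    Raises ValueError for unknown commands or non-numeric angles.
    """
    commands = []
    for token in sequence.split(","):
        token = token.strip()
        if not token:
            continue  # tolerate stray commas or surrounding whitespace
        # Split on the last colon so "rotate:cw:30" keeps "rotate:cw" intact.
        name, separator, value = token.rpartition(":")
        if not separator or name not in VALID_COMMANDS:
            raise ValueError(f"unknown rotation command: {token!r}")
        commands.append((name, float(value)))
    return commands
```

Applied to the sequence from the prompt's JSON template, `right:15,right:15,up:10`, this yields three commands, one for each snapshot the imagery module returns to the reasoning module.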
