TED: Training-Free Experience Distillation for Multimodal Reasoning

Knowledge distillation is typically realized by transferring a teacher model's knowledge into a student's parameters through supervised or reinforcement-based optimization. While effective, such approaches require repeated parameter updates and large…

Authors: Shuozhi Yuan, Jinqing Wang, Zihao Liu

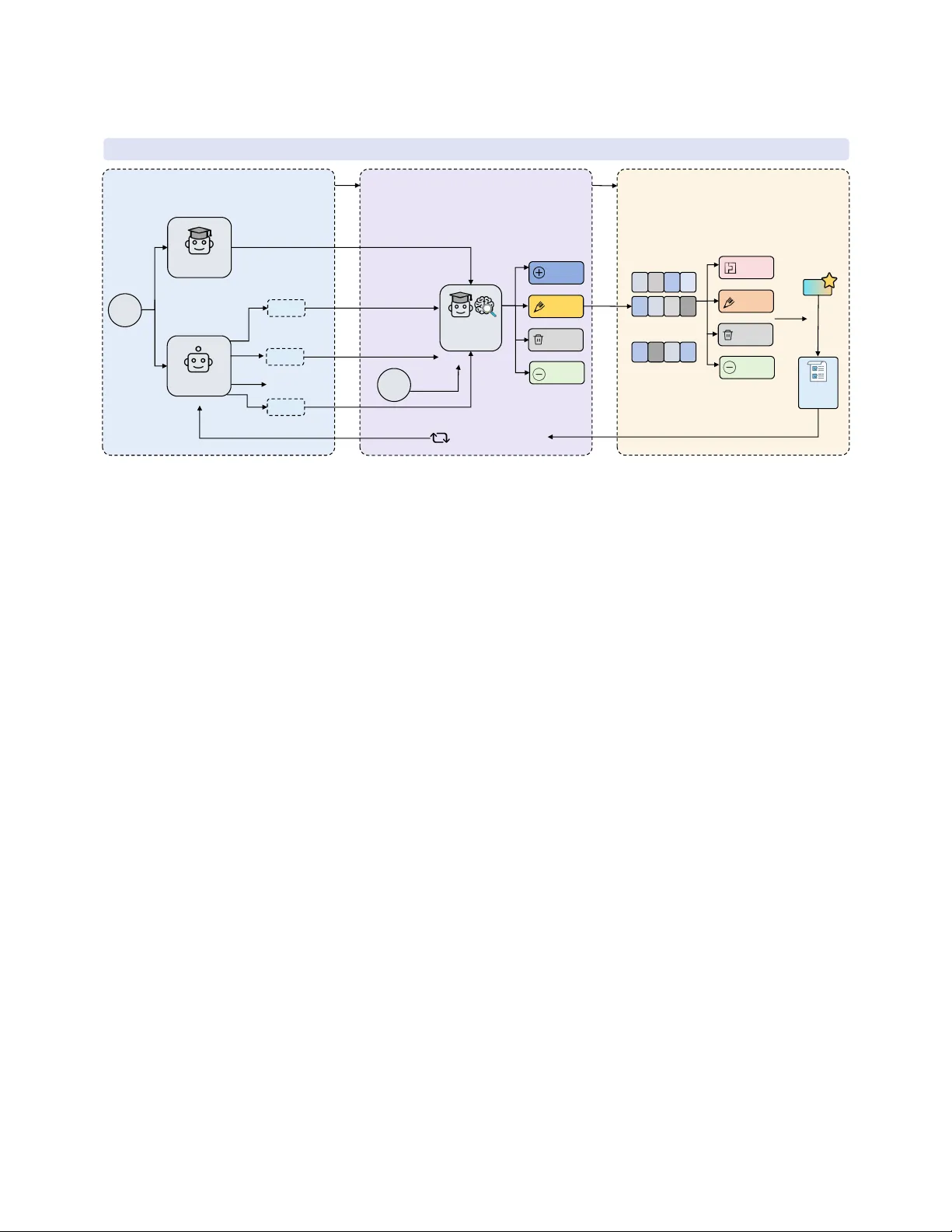

TED: T raining-Free Experience Distillation for Multimodal Reasoning Shuozhi Y uan ∗ Jinqing W ang Zihao Liu yuansz@chinatelecom.cn China T elecom Digital Intelligence T echnology Co.,Ltd. Beijing, China Miaomiao Y uan Institute of Computing T echnology , Chinese Academy of Sciences Beijing, China Haoran Peng Jin Zhao Bingwen W ang Haoyi W ang China T elecom Digital Intelligence T echnology Co.,Ltd. Beijing, China Abstract Knowledge distillation (KD) is typically realized by transferring a teacher model’s knowledge into a student’s parameters through su- pervise d or reinforcement-based optimization. While eective, such approaches require repeated parameter up dates and large-scale training data, limiting their applicability in r esource-constrained en- vironments. In this work, we pr opose TED, a training-fr ee, context- based distillation framework that shifts the update target of distil- lation from model parameters to an in-context experience injecte d into the student’s prompt. For each input, the student generates mul- tiple r easoning trajectories, while a teacher independently produces its own solution. The teacher then compar es the student trajecto- ries with its reasoning and the ground-truth answer , extracting generalized experiences that capture eective r easoning patterns. These experiences are continuously rened and updated over time. A key challenge of context-based distillation is unbounded experi- ence growth and noise accumulation. TED addresses this with an experience compression mechanism that tracks usage statistics and selectively merges, rewrites, or removes low-utility experiences. Experiments on multimodal reasoning benchmarks MathVision and VisualPuzzles show that TED consistently improves perfor- mance. On MathVision, TED raises the performance of Q wen3- VL- 8B from 0.627 to 0.702 , and on VisualPuzzles from 0.517 to 0.561 with just 100 training samples. Under this low-data, no-update setting, TED achieves performance competitive with fully trained parameter-based distillation while reducing training cost by o ver 20 × , demonstrating that meaningful knowledge transfer can b e achieved through contextual e xperience. CCS Concepts • Computing methodologies → Knowledge repr esentation and reasoning . Ke ywords Knowledge distillation; Multimodal reasoning; In-context learning; Training-fr ee learning 1 Introduction Knowledge distillation (KD) has become a standard appr oach for transferring capabilities from multimodal large language models (MLLMs) to smaller ones [ 9 , 14 ]. Most existing knowledge distilla- tion methods adopt a parameter-based strategy , where the student learns by ne-tuning on large-scale data generated by a teacher , Traditional KD (parameter-based) Teacher Model Loss Function Gradient-based Parameter Update Student Model Parameters � High Compute Cost TED (Parameter-free,Context-based) Teacher Model Student Model Persistent Context Contextual Experience No Parameter Update Shift Parameter Context Gradient Experience Figure 1: TED reformulates knowledge distillation from pa- rameter updates to contextual experience reuse. such as soft labels [ 21 ], rationales [ 13 ], or reasoning trajectories [ 8 ]. Although eective, these approaches usually rely on gradient- based optimization and repeated parameter updates, which require substantial computational cost [ 6 , 26 ] and large amounts of training data, limiting their practicality in many resource-constrained or rapidly evolving environments. For real-world use , especially on edge devices [ 7 ] or black-box APIs [ 4 ], updating model parameters is impractical or ev en impossi- ble. In such cases, ecient adaptation without retraining becomes crucial. This raises an imp ortant question: Can knowledge distil- lation be achieved without updating model parameters? In this work, we answer this question through TED , an alterna- tive formulation of distillation that operates entirely in the model’s context rather than its parameters. As illustrated in Figure 1, un- like traditional distillation methods that encode teacher knowledge into student parameters through optimization, TED r eformulates distillation as the continual extraction, abstraction, and reuse of transferable reasoning experiences. These experiences serve as dis- tilled knowledge that guides future inference , enabling the student to improve without any parameter updates. A key distinction between TED and existing memory-base d methods is what gets stored and updated. Prior methods such as Reexion[ 19 ] and Memento[ 33 ] typically reuse instance-level trajectories, demonstrations, or verbal feedback from previous at- tempts. In contrast, TED does not treat experience as a cache of ACM MM’26, June 03–05, 2026, Rio de Janeir o, Brazil Shuozhi Y uan,et al. past solutions. Instead, it uses teacher super vision to extract ab- stract, reusable reasoning experiences—such as transferable strate- gies, common failure patterns, and correction rules—that generalize across inputs. In this sense, TED distills not example-specic traces, but higher-level reasoning guidance . For each input, the student model generates multiple reasoning trajectories in parallel, while a teacher model independently pro- duces its own reasoning process. The teacher then jointly evaluates the student trajectories, its own reasoning, and the gr ound-truth, and abstracts generalize d experiences that capture eective rea- soning patterns, common failure modes, and correction strategies. Crucially , these experiences are not task-sp ecic exemplars or raw demonstrations, but compact and reusable reasoning principles distilled under teacher supervision. These experiences are accu- mulated across training samples and iteratively rened, forming a persistent experience that evolves over time. During inference, the learned experience is directly injected into the system prompt, allowing the student model to b enet from distilled knowledge without any parameter updates. This formulation enables TED to operate under strict constraints on training cost and model scale , while remaining compatible with standard black-box APIs. A key challenge in context-based distillation is that naively ac- cumulating experiences leads to unbounded context growth and low-utility information. TED addresses this challenge through a teacher-guided experience compression mechanism that explicitly models experience utility . Instead of performing simple summa- rization or heuristic pruning, TED tracks the usage frequency of individual experience items and retains high-utility experiences while selectively merging, rewriting, or remo ving others under teacher super vision. This compression process abstracts higher- level reasoning patterns from frequently co-occurring experiences and eliminates obsolete or noisy information, ensuring that the in-context experience remains compact, informativ e, and scalable over long training iterations. W e evaluate TED on multimo dal mathematical reasoning and logic benchmarks [ 15 , 20 , 25 , 28 , 31 , 32 ] using open-source vision- language models [ 2 , 22 ]. Despite performing no parameter updates and using only 100 training samples, TED achieves substantial per- formance improvements over dir ect inference. In particular , TED provides a strong performance-cost trade-o in low-data settings, approaching the gains of conv entional parameter-based distillation while reducing training cost by more than 20 × . These results demon- strate that eective knowledge transfer can b e realized through contextual experience, oering a lightweight and practical alterna- tive to traditional parameter-based distillation. Our main contributions in this paper are as follows: • W e propose TED, a training-free, context-based knowledge distillation framework that enables eective knowledge trans- fer without any parameter updates. • TED introduces a teacher-guided experience generation and compression mechanism that distills reusable reasoning prin- ciples and maintains a compact, high-utility in-context ex- perience. • Experiments on multimodal and textual reasoning bench- marks show that TED substantially improves model perfor- mance in lo w-data settings. Using only 100 training samples, TED achieves performance competitive with conventional distillation while reducing training cost by more than 20 × . 2 Related W ork In this section, we provide an ov erview of related work on knowl- edge distillation, in-context learning and other training-fr ee distilla- tion approaches, highlighting their relevance and main dierences to our proposed method. 2.1 Knowledge Distillation Knowledge distillation, as pione ered by [ 9 ], has become a basic approach for transferring the capability from a large teacher model into a compact student model. In the area of large language models, numerous approaches are proposed to address the task. For instance, instruction-based ap- proaches like Self-Instruct[ 26 ] and Alpaca [ 21 ] use teacher models to generate large-scale synthetic datasets for student ne-tuning. Furthermore, reasoning-based methods like Distilling Step-by-Step [ 10 ] e xtract rationales from the teacher to guide the student’s learn- ing pr ocess. Recent reasoning-focused framew orks, such as Bey ond Answers [ 23 ] and NesyCD[ 13 ] , have further improved the quality of rationales by incorporating multi-teacher feedback or symbolic knowledge. A dditionally , metho ds like DeepSeek-R1[ 8 ] demon- strate that distilling long-chain r easoning patterns can signicantly boost the performance of smaller op en-source models. From the analysis of existing knowledge distillation methods, it becomes clear that most approaches transfer knowledge by updat- ing student model parameters through large-scale optimization, re- lying on e xtensive training data and computational resources. Such parameter-centric designs limit their applicability in black-b ox, resource-constrained, or rapidly evolving settings. In contrast, our core motivation is to enable eective knowledge distillation without any parameter updates. T o this end, we propose TED , which shifts distillation from parameter optimization to context-level experi- ence accumulation, allowing knowledge to be distilled, compr essed, and reused entirely through prompts. 2.2 Multimodal Knowledge Distillation With the rise of multimodal large language models (MLLMs), knowl- edge distillation has extended from pure text to cross-modal rea- soning. Early works like MiniGPT -4 [ 34 ] and LLaV A [ 14 ] focus on aligning visual features with language spaces using teacher- generated data. Recently , more advanced methods attempt to distill specialized capabilities. For instance, Vigstandard [ 24 ] explores dis- tilling visual grounding capabilities. Similar to the challenges in text-only distillation, MLLM distillation often faces high computa- tional costs due to the large scale of vision-language pr ojectors and encoders. Recent eorts like ShareGPT4V [ 6 ] emphasize the quality of teacher-generated captions and rationales to improve student performance with less data. However , most of these MLLM distilla- tion methods still rely on ne-tuning parameters. Our work, TED , oers a potential paradigm shift by demonstrating that multimodal reasoning experiences could also be distilled and accumulated at the context level, p otentially bypassing the need for expensive cross-modal ne-tuning. TED: Training-Free Experience Distillation for Multimodal Reasoning ACM MM’26, June 03–05, 2026, Rio de Janeir o, Brazil 2.3 In-context Learning In-context learning allows models to perform new tasks by pro- viding a few additional inputs in the prompt without updating any parameters[ 5 ]. T o improve reasoning performance, Chain-of- Thought [ 27 ] and retrieval-based methods like ERP [ 18 ] have been proposed to provide reasoning steps or relevant demonstrations. Recent advances, such as Reexion [ 19 ] and Long-context ICL [ 3 ], further use iterative feedback or larger conte xt windows to impr ove performance. These studies suggest that in-context learning is a practical way to adapt models without parameter updates. However , most ex- isting methods focus on improving prompt design for individual inputs, such as selecting demonstrations or adding feedback, and do not explicitly model how knowledge can be accumulated across ex- amples. In contrast, TED builds on in-context learning but fo cuses on e xperience accumulation. By distilling and reusing reasoning ex- periences under teacher guidance, TED enables knowledge transfer across inputs in a parameter-free manner . 2.4 Training-fr ee Distillation T o further reduce the cost of knowledge transfer , recent research has explored training-free distillation and memory-base d learning. For instance, AHA[ 12 ] and AgentDistill [ 17 ] facilitate knowledge transfer by collecting successful trajectories and reusing them in the inference stage without any weight updates. More recently , Me- mento [ 33 ] introduces a paradigm that allows models to "learn fr om experience" by storing past successes and failures in an external mental ling cabinet. T o address the eciency issues of large mem- ories, methods like MemCom [ 11 ] have been proposed to compress many-shot demonstrations into compact representations. Howev er , many training-free distillation and memory-based methods mainly reuse raw examples or xed past trajectories at inference time. When knowledge is treated as a set of instance- level demonstrations, the memory can become noisy and do es not generalize well across dierent inputs. In contrast, TED runs an on-policy-like distillation loop: for each training input, the student samples multiple reasoning traje ctories, and the teacher scores them based on the teacher’s own solution and the ground-truth supervision. This trajectory-level comparison allows TED to ex- tract and store abstract experiences (i.e., reusable reasoning tips and common failure patterns), instead of keeping example-spe cic traces. As a result, TED maintains a compact experience that is continuously updated and is more robust than standard many-shot retrieval when facing noisy or hard cases. 3 Formulation W e formulate knowledge distillation with a shared on-policy sam- pling and teacher judging protocol[ 1 ]. The dierence lies in the update target: vanilla KD updates parameters, while TED updates an in-context experience. 3.1 On-policy distillation protocol Considering supervised examples ( 𝑥 , 𝑦 ) , where 𝑥 is the input and 𝑦 is the ground-truth. Let 𝑆 denote the student model and 𝑇 the teacher model. For each input 𝑥 , the student samples 𝐾 reasoning trajectories { 𝜏 𝑖 } 𝐾 𝑖 = 1 , where each trajectory 𝜏 contains intermediate reasoning and a nal answer ˆ 𝑦 ( 𝜏 ) . Indep endently , the teacher gener- ates its o wn trajectory 𝜏 𝑇 . A teacher judging module then produces trajectory-level feedback which may incorporate correctness w .r .t. 𝑦 and teacher preference. 𝑟 𝑖 = Judge 𝜏 𝑖 , 𝜏 𝑇 , 𝑦 , (1) This sampling-and-judging step is shared by both vanilla KD and our parameter-free approach. 3.2 V anilla KD In vanilla knowledge distillation, the student has trainable parame- ters 𝜃 , and the teacher feedback is converted into a learning signal to optimize 𝜃 . A generic on-p olicy KD objective can be written as min 𝜃 E ( 𝑥 ,𝑦 ) ∼ D E 𝜏 ∼ 𝑆 𝜃 ( · | 𝑥 ) h L KD 𝜃 ; 𝑥 , 𝜏 , 𝜏 𝑇 , 𝑦 i , (2) where L KD denotes the distillation objective, which can be instan- tiated as maximizing the likelihood of the best-scored traje ctory , preference-based ranking, or reinforcement-style objectives using 𝑟 ( 𝜏 ) . Training procee ds via repeated gradient up dates can be de- scribed as: 𝜃 ← 𝜃 − 𝜂 ∇ 𝜃 L KD . (3) While eective, this approach requires parameter updates and typi- cally large-scale optimization. 3.3 TED TED freezes model parameters and performs distillation by updat- ing a contextual experience 𝐸 that is injected into the student’s prompt. W e denote the prompted context by 𝑝 ( 𝑥 ; 𝐸 ) = [ 𝑝 sys ; 𝐸 ; 𝑥 ] , (4) where 𝑝 sys is a xed system instruction and 𝐸 are experience items in the textual prex. The student then samples on-policy trajectories conditioned on this context: 𝜏 𝑖 ∼ 𝑆 ( · | 𝑝 ( 𝑥 ; 𝐸 ) ) . (5) Instead of optimizing 𝜃 , TED updates the experience using teacher feedback: 𝐸 ← Upda te 𝐸 ; 𝑥 , 𝑦, { 𝜏 𝑖 } 𝐾 𝑖 = 1 , 𝜏 𝑇 , { 𝑟 𝑖 } 𝐾 𝑖 = 1 , (6) where Upda te extracts generalized experience items (reusable rea- soning tips and common failure modes) from the comparison among student trajectories, the teacher trajectory , and the gr ound-truth, and incorporates them into 𝐸 . Spe cically , Upda te is realized as a set of actions generated by teacher model. At infer ence time, experience transfer is achiev ed purely through prompting: 𝜏 ∼ 𝑆 ( · | 𝑝 ( 𝑥 ; 𝐸 ) ) , ˆ 𝑦 = ˆ 𝑦 ( 𝜏 ) (7) 3.4 Core dierence Despite sharing the same on-policy sampling and teacher-judging protocol, the two approaches dier in the optimization variable. V anilla KD updates student parameters via gradient-based opti- mization, whereas TED freezes parameters and updates a persistent ACM MM’26, June 03–05, 2026, Rio de Janeir o, Brazil Shuozhi Y uan,et al. TED Detaile d Workflow Input Stage1: Trajectory Generation Teacher Student ...... GT Teacher Critique Stage2: Experience Generation Add Modify Delete None ...... Redundant Experience Stage3: Experience Compression Experience E System Prompt Iterative Improvem ent Merge D e l e t e None Rewrite Delete Figure 2: Overview of TED. Our proposed method includes three stages: trajector y generation, experience generation, and experience compression. The student rst samples multiple reasoning trajectories, which the teacher critiques against its own reasoning and the ground truth to distill generalized experiences. These experiences are then compressed and injecte d into the system prompt for iterative, parameter-free improvement. in-context experience serialized into the prompt for subsequent rollouts and inference. KD: 𝜃 ← 𝜃 − 𝜂 ∇ 𝜃 L TED: 𝐸 ← Upda te ( 𝐸 ; ·) (8) 4 TED Framework As shown in Figure 2, the TED framework consists of three key steps: reasoning trajectory generation, experience generation, and experience compression. Reasoning trajectory generation allows both the student and teacher models to generate their respective r ea- soning traje ctories. Exp erience generation creates abstract, reusable experience templates based on the teacher model’s reasoning path, the student’s multiple reasoning paths, and the ground truth. Experi- ence compr ession further compresses and renes these experiences to prevent context explosion and excessive noise intr oduction. A detailed explanation of each step is provided in the subsequent section. 4.1 Reasoning Trajectory Generation Given an input–lab el pair ( 𝑥 , 𝑦 ) , TED performs on-p olicy reasoning trajectory generation for b oth the student and the teacher models. The student model 𝑆 samples 𝑁 reasoning trajectories in parallel: { ˜ 𝜏 𝑖 } 𝑁 𝑖 = 1 ∼ 𝑆 ( · | 𝑝 ( 𝑥 ; 𝐸 ) ) , (9) where each raw trajectory ˜ 𝜏 contains intermediate reasoning traces and a nal answer . In parallel, the teacher model 𝑇 generates its own raw reasoning trajectory: ˜ 𝜏 𝑇 ∼ 𝑇 ( · | 𝑥 ) . (10) 4.1.1 Trajectory compression. Raw reasoning traces from the stu- dent and teacher models often contain redundant or noisy content, such as verbose explanations, self-corr ections, or exploratory de- tours. T o make the reasoning paths more concise and reusable, we apply a self-condensation step to each trajectory . Specically , for any ˜ 𝜏 , we ask the same model to rewrite the reasoning into a concise trajectory through prompt engineering. 𝜏 = Condense ( ˜ 𝜏 ) , (11) where the condense d trajectory 𝜏 is required to follow a same structured format: Premises → Step 1 → Step 2 → · · · → Conclusion . This process removes unnecessary content while keeping the key reasoning steps that lead to the nal answer . W e apply this step to all student trajectories ˜ 𝜏 𝑖 𝑖 = 1 𝑁 and the teacher trajector y ˜ 𝜏𝑇 , resulting in 𝜏 𝑖 𝑖 = 1 𝑁 and 𝜏 𝑇 . 4.1.2 T eacher trajector y filtering. T o ensure reliable teacher guid- ance, we only r etain teacher trajectories that correctly derive the ground-truth answer . Formally , a teacher trajectory 𝜏 𝑇 is consider ed valid if ˆ 𝑦 ( 𝜏 𝑇 ) = 𝑦 . (12) For samples where the teacher fails to produce a correct reason- ing trajector y , we treat them as negative cases and use them to construct critique experience. Through parallel student sampling, structured trajectory con- densation, and teacher ltering, TED pr oduces a set of clean and comparable reasoning trajectories. These trajectories serve as the foundation for subsequent experience generation and compression. TED: Training-Free Experience Distillation for Multimodal Reasoning ACM MM’26, June 03–05, 2026, Rio de Janeir o, Brazil Compression Trigger Experience exceeds context budget Too many experience items Utility Estimation Usage Tracking Estimate long-term usefuless during training Track how ofte n each exper ience is retrieved Teacher Guided compression None Delete Rewrite Merge Compressed Experience All generated Experience Experience Compression Workflow Figure 3: Overview of the Experience Compression module in TED . When the experience exceeds the context budget, TED estimates each experience’s utility and tracks its usage frequency . The teacher then compresses the experience by merging, rewriting, deleting, or retaining experiences, producing a compact system prompt that preser ves high-utility knowledge for ecient, parameter-free iterative improvement. 4.2 Experience Generation Based on the compressed reasoning trajectories, TED constructs and updates a reusable experience through teacher-driven critique. Given the student trajectories { 𝜏 𝑖 } 𝑁 𝑖 = 1 , the teacher’s valid traje ctory 𝜏 𝑇 , and the ground-truth label 𝑦 , the teacher model analyze dier- ences between correct and incorrect r easoning paths and extract generalized experience. 4.2.1 T eacher critique. The teacher jointly considers (i) multiple student reasoning trajectories, (ii) its own correct reasoning traje c- tory , and (iii) the ground-truth answer , and produces critiques that identify eective reasoning patterns, common failure modes, and corrective guidance. Formally , we denote the critique process as C = Critiqe { 𝜏 𝑖 } 𝑁 𝑖 = 1 , 𝜏 𝑇 , 𝑦 , (13) where C represents a set of candidate experience statements ex- pressed in natural language. 4.2.2 Experience update actions. TED maintains experience 𝐸 = { 𝑒 𝑗 } | 𝐸 | 𝑗 = 1 , where each item 𝑒 encodes a reusable reasoning guideline or error pattern. Instead of updating model parameters, TED updates 𝐸 by allowing the teacher to perform one of four discrete actions on the experience: • Add : generate a new experience item and insert it into 𝐸 ; • Modify : revise an existing experience item to impr ove cor- rectness or generality; • Delete : remov e an obsolete or harmful experience item from 𝐸 ; • None : take no action and keep 𝐸 unchanged. 4.2.3 Positive-negative sample balance. T o ensure stable e xperience generation, we control the balance between positive and negative student trajectories. For each input 𝑥 , the 𝑁 student-generated trajectories are divided into correct and incorrect sets according to their nal answers. W e require the number of correct trajectories to be no smaller than the number of incorrect ones: { 𝜏 𝑖 | ˆ 𝑦 ( 𝜏 𝑖 ) = 𝑦 } ≥ { 𝜏 𝑖 | ˆ 𝑦 ( 𝜏 𝑖 ) ≠ 𝑦 } . (14) If no correct trajectory is produced, we keep only one negative trajectory to generate a critical experience. Through teacher critique, experience updates, and iterative re- nement on balanced samples, TED builds an evolving experience that replaces parameter updates as the main mechanism for knowl- edge distillation. 4.3 Experience Compression As training proceeds, the experience set 𝐸 may grow beyond the con- text limit and accumulate redundant or noisy items. TED therefore compresses 𝐸 to keep it compact while retaining useful information. 4.3.1 Compression trigger . Let 𝐵 denote the maximum context budget measured in tokens, and let ℓ ( 𝑒 ) be the serialized length of an experience item 𝑒 . Compression is triggered whenev er ( Í 𝑒 ∈ 𝐸 ℓ ( 𝑒 ) > 𝐵 | 𝐸 | > 𝐵 item (15) where 𝐵 item denotes the maximum number of experience items. 4.3.2 Usage statistics and utility score. TED maintains usage sta- tistics for each experience item across training. Let 𝑢 𝑡 ( 𝑒 ) ∈ R ≥ 0 denote the accumulated usage fr equency of item 𝑒 up to step 𝑡 . After processing sample ( 𝑥 𝑡 , 𝑦 𝑡 ) , the usage counter is update d as 𝑢 𝑡 ( 𝑒 ) = 𝑢 𝑡 − 1 ( 𝑒 ) + I 𝑒 ∈ U ( 𝐸 ; 𝑥 𝑡 ) , (16) where U ( 𝐸 ; 𝑥 𝑡 ) denotes the subset of experience items injecte d for input 𝑥 𝑡 , and I [ ·] is the indicator function. During each for ward inference, the model reports the IDs of the experience items it uses. W e then count how many times each item is used. W e dene a utility score 𝑠 𝑡 ( 𝑒 ) , 𝑠 𝑡 ( 𝑒 ) = log ( 1 + 𝑢 𝑡 ( 𝑒 ) ) . (17) 4.3.3 T eacher-guided compression. At compression time, the teacher summarizes the experience into a smaller set ˆ 𝐸 . For a group of ex- perience items, the teacher selects one of the following actions: • Merge : replace a set of redundant items with a single higher- level experience; • Rewrite : rephrase an item to improve generality and appli- cability; • Delete : remove obsolete , noisy , or harmful items; • None : retain unchanged. 4.3.4 Utility-aware selection. During training, TED maintains us- age statistics for each experience item. At compression time, the teacher performs utility-aware selection based on the accumulated usage frequency . Sp ecically , only the top-R most frequently used ACM MM’26, June 03–05, 2026, Rio de Janeir o, Brazil Shuozhi Y uan,et al. experiences are r etained, while the remaining experiences are either merged with similar items or removed. 5 Experiments T o evaluate the performance of our TED , we pr esent the implemen- tation details, explain the experiments r esults, and oer a thorough analysis. 5.1 Datasets W e conducted experimental evaluations on multimo dal mathemati- cal reasoning benchmarks and visual logic benchmarks, including MathVision[ 25 ] and VisualPuzzles[ 20 ]. In addition, we performed experiments on purely textual mathematical reasoning datasets. T o ensure the reliability and r obustness of our results, each problem was independently evaluated ve times. W e report the average score as Mean@5. Mean@5 = 1 𝑁 𝑁 𝑖 = 1 1 5 5 𝑗 = 1 𝑠 𝑖 , 𝑗 ! (18) where 𝑁 denotes the total number of problems, and 𝑠 𝑖 , 𝑗 is the score obtained on the 𝑖 -th problem in the 𝑗 -th indep endent evaluation. 5.2 Base Models In this pap er , we adopt the Qwen3- VL [ 2 ] series as our student model and Kimi-K2.5[ 22 ] as our teacher model, given the leading performance in the eld of reasoning. W e evaluate the eective- ness of our framework across multiple model sizes, including 8B and 235B, to demonstrate its ability to enhance models. T o further explore the abilities of pure language models, we adopt Qwen3[ 29 ] series as student model and Kimi-k2.5 as teacher model. 5.3 Hyper-parameters In order to ensure the stability of our experiment results, we stan- dardized the hyper-parameters as follo ws. The temperature is xed at 0.7, the top-p parameter is set to 1.0, and the max-token length is 32768. The max experience item is set to 15 and max context budget is 4000. 5.4 Experimental Results Across all benchmarks, TED consistently impr oves ov er direct in- ference. Although fully trained knowledge distillation achieves the best overall performance, TED still obtains strong r esults with only a few hundred training samples and without updating model pa- rameters. This makes TED a lightweight and practical alternativ e in low-data or resource-constrained settings. The results of all other baseline methods are obtained using their publicly available code. 5.4.1 Results on the Multimodal Mathematical Reasoning. W e ran- domly sample 100 examples from MathV erse for training and eval- uate on MathVision. The learning process runs for 3 epochs with a batch size of 5 and a group size of 5. For comparison, the Naive- KD baseline is trained on the full 3940-sample MathV erse set. All models are evaluated in thinking mode. As shown in T able 1, TED consistently improves ov er direct in- ference despite using only a small number of training samples. For Qwen3- VL-8B, TED impr oves accuracy from 0.627 to 0.702 , and for Qwen3- VL-235B, from 0.746 to 0.762 . Although fully trained Naive- KD achieves higher absolute accuracy , TED remains competitive without parameter updates and with only 100 training examples. This suggests that distilled experiences stored in context can eec- tively transfer knowledge from teacher to student, esp ecially for smaller models with limited capacity . Overall, TED oers a light- weight and data-ecient alternativ e to traditional KD , achieving substantial gains without expensive retraining. T able 2 further compares TED and Naive-KD under the same training data budgets using Qwen3- VL-8B as the student mo del. TED already performs well with 100 samples (0.702) and improves only slightly as more data is added, suggesting that it can learn useful experiences ev en in low-data settings. In contrast, Naive-KD depends more on larger training sets, impr oving from 0.629 with 100 samples to 0.764 with 3000 samples. These results suggest that TED works better in low-data or resource-constrained settings, while Naive-KD benets more from larger datasets and eventually surpasses TED . T able 1: Results on the MathVision b enchmark. Method Train Set Student MathVision Direct – Qwen3- VL-8B 0.627 Direct – Qwen3- VL-235B 0.746 RA G [18] – Qwen3- VL-8B 0.639 RA G – Qwen3- VL-235B 0.751 Few-shot – Qwen3- VL-8B 0.631 Few-shot – Qwen3- VL-235B 0.744 Naive-KD [1] MathV erse Qwen3- VL-8B 0.729 Naive-KD MathV erse Qwen3- VL-235B 0.795 Reexion[19] MathV erse Qwen3- VL-8B 0.662 Reexion MathV erse Qwen3- VL-235B 0.751 Memento [33] MathV erse Qwen3- VL-8B 0.674 Memento MathV erse Qwen3- VL-235B 0.758 MemCom [11] MathV erse Q wen3- VL-8B 0.646 MemCom MathV erse Qwen3- VL-235B 0.741 TED MathV erse Qwen3- VL-8B 0.702 TED MathV erse Qwen3- VL-235B 0.762 T able 2: Comparison of dierent training data size Method 100 500 1000 3000 TED 0.702 0.707 0.710 0.725 Naive-KD 0.629 0.714 0.722 0.764 5.4.2 Results on the Multimodal Visual Logic. T o evaluate the gener- ality of our method, w e further conduct experiments on multimodal visual logic tasks under the same controlled setup. W e randomly sample 100 training examples and evaluate on the VisualPuzzles benchmark, following the same learning setting as in the multi- modal mathematical reasoning experiments. As shown in T able 3, TED consistently impro ves over direct inference on this benchmark. For Q wen3- VL-8B, TED improves TED: Training-Free Experience Distillation for Multimodal Reasoning ACM MM’26, June 03–05, 2026, Rio de Janeir o, Brazil T able 3: Results on the VisualPuzzles b enchmark. Method Train Set Student VisualPuzzles Direct – Qwen3- VL-8B 0.517 Direct – Q wen3- VL-235B 0.572 Naive-KD LogicVista Q wen3- VL-8B 0.566 Naive-KD LogicVista Q wen3- VL-235B 0.582 Reexion[19] LogicVista Qwen3- VL-8B 0.524 Reexion LogicVista Qwen3-VL-235B 0.574 TED LogicVista Q wen3- VL-8B 0.561 TED LogicVista Q wen3- VL-235B 0.579 T able 4: Results on the textual AIME25 benchmark. Method Train Set Student T eacher AIME25 Direct – Qwen3-8B – 0.673 Direct – Qwen3-235B – 0.815 Naive-KD DAPO-Math Q wen3-8B Kimi-K2.5 0.792 Naive-KD DAPO-Math Q wen3-235B Kimi-K2.5 0.861 TED DAPO-Math Qwen3-8B Kimi-K2.5 0.733 TED DAPO-Math Qwen3-235B Kimi-K2.5 0.846 performance from 0.517 to 0.561 , and for Q wen3- VL-235B from 0.572 to 0.579 . Although fully trained Naive-KD remains slightly stronger in absolute performance, TED achie ves competitive r esults without parameter updates and with only a small numb er of training samples. This shows that context-based knowledge transfer can be a practical and data-ecient option for multimodal r easoning tasks, especially when gradient-based retraining is not preferred or not possible. 5.4.3 Results on the language only benchmark. W e randomly sam- ple 100 training instances from DAPO-Math-17k [ 30 ] and evaluate on AIME25[ 32 ]. The student models are from the Qwen3 series, with Kimi-K2.5 as the teacher . Training runs for 3 epochs with a batch size of 5, using a temperature of 0.7 and a group size of 5 during learning. All hyperparameters are the same as in the multi- modal experiments, and Naive-KD is implemented under the same training schedule. All models are evaluated in thinking mode. As shown in T able 4, TED consistently impro ves over direct inference in the language-only setting. For Qwen3-8B, accuracy increases from 0.673 to 0.733 , and for Qwen3-235B from 0.815 to 0.846 . This indicates that distilled experience remains eective in pure textual settings without any visual mo dality . Although Naive-KD achie ves the highest absolute performance, TED remains competitive without gradient-base d optimization, showing that contextual experience alone can provide meaningful gains in textual mathematical reasoning. The larger impr ovement on the smaller model further suggests that context-based knowledge transfer is especially benecial for capacity-limite d models. 5.4.4 A nalysis of training costs. W e compare the training costs of Naive-KD and TED on MathV erse under the same training budget, using 100 training samples and the same on-p olicy sampling setting T able 5: Training cost comparison on MathV erse under the same training budget (100 samples, 𝑁 = 5 ). Method GP U Hours Monetary Cost ($) Reduction Naive-KD 576 288.0 - TED – 12.6 22.9 × ( 𝑁 = 5 ). For Naive-KD, training is conducted on 8 N VIDIA A800 GP Us for 3 days, resulting in appr oximately 576 GP U-hours in total. Assuming a rental price of $0.5 per GP U-hour , the overall training cost is about $288. By contrast, TED is training-free and only requires experience updates over the same 100 samples for 3 epochs. In our setting, the entire process nishes within 8 hours. During this pr ocedure, the student model consumes approximately 21 million tokens, while the teacher model consumes about 6 million tokens. Based on the ocial pricing of Qwen3- VL and Kimi-K2.5, the total cost is only about $12.6. Overall, TED reduces the training cost by more than one order of magnitude compared with Naive-KD, achie ving a cost reduction of approximately 22 . 9 × . These results demonstrate that TED is highly cost-eective, as it av oids expensive gradient-based optimization while still delivering strong performance. 5.5 Ablation study T o evaluate the eectiveness of our proposed method, we perform several ablation studies using Q wen3- VL-8B and MathVision bench- mark. All other settings are kept unchanged. T able 6: Ablation Study of T eacher-Guided Experience Method Train Set T eacher MathVision Direct – – 0.627 Successful few-shot MathV erse - 0.631 TED MathV erse Q wen3- VL-8B 0.656 TED MathV erse Kimi-K2.5 0.702 5.5.1 Eect of T eacher-Guided Experience. As shown in T able 6, successful few-shot yields only a marginal improvement ov er direct inference (0.627 → 0.631), suggesting that simply r etrieving success- ful examples is not sucient to substantially enhance reasoning. In contrast, TED with the same student model as teacher (Qwen3- VL- 8B) improves performance to 0.656, sho wing that the gain mainly comes from the experience learning mechanism —iterative critique, renement, and experience accumulation—rather than fr om few- shot examples alone. Using a stronger teacher (Kimi-K2.5) further boosts performance to 0.702, indicating that better feedback can produce higher-quality experience and lead to stronger generaliza- tion. 5.5.2 Eect of Experience Compression. As shown in T able 7, ex- perience compression is essential for eective experience learning. Without compression, p erformance drops sharply from 0.702 to 0.594, even below direct inference, showing that simply accumulat- ing more experiences does not help and can instead hurt reasoning ACM MM’26, June 03–05, 2026, Rio de Janeir o, Brazil Shuozhi Y uan,et al. 5 15 30 50 Experience items 0.66 0.67 0.68 0.69 0.70 0.71 0.72 Score 0.673 0.702 0.711 0.704 (a) Experience Items Experiment 1 5 10 15 Trajectory nums 0.64 0.66 0.68 0.70 0.72 Score 0.664 0.702 0.713 0.716 (b) Trajectory Nums Experiment Qwen3-8B Deepseek Kimi2.5 ChatGPT5.2 Teacher model 0.62 0.64 0.66 0.68 0.70 0.72 0.74 Score 0.656 0.681 0.702 0.719 (c) T eacher Models Exp eriment Figure 4: Hyperparameter ablation of TED on MathVision. Performance is ae cted by the number of experience items, the number of sampled trajectories, and the choice of teacher model. TED performs best with a mo derate experience size, more diverse traje ctories, and stronger teachers. T able 7: Ablation Study of Experience Compression Method T eacher MathVision Direct – 0.627 TED (Full) Kimi-K2.5 0.702 TED W/o Compression Kimi-K2.5 0.594 TED naive cut Kimi-K2.5 0.648 TED random choose Kimi-K2.5 0.632 due to redundancy and noise. Naive cut (0.648) and random choose (0.632) both recover part of the p erformance by reducing experience length, but they remain clearly worse than full TED . In contrast, the full compression mechanism produces a compact and informative experience, achieving the best result of 0.702. These r esults verify the eectiveness of experience compr ession in making context- based distillation both stable and scalable. T able 8: Cross-modal generalization of experience. Experience source MathVision (Qwen- VL) AIME25 (Qwen) Direct 0.627 0.673 MathV erse 0.702 0.686 DAPO-Math 0.692 0.733 5.5.3 Cross-Modal Experience Transfer . As shown in T able 8, TED demonstrates eective cross-modal transfer . Experience learne d from multimodal MathV erse not only improves MathVision (0.627 → 0.702) but also brings gains on the text-only AIME25 benchmark (0.673 → 0.686). Similarly , experience learned from text-only D APO- Math improv es AIME25 mor e substantially (0.673 → 0.733) and also transfers w ell to multimodal MathVision (0.627 → 0.692). Although same-modality transfer yields the largest gains, the consistently positive cross-modal results sho w that TED captures transferable reasoning knowledge beyond modality-specic patterns. 5.5.4 Detaile d ablation study . W e further analyze three key factors of TED on MathVision, as shown in Figure 4. First, increasing the numb er of experience items consistently improves performance fr om 0.673 to 0.711 as the number of expe- rience items grows from 5 to 30, showing that richer experience provides more useful reasoning guidance. When the number of experience items is further expanded to 50, performance slightly drops to 0.704, suggesting that ov erly long experience introduces re- dundancy and reduces conte xt eciency . Therefor e, to balance cost, context length, and overall performance, we choose 15 experience items as the default setting. Second, increasing the number of sampled trajectories steadily improves performance from 0.664 to 0.716. Even a single trajec- tory already brings gains, indicating that teacher critique alone can rene reasoning to some extent. How ever , multiple trajectories pr o- vide more diverse correct and incorr ect reasoning paths, enabling the teacher to extract more generalizable experience knowledge. Third, TED also benets from stronger teacher models. Using the student itself as teacher already achieves 0.656, while str onger teachers further improve performance from 0.681 (DeepSe ek) to 0.702 (Kimi2.5) and 0.719 (ChatGPT5.2[ 16 ]). This trend shows that better teachers provide higher-quality supervision for experience extraction, leading to more informative and transferable e xperience. Overall, these results show that TED is robust to dierent design choices, and its performance is jointly determined by experience capacity , traje ctory diversity , and teacher quality . 6 Conclusion In this paper , we present TED , a parameter-free and context-based knowledge distillation framework that transfers knowledge through contextual experience accumulation rather than gradient-based optimization. By distilling teacher-guided reasoning trajectories into compact and reusable experiences, TED enables student models to continually improve without updating their parameters. Extensive experiments on multimodal reasoning and textual mathematical benchmarks demonstrate that TED consistently im- proves model performance in low-data settings while requiring only a small number of training samples. Across both multimodal and textual tasks, TED provides a strong performance-cost trade-o and achieves results competitive with conventional parameter-base d distillation, while reducing training cost by more than 20 × and avoiding parameter optimization. The proposed framework also TED: Training-Free Experience Distillation for Multimodal Reasoning ACM MM’26, June 03–05, 2026, Rio de Janeir o, Brazil shows encouraging generalization across dier ent modalities and model scales. At the same time, TED is not intended to replace gradient-based distillation in large-scale settings with abundant data and sucient resources, where full parameter updates can still achiev e stronger performance. These results suggest that substantial knowledge transfer can be achieved through contextual experience injection, making TED a lightweight, data-ecient, and practical alternative for scenarios where conventional retraining is costly or infeasible . References [1] Rishabh Agarwal, Nino Vieillard, Y ongchao Zhou, Piotr Stanczyk, Sabela Ramos Garea, Matthieu Geist, and Olivier Bachem. 2024. On-policy distillation of lan- guage models: Learning fr om self-generated mistakes. In The twelfth international conference on learning representations . [2] Shuai Bai, Yuxuan Cai, Ruizhe Chen, Keqin Chen, Xionghui Chen, Zesen Cheng, Lianghao Deng, W ei Ding, Chang Gao, Chunjiang Ge, W enbin Ge, Zhifang Guo, Qidong Huang, Jie Huang, Fei Huang, Binyuan Hui, Shutong Jiang, Zhaohai Li, Mingsheng Li, Mei Li, Kaixin Li, Zicheng Lin, Junyang Lin, Xuejing Liu, Jiawei Liu, Chenglong Liu, Y ang Liu, Dayiheng Liu, Shixuan Liu, Dunjie Lu, Ruilin Luo, Chenxu Lv , Rui Men, Lingchen Meng, Xuancheng Ren, Xingzhang Ren, Sibo Song, Yuchong Sun, Jun Tang, Jianhong Tu, Jianqiang W an, Peng W ang, Pengfei W ang, Qiuyue W ang, Yuxuan W ang, Tianbao Xie, Yiheng Xu, Haiyang Xu, Jin Xu, Zhibo Y ang, Mingkun Y ang, Jianxin Y ang, An Y ang, Bowen Y u, Fei Zhang, Hang Zhang, Xi Zhang, Bo Zheng, Humen Zhong, Jingren Zhou, Fan Zhou, Jing Zhou, Y uanzhi Zhu, and Ke Zhu. 2025. Qwen3- VL T echnical Report. arXiv:2511.21631 [cs.CV] https://arxiv .org/abs/2511.21631 [3] Amanda Bertsch, Maor Ivgi, Emily Xiao, Uri Alon, Jonathan Berant, Matthew R Gormley , and Graham Neubig. 2025. In-context learning with long-context models: An in-depth exploration. In Proceedings of the 2025 Conference of the Nations of the Americas Chapter of the Association for Computational Linguistics: Human Language T echnologies (V olume 1: Long Papers) . 12119–12149. [4] Max Biggs, W ei Sun, and Markus Ettl. 2021. Model distillation for revenue optimization: Interpretable personalized pricing. In International conference on machine learning . PMLR, 946–956. [5] T om Brown, Benjamin Mann, Nick Ryder , Melanie Subbiah, Jared D Kaplan, Prafulla Dhariwal, Arvind Neelakantan, Pranav Shyam, Girish Sastr y , Amanda Askell, et al . 2020. Language mo dels are few-shot learners. Advances in neural information processing systems 33 (2020), 1877–1901. [6] Lin Chen, Jinsong Li, Xiaoyi Dong, Pan Zhang, Conghui He, Jiaqi W ang, Feng Zhao, and Dahua Lin. 2024. Sharegpt4v: Improving large multi-modal models with better captions. In European Conference on Computer Vision . Springer , 370– 387. [7] Luyang Fang, Xiaow ei Y u, Jiazhang Cai, Y ongkai Chen, Shushan W u, Zhengliang Liu, Zhenyuan Y ang, Haoran Lu, Xilin Gong, Yufang Liu, et al . 2026. Knowledge distillation and dataset distillation of large language models: Emerging tr ends, challenges, and future directions. Articial Intelligence Review 59, 1 (2026), 17. [8] Daya Guo, Dejian Y ang, Haowei Zhang, Junxiao Song, Ruoyu Zhang, Runxin Xu, Qihao Zhu, Shirong Ma, Peiyi W ang, Xiao Bi, et al . 2025. Deepseek-r1: Incentivizing reasoning capability in llms via reinforcement learning. arXiv preprint arXiv:2501.12948 (2025). [9] Georey Hinton, Oriol Vinyals, and Je Dean. 2015. Distilling the Knowledge in a Neural Network. Computer Science 14, 7 (2015), 38–39. [10] Cheng- Y u Hsieh, Chun-Liang Li, Chih-Kuan Y eh, Hootan Nakhost, Y asuhisa Fujii, Alex Ratner , Ranjay Krishna, Chen- Yu Lee, and T omas Pster. 2023. Distilling step-by-step! outperforming larger language models with less training data and smaller model sizes. In Findings of the A ssociation for Computational Linguistics: ACL 2023 . 8003–8017. [11] Devvrit Khatri, Pranamya Kulkarni, Nilesh Gupta, Y erram V arun, Liqian Peng, Jay Y agnik, Praneeth Netrapalli, Cho-Jui Hsieh, Alec Go, Inderjit S Dhillon, et al . 2025. Compressing Many-Shots in In-Context Learning. arXiv preprint (2025). [12] Jinyang Li, Jack Williams, Nick McK enna, Arian Askari, Nicholas Wilson, and Reynold Cheng. [n. d.]. Agents Help Agents: Exploring T raining-Free Knowledge Distillation for Small Language Mo dels in Data Science Co de Generation. ([n. d.]). [13] Huanxuan Liao , Shizhu He, Y ao Xu, Yuanzhe Zhang, Kang Liu, and Jun Zhao . 2025. Neural-symbolic collaborative distillation: Advancing small language models for complex reasoning tasks. In Proceedings of the AAAI Conference on A rticial Intelligence , V ol. 39. 24567–24575. [14] Haotian Liu, Chunyuan Li, Qingyang Wu, and Y ong Jae Lee. 2023. Visual in- struction tuning. Advances in neural information processing systems 36 (2023), 34892–34916. [15] Math- AI. 2024. American Invitational Mathematics Examination ( AIME) 2024. Hugging Face dataset. [16] OpenAI. 2025. Introducing GPT -5.2. https://openai.com/index/introducing- gpt- 5- 2/. Accessed: 2026. [17] Jiahao Qiu, Xinzhe Juan, Yimin W ang, Ling Y ang, Xuan Qi, T ongcheng Zhang, Jiacheng Guo, Yifu Lu, Zixin Y ao, Hongru Wang, et al . 2025. AgentDistill: Training-Free Agent Distillation with Generalizable MCP Boxes. arXiv preprint arXiv:2506.14728 (2025). [18] Ohad Rubin, Jonathan Herzig, and Jonathan Berant. 2022. Learning to retrieve prompts for in-conte xt learning. In Proceedings of the 2022 conference of the North A merican chapter of the association for computational linguistics: human language technologies . 2655–2671. [19] Noah Shinn, Federico Cassano, Ashwin Gopinath, Karthik Narasimhan, and Shunyu Yao . 2023. Reexion: Language agents with verbal reinforcement learning. Advances in Neural Information Processing Systems 36 (2023), 8634–8652. [20] Y ueqi Song, Tianyue Ou, Yibo K ong, Zecheng Li, Graham Neubig, and Xiang Y ue. 2025. Visualpuzzles: Decoupling multimodal reasoning evaluation from domain knowledge. arXiv preprint arXiv:2504.10342 (2025). [21] Rohan T aori, Ishaan Gulrajani, Tianyi Zhang, Y ann Dubois, Xuechen Li, Carlos Guestrin, Percy Liang, and T atsunori B. Hashimoto. 2023. Stanford Alpaca: An Instruction-following LLaMA model. https://github.com/tatsu- lab/stanford_ alpaca. [22] Kimi T eam, T ongtong Bai, Yifan Bai, Yiping Bao, SH Cai, Y uan Cao, Y Charles, HS Che, Cheng Chen, Guanduo Chen, et al . 2026. Kimi K2. 5: Visual Agentic Intelligence. arXiv preprint arXiv:2602.02276 (2026). [23] Yijun Tian, Yikun Han, Xiusi Chen, W ei W ang, and Nitesh V . Chawla. 2024. Beyond Answers: T ransferring Reasoning Capabilities to Smaller LLMs Using Multi- T eacher Knowledge Distillation. arXiv:2402.04616 [cs.CL] https://arxiv . org/abs/2402.04616 [24] Bin W ang, Fan W u, Xiao Han, Jiahui Peng, Huaping Zhong, Pan Zhang, Xiaoyi Dong, W eijia Li, W ei Li, Jiaqi W ang, et al . 2024. Vigc: Visual instruction generation and correction. In Proceedings of the AAAI Conference on A rticial Intelligence , V ol. 38. 5309–5317. [25] Ke Wang, Junting Pan, W eikang Shi, Zimu Lu, Houxing Ren, Aojun Zhou, Mingjie Zhan, and Hongsheng Li. 2024. Measuring multimodal mathematical reasoning with math-vision dataset. Advances in Neural Information Processing Systems 37 (2024), 95095–95169. [26] Yizhong W ang, Y eganeh K ordi, Swaroop Mishra, Alisa Liu, Noah A. Smith, Daniel Khashabi, and Hannaneh Hajishirzi. 2023. Self-Instruct: Aligning Language Models with Self-Generated Instructions. arXiv:2212.10560 [cs.CL] https://arxiv. org/abs/2212.10560 [27] Jason W ei, Xuezhi W ang, Dale Schuurmans, Maarten Bosma, Fei Xia, Ed Chi, Quoc V Le, Denny Zhou, et al . 2022. Chain-of-thought prompting elicits reasoning in large language models. Advances in neural information pr ocessing systems 35 (2022), 24824–24837. [28] Yijia Xiao, Edward Sun, Tianyu Liu, and W ei W ang. 2024. Logicvista: Mul- timodal llm logical reasoning benchmark in visual contexts. arXiv preprint arXiv:2407.04973 (2024). [29] An Y ang, Anfeng Li, Baosong Yang, Beichen Zhang, Binyuan Hui, Bo Zheng, Bowen Y u, Chang Gao , Chengen Huang, Chenxu Lv , et al . 2025. Qwen3 technical report. arXiv preprint arXiv:2505.09388 (2025). [30] Qiying Yu, Zheng Zhang, Ruofei Zhu, ..., and Mingxuan Wang. 2025. DAPO: An Op en-Source LLM Reinforcement Learning System at Scale. arXiv:2503.14476 [cs.LG] [31] Renrui Zhang, Dongzhi Jiang, Yichi Zhang, Haokun Lin, Ziyu Guo, Pengshuo Qiu, Aojun Zhou, Pan Lu, Kai- W ei Chang, Yu Qiao, et al . 2024. Mathverse: Does your multi-modal llm truly see the diagrams in visual math problems? . In European Conference on Computer Vision . Springer, 169–186. [32] Yifan Zhang and Math-AI T eam. 2025. American Invitational Mathematics Examination (AIME) 2025. Hugging Face dataset. [33] Huichi Zhou, Yihang Chen, Siyuan Guo, Xue Yan, Kin Hei Lee, Zihan W ang, Ka Yiu Lee, Guchun Zhang, Kun Shao, Linyi Y ang, et al . 2025. Memento: Fine- tuning llm agents without ne-tuning llms. arXiv preprint (2025). [34] Deyao Zhu, Jun Chen, Xiaoqian Shen, Xiang Li, and Mohamed Elhoseiny . 2023. Minigpt-4: Enhancing vision-language understanding with advanced large lan- guage models. arXiv preprint arXiv:2304.10592 (2023). ACM MM’26, June 03–05, 2026, Rio de Janeir o, Brazil Shuozhi Y uan,et al. A Limitation and discussion Despite the promising results, TED also has several limitations. The pr oposed framework is particularly suitable for scenarios with limited training data, restricted computational resources, or black-box APIs, where gradient-based optimization is impractical or unavailable . By operating entirely in the conte xt space, TED avoids parameter updates and signicantly reduces training cost. Ho wever , in settings with large-scale datasets and sucient computational resources, gradient-base d distillation or ne-tuning methods are still likely to achieve stronger performance, as they can fully update model parameters and exploit larger training signals. From a qualitative perspective, the eectiveness of TED may stem from its ability to reduce the reasoning sear ch space for smaller models. When solving complex problems, small models often face a large and inecient reasoning sear ch space during the thinking process. The trajectory-base d framework of TED iteratively collects reasoning paths and leverages teacher critiques together with positive and negative trajectory comparisons, which gradually constrain the exploration space and guide the model toward more eective reasoning strategies. As a result, even self-distillation or r elatively weak teacher models can still provide useful signals that impr ove performance. When stronger teacher models are used, their reasoning trajectories tend to b e more accurate and ecient, leading to higher-quality experience extraction and larger performance gains. In practice, the distilled experiences often function as high-lev el reasoning patterns or thinking templates, which help smaller models adopt more structured and eective problem-solving strategies. B Details of prompts This section reports the exact prompts used in our experiments. Inference with experience Please solve the problem in the figure: problem When solving problems, you MUST first carefully read and understand the helpful instructions and experiences: experiences Final answer should be start with Answer for example: Answer: A/B/C/D Inference of teacher Please solve the problem: problem Final answer should be start with for example: A/B/C/D Prompt of teacher critique You are given: (1) a problem, (2) multiple solution trajectories generated by a student network, (3) one or more trajectories generated by a teacher network. The objective is to extract generalizable reasoning experiences from the teacher trajectories that can guide and correct the student network in future attempts on structurally similar problems. Student trajectories are labeled with binary rewards: - POSITIVE (reward = 1): successful solutions - NEGATIVE (reward = 0): failed solutions. You must perform a structured comparative analysis before updating experiences. 1. Comparative Trajectory Analysis Teacher Trajectories: - Identify the key strategic decisions and pivotal reasoning steps. - Analyze how critical decision points, ambiguities, and potential pitfalls are resolved. - Distill recurring reasoning patterns that contribute to correctness. Student Trajectories: Positive Trajectories (reward = 1): - Identify strategies that directly contributed to success. - Analyze alignment with the teacher’s reasoning patterns. - Determine which reasoning components are transferable. Negative Trajectories (reward = 0): - Identify divergence points from successful reasoning paths. - Categorize error patterns, such as: • misapplied principles, • overlooked constraints, • incorrect assumptions, • premature simplifications, • local optimization traps. - Extract partially correct but incomplete reasoning components. Cross Comparison: - Identify decisive differences between positive and negative trajectories. - Determine reasoning strategies consistently applied by the teacher but absent in failed student attempts. 2. Updating the Experience Set Teacher trajectories may include both correct and incorrect reasoning paths. Only extract experiences that reliably promote correct strategic reasoning. You may perform one of the following operations: - "modify": refine an existing experience to improve clarity or correctness. - "add": introduce a new generalizable experience. - "delete": remove an incorrect or misleading experience. - "nan": make no updates if the experience set is already sufficient. 3. Experience Formulation Requirements TED: Training-Free Experience Distillation for Multimodal Reasoning ACM MM’26, June 03–05, 2026, Rio de Janeir o, Brazil Each experience must: - Begin with a concise description of the general problem context. - Emphasize strategic reasoning patterns rather than specific computations. - Highlight reusable decision points applicable across similar tasks. - Avoid referencing specific numeric values or problem-dependent details. Prompt of Trajectory compression You are given a problem, and the following rollouts to solve the given problem. Please summarize the trajectory step-by-step: For each step, describe **what action is being taken**. Only the abstract experiences. problem rollouts only return the summary of each step, e.g., 1. what happened in the first step and the core outcomes 2. what happened in the second step and the core outcomes Prompt of Exp erience compression An agent system maintains a set of reasoning experiences. Currently, there are some experiences, which introduces redundancy and weakens strategic clarity. Your objective is to perform experience compression under the FREE framework by consolidating overlapping reasoning patterns and removing duplication. The final number of experiences must not exceed 15. Each resulting experience must satisfy the following criteria: 1. It must express a clear and generalizable strategic lesson, within 32 words. 2. It must begin with a concise general background context. 3. It must focus on reasoning strategies rather than specific computations. 4. It must emphasize transferable decision points applicable to similar problems. 5. It must avoid semantic overlap with other retained experiences. You are provided with: experiences Compression Procedure: 1. Redundancy Analysis - Identify experiences expressing similar strategic principles. - Detect overlap in decision logic, structural reasoning, or error prevention themes. - Group experiences that differ superficially but share core reasoning patterns. 2. Strategic Abstraction - Generalize grouped experiences into a higher-level strategic principle. - Remove problem-specific language. - Preserve critical decision-point structure. 3. Compression Operations You may use the following update operations: - "modify": refine an existing experience to improve abstraction and generality. - "merge": combine multiple similar experiences into one more general and strategically expressive experience. (Merge is the primary mechanism for reducing count.) C Some examples of experience Some examples of experience E1 : For signed quantities with unknown signs, introduce sign variables, enumerate cases systematically, and optimize. Extrema often emerge when sign groups oppose each other. E2 : For optimization, push variables to constraints to find bounds, verify attainability. For maximin problems, identify the most restrictive objective to bound the optimum, then ensure others can meet it. E3 :For random walk hitting probabilities: model as renewal process, derive first-step recurrence, encode as generating function, analyze dominant singularity for limiting behavior. E4 : Geometric algebra: Use mass points with scaled vertex masses for ratios. Or translate to coordinates/vectors, exploit symmetry, compute via formulas, and identify linear relations. E5 :Geometry properties: For angle bisectors, use excenter relationships and half-angle formulas. For circle tangents, exploit perpendicularity to form right triangles and apply trigonometric ratios. ...... ACM MM’26, June 03–05, 2026, Rio de Janeir o, Brazil Shuozhi Y uan,et al. D Details of algorithm Algorithm 1: Reasoning Trajectory Generation in TED Input: input–label pair ( 𝑥 , 𝑦 ) , student model 𝑆 , teacher model 𝑇 , experience 𝐸 , trajector y number 𝑁 Output: condensed student trajectories { 𝜏 𝑖 } 𝑁 𝑖 = 1 , teacher trajectory 𝜏 𝑇 , teacher-validity ag 1 Construct the prompted context 𝑝 ( 𝑥 ; 𝐸 ) = [ 𝑝 sys ; 𝐸 ; 𝑥 ] ; 2 Sample 𝑁 student raw trajectories in parallel: { ˜ 𝜏 𝑖 } 𝑁 𝑖 = 1 ∼ 𝑆 ( · | 𝑝 ( 𝑥 ; 𝐸 ) ) ; 3 Generate the teacher raw trajector y: ˜ 𝜏 𝑇 ∼ 𝑇 ( · | 𝑥 ) ; 4 for 𝑖 ← 1 to 𝑁 do 5 𝜏 𝑖 ← Condense ( ˜ 𝜏 𝑖 ) ; 6 𝜏 𝑇 ← Condense ( ˜ 𝜏 𝑇 ) ; 7 Enforce the structured format: Premises → Step 1 → Step 2 → · · · → Conclusion ; 8 if ˆ 𝑦 ( 𝜏 𝑇 ) = 𝑦 then 9 mark 𝜏 𝑇 as valid; 10 else 11 mark 𝜏 𝑇 as invalid and treat this sample as a negative case for later critique; 12 return { 𝜏 𝑖 } 𝑁 𝑖 = 1 , 𝜏 𝑇 ; Algorithm 2: Experience Generation in TED Input: student trajectories { 𝜏 𝑖 } 𝑁 𝑖 = 1 , teacher trajectory 𝜏 𝑇 , ground-truth label 𝑦 , experience 𝐸 Output: updated experience 𝐸 1 Partition student trajectories into T + = { 𝜏 𝑖 | ˆ 𝑦 ( 𝜏 𝑖 ) = 𝑦 } and T − = { 𝜏 𝑖 | ˆ 𝑦 ( 𝜏 𝑖 ) ≠ 𝑦 } ; 2 if | T + | < | T − | then 3 down-sample T − until | T + | ≥ | T − | ; 4 if | T + | = 0 then 5 keep only one negative trajectory in T − ; 6 Generate teacher critique: 𝐶 = Critique ( { 𝜏 𝑖 } 𝑁 𝑖 = 1 , 𝜏 𝑇 , 𝑦 ) ; 7 T eacher selects one action from { Add , Modify , Delete , None } ; 8 switch selecte d action do 9 case Add do 10 insert a new experience item into 𝐸 ; 11 case Modify do 12 revise an existing e xperience item in 𝐸 ; 13 case Delete do 14 remove an obsolete or harmful e xperience item from 𝐸 ; 15 case None do 16 keep 𝐸 unchanged; 17 return 𝐸 ; TED: Training-Free Experience Distillation for Multimodal Reasoning ACM MM’26, June 03–05, 2026, Rio de Janeir o, Brazil Algorithm 3: Experience Compression in TED Input: experience 𝐸 = { 𝑒 𝑗 } | 𝐸 | 𝑗 = 1 , context budget 𝐵 , item budget 𝐵 item , step 𝑡 , current sample ( 𝑥 𝑡 , 𝑦 𝑡 ) Output: compressed experience ˆ 𝐸 1 foreach 𝑒 ∈ 𝑈 ( 𝐸 ; 𝑥 𝑡 ) do 2 𝑢 𝑡 ( 𝑒 ) ← 𝑢 𝑡 − 1 ( 𝑒 ) + I [ 𝑒 ∈ 𝑈 ( 𝐸 ; 𝑥 𝑡 ) ] ; 3 𝑠 𝑡 ( 𝑒 ) ← log ( 1 + 𝑢 𝑡 ( 𝑒 ) ) ; 4 if Í 𝑒 ∈ 𝐸 ℓ ( 𝑒 ) > 𝐵 or | 𝐸 | > 𝐵 item then 5 retain only the top- 𝑅 most frequently used / highest-utility experiences; 6 T eacher summarizes 𝐸 into a smaller set ˆ 𝐸 ; 7 foreach candidate item or item group in 𝐸 do 8 select one action from { Merge , Rewrite , Delete , None } ; 9 switch selected action do 10 case Merge do 11 replace redundant items with one higher-level e xperience; 12 case Rewrite do 13 rephrase an item to improv e generality and applicability; 14 case Delete do 15 remove obsolete , noisy , or harmful items; 16 case None do 17 retain the item unchanged; 18 else 19 ˆ 𝐸 ← 𝐸 ; 20 return ˆ 𝐸 ;

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment