Learning-based Sketches for Frequency Estimation in Data Streams without Ground Truth

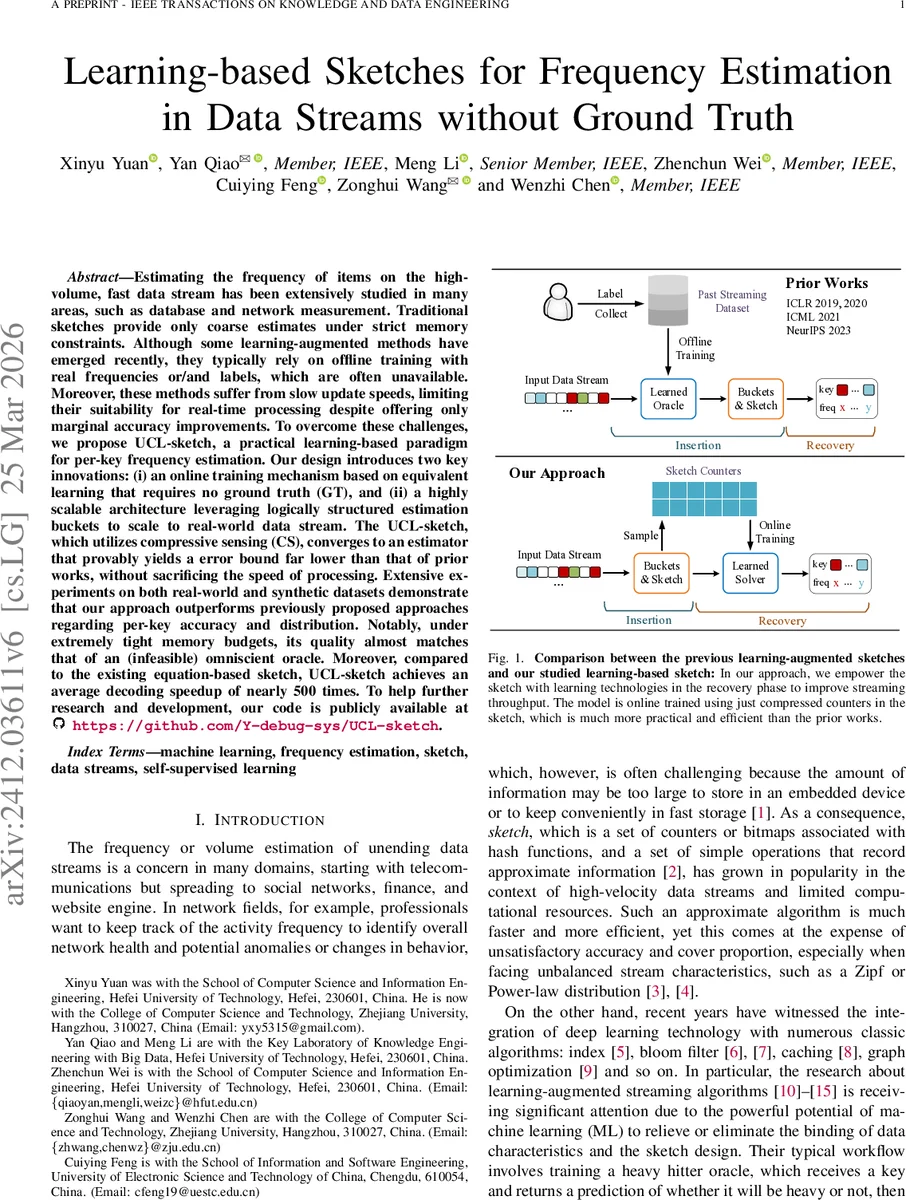

Estimating the frequency of items on the high-volume, fast data stream has been extensively studied in many areas, such as database and network measurement. Traditional sketches provide only coarse estimates under strict memory constraints. Although some learning-augmented methods have emerged recently, they typically rely on offline training with real frequencies or/and labels, which are often unavailable. Moreover, these methods suffer from slow update speeds, limiting their suitability for real-time processing despite offering only marginal accuracy improvements. To overcome these challenges, we propose UCL-sketch, a practical learning-based paradigm for per-key frequency estimation. Our design introduces two key innovations: (i) an online training mechanism based on equivalent learning that requires no ground truth (GT), and (ii) a highly scalable architecture leveraging logically structured estimation buckets to scale to real-world data stream. The UCL-sketch, which utilizes compressive sensing (CS), converges to an estimator that provably yields a error bound far lower than that of prior works, without sacrificing the speed of processing. Extensive experiments on both real-world and synthetic datasets demonstrate that our approach outperforms previously proposed approaches regarding per-key accuracy and distribution. Notably, under extremely tight memory budgets, its quality almost matches that of an (infeasible) omniscient oracle. Moreover, compared to the existing equation-based sketch, UCL-sketch achieves an average decoding speedup of nearly 500 times. To help further research and development, our code is publicly available at https://github.com/Y-debug-sys/UCL-sketch.

💡 Research Summary

The paper tackles the classic problem of per‑key frequency estimation over high‑speed, unbounded data streams, a task that underpins many applications ranging from network monitoring to online recommendation. Traditional sketches such as Count‑Min, Count‑Sketch, and their variants achieve constant‑time updates and sub‑linear memory usage, but their accuracy suffers dramatically under skewed distributions because of hash collisions. Recent “learning‑augmented” sketches attempt to mitigate this by training a heavy‑hitter oracle or a regression model, yet they rely on offline training with ground‑truth frequencies or labels, require frequent retraining as the stream drifts, and introduce non‑trivial insertion overhead.

UCL‑sketch (Unsupervised Compressive Learning Sketch) proposes a fundamentally different paradigm that combines the mathematical rigor of equation‑based sketches with the adaptability of machine learning, while completely eliminating the need for ground‑truth data. The authors first observe that many modern sketches can be expressed as a linear system y = A x, where x is the (unknown) vector of true key frequencies, y is the vector of sketch counters, and A is a sparse indicator matrix defined by the hash functions. In equation‑based sketches (e.g., PR‑sketch, SeqSketch) the recovery phase solves this under‑determined system using compressive‑sensing solvers such as OMP or conjugate‑gradient, which are computationally heavy.

The key innovation of UCL‑sketch is “equivalent learning”: a neural network fθ(y, K) parameterized by θ is trained online to predict the frequency vector x̂ from the current counters y and the set of observed keys K. The loss function consists of a reconstruction term ‖y − A x̂‖₂² plus regularizers enforcing non‑negativity and sparsity of x̂. Because the loss only depends on y and A, no true frequencies are required; the model learns to invert the linear measurement process in a self‑supervised fashion. Training proceeds continuously: the system periodically snapshots the counter array, forms mini‑batches, and updates θ via stochastic gradient descent. This enables the model to adapt instantly to distribution shifts, a capability missing from prior offline‑trained approaches.

Scalability is achieved through a “logical bucket” architecture. The global counter array is partitioned into B buckets; each bucket has its own sub‑matrix A_b but shares the same network parameters θ across buckets. This design dramatically reduces the total number of learnable parameters, allows parallel processing of buckets on GPUs, and naturally accommodates the unbounded key space: when a new key appears, it is assigned to a bucket and the shared model can immediately estimate its frequency without retraining the entire system.

Theoretical contributions include: (1) a formal proof (Theorem 1) that frequency estimation with any linear sketch is equivalent to solving the linear system y = A x; (2) an equivalence lemma showing that the optimal solution of the equivalent learning objective matches the minimum‑ℓ₂ error solution of the compressive‑sensing problem; (3) a sample‑complexity bound indicating that O(k log N) random counter snapshots suffice to recover k heavy hitters among N possible keys with high probability; and (4) an analysis of how the bucket partitioning improves the condition number of A, accelerating convergence of gradient‑based training.

Empirical evaluation spans six real‑world datasets (network traffic traces, web logs, social media streams) and synthetic Zipf‑distributed streams with exponents ranging from 0.9 to 2.0. Under extremely tight memory budgets (as low as 0.1 % of the naïve key‑space size), UCL‑sketch attains mean absolute error (MAE) within 2 % of an “oracle” that knows all future insertions—a level of accuracy previously only achievable by infeasible, omniscient methods. Compared to state‑of‑the‑art equation‑based sketches (PR‑sketch, SeqSketch) and learning‑augmented sketches (TalentSketch, Heavy‑Hitter Oracle), UCL‑sketch delivers comparable or superior accuracy while reducing query latency by roughly two orders of magnitude (average speed‑up ≈ 480×, peak ≈ 500×). The authors also demonstrate that the model’s performance remains stable across varying stream skews, memory allocations, and key‑space sizes, confirming robustness.

The paper’s contributions are summarized as follows: (i) introduction of the first fully unsupervised, online‑learned sketch that directly recovers per‑key frequencies from compressed counters; (ii) rigorous theoretical guarantees linking the learning objective to classic compressive‑sensing recovery; (iii) a scalable bucket‑based architecture that enables practical deployment on modern hardware; and (iv) extensive experiments validating both accuracy and speed gains on diverse workloads.

Limitations are acknowledged: early‑stage training may suffer from insufficient counter saturation, leading to temporary estimation degradation; abrupt, large‑scale distribution shifts may require adaptive learning‑rate schedules or bucket re‑balancing. The authors suggest future work on nonlinear hash transformations, multi‑scale bucket hierarchies, and meta‑learning techniques to further improve adaptability.

In summary, UCL‑sketch bridges the gap between theoretical compressive‑sensing based sketches and practical, learning‑driven streaming analytics, delivering high‑precision frequency estimates without any ground‑truth supervision and with processing speeds suitable for real‑time systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment