Red-Teaming Vision-Language-Action Models via Quality Diversity Prompt Generation for Robust Robot Policies

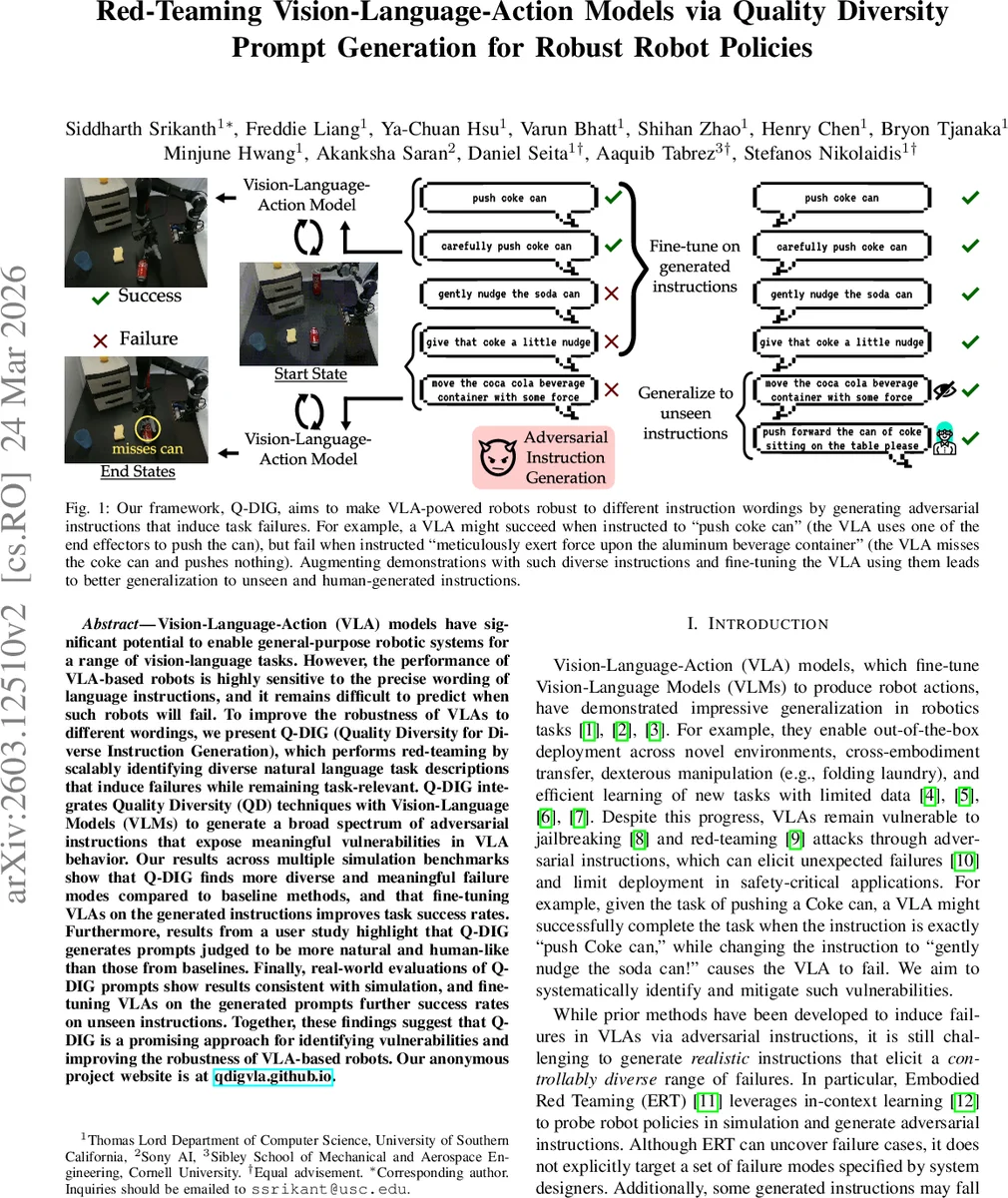

Vision-Language-Action (VLA) models have significant potential to enable general-purpose robotic systems for a range of vision-language tasks. However, the performance of VLA-based robots is highly sensitive to the precise wording of language instructions, and it remains difficult to predict when such robots will fail. To improve the robustness of VLAs to different wordings, we present Q-DIG (Quality Diversity for Diverse Instruction Generation), which performs red-teaming by scalably identifying diverse natural language task descriptions that induce failures while remaining task-relevant. Q-DIG integrates Quality Diversity (QD) techniques with Vision-Language Models (VLMs) to generate a broad spectrum of adversarial instructions that expose meaningful vulnerabilities in VLA behavior. Our results across multiple simulation benchmarks show that Q-DIG finds more diverse and meaningful failure modes compared to baseline methods, and that fine-tuning VLAs on the generated instructions improves task success rates. Furthermore, results from a user study highlight that Q-DIG generates prompts judged to be more natural and human-like than those from baselines. Finally, real-world evaluations of Q-DIG prompts show results consistent with simulation, and fine-tuning VLAs on the generated prompts further success rates on unseen instructions. Together, these findings suggest that Q-DIG is a promising approach for identifying vulnerabilities and improving the robustness of VLA-based robots. Our anonymous project website is at qdigvla.github.io.

💡 Research Summary

Paper Overview

Vision‑Language‑Action (VLA) models have shown impressive generalization across a variety of robotic tasks by fine‑tuning large vision‑language models (VLMs) to output robot actions. However, these systems are notoriously brittle: a slight re‑phrasing of the natural‑language instruction can cause the robot to fail, even when the semantic intent is unchanged. This vulnerability hampers deployment in safety‑critical settings and motivates systematic red‑teaming—i.e., the intentional generation of adversarial inputs to expose hidden failure modes.

Q‑DIG: Quality‑Diversity for Diverse Instruction Generation

The authors propose Q‑DIG, a framework that couples Quality‑Diversity (QD) evolutionary search with a VLM‑based mutator to automatically produce a repertoire of linguistically diverse, yet task‑relevant, adversarial instructions. The key components are:

-

Attack‑Style Taxonomy – Eight semantic “styles” are defined (z₀…z₇): step‑by‑step breakdown, rare/technical vocabulary, human‑centric tone, adverb insertion, unnecessary action detail, verbose reformulation, colloquial/slang, and mixed‑modality references. These styles capture realistic ways humans might vary an instruction while staying within the same task.

-

QD Formulation – The solution space is the set of possible instructions C. Quality is measured by the variance of the VLA’s failure rate on a given instruction:

J(c) = p·(1‑p) where p = E

Comments & Academic Discussion

Loading comments...

Leave a Comment