DINO-Tok: Adapting DINO for Visual Tokenizers

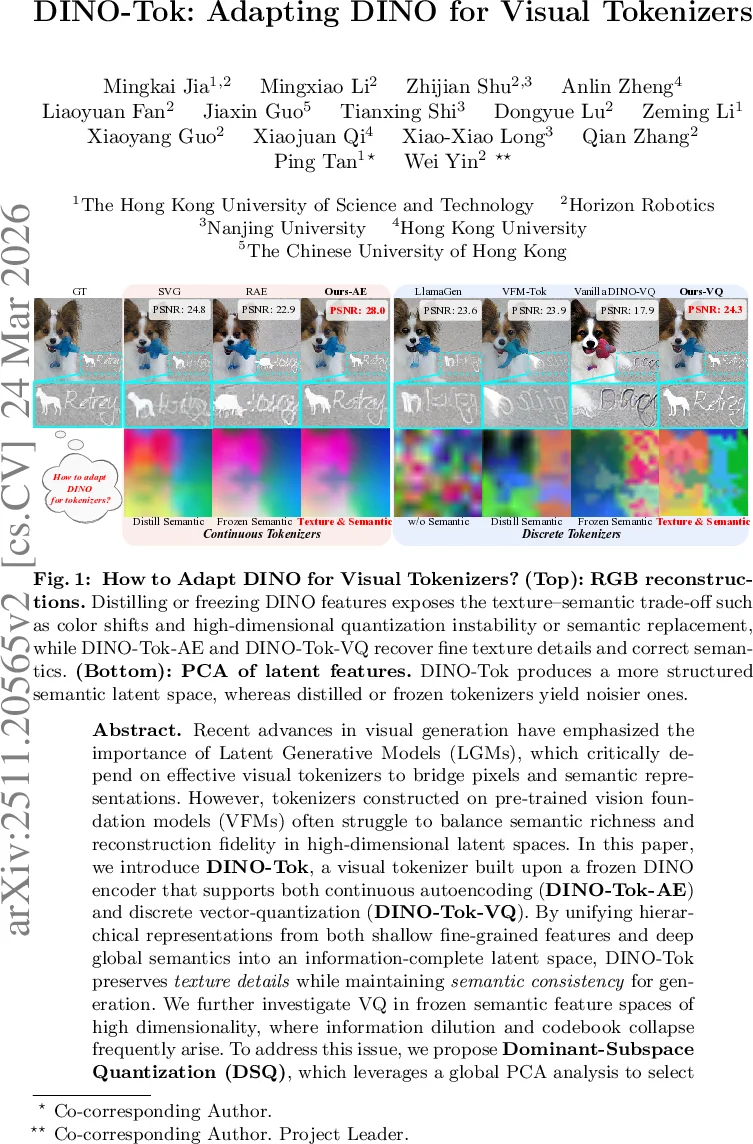

Recent advances in visual generation have emphasized the importance of Latent Generative Models (LGMs), which critically depend on effective visual tokenizers to bridge pixels and semantic representations. However, tokenizers constructed on pre-trained vision foundation models (VFMs) often struggle to balance semantic richness and reconstruction fidelity in high-dimensional latent spaces. In this paper, we introduce DINO-Tok, a visual tokenizer built upon a frozen DINO encoder that supports both continuous autoencoding (DINO-Tok-AE) and discrete vector-quantization (DINO-Tok-VQ). By unifying hierarchical representations from both shallow fine-grained features and deep global semantics into an information-complete latent space, DINO-Tok preserves texture details while maintaining \textit{semantic consistency} for generation. We further investigate VQ in frozen semantic feature spaces of high dimensionality, where information dilution and codebook collapse frequently arise. To address this issue, we propose Dominant-Subspace Quantization (DSQ), which leverages a global PCA analysis to select principal components while suppressing noisy dimensions, thereby stabilizing codebook optimization and improving reconstruction and generation quality. On ImageNet 256x256, DINO-Tok achieves strong reconstruction performance, achieving 0.28 rFID for continuous autoencoding and 1.10 rFID for discrete VQ, as well as strong few-step generation performance 1.82 gFID for diffusion and 2.44 gFID for autoregressive generation. These results demonstrate that pre-trained VFMs such as DINO can be directly adapted into high-fidelity, semantically aligned visual tokenizers for next-generation latent generative models. Code will be publicly available at https://github.com/MKJia/DINO-Tok.

💡 Research Summary

The paper introduces DINO‑Tok, a unified visual tokenizer that adapts a frozen DINO encoder for both continuous auto‑encoding (AE) and discrete vector‑quantization (VQ) tasks. The authors identify two fundamental obstacles when repurposing frozen vision foundation models (VFMs) as tokenizers: (1) a texture‑semantic trade‑off, where deep VFM features capture high‑level semantics but discard fine‑grained texture and color details, and (2) instability in high‑dimensional quantization, where the concentration of pairwise distances in a 768‑dimensional space leads to codebook collapse and semantic replacement. To address the first issue, DINO‑Tok‑AE fuses a shallow layer’s texture feature (F_tex) with the final layer’s semantic feature (F_sem). The texture feature is first compressed by a lightweight projector g(·) and then concatenated channel‑wise with F_sem, forming an information‑complete latent vector z_AE. A trainable decoder D_AE reconstructs the image using a combination of reconstruction, perceptual, and GAN losses, while the DINO encoder remains frozen. This dual‑branch design restores high‑frequency details without sacrificing semantic structure, achieving PSNR 24.8 and rFID 0.28 on ImageNet‑256, substantially outperforming prior frozen‑encoder baselines such as RAE. For the second issue, the paper proposes Dominant‑Subspace Quantization (DSQ). A global PCA on DINO features reveals that a small set of principal components carries most semantic information, whereas many tail dimensions are low‑variance noise. DSQ selects the top‑k principal components and performs VQ only within this subspace, suppressing noisy dimensions. Two specialized codebooks—one for semantics and one for texture—are learned jointly, stabilizing the quantization process even in the original 768‑dimensional space. This approach yields PSNR 23.9 and rFID 1.10, beating existing VQ‑VAE and LlamaGen variants that resort to aggressive dimensionality reduction. The authors further evaluate few‑step generation: a diffusion model conditioned on DINO‑Tok tokens reaches gFID 1.82, and an autoregressive model attains gFID 2.44, demonstrating that the tokenizer’s balanced latent representation benefits both diffusion‑based and AR‑based generation. Extensive ablations confirm that an intermediate shallow layer provides the optimal trade‑off between texture fidelity and semantic alignment. Overall, DINO‑Tok shows that a frozen VFM can be directly transformed into a high‑fidelity, semantically aligned visual tokenizer, eliminating the need for costly distillation while delivering state‑of‑the‑art reconstruction and generation performance.

Comments & Academic Discussion

Loading comments...

Leave a Comment