Manta: Enhancing Mamba for Few-Shot Action Recognition of Long Sub-Sequence

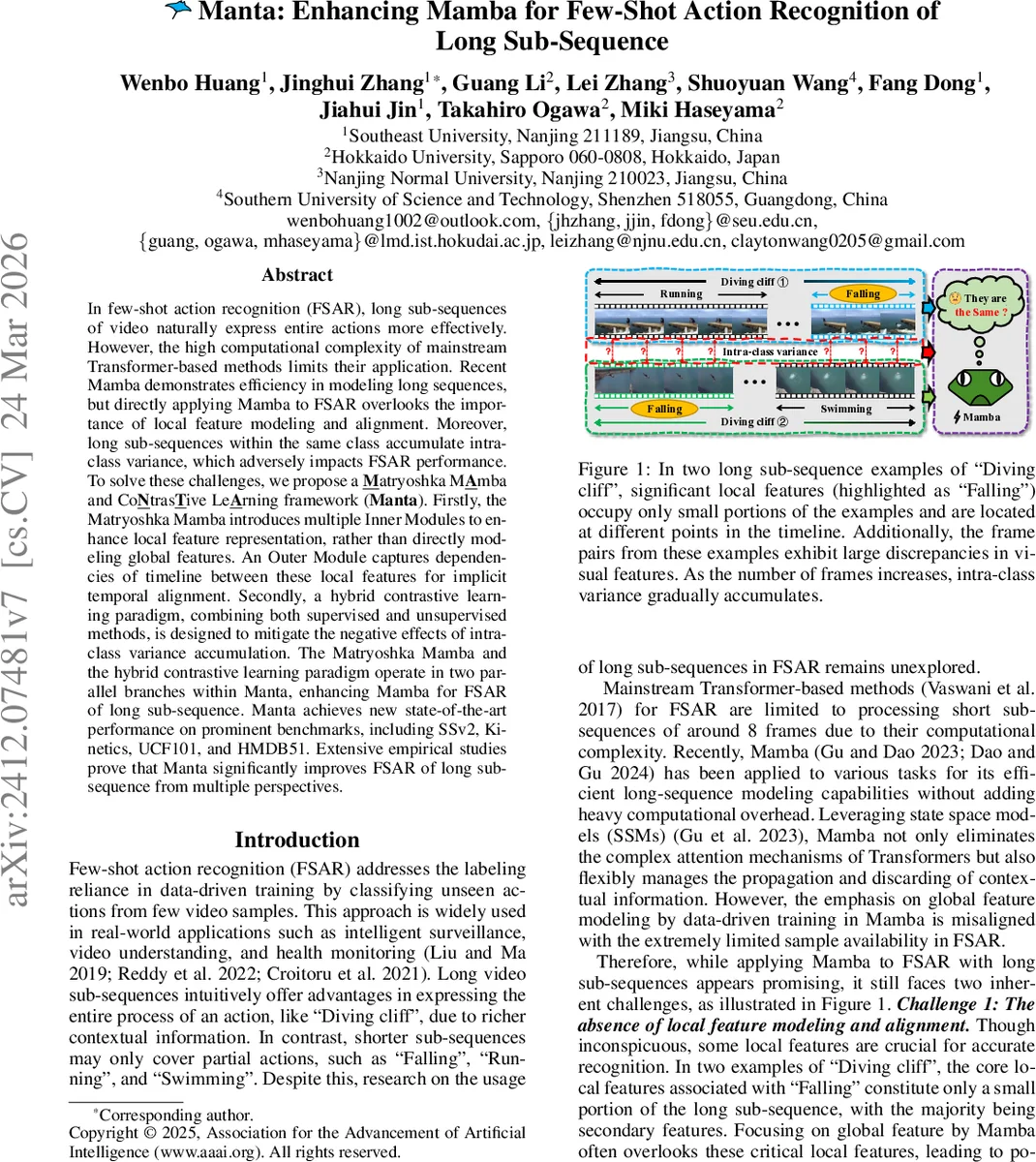

In few-shot action recognition (FSAR), long sub-sequences of video naturally express entire actions more effectively. However, the high computational complexity of mainstream Transformer-based methods limits their application. Recent Mamba demonstrates efficiency in modeling long sequences, but directly applying Mamba to FSAR overlooks the importance of local feature modeling and alignment. Moreover, long sub-sequences within the same class accumulate intra-class variance, which adversely impacts FSAR performance. To solve these challenges, we propose a Matryoshka MAmba and CoNtrasTive LeArning framework (Manta). Firstly, the Matryoshka Mamba introduces multiple Inner Modules to enhance local feature representation, rather than directly modeling global features. An Outer Module captures dependencies of timeline between these local features for implicit temporal alignment. Secondly, a hybrid contrastive learning paradigm, combining both supervised and unsupervised methods, is designed to mitigate the negative effects of intra-class variance accumulation. The Matryoshka Mamba and the hybrid contrastive learning paradigm operate in two parallel branches within Manta, enhancing Mamba for FSAR of long sub-sequence. Manta achieves new state-of-the-art performance on prominent benchmarks, including SSv2, Kinetics, UCF101, and HMDB51. Extensive empirical studies prove that Manta significantly improves FSAR of long sub-sequence from multiple perspectives.

💡 Research Summary

The paper introduces Manta, a novel framework designed to tackle the challenges of few‑shot action recognition (FSAR) when long video sub‑sequences are used as inputs. Traditional FSAR approaches rely heavily on Transformer‑based architectures, which are computationally prohibitive for sequences longer than a handful of frames. Although the recent Mamba model, built on state‑space models (SSMs), can process long sequences efficiently, it focuses on global feature modeling and lacks mechanisms for local feature extraction and temporal alignment—both crucial for FSAR where only a few labeled examples are available. Moreover, long sub‑sequences increase intra‑class variance due to diverse shooting conditions, further degrading performance.

Manta addresses these issues through two complementary components: (1) Matryoshka Mamba, a hierarchical architecture that nests multiple Inner Modules inside a single Outer Module, and (2) a hybrid contrastive learning scheme that jointly employs supervised and unsupervised contrastive objectives.

Matryoshka Mamba

The input video is sampled into F frames. The Inner Modules split the sequence into non‑overlapping fragments of size o (where o is a divisor of F) and process each fragment with a bidirectional Mamba‑2 block: a forward branch (IM_Fw) and a backward branch (IM_Bw) that do not share parameters. This design captures fine‑grained, local motion cues (e.g., the “fall” segment in a “diving cliff” action) that may occupy only a small temporal window. The outputs of the forward and backward branches are concatenated, linearly projected, and added back to the original fragment representation, yielding enhanced local features for each scale.

The Outer Module receives the locally enhanced features from all scales and performs a bidirectional scan (OM_Fw and OM_Bw share parameters) to model temporal dependencies across the entire sequence. Learnable scalar weights w_S^o and w_Q^o, produced by a small Conv2D‑BN‑Sigmoid sub‑network, adaptively weight each scale’s contribution. After weighted averaging, the scale‑specific outputs are summed and averaged across all scales to produce the final global representations (\hat{S}) and (\hat{Q}). This outer processing implicitly aligns the local features in time without explicit dynamic time warping, preserving computational efficiency (linear in sequence length).

Prototype Construction and Classification

Support prototypes are formed by averaging the global representations of the K‑shot samples per class. To further reinforce alignment, the authors compute four distance matrices: two using direct differences (global vs. global, inverted vs. inverted) and two cross‑differences (global vs. inverted, inverted vs. global). The final distance is the average of these four terms, and classification follows a standard nearest‑prototype rule with cross‑entropy loss (L_{ce}).

Hybrid Contrastive Learning

To mitigate intra‑class variance, Manta adds a contrastive branch that simultaneously optimizes supervised contrastive loss (using labeled support samples) and unsupervised contrastive loss (treating all query and support embeddings as a single batch). The combined contrastive loss (L_{hc}) follows the InfoNCE formulation with temperature (\tau), encouraging embeddings of the same class to cluster while pushing apart different‑class embeddings. The overall training objective is a weighted sum (L_{total}= \lambda L_{ce} + (1-\lambda) L_{hc}).

Experiments

The authors evaluate Manta on four few‑shot benchmarks: SSv2‑FewShot, Kinetics‑FewShot, UCF101‑FewShot, and HMDB51‑FewShot, using a 3‑way‑3‑shot episodic protocol. Manta consistently outperforms prior state‑of‑the‑art methods such as OTAM, TRX, SloshNet, and recent Mamba‑based vision models. Notably, when the number of frames is increased from 8 to 32, performance degradation is minimal, demonstrating the scalability of the Matryoshka design. Ablation studies reveal that (i) removing Inner Modules harms local feature capture, (ii) removing the Outer Module reduces temporal alignment, and (iii) disabling the hybrid contrastive loss leads to higher intra‑class variance and lower accuracy.

Conclusion

Manta introduces a principled way to adapt the efficient long‑sequence modeling capability of Mamba to the few‑shot setting. By nesting multiple scale‑aware, bidirectional SSM blocks (Inner Modules) within an outer alignment module and reinforcing representation learning with hybrid contrastive objectives, Manta simultaneously solves the lack of local feature modeling, the need for temporal alignment, and the problem of intra‑class variance accumulation. The framework sets new performance records on multiple FSAR benchmarks and opens avenues for applying long‑sequence, low‑label video understanding in surveillance, healthcare, and other domains where annotated data are scarce.

Comments & Academic Discussion

Loading comments...

Leave a Comment