Steering Sparse Autoencoder Latents to Control Dynamic Head Pruning in Vision Transformers (Student Abstract)

Dynamic head pruning in Vision Transformers (ViTs) improves efficiency by removing redundant attention heads, but existing pruning policies are often difficult to interpret and control. In this work, we propose a novel framework by integrating Sparse…

Authors: Yousung Lee, Dongsoo Har

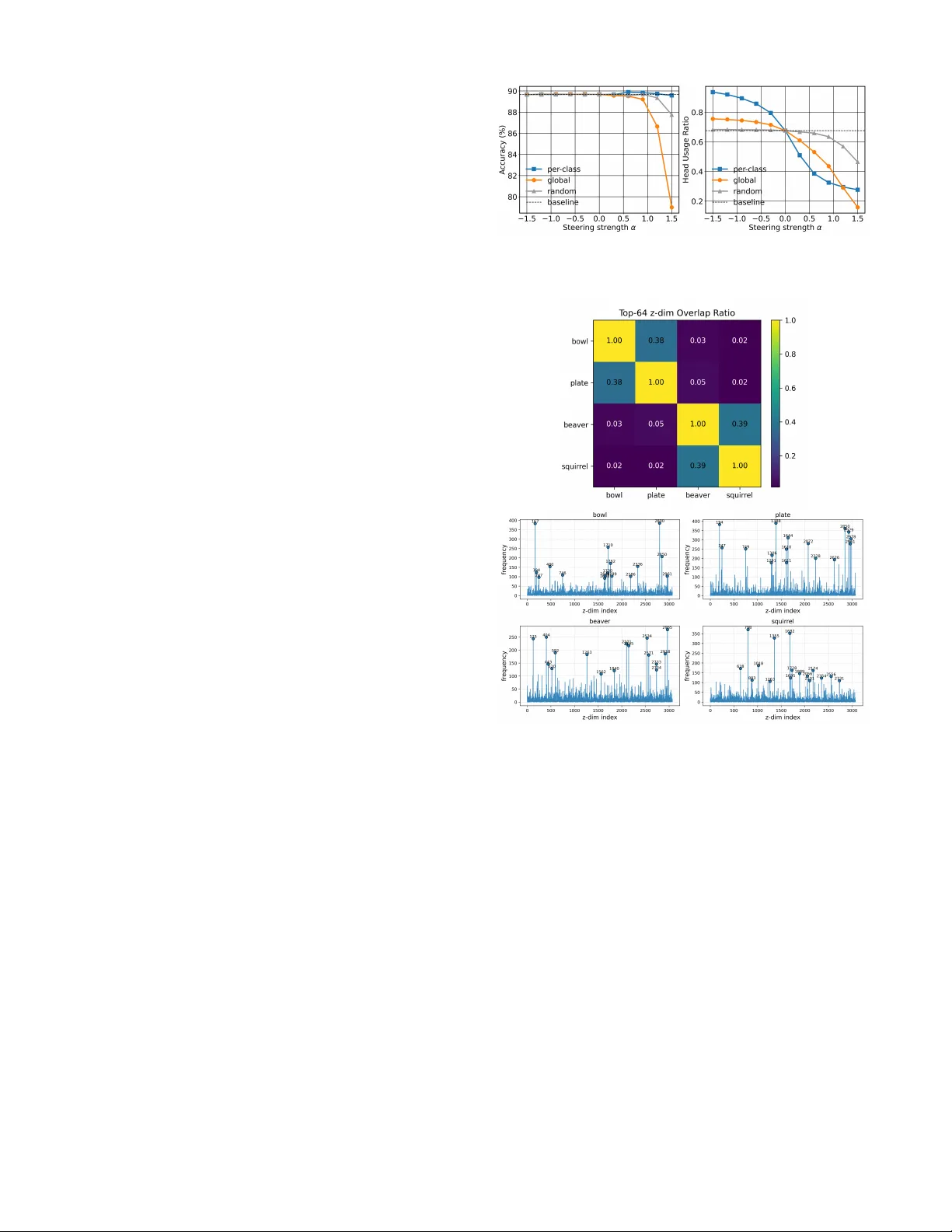

Steering Sparse A utoencoder Latents to Contr ol Dynamic Head Pruning in V ision T ransf ormers (Student Abstract) Y ousung Lee 1 , Dongsoo Har 1 1 K orea Adv anced Institute of Science and T echnology , Daejeon 34051, K orea yslee410@kaist.ac.kr , dshar@kaist.ac.kr Abstract Dynamic head pruning in V ision T ransformers (V iTs) im- prov es ef ficiency by remo ving redundant attention heads, b ut existing pruning policies are often difficult to interpret and control. In this work, we propose a novel frame work by inte- grating Sparse Autoencoders (SAEs) with dynamic pruning, lev eraging their ability to disentangle dense embeddings into interpretable and controllable sparse latents. Specifically , we train an SAE on the final-layer residual embedding of the V iT and amplify the sparse latents with different strategies to al- ter pruning decisions. Among them, per -class steering re veals compact, class-specific head subsets that preserve accurac y . For e xample, bowl improv es accuracy (76% → 82%) while re- ducing head usage (0.72 → 0.33) via heads h 2 and h 5 . These results show that sparse latent features enable class-specific control of dynamic pruning, effecti vely bridging pruning ef- ficiency and mechanistic interpretability in V iTs. Introduction V ision T ransformers (V iTs) le verage multi-head self- attention to capture di verse token interactions. Ho wever , many attention heads are redundant, increasing computa- tion without proportional performance gain. T o address this, adaptiv e frame works such as AdaV iT (Meng et al. 2022) hav e been introduced, which use auxiliary networks to select which heads to prune. This input-dependent pruning sub- stantially reduces computation while preserving accuracy . Howe ver , a key limitation remains: since these pruning policies rely on residual embeddings, the decision process is often opaque and dif ficult to control at the latent le vel. As a result, while dynamic head pruning impro ves efficiency , its mechanism lacks interpretability . If pruning decisions could be explained or controlled at the latent lev el, head selection would become both interpretable and controllable. Sparse Autoencoders (SAEs) of fer a natural tool for this purpose, as they disentangle dense, polysemantic embed- dings into sparse latent features that tend to encode more monosemantic and interpretable concepts in transformer representations (Cunningham et al. 2023; Lim et al. 2025). Recent studies have shown that this disentanglement enables steering specific SAE latent dimensions to control model be- Copyright © 2026, Association for the Adv ancement of Artificial Intelligence (www .aaai.org). All rights reserved. Figure 1: Comparison of dynamic head pruning before (left) and after (right) SAE latent steering, where x denotes the CLS tok en from the final-layer residual stream input, and ˜ x denotes the steered embedding. havior in a desired direction (Kang, W ang, and Xiong 2024; Chatzoudis et al. 2025). In this work, we propose a novel frame work that in- tegrates k -sparse autoencoders (Makhzani and Frey 2013) with dynamic head pruning in V iTs to make pruning deci- sions controllable at the latent lev el. This framew ork also en- hances interpretability by revealing class-specific head sub- sets. As illustrated in Figure 1, we amplify selected SAE latent dimensions, reconstruct the steered embedding, and feed it into the decision network to observe how pruning behavior changes. Our experiments show that per-class la- tent steering is particularly ef fecti v e, reducing head usage while largely maintaining accuracy . Overall, these results suggest that sparse latents pro vide an effecti v e way to inter- pret and control dynamic pruning, bridging the gap between efficienc y and mechanistic interpretability in V iTs. Method In this work, we adopt AdaV iT as the baseline dynamic pruning framew ork, with a particular focus on head prun- ing. W e first train a V ision T ransformer with layer-wise de- cision netw orks. In this setup, each lightweight network re- ceiv es the class ( CLS ) token from the residual stream in- put and outputs head importance logits a ℓ ∈ R H , where ℓ denotes the layer index and H the number of attention heads. W e use the CLS token as input for the decision net- work since it encodes global context rele v ant for classifica- tion. Binary masks M ℓ,i ∈ { 0 , 1 } are obtained via Gumbel- Sigmoid sampling from a ℓ,i , where i denotes the head index. The masked attention is computed as follo ws: h ℓ,i = M ℓ,i A ttn( Q, K, V ) ℓ,i . (1) The V ision T ransformer and the decision network are trained jointly to preserve accuracy while enforcing head sparsity to ward a target head usage ratio. After training, we extract x ∈ R d , the CLS token from the final layer’ s residual input and use it to train a k -sparse autoencoder . Formally , the encoder and decoder are giv en by z = T opK( W enc ( x − b dec )) , W enc ∈ R n × d , (2) ˆ x = W dec z + b dec , W dec ∈ R d × n , (3) where z is the sparse latent representation of x , ˆ x is its recon- struction, and b dec denotes the decoder bias. The parameters n and d represent the SAE latent and input embedding di- mensions, respectiv ely . Sparsity is enforced through a top- k activ ation, which preserves only the k largest dimensions. The SAE is trained with the following mean squared error (MSE) reconstruction objectiv e: L rec = ∥ x − ˆ x ∥ 2 2 , (4) which encourages ˆ x to remain close to x . After training the SAE, we amplify the selected latent dimensions S by z ′ i = ( z i + α, i ∈ S, z i , i / ∈ S, ˜ z = T opK( z ′ ) , (5) where α is the amplification strength and ˜ z denotes the steered sparse latent vector obtained after amplification and top- k activ ation. The steered embedding is then recon- structed as ˜ x = W dec ˜ z + b dec , which is finally fed into the decision network to obtain the steered pruning mask. Experiments Dynamic pruning baseline. W e fine-tune an ImageNet- pretrained V iT -Small ( 12 layers, 6 heads per layer , 384 - d embeddings) on CIF AR- 100 , reaching 91 . 27% accuracy . Each layer employs a single decision network for head se- lection, as described in the Method section. The final model prunes 30% of heads while maintaining 89 . 79% accuracy . Sparse autoencoder . The SAE expands the 384 -d residual embedding into the 3072 -d latent space ( 8 × ) with top- k ac- tiv ation ( k = 64 ). It is trained for 100 epochs, achie ving an MSE loss of 0 . 0228 . Replacing the original embedding with its reconstruction yields only minor differences in accuracy ( − 0 . 12% ) and head usage ratio ( +0 . 025 ), sho wing that the SAE preserves essential information for ef fecti v e pruning. Steering dynamic pruning. Inspired by the top- k mask- ing experiments in PatchSAE (Lim et al. 2025), we adapt this idea to dynamic head pruning using the latent steer - ing defined in Eq. (5), which amplifies selected SAE latent dimensions. For each sample, we ev aluate three strate gies for defining the index set S based on training-set activ ation frequency statistics: (1) Per -class frequent — top- k most frequently acti v ated latents within each class, (2) Global Figure 2: Accuracy (%) and head usage ratio in the final layer under different strate gies as α increases from 0 to 1 . 5 . Figure 3: Effect of per-class steering ( α = 1 . 2 ) on head ac- tiv ation patterns. Columns indicate the top- 5 classes ranked by accuracy gain, and ro ws correspond to heads ( h 0 – h 5 ). frequent — top- k most frequently activ ated latents across all classes, and (3) Random . Figure 2 shows that per-class steering reduces head usage while largely preserving accu- racy , whereas global and random strate gies lead to larger ac- curacy drops as α increases. The low overlap between global and per-class top- k frequent latent dimensions ( 0 . 1641 ) in- dicates that the SAE captures class-discriminativ e concepts. Figure 3 further illustrates head usage patterns in the fi- nal layer under per-class steering. For bowl , accuracy rises ( 76% → 82% ) while head usage falls ( 0 . 72 → 0 . 33 ), mainly relying on h 2 and h 5 . pine tr ee shows a similar pattern ( 79% → 84% , 0 . 93 → 0 . 35 ), relying on h 2 and h 3 . In- terestingly , semantically related classes such as bowl and plate share similar head subsets h 2 and h 5 , indicating that per-class steering reveals class-lev el semantic relationships among heads. These e xamples suggest that amplifying per- class top- k frequent activ ations enriches class-specific sig- nals in the decision netw ork input, leading to class-specific pruning behaviors. Overall, these results demonstrate that the Sparse Autoencoder provides an effecti ve way to inter- pret and control dynamic head pruning in V iTs. Conclusion This paper introduces a no vel Sparse Autoencoder-based framew ork that makes dynamic head pruning interpretable and controllable at the latent le v el in V ision T ransform- ers. By amplifying per-class frequent activ ations, we rev eal class-specific pruning behaviors that are both efficient and interpretable. Our current work focuses on the final layer and small datasets, and future work will extend the framework to earlier layers and foundation models. Acknowledgments This work was supported by the T echnology Innov ation Pro- gram (RS-2025-02613131) funded by the Ministry of T rade, Industry & Energy (MO TIE, Korea). References Chatzoudis, G.; Li, Z.; Moran, G. E.; W ang, H.; and Metaxas, D. N. 2025. V isual Sparse Steering: Improving Zero-shot Image Classification with Sparsity Guided Steer - ing V ectors. arXiv preprint . Cunningham, H.; Ewart, A.; Riggs, L.; Huben, R.; and Sharkey , L. 2023. Sparse autoencoders find highly in- terpretable features in language models. arXiv pr eprint arXiv:2309.08600 . Kang, H.; W ang, T .; and Xiong, C. 2024. Interpret and con- trol dense retriev al with sparse latent features. arXiv pr eprint arXiv:2411.00786 . Lim, H.; Choi, J.; Choo, J.; and Schneider , S. 2025. Sparse Autoencoders Rev eal Selectiv e Remapping of V isual Con- cepts during Adaptation. In Pr oceedings of the 13th Inter- national Confer ence on Learning Repr esentations (ICLR) . Makhzani, A.; and Frey , B. 2013. K-sparse autoencoders. arXiv pr eprint arXiv:1312.5663 . Meng, L.; Li, H.; Chen, B.-C.; Lan, S.; W u, Z.; Jiang, Y .- G.; and Lim, S.-N. 2022. Adavit: Adaptive vision trans- formers for ef ficient image recognition. In Pr oceedings of the IEEE/CVF conference on computer vision and pattern r ecognition , 12309–12318. A ppendix: Additional Analysis of SAE Latents W e provide additional analysis of SAE latents, focusing on those extracted from the final-layer residual embedding, to study their role in controlling dynamic head pruning. W e examine (1) the effect of negati ve steering, (2) reconstruc- tion behavior , and (3) the semantic structure of SAE latents. These results indicate that SAE latents disentangle class- specific information into sparse dimensions, which are re- flected in pruning decisions in the final layer . Effect of Negativ e Steering W e observe that negati ve steering ( α < 0 ) increases head usage primarily for the per-class strategy , while global and random strategies show minimal change in head usage. In contrast, positiv e steering ( α > 0 ) reduces head usage. This suggests that negati v e steering suppresses class-specific la- tent features, weakening the pruning signal in the residual embedding, while positi ve steering amplifies them and leads to more selective head activ ation. As a result, this change modifies the input to the decision netw ork, leading to dif fer - ent head activ ation patterns. Reconstruction Effect T o isolate the effect of latent steering, we replace the original residual embedding with its SAE reconstruction. Replacing the original residual embedding with its SAE reconstruction results in minimal changes in head usage (a verage < 0 . 03 ) Figure 4: Negativ e steering ( α < 0 ) increases head usage by suppressing pruning signals. Figure 5: Semantic structure of SAE latents, sho wing class- wise ov erlap and distinct latent usage patterns. and accuracy ( − 0 . 12% ), suggesting that SAE reconstruction preserves pruning-rele v ant information. These results sho w that the observed ef fects are primarily due to latent steering rather than reconstruction artifacts. Semantic Structure of SAE Latents As shown in Figure 5, semantically similar object classes such as bowl and plate exhibit high latent overlap ( ≈ 0 . 38 ), while unrelated classes show near-zero overlap. A similar pattern is observed for beaver and squirr el ( ≈ 0 . 39 ). The figure also shows that each class relies on distinct sparse la- tent dimensions. These results demonstrate that SAE latents encode class-specific semantic structure, which is reflected in pruning decisions in the final layer .

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment