Unleashing the Potential of All Test Samples: Mean-Shift Guided Test-Time Adaptation

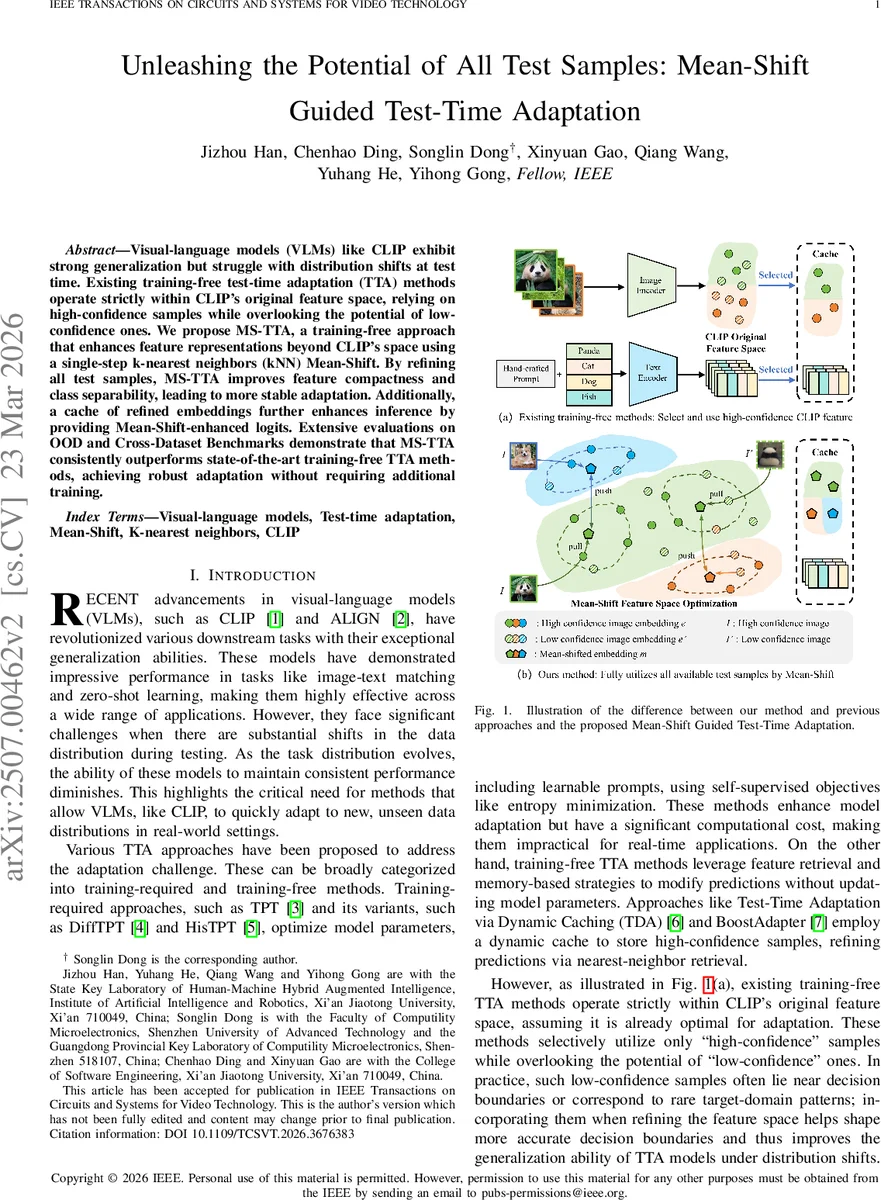

Visual-language models (VLMs) like CLIP exhibit strong generalization but struggle with distribution shifts at test time. Existing training-free test-time adaptation (TTA) methods operate strictly within CLIP’s original feature space, relying on high-confidence samples while overlooking the potential of low-confidence ones. We propose MS-TTA, a training-free approach that enhances feature representations beyond CLIP’s space using a single-step k-nearest neighbors (kNN) Mean-Shift. By refining all test samples, MS-TTA improves feature compactness and class separability, leading to more stable adaptation. Additionally, a cache of refined embeddings further enhances inference by providing Mean Shift enhanced logits. Extensive evaluations on OOD and cross-dataset benchmarks demonstrate that MS-TTA consistently outperforms state-of-the-art training-free TTA methods, achieving robust adaptation without requiring additional training.

💡 Research Summary

**

The paper addresses the vulnerability of large vision‑language models (VLMs) such as CLIP when faced with distribution shifts at test time. While CLIP’s zero‑shot capabilities are strong, existing test‑time adaptation (TTA) methods either require costly parameter updates or, in the training‑free category, rely exclusively on high‑confidence samples and remain confined to CLIP’s original feature space. This creates a performance ceiling and discards potentially informative low‑confidence examples that often lie near decision boundaries or represent rare target‑domain patterns.

To overcome these limitations, the authors propose MS‑TTA (Mean‑Shift Guided Test‑Time Adaptation), a completely training‑free framework that refines all test samples using a single‑step k‑nearest‑neighbors (kNN) mean‑shift operation. For each incoming image, the CLIP visual encoder produces an initial embedding f_test. The method retrieves the k nearest embeddings from the pool of already observed test samples, computes a weighted mean using a distance‑based kernel φ, and replaces f_test with this mean‑shifted vector f̂_test. By moving every sample toward locally dense regions, intra‑class compactness is increased and inter‑class separability is enhanced, even for samples that originally had low confidence.

Refined embeddings are stored in a dynamic cache of limited size Q per class. The cache maintains the lowest‑entropy (most confident) samples; new samples replace the highest‑entropy entries when necessary. During inference, the similarity between a query embedding and cached embeddings is used to compute a cache logit term (a weighted sum of one‑hot pseudo‑labels). The final prediction is obtained by simply adding this cache logit to the original CLIP logit: logits_final = logits_CLIP + logits_cache. Because the cache logit is derived from mean‑shift‑refined features, it complements CLIP’s zero‑shot predictions with an adaptation signal that does not require any gradient updates.

Extensive experiments on a suite of out‑of‑distribution (OOD) benchmarks (ImageNet‑R, ImageNet‑A, ImageNet‑Sketch) and cross‑dataset transfer sets (DomainNet, Office‑Home) demonstrate that MS‑TTA consistently outperforms state‑of‑the‑art training‑free methods such as TD‑A, BoostAdapter, and BCA. Gains range from 2.3 % to 4.1 % absolute accuracy improvement, with the most pronounced benefits on domains where low‑confidence samples are abundant. Computationally, the approach adds only a single kNN lookup and one mean‑shift computation per sample, incurring roughly 5–7 ms of extra latency per batch, making it suitable for real‑time applications.

In summary, the contributions are threefold: (1) introducing a mean‑shift‑based feature refinement that transcends CLIP’s fixed embedding space, (2) leveraging both high‑ and low‑confidence test samples to break the reliance on pseudo‑label quality, and (3) integrating a lightweight dynamic cache that produces mean‑shift‑enhanced logits, achieving robust, training‑free adaptation across diverse distribution shifts. Future work may explore multi‑step mean‑shift, extending the technique to text embeddings, and applying the framework to video or sequential data streams.

Comments & Academic Discussion

Loading comments...

Leave a Comment