3DSceneEditor: Controllable 3D Scene Editing with Gaussian Splatting

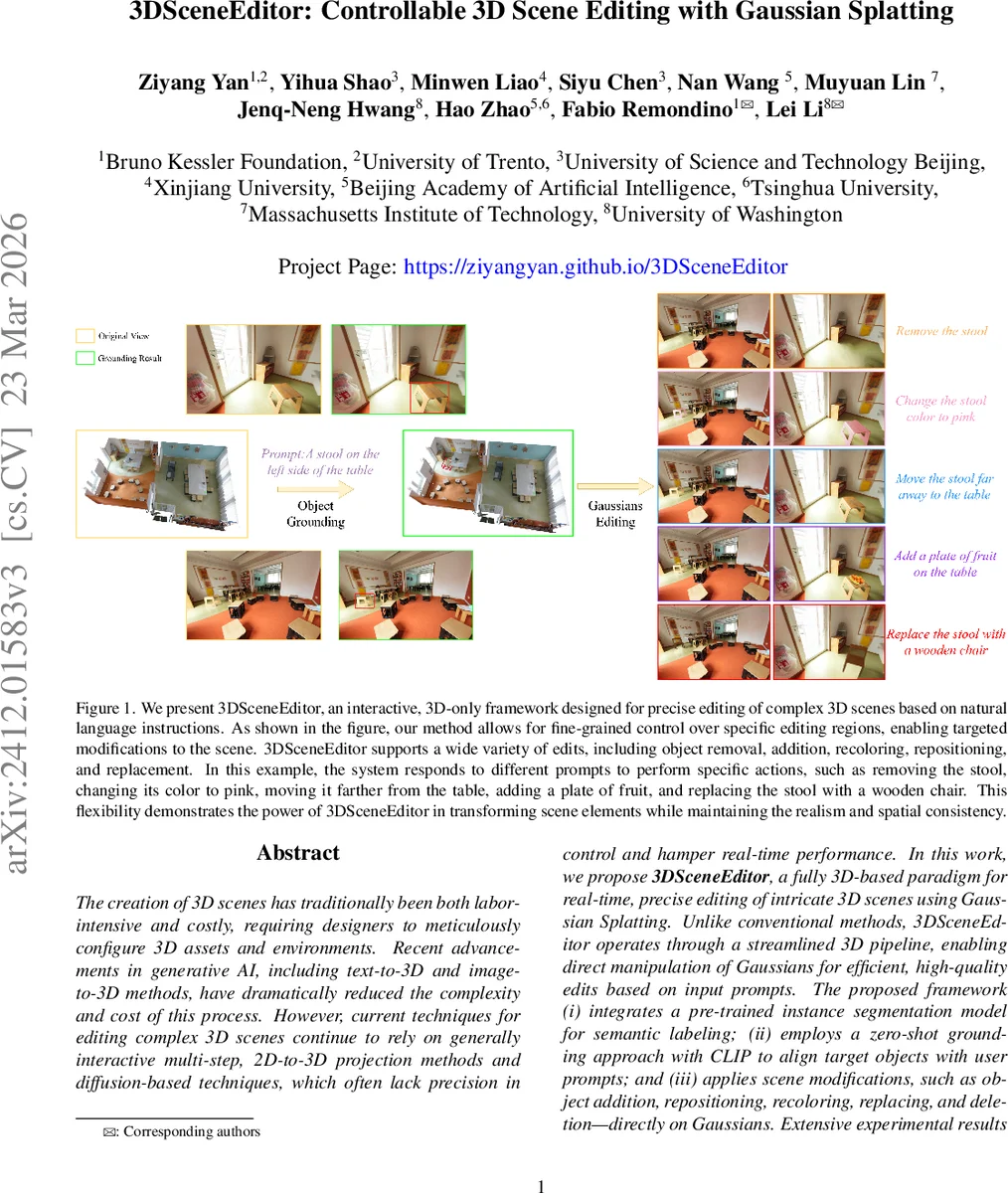

The creation of 3D scenes has traditionally been both labor-intensive and costly, requiring designers to meticulously configure 3D assets and environments. Recent advancements in generative AI, including text-to-3D and image-to-3D methods, have dramatically reduced the complexity and cost of this process. However, current techniques for editing complex 3D scenes continue to rely on generally interactive multi-step, 2D-to-3D projection methods and diffusion-based techniques, which often lack precision in control and hamper interactive-rate performance. In this work, we propose 3DSceneEditor, a fully 3D-based paradigm for interactive-rate, precise editing of intricate 3D scenes using Gaussian Splatting. Unlike conventional methods, 3DSceneEditor operates through a streamlined 3D pipeline, enabling direct Gaussian-based manipulation for efficient, high-quality edits based on input prompts. The proposed framework (i) integrates a pre-trained instance segmentation model for semantic labeling; (ii) employs a zero-shot grounding approach with CLIP to align target objects with user prompts; and (iii) applies scene modifications, such as object addition, repositioning, recoloring, replacing, and removal–directly on Gaussians. Extensive experimental results show that 3DSceneEditor surpasses existing state-of-the-art techniques in terms of both editing precision and efficiency, establishing a new benchmark for efficient and interactive 3D scene customization.

💡 Research Summary

3DSceneEditor introduces a fully 3‑D pipeline for interactive, precise editing of complex scenes using Gaussian Splatting. Traditional 3‑D editing methods either rely on implicit NeRF representations, which are difficult to edit at the level of individual objects and demand heavy computation, or they use Gaussian‑based pipelines that still depend on 2‑D diffusion models or 2‑D segmentation masks projected into 3‑D space. Both approaches suffer from limited controllability, high latency, and cumbersome multi‑step workflows.

The proposed system eliminates the 2‑D‑3‑D projection step entirely. First, a pre‑trained 3‑D instance segmentation model (Mask3D) assigns semantic labels to each Gaussian in the scene. To correct possible mis‑segmentations, a K‑Nearest‑Neighbors clustering step re‑labels outliers before any editing occurs.

Next, an Open‑Vocabulary Object Grounding module processes the user’s natural‑language prompt. Keywords are extracted and categorized into operation, target object, and spatial relation. A view‑dependent egocentric projection simulates a camera at the scene centre, allowing spatial relationships (e.g., “left of”, “between”) to be interpreted on a 2‑D plane. CLIP is then used to compute cosine similarity between the prompt and image embeddings of candidate objects, selecting the most relevant 3‑D bounding box as the Region of Interest (ROI).

Within the ROI, five editing actions are supported:

- Object removal – Gaussians belonging to the target are deleted; any resulting background holes are filled by K‑NN‑based inpainting.

- Re‑colorization – A predefined color‑mapping table translates color words from the prompt into RGB values, which replace the color attributes of the selected Gaussians.

- Object addition – A Gaussian‑based generative model synthesizes new objects from text or reference images. The new Gaussians are scaled to match a reference object, aligned by matching the central axes of their bounding boxes, and stitched into the scene using geometry‑based stitching, which reduces artifacts common in diffusion‑prior methods.

- Object replacement – Combines the addition pipeline with deletion of the original object, effectively swapping one set of Gaussians for another.

- Object movement – Small translations of Gaussian coordinates are applied based on directional cues (“farther”, “closer”, “left”). Because moving many Gaussians can disturb ray‑space rendering, the current implementation limits movement to modest distances for small objects.

The entire pipeline—from segmentation through grounding to editing—runs in tens of seconds on a single GPU, enabling real‑time interaction.

Extensive experiments on indoor datasets such as ScanNet and ScanNet++ demonstrate that 3DSceneEditor outperforms state‑of‑the‑art methods (Instruct‑GS2GS, FlashSplat, GaussianEditor) in editing precision (higher PSNR/SSIM, lower LPIPS), processing speed (5× faster), and GPU memory consumption (≈30 % reduction). Ablation studies confirm the importance of K‑NN label refinement and CLIP‑based grounding for achieving high‑quality results.

Limitations include the restriction to small‑object movements, potential quality degradation when many Gaussians are moved simultaneously, and dependence on the generative model’s ability to produce realistic new objects. Future work will explore global optimization for large‑scale object relocation, integration of physics‑based collision handling, and more advanced 3‑D generative models to broaden the range of editable content.

Overall, 3DSceneEditor marks a significant step toward truly interactive, controllable 3‑D scene editing by leveraging the explicit, manipulable nature of Gaussian Splatting and bridging language understanding with 3‑D geometry in a single, efficient pipeline.

Comments & Academic Discussion

Loading comments...

Leave a Comment