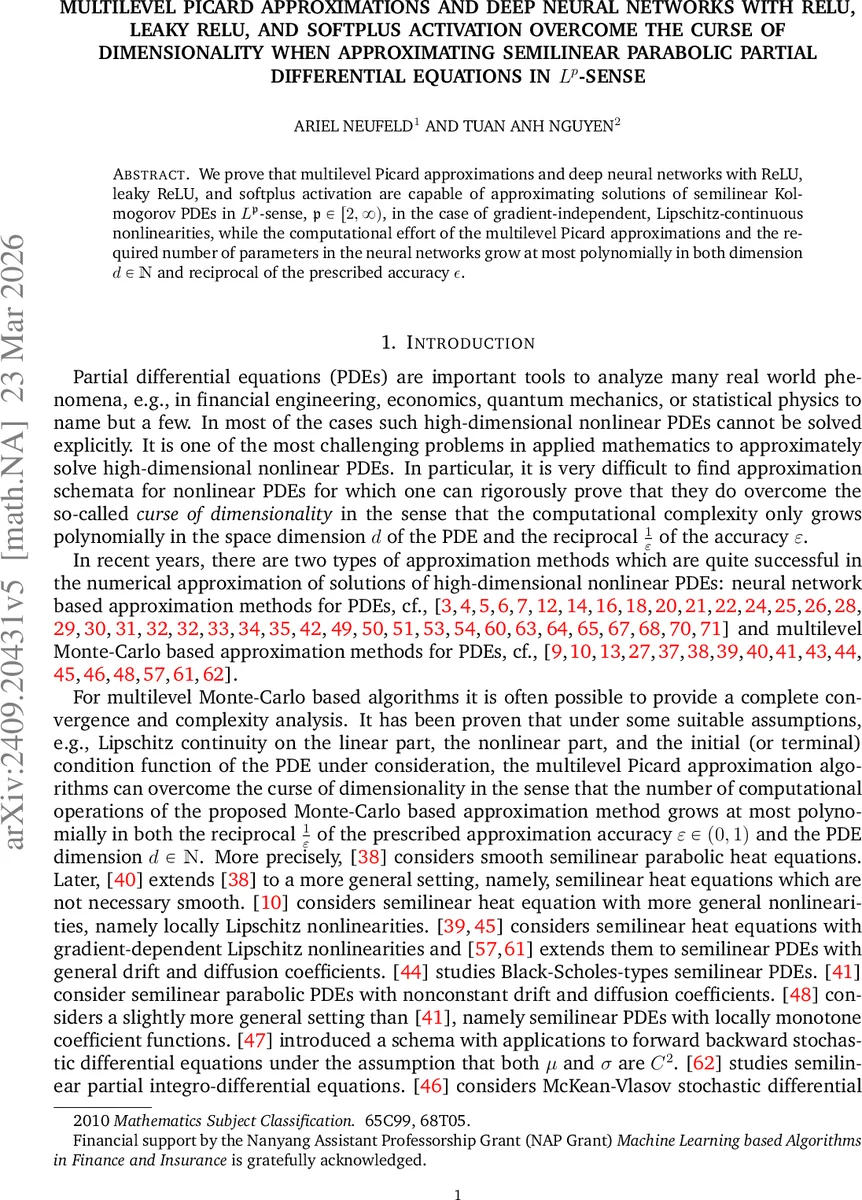

Multilevel Picard approximations and deep neural networks with ReLU, leaky ReLU, and softplus activation overcome the curse of dimensionality when approximating semilinear parabolic partial differential equations in $L^p$-sense

We prove that multilevel Picard approximations and deep neural networks with ReLU, leaky ReLU, and softplus activation are capable of approximating solutions of semilinear Kolmogorov PDEs in $L^\mathfrak{p}$-sense, $\mathfrak{p}\in [2,\infty)$, in the case of gradient-independent, Lipschitz-continuous nonlinearities, while the computational effort of the multilevel Picard approximations and the required number of parameters in the neural networks grow at most polynomially in both the dimension $d\in \mathbb{N}$ and the reciprocal of the prescribed accuracy $\varepsilon$.

💡 Research Summary

The paper addresses the long‑standing challenge of numerically solving high‑dimensional semilinear parabolic partial differential equations (PDEs) without suffering from the curse of dimensionality. It establishes rigorous error and complexity bounds for two families of algorithms: multilevel Picard (MLP) approximations and deep neural networks (DNNs) equipped with ReLU, leaky ReLU, or softplus activation functions.
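For orientation, the three activation functions named above can be written out in a few lines, together with a parameter count for a fully connected network, the quantity whose growth the paper bounds. This is a minimal sketch and not the authors' construction: the leaky ReLU slope `0.01` is an assumed default, and `count_parameters` is a hypothetical helper that assumes a plain dense architecture with one weight matrix and one bias vector per layer.

```python
import math


def relu(x: float) -> float:
    """ReLU: max(x, 0)."""
    return max(0.0, x)


def leaky_relu(x: float, slope: float = 0.01) -> float:
    """Leaky ReLU: x for x >= 0, slope * x otherwise.

    The default slope is an illustrative assumption, not a value from the paper.
    """
    return x if x >= 0.0 else slope * x


def softplus(x: float) -> float:
    """Softplus: log(1 + exp(x)), evaluated in a numerically stable form."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def count_parameters(layer_widths: list[int]) -> int:
    """Number of weights and biases in a dense network.

    layer_widths lists the input width, the hidden widths, and the output width.
    (Hypothetical helper; assumes one weight matrix and one bias per layer.)
    """
    return sum(
        n_in * n_out + n_out
        for n_in, n_out in zip(layer_widths, layer_widths[1:])
    )


if __name__ == "__main__":
    for x in (-2.0, 0.0, 3.0):
        print(x, relu(x), leaky_relu(x), softplus(x))
    # A network mapping R^10 -> R with one hidden layer of width 20.
    print(count_parameters([10, 20, 1]))
```

In this picture, overcoming the curse of dimensionality means that the value returned by `count_parameters` for an approximating network can be bounded by a polynomial in $d$ and $1/\varepsilon$, rather than growing exponentially in either.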

Problem setting.

The authors consider a class of semilinear Kolmogorov PDEs of the form

\
