PROMPT2BOX: Uncovering Entailment Structure among LLM Prompts

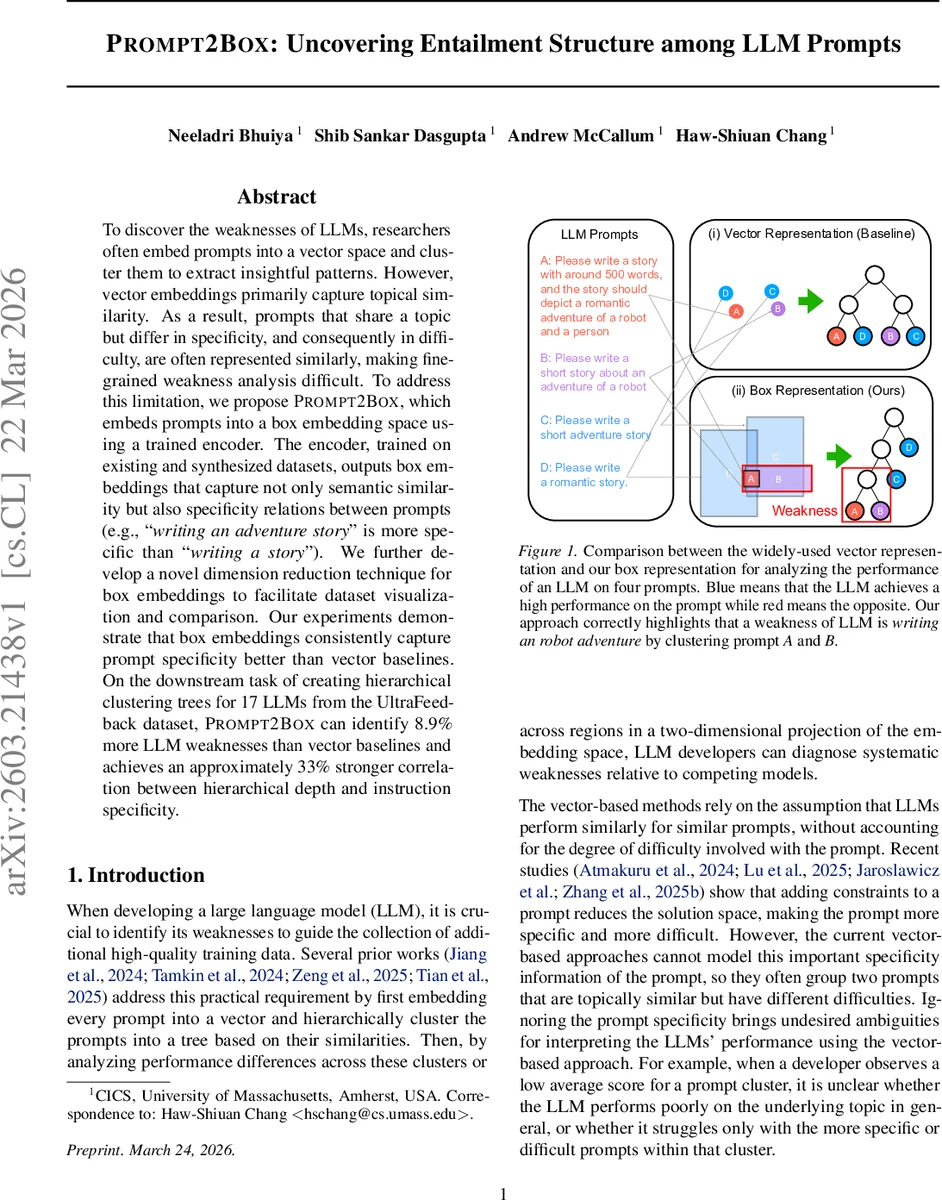

To discover the weaknesses of LLMs, researchers often embed prompts into a vector space and cluster them to extract insightful patterns. However, vector embeddings primarily capture topical similarity. As a result, prompts that share a topic but differ in specificity, and consequently in difficulty, are often represented similarly, making fine-grained weakness analysis difficult. To address this limitation, we propose PROMPT2BOX, which embeds prompts into a box embedding space using a trained encoder. The encoder, trained on existing and synthesized datasets, outputs box embeddings that capture not only semantic similarity but also specificity relations between prompts (e.g., “writing an adventure story” is more specific than “writing a story”). We further develop a novel dimension reduction technique for box embeddings to facilitate dataset visualization and comparison. Our experiments demonstrate that box embeddings consistently capture prompt specificity better than vector baselines. On the downstream task of creating hierarchical clustering trees for 17 LLMs from the UltraFeedback dataset, PROMPT2BOX can identify 8.9% more LLM weaknesses than vector baselines and achieves an approximately 33% stronger correlation between hierarchical depth and instruction specificity.

💡 Research Summary

The paper introduces PROMPT2BOX, a novel framework for analyzing large language model (LLM) weaknesses by embedding prompts into a box‑embedding space rather than the conventional vector space. The authors argue that vector embeddings capture only symmetric semantic similarity, which fails to distinguish between prompts that share a topic but differ in specificity and difficulty. This limitation hampers fine‑grained weakness detection because a cluster may contain both easy, general prompts and hard, specific ones, obscuring the true source of performance degradation.

Box embeddings, originally proposed for modeling asymmetric relations such as entailment, represent each prompt as an axis‑aligned hyper‑rectangle defined by a center vector (semantic location) and a width vector (semantic scope). Larger boxes correspond to more general prompts, while smaller boxes encode more specific prompts. Containment of one box within another directly models entailment: if Box(A) ⊆ Box(B), then prompt A entails prompt B (A is more specific). The authors leverage this property to capture prompt specificity and to interpret a box as the space of valid responses.

To train a prompt‑to‑box encoder, the authors assemble a large entailment dataset from three sources: (1) semantic relevance pairs from the Infinity Instruct instruction‑response collection, (2) sentence‑level entailment data from MultiNLI, and (3) synthetically generated prompt pairs that encode hierarchical constraints (e.g., “write a story” vs. “write a short story about a robot”). They adopt a contrastive learning objective that simultaneously maximizes intersection volume for similar prompts and enforces containment for entailment pairs. Because box intersection and containment involve non‑differentiable min/max operations, they employ the Gumbel‑Box formulation, which replaces hard interval endpoints with Gumbel‑distributed random variables, yielding smooth differentiable approximations. The encoder consists of a frozen Sentence‑Transformer followed by two MLP heads that output the center and width vectors.

Beyond the encoder, the paper proposes two methodological contributions: (a) Box‑SNE, a dimensionality‑reduction technique that preserves box‑specific geometry while projecting high‑dimensional boxes into 2‑D for visualization, and (b) a hierarchical clustering algorithm that respects box containment, producing trees where depth correlates with prompt specificity.

Experiments are conducted on the UltraFeedback benchmark, which contains performance scores for 17 LLMs across thousands of prompts. The authors map each prompt to a box, compute pairwise intersection volumes, and build hierarchical trees using their box‑aware clustering. They evaluate three metrics: (i) entailment prediction accuracy (precision/recall) against held‑out entailment pairs, (ii) the number of distinct weaknesses discovered (i.e., clusters where a model’s average score is low), and (iii) the Pearson correlation between tree depth and a manually annotated specificity score. Results show that PROMPT2BOX outperforms strong vector baselines (e.g., sentence‑transformer embeddings with cosine similarity) by 10–15 percentage points on entailment prediction, discovers 8.9 % more fine‑grained weaknesses, and achieves a 0.33 correlation between depth and specificity versus ~0.25 for vectors. Visualizations illustrate that specific prompts form tight, nested boxes inside broader ones, allowing analysts to pinpoint exactly which constrained sub‑tasks a model struggles with.

The paper’s contributions are significant for several reasons. First, it provides a principled way to model asymmetric relationships (specificity, constraint inclusion) that are central to instruction following but invisible to symmetric similarity measures. Second, by interpreting box volume as the space of valid responses, the framework links prompt difficulty to the size of the solution space, offering a theoretical explanation for observed performance drops as constraints increase. Third, the approach is readily applicable to existing LLM evaluation pipelines (e.g., Clio, SkillVerse, EvalTree), potentially improving their diagnostic power.

Future directions include extending the box representation to capture uncertainty (e.g., modeling width as a distribution), handling multi‑modal prompts (image‑text), and integrating box‑based similarity into active data collection for targeted fine‑tuning. Overall, PROMPT2BOX demonstrates that region‑based embeddings can substantially enhance our ability to dissect and improve LLM behavior.

Comments & Academic Discussion

Loading comments...

Leave a Comment