A Stable Neural Statistical Dependence Estimator for Autoencoder Feature Analysis

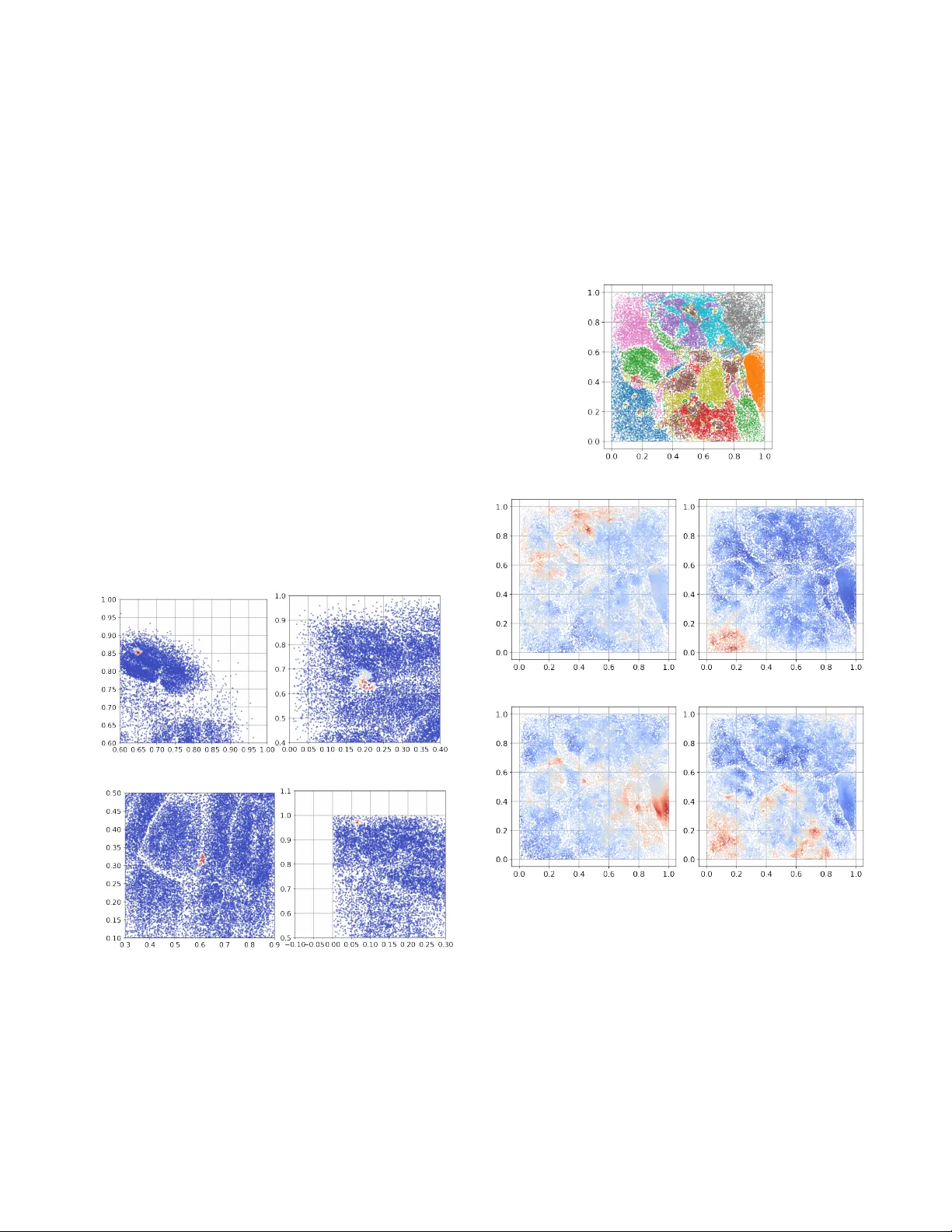

Statistical dependence measures such as mutual information are ideal for analyzing autoencoders, but they can be ill-posed for deterministic, static, noise-free networks. We adopt the variational (Gaussian) formulation that makes dependence among inputs, l…

Authors: Bo Hu, Jose C Principe