Functional Estimation of Manifold-Valued Diffusion Processes

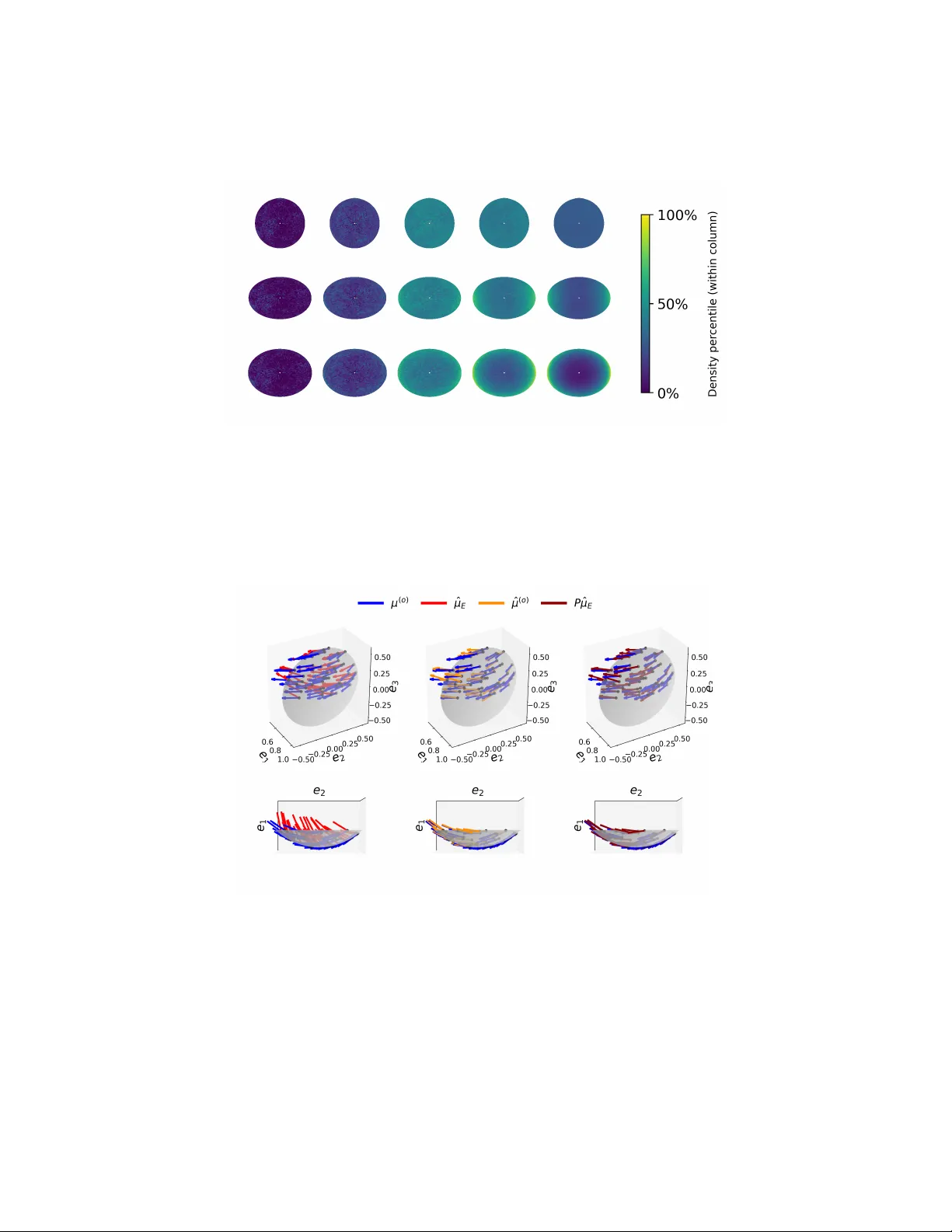

Nonstationary high-dimensional time series are increasingly encountered in biomedical research as measurement technologies advance. Owing to the homeostatic nature of physiological systems, such datasets are often located on, or can be well approxima…

Authors: Jacob McErlean, Hau-Tieng Wu