The Nature of Technical Debt in Research Software

Research software (also called scientific software) is essential for advancing scientific endeavours. Research software encapsulates complex algorithms and domain-specific knowledge and is a fundamental component of all science. A pervasive challenge…

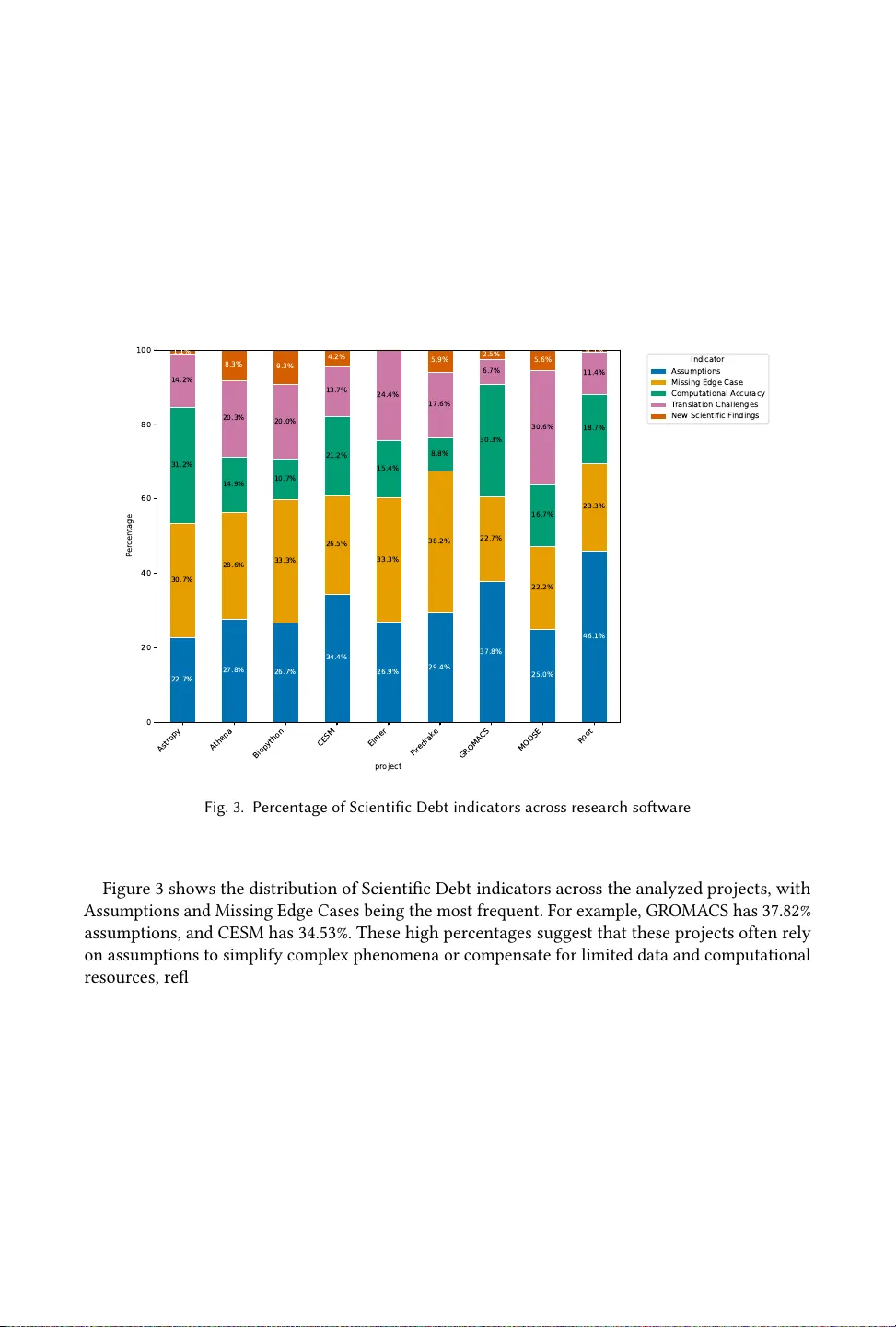

Authors: Neil A. Ernst, Ahmed Musa Awon, Swapnil Hingmire