Reasoning Gets Harder for LLMs Inside A Dialogue

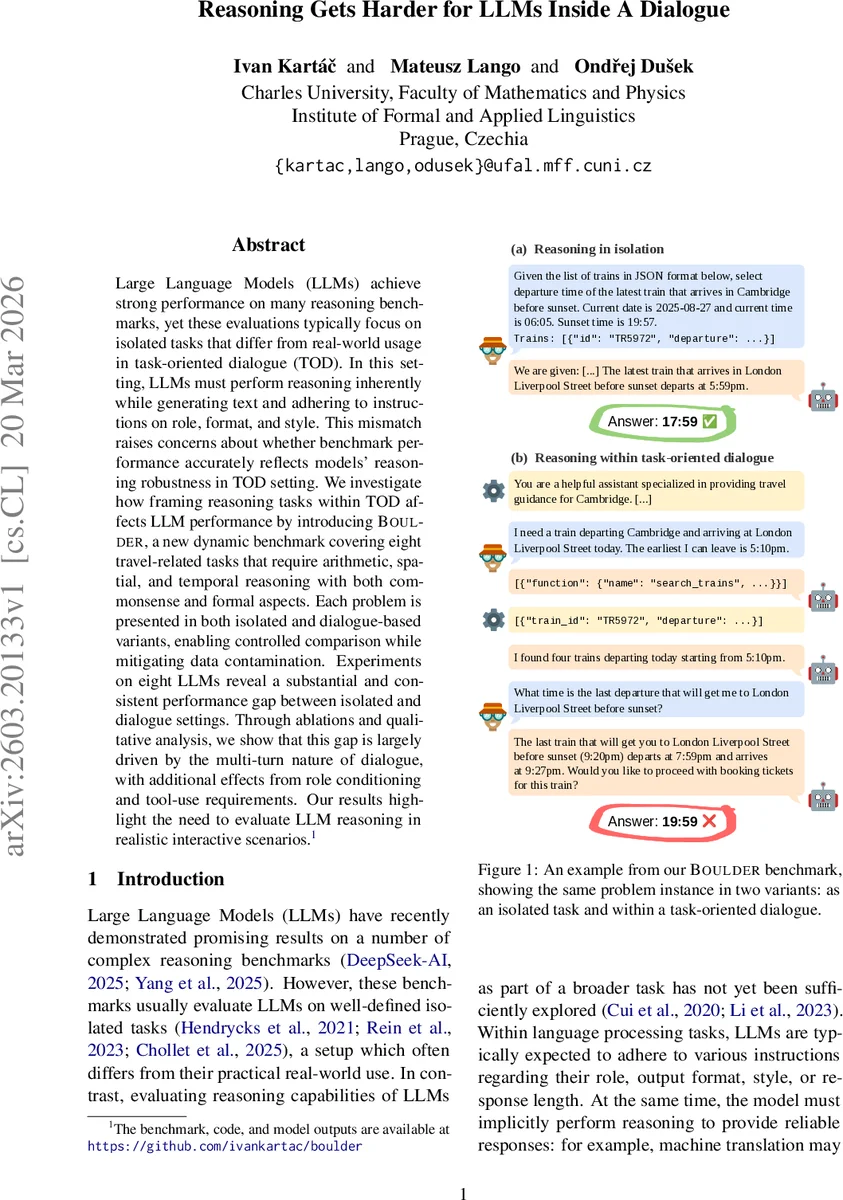

Large Language Models (LLMs) achieve strong performance on many reasoning benchmarks, yet these evaluations typically focus on isolated tasks that differ from real-world usage in task-oriented dialogue (TOD). In this setting, LLMs must perform reasoning inherently while generating text and adhering to instructions on role, format, and style. This mismatch raises concerns about whether benchmark performance accurately reflects models’ reasoning robustness in TOD setting. We investigate how framing reasoning tasks within TOD affects LLM performance by introducing BOULDER, a new dynamic benchmark covering eight travel-related tasks that require arithmetic, spatial, and temporal reasoning with both commonsense and formal aspects. Each problem is presented in both isolated and dialogue-based variants, enabling controlled comparison while mitigating data contamination. Experiments on eight LLMs reveal a substantial and consistent performance gap between isolated and dialogue settings. Through ablations and qualitative analysis, we show that this gap is largely driven by the multi-turn nature of dialogue, with additional effects from role conditioning and tool-use requirements. Our results highlight the need to evaluate LLM reasoning in realistic interactive scenarios.

💡 Research Summary

The paper investigates a critical gap between the strong reasoning performance of large language models (LLMs) on conventional benchmarks and their actual capabilities when embedded in task‑oriented dialogue (TOD) systems. While existing evaluations focus on isolated tasks—single‑turn prompts with clearly defined inputs and outputs—real‑world dialogue agents must simultaneously follow role‑specific instructions, adhere to output formats, manage multi‑turn context, and sometimes invoke external tools. To quantify how these additional constraints affect reasoning, the authors introduce BOULDER (Benchmarking Usefulness of LLMs in Dialogue‑Embedded Reasoning), a dynamic benchmark built around eight travel‑related tasks that require arithmetic, spatial, and temporal reasoning, both commonsense and formal.

Each task appears in two variants: (a) an isolated version that presents the problem statement and the necessary JSON data in a single prompt, and (b) a dialogue version that embeds the same problem within a fixed multi‑turn conversation. The dialogue variant includes a history of user and assistant messages, tool calls, and tool results, ending with a final user query that the model must answer. By keeping the underlying data identical across variants, the benchmark enables a controlled comparison while avoiding contamination from existing training data.

The eight tasks cover realistic travel scenarios: calculating total ticket price with discounts, computing hotel booking costs with varied room assignments, finding the latest train that arrives before sunset, estimating average train departure intervals, checking restaurant opening hours against a target interval (using Allen’s interval algebra), measuring distance between two venues, determining cardinal direction relations, and solving a miniature traveling‑salesman problem to produce the shortest walking path among attractions. The data are generated dynamically from a slightly modified MultiWOZ database, with random synthesis of new entities and attributes to increase diversity. Conversation templates are paraphrased by an LLM and manually validated, ensuring that the dialogue context remains natural while preserving placeholders for data insertion.

For evaluation, the authors test eight LLMs spanning open‑source (Qwen‑3 4B, Qwen‑3 30B, Mistral‑Small 24B, Llama‑2 13B, etc.) and proprietary models (Claude‑2, GPT‑4, Gemini‑1.5). Performance metrics are task‑specific: accuracy for categorical outputs, mean absolute error (MAE) for numeric predictions, and precision for list‑type answers. Because the models generate free‑form text, the authors employ a set of answer‑extraction parsers built on the Qwen‑3 30B MoE model. Each parser is prompted to locate the required semantic value(s) in the model’s response and return them as JSON. Human validation shows parser reliability above 94 % across tasks.

Results reveal a substantial and consistent performance drop when the same reasoning problem is presented within a dialogue. Across models, the average accuracy loss ranges from 10 to 20 percentage points, with the most pronounced degradation on tasks that combine temporal ordering and tool‑use (e.g., departure‑time and shortest‑path). Ablation studies isolate the contributing factors: removing the multi‑turn history largely restores performance, indicating that maintaining context is a primary challenge. Stripping role‑conditioning prompts yields modest gains, while eliminating tool‑call requirements produces the biggest improvement, suggesting that the dual responsibility of generating natural language and orchestrating tool usage overloads the model’s reasoning pipeline.

Qualitative error analysis uncovers several failure modes: (1) loss of information from earlier turns, leading to incorrect calculations; (2) misinterpretation of role instructions, causing format violations or overly verbose answers; (3) failure to incorporate tool‑provided JSON data, with the model attempting to recompute values instead of reusing the supplied results; and (4) ambiguous phrasing that hampers the parser’s ability to extract the correct answer. These findings underscore that current LLMs, even state‑of‑the‑art ones, are not robust to the combined pressures of dialogue management, role adherence, and tool integration.

The authors argue that benchmark design must evolve to reflect realistic interaction constraints. BOULDER demonstrates that isolated‑task benchmarks can dramatically overestimate a model’s practical utility in conversational agents. Future work should explore training regimes that explicitly teach models to preserve multi‑turn context, to separate reasoning from surface‑level generation, and to handle tool calls as first‑class actions. Moreover, more reliable answer‑extraction mechanisms are needed to reduce evaluation noise in free‑form settings.

In conclusion, the paper makes three key contributions: (1) the creation of a dynamic, verifiable benchmark that juxtaposes isolated and dialogue‑embedded reasoning; (2) a comprehensive empirical study showing a consistent performance gap across a diverse set of LLMs; and (3) an in‑depth analysis pinpointing multi‑turn interaction, role conditioning, and tool‑use as the primary sources of difficulty. These insights provide a roadmap for developing more dialogue‑aware LLMs and for designing evaluation suites that better predict real‑world performance.

Comments & Academic Discussion

Loading comments...

Leave a Comment