A Super Fast K-means for Indexing Vector Embeddings

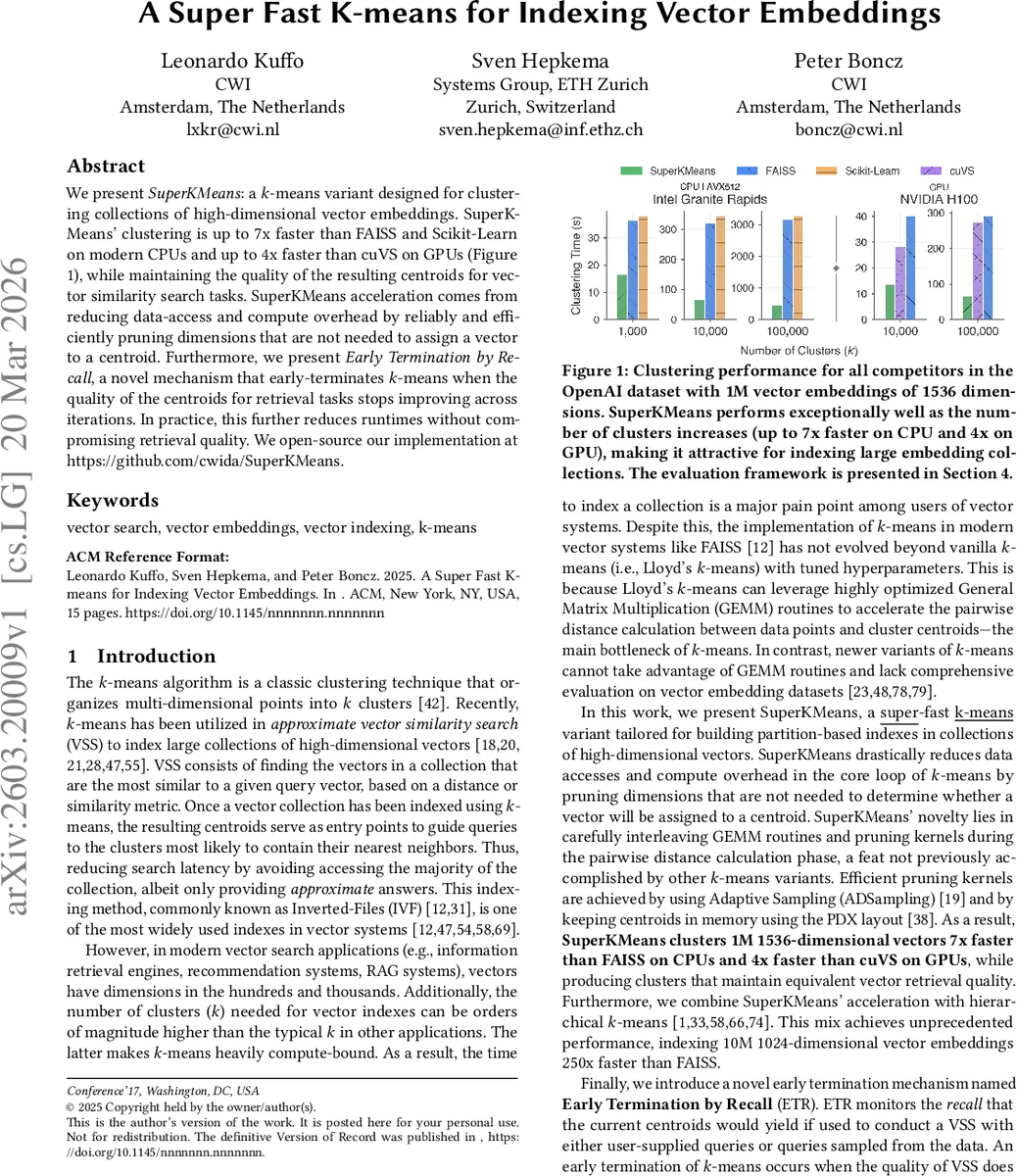

We present SuperKMeans: a k-means variant designed for clustering collections of high-dimensional vector embeddings. SuperKMeans’ clustering is up to 7x faster than FAISS and Scikit-Learn on modern CPUs and up to 4x faster than cuVS on GPUs (Figure 1), while maintaining the quality of the resulting centroids for vector similarity search tasks. SuperKMeans acceleration comes from reducing data-access and compute overhead by reliably and efficiently pruning dimensions that are not needed to assign a vector to a centroid. Furthermore, we present Early Termination by Recall, a novel mechanism that early-terminates k-means when the quality of the centroids for retrieval tasks stops improving across iterations. In practice, this further reduces runtimes without compromising retrieval quality. We open-source our implementation at https://github.com/cwida/SuperKMeans

💡 Research Summary

SuperKMeans is a purpose‑built variant of the classic k‑means clustering algorithm that targets the specific workload of indexing high‑dimensional vector embeddings. The authors identify two fundamental inefficiencies in existing solutions such as FAISS, Scikit‑Learn, and cuVS: (1) the naïve use of a full‑dimensional General Matrix Multiply (GEMM) for every distance computation, and (2) the lack of a termination criterion that directly reflects the downstream retrieval quality. To address these, SuperKMeans introduces a two‑phase iteration. In the GEMM phase, only the leading d′ dimensions (≈12 % of the total dimensionality) are used to compute a partial distance matrix via a highly optimized SGEMM call. In the subsequent Pruning phase, the partial distances are examined to decide whether the remaining trailing d″ dimensions can be safely ignored for a given vector‑centroid pair. This decision relies on Adaptive Sampling (ADS), which guarantees that pruning incurs negligible error while dramatically reducing memory traffic and arithmetic operations. The authors also store centroids in a PDX layout, improving cache locality and enabling efficient multi‑threaded execution across a wide range of CPUs (Intel Granite Rapids, AMD Zen 5/3, Apple M4, AWS Graviton 4).

A second major contribution is Early Termination by Recall (ETR). Traditional k‑means stops after a fixed number of iterations or when centroid movement falls below a threshold, but these criteria do not correlate with the actual recall of a vector‑search index. ETR periodically evaluates the current centroids on a set of query vectors (either user‑provided or sampled from the dataset) and measures recall. If recall does not improve beyond a small epsilon for a configurable number of checks, the algorithm halts. This mechanism typically saves 15‑30 % of the total runtime while preserving the final recall.

The experimental evaluation is thorough. On a 1 M‑vector, 1536‑dimensional dataset, SuperKMeans outperforms FAISS and Scikit‑Learn on CPUs by up to 7× and cuVS on GPUs by up to 4×, across a range of cluster counts (k = 10 k to 100 k). Importantly, recall metrics (Recall@1, @10, @100) remain statistically indistinguishable from the baselines. Additional studies reveal that: (i) only 5‑10 iterations are sufficient for convergence in typical embedding datasets, (ii) 20‑30 % subsampling of the data yields centroids of comparable quality, and (iii) k‑means++ initialization is detrimental in high‑dimensional settings because Voronoi boundaries become fuzzy and random initialization performs just as well.

When combined with hierarchical k‑means, SuperKMeans can index 10 M vectors of 1024 dimensions 250× faster than FAISS, demonstrating scalability to truly massive collections. The authors release a clean C++/CUDA implementation (https://github.com/cwida/SuperKMeans) with a FAISS‑compatible API, benchmark scripts for multiple hardware platforms, and detailed documentation, facilitating immediate adoption.

In summary, SuperKMeans achieves a rare combination of speed and retrieval quality by co‑designing algorithmic pruning, memory layout, and a retrieval‑aware stopping condition. It offers a practical solution for modern AI systems—large language model embeddings, recommendation engines, and retrieval‑augmented generation pipelines—where fast index construction is a critical bottleneck.

Comments & Academic Discussion

Loading comments...

Leave a Comment