On the Fundamental Limits of Hierarchical Secure Aggregation with Dropout and Collusion Resilience

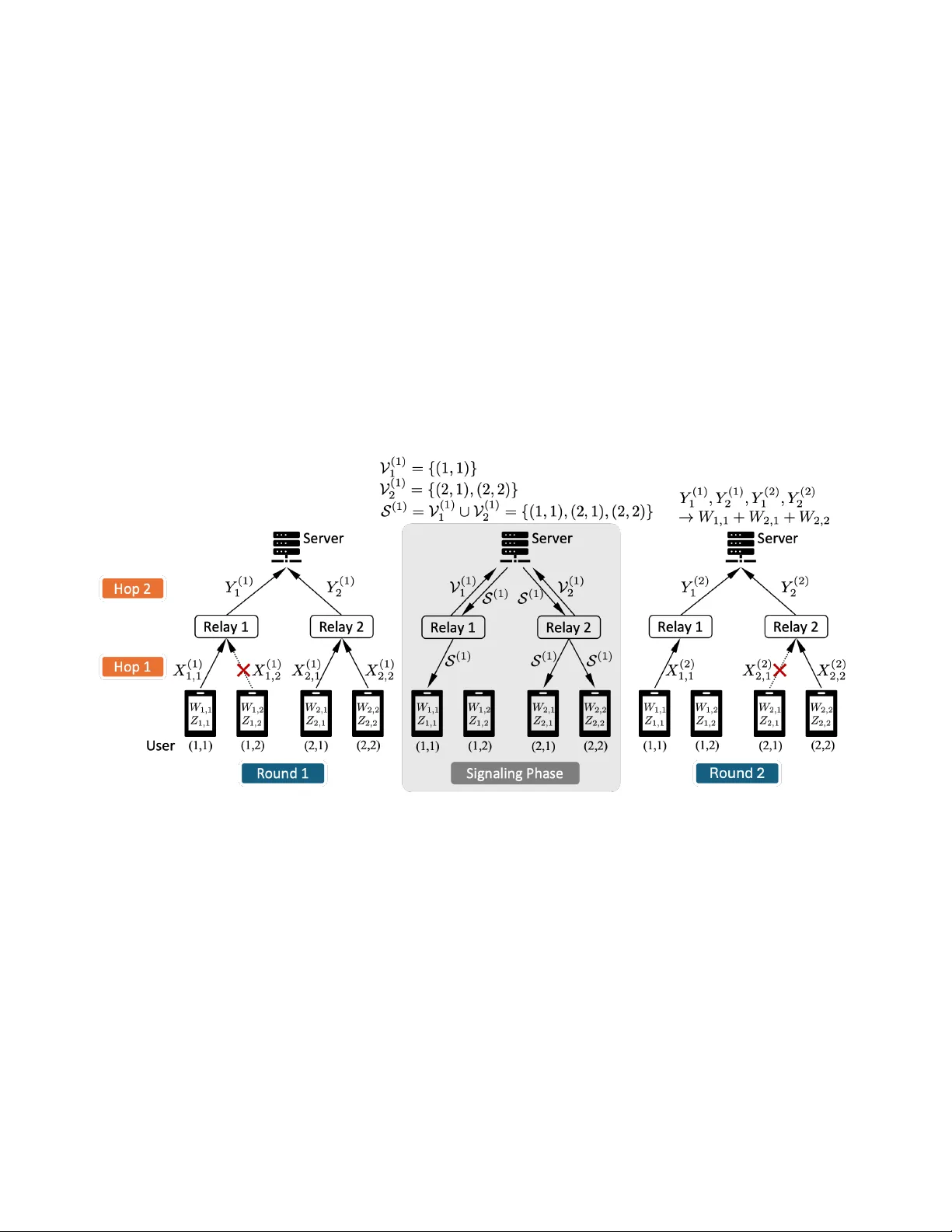

We study the fundamental communication limits of information-theoretic secure aggregation in a hierarchical network consisting of a server, multiple relays, and multiple users per relay. Communication proceeds over two rounds and two hops, and the sy…

Authors: Zhou Li, Yizhou Zhao, Xiang Zhang