Minimax and Adaptive Covariance Matrix Estimation under Differential Privacy

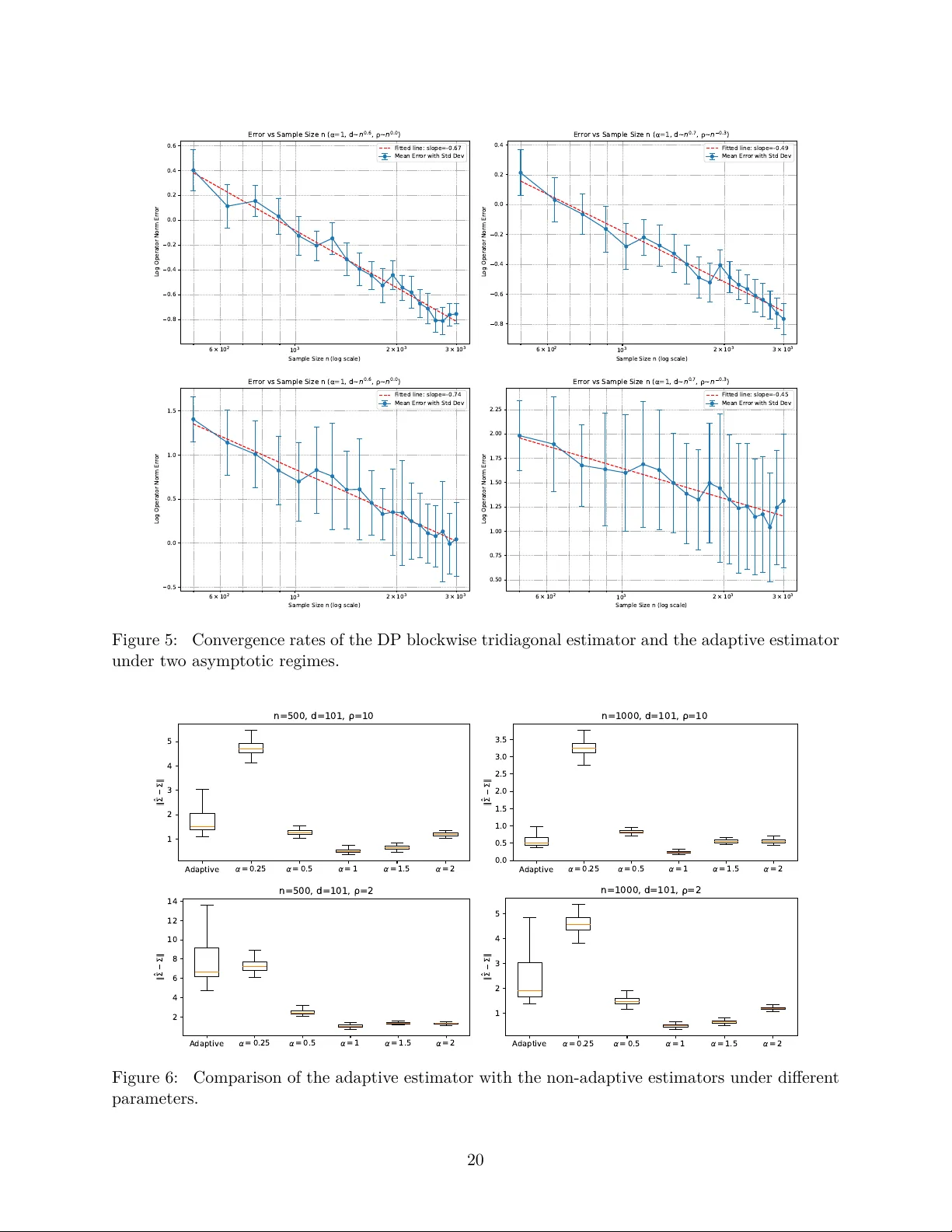

The covariance matrix plays a fundamental role in the analysis of high-dimensional data. This paper studies minimax and adaptive estimation of high-dimensional bandable covariance matrices under differential privacy constraints. We propose a novel di…

Authors: T. Tony Cai, Yicheng Li