The Exponentially Weighted Signature

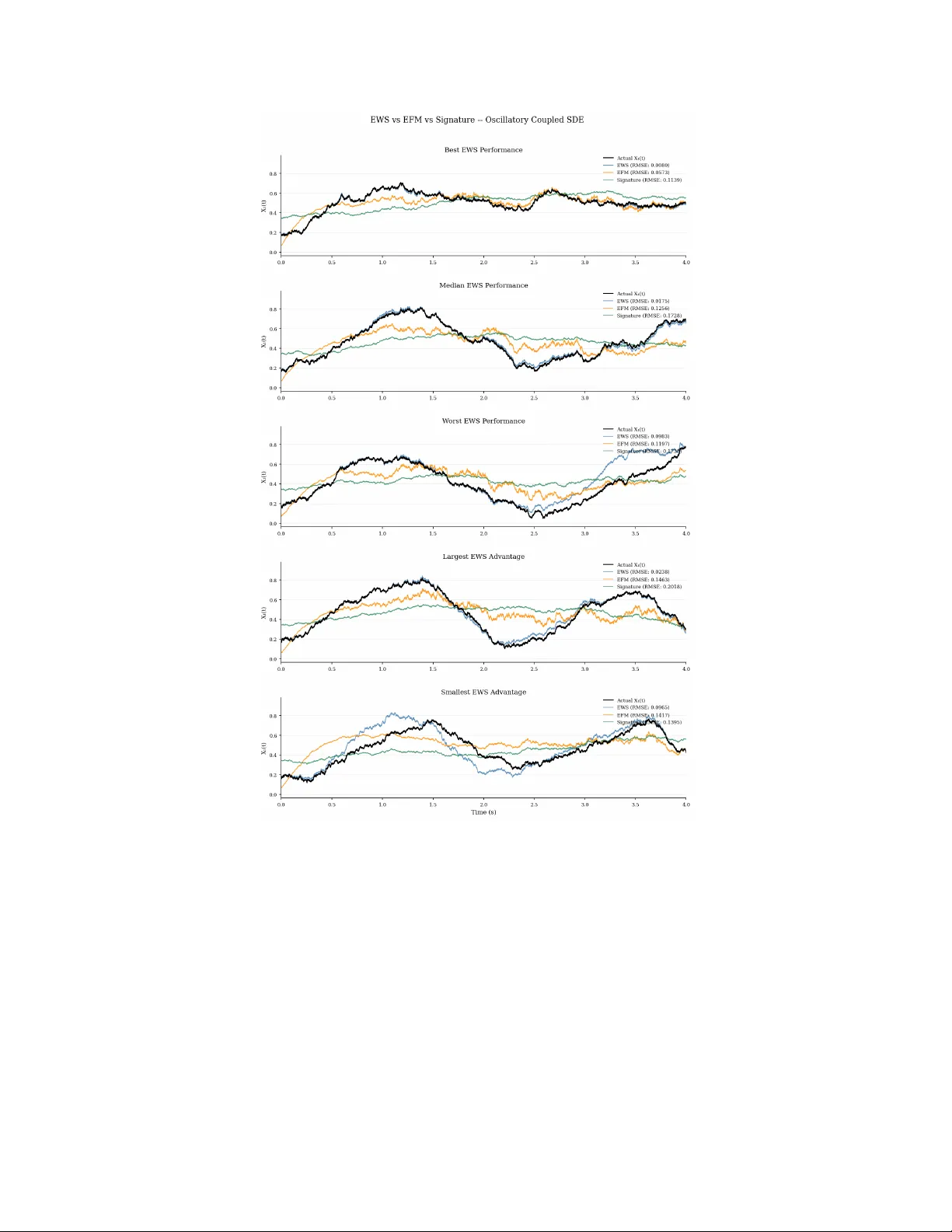

The signature is a canonical representation of a multidimensional path over an interval. However, it treats all historical information uniformly, offering no intrinsic mechanism for contextualising the relevance of the past. To address this, we intro…
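A minimal sketch of what the ordinary signature computes may make the uniformity point concrete. It assumes NumPy, truncates the signature at level 2, and interpolates the sample points linearly. The helpers `segment_signature`, `chen_product` and `signature_level2` are hypothetical names for illustration, not an API from the paper. The code does not implement the exponentially weighted signature, whose definition is cut off above. Each linear segment contributes its tensor exponential, and segments are combined with Chen's identity.

```python
import numpy as np


def segment_signature(delta):
    """Levels 1 and 2 of the signature of one linear segment with increment `delta`.

    For a straight line the signature is the tensor exponential of its
    increment, so level 2 is outer(delta, delta) / 2.
    """
    return delta, np.outer(delta, delta) / 2.0


def chen_product(sig_a, sig_b):
    """Signature of the concatenated path, truncated at level 2 (Chen's identity)."""
    a1, a2 = sig_a
    b1, b2 = sig_b
    return a1 + b1, a2 + b2 + np.outer(a1, b1)


def signature_level2(path):
    """Levels 1 and 2 of the signature of the piecewise-linear path through `path`.

    `path` has shape (n_points, d). Level 0 (always 1) is omitted.
    """
    path = np.asarray(path, dtype=float)
    d = path.shape[1]
    # A constant path has signature 1 at level 0 and zero at every higher level.
    sig = (np.zeros(d), np.zeros((d, d)))
    for delta in np.diff(path, axis=0):
        sig = chen_product(sig, segment_signature(delta))
    return sig


if __name__ == "__main__":
    # Same endpoints, opposite order of moves: level 1 agrees, level 2 differs.
    print(signature_level2([[0, 0], [1, 0], [1, 1]]))
    print(signature_level2([[0, 0], [0, 1], [1, 1]]))
    # Moving early versus moving late, with no time channel: the signatures
    # coincide, so nothing here distinguishes older increments from recent ones.
    print(signature_level2([[0, 0], [1, 0], [1, 0]]))
    print(signature_level2([[0, 0], [0, 0], [1, 0]]))
```

Level 2 records the order of the moves, but an increment made at the start of the interval enters exactly as one made at the end. Adding time as an extra channel is a common way to make the signature sensitive to when increments occur; the sketch leaves it out so the uniform treatment of history stays visible.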

Authors: Alexandre Bloch, Samuel N. Cohen