Translating MRI to PET through Conditional Diffusion Models with Enhanced Pathology Awareness

Positron emission tomography (PET) is a widely recognized technique for diagnosing neurodegenerative diseases, offering critical functional insights. However, its high cost and radiation exposure limit its widespread use. In contrast, magnetic reso…

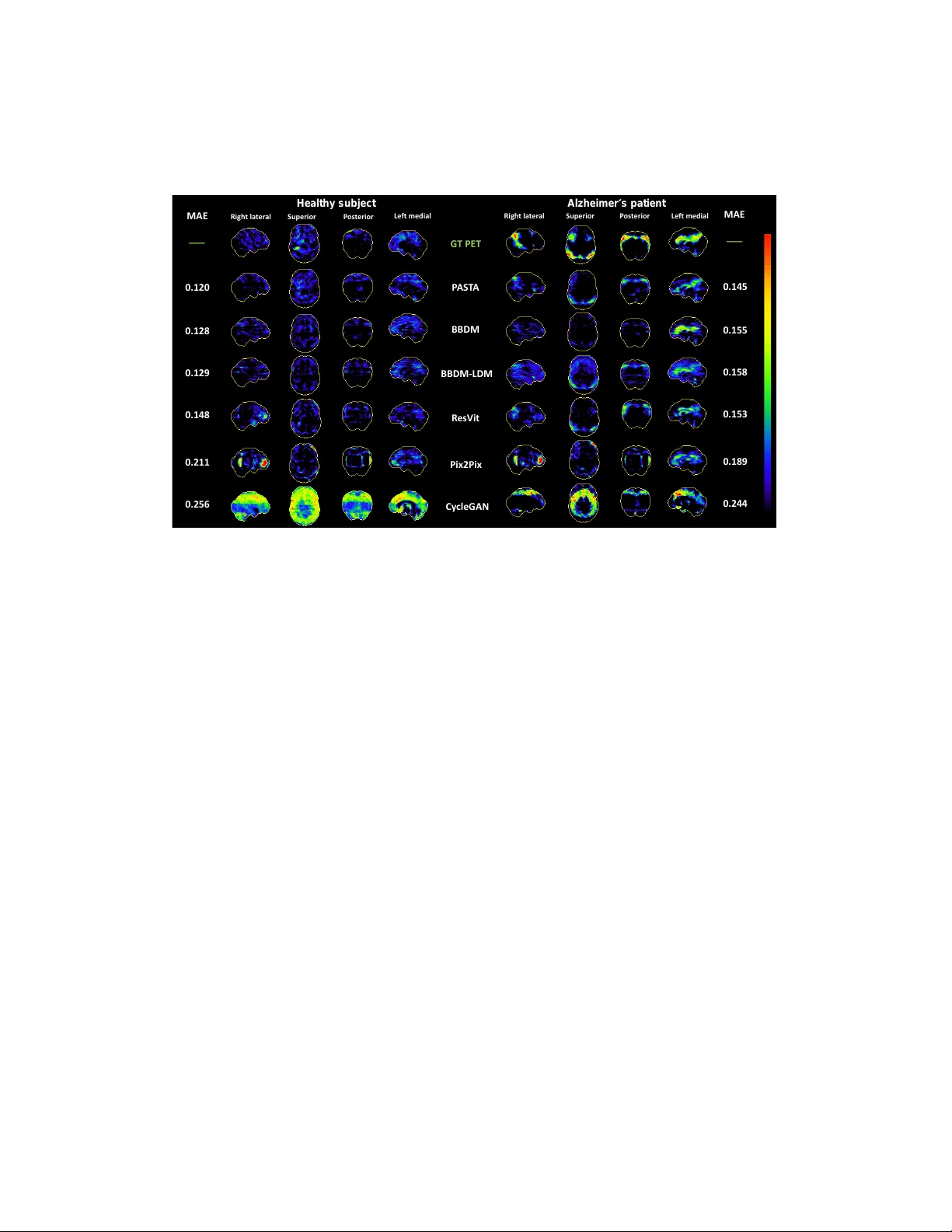

Authors: Yitong Li, Igor Yakushev, Dennis M. Hedderich