A Dual Certificate Approach to Sparsity in Infinite-Width Shallow Neural Networks

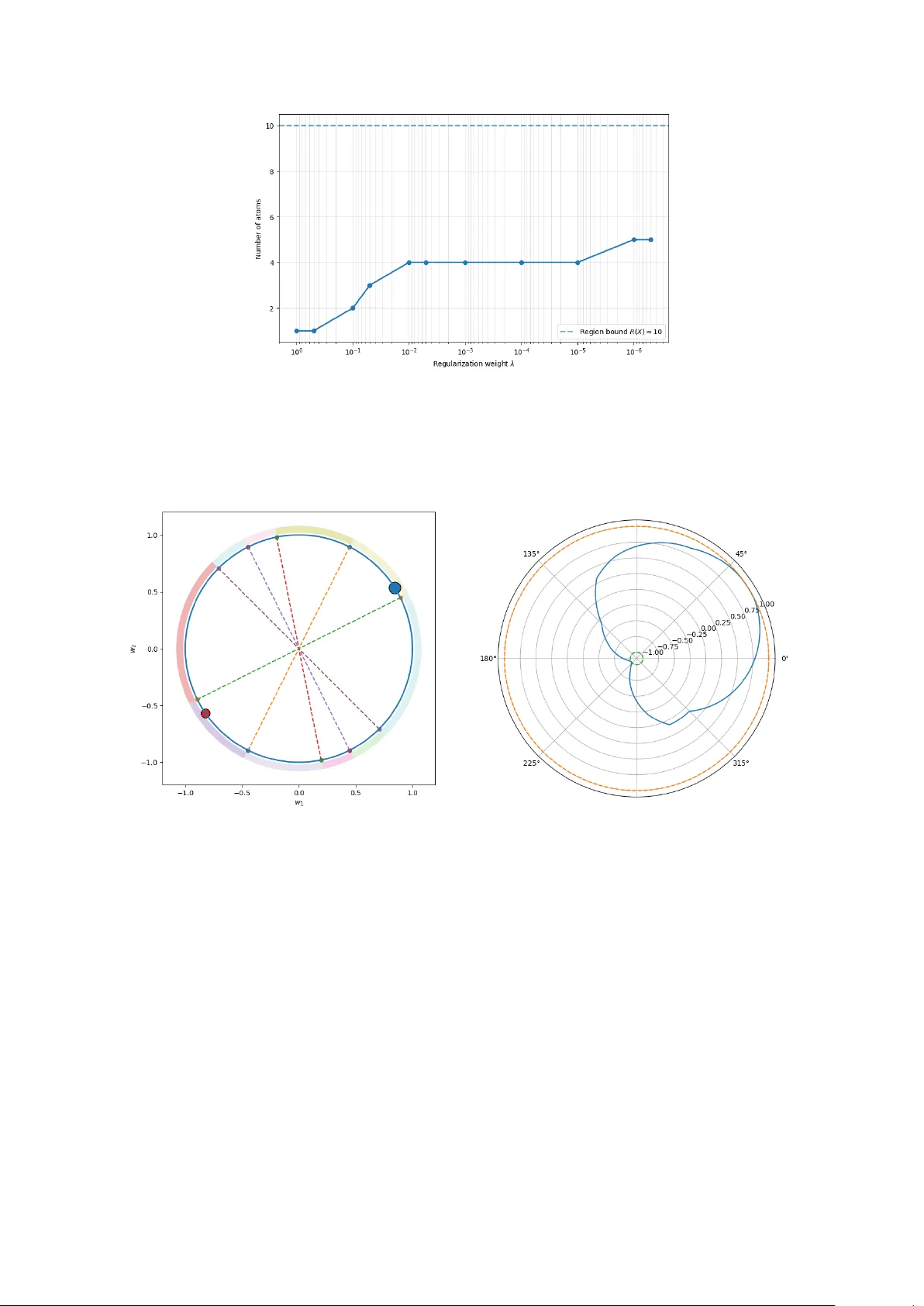

In this paper, we study total variation (TV)-regularized training of infinite-width shallow ReLU neural networks, formulated as a convex optimization problem over measures on the unit sphere. Our approach leverages the duality theory of TV-regularize…

Authors: Leonardo Del Grande, Christoph Brune