Fast and scalable inference in hidden Markov models with Gaussian fields

Hidden Markov models (HMMs) are powerful tools for analysing time series data that depend on discrete underlying but unobserved states. As such, they have gained prominence across numerous empirical disciplines, in particular ecology, medicine, and e…

Authors: Jan-Ole Fischer

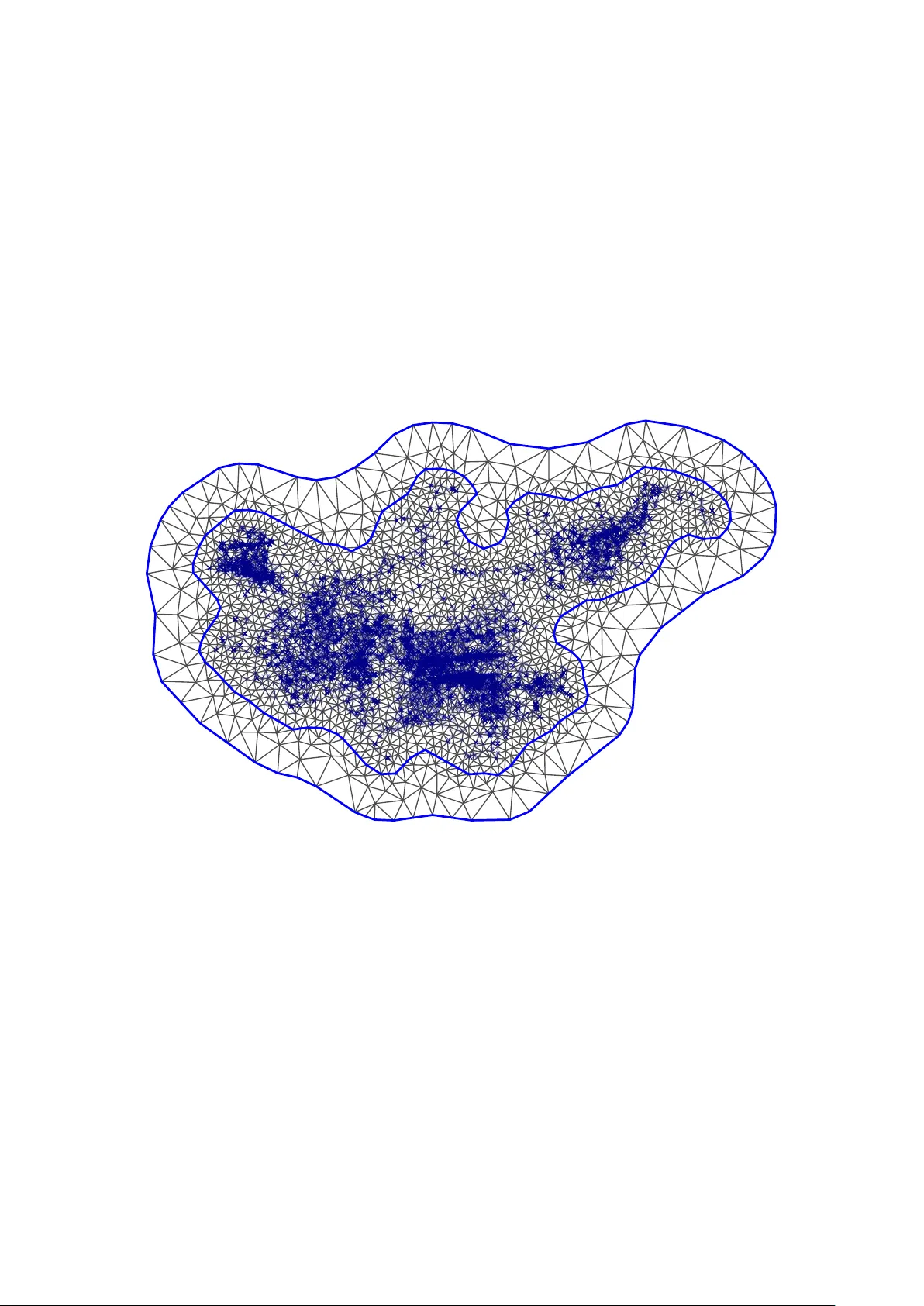

F ast and scalable inference in hidden Mark o v mo dels with Gaussian fields Jan-Ole Fisc her ∗ Departmen t of Business A dministration and Economics, Bielefeld Univ ersity Marc h 19, 2026 Abstract Hidden Mark ov models (HMMs) are p o werful tools for analysing time series data that dep end on discrete underlying but unobserved states. As such, they ha v e gained prominence across numerous empirical disciplines, in particular ecology , medicine, and economics. How ever, the increasing complexit y of empirical data is often accompanied b y additional latent structure such as spatial effects, temp oral trends, or measuremen t p erturbations. Gaussian fields pro vide an attractive building blo c k for incorp orating such structured latent v ariation into HMMs. F ast inference metho ds for Gaussian fields ha v e emerged through the sto c hastic partial differen tial equation (SPDE) approach. Due to their sparse represen tation, these integrate well with nov el frequentist estimation metho ds for random-effects mo dels via the use of automatic differentiation and the Laplace appro ximation. Scaling to high dimensions requires to ols such as (R)TMB to exploit sparsit y in the Hessian w.r.t. the laten t v ariables — a property satisfied b y SPDE fields but violated b y the HMM lik eliho o d. W e presen t a mo dified forward algorithm to compute the HMM lik eliho od, constructing sparsity in the Hessian and consequently enabling fast and scalable inference. W e demonstrate the practical feasibility and the usefulness through simulations and t w o case studies exploring the detection of stellar flares as well as mo delling the mo vemen t of lions. Keyw ords: Marko v-switching mo dels, state-space mo dels, Gaussian pro cess, Laplace appro ximation, automatic differen tiation ∗ Email: jan-ole.fischer@uni-bielefeld.de . ORCID: 0009-0004-1556-9053 1 1 In tro duction Ov er the past decade, hidden Marko v mo dels (HMMs) hav e b ecome a p o w erful to ol for mo delling noisy time series driv en by unobserved, serially correlated states. Their v ersatility has led to widespread adoption across disciplines such as ecology (McClintock, Langro c k, et al., 2020 ; Mews, Langrock, et al., 2022 ), finance (Liu et al., 2012 ; Zhang et al., 2019 ), medicine (Amoros et al., 2019 ; Sop er et al., 2020 ), and sp orts (Ötting et al., 2023 ; Mic hels, Ba jons, et al., 2026 ). Recen t adv ances hav e fo cused on replacing restrictive and p oten tially unrealistic parametric assumptions with more flexible mo del comp onen ts. A notable developmen t is the in tegration of p enalised splines in to HMMs, first prop osed by Langro c k, Kneib, Sohn, et al. ( 2015 ). Subsequent w ork (Langro c k, Kneib, Glennie, et al., 2017 ; Chen and Finkenstädt, 2023 ; F eldmann et al., 2023 ; K oslik, Dup on t, et al., 2025 ; Ammann et al., 2026 ) has expanded this approac h and fo cussed on selecting an adequate degree of smo othness through a mixed mo del representation (Koslik, 2024 ; Michelot, 2025 ) as w ell as multiv ariate extensions (Koslik, 2025 ; Mic hels and Langro c k, 2025 ). While p enalised splines hav e significantly adv anced the flexibilit y of HMMs b ey ond tradi- tional parametric formulations, their low-rank function approximations inv olv e inherent trade-offs. Current implementations often rely on calculations with dense matrices, which can b ecome computationally demanding as the dimensionalit y of the problem increases. A dditionally , the p enalties in p enalised splines are primarily designed to enforce global function smo othness, whic h may not alwa ys capture more complex or domain-sp ecific patterns in the data. On the other hand, Gaussian pro cesses (GPs) (O’Hagan, 1978 ) — also referred to as Gaussian fields (GF s) in spatial applications (Cressie, 2015 ) — offer a differen t, ric h framework for function approximat ion. Through the c hoice of co v ari- ance kernels, GPs can enco de prior kno wledge ab out the underlying structure of the function — such as p erio dicit y (e.g. F oreman-Mack ey et al., 2017 ), spatial correlations, or non-stationarity — making th em particularly w ell-suited for applications where the data exhibits suc h in tricate patterns. This adaptability op ens up new p ossibilities for mo delling complex phenomena that ma y not b e fully captured by p enalised splines alone. While historically GPs ha ve b een limited b y their p o or scalability , Lindgren, Rue, and Lindström ( 2011 ) dev elop ed a metho d to approximate Matérn fields on a triangulated mesh, transforming the finite-dimensional appro ximation into a Gaussian Mark ov random field (GMRF). This approac h enables sparse computations and has gained p opularit y through the R-INLA (Lindgren and Rue, 2015 ) and inlabru (Bac hl et al., 2019 ) R pac k ages, the latter tailored for distributional regression mo dels with latent Gaussian fields. In tegrating suc h fields into HMMs can b e b eneficial in tw o distinct scenarios. First, in cases where the state-dep endent observ ation pro cess within the HMM is p erturb ed by e.g. GPS measurement error or quasi-p erio dic trend (McClin to c k, King, et al., 2012 ; Esquivel 2 et al., 2025 ), this can b e accounted for. Second, in more standard mixed mo del settings — suc h as those considered in inlabru for regression — a comp onen t of the HMM (e.g., transition probabilities b et w een states) can b e related to a latent (spatial) Gaussian field. F ast frequen tist lik eliho o d-based inference for b oth scenarios is desirable but challenging due to the high-dimensional integration required to ev aluate the lik eliho o d function. The T emplate Mo del Builder framework and its asso ciated R pac k ages, TMB (Kristensen et al., 2016 ) and RTMB (Kristensen, 2026 ), address this challenge b y lev eraging automatic differen tiation to p erform the Laplace appro ximation of the high-dimensional in tegral. Ho wev er, the approach requires computing the Hessian matrix of the log-lik eliho o d with resp ect to the latent v ariables, which is only feasible in high-dimensional settings if the Hessian is a sparse matrix. Unfortunately , such a sparsit y pattern is not present in HMMs, necessitating a tailored approximation of the HMM log-likelihoo d function. In this pap er, we prop ose suc h an approximation by mo difying the forward algorithm to compute the HMM log-lik eliho o d. This mo dification ensures lo cal time-dep endence only and consequently yields a sparse second deriv ative with resp ect to latent v ariables spaced out ov er time. Combined with the SPDE approach b y Lindgren, Rue, and Lindström ( 2011 ), this enables the integration of Gaussian fields in to HMMs and facilitates rapid, n umerically efficient inference that scales well to high dimensions. W e demonstrate the practical utilit y and p erformance of our approach through tw o case studies: (1) mo delling stellar flares, where brigh tness measuremen ts are p erturb ed b y quasi-p erio dic oscillations in star brightness, and (2) mov emen t ecology , where a spatial field influences the probability of transitions b etw een b ehaviours. 2 Metho ds Before we detail the no v el inference pro cedures outlined ab ov e, we shall briefly introduce the t w o mo del comp onents required throughout this pap er. 2.1 Hidden Mark o v mo dels A basic hidden Marko v mo del comprises tw o sto c hastic pro cesses: an observed pro cess { Y t } and a hidden pro cess { S t } . The unobserved pro cess is an N -state Mark o v c hain on the state space { 1 , . . . , N } that satisfies the Mark ov prop ert y Pr( S t = s t | S t − 1 = s t − 1 , . . . , S 1 = s 1 ) = Pr( S t = s t | S t − 1 = s t − 1 ) . 3 Due to this Marko vian nature, the state pro cess is fully sp ecified by its initial distribution δ (1) = Pr ( S 1 = 1) , . . . , Pr ( S 1 = N ) and one-step transition probabilities γ ( t ) ij = Pr ( S t = j | S t − 1 = i ) which are commonly su mmarised in a transition probabilit y matrix (t.p.m.) Γ ( t ) = ( γ ( t ) ij ) i,j =1 ,...,N . The time index t is included here b ecause in many applications cov ariates z t ∈ R p , t = 1 , . . . , T , affect the probability of transitioning from one state to another. If at time t − 1 , the pro cess is in state i , the categorical distribution of states for time t is given by ( γ ( t ) i 1 , . . . , γ ( t ) iN ) . The co v ariate influence on this categorical distribution is usually mo delled using a m ultinomial logistic regression, achiev ed by appling the in v erse multinomial logistic link function (softmax) to linear predictors for each row of the t.p.m., γ ( t ) ij = exp( η ( t ) ij ) P N k =1 exp( η ( t ) ik ) , setting η ( t ) ii = 0 (reference category). The linear predictors η ( t ) ij ma y include any type of parametric relationship (e.g. linear, quadratic, interaction), simple random effects or, as will b e considered here, r andom fields . Giv en a realisation of the hidden state S t , the distribution of Y t is indep endent of all other observ ations and hidden states. More formally , if f denotes a general densit y , f ( y t | s 1 , . . . , s T , y 1 , . . . , y t − 1 , y t +1 , . . . , y T ) = f ( y t | s t ) . As S t only takes on finitely man y v alues, to simplify notation w e simply denote the corresp onding densities as f j ( y t ) = f ( y t | S t = j ) , j = 1 , . . . , N . If y t is multiv ariate, a common assumption is that its comp onents are conditionally indep ent given the current state. Consequen tly , in this case f j ( y t ) is the pro duct of univ ariate densities. 2.2 Gaussian pro cesses with sparse precision Gaussian pro cesses (GPs) provide a flexible framework for function mo delling by specifying their distribution through a mean function and a co v ariance kernel. The defining character- istic of a Gaussian pro cess u is that for any finite collection of ev aluation p oints r 1 , . . . , r l , the corresp onding function v alues follo w a multiv ariate Gaussian distribution with mean v ector and co v ariance matrix determined by the mean function m and cov ariance k ernel K , resp ectiv ely . More formally , u ( r 1 ) , . . . , u ( r l ) ⊤ ∼ N ( m , K ) , (1) 4 where m = m ( r 1 ) , . . . , m ( r l ) ⊤ , and K ij = K ( r i , r j ) . In spatial and spatio-temp oral settings, this framework is commonly referred to as a Gaussian random field (GRF). A ma jor practical limitation of GP mo dels is their computational cost. F or l ev aluation p oints, likelihoo d ev aluation and inference typically require O ( l 3 ) op erations and O ( l 2 ) storage due to dense cov ariance matrices. Ho wev er, Lindgren, Rue, and Lindström ( 2011 ) sho wed that Gaussian fields with Matérn co v ariance can b e represented as the w eak solution to the sto c hastic partial differential equation (SPDE) ( κ 2 − ∆) α/ 2 u ( r ) = W ( r ) , (2) where ∆ denotes the Laplacian op erator, W ( r ) is Gaussian white noise, κ > 0 controls the spatial range, and r ∈ R d . The parameter α determines the smo othness of the field and is usually fixed as it is p o orly identified. F or spatial dimension d , the corresp onding Matérn smo othness parameter is given by ν = α − d/ 2 . Appro ximating the w eak solution of ( 2 ) using a finite elemen t basis representation, u ( r ) ≈ l X i =1 ψ i ( r ) x i , where { ψ i } i =1 ,...,l are lo cally supported basis functions defined on a triangulated mesh, yields a finite-dimensional Gaussian approximation of the Matérn field. Due to the lo cal supp ort of the basis functions and the lo calit y of the differen tial op erator, the resulting system matrices are sparse. Consequen tly , the co efficient vector x = ( x 1 , . . . , x l ) ⊤ follo ws a Gaussian Marko v random field (GMRF) with sparse precision matrix. F or example, for dimension d = 2 and α = 2 , w e obtain the precision matrix Q τ ,κ = τ 2 κ 4 C + 2 κ 2 G + GC − 1 G , (3) where τ > 0 is an ov erall precision parameter that scales the driving white noise and thereb y determines the marginal v ariance of the resulting field. The l × l matrices C and G ha v e en tries C ij = Z ψ i ( r ) ψ j ( r ) d r , and G ij = Z ∇ ψ i ( r ) ⊤ ∇ ψ j ( r ) d r , resp ectiv ely . Using piecewise linear basis functions yields sparse matrices C and G . In practice, C is often replaced by its lump ed (diagonal) appro ximation suc h that C − 1 is sparse (see Bolin and Lindgren, 2009 for justification). 5 The sto chastic weigh ts x then hav e multiv ariate Gaussian density (2 π ) − l/ 2 | Q τ ,κ | 1 / 2 exp − 1 2 ( x − µ ) ⊤ Q τ ,κ ( x − µ ) , (4) where µ is determined b y the mean f unction m . Ev aluating this density is computationally efficien t b ecause the quadratic form only in volv es the non-zero entries of Q τ ,κ , and sparse Cholesky factorisation can b e used to compute the log-determinant efficiently . 2.3 Example mo del form ulations Before turning to inference, we shall highlight tw o examples in which combining hidden Mark ov mo dels (HMMs) with Gaussian fields or Gaussian processes is natural and b eneficial. Example 1 Supp ose the state-switching pro cess of interest is not observed directly but is contaminated by an additive noise comp onent. A natural mo del formulation for observ ations y 1 , . . . , y T is then Y t = X t + u t , t = 1 , . . . , T , where { X t } follo ws an HMM and represents the laten t pro cess of interest. The noise comp onen t u t ma y b e indep enden t white noise, but it may also exhibit temp oral structure, for example u t = u ( t ) with u follo wing a Gaussian pro cess. The latter allows for smo oth trends or temp orally correlated disturbances that cannot b e captured by indep endent errors. W e consider such a mo del in the first case study . Example 2 In spatial settings, supp ose w e ma y observ e not only y 1 , . . . , y T but also corresp onding spatial lo cations r 1 , . . . , r T , each in R 2 . The observ ations may then follo w a standard state-dep endent mo del, Y t | { S t = j } ∼ f j ( · ) , where { f j } j =1 ,...,N are parametric state-dep endent densities. While the observ ation mo del remains parametric, the state pro cess itself may dep end on spatial lo cation. F or example, transition probabilities γ ( t ) ij can b e linked to linear predictors of the form η ( t ) ij = β ⊤ ij z t + u ( r t ) , where z t denotes observed co v ariates and u ( r t ) is a spatial Gaussian field ev aluated at lo cation r t . This formulation allows b eha vioural switching dynamics to v ary smo othly across space. In the second case study , we apply such a mo del to animal mov ement data 6 to in v estigate ho w b eha vioural states of lions v ary across the study area. 2.4 Inference via marginal lik eliho o d using (R)TMB Conducting frequen tist inference, i.e. maxim um lik eliho o d estimation, in any of the aformen tioned mo dels requires access to the likelihoo d function of the observ ations under the particular mo del of interest. The main c hallenge is that, although it is usually straigh tforward to compute the join t lik eliho o d of the data and the laten t v ariables, the latter must b e integrated out to obtain the marginal lik eliho o d of the data. Precisely , letting f denote a general densit y and using subscripts to denote parameter dep endence, w e require the marginal likelihoo d function L ( θ ) = Z R l f θ ( y | x ) f θ ( x ) d x (5) of observ ations y = ( y 1 , . . . , y T ) ⊤ . The vector θ ∈ R p parameterises the t.p.m., the state- dep enden t distributions and the distribution of the Gaussian field. Equation ( 5 ) co vers t wo distinct cases, corresp onding to the example mo del form ulations from Section 2.3 and case studies from Section 3 . 1. In Example 1, f θ ( x ) giv es the HMM likelihoo d of a latent pro cess x = ( x 1 , . . . , x T ) ⊤ that is p erturb ed by noise, th us in this case l = T . f θ ( y | x ) is a GMRF density as in ( 4 ) ev aluated at y with mean x , corresp onding to a measurement mo del of the observ ations conditional on the pro cess. 2. In Example 2, f θ ( y | x ) is the HMM likelihoo d of ob erv ations y 1 , . . . , y T conditional on a latent Gaussian field x ∈ R l , the latter with GMRF density f θ ( x ) as in ( 4 ). Due to the structure of the HMM lik eliho o d, the high-dimensional integral in Equation ( 5 ) is analytically intractable. A p ow erful to ol for appro ximate frequen tist inference is given b y the so-called L aplac e appr oximation (Erk anli, 1994 ; V an der V aart, 2000 ). Omitting the dep endence on the data y for notational simplicity and letting g ( x , θ ) = − log f θ ( y | x ) − log f θ ( x ) , (6) w e can rewrite the marginal lik eliho o d in Equation ( 5 ) as L ( θ ) = Z R l exp − g ( x, θ ) d x . (7) F or fixed θ the Laplace approximation now pro ceeds by p erforming a second-order T aylor 7 appro ximation of g ab out its minimum ˆ x θ = argmin x g ( x , θ ) , yielding the quadratic appro ximation g ∗ ( x , θ ) = g ( ˆ x θ , θ ) + 1 2 ( x − ˆ x θ ) ⊤ H θ ( x − ˆ x θ ) , where H θ = g ′′ xx ( ˆ x θ , θ ) is the Hessian matrix of g w.r.t. x . Plugging g ∗ bac k into ( 7 ) , yields L ∗ ( θ ) = exp − g ( ˆ x θ , θ ) Z R l exp − 1 2 ( x − ˆ x θ ) ⊤ H θ ( x − ˆ x θ ) d x , where the latter part is a multiv ariate Gaussian in tegral with closed-form solution. Solving the integral, applying the logarithm and multiplying by − 1 , we arrive at the approximate marginal negativ e log-likelihoo d function − log L ∗ ( θ ) = g ( ˆ x θ , θ ) − l 2 log(2 π ) + 1 2 log | H θ | , (8) where | · | denotes the determinan t. This pro cedure is conv enien tly automated through the T emplate Mo del Builder framework and its asso ciated R pac k age TMB (Kristensen et al., 2016 ) and has recen tly b ecome ev en more attractive through the R in terface pro vided b y the nov el RTMB pac k age (Kristensen, 2026 ). With the latter, the user simply needs to implemen t and supply the join t negativ e log-lik eliho o d g as an R function. Using rev erse-mo de automatic differentiation for the deriv atives of g , the pack age then returns ( 8 ) along with i ts gradien t w.r.t. θ . Eac h call to the ob jectiv e function (or its gradient) then triggers an inner Newton optimisation to determine ˆ x θ , required for the Laplace appro ximation. Fitting the mo del then amounts to n umerically minimising ( 8 ) using an y suitable gradient based numerical minimisation routine, yielding a nested numerical optimisation pro cedure. Due to the nature of the Laplace approximation, after the optimisation predicted latent v ariables ˆ x ˆ θ , corresp onding to the p osterior mo de, are immediately av ailable. F urthermore, T emplate Mo del Builder provides an estimate of the join t precision matrix of the parameters and latent v ariables (Bates and W atts, 1988 ). Using this matrix, we can sample from the approximate Gaussian distribution and use the aquired samples to quan tify uncertain ty . A crucial practical consideration is the structure of H θ . If the dimensionalit y l of x is large, computation of H θ b ecomes prohibitive in general. Only if man y entries of H θ are exactly zero, i.e. it is a sp arse matrix, computations b ecome feasible and fast. An entry H ij is zero if ∂ 2 g /∂ x i ∂ x j = 0 ev erywhere. This requirement makes the SPDE approac h outlined in Section 2.2 particularly attractiv e. The term corresp onding to the GMRF log-lik eliho o d will b e the logarithm of ( 4 ) and hence tak e the form − 1 2 ( x − µ ) ⊤ Q τ ,κ ( x − µ ) + const. The second deriv ativ e of this summand w.r.t. x (or µ ) is simply − Q τ ,κ , a sparse matrix 8 b y construction. If suc h a pattern is present, it is automatically detected by ( R ) TMB and exploited for all required computations. Sp ecifically , only non-zero cross-deriv ativ es are computed and the linear algebra required to compute ( 8 ) is performed using a sparse Cholesky factor. In either of the tw o cases from Section 2.3 , the second summand in ( 6 ) corresp onds to the HMM log-likelihoo d. Ev aluating this term requires marginalisation ov er the laten t state sequence s 1 , . . . , s T whic h, as w e shall see, results in a dense Hessian matrix. Naiv ely trying to apply the Laplace approximation is hence deemed to fail as ev aluating H θ and p erforming computations with it is prohibitive. Hence, in the next section w e dev elop an appro ximation to the algorithm required to ev aluate the HMM log-lik eliho o d in order to mitigate a dense Hessian matrix. 2.5 A banded forward algorithm Giv en our t w o examples, w e either need to compute the HMM lik eliho o d of a latent pro cess x 1 , . . . , x T , or of observ ations y 1 , . . . , y T conditional on a random field x ∈ R l . T o simplify notation, in this section we fo cus on the first case and use the term “observ ations” for x 1 , . . . , x T . Note, ho w ever, that this do es not restrict generality . The main ob jective is to construct a log-likelihoo d function that yields zero cross-deriv ativ es w.r.t. an y t wo quan tities en tering log-lik eliho o d summands at time t and t ′ if | t − t ′ | is sufficiently large. Computing the lik eliho o d of x 1 , . . . , x T under an HMM requires marginalising o ver all p ossible unobserved states s 1 , . . . , s T that could hav e generated this state-dep endent sequence. The task of summing out the states is made feasible through the so-called forw ard algorithm (Zucc hini et al., 2016 ). Defining the forwar d variables α t ( j ) = f ( x 1 , . . . , x t , s t = j ) , α t = α t (1) , . . . , α t ( N ) , and the diagonal matrix P ( x t ) = diag f 1 ( x t ) , . . . , f N ( x t ) , w e hav e α 1 = δ (1) P ( x 1 ) , and α t = α t − 1 Γ ( t ) P ( x t ) . Running the recursion from t = 1 to t = T and building the sum of the en tries of the last forw ard v ariable yields the likelihoo d of interest. How ev er, this approach can suffer from n umerical under- or o verflo w, and th us it is common to consider the follo wing scaling strategy (Lystig and Hughes, 2002 ). Let 1 = (1 , . . . , 1) and define the sc ale d forw ard v ariables ϕ t = α t α t 1 ⊤ , and thus ϕ t ( j ) = f ( s t = j | x 1 , . . . , x t ) by the definition of α t ( j ) . No w it can b e shown 9 that ϕ t = ϕ t − 1 Γ ( t ) P ( x t ) ϕ t − 1 Γ ( t ) P ( x t ) 1 ⊤ , and ϕ t − 1 Γ ( t ) P ( x t ) 1 ⊤ = f ( x t | x 1 , . . . , x t − 1 ) . Observing that ϕ t , t = 1 , . . . , T , can also b e calculated recursively , this yields an alternative w ay to calculate the likelihoo d based on the pro duct rule f ( x 1 , . . . , x T ) = f ( x 1 ) | {z } δ (1) P ( x 1 ) 1 ⊤ T Y t =2 f ( x t | x 1 , . . . , x t − 1 ) | {z } ϕ t − 1 Γ ( t ) P ( x t ) 1 ⊤ , and taking logarithms, we obtain a summation ov er all time p oin ts: log f ( x 1 , . . . , x T ) = log f ( x 1 ) | {z } δ (1) P ( x 1 ) 1 ⊤ + T X t =2 log f ( x t | x 1 , . . . , x t − 1 ) | {z } ϕ t − 1 Γ ( t ) P ( x t ) 1 ⊤ . (9) The algorithm for computing ( 9 ) then inv olv es a recursion ov er time where at each time p oin t we a) up date the scaled forw ard v ariable based on the previous one and b) add the corresp onding log-likelihoo d contribution to a running summation. The key observ ation here is that each term in the summation in ( 9 ) is conditional on the entire pro cess history through the scaled forward v ariable ϕ t − 1 . Consequently , the state-dep enden t pro cess of a hidden Marko v mo del is not Marko vian. Hence, the Hessian of ( 9 ) with resp ect to x is a dense matrix. This second p oin t extends more generally to deriv ativ es tak en with resp ect to parameters that enter only one or a small num b er of summands in ( 9 ) . Suc h a situation arises in the second example, where we consider the HMM likelihoo d of an observed sequence y 1 , . . . , y T , conditional on the GMRF x = ( x 1 , . . . , x l ) ⊤ based on the SPDE approac h. Eac h weigh t is asso ciated with a basis function of compact supp ort. Indeed, at any spatial lo cation r , at most three basis functions ψ i ( r ) are non-zero, giving an index set I ( r ) with at most three entries. The summand in the log-lik eliho o d for time t can then b e written as log ϕ t − 1 Γ ( x i 1 , x i 2 , x i 3 ) P ( y t ) 1 ⊤ , i 1 , i 2 , i 3 ∈ I ( r t ) , only dep ending on a small subset of the GMRF weigh ts. Ho wev er, the forward v ariable ϕ t − 1 carries information from all previous time p oints. Without further mo dification, deriv atives with resp ect to field weigh ts app earing at different times can in teract through the recursion, pro ducing a dense Hessian unless mo difications are applied. T o ac hieve indep endence of terms sufficiently far apart in time, w e split the sum in ( 9 ) in to B non-o verlapping blo cks of equal length k . W e refer to k as the b andwidth parameter. T o simplify notation, we assume that T = B k and define t b = ( b − 1) k . The log-lik eliho o d 10 f ( x k +1 , . . . , x 2 k | x 1 , . . . , x k ) δ (1) ϕ k f ( x 2 k +1 , . . . , x 3 k | x k +1 , . . . , x 2 k ) ρ ˜ ϕ k 2 k f ( x 3 k +1 , . . . , x 4 k | x 2 k +1 , . . . , x 3 k ) ρ ˜ ϕ k 3 k . . . Figure 1: Visual demonstration of the approximation employ ed for computing the banded forw ard algorithm. Each blo c k is used twice: 1) for computing its likelihoo d contribution and 2) for constructing an approximate scaled forw ard v ariable for the next blo ck’s lik eliho o d contribution. can then b e written as log f ( x 1 , . . . , x T ) = log f ( x 1 , . . . , x k ) + B X b =2 bk X t = t b +1 log f ( x t | x 1 , . . . , x t − 1 ) | {z } ϕ t − 1 Γ ( t ) P ( x t ) 1 ⊤ . The first blo c k, log f ( x 1 , . . . , x k ) , can be ev aluated exactly , with the forw ard recursion initialised using the initial distribution δ (1) , yielding the scaled forw ard v ariable ϕ k . This quan tity can then also b e used to ev aluate the log-lik eliho o d contribution of the second blo c k exactly . The k ey difference arises when ev aluating the (approximate) log-likelihoo d con tributions for blo c ks b ≥ 3 . Usually , each corresp onding recursion needs to b e initialised with the scaled forw ard v ariable ϕ t b whic h dep ends on x 1 , . . . , x t b . Ho wev er, to achiev e indep endence of x 1 , . . . , x t b − 1 , w e construct an approximation ˜ ϕ k t b . T o do so, we define a fixed probabilit y v ector ρ , e.g. ρ = 1 N , . . . , 1 N , and propagate it through the forw ard recursion ov er the pr evious blo c k only . That is, at time t b , w e replace the exact scaled forw ard v ariable at the start of the blo ck, ϕ t b , b y ˜ ϕ k t b = ρ Q t b t = t b − 1 +1 Γ ( t ) P ( x t ) ρ Q t b t = t b − 1 +1 Γ ( t ) P ( x t ) 1 ⊤ , with en tries ˜ ϕ t b ( j ) = f ( s t b = j | x t b − 1 +1 , . . . , x t b ) . In doing so, for each blo ck we trunc ate the conditioning on the pro cess history and hence obtain the approximate log-likelihoo d 11 Algorithm 1 F orward Recursion for log-lik eliho o d and scaled forw ard v ariable Require: Observ ations x 1 , . . . , x T , transition matrices Γ ( t ) , state-dep endent densities f j ( x t ) , initial state vector ρ Ensure: Log-lik eliho o d l and last scaled forward v ariable ϕ 1: Initialise scaled forward v ariable: ϕ ← ρP ( x 1 ) ρP ( x 1 ) 1 ⊤ 2: Initialise log-lik eliho o d: l ← log( ρP ( x 1 ) 1 ⊤ ) 3: for t = 2 to T do 4: Up date scaled forward v ariable: ϕ ← ϕ Γ ( t ) P ( x t ) ϕ Γ ( t ) P ( x t ) 1 ⊤ 5: Up date log-likelihoo d: l ← l + log( ϕ Γ ( t ) P ( x t ) 1 ⊤ ) 6: end for 7: return ( l , ϕ ) function log f ( x 1 , . . . , x T ) ≈ log f ( x 1 , . . . , x k ) + B X b =2 bk X t = t b +1 log f ( x t | x t b − 1 +1 , . . . , x t − 1 ) | {z } ˜ ϕ t − 1 Γ ( t ) P ( x t ) 1 ⊤ . (10) The precise algorithms for the forward recursion and the banded forw ard algorithm are giv en in Algorithms 1 and 2 , resp ectively . Visual intution is given by Figure 1 . As each blo c k is used twic e to run the forward recursion, the computational cost of the algorithm is ab out twice that of the cost of the standard forward algorithm. In high-dimensional random-effect settings, this is ho w ever greatly comp ensated by the fact that it generates a banded Hessian matrix as shown in Figure 2 . Motiv ation for this lo cal appro ximation is giv en by the fact that Marko v chains forget exp onen tially fast ab out their initial condition. Hence, for a sufficiently large lag k , we exp ect ˜ ϕ k t to b e indistinguishable from ϕ t regarding numerical precision, and thus will b e the conditional likelihoo d con tribution. F ormal theoretical justification for the algorithm is giv en by the following theorem. Theorem 1 (Geometric decay of the forward-lik eliho o d approximation error) . L et ℓ T ( θ , x ) denote the exact lo g-likeliho o d and ˜ ℓ T ( θ , x ) its b andwidth- k forwar d appr oximation. Con- sider either of the fol lowing settings: 12 Algorithm 2 Banded F orw ard Algorithm for Approximate log-likelihoo d Require: Observ ations x 1 , . . . , x T , transition matrices Γ ( t ) , state-dep endent densities f j ( x t ) , initial distribution δ (1) , bandwidth k , fixed initialisation vector ρ Ensure: Appro ximate log-lik eliho o d l 1: B ← T /k ▷ Assume T is a multiple of k for simplicit y 2: Compute exact log-likelihoo d and final scaled forward v ariable of first blo ck: ( l , ϕ ) ← F orwardRecursion ( x 1 , . . . , x k , Γ ( t ) , f j , δ (1) ) 3: Compute exact log-likelihoo d for second blo ck l ← l + F orw ardRecursion ( x k +1 , . . . , x 2 k , Γ ( t ) , f j , ϕ ) 4: for b = 3 to B do 5: Compute appro ximate scaled forward v ariable from previous blo c k: ˜ ϕ ← F orwardRecursion ( x ( b − 2) k +1 , . . . , x ( b − 1) k , Γ ( t ) , f j , ρ ) 6: Up date log-likelihoo d for current blo ck: l ← l + F orw ardRecursion ( x ( b − 1) k +1 , . . . , x bk , Γ ( t ) , f j , ˜ ϕ ) 7: end for 8: return l (i) L atent-pr o c ess c ase: ℓ T ( θ , x ) is the lo g-likeliho o d of a latent pr o c ess x = ( x 1 , . . . , x T ) ⊤ . (ii) Observe d-data c ase: ℓ T ( θ , x ) is the lo g-likeliho o d of observations y 1 , . . . , y T c ondi- tional on latent variables x . Assume that θ ∈ Θ , x ∈ X , Θ × X is c omp act, the state-dep endent densities satisfy 0 < m ≤ f j ( · ) ≤ M < ∞ for al l j, t , and the underlying Markov chain is uniformly er go dic. Then ther e exist c onstants C > 0 and ρ ∈ (0 , 1) , indep endent of k , such that sup ( θ , x ) ∈ Θ ×X ℓ T ( θ , x ) − ˜ ℓ T ( θ , x ) ≤ T C ρ k , wher e the ine quality holds almost sur ely in c ase (ii). F or the pro of, see App endix A.1 . While Theorem 1 yields a theoretical guarantee that the approximation error can be made arbitrarily small b y choosing a sufficiently large k , in practice, the constan ts C and ρ can v ary substantially with the characteristics of the data at hand. F or go o d practical p erformance — balancing appro ximation accuracy and numerical efficiency — a suitable bandwidth should therefore alw a ys b e tuned to the sp ecific application. W e study the practical implications of v arying k , and o verall 13 Dimensions: 60 x 60 Column Row 10 20 30 40 50 10 20 30 40 50 − 40000 − 20000 0 20000 40000 60000 Dimensions: 60 x 60 Column Row 10 20 30 40 50 10 20 30 40 50 − 40000 − 20000 0 20000 40000 60000 Figure 2: Comparison of the Hessian matrix w.r.t. observ ation sequence x 1 , . . . , x 60 when the log-lik eliho o d is calculated using the regular forward algorithm (left) and using the banded forw ard algorithm with bandwidth k = 5 (right). Gra y corresp onds to v alues that are v ery close to zero in magnitude while white corresp onds to exact zeros. estimation p erformance in the simulation exp eriments in App endix A.3 . Ov erall these indicate adequate reco very of the true spatial field and all other mo del parameters. In these exp erimen ts, the impact of the bandwidth parameter was relatively mo dest. Ho w ever, co vering all p ossible real-data settings is imp ossible, hen ce i t is difficult to give general guidance. In practice, we exp ect v alues around k = 15 to b e useful initial guesses. F or highly p ersisten t pro cesses, i.e., when diagonal transition probability matrix en tries are large, a larger bandwidth is lik ely required. A useful strategy for c ho osing k is to compute the join t negativ e log-likelihoo d g at the initial parameter v alues and gradually increase the bandwidth k un til this v alue stabilises. This pro vides a sensible starting p oint for the first mo del fit. After this initial fit, a sanity chec k is recommended: analysts should increase the bandwidth b y , e.g., 5 , and verify that the estimated parameters remain equal (to a reasonable n um b er of decimal places). If they do, this indicates that the approximation is sufficien tly accurate. 2.6 Practial implemen tation W e use the automatic Laplace appro ximation implemen ted in the RTMB pac k age (Kristensen, 2026 ), choosing RTMB o ver TMB simply b ecause defining custom likelihoo d functions in R is usually more conv enien t than writing the required C++ co de necessary for TMB . 14 The approximate forward algorithm from Section 2.5 is implemented in the LaMa R pac k age (Fisc her, 2026 ), a mo dular framew ork for building and fitting latent Mark ovian mo dels (Mews, K oslik, et al., 2025 ). F or the tw o core functions that run the homogeneous and inhomogeneous forward algorithm, forward() and forward_g() , resp ectively , the user simply needs to pass a bw argument, corresp onding to the bandwidth parameter k . F or the SPDE approac h, we rely on the fmesher R pac k age (Lindgren, 2026 ) to construct the required triangulated mesh and finite element matrices. Efficien t computation of the GP likelihoo d is p ossible through RTMB ’s dgmrf() function which ev aluates a multiv ariate Gaussian density parameterised in terms of a sparse precision matrix, and th us w orks seamlessly with the sparse matrices provided by fmesher . 3 Case studies The following t wo case studies demonstrate the practical usage of the prop osed metho d for the mo del form ulations presented in Section 2.3 . All co de and data for full repro ducibility of the analyses is a v ailable at https://github.com/janolefi/HMMs_GFs . 3.1 Detecting stellar flares in photometric data W e revisit the analysis of Esquiv el et al. ( 2025 ), fo cussing on stellar flare detection in the brigh tness measurements of M dwarf TIC 031381302 observ ed by TESS at a 2-minute cadence. Our goal is to demonstrate the computational adv antages of our frequen tist estimation approac h ov er the dynamic Hamiltonian Mon te Carlo algorithm used in their analysis. Stellar flares are sudden bu rsts of energy emitted by stars, primarily caused by magnetic reconnection even ts. Such even ts are crucial for understanding stellar magnetic activit y , rotation, and the radiation en vironment of orbiting exoplanets. Hence, detecting and c haracterising flares in photometric time series data is essen tial for studying stellar b eha vior. Flares are c haracterised b y a rapid increase in brigh tness follo wed b y a gradual deca y . Detection is how ev er complicated by the fact that this is usually sup erimp osed on quasi- p erio dic oscillations in the star’s brightness. T raditional metho ds for flare detection, such as sigma-clipping, often struggle to distinguish low-energy flares from these oscillations, leading to biased energy estimates. T o address these challenges, Esquivel et al. ( 2025 ) in tro duced a 3 -state HMM, modelling the star’s light curve as transitioning b etw een “quiet”, “firing”, and “deca ying” states, sup erimp osed with an additive quasi-p erio dic trend. W e retain the core structure of their mo del, where the observed ligh t curv e { Y t } is decomp osed 15 in to a flaring channel { X t } and a quasi-p erio dic trend { u t } as Y t = X t + u t , t = 1 , . . . , T . W e mo del u t as a Gaussian pro cess with scalar mean µ and, slightly deviating from Esquiv el et al. ( 2025 ), with a Matérn GP via the SPDE approac h. In order to mirror the rotation k ernel emplo y ed b y Esquiv el et al. ( 2025 ), w e use the extension to oscil lating co v ariance functions, introduced in Section 3.3 of Lindgren, Rue, and Lindström ( 2011 ). This sp ecification results in a precision matrix of the form Q τ ,κ,ω = τ 2 κ 4 C + 2 cos( π ω ) κ 2 G + GC − 1 G with matrices C and G as in Section 2.2 and oscillation parameter ω ∈ (0 , 1) . The corresp onding oscillating co v ariance function is given in App endix A.4 . F or this GP with 1D domain, mesh construction is simple. Using the fmesher pac k age, w e construct degree one B-spline basis functions (ten t functions) with knots at the observed time p oints and compute the finite element matrices. Again follo wing Esquivel et al. ( 2025 ), for the states “quiet” (Q), “firing” (F), and “decaying” (D), w e assume the (partially autogressiv e) state-dep endent mo del X t | { S t = Q } ∼ N (0 , σ 2 ) , X t | { S t = F } , X t − 1 ∼ N ( X t − 1 , σ 2 ) + Exp ( λ ) , X t | { S t = D } , X t − 1 ∼ N ( r X t − 1 , σ 2 ) The state pro cess mo del is a homogeneous Marko v chain, where transitions b etw een quiet (Q) and deca ying (D) and transitions b etw een firing (F) and quiet (Q) are forbidden. T ransitions from decaying (D) to firing (F) are p ermitted, with such a b eha viour b eing called a c omp ound flar e . Hence, the mo del has transition matrix Γ = γ QQ γ QF 0 0 γ F F γ F D γ DQ γ DF γ DD , with all non-zero en tries b eing estimated freely . Note that, while Γ has zero entries, Theorem 1 still applies b ecause all entries of Γ 2 are strictly p ositive, i.e. the Marko v c hain is ergo dic with index of primitivity 2. W e implemen ted a custom likelihoo d function corresp onding to the mo del ab ov e via the LaMa R pac k age. W e split the time series in tw o b ecause of a long sequence of missing v alues in the middle. Finally , the unobserv ed true pro cess is integrated out using the 16 State probability 0 0.5 1 1335.1 1335.2 1335.3 1335.4 1335.5 0 50 100 150 Time (da ys) Flux Quiet Firing Deca ying T rend Figure 3: T op panel: Stac ked lo cally deco ded state probabilities ( Pr ( S t = i | y ) ) for states quiet (blac k), firing (red) and deca ying (orange) for a subsection of the brightness time series. Bottom panel: Corresp onding brightness v alues, colour-co ded according to the Viterbi-deco ded (most-likely) state (Viterbi, 2003 ). The purple line is the estimated quasi- p erio dic trend, i.e. p osterior mo de of the Gaussian pro cess. W e also show an appro ximate 95% credible interv al for the fitted smo oth in light purple, whic h is barely visible due to the high estimation precision. automatic Laplace appro ximation of the RTMB pac k age. The mo del is fitted b y numerically optimising the Laplace-appro ximated marginal (negative log-) likelihoo d function using R ’s nlminb() . Fitting the mo del to the full remaining data set of tw o time series and a total of T = 19 , 259 observ ations with a bandwidth parameter of k = 20 to ok ab out 6 min utes on a single core of an Apple M2 chip with 16GB of memory . This represents a dramatic impro v ement in computational efficiency compared to the 1–4 hours p er ch unk rep orted b y Esquivel et al. ( 2025 ). Their approac h required splitting the time series in to c hunks of 2,000 observ ations due to the computational complexity of their Gaussian pro cess (GP) implemen tation. In contrast, our metho d lev erages the linear scalabilit y of the SPDE-based approac h, enabling efficien t inference on the en tire time series without the need for ch unking. F or the time series of M dwarf TIC 031381302, the mo del flagged 18 flare ev ents, with an a verage duration of 17.4 minutes including the decaying phase. W e find that the mo del is able to detect flare even ts while simultaneously controlling for the quasi-p erio dic trend in oscillation (cf. Figure 3 ). Sp ecifically , note that the second flare in Figure 3 would 17 b e v ery difficult to detect using a simpler mo del without a quasi-p erio dic trend, as the brigh tness v alue of the observ ations deco ded as “flaring” is low er than that of previous observ ations that are deco ded as “quiet”. The full time series with detected flares is shown in App endix A.5 . W e do not aim to pro vide a formal p erformance comparison with the approach of Esquivel et al. ( 2025 ), nor to claim that our sp ecification achiev es the same level of physical v alidity . Their w ork carefully inv estigated mo del adequacy and detection p erformance using injection exp eriments, which is b eyond the scop e of this case study . Instead, our ob jective is to illustrate that a closely related mo delling framework can b e fitted using a Laplace-approximated marginal lik eliho o d combined with an SPDE-based GP represen tation, resulting in substantially reduced computational load. The example highligh ts the p otential of this frequen tist inference strategy as a scalable and practically attractiv e alternativ e. 3.2 Lion mo v emen t in Cen tral Kalahari In our second case study , we consider GPS mo vemen t data of lions ( Panther a le o ) in the Cen tral Kalahari, Botsw ana, publicly a v ailable through the Mov ebank Data Rep ository (MacF arlane, 2014 ). Data w ere collected from December 2008 to March 2012 and originally comprised 241,858 observ ations. T rac ks from sensors with long, systematically missing p erio ds not missing at random (e.g., no data b etw een 8 a.m. and 3 p.m.) w ere excluded. The remaining near-hourly data w ere regularised to exact hourly p ositions by aligning observ ations within 15 min utes of the full hour to the hour and discarding all others. This resulted in a final dataset of T = 53 , 453 hourly p ositions. The GPS measurements were con verted in to step lengths (km) and turning angles (radians) as it is commonly done when analysing horizontal mov emen t data (Langro ck, King, et al., 2012 ). W e sp ecified the state-dep enden t distributions for step lengths and turning angles as step t | { S t = j } ∼ Gamma ( µ j , σ j ) , angle t | { S t = j } ∼ wrapp ed Cauch y ( ν j , ρ j ) , for states j = 1 , . . . , N . After some initial exploration we decided that a 2 -state mo del — distinguishing b etw een predominantly inactive and more active b eha viour — w as the most appropriate. Although a 3-state mo del further sub divided the mov emen t state, the o verlap b et ween the state-dep endent distributions was substantial, and the estimated transition probability matrix exhibited little p ersistence. This indicates that, at an hourly temp oral resolution, different t yp es of active mov emen t could not b e reliably disentangled. 18 2 2 . 8 2 3 . 0 2 3 . 2 2 3 . 4 2 3 . 6 2 3 . 8 -2 1 . 7 -2 1 . 5 -2 1 . 3 P r ( ac ti v e → r es ti ng ) L o n g i t u d e L a t i t u d e 0.1 0.3 0.4 0.5 0.6 0.7 Figure 4: T ransition probability from activ e to resting, based on the p osterior mo de of the spatial field fitted to the lion data. Dark blue areas indicate a lo w probability of transitioning into the resting state, while yello w areas indicate a high probability . Pixels in the low er-right corner fall outside the triangulated mesh, so the field is predicted as zero there. W e mo delled the state-switching dynamics of the latent 2 -state Marko v chain as logit ( γ ( t ) 12 ) = β (12) 0 + h 12 ( hour t ) , logit ( γ ( t ) 21 ) = β (21) 0 + h 21 ( hour t ) + u ( r t ) , where r t is the 2D lo cation in the study area at time t and u is a Matérn field. The p erio dic function in b oth equations is h ij ( hour ) = 3 X k =1 β ( ij ) 1 k sin 2 π k hour 24 + 3 X k =1 β ( ij ) 2 k cos 2 π k hour 24 . Only the linear predictor for the transition from state 2 (activ e) to state 1 (resting) includes the spatial field, as state 1 is c haracterised b y virtually no mo vemen t. Consequen tly , a switch to state 2 occurs v ery near to the lo cation where the animal w as resting and dep ends primarily on the time sp ent there and the time of da y . Again using fmesher , w e approximated the spatial field by constructing a triangulated mesh with 2516 no des, leading to GMRF approximating with l = 2516 weigh ts and precision matrix as given in Equation ( 3 ) . The mesh is shown in App endix A.5 . Fitting 19 0.0 0.2 0.4 0.6 0.8 Time of da y Pr(activ e) 0 4 8 12 16 20 24 Figure 5: Probability of b eing active as a function of the time of day , obtained based on the p erio dically stationary state distribution. The gray band corresp onds to p oint wise 95% confid ence in terv als based on the approximate m ultiv ariate normal distribution of the MLE. the model with a bandwidth parameter of k = 15 to ok ab out 6 minutes on an Apple M2 chip with 16GB of memory . A dditionally , we also fitted a homogeneous mo del and mo dels including only the random field or only the p erio dic functions f ij . F or the fully parameteric mo dels, fitting times where negligible in comparison to the mo dels including a spatial field. Fitting the purely spatial mo del to ok ab out 4 minutes. W e find that the animals are very un lik ely to transition in to the resting state within a distinct region in the centre of the study area (cf. Figure 4 ). In other w ords, once active in this area, they tend to remain active rather than settle. A comparison with satellite imagery did not reveal any ob vious landscap e features explaining this pattern. This spatial structure ma y therefore reflect more subtle ecological drivers, suc h as prey distribution, so cial interactions, or unobserv ed habitat characteristics, and w arrants further ecological in vestigation. Isolating the effect of time of da y via the p erio dically stationary distribution (Koslik, F eldmann, et al., 2025 ) shows a clear diel pattern (cf. Figure 5 ). Activit y is highest during the night and early morning hours, and lo west during the daytime, which is consisten t with the predominantly no cturnal b ehaviour of lions in arid environmen ts. 20 4 Discussion W e consider com bining hidden Marko v mo dels (HMMs) and laten t Gaussian fields in a unified mo delling framework, where, dep ending on the application, the GF comp onen t ma y en ter at different levels of the mo del hierarc hy . Recent adv ances in automatic dif- feren tiation and automatic Laplace approximation hav e, in principle, made such mo dels computationally feasible. In particular, these to ols in tegrate naturally with the SPDE approac h, which provides Gaussian Mark ov random field appro ximations to Gaussian fields and consequently yields sparse precision matrices. This sparsit y is essen tial for scalable inference when the latent Gaussian comp onent is high-dimensional. A cen tral computational obstacle sp ecific to HMMs is that marginalisation o v er the discrete latent states — required to ev aluate the HMM log-lik eliho o d — induces global temp oral dep en- dence. Consequen tly , the Hessian matrix with resp ect to the laten t v ariables is dense, undermining the efficiency gains of the automatic Laplace appro ximation as implemen ted in ( R ) TMB . T o address this issue, we dev elop ed a mo dified forw ard algorithm for ev aluating the HMM log-likelihoo d. The key feature of this construction is that it generates a sparse Hessian, thereby restoring compatibility with SPDE-based GMRF represen tations and automatic Laplace approximation to ols. T aken together, these three ingredients allow rapid and scalable inference in mo dels that in tegrate discrete state switching with richly parameterised Gaussian fields. W e illustrated the practical p erformance of the approach in tw o case studies. The first concerned stellar flare detection, where a state-switc hing flaring pro cess was mask ed b y quasi-p erio dic oscillations in stellar brigh tness mo delled via a Gaussian pro cess. The second analysed mo v ement data from African lions in the Central Kalahari, where b ehavioural switc hing w as mo dulated by a latent spatial field entering the HMM lik eliho o d. In b oth cases, the prop osed framew ork enabled flexible mo delling while retaining remark able computational efficiency . Sev eral extensions app ear promising. F or instance, the SPDE approach has already b een generalised to latent fields that are non-stationary (Lindgren, Rue, and Lindström, 2011 ), and non-Gaussian or spatio-temp oral pro cesses are also p ossible (Lindgren, Bolin, et al., 2022 ). F urthermore, the SPDE framework can also b e applied to non-Euclidean manifolds (Lindgren, Rue, and Lindström, 2011 ), suc h as curved surfaces or ev en netw orks. Exploring these ric her settings as building blo cks inside HMMs is an in teresting av enue for future researc h. 21 A c kno wledgmen ts The author thanks Roland Langro ck for helpful comments on an earlier v ersion of this man uscript as well as Kasp er Kristensen for answ ering v arious questions related to RTMB . Conflict of In terest The author declares that there are no conflicts of interest. References Ammann, Katharina, Timo A dam, and Jan-Ole K oslik (2026). “ Non-homogeneous Marko v- switc hing generalised additiv e mo dels for lo cation, scale, and shap e”. In: arXiv pr eprint arXiv:2601.03760 . Amoros, Rub en et al. (2019). “ A contin uous-time hidden Mark ov mo del for cancer surveil- lance using serum biomarkers with application to hepato cellular carcinoma”. In: Metr on 77.2, pp. 67–86. Bac hl, F abian E, Finn Lindgren, David L Borchers, and Janine B Illian (2019). “ inlabru: an R pac k age for Bay esian spatial mo delling from ecological survey data”. In: Metho ds in Ec olo gy and Evolution 10.6, pp. 760–766. Bates, Douglas M and Donald G W atts (1988). Nonline ar Re gr ession Analysis and its Applic ations . V ol. 2. Wiley New Y ork. Bolin, David and Finn Lindgren (2009). “ W av elet Mark ov mo dels as efficient alternatives to tap ering and con volution fields”. In: Pr eprints in Mathematic al Scienc es 2009.13. Chen, Sida and Bärb el Finkenstädt (2023). “ Bay esian spline-based hidden Mark o v mo dels with applications to actimetry data and sleep analysis”. In: Journal of the Americ an Statistic al Asso ciation , pp. 1–11. Cressie, No el (2015). Statistics for Sp atial Data . John Wiley & Sons. Erk anli, Alaattin (1994). “ Laplace appro ximations for p osterior exp ectations when the mo de o ccurs at the b oundary of the parameter space”. In: Journal of the Americ an Statistic al Asso ciation 89.425, pp. 250–258. Esquiv el, J Arturo et al. (2025). “ Detecting stellar flares in photometric data using hidden Mark ov mo dels”. In: The Astr ophysic al Journal 979.2, p. 141. 22 F eldmann, Carlina C, Sina Mews, Angelica Co culla, Ralf Stanewsky, and Roland Langro ck (2023). “ Flexible mo delling of diel and other p erio dic v ariation in hidden Marko v mo dels”. In: Journal of Statistic al The ory and Pr actic e 17.3, p. 45. Fisc her, Jan-Ole (2026). LaMa: Fast Numeric al Maximum Likeliho o d Estimation for Latent Markov Mo dels . R pack age version 2.1.0. url : https://CRAN.R- project.org/ package=LaMa . F oreman-Mack ey , Daniel, Eric Agol, Siv aram Am bik asaran, and Ruth Angus (2017). “ F ast and scalable Gaussian pro cess mo deling with applications to astronomical time series”. In: The Astr onomic al Journal 154.6, p. 220. K oslik, Jan-Ole (2024). “ Efficien t smo othness selection for nonparametric Mark ov-switc hing mo dels via quasi restricted maximum likelihoo d”. In: arXiv pr eprint arXiv:2411.11498 . — (2025). “ T ensor-pro duct in teractions in Mark o v-switching mo dels”. In: arXiv pr eprint arXiv:2507.01555 . K oslik, Jan-Ole, F ann y Dup ont, et al. (2025). “ Flexible unimo dal density estimation in hidden Mark o v mo dels”. In: arXiv pr eprint arXiv:2511.17071 . K oslik, Jan-Ole, Carlina C F eldmann, Sina Mews, Rouv en Michels, and Roland Langro c k (2025). “Inference on the state pro cess of p erio dically inhomogeneous hidden Marko v mo dels for animal b eha vior”. In: The Annals of Applie d Statistics 19.4, pp. 2724–2737. Kristensen, Kasp er (2026). R TMB: ‘R’ Bindings for TMB’ . R pack age version 1.8. url : https://CRAN.R- project.org/package=RTMB . Kristensen, Kasp er, Anders Nielsen, Casp er W B erg, Hans Sk aug, and Bradley M Bell (2016). “ TMB: automatic differentiation and Laplace approximation”. In: Journal of Statistic al Softwar e 70, pp. 1–21. Langro c k, Roland, Ruth King, et al. (2012). “ Flexible and practical mo deling of animal telemetry data: hidden Marko v mo dels and extensions”. In: Ec olo gy 93.11, pp. 2336– 2342. Langro c k, Roland, Thomas Kneib, Richard Glennie, and Théo Mic helot (2017). “ Marko v- switc hing generalized additive mo dels”. In: Statistics and Computing 27, pp. 259– 270. Langro c k, Roland, Thomas Kneib, Alexander Sohn, and Stacy L DeRuiter (2015). “ Non- parametric inference in hidden Marko v mo dels using P-splines”. In: Biometrics 71.2, pp. 520–528. Le Gland, F rancçois and Laurent Mev el (2000). “ Exp onen tial forgetting and geometric ergo dicit y in hidden Marko v mo dels”. In: Mathematics of Contr ol, Signals and Systems 13.1, pp. 63–93. 23 Lindgren, Finn (2026). fmesher: Triangle Meshes and Relate d Ge ometry To ols . R pack age v ersion 0.7.0. url : https://CRAN.R- project.org/package=fmesher . Lindgren, Finn, David Bolin, and Håv ard Rue (2022). “ The SPDE approach for Gaussian and non-Gaussian fields: 10 years and still running”. In: Sp atial Statistics 50, p. 100599. Lindgren, Finn and Håv ard Rue (2015). “ Bay esian spatial mo delling with R-INLA”. In: Journal of Statistic al Softwar e 63, pp. 1–25. Lindgren, Finn, Håv ard Rue, and Johan Lindström (2011). “ An explicit link betw een Gaussian fields and Gaussian Marko v random fields: the sto c hastic partial differential equation approach”. In: Journal of the R oyal Statistic al So ciety Series B: Statistic al Metho dolo gy 73.4, pp. 423–498. Liu, Xinyi, Dimitris Margaritis, and P eiming W ang (2012). “Sto ck market v olatility and equit y returns: Evidence from a tw o-state Marko v-switc hing mo del with regressors”. In: Journal of Empiric al Financ e 19.4, pp. 483–496. Lystig, Theo dore C and James P Hughes (2002). “ Exact computation of the observ ed information matrix for hidden Marko v mo dels”. In: Journal of Computational and Gr aphic al Statistics 11.3, pp. 678–689. MacF arlane, K. (2014). “ The ecology and management of Kalahari lions in a conflict area in cen tral Botswana”. Dissertation. Canberra (Australia): Australian National Univ ersity. McClin to c k, Brett T, Ruth King, et al. (2012). “ A general discrete-time modeling framework for animal mo vemen t using multistate random walks”. In: Ec olo gic al Mono gr aphs 82.3, pp. 335–349. McClin to c k, Brett T, Roland Langro ck, et al. (2020). “ Uncov ering ecological state dynamics with hidden Marko v mo dels”. In: Ec olo gy Letters 23.12, pp. 1878–1903. Mews, Sina, Jan-Ole Koslik, and Roland Langro ck (2025). “ Ho w to build y our latent Mark ov mo del: The role of time and space”. In: Statistic al Mo del ling . in press. Mews, Sina, Roland Langro ck, Ruth King, and Nicola Quick (2022). “ Multistate capture– recapture mo dels for irregularly sampled data”. In: The Annals of Applie d Statistics 16.2, pp. 982–998. Mic helot, Théo (2025). “ hmmTMB: Hidden Mark ov mo dels with flexible co v ariate effects in R”. In: Journal of Statistic al Softwar e 114, pp. 1–45. Mic hels, Rouv en, Rob ert Ba jons, and Jan-Ole Fischer (2026). “ In tegrating unsup ervised and sup ervised learning for the p rediction of defensive schemes in American fo otball”. In: arXiv pr eprint arXiv:2602.10784 . 24 Mic hels, Rouv en and Roland Langro ck (2025). “ Nonparametric estimation of biv ariate hidden Mark o v mo dels using tensor-pro duct B-splines”. In: Statistic al Mo del ling 0.0, p. 1471082X251335431. O’Hagan, Anthon y (1978). “ Curve fitting and optimal design for prediction”. In: Journal of the Royal Statistic al So ciety: Series B (Metho dolo gic al) 40.1, pp. 1–24. Ogden, Helen (2021). “ On the error in Laplace appro ximations of high-dimensional in tegrals”. In: Stat 10.1, e380. Ötting, Marius, Roland Langro ck, and Antonello Maruotti (2023). “ A copula-based m ultiv ariate hidden Marko v mo del for mo delling momentum in fo otball”. In: AStA Advanc es in Statistic al Analysis 107.1, pp. 9–27. Sop er, Braden C, Mari Nygård, Ghaleb Ab dulla, Rui Meng, and Jan F Nygård (2020). “ A hidden Marko v mo del for p opulation-level cervical cancer screening data”. In: Statistics in Me dicine 39.25, pp. 3569–3590. Tierney , Luke and Joseph B Kadane (1986). “ Accurate appro ximations for p osterior momen ts and marginal densities”. In: Journal of the Americ an Statistic al Asso ciation 81.393, pp. 82–86. V an der V aart, Aad W (2000). Asymptotic Statistics . V ol. 3. Cam bridge Univ ersity Press. Viterbi, Andrew (2003). “ Error b ounds for con volutional co des and an asymptotically optim um deco ding algorithm”. In: IEEE Tr ansactions on Information The ory 13.2, pp. 260–269. Zhang, Mengqi, Xin Jiang, Zehua F ang, Y ue Zeng, and Ke Xu (2019). “ High-order hidden Mark ov mo del for trend prediction in financial time series”. In: Physic a A: Statistic al Me chanics and its Applic ations 517, pp. 1–12. Zucc hini, W alter, Iain L MacDonald, and Roland Langro ck (2016). Hidden Markov Mo dels for Time Series: An Intr o duction using R . 2nd. Chapman and Hall/ CR C press. A App endix A.1 Pro ofs Notation Let ℓ T ( θ , x ) denote the log-likelihoo d function based on T observ ations. This can either b e the log-lik eliho o d of latent observ ations x 1 , . . . , x T ( log f ( x 1 , . . . , x T ) ) or the log-likelihoo d of observ ations y 1 , . . . , y T giv en a latent field ( log f ( y 1 , . . . , y T | x ) ). Let ˜ ℓ T ( θ , x ) b e the resp ectiv e approximation given in Equation ( 10 ). 25 Let ϕ t denote the exact scaled forw ard v ariable with entries ϕ t ( j ) = Pr ( S t = j | x 1 , . . . , x t ) , and let ˜ ϕ k t denote the approximation based on the last k observ ations, with entries ˜ ϕ k t ( j ) = Pr ( S t = j | x t − k +1 , . . . , x t ) . Now define the prediction filter p t = ϕ t − 1 Γ ( t ) and its appro ximation ˜ p k t = ˜ ϕ t − 1 Γ ( t ) . Assumptions W e mak e the follo wing assumptions. 1. Compact parameter and laten t space: Θ × X is a compact subset of R p × R d . 2. Bounded state-dep endent densities: F or all states j and all times t , the emission densities satisfy 0 < m ≤ f j ( · ) ≤ M < ∞ , uniformly o v er all relev an t ( θ , x ) ∈ Θ × X . 3. Uniform ergo dicity of the Mark o v chain: There exists a blo ck length l ≥ 1 and a constant ε > 0 such that for all t , Γ ( t ) Γ ( t +1) · · · Γ ( t + l − 1) ij ≥ ε for all states i, j. This ensures that the Mark ov c hain is uniformly ergo dic. W e call l the index of primitivit y of the chain. Lemma 1 (Filter b ound propagates to log-likelihoo d) . Under Assumptions (1)-(3), we have sup ( θ , x ) ∈ Θ ×X ℓ T ( θ , x ) − ˜ ℓ T ( θ , x ) ≤ T X t =1 M m sup ( θ , x ) ∥ p t − ˜ p k t ∥ wher e ∥ u ∥ = P i | u i | denotes the L 1 -norm of a ve ctor u . If a r andom se quenc e y 1 , . . . , y T is involve d, the ine quality holds p athwise (i.e., for e ach r e alization of y ). Pr o of. F or j = 1 , . . . , N , let f ( t ) j denote the evaluate d state-dependent densities, i.e. f j ( x t | x ; θ ) (case 1) or f j ( y t | x ; θ ) (case 2). Then the conditional densities multiplied in the lik eliho o d are f t = N X i =1 N X j =1 ϕ t − 1 ( i ) γ ( t ) ij f ( t ) j = N X j =1 p t ( j ) f ( t ) j . Denote with ˜ f k t the same quan tity with ϕ t − 1 replaced b y ˜ ϕ k t − 1 . Since the f ( t ) j are b ounded, the error in the conditional densities is also b ounded: sup ( θ , x ) | f t − ˜ f k t | ≤ M sup ( θ , x ) ∥ p t − ˜ p k t ∥ . 26 Applying the mean v alue theorem for logarithms gives | log f t − log ˜ f k t | = | f t − ˜ f k t | ξ , for some ξ ∈ (min( f t , ˜ f k t ) , max( f t , ˜ f k t )) ≥ m . Hence, sup ( θ , x ) | log f t − log ˜ f k t | ≤ M m sup ( θ , x ) ∥ p t − ˜ p k t ∥ . Summing o v er t = 1 , . . . , T , and using the triangle inequalit y , we obtain sup ( θ , x ) | ℓ T ( θ , x ) − ˜ ℓ T ( θ , x ) | ≤ T X t =1 M m sup ( θ , x ) ∥ p t − ˜ p k t ∥ . Pr o of of The or em 1: Case 1. W e first cov er the deterministic result, i.e. when ℓ T ( x , θ ) = log f θ ( x 1 , . . . , x T ) , purely a function of a parameter θ and latent v ariables x . W e show that the approximation error decays geometrically in k , that is sup ( θ , x ) ∈ Θ ×X ∥ p t − ˜ p k t ∥ ≤ C ρ k , C > 0 and ρ ∈ (0 , 1) , relying on the work of Le Gland and Mev el ( 2000 ). Under our assumptions, the assumptions of Le Gland and Mevel ( 2000 ) are satisfied. The truncated filter ˜ p k t corresp onds to a filter started at time t − k from an arbitrary initial distribution and propagated forward to time t . In the notation of Le Gland and Mevel ( 2000 ), this is equiv alent to comparing filters on an interv al [ m, n ] with m = t − k and n = t , so that the exp onen tial forgetting rate dep ends only on the lag n − m = k . In our notation, Theorem 2.1 states ∥ p t − ˜ p k t ∥ ≤ 2 ⌊ ( k +1) /l ⌋ Y κ =1 (1 − ϵ l [ δ ( x ( t − k )+( κ − 1) r +1 ) · · · δ ( x ( t − k )+ κr − 1 )]) , where l is the index of primitivit y of the chain (see Assumptions), δ ( x ) = max j f j ( x ) min j f j ( x ) ϵ = min + γ ij is the minim um of the p ositiv e entries of the t.p.m. Since in our case Γ ( t ) migh t b e time-v arying, we use ϵ = min + i,j,t γ ( t ) ij . The p ro duct o ver κ corresp onds to successiv e blo c ks of length l . Boundedness of the state-dep endent densities implies δ ( x ) ≤ M m for an y x . Hence we arrive at the more crude b ound ∥ p t − ˜ p k t ∥ ≤ 2 ⌊ ( k +1) /l ⌋ Y κ =1 (1 − ( ϵ m M ) l ) = 2(1 − ϵ [ m M ] l ) ⌊ ( k +1) /l ⌋ ≤ 2 (1 − ( ϵ m M ) l ) 1 /l k = C ρ k , 27 and application of Lemma 1 gives sup ( θ , x ) ∈ Θ ×X ℓ T ( θ , x ) − ˜ ℓ T ( θ , x ) ≤ T X t =1 M m sup ( θ , x ) ∥ p t − ˜ p k t ∥ ≤ T M C m ρ k . Pr o of of The or em 1: Case 2. W e now cov er the case where ℓ T ( x , θ ) = log f θ ( y 1 , . . . , y T | x ) , is a function of a parameter θ , latent v ariables x and a sto chastic pro cess generated from the mo del. Hence, a deterministic result is not p ossible and we show sup ( θ , x ) ∈ Θ ×X ℓ T ( θ , x ) − ˜ ℓ T ( θ , x ) ≤ T C ρ k a.s. , In this case, we can indeed directly apply Theorem 2.2 of Le Gland and Mevel ( 2000 ) and ha ve sup ( θ , x ) ∥ p t − ˜ p k t ∥ ≤ C ρ k a.s. , where ρ again dep ends on the index of primitivity of the chain and the minimum p ositive en try of the t.p.m. Path wise application of Lemma 1 then again giv es sup ( θ , x ) ∈ Θ ×X ℓ T ( θ , x ) − ˜ ℓ T ( θ , x ) ≤ T X t =1 M m sup ( θ , x ) ∥ p t − ˜ p k t ∥ ≤ T M C m ρ k , a.s. Remarks on the assumptions R emark 1 (Compactness of X ) . Within the Laplace appro ximation, the ob jectiv e ℓ T ( θ , x ) − 1 2 x ⊤ Qx is maximised at ˆ x θ . The quadratic term dominates as ∥ x ∥ → ∞ , allo win g restriction to a compact ball around ˆ x θ . R emark 2 (Boundedness of state-dep enden t densities) . As in the main manuscript, notation is complicated b y the fact that w e either ev aluate the forward algorithm on laten t v ariables x 1 , . . . , x t (case 1) or genuine observ ations y 1 , . . . , y T (case 2). How ev er, under Assumption (1) and the additional assumption of non-zero v ariance parameters, the state-dep enden t densities are b ounded in any of the following cases: 1. f j ( y t ; θ ) , indep endent of x and assuming non-zero v ariance parameters. 28 2. f j ( y t ; θ , x ) , non-zero v ariance and with x in compact set X . 3. f j ( x t ; θ , x ) , non-zero v ariance and with x in compact set X . R emark 3 (Uniform ergo dicity of the Mark ov c hain) . If all en tries of Γ ( t ) are positive and dep end on b ounded cov ariates and parameters in θ , or on random effects x , the compactness of Θ × X implies γ ( t ) ij ≥ ε > 0 . Ergo dicit y is slightly weak er but also holds if en tries are fixed at γ ( t ) ij = 0 , as long as there exist an l , suc h that ( Γ ( t ) Γ ( t +1) · · · Γ ( t + l ) ) ij ≥ ε > 0 for all i, j, t . The integer l is called the index of primitivity of the chain, which is greater than 1 in this case. Consequently , the assumption is violated if the Mark ov chain is p erio dic or reducible and in such a case the forw ard-appro ximation is not applicable. A.2 Qualit y of the Laplace approximation The Laplace appro ximation replaces the conditional distribution of the latent v ariables x giv en the data with a Gaussian distribution centered at its mo de (Tierney and Kadane, 1986 ). If the function g in the exp onen t of the p osterior is exactly quadratic, the Laplace appro ximation is exact. This o ccurs, for example, when b oth the observ ation and pro cess distributions are Gaussian and linear in x . In our mo del, this condition only holds for one of the tw o densities, while the other corresp onds to a non-Gaussian, non-linear HMM lik eliho o d. Ev en in this case, the Laplace approximation provides a lo cal Gaussian approximation around the p osterior mo de. Its accuracy dep ends on the p osterior b eing dominated by a single mo de. In practice, it often p erforms w ell, particularly when the p osterior is concen trated around a single mo de and the sample size is sufficient to constrain the distribution in a relatively small region of the high-dimensional space, i.e., when the p osterior is sharply p eaked near the mo de, corresp onding to relativ ely large eigenv alues of the Hessian H θ (Tierney and Kadane, 1986 ; Ogden, 2021 ). A.3 A sim ulation exp eriment All code for full reproducibility of the sim ulation experiment is a v ailable at https: //github.com/janolefi/HMMs_GFs . W e generate data from a 2-state HMM where the transition probabilit y matrix is driven b y a spatial field. W e simulate state-dep enden t gamma-distributed step lengths, reparam- 29 eterised in terms of mean and standard deviation, step t | { S t = j } ∼ Gamma ( µ j , σ j ) , j = 1 , 2 with mean v alues µ 1 = 0 . 2 , µ 2 = 5 and standard deviations σ 1 = 0 . 5 , σ 2 = 3 . A t eac h time p oint, we also randomly dra w a turning angle from a von Mises distribution, indep enden tly of the laten t state. The mean is chosen as to imply a biased random w alk to wards the lo cation (0 , 0) to prev ent the simulated track from disp ersing to o muc h, with the concen tration parameter b eing set to 0 . 3 . W e then compute Euclidean lo cations at each time point, initialising with the position (0 , 0) and calculating the subsequen t p osition based on the sampled step length and direction. The transition probabilit y γ 12 is homogeneous while γ ( t ) 21 dep ends on a spatial field. Sp ecifically , we set logit ( γ 12 ) = β (12) 0 and logit ( γ ( t ) 21 ) = β (21) 0 + u ( r t ) , with β (12) 0 = β (21) 0 = − 2 . W e fix the in tial distribution of the chain at δ (1) = (0 . 5 , 0 . 5) and do not estimate it as it is not the fo cus of this study and usually of minor practical relev ance. The true spatial field u which we use for simulation is parametric with u ( r ) = u ( x, y ) = 2 sin(2 π x 40 ) + 2 cos(2 π y 40 ) . F or time-series length T ∈ { 5000 , 10000 } , we sim ulate 200 data sets each. F or eac h sim ulation run, we then fit the corresp onding HMM with bandwidth parameters k ∈ { 2 , 5 , 10 , 15 } to in v estigate the practical effect of v arying this parameter. F or eac h run, w e construct a GMRF appro ximation to the spatial field based on a triangulated mesh. Ho w ever, we missp ecify the mo del b y additionally including a spatial field for the transition probabilit y γ 12 in order to insp ect the mo del’s capabilit y of smoothing this field appropriately to zero. Figure A.1 shows the true and estimated fields for a single sim ulation run, indicating adequate recov ery of the true field. W e cannot directly compate the estimated intecept β (21) 0 to its true v alue as the predicted Gaussian field ˆ u is pulled to wards a zero mean by the GMRF density , while the true function u migh t ha ve a non-zero mean inside the area that was visited. Hence, we compute the mean of ˆ η ( t ) 21 − η ( t ) 21 o ver time, and similarly for η ( t ) 12 . W e find a minor up ward bias in the av erage difference for b oth linear predictors, i.e. the estimated mo dels tend to b e slightly less p ersistent than the true one on av erage for b oth sample sizes and all bandwidth parameters (cf. Figure A.2 ). Ov erall, the bandwidth parameter seems to ha ve little influence on the estimates: for k ≥ 5 all distributions are virtually identical. T o inv estigate interpolation p erformance, w e compute the true and estimated field on a 30 0.0 0.2 0.4 0.6 0.8 1.0 −40 −20 0 20 40 −40 −20 0 20 40 60 γ 21 x y −40 −20 0 20 40 −40 −20 0 20 40 60 γ ^ 21 x y −40 −20 0 20 40 −40 −20 0 20 40 60 γ ^ 12 x y Figure A.1: Estimated spatial field in one of the 200 sim ulation runs with 10,000 ob- serv ations and bandwidth k = 15 . T rue transition probabilit y γ 21 ( r ) as a function of lo cation (left), estimated transition probabilit y ˆ γ 21 ( r ) (middle) and estimated transition probabilit y ˆ γ 12 ( r ) (righ t). F or the latter, the true function is completely flat, hence the ideal fit would b e a flat surface. The white lines indicate the non-con vex hull around the observ ed lo cations used for mesh construction in the GMRF approximation. The right panel additionally shows the visited lo cations as white dots. 5000 10000 2 5 10 15 2 5 10 15 −0.2 −0.1 0.0 0.1 0.2 η ^ 12 − η 12 5000 10000 2 5 10 15 2 5 10 15 −0.2 0.0 0.2 0.4 Bandwidth η ^ 21 − η 21 Bandwidth 2 5 10 15 Figure A.2: Violin plots and b oxplots of the av erage difference 1 T P T t =1 ( ˆ η ( t ) ij − η ( t ) ij ) b etw een estimated and true linear predictors in sample, i.e. ev aluated at the observed loactions for sample sizes T = 5000 , 10000 and bandwidth parameters k = 2 , 5 , 10 , 15 . 31 5000 10000 2 5 10 15 2 5 10 15 0.7 0.8 0.9 1.0 Cor ( γ 21 , γ ^ 21 ) 5000 10000 2 5 10 15 2 5 10 15 0.05 0.10 0.15 0.20 RMSE ( γ ^ 12 ) 5000 10000 2 5 10 15 2 5 10 15 0.10 0.15 0.20 Bandwidth RMSE ( γ ^ 21 ) Bandwidth 2 5 10 15 Figure A.3: Violin plots and b oxplots of correlation b etw een true and estimated transition probabilit y γ 21 ( s ) on a grid (inside the non-conv ex h ull spanned by the observed lo cations) (top panel), and ro ot mean squared error (RMSE) b et ween estimated and true transition probabilities (b ottom tw o panels). The correlation b etw een ˆ γ 12 ( s ) and the constant true field is undefined as the latter has zero v ariance. 512 × 512 grid in each run. As we do not exp ect to approximate the true field outside the area of observ ations, we restrict ourselv es to those grid cells that lie inside the non-conv ex h ull spanned by observ ed lo cations, which is computed anyw ay in eac h run for mesh construction (cf. Figure A.1 ). Inside this region, the correlation b etw een the estimate ˆ γ 21 ( s ) and its true v alue γ 21 ( s ) is computed on the grid. Computing the corresp onding corresp onding quantit y for ˆ γ 12 ( s ) is not p ossible as the true field is constant and hence has zero v ariance. W e find that correlation is high, with an a verage v alue slightly b elow 0.9 (cf. Figure A.3 ). As exp ected, the distribution b ecomes more concentrated for the larger sample size of T = 10000 . Again, virtually no effect of the bandwidth parameter is visible. A dditionally , we compute the ro ot mean squared errors (RMSE) s 1 n X i ˆ γ 12 ( s i ) − γ 12 2 , and s 1 n X i ˆ γ 21 ( s i ) − γ 21 ( s i ) 2 , 32 where n is the n umber of grid cells inside the area of interest. The RMSE b et ween ˆ γ 12 ( s ) and the constant field has an a verage v alue around 0.06, with the distribution stabilising for k ≥ 5 (cf. Figure A.3 ). P erformance for the true field is slightly worse, with an av erage RMSE around 0.12. Again deviation in the distribution of v alues is only visible b etw een k = 2 and k = 5 . Lastly , the estimates for the state-dep enden t means µ 1 and µ 2 and standard deviations σ 1 and σ 2 app ear mostly un biased (cf. Figure A.5 ). As is to b e exp ected, v ariance decreases with in creasing sample size. The effect of the bandwidth parameter is barely noticable, again only with visible differences b etw een k = 2 and k = 5 . 33 A.4 GP with oscillating co v ariance function F or the stellar flare case study , w e use the Gaussian pro cess detailed in Section 3.3 of Lindgren, Rue, and Lindström ( 2011 ). This is based on a complex v ersion of the SPDE giv en in ( 2 ), i.e. κ 2 exp( iπ ω ) − ∆ u 1 ( r ) + iu 2 ( r ) = W 1 ( r ) + i W 2 ( r ) where ω ∈ (0 , 1) is the oscillation parameter, and W 1 ( r ) and W 2 ( r ) are indep endent Gaussian white noise pro cesses. Oscillation increases with the parameter ω . The real and imaginary parts, u 1 ( s ) and u 2 ( s ) , are indep endent with iden tical sp ectra. Hence, the same oscillating cov ariance function K ( v , w ) = 1 2 sin( π ω ) κ 3 exp − κ cos π ω 2 | v − w | sin π ω 2 + κ sin π ω 2 | v − w | applies to either comp onent. Its simple form combines exp onential decay with p erio di c oscillation. Figure A.4 giv es some in tuition for the b eha viour of K . The asso ciated precision matrix has closed form and is given in the main text. 0 2 4 6 8 10 −0.15 −0.05 0.05 0.15 |v − w| K(v , w) Figure A.4: Oscillating cov ariance function K ( v , w ) for parameters ω = 0 . 98 an d κ = 7 . 34 A.5 A dditional figures 5000 10000 µ 1 µ 2 2 5 10 15 2 5 10 15 0.150 0.175 0.200 0.225 0.250 0.275 4.7 4.8 4.9 5.0 5.1 5.2 µ 5000 10000 σ 1 σ 2 2 5 10 15 2 5 10 15 0.4 0.5 0.6 2.8 2.9 3.0 3.1 3.2 Bandwidth σ Bandwidth 2 5 10 15 Figure A.5: Violin plots and b o xplots of the estimated state-dependent means and standard deviations for T = 5000 , 10000 observ ations and bandwidth parameter k = 2 , 5 , 10 , 15 in the sim ulation exp eriment. 35 1325 1330 1335 1340 1345 1350 0 50 100 150 Time (da ys) Flux Quiet Firing Decaying T rend Figure A.6: Complete time series of M dwarf TIC 031381302, coloured according to the most likely decoded state. The dashed gra y b o x sho ws the section of the time series depicted in the main man uscript. Step length (km) Density 0 1 2 3 4 0.0 0.4 0.8 State 1 State 2 Marginal T urning angle (radians) Density 0.00 0.10 0.20 − π − π 2 0 π 2 π Figure A.7: State-dep enden t distributions for the 2-state lion HMM, w eighted b y the appro ximate frequency of state o ccupancy (based on the deco ded states). 36 Figure A.8: T riangulated mesh used to approximate the Gaussian field in the lion HMM b y a GMRF. Observed lo cations are sho wn as dark blue crosses. 37

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment