Symphony: A Cognitively-Inspired Multi-Agent System for Long-Video Understanding

Despite rapid developments and widespread applications of MLLM agents, they still struggle with long-form video understanding (LVU) tasks, which are characterized by high information density and extended temporal spans. Recent research on LVU agents …

Authors: Haiyang Yan, Hongyun Zhou, Peng Xu

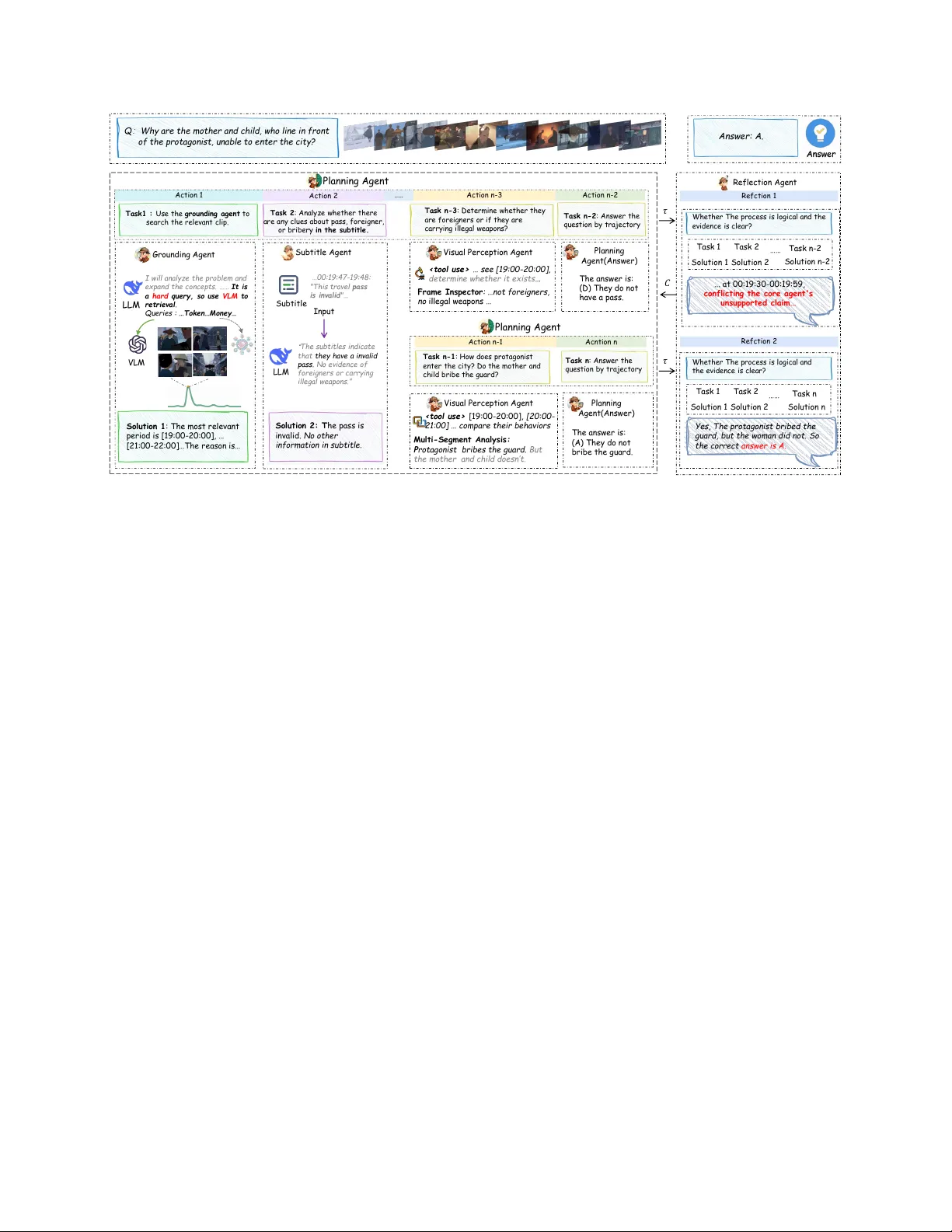

Symphony: A Cognitiv ely-Inspir ed Multi-Agent System f or Long-V ideo Understanding Haiyang Y an 1,3,* , Hongyun Zhou 2,* , Peng Xu 2 , Xiaoxue Feng 2 , Mengyi Liu 2,† 1 Institute of Automation, Chinese Academy of Sciences 2 Kuaishou T echnology 3 School of Future T echnology , Univ ersity of Chinese Academy of Sciences yanhaiyang2022@ia.ac.cn, { zhouhongyun, xupeng09, fengxiaoxue03, liumengyi } @kuaishou.com Abstract Despite rapid de velopments and widespr ead applications of MLLM agents, the y still struggle with long-form video un- derstanding (L VU) tasks, which are char acterized by high information density and extended temporal spans. Recent r esearc h on L VU agents demonstrates that simple task de- composition and collabor ation mechanisms ar e insufficient for long-chain r easoning tasks. Mor eover , dir ectly reduc- ing the time context thr ough embedding-based retrie val may lose k e y information of comple x pr oblems. In this paper , we pr opose Symphony , a multi-agent system, to alleviate these limitations. By emulating human cognition patterns, Symphony decomposes L VU into fine-grained subtasks and incorporates a deep r easoning collaboration mechanism enhanced by reflection, effectively impro ving the r eason- ing capability . Additionally , Symphony pr ovides a VLM- based gr ounding appr oach to analyze L VU tasks and as- sess the r elevance of video se gments, which significantly enhances the ability to locate complex pr oblems with im- plicit intentions and larg e tempor al spans. Experimental r esults show that Symphony ac hieves state-of-the-art per - formance on L VBench, LongV ideoBench, V ideoMME, and ML VU, with a 5.0% impr ovement over the prior state- of-the-art method on L VBench. Code is available at https://github .com/Haiyang0226/Symphony . 1. Introduction Long-form video understanding (L VU) is becoming in- creasingly important for a wide range of real-world appli- cations, such as sports commentary , intelligent surveillance, and film analysis [ 1 , 2 ]. Effecti ve L VU requires rob ust mul- W ork done during an internship at Kuaishou T echnology . * Equal contrib ution. † Corresponding author: Mengyi Liu (liu- mengyi@kuaishou.com). LLM A n s we r Mu lti - round … Q ue s ti on plann in g Loc a liz a tion Re fle c tion P e rc e ption Subt i t le C on text len g th Re flect io n A g ent LLM A n s we r Q ue s ti on Pl ann in g Ag ent A nswer Co mment Su btas k Sub - so luti o n Sub ti tle Agent A na lyz i ng su bti tles V i su al Percep ti o n A gent Percei v i ng v i su al i nfo rma ti o n Answer c red i ble (a) (b) G ro undin g A gent G ro undin g p ro ble m - relat ed se gm ent s Pe rc eptio n too ls VL M - based S co r i ng Cl ip - based Ret r ieva l M ul t i - Segme n t A na l yz Gl o b a l Sc o pe Sa m pl er F ra m e Ins pec t o r 1 . E nt it y r ec ognit io n 2 . S ent iment anal ys i s 3 . T opi c Mod el ing LLM Su btit le analy s is W or k flow T oo l call T oo l r espons e T oo l call T oo l r espons e S u bt it l e Anal ys is r esu l t s Gr oun d in g too ls Figure 1. (a) Single-agent approaches face limitations in reason- ing capacity when handling long-video understanding tasks requir- ing multi-step reasoning. (b) Our proposed multi-agent system achiev es enhanced reasoning capabilities through task decomposi- tion and collaboration along functional dimensions. timodal understanding to track entities and their relations ov er extended timelines and to employ advanced reasoning addressing complex queries. [ 3 – 6 ]. Ho wever , as video du- ration increases, both information density and complexity of questions increase significantly , e xacerbating the chal- lenges in multimodal understanding and logical inference. Despite recent adv ances in multimodal large language mod- els (MLLMs) [ 7 – 10 ], their instruction-follo wing and rea- soning capabilities degrade with longer inputs and higher task complexity [ 11 – 14 ], highlighting that accurate L VU re- mains a pressing and unresolved research challenge. MLLM-based agents have become the predominant paradigm for L VU. Some approaches [ 4 , 15 – 17 ] le ver - age vision-language models (VLMs) to construct video databases and employ retriev al-augmented generation (RA G) to extract relev ant video segments, thereby mitigat- ing the challenges posed by long sequences. Nev ertheless, generating ef fectiv e retriev al queries from complex ques- tions remains a significant challenge. This difficulty is further e xacerbated by the prev alence of noisy and redun- dant content in video databases, ultimately undermining the accuracy of retriev al processes [ 18 ]. Alternati vely , other works [ 6 , 19 – 21 ] leverage the reasoning capabilities of large language models (LLMs) by decomposing tasks and en- abling multi-step interactions with external tools to explore the solution space. Howe ver , since all reasoning is fully delegated to the core LLM, performance degrades signifi- cantly when task complexity exceeds the model’ s reasoning capacity [ 4 , 22 – 24 ]. Consequently , existing paradigms are hampered by limitations in grounding and reasoning, strug- gling to effecti vely tackle complex long-video tasks. T o enhance agents’ capability in addressing complex problems, multi-agent systems (MAS), which harness swarm intelligence through agent collaboration, are gain- ing significant traction [ 25 – 27 ]. V ideoMultiAgent [ 28 ] de- composes the reasoning tasks into modality-specific agents to mitigate the reasoning b urden of a single agent. Ho w- ev er, it introduces challenges in cross-modal information exchange and integration. Similarly , LvAgent [ 29 ] opti- mizes sub-agent task decomposition b ut relies on a linear pipeline for collaboration, which constrains the explorable task space during reasoning and f ails to o vercome the capa- bility limits of single-agent methods. Consequently , while MAS holds substantial promise for L VU applications, the design of ef fectiv e subtask decomposition and collaboration patterns remains a critical challenge. T o address these challenges, we propose Symphony , a centralized MAS as shown in Fig. 1 (b). It decom- poses the reasoning process of L VU tasks by emulating div erse dimensions of human cognition and orchestrates agents through a reflection-enhanced dynamic collabora- tion mechanism. Specifically , the planning agent performs task decomposition and orchestrates execution by delegat- ing sub-tasks to dedicated agents, facilitating iterativ e e vi- dence accumulation through multi-round interactions. Sub- sequently , the reflection agent e v aluates the reasoning chain to determine whether to output the answer or refine the rea- soning process. This collaborati ve strategy substantially reduces the reasoning load on individual models with low computational ov erhead. Moreover , we propose a ground- ing agent that lev erages an LLM to decompose queries and recognize intent, coupled with a VLM to analyze video- query relev ance, achie ving robust and precise video ground- ing. The main contributions are summarized as follo ws: • T o address the reasoning bottleneck in L VU tasks, we propose a cogniti vely-inspired MAS that decomposes problem-solving into specialized agents in functional dimensions. The task decomposition approach and reflection-enhanced dynamic collaboration mechanism effecti vely ele v ate the system’ s reasoning capabilities. • T o impro ve grounding accuracy for comple x questions, our proposed grounding agent le verages the reasoning ca- pabilities of LLMs and VLMs to enable deep semantic understanding of questions and achiev e more precise rel- ev ance assessment. • W e comprehensiv ely ev aluate Symphon y across four L VU datasets. On the most challenging L VBench, our approach surpasses the prior state-of-the-art method by 5.0%. It further attains results of 77.1%, 78.1%, and 81.0% on LongV ideoBench, V ideo MME, and ML VU. 2. Related W orks MLLMs for Long Video Understanding. Recent ad- vances in MLLMs have spurred the dev elopment of training-free strategies to address ultra-long sequence chal- lenges. Keyframe selection methods [ 30 – 33 ] employ pre- defined pipelines to extract question-relev ant video frames, while token compression approaches [ 34 – 36 ] reduce com- putational overhead by pruning redundant visual tokens. Howe ver , they struggle to preserve both long-term tempo- ral semantics and fine-grained details. RA G-based methods [ 15 – 17 , 37 , 38 ] lev erage external tools to generate te xtual descriptions from videos, constructing retriev able databases for querying relev ant snippets, yet the pre-extracted repre- sentations frequently suf fer from noise and misalignment with query-specific focus. In contrast to the single forward pass employed in prior methods, agent-based approaches offer a more comprehensiv e exploration of video content through planning and iterativ e tool inv ocation, thereby en- abling systematic and adaptive analysis be yond superficial feature extraction. Agent-based approaches can achieve more flexible video understanding. Specifically , V ideoAgent [ 19 ] employs an LLM to iterativ ely plan and make decisions through suc- cessiv e rounds of CLIP-based shot retriev al and VLM per- ception. V ideoChat-A1 [ 6 ] introduces a chain-of-shot rea- soning paradigm, employing LongCLIP [ 39 ] across succes- siv e iterations to select relev ant shots and progressi vely sub- divide them, thereby facilitating coarse-to-fine inference. Concurrently , D VD [ 4 ] constructs a multi-granular video database and implements tools grounded in the similar- ity between video captions and queries. Ho wever , these single-agent methodologies face two critical limitations: first, when task complexity surpasses the reasoning model’ s capacity , the agent tends to default to simplistic actions rather than engaging in deep reasoning, rendering perfor - mance heavily reliant on costly closed-source models [ 4 ]; Pla n n i n g A ge n t Q : W hy a r e th e mothe r a n d c hild , w ho l in e in fron t of th e pr ota g on is t, u na bl e to en ter the city ? Gr o und i ng A g ent Subt i t le A g ent V i sual Pe r c e pt i o n A gen t A c ti o n 1 A c ti o n 2 A c ti o n n - 3 …… An sw er: A. Re f le ct i o n A g ent A c ti o n n - 2 Pla nning A g ent (A nswe r ) Tas k 1 : Us e the gro un d in g agen t to s e a rc h the relev a n t c l ip . Tas k 2 : A n a l yz e wh e ther there a r e a n y c l u e s a bo u t p a s s , f o rei gn e r, o r brib e ry in the s ubti tle. Tas k n - 3 : Det e rmin e wh e ther they a re fo rei gn e rs o r if they a re c a rry in g ill e ga l we a p o n s ? Tas k n - 2 : A n s we r the que s ti o n by tra jec to ry Pla n n i n g A ge n t A c ti o n n - 1 A c n ti o n n Tas k n - 1 : Ho w d o e s p ro t a go n is t e n t e r t he c it y ? Do t he m o t her a n d c h ild brib e the gua rd ? Tas k n : A n s we r the qu e s t ion by t ra je c t o ry Vis ual Pe r ce pt i o n A g ent Planni ng Agent (A nswer ) R e fc ti o n 1 R e fc ti o n 2 I will a n a l yz e the p ro bl e m a n d e x p a n d the c o n c e p ts . …… It is a h ard que ry , s o us e V L M to re t rie v al . Qu e rie s : … To k en … M o n ey … LLM V L M So lut i o n 1 : The m o st r e le vant pe r i o d i s [1 9 : 0 0 - 2 0 : 0 0 ], … [21 : 0 0 - 2 2 : 0 0 ]… Th e r ea son i s… Subt i t le LLM … 00 : 1 9: 47 - 1 9: 4 8: " T h is tra v e l p as s is in v al id "… “ Th e s ubt it l e s in d ica t e tha t they h a v e a in v a l id p a s s . No e v id e n c e o f fo rei gn e rs o r c a rryin g ill e ga l we a p o n s . ” S o lut i o n 2 : T he pass i s i nvali d . No o t her i nf o rm at i o n in su bt i t le . Input < t o o l us e> … see [1 9 : 0 0 - 2 0 : 0 0 ], det e r m i ne w he t he r i t e xi st s … F ram e Inspec t o r : … no t f o re i g ner s, no i lle g al we apo ns … The answe r i s: (D) They d o no t hav e a pass. The answe r i s: ( A ) The y do no t br i be t he g uar d . < t o o l us e> [1 9 : 0 0 - 2 0 : 0 0 ], [20 : 0 0 - 2 1 : 0 0 ] … co mpa re t h eir behav i o rs M ult i - Segm ent A naly si s : P r o t ago nist b r i b e s t he gu ar d. B ut t he m o t her and chi ld d o esn’t . Task 1 Task 2 Task n - 2 …… So lut i o n 1 Solut i o n n - 2 So lut i o n 2 Whet h e r T h e p ro c e s s is l o gic a l a n d the e v id e n c e is c l e a r? ... at 0 0 : 1 9 : 3 0 - 0 0 : 1 9 : 5 9 , co nf lict i ng t he co re ag ent 's uns uppor t ed cl ai m ... Yes, Th e pr o t agoni st br i be d t he g uard , but t he wo m an d i d no t . So t he correct answe r is A. Whet h e r T h e p ro c e s s is l o gic a l a n d the e v id e n c e is c l e a r? 𝜏 𝜏 𝐶 Task 1 Task 2 Task n …… So lut i o n 1 So lut i o n n So lut i o n 2 Figure 2. The reflection-enhanced dynamic reasoning framework in Symphon y . The planning agent formulates a task plan and dynamically in vokes other agents to e xecute subtasks. Upon obtaining an initial solution, the Reflection agent ev aluates the reasoning chain τ , producing a critique C that guides a subsequent round of reasoning exploration. second, clip-based or RA G strategies often fail to com- prehensiv ely capture query-relev ant cues due to incomplete retriev al. Consequently , multi-agent systems emerge as a promising direction for achieving more accurate and rob ust long-video understanding. Multi-agent systems. V ideoMultiAgents [ 28 ] enables col- laborativ e multimodal reasoning by integrating specialized agents for te xt, visual, and graph analysis modalities. Ho w- ev er, these agents process their respecti ve features in iso- lation, failing to establish deep inter-modal interactions. L V Agent [ 29 ] employs a pre-selected fixed team of agents for multi-round discussions and v oting, yet it adheres to a linear static workflow , which constrains the exploration of complex solution spaces and pre vents dynamic, task-driv en adjustments. In contrast, our method achiev es deep decou- pling of complex reasoning tasks along the capability di- mension, significantly reducing the cognitive load for each agent. Additionally , we introduce a dedicated localization agent for precise key segment identification, providing a more accurate and robust solution for handling long videos with high spatio-temporal complexity . 3. Method W e propose Symphony , a multi-agent system composed of functionally specialized agents, as illustrated in Fig. 1 (b). In Section 3.1, we provide an o vervie w of the system. Section 3.2 details the collaboration mechanism among the agents, and Section 3.3 introduces our nov el grounding agent. 3.1. Overview Cognitiv e psychology traditionally decomposes human cognitiv e abilities into core dimensions: perception, at- tention, reasoning, language, and decision-making [ 40 ]. Building upon this framew ork, we propose a capability- dimension decoupled paradigm for L VU task decomposi- tion, implemented through a MAS. In our architecture, the planning and reflection agents jointly manage reasoning and decision-making; the grounding agent simulates the func- tion of attention by highlighting key video segments; the subtitle agent analyzes textual subtitles to fulfill the lan- guage processing component; and the visual perception agent performs perceptual tasks. In contrast to modality- based partitioning, which incurs high interaction costs from tight inter -module dependencies, our approach minimizes inter-agent coupling, significantly reducing the cost of in- formation integration. This strategic allocation of cognitiv e load across specialized modules effecti vely mitigates capac- ity overload in monolithic architectures, enhancing accu- racy and scalability in comple x L VU tasks. Specifically , the Planning Agent acts as the central co- ordinator , responsible for global task planning, multi-agent scheduling, information integration, and ultimately generat- ing the answer . T o efficiently and comprehensi vely identify question-relev ant segments and potential clues within the video, the Grounding Agent selects either a VLM-based relev ance scoring tool or a CLIP-based retriev al tool de- pending on the analysis of question comple xity . The Subti- Algorithm 1: Reflection-enhanced Dynamic Collaboration Input : Question Q , max attempts M Initialize: trajectory τ ← ∅ , state S t ← { τ , Q } A= {G (Grounding), V (V isual Perception), S (Subtitle) } m, n ← 0 while m < M do while n < M do a t ← PlanningAgent ( S t ) if a t = TERMIN A TE then break o t ← Execute a t using agent ∈ A Collect observation o t and update τ ← τ ∪ { ( a t , o t ) } n ← n + 1 C , V alid ← ReflectionAgent ( S t ) if V alid then break S ← S ∪ {C } , m ← m + 1 A ← PlanningAgent . answer ( S ) retur n A tle Agent processes video subtitles and performs semantic analysis to enable capabilities such as entity recognition, sentiment analysis, and topic modeling. The V isual P er- ception Agent conducts multi-dimensional visual percep- tion by in voking three tools: frame inspector, global sum- mary , and multi-segment analysis. Finally , the Reflection Agent performs a retrospective ev aluation of the reasoning trajectory; upon detecting logical inconsistencies or insuf- ficient evidence, it generates correcti ve suggestions to initi- ate a new round of refinement. The specific implementation and prompts for each agent can be found in Appendix A. 3.2. Collaboration Mechanism As illustrated in Fig. 2 , inspired by the classic Actor-Critic framew ork [ 41 ], we design a reflection-enhanced dynamic reasoning framew ork to orchestrate the agents for solv- ing L VU problems. The planning agent, denoted as the core polic y model π , lev erages the reasoning capabilities of LLM to generate sub-tasks for specialized agents, includ- ing grounding ( G ), visual perception ( V ), and subtitle ( S ). Specifically , the action space for the system is defined as A = {G , V , S } . (1) Motiv ated by recent explorations of V erifier’ s Law [ 42 ], which posits that verifying a solution is significantly easier than generating it, we introduce a reflection agent, repre- sented by ϕ as the v erification model, to validate both the reasoning process and the final task outcomes, providing critical analysis. This mechanism expands the exploration space of MAS and improves the precision of reasoning. The collaborativ e mechanism of the entire system is formalized in Algorithm 1. In the follo wing, we detail the forward rea- soning process and the reflection-enhanced procedure. In the forward reasoning stage, the planning agent e v al- uates and outputs the next specialized sub-task based on the state, iterating until an answer is obtained. The state S com- prises the initial question Q and the historical trajectory τ . For the t -th step, this process can be represented as a t = π ( S t ) ∈ A, S t = ( Q, τ t − 1 ) , τ t − 1 = ( a 1 , o 1 , . . . , a t − 1 , o t − 1 ) . (2) Subsequently , specialized agents e xecute action a t to gen- erate output o t , update the system state S t , and iterativ ely carry out the forward reasoning process until sufficient ev- idence is accumulated to formulate an answer . During this phase, the planning agent focuses exclusi vely on the core logical reasoning for L VU, concentrating model capabilities to dynamically construct solution paths tailored to varying queries. This approach significantly enhances both the ac- curacy and efficiency of task solving compared to existing fixed workflo ws. In the verification stage, the reflection agent processes S T . If the reasoning process is deemed rigorous with suffi- cient evidence, the procedure terminates; otherwise, the re- flection agent identifies deficiencies in the reasoning chain and generates a critique formalized as C = ϕ ( S T ) , followed by updates to the trajectory and state, as τ ′ t = τ ′ t ∪ {C } , S ′ t = ( Q, τ ′ t ) . (3) By lev eraging this verification mechanism, the reflection agent re-engages the planning agent’ s forward reasoning process, significantly expanding the exploration space of the MAS and yielding marked performance improvements on challenging problems. 3.3. Grounding Agent Grounding aims to identify multiple video segments S = { s 1 , s 2 , . . . , s n } from a video V that are relev ant to a gi ven query , thereby eliminating interference from irrele vant con- tent. W e define a query mapping function f to analyze and enhance Q . This process is formally defined as S = { s ∈ V | sim ( f ( Q ) , s ) ≥ θ } . (4) where sim ( · ) denotes a function to measure the rele v ance between the query and video segment. Complex questions are pre v alent in L VU tasks, thereby imposing higher demands on grounding capabilities. Specifically , this complexity is manifested in two aspects: (1) question ambiguity , where the question contains vague references, abstract concepts, or high-lev el actions without explicit entities, and (2) multi-hop reasoning , wherein the question requires chaining together multiple pieces of e vi- dence across interrelated scenes or inferring implicit inter- mediate scenes. CLIP-based retriev al using the initial ques- tion as the query , as f ( Q ) = Q, sim ( f ( Q ) , s ) = CLIP ( f ( Q ) , s ) . (5) 19:35 20:20 Ground-truth timestamp VLM-based Scoring Clip-based Retrieval 00:00 61:06 Q = “Why are the mo ther and child, who line in front of the protago nist, unable to enter the cit y? (A) They do not bribe th e guard ✅ (B) They a re foreigners (C) They bring illegal weapons (D) They do not have a pass” Why are the mother and child, who line in front of the … The key points to consider include: f(Q) = Q sim(f(Q), v) Result - topk sim socre f(Q) = LLM(Q) sim(f(Q), v) Result - topk sim socre 20:59 28:05 Score 4: ...and gu ards, suggesting checkpoint or entry requirement ... Score 3: ... show s a hand holding green coins, suggesting a br ibe ... Score 3: ... prota gonist ... could be related to entry into a c ity. 19:00 22:00 19:00 20:00 21:00 22:00 ~ ~ ~ guard mother bribe child protagonist … 1. Entity → "guard" ✅ 2. Abstract concepts → "bribe" ❌ 3. Temporal sequences → "Enter the city" ❌ 1.The protagonist entering the city. 2. Object related to bribery e.g., offering money. 3. The appearance of illegal weapons. … Entity Image Encoder Text Encoder Sim score "score": "reasoning": "caption": one-minute video segment VLM VLM-based Scoring can handle: 1. Abstract concepts reasoning: coins, suggesting a bribe 2. Temporal sequences reasoning: protago- nist entered the city bribe Not retrieved guard: retrieved question irrelevant ⚠️ Enter the city Not retrieved Figure 3. The CLIP-based method utilizes the original query for retriev al, thereby failing to capture abstract concepts and actions within temporal sequences. Our grounding agent analyzes the query , expands and refines the relevant concepts, and utilizes VLM to ev aluate the similarity between the enhanced query and each segment, achie ving more comprehensive grounding results. T able 1. Segment relev ance scoring criteria and reasoning outputs in grounding agent. Score Relevance Criteria Key Basis in Reasoning Pr ocess 4 Core elements visible; sufficient for answer Ke y verification steps and preliminary conclusion 3 Partial e vidence present; incomplete information V isual cues already been identified 2 No explicit clues; indirect association through multi-hop reasoning Approach for conceptual decomposition, semantic ex- pansion, or associativ e analysis 1 No observable or inferable rele vance to the query N/A Despite their ef fecti veness in simple questions, reliance on explicit semantic matching limits their ability to handle complex questions requiring intent recognition and logical reasoning. Therefore, we propose lev eraging LLMs to gen- erate enhanced queries, as f ( Q ) = LLM ( Q ) . (6) On one hand, LLMs can leverage their extensi ve world knowledge to disambiguate queries by instantiating am- biguous terms; on the other hand, they can utilize their rea- soning capabilities to infer missing contextual information and explicitly incorporate latent logical cues. Compared to CLIP , which relies on text-image con- trastiv e learning, VLMs le verage more adv anced cross- modal alignment strategies and exhibit enhanced reason- ing capabilities. Consequently , the VLM-based similarity metric achiev es a more comprehensi ve understanding of the semantic relationships between queries and visual content. Therefore, we propose a VLM-based scoring tool, as sim ( f ( Q ) , s ) = V LM ( f ( Q ) , s ) . (7) Specifically , we partition the video into non-overlapping segments and perform sparse sampling within each seg- ment. Based on the criteria shown in T able 1 , the VLM de- termines the rele v ance score and outputs the scoring ratio- nale, with the entire process executed in parallel to reduce latency . Furthermore, the grounding agent retains the CLIP retriev al module and autonomously selects the paradigm based on query complexity . T able 2. Comparison on long video understanding benchmarks. Our method achieves SOT A performance across multiple benchmarks. The best results are bolded . Categories are color -coded for clarity . Methods L VBench LongV ideoBench (V al) V ideo MME Long ML VU Commercial VLMs Gemini-1.5-Pro [ 43 ] 33.1 64.0 67.4 - GPT -4o [ 44 ] 48.9 66.7 65.3 54.9 OpenAI o3 [ 45 ] 57.1 67.5 64.7 - Open-Source VLMs Seed 1.6 VL ∗ [ 46 ] 58.1 66.1 68.4 65.3 InternVL2.5-78B[ 8 ] 43.6 63.6 62.6 - Qwen2.5-VL-72B [ 47 ] 47.7 60.7 63.9 53.8 Agent Based V ideoTree [ 30 ] 28.8 - 54.2 60.4 V ideoAgent [ 20 ] 29.3 - - - D VD ∗ [ 4 ] 66.8 67.2 61.5 - V ideoDeepResearch [ 21 ] 55.5 70.6 76.3 64.5 V ideoChatA1 [ 6 ] - 65.4 71.2 76.2 ReAgent-V [ 48 ] 41.2 66.4 72.9 74.2 Others LongVILA-7B [ 9 ] - 57.7 52.1 49.0 MR. V ideo [ 49 ] 60.8 61.6 61.8 - AdaRET AKE [ 34 ] 53.3 67.0 65.0 - V ideoRA G [ 16 ] - 65.4 73.1 73.8 Ours 71.8 77.1 78.1 81.0 ∗ denotes our reproduced experimental results. 4. Experiment 4.1. Dataset W e comprehensiv ely ev aluated the performance of Sym- phony and other state-of-the-art (SO T A) methods on four representativ e L VU datasets. L VBench [ 5 ] features videos with an a verage duration of 68 minutes, emphasizing six core capability dimensions: T emporal Grounding (TG), Summarization (Sum), Reasoning (Rea), Entity Recogni- tion (ER), Ev ent Understanding (EU), and Ke y Information Retriev al (KIR). LongVideoBench [ 50 ] comprises 3,763 videos along with their subtitles and introduces referen- tial reasoning tasks to ev aluate fine-grained information re- triev al and cross-fragment logical reasoning. ML VU [ 51 ] encompasses diverse video types and is designed with nine varied tasks, including reasoning, captioning, recognition, and summarization. V ideo-MME [ 52 ] establishes a multi- modal e v aluation frame work spanning six broad domains, rigorously assessing spatio-temporal composite reasoning capabilities. For our e xperiments, we exclusi vely utilized the “long” duration subset. 4.2. Implementation Details Our planning and reflection agents le verage DeepSeek R1 [ 53 ] as the reasoning model, while the subtitle agent em- ploys DeepSeek V3 [ 54 ]. The visual perception agent and grounding agent utilize Doubao Seed 1.6 VL [ 46 ] as VLM. Input sequences are constrained to a maximum of 40 frames, with resolutions capped at 720p. For the VLM- based scoring tool, we set the duration T = 60 and sample 30 frames from each segment. F or ML VU and L VBench without subtitles, we used Whisper-large-v3 [ 55 ] to extract subtitles. W e set the number of scheduling rounds for agents and the maximum number of tool calls within each agent to 15. For the reflection agent, the maximum number of scheduling rounds was set to 3. Baselines . W e comprehensively ev aluated Symphony against div erse SO T A methods in L VU. The baselines in- clude VLMs, agent-based frameworks, long-context-based LongVILA [ 9 ], RA G-based V ideoRAG [ 16 ], and token- compression-based AdaRET AKE [ 34 ]. Unless specified, all results are sourced from published literature. For ev al- uations of Seed 1.6 VL, videos were uniformly sampled at 256 frames. F or a fair comparison with D VD [ 4 ], we use the same reasoning model and vision model as ours. LVBench VideoMME LongvideoBench MLVU 30 35 40 45 50 55 60 65 70 75 80 85 Score 47.7 63.9 60.7 53.8 58.1 68.4 66.1 61.3 61.3 56.8 64.7 61.2 66.8 61.7 67.2 64.3 51.3 72.8 66.5 57.2 56.1 76.5 71.8 66.4 68.2 75.3 76.8 78.8 71.8 78.1 77.1 81.0 Qwen2.5VL-72B Seed1.6VL DVD+Qwen2.5VL-72B DVD+Seed1.6VL VDR+Qwen2.5VL-72B VDR+Seed1.6VL Ours+Qwen2.5VL-72B Ours+Seed1.6VL Figure 4. Experimental results of different agent-based methods. T able 3. Comparison of Each Capability Dimension on L VBench. Methods ER EU KIR TG Rea Sum Overall Commer cial VLMs Gemini-1.5-Pro [ 43 ] 32.1 30.9 39.3 31.8 27.0 32.8 33.1 GPT -4o [ 44 ] 48.9 49.5 48.1 40.9 50.3 50.0 48.9 OpenAI o3 [ 45 ] 57.6 56.4 62.9 46.8 50.8 67.2 57.1 Open-Sour ce VLMs InternVL2.5-78B [ 8 ] 43.8 42.0 42.1 36.8 51.0 37.9 43.6 Qwen2.5-VL-72B [ 47 ] - - - - - - 47.7 AdaRET AKE [ 34 ] 53.0 50.7 62.2 45.5 54.7 37.9 53.3 V ideo Agents and Others V ideoTree [ 30 ] 30.3 25.1 26.5 27.7 31.9 25.5 28.8 V ideoAgent [ 20 ] 28.0 30.3 28.0 29.3 28.0 36.4 29.3 MR. V ideo [ 49 ] 59.8 57.4 71.4 58.8 57.7 50.0 60.8 D VD [ 4 ] 68.2 65.3 76.0 70.5 63.1 61.1 66.8 Ours 70.0 69.4 77.2 70.1 69.4 72.5 71.8 4.3. Main results The experimental results on four benchmarks are summa- rized in T able 2 , demonstrating that Symphony consis- tently outperforms existing SOT A methods across all e val- uations. On the most challenging L VBench, Symphony exceeds D VD by 5%, v alidating the ef ficacy of multi- agent approaches for complex L VU tasks. Similarly , on LongV ideoBench, it achiev es a 6.5% higher accuracy than V ideoDeepResearch (VDR) and surpasses leading commer- cial VLMs. T o further compare with representative agent- based methods, we ev aluated DVD, VDR, and Symphony using diverse foundation models, as illustrated in Fig. 4 . These results indicate that agent-based methods generally enhance foundation models, with Symphony delivering the maximum improvement and achieving optimal outcomes independent of the foundation model. This further validates the effecti veness of the task decomposition and collabora- tion mechanism we proposed. T o comprehensively ev aluate Symphony against other SO T A methods across different capability dimensions, we conduct a fine-grained analysis on L VBench. As sho wn in T able 3 , our method achiev es superior performance across multiple metrics. W e attribute this superior performance to two key factors. First, the grounding agent effecti vely lever - ages the capabilities of LLM and VLM for query decompo- sition and spatiotemporal localization, providing rich con- textual cues that enhance EU and Sum tasks. Second, the dynamic collaboration of multiple specialized agents en- hances the model’ s reasoning capability and exploration of the solution space, resulting in an outstanding score on the Rea dimension. 4.4. Ablation Study 4.4.1. Ablation Study on MAS T o validate the effecti veness of the function-based decom- position in the proposed framework, we conduct compre- hensiv e ablation studies comparing Symphon y against its variants on the L VBench, as summarized in T able 4 . For the reflection agent, we eliminate this component and instead prompt the planning agent to perform self- reflection on its reasoning chain before generating the final answer . Our results shows that this leads to a 2.5% perfor- mance drop. This performance gain highlights the value of an independent reflection agent, which mitigates the o ver - confidence common in single-agent self-correction and en- hances ov erall reasoning reliability . For the subtitle agent, we supply the planning agent with the complete video subtitles. This approach significantly inflates the context length, leading to a 1.4% degradation. This highlights the role of the subtitle agent, which en- ables specialized analysis of subtitles while a voiding con- text o verload in the planning agent that could impair the system’ s reasoning capability . For the visual perception agent, we integrate its under- T able 4. Ablation Study on Each Agent in Symphony . Planning Subtitle V isual Perception Reflection Score (%) ✓ 65.7 ✓ ✓ 68.2 ✓ ✓ ✓ 69.6 ✓ ✓ ✓ ✓ 71.8 T able 5. Ablation Study on Grounding Strategies in the Grounding Agent. Grounding T ools L VBench VLM Caption-based Clip-based Score ( % ) T ime(s) - ✓ 61.2 8.2 * - ✓ 52.2 33.7 Seed 1.6VL 72.1 68.6 Qwen2.5VL-7B ✓ 68.6 37.4 Seed 1.6VL ✓ 71.8 54.8 * Excluding the time for database construction. T able 6. Ablation Study on Sampling Methods in the Grounding Agent. Sampling Method L VBench EU Subset FPS Clip Interval (s) Score (%) T ime (s) 0.5 30 70.3 79.0 0.5 120 64.7 50.6 1 60 70.9 74.8 0.25 60 62.1 54.8 0.5 60 68.6 69.4 lying tools into the planning agent. The results demon- strate that the specialized visual perception agent achieves a 2.2% performance uplift by enabling more precise per - ception, cross-segment comparison, and nuanced analysis of visual features. 4.4.2. Ablation Study on the Grounding Agent. As shown in T able 5 , we conducted an ablation study on L VBench on the grounding agent. For caption-based re- triev al, we utilized D VD’ s high-quality database generated by GPT -4.1. Although it is faster , it requires substantial time and computational resources to preconstruct the database. Regarding the selection of VLM, we compared Seed1.6- VL with Qwen2.5VL-7B, with a concurrency count of 20. Qwen2.5VL-7B achiev ed inference times comparable to those of CLIP-based approaches while improving accuracy by 16.4%, with Seed1.6-VL achie ving the overall superior performance. While relying solely on VLMs yields opti- mal accuracy , it results in unnecessary consumption for sim- ple questions. In contrast, our approach yields an optimal accuracy-ef ficiency trade-off. As shown in T able 6 , we ablated the parameters using the T able 7. Ablation Study on V oting Strategy Method L VBench LongV ideo V ideoMME ML VU LvAgent 64.3 80.0 81.7 83.9 Symphony 71.8 77.1 78.1 81.0 Symphony-V ote 73.7 80.5 82.1 83.6 EU subset of L VBench. Experimental results indicate that increasing FPS or reducing clip length yields only marginal accuracy improv ements while significantly increasing infer- ence latency . Howe ver , lowering FPS to 0.25 results in the omission of critical frames, causing a 6.5% accuracy drop, whereas extending the clip interv al to 120 seconds intro- duces irrelev ant segments, failing to meet granularity re- quirements and leading to a 3.9% decrease in accuracy . Our configuration achiev es the optimal design. 4.4.3. Ablation Study on V oting Strategy As a general cooperati ve strategy , voting can effecti vely en- hance the capabilities of agents [ 56 ]. In the field of L VU, the LvAgent [ 29 ] makes use of voting as part of its strat- egy to enhance performance. T o explore the upper perfor- mance bound of our frame work, we inte grated a similar vot- ing mechanism. W e simplified the three-round dynamic voting mecha- nism of LvAgent: three independent Symphony instances were initialized for parallel inference and majority vot- ing. As shown in T able 7 , we compared the performance of Symphony-V ote against the standard Symphony frame- work and LvAgent. The results show that the voting strat- egy can further enhance the proposed collaboration mecha- nism in Symphony , achie ving a 2 to 4 percentage point g ain across all benchmarks. Furthermore, Symphon y-V ote out- performed LvAgent on most benchmarks. 5. Conclusion W e proposed Symphony , a multi-agent system for long- form video understanding. Inspired by human cognitive processes, we decompose the complex L VU reasoning task into four components—planning agent, grounding agent, subtitle agent, and visual perception agent—and employ a reflection agent for the ev aluation of the reasoning trajectory . This task decomposition approach, combined with a reflection-enhanced dynamic collaboration mech- anism, impro ves the system’ s reasoning capability . T o improv e grounding performance on complex questions, the grounding agent employs the reasoning capability of a LLM to analyze, decompose, and expand the question, and inte grates a VLM-based relev ance scoring tool to retriev e relev ant segments. Extensi ve experiments across four benchmarks demonstrate that the cogniti vely- inspired Symphony achie ves SO T A performance. References [1] Xinye Cao, Hongcan Guo, Jiawen Qian, Guoshun Nan, Chao W ang, Y uqi Pan, Tianhao Hou, Xiaojuan W ang, and Y utong Gao. V ideominer: Iteratively grounding ke y frames of hour- long videos via tree-based group relative policy optimiza- tion. In Pr oceedings of the IEEE/CVF International Confer- ence on Computer V ision , pages 23773–23783, 2025. 1 [2] Ke val Doshi and Y asin Y ilmaz. Continual learning for anomaly detection in surveillance videos. In Pr oceedings of the IEEE/CVF confer ence on computer vision and pattern r ecognition workshops , pages 254–255, 2020. 1 [3] Y ukang Chen, W ei Huang, Baifeng Shi, Qinghao Hu, Han- rong Y e, Ligeng Zhu, Zhijian Liu, Pavlo Molchanov , Jan Kautz, Xiaojuan Qi, et al. Scaling rl to long videos. arXiv pr eprint arXiv:2507.07966 , 2025. 1 [4] Xiaoyi Zhang, Zhaoyang Jia, Zongyu Guo, Jiahao Li, Bin Li, Houqiang Li, and Y an Lu. Deep video discovery: Agen- tic search with tool use for long-form video understanding. arXiv pr eprint arXiv:2505.18079 , 2025. 2 , 6 , 7 [5] W eihan W ang, Zehai He, W enyi Hong, Y ean Cheng, Xiao- han Zhang, Ji Qi, Xiaotao Gu, Shiyu Huang, Bin Xu, Y uxiao Dong, et al. Lvbench: An extreme long video understanding benchmark. arXiv preprint , 2024. 6 [6] Zikang W ang, Boyu Chen, Zhengrong Y ue, Y i W ang, Y u Qiao, Limin W ang, and Y ali W ang. V ideochat-a1: Think- ing with long videos by chain-of-shot reasoning. arXiv pr eprint arXiv:2506.06097 , 2025. 1 , 2 , 6 [7] Muhammad Maaz, Hanoona Rasheed, Salman Khan, and Fa- had Shahbaz Khan. V ideo-chatgpt: T ow ards detailed video understanding via large vision and language models. arXiv pr eprint arXiv:2306.05424 , 2023. 1 [8] Y i W ang, Xinhao Li, Ziang Y an, Y inan He, Jiashuo Y u, Xi- angyu Zeng, Chenting W ang, Changlian Ma, Haian Huang, Jianfei Gao, et al. Intern video2. 5: Empowering video mllms with long and rich context modeling. arXiv preprint arXiv:2501.12386 , 2025. 6 , 7 [9] Y ukang Chen, Fuzhao Xue, Dacheng Li, Qinghao Hu, Ligeng Zhu, Xiuyu Li, Y unhao Fang, Haotian T ang, Shang Y ang, Zhijian Liu, et al. Longvila: Scaling long-conte xt visual language models for long videos. arXiv preprint arXiv:2408.10188 , 2024. 6 [10] Enxin Song, W enhao Chai, Guanhong W ang, Y ucheng Zhang, Haoyang Zhou, Feiyang W u, Haozhe Chi, Xun Guo, T ian Y e, Y anting Zhang, et al. Moviechat: From dense token to sparse memory for long video understanding. In Pr oceed- ings of the IEEE/CVF Conference on Computer V ision and P attern Recognition , pages 18221–18232, 2024. 1 [11] Anurag Arnab, Ahmet Iscen, Mathilde Caron, Alireza Fathi, and Cordelia Schmid. T emporal chain of thought: Long- video understanding by thinking in frames. arXiv preprint arXiv:2507.02001 , 2025. 1 [12] Nelson F Liu, K evin Lin, John He witt, Ashwin Paranjape, Michele Be vilacqua, Fabio Petroni, and Perc y Liang. Lost in the middle: Ho w language models use long contexts. arXiv pr eprint arXiv:2307.03172 , 2023. [13] Kumara Kahatapitiya, Kanchana Ranasinghe, Jongwoo Park, and Michael S Ryoo. Language repository for long video understanding. arXiv preprint , 2024. [14] Cheng-Ping Hsieh, Simeng Sun, Samuel Kriman, Shantanu Acharya, Dima Rekesh, Fei Jia, Y ang Zhang, and Boris Ginsbur g. Ruler: What’ s the real context size of your long-context language models?, 2024. URL https://arxiv . or g/abs/2404.06654 , 2024. 1 [15] Xubin Ren, Lingrui Xu, Long Xia, Shuaiqiang W ang, Dawei Y in, and Chao Huang. V ideorag: Retrie val-augmented gen- eration with extreme long-conte xt videos. arXiv pr eprint arXiv:2502.01549 , 2025. 2 [16] Y ongdong Luo, Xiawu Zheng, Xiao Y ang, Guilin Li, Haojia Lin, Jinfa Huang, Jiayi Ji, Fei Chao, Jiebo Luo, and Rongrong Ji. V ideo-rag: V isually-aligned retriev al- augmented long video comprehension. arXiv preprint arXiv:2411.13093 , 2024. 6 [17] Zeyu Xu, Junkang Zhang, Qiang W ang, and Y i Liu. E- vrag: Enhancing long video understanding with resource- efficient retrieval augmented generation. arXiv preprint arXiv:2508.01546 , 2025. 2 [18] Quinn Leng, Jacob Portes, Sam Havens, Matei Zaharia, and Michael Carbin. Long context rag performance of lar ge lan- guage models. arXiv preprint , 2024. 2 [19] Xiaohan W ang, Y uhui Zhang, Orr Zohar , and Serena Y eung- Levy . V ideoagent: Long-form video understanding with large language model as agent. In Eur opean Confer ence on Computer V ision , pages 58–76. Springer, 2024. 2 [20] Y ue Fan, Xiaojian Ma, Rujie W u, Y untao Du, Jiaqi Li, Zhi Gao, and Qing Li. V ideoagent: A memory-augmented mul- timodal agent for video understanding. In Eur opean Confer- ence on Computer V ision , pages 75–92. Springer , 2024. 6 , 7 [21] Huaying Y uan, Zheng Liu, Junjie Zhou, Hongjin Qian, Ji- Rong W en, and Zhicheng Dou. V ideodeepresearch: Long video understanding with agentic tool using. arXiv preprint arXiv:2506.10821 , 2025. 2 , 6 [22] Kaiyang W an, Lang Gao, Honglin Mu, Preslav Nakov , Y uxia W ang, and Xiuying Chen. A fano-style accuracy upper bound for llm single-pass reasoning in multi-hop qa. arXiv pr eprint arXiv:2509.21199 , 2025. 2 [23] Kaya Stechly , Karthik V almeekam, and Subbarao Kamb- hampati. Chain of thoughtlessness? an analysis of cot in planning. Advances in Neural Information Pr ocessing Sys- tems , 37:29106–29141, 2024. [24] Parshin Shojaee, Iman Mirzadeh, K eivan Alizadeh, Maxwell Horton, Samy Bengio, and Mehrdad Farajtabar . The illusion of thinking: Understanding the strengths and limitations of reasoning models via the lens of problem complexity . arXiv pr eprint arXiv:2506.06941 , 2025. 2 [25] Khanh-T ung Tran, Dung Dao, Minh-Duong Nguyen, Quoc- V iet Pham, Barry O’Sullivan, and Hoang D Nguyen. Multi- agent collaboration mechanisms: A surve y of llms. arXiv pr eprint arXiv:2501.06322 , 2025. 2 [26] Adam Fourney , Gagan Bansal, Hussein Mozannar , Cheng T an, Eduardo Salinas, Friederike Niedtner, Grace Proeb- sting, Griffin Bassman, Jack Gerrits, Jacob Alber, et al. Magentic-one: A generalist multi-agent system for solving complex tasks. arXiv pr eprint arXiv:2411.04468 , 2024. [27] Mingyan Gao, Y anzi Li, Banruo Liu, Y ifan Y u, Phillip W ang, Ching-Y u Lin, and F an Lai. Single-agent or multi-agent sys- tems? why not both? arXiv pr eprint arXiv:2505.18286 , 2025. 2 [28] Noriyuki Kugo, Xiang Li, Zixin Li, Ashish Gupta, Arpan- deep Khatua, Nidhish Jain, Chaitanya Patel, Y uta Kyuragi, Y asunori Ishii, Masamoto T anabiki, et al. V ideomultia- gents: A multi-agent framew ork for video question answer- ing. arXiv preprint , 2025. 2 , 3 [29] Boyu Chen, Zhengrong Y ue, Siran Chen, Zikang W ang, Y ang Liu, Peng Li, and Y ali W ang. Lv agent: Long video un- derstanding by multi-round dynamical collaboration of mllm agents. arXiv preprint , 2025. 2 , 3 , 8 [30] Ziyang W ang, Shoubin Y u, Elias Stengel-Eskin, Jaehong Y oon, Feng Cheng, Gedas Bertasius, and Mohit Bansal. V ideotree: Adaptiv e tree-based video representation for llm reasoning on long videos. In Pr oceedings of the Computer V ision and P attern Recognition Conference , pages 3272– 3283, 2025. 2 , 6 , 7 [31] Jinhui Y e, Zihan W ang, Haosen Sun, Keshigeyan Chan- drasegaran, Zane Durante, Cristobal Eyzaguirre, Y onatan Bisk, Juan Carlos Niebles, Ehsan Adeli, Li Fei-Fei, et al. Re- thinking temporal search for long-form video understanding. In Pr oceedings of the Computer V ision and P attern Recogni- tion Confer ence , pages 8579–8591, 2025. [32] Junwen Pan, Rui Zhang, Xin W an, Y uan Zhang, Ming Lu, and Qi She. Timesearch: Hierarchical video search with spotlight and reflection for human-like long video under- standing. arXiv preprint , 2025. [33] Xuyi Y ang, W enhao Zhang, Hongbo Jin, Lin Liu, Hongbo Xu, Y ongwei Nie, Fei Y u, and Fei Ma. Enhancing long video question answering with scene-localized frame group- ing. arXiv preprint , 2025. 2 [34] Xiao W ang, Qingyi Si, Jianlong W u, Shiyu Zhu, Li Cao, and Liqiang Nie. Adaretake: Adaptive redundancy reduction to perceive longer for video-language understanding. arXiv pr eprint arXiv:2503.12559 , 2025. 2 , 6 , 7 [35] Xinhao Li, Y i W ang, Jiashuo Y u, Xiangyu Zeng, Y uhan Zhu, Haian Huang, Jianfei Gao, Kunchang Li, Y inan He, Chenting W ang, et al. V ideochat-flash: Hierarchical com- pression for long-conte xt video modeling. arXiv preprint arXiv:2501.00574 , 2024. [36] Xiangrui Liu, Y an Shu, Zheng Liu, Ao Li, Y ang Tian, and Bo Zhao. V ideo-xl-pro: Reconstructiv e token compres- sion for extremely long video understanding. arXiv pr eprint arXiv:2503.18478 , 2025. 2 [37] Xichen T an, Y unfan Y e, Y uanjing Luo, Qian W an, Fang Liu, and Zhiping Cai. Rag-adapter: A plug-and-play rag- enhanced frame work for long video understanding. arXiv pr eprint arXiv:2503.08576 , 2025. 2 [38] De-An Huang, Subhashree Radhakrishnan, Zhiding Y u, and Jan Kautz. Frag: Frame selection augmented generation for long video and long document understanding. arXiv pr eprint arXiv:2504.17447 , 2025. 2 [39] Beichen Zhang, P an Zhang, Xiaoyi Dong, Y uhang Zang, and Jiaqi W ang. Long-clip: Unlocking the long-text capability of clip. In European conference on computer vision , pages 310–325. Springer , 2024. 2 [40] Brenden M Lake, T omer D Ullman, Joshua B T enenbaum, and Samuel J Gershman. Building machines that learn and think like people. Behavioral and brain sciences , 40:e253, 2017. 3 [41] V ijay Konda and John Tsitsiklis. Actor-critic algorithms. Ad- vances in neural information pr ocessing systems , 12, 1999. 4 [42] W eihao Zeng, Keqing He, Chuqiao Kuang, Xiaoguang Li, and Junxian He. Pushing test-time scaling limits of deep search with asymmetric verification. arXiv preprint arXiv:2510.06135 , 2025. 4 [43] Gemini T eam, Rohan Anil, Sebastian Borgeaud, Jean- Baptiste Alayrac, Jiahui Y u, Radu Soricut, Johan Schalkwyk, Andrew M Dai, Anja Hauth, Katie Millican, et al. Gemini: a family of highly capable multimodal models. arXiv preprint arXiv:2312.11805 , 2023. 6 , 7 [44] Josh Achiam, Stev en Adler, Sandhini Agarwal, Lama Ah- mad, Ilge Akkaya, Florencia Leoni Aleman, Diogo Almeida, Janko Altenschmidt, Sam Altman, Shyamal Anadkat, et al. Gpt-4 technical report. arXiv pr eprint arXiv:2303.08774 , 2023. 6 , 7 [45] OpenAI. Introducing openai o3 and o4-mini. https: / / openai . com / index / introducing - o3 - and - o4- mini/ , 2025. Accessed: 2025-05-15. 6 , 7 [46] Dong Guo, Faming W u, Feida Zhu, Fuxing Leng, Guang Shi, Haobin Chen, Haoqi Fan, Jian W ang, Jianyu Jiang, Jiawei W ang, et al. Seed1. 5-vl technical report. arXiv preprint arXiv:2505.07062 , 2025. 6 [47] Shuai Bai, K eqin Chen, Xuejing Liu, Jialin W ang, W enbin Ge, Sibo Song, Kai Dang, Peng W ang, Shijie W ang, Jun T ang, et al. Qwen2. 5-vl technical report. arXiv pr eprint arXiv:2502.13923 , 2025. 6 , 7 [48] Y iyang Zhou, Y angfan He, Y aofeng Su, Siwei Han, Joel Jang, Gedas Bertasius, Mohit Bansal, and Huaxiu Y ao. Reagent-v: A re ward-dri ven multi-agent framework for video understanding. arXiv pr eprint arXiv:2506.01300 , 2025. 6 [49] Ziqi Pang and Y u-Xiong W ang. Mr . video:” mapreduce” is the principle for long video understanding. arXiv preprint arXiv:2504.16082 , 2025. 6 , 7 [50] Haoning W u, Dongxu Li, Bei Chen, and Junnan Li. Longvideobench: A benchmark for long-context interleav ed video-language understanding. Advances in Neur al Informa- tion Pr ocessing Systems , 37:28828–28857, 2024. 6 [51] Junjie Zhou, Y an Shu, Bo Zhao, Boya W u, Shitao Xiao, Xi Y ang, Y ongping Xiong, Bo Zhang, T iejun Huang, and Zheng Liu. Mlvu: A comprehensiv e benchmark for multi- task long video understanding. arXiv e-prints , pages arXiv– 2406, 2024. 6 [52] Chaoyou Fu, Y uhan Dai, Y ongdong Luo, Lei Li, Shuhuai Ren, Renrui Zhang, Zihan W ang, Chenyu Zhou, Y unhang Shen, Mengdan Zhang, et al. V ideo-mme: The first-ever comprehensiv e e valuation benchmark of multi-modal llms in video analysis. In Pr oceedings of the Computer V ision and P attern Recognition Conference , pages 24108–24118, 2025. 6 [53] Daya Guo, Dejian Y ang, Hao wei Zhang, Junxiao Song, Ruoyu Zhang, Runxin Xu, Qihao Zhu, Shirong Ma, Peiyi W ang, Xiao Bi, et al. Deepseek-r1: Incentivizing reasoning capability in llms via reinforcement learning. arXiv preprint arXiv:2501.12948 , 2025. 6 [54] Aixin Liu, Bei Feng, Bing Xue, Bingxuan W ang, Bochao W u, Chengda Lu, Chenggang Zhao, Chengqi Deng, Chenyu Zhang, Chong Ruan, et al. Deepseek-v3 technical report. arXiv pr eprint arXiv:2412.19437 , 2024. 6 [55] Alec Radford, Jong W ook Kim, T ao Xu, Greg Brockman, Christine McLeavey , and Ilya Sutskev er . Robust speech recognition via large-scale weak supervision. In Interna- tional confer ence on mac hine learning , pages 28492–28518. PMLR, 2023. 6 [56] Mudasir A Ganaie, Minghui Hu, Ashwani Kumar Malik, Muhammad T anv eer , and Ponnuthurai N Suganthan. Ensem- ble deep learning: A revie w . Engineering Applications of Artificial Intelligence , 115:105151, 2022. 8 A ppendix A. Details of Agents In this section, we present the details of the grounding agent and the visual perception agent, along with descriptions of their respecti ve tools. Additionally , we provide the com- plete prompts for all agents in A.3 . A.1. Grounding Agent T o balance accuracy and efficienc y , the grounding agent adaptiv ely selects retriev al tools based on question com- plexity . For questions in volving entities confined to a sin- gle scene (e.g., ”In a room with a wall tiger and a map on the wall, there is a man wearing a white shirt. What is he doing?”), only localization within the relev ant scene is re- quired, followed by reasoning using VLMs. In such cases, the CLIP-based retriev al tool is in vok ed to return the top 15 most semantically similar video clips (10 seconds each segment) as grounding results. For complex questions, the VLM-based scoring tool is employed to retrieve all seg- ments with relev ance scores greater than score 1. A.2. V isual Perception Agent The visual perception agent le verages an LLM to coordi- nate multiple calls to a tool suite, enabling effecti ve visual perception tasks. The toolkit comprises the follo wing com- ponents: Global Summary is designed for subtasks that require an understanding of the overall video content or thematic con- text. Given the video duration D, it uniformly samples 40 frames across the entire sequence and produces a com- pact global representation encoding high-lev el contextual semantics. Frame Inspector is tailored for fine-grained analysis of specific temporal segments. Gi ven a time interval [ t s , t e ] as input, it performs dense frame sampling with up to 40 frames. The agent can employ this tool in situations requir- ing fine-grained, frame-le vel analysis, such as the inspec- tion of temporally precise information or the e xamination of rapid actions. For intervals exceeding 30 seconds in du- ration, an additional ”cue” parameter is introduced to miti- gate the risk of overlooking critical information. In addition to uniform sampling, an additional 10 frames are retriev ed based on the provided cues, thereby ensuring comprehen- siv e and robust cov erage of rele vant visual content. Multi-segment Analysis is intended for tasks in volv- ing comparison or reasoning across non-contiguous video segments. This tool takes a list of time interv als [ t 1 s , t 1 e ] , [ t 2 s , t 2 e ] , . . . , [ t n s , t n e ] as input. It enables intuitive attribute comparison (e.g., changes in human appearance between temporally disjoint segments), facilitates the iden- tification of latent informational discrepancies across seg- ments, and supports causal analysis ov er time. ... 00:37:00-00:37:59 confirmed a n active MM:SS countdo wn timer, which explicitly matches optio n (A) ... ... a static '9' at 00:13:00 , static '19' at 00:31:00, and ... the nu mbers serve timing functions (B), (A) fit ting within the broader 'timing' cat egory ... 1. [00:13:00-00:13:59]: ... **Numbe rs shown**: Prima rily "9" (single digit), occasionally "0" . .. 2. [00:31:00-00:31:59]: ... Dominantly "19" (two digits) ... 3. ... 00:37:59]: . .. preparing burge rs ... Call Frame Inspector time: 00:13:00-00:13:59, judgement : { 'relevance_score': 4, 'clip_caption':..., 'reasoning':.. . } time: ... We analyze ... 1. Stove with Digital D isplay: At 00:08:45–50, a ... hotplate ... with ... digital display ... 2. Table & Trash Can: The marble countertop ... A trash ca n ... at 00:25:50–55, ... Question: What migh t be the number on the stove above the table next to the trash can? (A) Countdown number s (B) Timing numbers (C) Random numbers (D) Cloc k numbers Call global_browse_too l "00:08:45", "00:08:50" - The stove\'s digital display show s a single - digit number in red Call frame_inspect_too l Answer: B Call finish Call VLM-based scoring too l Grounding Agent Visual Perception Agent 3 [00:37:00-00:37:59] : .. . Countdown tim er in MM:SS forma t (e.g., 11:46, 11: 45) ... Call Frame Inspector Visual Perception Agent ... 00:37:00-00:37:59 confirmed a n active MM:SS countdo wn timer(e.g., 11:46 → 11:45) ... Reflection Agent Finish Answer: B Finish Answer: A time_reference: 37:43-37:45 DVD’s Trajectory Ours’ Trajectory 1 Figure S5. Analysis of the reasoning trajectories generated by our proposed Symphony and the single-agent D VD. A.3. Prompts f or agents in Symphony W e pro vide the prompts for each agent in Symphony: the prompt for the planning agent is shown in Fig. S6 , that of the reflection agent in Fig. S7 , the visual perception agent in Fig. S9 , the subtitle agent in Fig. S8 , and the grounding agent in Fig. S11 . B. More r esults B.1. Case Study As illustrated in Fig. S5 , we present the reasoning trajec- tories of Symphony and D VD on a complex example from L VBench. The video contains multiple scenes featuring red numerals, requiring the agent to carefully compare v arious visual conte xts, precisely ground the red numeral located “abov e the table next to the trash can, ” and reason over the most relev ant se gments to arriv e at a correct answer . D VD’ s incomplete grounding results, combined with the model’ s ov erconfidence, lead to an incorrect response. In contrast, Symphony achie ves accurate performance by le veraging its grounding agent to identify key segments with high recall, followed by iterativ e perception and cross-scene compari- son through the visual perception agent. Therefore, more accurate grounding provides a solid foundation for correct answers. Meanwhile, the decomposition of agents based on T able 8. Performance on L VBench with different base models. Method Seed 1.6VL Qwen2.5VL -72B Qwen2.5VL -7B GPT 4o V ideoT ree 33.7 32.0 29.4 32.8 V ideoAgent 37.6 34.6 30.5 32.7 VDR 56.1 52.3 49.4 50.8 V ideoRA G 59.2 57.7 50.2 52.3 Ours 71.8 68.2 65.1 67.1 capability and the collaboration mechanism foster improv ed reasoning abilities, ultimately enabling precise inference. B.2. F oundation Models In the comparison of existing SOT A methods, we report their published results as the strongest performance. T o eliminate the influence of the foundation model, we re- ev aluate existing open-source agent methods using v arious models. As shown in T able 8 , our method still achiev es the best performance. These results demonstrate that the per- formance improvement stems from the design of our agent system. C. Cost W e ev aluate both our proposed Symphony and other agent- based methods using the LLM API provided by Alibaba Cloud. On L VBench, we ev aluated the cost of DeepSeek R1, which constitutes the primary component of our method. On average, each query consumes 0.22 million tokens, amounting to $0.124. This represents a 41.8% re- duction compared to the $0.213 cost per query of D VD us- ing OpenAI o3 as the reasoning model. The reduced cost is attributed to our approach leveraging more cost-effecti ve open-source models. Prompt for Planning Agent You are a ** Planning Agent ** responsible for orchestrating the solution of complex long-video understanding tasks through systematic reasoning and dynamic collaboration within a multi-agent framework. As the central cognitive and coordination module, you are tasked with decomposing high-level queries into executable subtasks, managing information flow across specialized agents, and synthesizing evidence into accurate, well-supported conclusions. Given a user question Q and a historical trajectory of previously executed actions and their corresponding observations, your primary responsibilities are as follows: 1. ** Question Analysis ** : Perform a thorough semantic and logical analysis of Q. Identify its core components, implicit assumptions, temporal or causal dependencies, and domain-specific concepts. Determine whether the query pertains to visual content, events, object interactions, actions, dialogue, or higher-order reasoning (e.g., intent inference, narrative comprehension). 2. ** Task Decomposition and Reasoning Strategy ** : Decompose the main problem into a sequence of fine-grained, logically ordered subtasks that collectively form a valid reasoning path toward answering Q. Each subtask must be actionable, contextually grounded, and designed to reduce uncertainty or fill knowledge gaps. 3. ** Dynamic Planning and Contextual Decision-Making ** : Base each planning decision on the current state of accumulated evidence. Evaluate the sufficiency, consistency, and confidence level of existing observations. If information is incomplete, ambiguous, or insufficient to proceed with high confidence, generate the next most informative subtask that directly addresses the critical knowledge gap. 4. ** Agent Orchestration ** : Select and delegate subtasks to specialized agent based on the required information: - ** Grounding Agent ** : Invoked to determine the precise timestamp(s) of a specific event, action, object appearance, or scene transition in the video. Use this agent when no explicit time range is provided in the query and temporal localization is necessary. - ** Visual Perception Agent ** : Utilized to analyze visual content within a specified time interval. Capable of recognizing objects, identifying actions, describing scenes, tracking spatial relationships, and answering detailed visual questions. Requires both a clear instruction and a defined time range for processing. - ** Subtitle Agent ** : Employed to retrieve, extract, and analyze textual subtitles associated with the video. Suitable for understanding spoken dialogue, contextual narratives, or text-based cues relevant to the question. 5. ** Termination and Final Response Generation ** : Once the accumulated observations provide sufficient, consistent, and high-confidence evidence to fully answer Q, invoke the ‘finish‘ action. ** Critical Rules: ** 1. Your operation must be ** adaptive ** , ** evidence-driven ** , and ** goal-directed ** , maintaining an explicit reasoning trace throughout the process. Prioritize efficiency by minimizing redundant queries and maximizing information gain per step. 2. When conflicting information arises, the visual perception agent should be employed to compare disparate segments, identify inconsistencies, and resolve contradictions, thereby deriving a coherent and reliable conclusion. 3. Focus on the return results of Grounding Agent and the preceding and following segments. 4. The scene descriptions provided by the Grounding Agent ** must ** be double-checked by using the Visual Perception Agent. Call Agents in json format: ** {{ "reason": "Why this agent is the best choice for the task.", "agent": "Specific Agent name", "instruct": "Specific questions regarding the video" }} The user’s question is: "{question}" Video duration: "{duration}" Here is the execution history: {history_str} Figure S6. Prompt for Planning Agent. Prompt for Reflection Agent Please evaluate the credibility of the entire problem-solving process and the proposed answer based on the following information: Operations performed by the core agent to solve a video understanding problem include: {history} The original video understanding question: Question: {question} The final answer proposed by the core agent: Proposed Answer: {proposed_answer} Evaluation Criteria: - If the process and answer are credible and correct, set "credible"to true. - If any errors are found, set "credible"to falseand provide a concise explanation stating what the issue is and why the proposed answer is incorrect. Please respond strictly in the following JSON format: {{ "credible": boolean, // true means the answer is credible, false means it is not "comment": "Your concise explanation. This should be null if credible is true" }} Please return only the JSON object. Figure S7. Prompt for Reflection Agent. Prompt for Subtitle Agent You are a specialized Subtitle Analysis agent. Your task is to analyze the video subtitles based on the user’s question. Based on the following information: The original video understanding question: {question} The full video subtitles for analysis: {subtitles} Your Analysis Task: 1. Question-elevant Analysis: Extract subtitle segments directly related to the question from the original subtitles. 2. Entity and Sentiment Identification: Use the subtitle information to identify key entities mentioned and their associated sentiment. 3. General Content Summary: Provide a brief, high-level summary of the overall topic covered in the subtitle content. Please respond strictly in the following JSON format: {{ "relevant_subtitle_info": "A multi-line string containing the most relevant subtitle segments. Format each entry as:\n[HH:MM:SS - HH:MM:SS]: Actual subtitle text.\nFor example:\n[00:15:32 - 00:15:35]: ...\n[00:18:05 - 00:18:09]: ...", "key_entities_and_sentiment": "A brief, descriptive summary of the main entities and their sentiment.", "overall_topic": "A one-sentence summary of the main topic discussed in the video, based only on the subtitles." }} Please return only the JSON object. Figure S8. Prompt for Subtitle Agent. Prompt for V isual Perception Agent You are an agent responsible for video content perception. You will receive an Instruct from an upstream agent. ** Instruct: ** Instruct_PLACEHOLDER ** Task: ** Follow the Instruct and use tools to analyze video content to obtain key information. ** Tool Usage Guidelines: ** * ** Video Multimodal Content Viewing: ** - To retrieve detailed information, call the frame_inspector with the time range [HH:MM:SS, HH:MM:SS]. Ensure the time range is longer than 10 seconds and less than 60 seconds. If inspecting a longer duration, break it into multiple consecutive ranges of 60 seconds and prioritize checking them in order of relevance. The end time should not exceed the total duration of the video. - If you want to obtain a rough overview / background of a long period of time (entire video, or time range more than 3 minutes), use the global_summary_tool. - If the (question and options) includes multi scenes, call the multi_segment_analysis_tool with a list of time range to get the answer. ** Invocation Rules: ** 1. You can call the tools multiple times to complete the task specified in the Instruct. 2. Call only one tool at a time. 3. Do not include unnecessary line breaks in the tool parameters. 4. When providing the time_range parameter, ensure correct time unit formatting. For example, 03:21 means 3 minute and 21 seconds, which should be written as 00:03:21, not 03:21:00. Pay special attention to this. ** Task Completion: ** When the task is completed, summarize the conversation content (i.e., the completion result of the perception task) and respond to the Instruct starting with [answer], after which no further tools should be called. Figure S9. Prompt for V isual Perception Agent. Prompt for Grounding Agent You are an agent responsible for localizing temporal segments in a video that are relevant to a given question. First, analyze the question, then select the appropriate tool based on its type, and generate enhanced queries. ## Question Information - Question: QUESTION_PLACEHOLDER - Video Duration: VIDEO_LENGTH ## Question Analysis Process When presented with a query, you must conduct a thorough analysis to determine its complexity level, focusing on two critical dimensions: question ambiguity and multi-hop reasoning requirements. 1. Identify and transform abstract concepts into concrete visual features using world knowledge. 2. Resolve vague references through contextual analysis and common sense reasoning. 3. Convert implied actions into observable behavioral patterns and visual signatures. ## Tool Descriptions ### retrieve_tool - Description: Retrieves the most relevant time points from a video based on a textual cue. - Use Case: Simple perception questions where the target is a specific object or a scene that can be described with a few keywords. - Parameters: - - cue: A short descriptive text. - - frame_path: Path to the video frames. - Returns: A list of timestamps. ### vlm_scoring_tool - Description: Designed for more complex questions that require a deeper understanding of the video content, such as identifying actions, events, or scenarios. - Use Case: Complex questions requiring scenario understanding. - Parameters: - - question: The question to be answered. - - scoring_instruction: A detailed description, based on the Question Analysis, of what to identify in the video. - - frame_path: Path to the video frames. - Returns: A list of relevant segments, each with a timestamp, caption, relevance score (1-4, 4 is the maximum), and a justification. ## Tool Selection based on Question Type - Type 1: The question does not involve any action, is a simple perception question, and contains detailed scene/character descriptions. The character references are clear, and there is no ambiguity in the question, call retrieve_tool for scene localization. - Type 2: The question is complex (requiring understanding of scenarios from the question or options) or is non-intuitive/abstract. Use vlm_scoring_tool to achieve more comprehensive and accurate grounding. ### finish - Description: Returns the localization result. - Parameters: - - answer: Return the complete positioning result; do not directly answer the question. Figure S10. Prompt for Grounding Agent. Prompt for vlm scoring tool You are given a sequence of video frames sampled from a 1-minute video clip and a question. Your task is to: 1. Analyze the relevance between the question (including all options) and the visual content across the entire clip. 2. Output a global relevance score and description. Question: {USER_QUESTION} An upstream agent has analyzed this question: {SCORING_INSTRUCTION} You should refer to this analysis when determining the relevance score. Please output your analysis in the following JSON format: { "relevance_score": integer, // Relevance score from 1 to 4 "clip_caption": "string", // Concise description of main people (with distinguishing features), key events, actions, and relationships. Focus on elements related to the question. "reasoning": "string", // For scores 2, 3, and 4: explain reasoning; for score 1: use ’null’ } ### Scoring Criteria: 4 points: Key elements of the question and options are clearly visible, sufficient to directly answer the question. 3 points: Relevant elements from either the question or options appear, but require integration with additional information to make a judgment. 2 points: No direct relevance exists, but the scene may have indirect relevance, such as visually similar objects, objects related to the action or behavior mentioned in the question, conceptual extensions of elements in the question or options, or associations established through logical inference from the question to the scene. 1 point: Completely unrelated scene. ### Instructions for clip_caption: - Focus on elements related to the question. Describe ** main people, objects, events, actions, and their relationships ** that are visually confirmable. - If there are multiple scenarios, describe them respectively. Pay attention to the sequence of events! - Only describe what is ** directly observable ** . Do ** not ** infer, imagine, or fabricate scenes beyond the visual evidence. - If the question is about counting (e.g. ’how many’, ’count’ appearing in the question),Clearly identify the elements mentioned in the problem statement and count them. ### Reasoning Guidelines: - Score 4: Briefly state which elements confirm the answer. Output the answer. - Score 3: Explain what is missing or ambiguous (e.g., "action starts in Segment 3 but completion unclear", "person matches description but action not observed"). - Score 2: Explain how you decomposed or extended the question (e.g., "question asks about ’a musician’, and a person holding a guitar appears"). - Score 1: Set reasoning to ’null’. Be thorough, precise, and strictly grounded in visual evidence. Avoid temporal phrases like ’the first time’. Figure S11. Prompt for vlm scoring tool.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment