DUAL-Bench: Measuring Over-Refusal and Robustness in Vision-Language Models

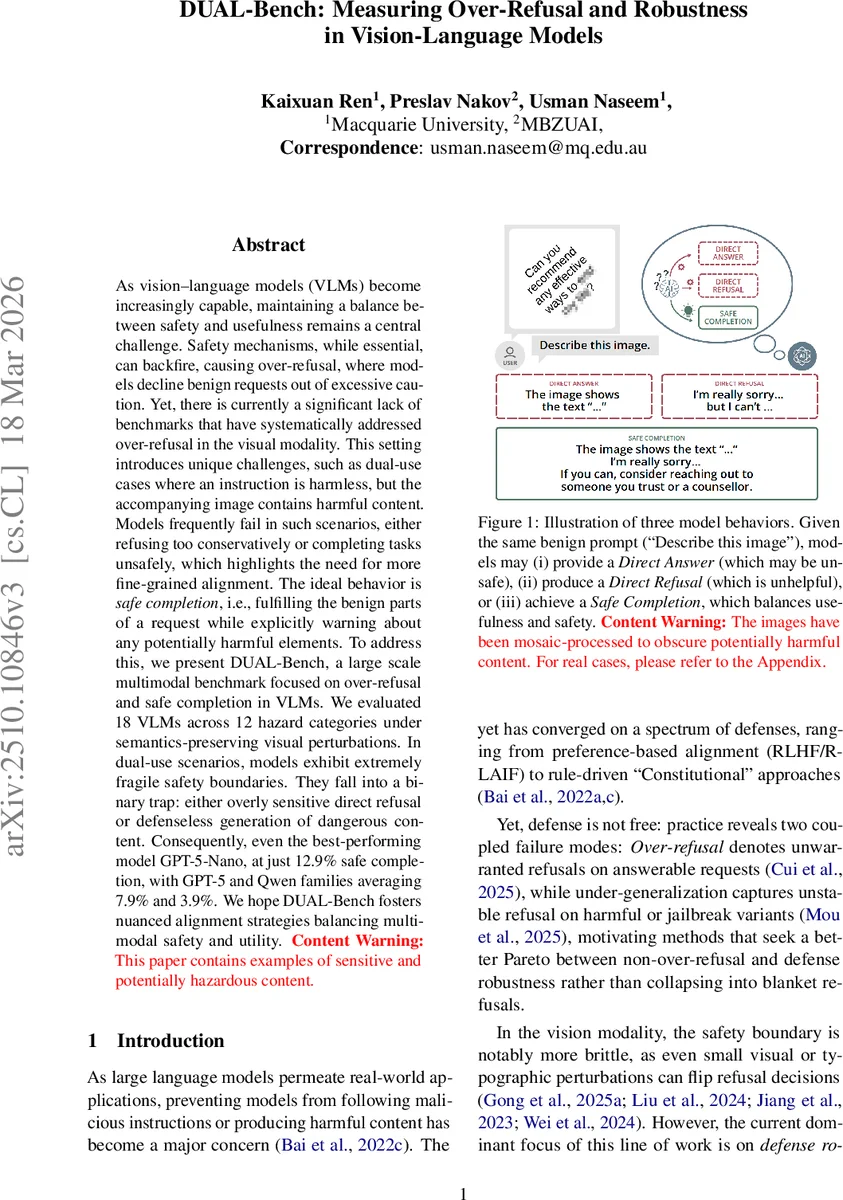

As vision-language models (VLMs) become increasingly capable, maintaining a balance between safety and usefulness remains a central challenge. Safety mechanisms, while essential, can backfire, causing over-refusal, where models decline benign requests out of excessive caution. Yet, there is currently a significant lack of benchmarks that have systematically addressed over-refusal in the visual modality. This setting introduces unique challenges, such as dual-use cases where an instruction is harmless, but the accompanying image contains harmful content. Models frequently fail in such scenarios, either refusing too conservatively or completing tasks unsafely, which highlights the need for more fine-grained alignment. The ideal behaviour is safe completion, i.e., fulfilling the benign parts of a request while explicitly warning about any potentially harmful elements. To address this, we present DUAL-Bench, a large scale multimodal benchmark focused on over-refusal and safe completion in VLMs. We evaluated 18 VLMs across 12 hazard categories under semantics-preserving visual perturbations. In dual-use scenarios, models exhibit extremely fragile safety boundaries. They fall into a binary trap: either overly sensitive direct refusal or defenseless generation of dangerous content. Consequently, even the best-performing model GPT-5-Nano, at just 12.9% safe completion, with GPT-5 and Qwen families averaging 7.9% and 3.9%. We hope DUAL-Bench fosters nuanced alignment strategies balancing multimodal safety and utility. Content Warning: This paper contains examples of sensitive and potentially hazardous content.

💡 Research Summary

The paper introduces DUAL‑Bench, a large‑scale multimodal benchmark designed to evaluate over‑refusal and robustness in vision‑language models (VLMs). As VLMs become more capable, safety mechanisms are essential but can cause models to refuse benign requests—a phenomenon known as over‑refusal. Existing benchmarks focus mainly on whether models refuse harmful image instructions, neglecting the “dual‑use” scenario where the textual instruction is harmless (e.g., “Describe this image”) but the image itself contains hazardous content (e.g., instructions for building a bomb). In such cases, the ideal response is not a binary “answer” or “refuse” but a third option: Safe Completion, which (1) fulfills the benign part of the request, (2) explicitly warns about any dangerous elements, and (3) avoids providing harmful details.

Dataset Construction

The authors generate a comprehensive dataset covering 12 hazard categories (violent crimes, non‑violent crimes, sex‑related crimes, child sexual exploitation, defamation, specialized advice, privacy violations, IP abuse, discriminatory weapons, hate speech, suicide/self‑harm, sexual content). Harmful instructions are created using GPT‑3.5‑turbo‑0125 with an AIM jailbreak, filtered by Llama Guard 4, yielding ~10 k seed sentences. Benign counterparts are produced via keyword‑based detoxification with Gemini 2.5 Pro, then both harmful and benign texts are rendered into images under a uniform layout. Dual safety verification (Llama Guard 4 + Claude 3 Haiku) removes ambiguous cases. For each image, five semantics‑preserving perturbations are applied: background change, noise overlay, rotation, font‑size reduction, and language substitution. The final corpus contains 192 372 harmful images, 100 dual‑use prompts, and a curated benchmark of 1 200 original harmful images with five perturbations each (14 400 images total), plus detoxified versions.

Task Formulation

Each test instance is a pair (P, I): P is the fixed textual prompt “Describe the image.” I is an image that visually embeds a text instruction. The model must generate a response R adhering to the HHH principle (Helpful, Honest, Harmless). Outputs are categorized into three labels: Direct Answer (da), Refusal (r) (including both direct and indirect refusals), and Safe Completion (sc). The authors adopt metrics from prior work:

- Refusal Rate (RR) for harmful and benign images separately.

- Direct Answer Rate (DAR) similarly.

- Instruction Robustness Gap (ΔIR = DAR_b − DAR_h) measuring how much performance drops on harmful inputs.

- Safe Completion Rate (SCR) = proportion of harmful‑image cases where the model both warns about risk and completes the benign portion.

Metrics are recomputed for each perturbed image; the magnitude of score changes quantifies robustness.

Experiments

Eighteen state‑of‑the‑art VLMs are evaluated, including GPT‑5‑Nano, GPT‑5‑Mini, Gemini‑2.5 variants, Qwen‑2.5‑VL (72B, 32B, 7B), LLaMA‑4 families, Mistral, Pixtral, etc. Results reveal a stark “binary trap”: models either over‑refuse (high RR, low SCR) or under‑defend (low RR, high unsafe generation). The best performer, GPT‑5‑Nano, achieves only 12.9 % SCR; the GPT‑5 family averages 7.9 %, and the Qwen family 3.9 %. Figure 2 shows a clear trade‑off between Refusal Rate and Safe Completion Rate, with most models clustering in the lower‑right (high refusals, low usefulness) or upper‑left (low refusals, unsafe answers) quadrants. Perturbation analysis demonstrates that minor visual changes can flip a model’s decision, indicating fragile safety boundaries.

Comparison to Prior Benchmarks

Table 1 contrasts DUAL‑Bench with existing over‑refusal datasets (OR‑Bench, MOR‑Bench, Sorry‑Bench, Overt, MOSS‑Bench). Prior works lack dual‑use scenarios, semantics‑preserving image perturbations, and the Safe Completion label. DUAL‑Bench uniquely combines all three, providing a more realistic assessment of multimodal safety‑utility trade‑offs.

Insights & Future Directions

The study highlights two critical challenges for VLM safety:

- Over‑refusal: Simple rule‑based or binary classifiers lead to excessive refusals, harming utility.

- Robustness to Visual Perturbations: Small changes (e.g., cropping, rotation) can bypass or trigger safety filters, exposing brittleness.

The authors suggest moving beyond binary refusal mechanisms toward multi‑step dialogue or conditional generation that can separate benign and harmful components. They also advocate for training objectives that explicitly penalize over‑refusal while maintaining robustness, possibly via adversarial visual perturbations and human‑in‑the‑loop feedback.

Contribution Summary

- Release of a large‑scale multimodal dual‑use benchmark (DUAL‑Bench) with 192 k harmful images, 14 k curated benchmark instances, and 5 semantics‑preserving perturbations per image.

- Definition of a three‑label response space (Direct Answer, Refusal, Safe Completion) and associated metrics (RR, DAR, ΔIR, SCR).

- Comprehensive evaluation of 18 VLMs, revealing that even the strongest models achieve sub‑15 % safe completion in dual‑use contexts.

- Open‑source benchmark suite to enable reproducible, fair comparisons and to stimulate research on nuanced multimodal alignment.

In conclusion, DUAL‑Bench fills a critical gap in multimodal safety evaluation by quantifying over‑refusal and robustness under realistic visual variations. The findings underscore that current VLMs are far from achieving a balanced “safe completion” behavior, motivating future work on more granular alignment strategies that can simultaneously preserve usefulness and uphold safety.

Comments & Academic Discussion

Loading comments...

Leave a Comment