Mamba2D: A Natively Multi-Dimensional State-Space Model for Vision Tasks

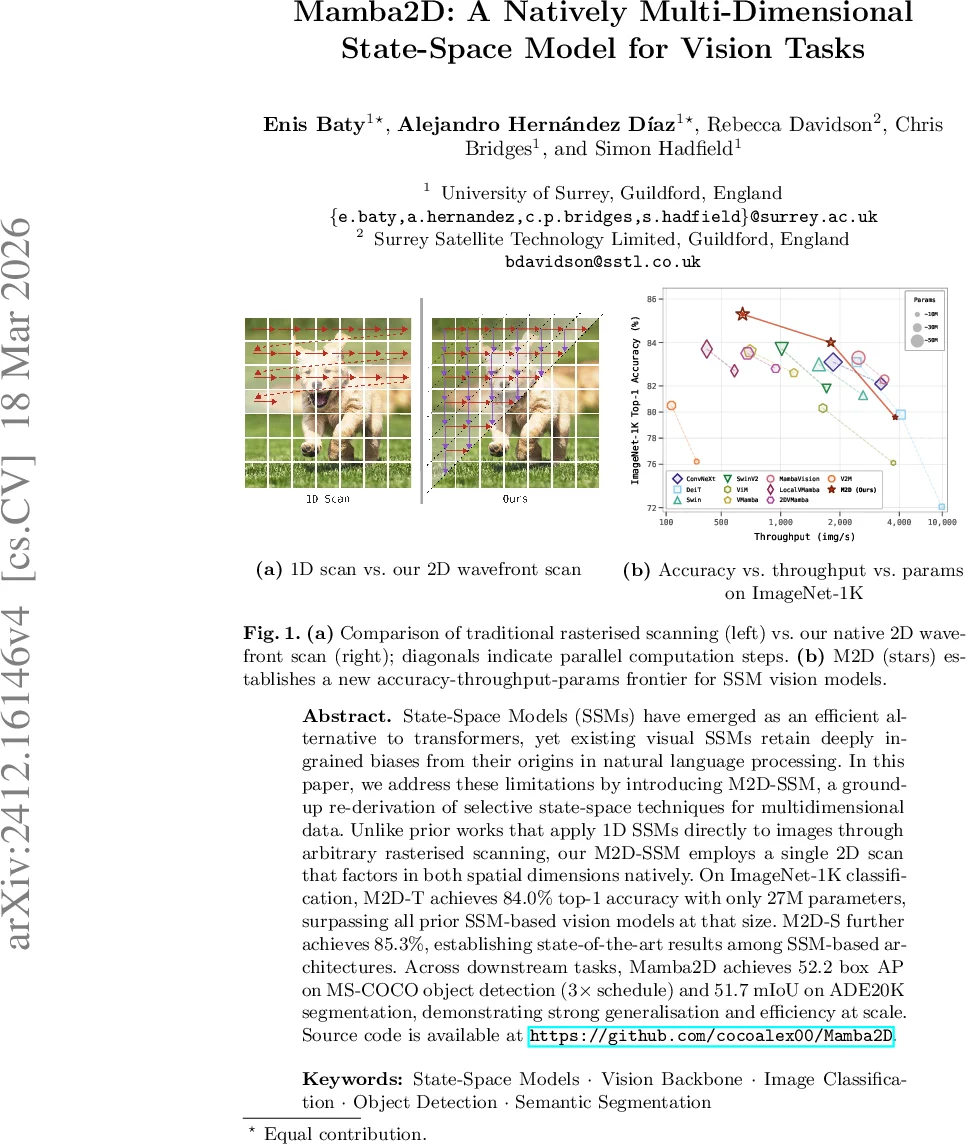

State-Space Models (SSMs) have emerged as an efficient alternative to transformers, yet existing visual SSMs retain deeply ingrained biases from their origins in natural language processing. In this paper, we address these limitations by introducing M2D-SSM, a ground-up re-derivation of selective state-space techniques for multidimensional data. Unlike prior works that apply 1D SSMs directly to images through arbitrary rasterised scanning, our M2D-SSM employs a single 2D scan that factors in both spatial dimensions natively. On ImageNet-1K classification, M2D-T achieves 84.0% top-1 accuracy with only 27M parameters, surpassing all prior SSM-based vision models at that size. M2D-S further achieves 85.3%, establishing state-of-the-art results among SSM-based architectures. Across downstream tasks, Mamba2D achieves 52.2 box AP on MS-COCO object detection (3$\times$ schedule) and 51.7 mIoU on ADE20K segmentation, demonstrating strong generalisation and efficiency at scale. Source code is available at https://github.com/cocoalex00/Mamba2D.

💡 Research Summary

The paper introduces Mamba2D, a novel vision backbone built on a truly two‑dimensional state‑space model (2D‑SSM). Existing visual SSMs inherit the one‑dimensional (1D) formulation of language‑oriented SSMs and therefore process images by flattening them into a rasterised sequence. This flattening destroys the native spatial relationships between neighboring pixels and forces the recurrent memory of the SSM to decay exponentially over the artificial 1D distance, limiting the model’s ability to capture local context.
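To make the flattening problem concrete, the sketch below measures how far apart two neighbouring pixels end up once an image is rasterised row by row. The grid width of 14 and the decay factor of 0.9 are arbitrary illustrative values, and `raster_index` and `raster_distance` are hypothetical helper names; none of these come from the paper.

```python
def raster_index(row: int, col: int, width: int) -> int:
    """Position of pixel (row, col) in a row-major (raster) flattening."""
    return row * width + col


def raster_distance(p: tuple, q: tuple, width: int) -> int:
    """Number of sequence steps separating two pixels after flattening."""
    return abs(raster_index(*p, width) - raster_index(*q, width))


# Illustrative values only (assumptions, not taken from the paper).
width = 14   # patches per row
decay = 0.9  # per-step decay of a scalar 1D recurrence

horizontal = raster_distance((5, 5), (5, 6), width)  # right-hand neighbour
vertical = raster_distance((5, 5), (6, 5), width)    # neighbour directly below

print("horizontal steps:", horizontal, "retained:", decay ** horizontal)
print("vertical steps:  ", vertical, "retained:", decay ** vertical)
```

Under these assumptions the horizontal neighbour is one step away while the vertical neighbour is a full row width away, so a signal passed between two vertically adjacent pixels is attenuated far more than one passed between horizontally adjacent pixels, even though both pairs are equally close in the image.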

Mamba2D addresses these shortcomings by deriving a 2D‑SSM from first principles. The authors start from the continuous‑time linear ODE formulation of a standard SSM and introduce two independent partial derivatives—one along the vertical axis (t) and one along the horizontal axis (z). After applying the Zero‑Order‑Hold (ZOH) discretisation separately to each axis, they obtain two discrete recurrences that update the same hidden state h(t, z) from its top neighbour h(t‑Δ_t, z) and its left neighbour h(t, z‑Δ_z). The updates are combined with a fixed ½ weighting, yielding the core 2D recurrence:

h(t, z) = ½
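The summary's formula is cut off here, so the following is only a rough sketch of a recurrence of the kind described in the preceding paragraph, not the paper's exact update. It assumes a scalar hidden state, fixed (input-independent) coefficients `a_t` and `a_z`, a single input weight `b` shared by both axes, and zero-valued states outside the image; the function name `scan_2d` is hypothetical. The actual model is selective, so its parameters would depend on the input.

```python
import numpy as np


def scan_2d(x: np.ndarray, a_t: float, a_z: float, b: float) -> np.ndarray:
    """Toy 2D recurrence: each state averages a vertical and a horizontal update.

    h[i, j] = 0.5 * (a_t * h[i-1, j] + b * x[i, j])
            + 0.5 * (a_z * h[i, j-1] + b * x[i, j])

    Assumptions (not from the paper): scalar state, constant coefficients,
    zero boundary states above the first row and left of the first column.
    """
    rows, cols = x.shape
    h = np.zeros((rows, cols), dtype=float)
    for i in range(rows):
        for j in range(cols):
            top = h[i - 1, j] if i > 0 else 0.0   # state of the top neighbour
            left = h[i, j - 1] if j > 0 else 0.0  # state of the left neighbour
            vertical = a_t * top + b * x[i, j]
            horizontal = a_z * left + b * x[i, j]
            h[i, j] = 0.5 * vertical + 0.5 * horizontal
    return h


# Impulse response: a single non-zero input in the top-left corner.
x = np.zeros((3, 3))
x[0, 0] = 1.0
h = scan_2d(x, a_t=0.8, a_z=0.8, b=1.0)
print(h)
```

In this toy setting the impulse spreads both downwards and rightwards in a single pass, and a vertically adjacent position receives the same contribution as a horizontally adjacent one, which is the property a rasterised 1D scan lacks.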
