Towards Clinical Practice in CT-Based Pulmonary Disease Screening: An Efficient and Reliable Framework

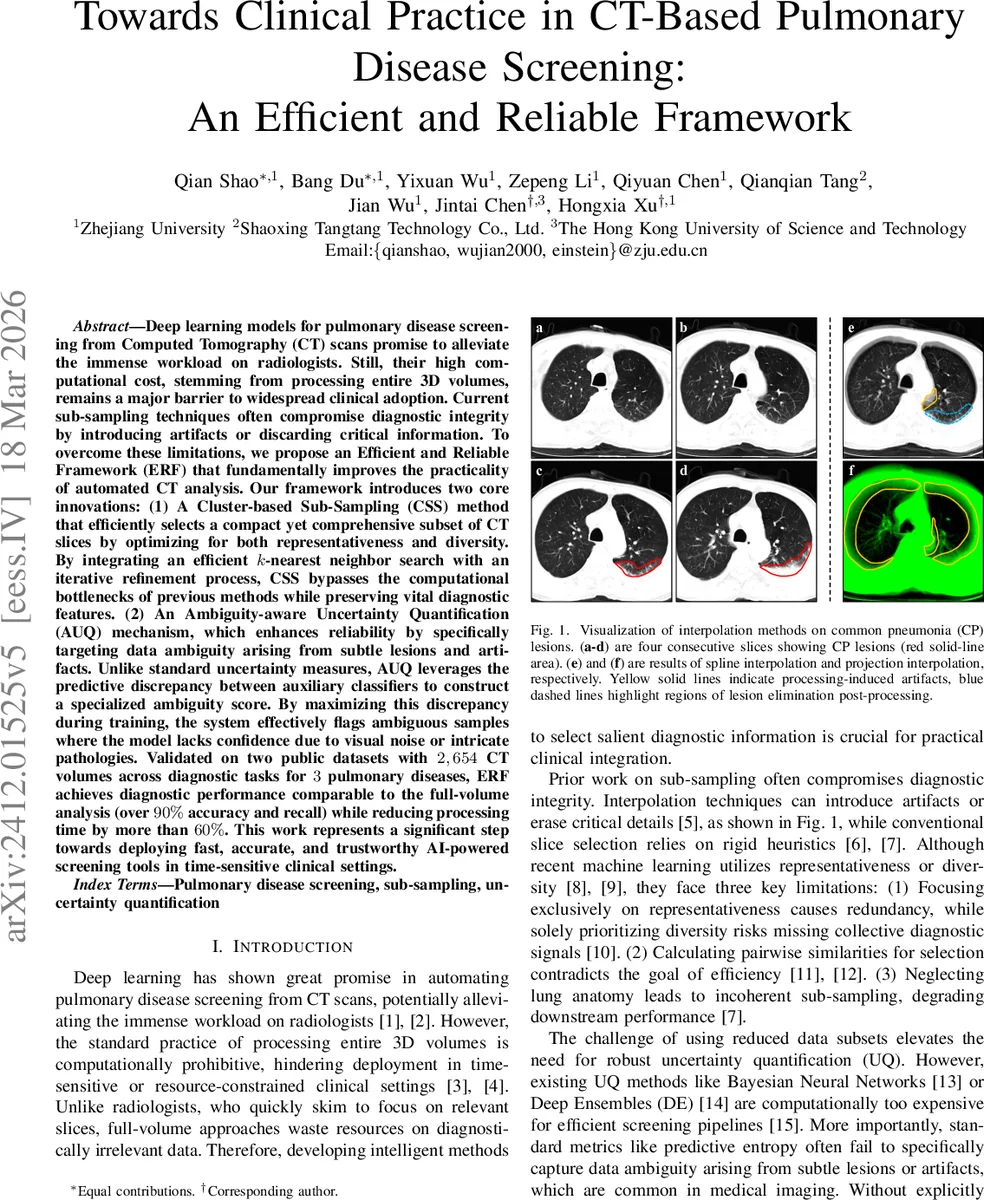

Deep learning models for pulmonary disease screening from Computed Tomography (CT) scans promise to alleviate the immense workload on radiologists. Still, their high computational cost, stemming from processing entire 3D volumes, remains a major barrier to widespread clinical adoption. Current sub-sampling techniques often compromise diagnostic integrity by introducing artifacts or discarding critical information. To overcome these limitations, we propose an Efficient and Reliable Framework (ERF) that fundamentally improves the practicality of automated CT analysis. Our framework introduces two core innovations: (1) A Cluster-based Sub-Sampling (CSS) method that efficiently selects a compact yet comprehensive subset of CT slices by optimizing for both representativeness and diversity. By integrating an efficient k-nearest neighbor search with an iterative refinement process, CSS bypasses the computational bottlenecks of previous methods while preserving vital diagnostic features. (2) An Ambiguity-aware Uncertainty Quantification (AUQ) mechanism, which enhances reliability by specifically targeting data ambiguity arising from subtle lesions and artifacts. Unlike standard uncertainty measures, AUQ leverages the predictive discrepancy between auxiliary classifiers to construct a specialized ambiguity score. By maximizing this discrepancy during training, the system effectively flags ambiguous samples where the model lacks confidence due to visual noise or intricate pathologies. Validated on two public datasets with 2,654 CT volumes across diagnostic tasks for 3 pulmonary diseases, ERF achieves diagnostic performance comparable to the full-volume analysis (over 90% accuracy and recall) while reducing processing time by more than 60%. This work represents a significant step towards deploying fast, accurate, and trustworthy AI-powered screening tools in time-sensitive clinical settings.

💡 Research Summary

The paper addresses two major obstacles that have prevented deep‑learning‑based pulmonary disease screening from being widely deployed in clinical practice: the prohibitive computational cost of processing full‑volume CT scans and the lack of reliable uncertainty estimation when only a subset of slices is used. To overcome these challenges, the authors propose an Efficient and Reliable Framework (ERF) that consists of two novel components: (1) Cluster‑based Sub‑Sampling (CSS) and (2) Ambiguity‑aware Uncertainty Quantification (AUQ).

Cluster‑based Sub‑Sampling (CSS)

CSS first partitions each CT volume into three anatomical regions (upper, middle, lower) using a fixed ratio (0.25 : 0.15 : 0.60). Within each region, a frozen MedCLIP‑ViT encoder extracts a d‑dimensional feature vector for every slice, which is L2‑normalized to enable distance‑based operations. The slices are then clustered with K‑Means; each cluster is represented by a “density peak” – the slice with the smallest average distance to its k‑nearest neighbours. To compute these distances efficiently, the authors employ Hierarchical Navigable Small World (HNSW) graphs for approximate k‑NN search, reducing the complexity from O(n²) to near‑linear time.
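The region split and density-peak selection described above can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: it assumes that cluster membership is already given (e.g. by K‑Means), that density is computed within each cluster, and that a brute-force neighbour search stands in for HNSW. The function names and the rounding rule in `split_regions` are hypothetical.

```python
import math

def split_regions(n_slices, ratios=(0.25, 0.15, 0.60)):
    """Split slice indices 0..n-1 into contiguous upper/middle/lower regions.
    The rounding rule is an assumption; the summary only gives the ratio."""
    upper_end = round(n_slices * ratios[0])
    middle_end = upper_end + round(n_slices * ratios[1])
    idx = list(range(n_slices))
    return idx[:upper_end], idx[upper_end:middle_end], idx[middle_end:]

def l2_normalize(v):
    """Scale a feature vector to unit L2 norm (zero vectors are left unchanged)."""
    norm = math.sqrt(sum(x * x for x in v)) or 1.0
    return [x / norm for x in v]

def knn_density(features, i, k):
    """Average Euclidean distance from item i to its k nearest neighbours.
    Brute force, O(n) per query; an approximate index such as HNSW would replace this."""
    dists = sorted(math.dist(features[i], f) for j, f in enumerate(features) if j != i)
    nearest = dists[:k]
    return sum(nearest) / len(nearest) if nearest else 0.0

def density_peak(features, members, k=2):
    """Return the slice index in `members` with the smallest average k-NN distance."""
    cluster = [features[m] for m in members]
    scores = [knn_density(cluster, i, k) for i in range(len(cluster))]
    return members[scores.index(min(scores))]

# Toy example: four 2-D "slice features", three of them close together.
feats = [l2_normalize(v) for v in [(1, 0), (0.9, 0.1), (0.95, 0.05), (0, 1)]]
peak = density_peak(feats, [0, 1, 2, 3], k=2)  # the slice in the middle of the tight group
```

In this toy case the peak is the slice that sits between the two other nearby slices, which is the "most representative" member in the sense used above.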

Selecting only density peaks would lead to redundancy, because the peaks of neighbouring clusters may still be similar to one another. An iterative refinement step is therefore introduced. At iteration t, a diversity regularizer Φ(z, t) penalizes a candidate slice z for lying too close to any slice already selected in previous iterations (excluding the slice selected for z's own cluster). Φ is smoothed across iterations with an exponential moving average (EMA) controlled by a momentum β. The final selection for each cluster maximizes a joint objective that balances representativeness (negative density) against diversity (λ·Φ). The search space is limited to the h‑nearest neighbours of the currently selected slice, which further reduces computation. After T iterations, a compact set D̃ of m ≪ n slices is obtained. The authors report that CSS can select 64 slices per volume while preserving more than 98 % of the diagnostic performance of the full‑volume model, and that it remains robust when the budget is reduced to 32 slices.
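One possible reading of this refinement loop is sketched below. The summary does not give the exact form of Φ, so the code makes several assumptions that should not be taken as the paper's definition: Φ is the EMA of a candidate's minimum distance to the slices currently selected for other clusters, density is the average k-NN distance over all features, and candidates are the current slice plus its h nearest neighbours within the same cluster. The function name `refine` and all default values are hypothetical.

```python
import math

def avg_knn_dist(feats, i, k):
    """Average distance from item i to its k nearest neighbours (brute force)."""
    d = sorted(math.dist(feats[i], f) for j, f in enumerate(feats) if j != i)[:k]
    return sum(d) / len(d) if d else 0.0

def refine(feats, clusters, init, T=5, k=2, h=3, lam=1.0, beta=0.9):
    """clusters: list of lists of slice indices; init: one selected index per cluster.
    Returns one refined selection per cluster after T iterations."""
    selected = list(init)
    density = [avg_knn_dist(feats, i, k) for i in range(len(feats))]
    phi = [0.0] * len(feats)  # EMA-smoothed diversity term, one value per slice
    for _ in range(T):
        for c, members in enumerate(clusters):
            # Slices currently chosen for the *other* clusters.
            others = [s for j, s in enumerate(selected) if j != c]
            cur = selected[c]
            # Candidates: the current slice and its h nearest neighbours in the cluster.
            cands = sorted(members, key=lambda m: math.dist(feats[cur], feats[m]))[:h + 1]
            for z in cands:
                raw = min((math.dist(feats[z], feats[s]) for s in others), default=0.0)
                phi[z] = beta * phi[z] + (1 - beta) * raw  # EMA with momentum beta
            # Joint objective: representativeness (negative density) + lam * diversity.
            selected[c] = max(cands, key=lambda z: -density[z] + lam * phi[z])
    return selected

# Toy 1-D features forming two clusters of three slices each.
feats = [(0.0,), (0.1,), (0.2,), (1.0,), (1.1,), (1.2,)]
clusters = [[0, 1, 2], [3, 4, 5]]
rep_only = refine(feats, clusters, init=[1, 4], lam=0.0)            # density only
diverse = refine(feats, clusters, init=[1, 4], lam=100.0, beta=0.0)  # diversity dominates
```

With λ = 0 the selection stays at the density peaks, while a large λ pushes the two selections to opposite ends of their clusters, which illustrates the trade-off the joint objective is meant to control.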

Ambiguity‑aware Uncertainty Quantification (AUQ)

AUQ tackles the problem that standard uncertainty metrics (predictive entropy, MC‑Dropout, deep ensembles) either require multiple forward passes or fail to capture the specific type of ambiguity that arises from subtle lesions or imaging artifacts. The framework augments the primary classifier G with two auxiliary classifiers G₁ and G₂ that share the same feature extractor F but have independent prediction heads. During training, each auxiliary head minimizes its own cross‑entropy loss while simultaneously maximizing a discrepancy term d_dis, defined as the average L₁ distance among the three probability vectors (p₁, p₂, p). This encourages the auxiliary heads to diverge on ambiguous inputs while still learning the correct labels. Importantly, gradients from the discrepancy term are detached from the backbone to avoid degrading the shared representation.
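The training signal for the auxiliary heads can be illustrated on plain probability vectors. This sketch assumes that "average L₁ distance among the three probability vectors" means the mean over all pairs, and it introduces a hypothetical weight `alpha` on the discrepancy term. It does not show the gradient detachment from the backbone, which would be handled by the deep-learning framework in a real implementation.

```python
import math
from itertools import combinations

def l1(p, q):
    """L1 distance between two probability vectors."""
    return sum(abs(a - b) for a, b in zip(p, q))

def discrepancy(*probs):
    """Mean pairwise L1 distance among the given probability vectors (assumed form)."""
    pairs = list(combinations(probs, 2))
    return sum(l1(p, q) for p, q in pairs) / len(pairs)

def cross_entropy(p, label, eps=1e-12):
    """Negative log-probability assigned to the true class."""
    return -math.log(p[label] + eps)

def auxiliary_loss(p1, p2, p, label, alpha=1.0):
    """Cross-entropy of both auxiliary heads minus the weighted discrepancy.
    Minimizing this loss therefore maximizes the discrepancy term.
    `alpha` is a hypothetical weighting, not taken from the paper."""
    ce = cross_entropy(p1, label) + cross_entropy(p2, label)
    return ce - alpha * discrepancy(p1, p2, p)

# Heads that agree contribute no discrepancy; heads that disagree lower the loss.
agree = auxiliary_loss([0.8, 0.2], [0.8, 0.2], [0.8, 0.2], label=0)
disagree_term = discrepancy([0.9, 0.1], [0.6, 0.4], [0.8, 0.2])
```

Because the cross-entropy terms still pull both heads toward the correct label, the heads can only diverge substantially on inputs where the label is weakly supported, which is the intuition behind using their disagreement as an ambiguity signal.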

At inference time, the uncertainty score U(x) is computed as the sum of the discrepancy d_dis(p₁, p₂) and the entropy H(p) of the primary classifier. High U indicates that the input lies near decision boundaries that are sensitive to subtle visual variations, i.e., a region of data ambiguity. Experiments show that AUQ outperforms entropy‑only, MC‑Dropout, and deep ensembles, especially on the hardest task (adenocarcinoma vs. normal), achieving over 94 % accuracy where other methods fall below 90 %.
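The inference-time score follows directly from the description above: the L₁ discrepancy between the two auxiliary predictions plus the entropy of the primary prediction. The sketch below uses the two-argument form d_dis(p₁, p₂) stated in this paragraph. The review threshold and the helper `flag_for_review` are hypothetical additions for illustration.

```python
import math

def l1(p, q):
    """L1 distance between two probability vectors."""
    return sum(abs(a - b) for a, b in zip(p, q))

def entropy(p, eps=1e-12):
    """Shannon entropy (natural log) of a probability vector."""
    return -sum(x * math.log(x + eps) for x in p)

def uncertainty(p1, p2, p):
    """U(x) = d_dis(p1, p2) + H(p): auxiliary disagreement plus primary entropy."""
    return l1(p1, p2) + entropy(p)

def flag_for_review(scores, threshold):
    """Indices of samples whose score exceeds a (hypothetical) review threshold."""
    return [i for i, u in enumerate(scores) if u > threshold]

# A confident, consistent prediction versus an ambiguous one.
u_clear = uncertainty([1.0, 0.0], [1.0, 0.0], [1.0, 0.0])
u_ambiguous = uncertainty([0.9, 0.1], [0.1, 0.9], [0.5, 0.5])
flagged = flag_for_review([u_clear, u_ambiguous], threshold=1.0)
```

All three heads are evaluated in a single forward pass through the shared feature extractor, so the score does not need the repeated passes that MC‑Dropout or deep ensembles require.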

Experimental Validation

The authors evaluate ERF on two public datasets (SARS‑CoV‑2 and LUNG‑PET‑CT‑Dx), combined into a corpus of 2,654 CT scans spanning four categories: novel coronavirus pneumonia (NCP), common pneumonia (CP), adenocarcinoma (AC), and normal. Three binary classification tasks are defined: NCP vs. normal, CP vs. normal, and AC vs. normal. A 5‑fold cross‑validation protocol is used, and all methods share the same 3D ResNet‑50 backbone.

Baseline sub‑sampling methods include three interpolation techniques (Projection, Linear, Spline), two heuristic slice‑selection strategies (Subset Slice Selection, Even Slice Selection), and two learning‑based approaches (CoreSet, ActiveFT). The full‑volume model serves as an upper bound. Results (Table I) demonstrate that CSS consistently achieves the highest accuracy (≈98.9 %–99.1 %) and recall (≈98.8 %–99.0 %) among all 64‑slice methods, closely matching the full‑volume performance (≈99.1 %). When the slice budget is halved to 32, CSS degrades only modestly (≈90 % accuracy), whereas other methods drop below 85 %.

For uncertainty estimation (Table III), AUQ surpasses entropy, MC‑Dropout, and deep ensembles across all tasks, with the most pronounced gains on the adenocarcinoma task, where it reaches 94.9 % accuracy compared to 73 %–78 % for the alternatives. Visualizations illustrate that CSS selects slices containing the most informative lesions, and AUQ highlights ambiguous regions, providing clinicians with interpretable cues.

Discussion and Limitations

CSS relies on approximate k‑NN search; while HNSW is fast, its approximation error may affect density estimation in edge cases. AUQ uses only two auxiliary heads; adding more could improve the fidelity of the ambiguity score but would increase training complexity. The current design selects 2D slices independently, so full 3D spatial continuity is not guaranteed; future work could incorporate 3D clustering or inter‑slice smoothness constraints.

Conclusion

ERF successfully combines (i) an anatomically aware, HNSW‑accelerated density‑and‑diversity optimization for slice selection and (ii) a discrepancy‑driven, low‑overhead uncertainty quantifier that explicitly targets data ambiguity. The framework delivers near‑full‑volume diagnostic accuracy while cutting inference time by more than 60 %, and it provides reliable uncertainty estimates that can flag cases needing radiologist review. These advances bring AI‑assisted CT screening closer to real‑world, time‑sensitive clinical deployment, and open avenues for multimodal integration, dynamic budgeting, and prospective validation in larger hospital networks.
