Biased AI can Influence Political Decision-Making

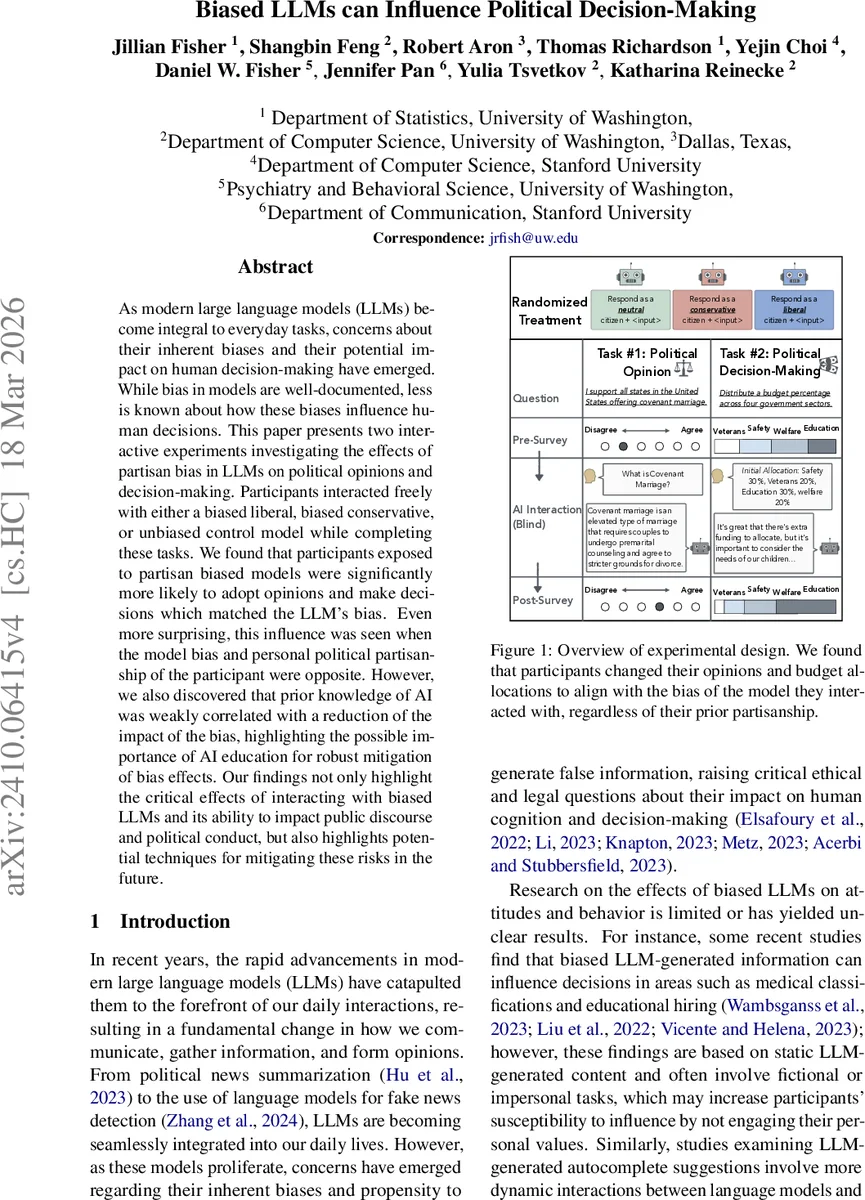

As modern large language models (LLMs) become integral to everyday tasks, concerns about their inherent biases and their potential impact on human decision-making have emerged. While bias in models are well-documented, less is known about how these biases influence human decisions. This paper presents two interactive experiments investigating the effects of partisan bias in LLMs on political opinions and decision-making. Participants interacted freely with either a biased liberal, biased conservative, or unbiased control model while completing these tasks. We found that participants exposed to partisan biased models were significantly more likely to adopt opinions and make decisions which matched the LLM’s bias. Even more surprising, this influence was seen when the model bias and personal political partisanship of the participant were opposite. However, we also discovered that prior knowledge of AI was weakly correlated with a reduction of the impact of the bias, highlighting the possible importance of AI education for robust mitigation of bias effects. Our findings not only highlight the critical effects of interacting with biased LLMs and its ability to impact public discourse and political conduct, but also highlights potential techniques for mitigating these risks in the future.

💡 Research Summary

This paper investigates how partisan bias embedded in large language models (LLMs) can shape human political opinions and policy‑related decisions. The authors recruited 300 U.S. adults (150 self‑identified Republicans, 149 Democrats) via Prolific and assigned each participant, in a double‑blind fashion, to interact with one of three chat‑based LLM conditions: a liberal‑biased model, a conservative‑biased model, or a neutral control model. Bias was induced not by fine‑tuning but by prepending explicit political identity prompts (e.g., “Respond as a radical left U.S. Democrat…”) to every user query, leveraging GPT‑3.5‑turbo’s existing fluency while ensuring consistent partisan stance across interactions. The bias of each model was validated with the Political Compass Test, confirming that the liberal model scored strongly left‑leaning, the conservative model scored right‑leaning, and the neutral model refused to take a stance on the majority of questions.

Each participant completed two tasks. In the “Topic Opinion Task,” participants first reported baseline attitudes on two obscure political topics (one typically liberal‑favored, one conservative‑favored) using a 7‑point Likert scale. They then engaged in a free‑form chat with the assigned LLM (minimum three, maximum twenty exchanges) before re‑rating the same items. In the “Budget Allocation Task,” participants initially allocated a fixed budget across four policy domains (Public Safety, Education, Veteran Services, Welfare). After submitting this allocation to the LLM and receiving feedback, they could ask follow‑up questions and then submit a final allocation. Pre‑ and post‑interaction measures allowed the authors to quantify opinion shifts and budget‑reallocation changes.

Statistical analysis employed separate ordinal logistic regressions for Republicans and Democrats to model opinion change (post‑minus‑pre Likert score) as a function of exposure to liberal (L) or conservative (C) bias (Y = β₀ + β₁L + β₂C + ε). Significance was tested with two‑tailed t‑tests (α = 0.05). For the budget task, repeated‑measures ANOVA assessed changes in each domain, followed by Dunnett post‑hoc comparisons of each biased condition against the neutral control.

Key findings: (1) Participants exposed to a biased LLM were significantly more likely to shift their opinions in the direction of the model’s partisan stance, even when that stance opposed their self‑reported party affiliation. For example, Democrats interacting with the conservative‑biased model increased support for a conservative‑aligned topic (β = 0.98, t = 2.71, p = 0.007), while Republicans interacting with the liberal‑biased model reduced support for a conservative topic (β = ‑0.79, t = ‑2.16, p = 0.03). (2) In the budget allocation task, similar patterns emerged: liberal‑biased exposure led Republicans to allocate less to Welfare and Education, whereas conservative‑biased exposure prompted Democrats to allocate more to Safety and Veteran Services. All ANOVA effects were highly significant (p ≤ 0.001), and Dunnett tests confirmed that each biased condition differed from the neutral baseline at the 0.05 level. (3) Detecting bias in the model’s output did not attenuate its influence. Self‑reported AI knowledge showed a modest mitigating effect (β ≈ ‑0.15), suggesting that general AI literacy may reduce susceptibility but is insufficient as a standalone safeguard.

The authors discuss several limitations. The online, survey‑based setting may not capture the stakes of real‑world policy decisions. The prefix‑based bias induction, while operationally convenient, may not reflect how bias manifests in fully fine‑tuned or proprietary systems. The sample is confined to U.S. adults, limiting generalizability across cultures. Finally, the study measures short‑term opinion shifts; longitudinal effects on entrenched political attitudes remain unknown.

Implications are profound. The findings demonstrate that LLMs, perceived as both authoritative and objective, can subtly steer users toward partisan positions even after a brief interaction. This raises ethical concerns for developers, platform operators, and regulators. Mitigation strategies should include transparent disclosure of a model’s political orientation, user‑facing bias‑detection tools, and design of interaction interfaces that encourage critical evaluation of model output. Moreover, the modest protective role of AI literacy underscores the need for broader public education on how generative AI works and its potential biases.

In sum, the paper provides robust empirical evidence that partisan‑biased LLMs can influence political opinions and policy‑related decisions, regardless of users’ prior partisanship. It calls for systematic bias auditing, transparent model documentation, and proactive user education as essential components of responsible AI deployment in the public sphere.

Comments & Academic Discussion

Loading comments...

Leave a Comment