OpenResearcher: A Fully Open Pipeline for Long-Horizon Deep Research Trajectory Synthesis

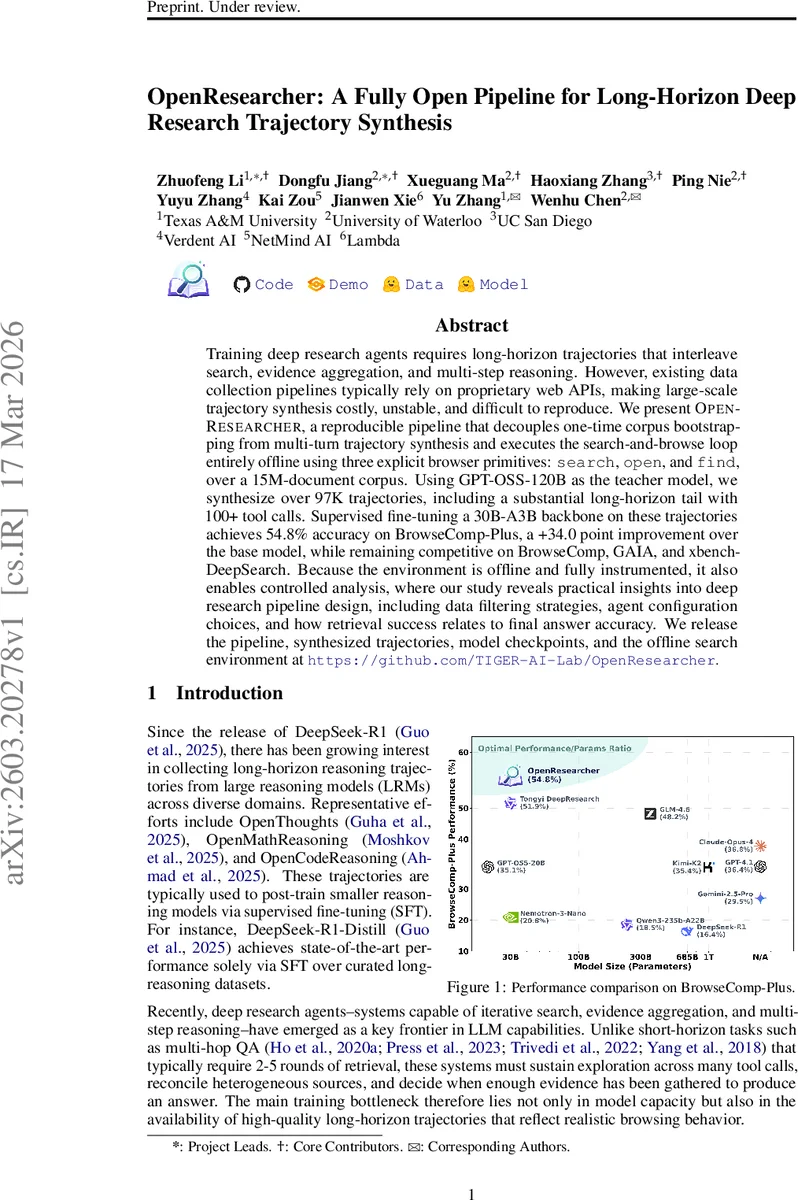

Training deep research agents requires long-horizon trajectories that interleave search, evidence aggregation, and multi-step reasoning. However, existing data collection pipelines typically rely on proprietary web APIs, making large-scale trajectory synthesis costly, unstable, and difficult to reproduce. We present OpenResearcher, a reproducible pipeline that decouples one-time corpus bootstrapping from multi-turn trajectory synthesis and executes the search-and-browse loop entirely offline using three explicit browser primitives: search, open, and find, over a 15M-document corpus. Using GPT-OSS-120B as the teacher model, we synthesize over 97K trajectories, including a substantial long-horizon tail with 100+ tool calls. Supervised fine-tuning a 30B-A3B backbone on these trajectories achieves 54.8% accuracy on BrowseComp-Plus, a +34.0 point improvement over the base model, while remaining competitive on BrowseComp, GAIA, and xbench-DeepSearch. Because the environment is offline and fully instrumented, it also enables controlled analysis, where our study reveals practical insights into deep research pipeline design, including data filtering strategies, agent configuration choices, and how retrieval success relates to final answer accuracy. We release the pipeline, synthesized trajectories, model checkpoints, and the offline search environment at https://github.com/TIGER-AI-Lab/OpenResearcher.

💡 Research Summary

OpenResearcher tackles the high cost, instability, and lack of reproducibility that have plagued the creation of long‑horizon research trajectories for training deep research agents. The authors split the pipeline into two distinct phases: (1) a one‑time online bootstrapping stage that gathers “gold” documents for a curated set of challenging questions, and (2) an entirely offline trajectory synthesis stage that operates on a 15‑million‑document corpus using only three primitive browser tools—search, open, and find.

In the bootstrapping phase the authors start from 6 K questions drawn from the MiroVerse‑v0.1 dataset, which are specifically designed to require extensive multi‑hop reasoning. For each question they construct a query that concatenates the question and its reference answer, then invoke the Serper web‑search API a single time to retrieve candidate pages. After cleaning, deduplication, and manual verification they obtain roughly 10 K gold documents that collectively contain the evidence needed to answer the questions. These gold documents are merged with a large “distractor” set of 15 M documents from the FineWeb dump, yielding an offline corpus that mimics the noise and scale of the real web while guaranteeing coverage of the target evidence.

The offline phase introduces a minimal yet expressive browsing abstraction. The search tool returns the top‑K results (title, URL, snippet) for a natural‑language query, the open tool fetches the full text of a selected URL, and the find tool performs exact string matching within the currently opened document. This three‑step hierarchy mirrors how humans conduct web research: broad query → document inspection → precise evidence location. By limiting the teacher model (GPT‑OSS‑120B) to these primitives, the system forces the model to learn not only what to retrieve but also how to navigate and verify information.

Trajectory generation proceeds by prompting the teacher model to reason step‑by‑step, invoke a tool, observe the result, and repeat until it is confident enough to produce a final answer. The model never sees the reference answer during generation; it must reconstruct it through iterative search and reasoning. Generated trajectories are filtered for (i) context‑length overflow, (ii) malformed tool calls, and (iii) failure to reach a conclusive answer within a fixed interaction budget. After filtering, more than 97 K trajectories remain, with a substantial tail of examples requiring over 100 tool calls—far longer than prior datasets that typically cap at 5 calls.

For supervised fine‑tuning, the authors select the 55 K trajectories that yield correct final answers and train a 30 B‑parameter student model (Nemotron‑3‑Nano‑30B‑A3B) using Megatron‑LM on eight NVIDIA H100 GPUs for roughly eight hours. The training uses a fixed learning rate of 5 × 10⁻⁵ and packs sequences up to 256 K tokens to accommodate the long‑horizon data. The resulting model achieves 54.8 % accuracy on the BrowseComp‑Plus benchmark, a 34‑point gain over the base model and surpassing strong proprietary baselines such as GPT‑4.1, Claude‑4‑Opus, and Gemini‑2.5‑Pro. On live‑web benchmarks (BrowseComp, GAIA, xbench‑DeepSearch) the model remains competitive, often matching or exceeding existing open‑source agents.

Because the entire environment is offline and fully instrumented, the authors conduct a series of controlled analyses (RQs 1‑5). They explore how trajectory filtering impacts downstream performance, how the proportion of gold documents versus distractors affects retrieval success, the trade‑off between turn budget and answer quality, the contribution of each browser primitive (search‑only vs. search+open vs. search+open+find), and the correlation between retrieval success (i.e., hitting a gold document) and final answer accuracy. Key findings include: (a) retaining only correct‑answer trajectories yields the largest performance boost; (b) even a modest number of gold documents (≈0.07 % of the corpus) provides sufficient coverage for most questions; (c) a turn budget of 150 steps is enough for >90 % of queries; (d) the find tool dramatically improves evidence localization, especially for long‑horizon tasks; and (e) retrieval success rates above 80 % correspond to a sharp increase in final accuracy.

In summary, OpenResearcher delivers four major contributions: (1) a cost‑effective, reproducible offline search infrastructure built after a single online bootstrapping pass; (2) a minimal yet powerful browser abstraction that captures realistic research behavior; (3) a large, high‑quality dataset of long‑horizon research trajectories; and (4) empirical insights into data construction, agent configuration, and the relationship between retrieval and answer quality. By open‑sourcing the pipeline, data, model checkpoints, and offline search engine, the work provides the community with a solid foundation for systematic study of search‑augmented language models and paves the way for future advances in deep research agents.

Comments & Academic Discussion

Loading comments...

Leave a Comment