SA-CycleGAN-2.5D: Self-Attention CycleGAN with Tri-Planar Context for Multi-Site MRI Harmonization


Authors: Ishrith Gowda, Chunwei Liu

Preprint. Under review at MICCAI 2026

Ishrith Gowda, Department of Electrical Engineering and Computer Sciences, University of California, Berkeley, Berkeley, CA 94720 (ishrithgowda@berkeley.edu)
Chunwei Liu, Department of Computer Science, Purdue University, West Lafayette, IN 47907 (chunwei@purdue.edu)

March 2026

Abstract

Multi-site neuroimaging analysis is fundamentally confounded by scanner-induced covariate shifts, in which the marginal distribution of voxel intensities $P(\mathbf{x})$ varies non-linearly across acquisition protocols while the conditional anatomy $P(\mathbf{y} \mid \mathbf{x})$ remains constant. This is particularly detrimental to radiomic reproducibility, where acquisition variance often exceeds biological (pathological) variance. Existing statistical harmonization methods (e.g., ComBat) operate in feature space, precluding spatial downstream tasks, while standard deep learning approaches are limited by local effective receptive fields (ERF) and fail to model the global intensity correlations characteristic of field-strength bias. We propose SA-CycleGAN-2.5D, a domain adaptation framework motivated by the $\mathcal{H}\Delta\mathcal{H}$-divergence bound of Ben-David et al., which integrates three architectural innovations: (1) a 2.5D tri-planar input that preserves through-plane gradients $\nabla_z$ at $O(HW)$ cost; (2) a U-ResNet generator with dense voxel-to-voxel self-attention, which overcomes the $O(\sqrt{L})$ effective-receptive-field limit of CNNs to model global scanner field biases; and (3) a spectrally-normalized discriminator that constrains the Lipschitz constant ($K_D \le 1$) for stable adversarial optimization. Evaluated on 654 glioma patients across two institutional domains (BraTS and UPenn-GBM), our method reduces Maximum Mean Discrepancy (MMD) by 99.1% ($1.729 \to 0.015$) and degrades domain classifier accuracy to near chance (59.7%).
Ablation confirms that global attention is statistically essential (Cohen's d = 1.32, p < 0.001) for the harder homogeneous-to-heterogeneous translation direction (the B → A → B cycle). By bridging 2D efficiency and 3D consistency, our framework yields voxel-level harmonized images that preserve tumor pathophysiology, enabling reproducible multi-center radiomic analysis.

Keywords: MRI harmonization · Scanner harmonization · Multi-site MRI · CycleGAN · Self-attention · 2.5D · Domain adaptation · Glioma · Brain tumor · Radiomics · Unpaired image translation

1 Introduction

Neurological oncology studies increasingly require aggregating data across institutions to achieve sufficient statistical power for treatment-response modeling, genomic correlation, and survival analysis. Yet multi-site MRI acquisition introduces systematic covariate shifts (differences in field strength (1.5T vs. 3T), vendor-specific gradient calibration, and site-specific protocol choices) that confound downstream analyses. Scanner-induced effects routinely exceed inter-subject biological variability in glioma cohorts [1], biasing radiomic signatures, degrading segmentation models trained across sites, and inflating false-discovery rates in statistical studies.

Voxel-level image harmonization, which produces an image indistinguishable from those acquired at a reference site while preserving the subject's underlying anatomy and pathology, offers a principled solution. Unlike feature-space corrections, voxel-level outputs are directly compatible with all downstream spatial tasks (segmentation, volumetric analysis, radiomics).
The challenge is threefold: site labels are often unavailable in federated or retrospective settings, paired traveling-subject data is impractical to collect at scale, and the domain shift involves both global bias-field effects and local contrast variations, requiring a model with both a global receptive field and structural awareness.

We present SA-CycleGAN-2.5D, which addresses these challenges by integrating domain adaptation theory with three targeted architectural innovations. Our framework motivates adversarial training via the $\mathcal{H}\Delta\mathcal{H}$-divergence bound [2], incorporates inter-slice context through 2.5D tri-planar encoding, and overcomes the receptive-field limitation of convolutional networks through dense self-attention. To our knowledge, this is the first work to quantify the statistical contribution of self-attention to MRI harmonization quality using a large-effect-size ablation (d = 1.13–1.32 in the harder translation direction, with d ≥ 1.00 in each of the four modalities).

Contributions.
1. 2.5D tri-planar input: a SliceEncoder25D that concatenates adjacent slices across four modalities (12 channels) [3], preserving inter-slice gradients $\nabla_z$ at 2D cost.
2. U-ResNet with pervasive self-attention: self-attention [4] at three of the nine bottleneck blocks plus one global module, with 11 CBAM [5] modules throughout, at only 3.4% parameter overhead (1.2M/35.1M).
3. Multi-axis evaluation: cycle consistency, domain separation (classifier + MMD + KS), and 512-feature radiomics concordance on 654 subjects across two glioma cohorts.
4. Domain adaptation framing: adversarial harmonization motivated by the $\mathcal{H}\Delta\mathcal{H}$-divergence bound [2], with a statistically rigorous ablation using bootstrapped confidence intervals, Bonferroni correction, and Cohen's d effect sizes.

2 Related Work

2.1 Statistical Harmonization

ComBat [6] models site effects as additive and multiplicative terms in a linear model, removing them via empirical Bayes estimation.
While effective for batch-effect correction in transcriptomics and later in neuroimaging [7], ComBat fundamentally operates in feature space and cannot produce harmonized images. Fortin et al. [7] adapted ComBat for diffusion MRI metrics and cortical thickness, and CovBat [8] extended it to covariance harmonization. All statistical methods share two critical limitations: they require explicit site labels (often unavailable in federated settings), and they cannot reconstruct the spatially harmonized images required by segmentation-based downstream tasks.

2.2 Deep Learning Harmonization

Supervised approaches such as DeepHarmony [9] leverage paired traveling-subject scans to directly learn a voxel-wise correction field, but the logistical overhead of multi-site traveling-subject acquisition limits scalability. Unpaired methods based on CycleGAN [10, 11] circumvent this requirement; Modanwal et al. [12] and Zhao et al. [13] demonstrated the viability of cycle-consistent translation for MRI harmonization, but these methods rely on purely convolutional generators whose effective receptive fields scale as $O(\sqrt{L})$ with depth $L$ [14], which is insufficient to model global field-strength biases. Contrastive unpaired translation (CUT) [15] improves patch-level fidelity but similarly lacks global context. Information-theoretic disentanglement approaches, CALAMITI [16] and HACA3 [17], separate anatomy from contrast encoding using variational bounds, achieving strong performance but requiring multi-contrast inputs and complex encoder-decoder pipelines that limit clinical deployment. Adversarial unlearning approaches [18] produce site-invariant features but no harmonized images, making them incompatible with spatial downstream tasks.
2.3 Self-Attention and Transformers in Medical Imaging

The limitations of local receptive fields in convolutional networks have driven the adoption of attention mechanisms for medical image analysis. SAGAN [4] introduced self-attention into generative networks, enabling long-range feature interactions. TransUNet [19] augmented the U-Net encoder with transformer layers for segmentation, while Swin-UNet [20] replaced convolutions entirely with shifted-window self-attention, achieving state-of-the-art results on medical segmentation benchmarks. However, global self-attention scales quadratically with the number of spatial positions, making it computationally prohibitive for high-resolution volumetric processing. Convolutional block attention modules (CBAM) [5] provide a lightweight alternative, with channel-spatial recalibration at negligible overhead.

SA-CycleGAN-2.5D occupies a complementary position: it retains the computational efficiency of convolutions for the bulk of processing, inserting full self-attention only at the bottleneck, where spatial resolution is lowest, while CBAM modules provide lightweight recalibration at every stage. This hybrid design achieves global context at manageable computational cost, which we quantify in Section 4.

2.4 Domain Adaptation Theory

The $\mathcal{H}\Delta\mathcal{H}$-divergence bound of Ben-David et al. [2] provides a theoretical foundation connecting domain shift to target-task performance, motivating the reduction of domain divergence as a principled objective. This bound has been applied to domain adaptation for medical image classification [18], but its application as a motivating framework for voxel-level image harmonization, where the generator serves as an empirical divergence-minimizing transport map, has not been previously formalized.
3 Methods

3.1 Problem Formulation

Let $\mathcal{D}_A = \{\mathbf{x}^A_i\}_{i=1}^{N_A}$ and $\mathcal{D}_B = \{\mathbf{x}^B_j\}_{j=1}^{N_B}$ be unpaired samples from source domain A (multi-site BraTS) and target domain B (single-site UPenn-GBM), respectively. Each sample $\mathbf{x} \in \mathbb{R}^{4 \times H \times W}$ is a four-modality (T1, T1CE, T2, FLAIR) 2D slice. We seek generators $G_{A \to B}: \mathcal{X}_A \to \mathcal{X}_B$ and $G_{B \to A}: \mathcal{X}_B \to \mathcal{X}_A$ that satisfy three criteria:
1. Domain alignment: $G_{A \to B}(\mathbf{x}^A)$ is indistinguishable from samples of $\mathcal{D}_B$ (reduced domain divergence).
2. Cycle consistency: $G_{B \to A}(G_{A \to B}(\mathbf{x}^A)) \approx \mathbf{x}^A$ (content preservation).
3. Anatomical fidelity: tumor morphology, gray-white matter contrast, and ventricular structure are preserved under transport.

Critically, no paired data, site labels, or traveling subjects are required.

3.2 Theoretical Motivation

We frame harmonization through the domain adaptation bound of Ben-David et al. [2]: for any hypothesis $h \in \mathcal{H}$, the target risk is bounded by

$$\epsilon_T(h) \le \epsilon_S(h) + \tfrac{1}{2}\, d_{\mathcal{H}\Delta\mathcal{H}}(\mathcal{D}_S, \mathcal{D}_T) + \lambda^* \tag{1}$$

where $d_{\mathcal{H}\Delta\mathcal{H}}$ is the $\mathcal{H}\Delta\mathcal{H}$-divergence measuring distributional discrepancy under the hypothesis class, and $\lambda^*$ is the error of the optimal joint hypothesis. The bound suggests that reducing the divergence between the source and target distributions in voxel space can improve downstream generalization on the target domain, provided that $\lambda^*$ remains small. Our adversarial discriminator $D_B$ is trained to distinguish real target-domain samples from generated ones. By simultaneously training $G_{A \to B}$ to fool $D_B$, we minimize an adversarial loss that serves as an empirical proxy for domain divergence. The generator does not directly optimize the $\mathcal{H}\Delta\mathcal{H}$-divergence (which is generally intractable to compute), but the adversarial game provides an implicit gradient signal toward distributions with lower divergence, without requiring paired data or site labels.
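The divergence term in Eq. (1) is not computed directly in this work. A common empirical stand-in from the same line of domain adaptation theory is the proxy A-distance, $\hat{d}_A = 2(1 - 2\epsilon)$, where $\epsilon$ is the held-out error of a classifier trained to separate the two domains. The sketch below applies that formula to the domain-classifier accuracies reported in Table 3; the resulting values are an illustration derived from those accuracies, not results reported by the authors, and folding errors above 0.5 back to $1 - \epsilon$ is a choice made for this sketch.

```python
def proxy_a_distance(domain_classifier_accuracy: float) -> float:
    """Proxy A-distance 2 * (1 - 2 * err) from a domain classifier's test accuracy.

    err = 0 (perfectly separable domains) gives 2.0; err = 0.5 (chance) gives 0.0.
    Errors above 0.5 are folded back to 1 - err, since flipping the classifier's
    output would do better; this folding is an assumption of the sketch.
    """
    if not 0.0 <= domain_classifier_accuracy <= 1.0:
        raise ValueError("accuracy must lie in [0, 1]")
    err = 1.0 - domain_classifier_accuracy
    err = min(err, 1.0 - err)
    return 2.0 * (1.0 - 2.0 * err)


# Domain-classifier accuracies taken from Table 3 (raw features, ours, ComBat).
for name, acc in [("raw", 0.984), ("harmonized", 0.597), ("ComBat", 0.750)]:
    print(f"{name:>10}: accuracy = {acc:.3f}, proxy A-distance = {proxy_a_distance(acc):.3f}")
```

With these accuracies the sketch yields roughly 1.94 for raw features, 0.39 after harmonization, and 1.00 for ComBat features, which restates the classifier results on the scale of the divergence term.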
Convolutional generators are further limited by their effective receptive field (ERF) [14]: the ERF grows as $O(\sqrt{L})$ with network depth $L$, so at practical depths the generator cannot correct global field-strength biases that affect the entire field of view in a single forward pass. Self-attention resolves this by computing pairwise affinities across all $N = H \times W$ spatial positions, providing direct gradient paths $\partial \mathcal{L} / \partial \mathbf{h}_j$ between any pair of positions $(i, j)$ regardless of spatial distance.

[Figure 1 diagram: 12 × H × W 2.5D input → 7 × 7 conv stem (64 channels) → Enc-1 (64 → 128), Enc-2 (128 → 256), Enc-3 (256 → 256), each with CBAM → bottleneck of 9 ResBlks (3 × ResBlk + CBAM, 3 × ResBlk + SA, 3 × ResBlk + CBAM) → global SA → Dec-1 (256 → 256), Dec-2 (256 → 128), Dec-3 (128 → 64), each with CBAM → 4 × H × W output; skip connections link encoder and decoder stages.]
Figure 1: SA-CycleGAN-2.5D generator architecture. The 2.5D tri-planar input (12 channels) passes through a convolutional stem, three encoder stages with CBAM modules, nine residual bottleneck blocks (three groups: CBAM, self-attention, CBAM), a global self-attention module, and three decoder stages with skip connections. Orange blocks denote self-attention; green blocks denote CBAM. The discriminator (not shown) is a spectrally-normalized multi-scale PatchGAN.

3.3 Architecture

Figure 1 provides an overview of the SA-CycleGAN-2.5D generator architecture, illustrating the 2.5D tri-planar input, the U-ResNet encoder-decoder with skip connections, self-attention placement at the bottleneck, and CBAM modules distributed throughout.

2.5D Tri-Planar Input. A SliceEncoder25D maps tri-planar stacks

$$S_{2.5D}(V, z) = [V_{z-1}, V_z, V_{z+1}] \in \mathbb{R}^{12 \times H \times W} \tag{2}$$

(four modalities × three adjacent slices) to a 64-channel feature map via a single 7 × 7 convolutional stem. This provides inter-slice context and encodes through-plane gradients $\nabla_z \approx (V_{z+1} - V_{z-1})/2$ at 2D computational cost, capturing volumetric continuity without the $O(HWD)$ complexity of full 3D convolution.

U-ResNet Generator. The generator follows a U-Net [21] topology with residual blocks:
• Encoder: three downsampling stages (64 → 128 → 256 channels) with instance normalization [22] and stride-2 convolutions.
• Bottleneck: nine residual blocks at 256 channels, arranged in three groups: blocks 1–3 with CBAM, blocks 4–6 with self-attention [4], and blocks 7–9 with CBAM. A global self-attention module follows the final block, computing

$$\mathbf{h}'_i = \gamma \sum_{j=1}^{N} \frac{\exp\left(\mathbf{q}_i^\top \mathbf{k}_j / \sqrt{d_k}\right)}{\sum_{m=1}^{N} \exp\left(\mathbf{q}_i^\top \mathbf{k}_m / \sqrt{d_k}\right)}\, \mathbf{W}_V \mathbf{h}_j + \mathbf{h}_i \tag{3}$$

where $\mathbf{q}_i = \mathbf{W}_Q \mathbf{h}_i$ and $\mathbf{k}_j = \mathbf{W}_K \mathbf{h}_j$ are query and key projections of dimension $d_k = C/8$, $\mathbf{W}_V$ is the value projection, and $\gamma \in [0, 1]$ is a learnable residual scale (initialized to zero and clamped during training).
• Decoder: symmetric upsampling with skip connections from the encoder stages, ensuring structural fidelity through dual-path information flow.

Eleven CBAM [5] modules are distributed throughout the encoder-decoder path (in the slice encoder, the encoder stages, the non-attention bottleneck blocks, and the decoder stages), providing lightweight channel and spatial recalibration with negligible additional parameters. The complete model comprises 35.1M parameters (11.9M per generator, 5.7M per discriminator); self-attention accounts for 1.2M across both generators (3.4% overhead). The 33.9M-parameter baseline model (no attention) isolates the contribution of the attention mechanism in our ablation.

Spectrally-Normalized Discriminator. A multi-scale PatchGAN [23] with 4 × 4 kernels operates at two spatial scales to capture both global and local realism.
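Returning to the bottleneck, Eq. (3) can be read one position at a time. The pure-Python sketch below evaluates it on a toy feature map flattened to N positions; the projection matrices `w_q`, `w_k`, `w_v` and the fixed `gamma` are illustrative assumptions (in the model they are learned, and the actual module runs on 256-channel PyTorch tensors), so this is a reading aid for the formula rather than the authors' implementation.

```python
import math
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def matvec(w: Matrix, h: Vector) -> Vector:
    """Multiply a (rows x cols) matrix by a length-cols vector."""
    return [sum(w_rc * h_c for w_rc, h_c in zip(row, h)) for row in w]


def self_attention(h: List[Vector], w_q: Matrix, w_k: Matrix, w_v: Matrix,
                   gamma: float) -> List[Vector]:
    """Residual self-attention of Eq. (3) over N positions with C channels each.

    h'_i = gamma * sum_j softmax_j(q_i . k_j / sqrt(d_k)) * (W_V h_j) + h_i
    """
    q = [matvec(w_q, h_j) for h_j in h]  # queries, d_k values per position
    k = [matvec(w_k, h_j) for h_j in h]  # keys, d_k values per position
    v = [matvec(w_v, h_j) for h_j in h]  # values, C values per position
    d_k = len(q[0])
    out = []
    for i, h_i in enumerate(h):
        scores = [sum(a * b for a, b in zip(q[i], k_j)) / math.sqrt(d_k) for k_j in k]
        m = max(scores)  # subtract the max score for numerical stability
        weights = [math.exp(s - m) for s in scores]
        total = sum(weights)
        attn = [w / total for w in weights]  # softmax over all N positions
        mixed = [sum(a_j * v_j[c] for a_j, v_j in zip(attn, v)) for c in range(len(h_i))]
        out.append([gamma * m_c + h_c for m_c, h_c in zip(mixed, h_i)])
    return out


# Toy map: N = 3 positions, C = 2 channels, d_k = 1 (C / 8 would round to 0 here).
h = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
identity_v = [[1.0, 0.0], [0.0, 1.0]]
print(self_attention(h, [[1.0, 0.5]], [[0.5, 1.0]], identity_v, gamma=0.0))
# → [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
```

With `gamma = 0` the module returns its input unchanged, which is why initializing the residual scale at zero lets training start from the purely convolutional path and blend in global context gradually.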
Spectral normalization [24] is applied at every convolutional layer, constraining each layer's spectral norm (its Lipschitz constant) to at most 1; provided the activations are also 1-Lipschitz (e.g., LeakyReLU), this bounds the overall discriminator Lipschitz constant, $K_D \le 1$. The discriminator's gradient signals to the generator therefore remain bounded, preventing gradient explosion at high resolution and stabilizing adversarial training.

3.4 Training Objective

The composite loss function combines four terms with complementary objectives:

$$\mathcal{L} = \mathcal{L}_{adv} + \lambda_{cyc}\,\mathcal{L}_{cyc} + \lambda_{id}\,\mathcal{L}_{id} + \lambda_{ssim}\,\mathcal{L}_{ssim} \tag{4}$$

Adversarial loss $\mathcal{L}_{adv}$: the LSGAN [25] objective for both translation directions, which provides smoother gradients than binary cross-entropy and reduces mode collapse.
Cycle consistency $\mathcal{L}_{cyc}$: an L1 penalty on round-trip reconstruction ($\lambda_{cyc} = 10$) [11], encouraging approximately bijective transport and preservation of anatomical content.
Identity loss $\mathcal{L}_{id}$: an L1 penalty applied when a generator receives samples already in its target domain ($\lambda_{id} = 5$), preventing unnecessary transformations.
Structural similarity $\mathcal{L}_{ssim}$: an SSIM [26] loss preserving local luminance, contrast, and structural patterns ($\lambda_{ssim} = 1$), providing perceptual-quality constraints complementary to the pixel-level L1 objectives.

3.5 Implementation Details

All experiments were implemented in PyTorch and trained on a single NVIDIA RTX 6000 GPU with automatic mixed-precision (AMP) training. Table 1 summarizes the key hyperparameters. Training uses the Adam optimizer [27] with $\beta_1 = 0.5$, $\beta_2 = 0.999$. The learning rate follows a cosine annealing schedule with a 5-epoch linear warm-up, decaying from the initial rate to $10^{-6}$ over 200 total epochs (100 initial + 100 resumed with an increased batch size); the 200-epoch budget follows CycleGAN training conventions [11]. Training took approximately 48 GPU hours; inference requires approximately 40 ms per slice on GPU.
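The schedule described above (5-epoch linear warm-up, then cosine annealing from $5 \times 10^{-5}$ to $10^{-6}$) can be sketched as a per-epoch function. This is a minimal sketch under stated assumptions: it treats the 200 epochs as one continuous schedule, whereas the paper resumes training after epoch 100 without saying how the schedule state is restored, and the exact warm-up ramp (starting at `base_lr / warmup_epochs`) is also assumed.

```python
import math


def learning_rate(epoch: int, base_lr: float = 5e-5, min_lr: float = 1e-6,
                  warmup_epochs: int = 5, total_epochs: int = 200) -> float:
    """Per-epoch learning rate: linear warm-up, then cosine annealing to min_lr.

    Assumes a single continuous 200-epoch schedule (hypothetical simplification
    of the paper's 100 + 100 resumed run).
    """
    if not 0 <= epoch < total_epochs:
        raise ValueError("epoch out of range")
    if epoch < warmup_epochs:
        # Linear ramp from base_lr / warmup_epochs up to base_lr.
        return base_lr * (epoch + 1) / warmup_epochs
    # Cosine phase: t runs from 0 (first post-warm-up epoch) to 1 (final epoch).
    t = (epoch - warmup_epochs) / max(1, total_epochs - 1 - warmup_epochs)
    return min_lr + 0.5 * (base_lr - min_lr) * (1.0 + math.cos(math.pi * t))


for epoch in (0, 4, 5, 100, 199):
    print(f"epoch {epoch:3d}: lr = {learning_rate(epoch):.2e}")
```

In PyTorch the same shape is usually assembled from built-in schedulers (for example chaining a linear warm-up with `CosineAnnealingLR` via `SequentialLR`) rather than written by hand; the function form above just makes the arithmetic explicit.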
The code and pretrained weights are publicly available at https://github.com/ishrith-gowda/NeuroScope.

Table 1: Implementation hyperparameters.
Hyperparameter | Value
Input channels (2.5D) | 12 (4 modalities × 3 slices)
Encoder stages | 3 (64 → 128 → 256 channels)
Residual bottleneck blocks | 9 at 256 channels
Self-attention locations | Blocks 4, 5, 6 + global
CBAM modules | 11
Generator parameters (each) | 11.9M
Discriminator parameters (each) | ≈ 5.7M
Total model parameters | 35.1M
Batch size | 8
Learning rate (initial) | $5 \times 10^{-5}$
LR schedule | Cosine annealing + 5-epoch warm-up
Optimizer | Adam ($\beta_1 = 0.5$, $\beta_2 = 0.999$)
Epochs | 200 (100 + 100 resumed)
$\lambda_{cyc}$ | 10
$\lambda_{id}$ | 5
$\lambda_{ssim}$ | 1
Hardware | NVIDIA RTX 6000
Training time | ≈ 48 GPU hours

4 Experiments

4.1 Datasets and Preprocessing

BraTS (Domain A). The Brain Tumor Segmentation (BraTS) dataset [28, 29] aggregates glioma MRI from multiple institutions with heterogeneous acquisition parameters: field strengths (1.5T and 3T), multiple vendors (Siemens, GE, Philips), and diverse imaging protocols. Per subject: co-registered T1, T1CE, T2, and FLAIR at 240 × 240 × 155 voxels, 1 mm isotropic resolution. This dataset represents the real-world clinical scenario of retrospective multi-center aggregation.

UPenn-GBM (Domain B). The University of Pennsylvania Glioblastoma dataset [30] comprises GBM subjects acquired at a single institution under consistent protocols, providing a homogeneous reference domain. After quality filtering for complete four-modality coverage, 566 UPenn subjects were retained. The controlled acquisition of Domain B provides a well-characterized harmonization target.

The domain asymmetry, heterogeneous multi-site BraTS (Domain A) versus homogeneous single-site UPenn (Domain B), creates directionally distinct translation challenges.
The A → B direction maps diverse intensity distributions to a narrow target, whereas B → A must generate the full variability of multi-site acquisition from uniform input. This asymmetry is a deliberate experimental design choice that enables directional analysis in our ablation.

Preprocessing. All subjects underwent N4 bias field correction [31]; skull stripping using HD-BET [32]; co-registration to SRI24 atlas space [33]; and per-modality z-score normalization to zero mean and unit variance. After excluding subjects with incomplete modalities or preprocessing failures, the final cohort of 654 subjects (88 BraTS, 566 UPenn) was split 70/15/15 into training (460), validation (99), and test (95) sets, stratified by domain. The test set contains 7,897 slices for reconstruction evaluation, and a 318-sample subset (155 BraTS, 163 UPenn) was used for the domain classification experiments.

4.2 Evaluation Protocol

We employ four complementary evaluation axes:
1. Reconstruction quality: SSIM [26], PSNR, MAE, and LPIPS [34] on both forward translations and cycle round-trips.
2. Domain alignment: Maximum Mean Discrepancy (MMD) with an RBF kernel [35],
$$\mathrm{MMD}^2(P_S, P_T) = \left\| \mathbb{E}_{x \sim P_S}[\phi(x)] - \mathbb{E}_{y \sim P_T}[\phi(y)] \right\|_{\mathcal{H}_k}^2 \tag{5}$$
where $\phi$ is the feature map into the reproducing kernel Hilbert space $\mathcal{H}_k$ of the RBF kernel; ResNet-18 [36] domain classifier accuracy (target: 0.5 = chance); AUC-ROC; and the Kolmogorov-Smirnov statistic.
3. Radiomics concordance: Concordance Correlation Coefficient (CCC) [37] and Intraclass Correlation Coefficient (ICC) [38] across 512 IBSI-standardized [39] features spanning first-order statistics, GLCM texture, and shape descriptors.
4. Statistical rigor: normality verified via Shapiro-Wilk (p > 0.05). All comparisons use paired t-tests with bootstrapped 95% confidence intervals (R = 1000 resamples). Multiple comparisons are corrected via the Bonferroni method ($\alpha_{adj} = 0.05/8 = 0.00625$). Effect sizes are reported as Cohen's d.
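Expanding Eq. (5) with the kernel trick gives $\mathrm{MMD}^2 = \mathbb{E}[k(x, x')] + \mathbb{E}[k(y, y')] - 2\,\mathbb{E}[k(x, y)]$, which can be estimated directly from two feature samples. The sketch below uses the biased (V-statistic) estimator with a fixed bandwidth `sigma = 1.0`; the paper does not state its bandwidth, estimator, or feature dimensionality, so those are assumptions and values from this sketch are not comparable to Table 3.

```python
import math
from typing import List, Sequence


def rbf_kernel(x: Sequence[float], y: Sequence[float], sigma: float) -> float:
    """Gaussian RBF kernel k(x, y) = exp(-||x - y||^2 / (2 sigma^2))."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-sq_dist / (2.0 * sigma ** 2))


def mmd2_biased(xs: List[Sequence[float]], ys: List[Sequence[float]],
                sigma: float = 1.0) -> float:
    """Biased estimate of Eq. (5): mean k(x, x') + mean k(y, y') - 2 mean k(x, y)."""
    k_xx = sum(rbf_kernel(a, b, sigma) for a in xs for b in xs) / len(xs) ** 2
    k_yy = sum(rbf_kernel(a, b, sigma) for a in ys for b in ys) / len(ys) ** 2
    k_xy = sum(rbf_kernel(a, b, sigma) for a in xs for b in ys) / (len(xs) * len(ys))
    return k_xx + k_yy - 2.0 * k_xy


# Hypothetical 1-D features for two well-separated domains.
domain_a = [[0.0], [0.2], [0.1]]
domain_b = [[2.0], [2.1], [1.9]]
print(mmd2_biased(domain_a, domain_b))  # large: the samples are far apart
print(mmd2_biased(domain_a, domain_a))  # → 0.0 for identical samples
```

The quadratic number of kernel evaluations is fine for a few hundred samples (such as the 318-sample classification subset); larger feature sets usually call for a vectorized or linear-time estimator.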
4.3 Qualitative Results

Figure 2 shows representative harmonization outputs across all four MRI modalities. The self-attention model produces visually smoother transitions and more faithful cycle reconstructions than the baseline. Difference maps (final column) show that structural features, including tumor boundaries, ventricular margins, and gray-white matter interfaces, are preserved: changes concentrate on global intensity renormalization, not anatomy. Visually, the T1CE modality shows the most pronounced improvement, consistent with the contrast agent's sensitivity to vascular properties that vary across field strengths.

Figure 3 provides a global view of domain alignment via t-SNE [40]. Raw ResNet-18 features form two clearly separated clusters (98.4% classifier accuracy), whereas harmonized features are thoroughly interleaved, with classifier accuracy degraded to near chance.

4.4 Quantitative Harmonization Results

Translation Quality. Table 2 reports forward and cycle reconstruction metrics on n = 7,897 test slices. Forward SSIM reflects the genuine distributional gap between domains: A → B achieves 0.713 ± 0.055, while B → A achieves 0.680 ± 0.041. The directional asymmetry, with lower SSIM and higher LPIPS (0.419 vs. 0.233) in the B → A direction, is consistent with the greater challenge of mapping homogeneous UPenn samples into the highly variable BraTS distribution. Cycle SSIM exceeds 0.92 in both directions (ΔSSIM = 0.005), indicating high-fidelity round-trip content preservation and balanced bidirectional learning.

Domain Separation. Table 3 presents the core harmonization evaluation. A ResNet-18 classifier trained on raw features achieves 98.4% accuracy (AUC = 0.995), confirming strong baseline domain separability.
After harmonization, classification accuracy drops to 59.7% (AUC = 0.613), with 69.0% of BraTS samples misclassified as UPenn-GBM, approaching the 50% chance level. MMD decreases by 99.1% (1.729 → 0.015), and the cosine similarity of feature centroids increases from 0.666 to 0.9996.

[Figure 2 panel: "Multi-Modality Translation: A → B → A Direction (Sample 41905)"; columns Input, A → B (Base), A → B (Attn), Rec (Base), Rec (Attn), Diff (Attn).]
Figure 2: Harmonization results (A → B → A) across T1, T1CE, T2, and FLAIR (rows). Columns: input, baseline translation, +Attention translation, baseline reconstruction, +Attention reconstruction, attention difference map. Structural features are preserved; changes concentrate on global intensity (not anatomy).

Table 2: Translation quality. Forward: direct translation quality. Cycle: round-trip fidelity (mean ± std, n = 7,897 test slices).
Type | Direction | SSIM ↑ | PSNR (dB) ↑ | MAE ↓ | LPIPS ↓
Forward | A → B | 0.713 ± 0.055 | 19.35 ± 1.48 | 0.053 ± 0.014 | 0.233 ± 0.058
Forward | B → A | 0.680 ± 0.041 | 19.65 ± 1.32 | 0.074 ± 0.017 | 0.419 ± 0.068
Cycle | A → B → A | 0.923 ± 0.016 | 27.49 ± 1.10 | 0.014 ± 0.003 | N/A
Cycle | B → A → B | 0.928 ± 0.015 | 27.73 ± 0.99 | 0.014 ± 0.003 | N/A

ComBat [6, 7] achieves marginally lower absolute MMD (0.003) and slightly better cosine similarity, but retains substantially higher classifier accuracy (75.0%), indicating incomplete correction of higher-order, nonlinear intensity covariances. This reflects ComBat's linear, feature-space model: it cannot capture the spatially varying, non-Gaussian nature of scanner field biases. Critically, ComBat cannot produce harmonized images, making it incompatible with spatial downstream tasks. Among the methods in Table 3, ours is the only one that produces harmonized images, and it achieves the lowest domain-classifier accuracy and AUC.

4.5 Ablation Study: Self-Attention

Directional ablation.
Table 4 isolates the self-attention contribution using foreground slices (n = 5,265; slices with mean intensity ≥ 0.05, excluding near-background slices that trivially satisfy reconstruction). The baseline (33.9M parameters, no attention) and the attention model (35.1M parameters, +1.2M) are identical in all other respects.

[Figure 3 panels: "Feature Space Visualization (t-SNE)"; (a) Raw Features, BraTS vs. UPenn; (b) Harmonized Features, BraTS (Harmonized) vs. UPenn (Harmonized); axes t-SNE Dimension 1 and t-SNE Dimension 2.]
Figure 3: t-SNE visualization of ResNet-18 features (n = 318). (a) Raw: clear domain separation (98.4% classifier accuracy, MMD = 1.729). (b) Harmonized: domains thoroughly interleaved (59.7% accuracy, MMD = 0.015).

Table 3: Harmonization comparison. ↓/↑: preferred direction. *Feature-space only (cannot produce harmonized images). Relative improvement compares Ours with Raw.
Metric | Raw | Ours | ComBat* | Relative Improv.
Domain Classification
Accuracy ↓ | 0.984 | 0.597 | 0.750 | 39.3%
AUC-ROC ↓ | 0.995 | 0.613 | 0.800 | 38.4%
F1-Score ↓ | 0.984 | 0.689 | N/A | 30.0%
Feature Distribution
MMD (RBF) ↓ | 1.729 | 0.015 | 0.003* | 99.1%
Cosine Sim. ↑ | 0.666 | 0.9996 | 1.000* | +0.334
KS Stat. ↓ | 0.973 | 0.131 | N/A | 86.5%

In the harder B → A → B direction (whose forward step maps homogeneous to heterogeneous data), attention yields a cycle-SSIM gain of +1.10% (absolute SSIM difference × 100; 0.928 → 0.939, 95% CI [+1.07, +1.12]%, d = 1.13, p < 0.001) and +1.01 dB in cycle PSNR (d = 1.32, p < 0.001). All differences remain significant after Bonferroni correction ($\alpha_{adj} = 0.00625$). |d| > 1.0 in all eight comparisons (two directions × two metrics × two modality groups) indicates large standardized effects; the effects are positive in the B → A → B direction and negative in the A → B → A direction. Self-attention therefore provides a statistically reliable and practically meaningful improvement specifically for the harder homogeneous-to-heterogeneous translation.
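The statistics used above can be sketched for one metric as follows. The paper does not state which variant of Cohen's d it computes; this sketch assumes the paired form $d_z = \bar{\Delta} / s_{\Delta}$ over per-slice differences, a percentile bootstrap over those differences for the 95% CI, and Bonferroni's $\alpha / m$ threshold. The paired t-test itself is omitted here; in practice it would typically come from a statistics library such as SciPy's `scipy.stats.ttest_rel`. The input values below are hypothetical, for illustration only.

```python
import random
import statistics
from typing import List, Sequence, Tuple


def paired_effect_and_ci(baseline: Sequence[float], treated: Sequence[float],
                         n_resamples: int = 1000, alpha: float = 0.05,
                         seed: int = 0) -> Tuple[float, float, Tuple[float, float]]:
    """Mean paired difference, paired Cohen's d (d_z), and a percentile bootstrap CI.

    diff_i = treated_i - baseline_i per slice; d_z = mean(diff) / sd(diff).
    The CI resamples the per-slice differences with replacement.
    """
    if len(baseline) != len(treated) or len(baseline) < 2:
        raise ValueError("need two equal-length samples with at least two pairs")
    diffs = [t - b for b, t in zip(baseline, treated)]
    mean_diff = statistics.fmean(diffs)
    sd_diff = statistics.stdev(diffs)
    if sd_diff == 0:
        raise ValueError("all paired differences are identical; d_z is undefined")
    d_z = mean_diff / sd_diff
    rng = random.Random(seed)  # fixed seed so the CI is reproducible
    boot_means: List[float] = sorted(
        statistics.fmean(rng.choices(diffs, k=len(diffs))) for _ in range(n_resamples)
    )
    lo = boot_means[int(round(alpha / 2 * n_resamples))]
    hi = boot_means[int(round((1 - alpha / 2) * n_resamples)) - 1]
    return mean_diff, d_z, (lo, hi)


def bonferroni_alpha(alpha: float, n_comparisons: int) -> float:
    """Per-comparison significance threshold under Bonferroni correction."""
    return alpha / n_comparisons


# Hypothetical per-slice SSIM values (not data from this study).
baseline_ssim = [0.90, 0.91, 0.92, 0.93, 0.94]
attention_ssim = [0.91, 0.92, 0.94, 0.94, 0.95]
mean_diff, d_z, (ci_lo, ci_hi) = paired_effect_and_ci(baseline_ssim, attention_ssim)
print(f"mean diff = {mean_diff:.4f}, d_z = {d_z:.2f}, 95% CI = [{ci_lo:.4f}, {ci_hi:.4f}]")
print(f"Bonferroni threshold for 8 comparisons: {bonferroni_alpha(0.05, 8)}")
```

Because $d_z$ divides by the spread of the paired differences rather than the spread of each model's scores, it can be much larger than a pooled-SD effect size when per-slice scores are strongly correlated between the two models.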
The A → B → A decrease (−0.64% SSIM, d = −1.96) likely reflects capacity rebalancing: adding attention appears to redistribute model capacity toward the harder direction, a form of implicit task prioritization consistent with the asymmetric domain difficulty.

Per-modality analysis. The benefit of self-attention is consistent across all four MRI modalities in the B → A → B direction: T1 (+1.0% SSIM, d = 1.09), T1CE (+1.0%, d = 1.03), T2 (+1.3%, d = 1.15), and FLAIR (+1.1%, d = 1.00), all at or above d = 1.0 and well beyond the conventional large-effect threshold of d = 0.8. T2 shows the largest gain, consistent with T2's sensitivity to water content and myelin integrity, both of which vary systematically with field strength and are best modeled via the global intensity correlations that self-attention captures. T1CE benefits in both directions, reflecting contrast agent pharmacokinetics and vascular properties that differ between institutions. Figure 4 illustrates the effect size analysis across modalities and directions, confirming the directional asymmetry and per-modality consistency of the self-attention benefit.

Table 4: Ablation: self-attention contribution on foreground slices (n = 5,265; mean intensity ≥ 0.05). Baseline: 33.9M parameters (no attention). All p < 0.001 (paired t-test, Bonferroni-corrected $\alpha_{adj} = 0.00625$). SSIM Δ is the absolute difference × 100.
Direction / Metric | Baseline Mean | Baseline Std | +Attention Mean | +Attention Std | Δ | Cohen's d
A → B → A (easier direction: forward step maps heterogeneous to homogeneous)
Cycle SSIM | 0.9325 | 0.0143 | 0.9261 | 0.0148 | −0.64% | −1.96
Cycle PSNR | 28.35 | 1.37 | 27.90 | 1.38 | −0.45 dB | −1.44
B → A → B (harder direction: forward step maps homogeneous to heterogeneous)
Cycle SSIM | 0.9282 | 0.0171 | 0.9392 | 0.0123 | +1.10% | +1.13
Cycle PSNR | 27.72 | 1.30 | 28.73 | 1.15 | +1.01 dB | +1.32

4.6 Radiomics Feature Analysis

Table 5: Radiomics concordance across 512 IBSI features (mean ± std). Low CCC/ICC reflects the intended remapping of domain-specific intensity signatures (see text for interpretation).
Category | n | CCC | ICC | Pearson r
First Order | 168 | 0.007 ± 0.033 | 0.017 ± 0.021 | 0.007 ± 0.034
GLCM | 172 | 0.004 ± 0.032 | 0.015 ± 0.021 | 0.004 ± 0.033
Shape | 172 | 0.004 ± 0.034 | 0.016 ± 0.021 | 0.004 ± 0.035
Overall | 512 | 0.005 ± 0.033 | 0.016 ± 0.021 | 0.005 ± 0.034

Table 5 reports concordance across 512 IBSI-standardized features spanning first-order statistics (168), GLCM texture (172), and shape descriptors (172). CCC values near zero (0.005 ± 0.033) and low ICC (0.016 ± 0.021) across all categories require careful interpretation: they reflect the intended transformation, not a failure of the method. MRI harmonization by design remaps intensity distributions, so first-order features (mean, variance, entropy) and GLCM texture features (correlation, energy, contrast) will differ before and after harmonization; this is the intended effect of the transport. Shape features show equally low concordance because shape descriptors computed by pyradiomics [41] depend on intensity-based ROI delineation thresholds; as intensity distributions shift, the resulting shape measurements change accordingly.

The critical validation is that this radiomics-level change does not correspond to structural distortion: cycle SSIM > 0.92 and visual inspection (Figure 2) indicate that anatomical morphology, including tumor margins, ventricle boundaries, and gray-white matter interfaces, is preserved despite the intensity-level transformation. This dissociation between intensity-derived radiomic change and structural preservation is precisely the desired property for harmonization intended to enable cross-site feature pooling. Figure 5 shows per-category radiomics scatter plots, illustrating consistent remapping across feature categories with preserved morphological structure.
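Table 5 reports CCC alongside Pearson r, and the two answer different questions. Lin's CCC, $\rho_c = 2\sigma_{xy} / (\sigma_x^2 + \sigma_y^2 + (\mu_x - \mu_y)^2)$, measures agreement with the identity line, so it drops under any shift or rescaling; Pearson r measures only linear association and is unchanged by an affine remap. The minimal sketch below contrasts them on a hypothetical feature (population moments are used for CCC, following Lin's original definition).

```python
import math
import statistics
from typing import Sequence


def lins_ccc(x: Sequence[float], y: Sequence[float]) -> float:
    """Lin's concordance correlation coefficient (population moments)."""
    n = len(x)
    mx, my = statistics.fmean(x), statistics.fmean(y)
    vx = sum((a - mx) ** 2 for a in x) / n
    vy = sum((b - my) ** 2 for b in y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y)) / n
    return 2.0 * cov / (vx + vy + (mx - my) ** 2)


def pearson_r(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation coefficient."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


# Hypothetical feature before harmonization and after an affine remap (2x + 3).
original = [1.0, 2.0, 3.0, 4.0, 5.0]
remapped = [2.0 * a + 3.0 for a in original]
print(f"Pearson r = {pearson_r(original, remapped):.3f}")  # perfectly correlated
print(f"CCC       = {lins_ccc(original, remapped):.3f}")   # far from the identity line
```

Here the remap gives r = 1 but CCC = 8/46 ≈ 0.17, which is why CCC alone cannot distinguish a systematic intensity remapping from a loss of between-subject ordering; reading it together with r separates the two cases.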
5 Discussion

5.1 Interpretation of Results

SA-CycleGAN-2.5D achieves a 99.1% MMD reduction and near-chance domain classification (59.7%), the lowest domain-classifier accuracy among the methods compared in Table 3. The residual 9.7 percentage points above chance (59.7% vs. the 50% ideal) likely reflect irreducible domain-specific pathology patterns: a classifier exposed to multi-modal MRI features can partially identify the site from glioma presentation heterogeneity even after scanner signatures are harmonized. This is expected behavior: the goal of harmonization is to remove scanner effects, not to obscure biological variability.

[Figure 4 panels: "Ablation Study: Self-Attention Impact on Harmonization Quality", comparing Baseline CycleGAN with SA-CycleGAN-2.5D; (a) Structural Similarity Comparison (cycle SSIM in both directions, identity SSIM for A and B); (b) Peak Signal-to-Noise Ratio Comparison (cycle PSNR in dB, both directions); (c) Per-Modality Reconstruction Quality (B → A → B cycle SSIM: T1 +1.0%, T1CE +1.0%, T2 +1.3%, FLAIR +1.1%); (d) Effect Sizes (SA-CycleGAN vs. Baseline, Cohen's d for SSIM and PSNR in both directions).]
Figure 4: Ablation study: Cohen's d effect sizes across modalities and translation directions. Positive d (blue) indicates an attention benefit; negative d (red) indicates capacity rebalancing toward the harder direction. All |d| > 1.0, indicating large effects.

The comparison with ComBat [6] illustrates the complementary nature of statistical and deep learning harmonization.
ComBat achieves a lower absolute MMD (0.003 vs. 0.015) because it explicitly removes all variance associated with site membership, including any biological signal that correlates with site. However, ComBat retains a higher domain-classifier accuracy (75.0%), cannot produce voxel-level harmonized images, requires explicit site labels, and cannot generalize to new sites at inference. Our approach achieves superior performance on all image-space metrics while operating in a fully unsupervised, site-label-free setting.

5.2 Architectural Analysis

The $\mathcal{H}\Delta\mathcal{H}$ bound (Eq. 1) motivates two of our three architectural innovations: (1) the adversarial training loop reduces the empirical divergence proxy $d_{\mathcal{H}\Delta\mathcal{H}}$ in voxel space, and (2) self-attention gives the generator sufficient capacity to model the full spatial extent of scanner field biases, including global signal-intensity variations that locally receptive convolutions cannot correct in a single pass. The large effect sizes ($d > 1.0$) in our ablation quantify this capacity gap: the difference is not merely statistically significant (which is trivially achievable with large n) but large when standardized against within-subject variance. A Cohen's d of 1.32 means that the mean of the attention-augmented model lies 1.32 standard deviations above the baseline mean on that metric. Given that this improvement requires only 3.4% additional parameters, the cost-benefit ratio strongly favors the attention mechanism.

[Figure 5 panels, "Original vs. Harmonized Radiomics Feature Scatter Plots": harmonized value against original value, with the identity line, for four example features: (a) t1gd shape 125, r = 0.071; (b) t2 shape 95, r = −0.033; (c) t1gd fo 37, r = −0.035; (d) t1gd glcm 69, r = 0.004.]

Figure 5: Radiomics feature scatter: pre- vs. post-harmonization values across 512 IBSI features (first-order, GLCM, shape). The systematic scatter reflects the intended intensity-distribution remapping; spatial structure is preserved (cycle SSIM > 0.92).

The 2.5D design provides inter-slice context at $O(HW)$ cost per forward pass rather than the $O(HWD)$ cost of a full 3D convolutional pass over the volume. Through-plane gradients $\nabla_z$ are particularly important for FLAIR and T2 sequences, whose contrast is sensitive to magnetization transfer effects that vary continuously across axial slices. The tri-planar stack provides the minimal through-plane neighborhood needed to capture this continuity without volumetric processing.

5.3 Clinical Deployment Considerations

For clinical deployment in multi-center trials, the key practical advantages of SA-CycleGAN-2.5D are: (1) no site labels required at inference: the model harmonizes by learning the target distribution, not by subtracting estimated site effects; (2) voxel-level output compatible with all downstream spatial tasks, including tumor segmentation, response assessment, and volumetric biomarker extraction; (3) efficient inference at approximately 40 ms per slice on GPU, enabling real-time harmonization in clinical pipelines; and (4) preserved pathology via cycle-consistency and structural-similarity constraints, with cycle SSIM > 0.92 supporting that lesion signatures critical for treatment decisions are not distorted.
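Point (3) implies that a volume is harmonized one slice at a time, with each slice accompanied by its through-plane neighbors as in the 2.5D design of Section 5.2. The sketch below is illustrative only and rests on assumptions not stated in the text: a single-modality volume stored as a (D, H, W) array with axial slices along the first axis, a tri-planar stack made of slices z−1, z, z+1 with edge slices replicated at the volume boundaries, and a 2D generator that maps a (3, H, W) stack to one (H, W) output slice. `make_25d_stack` and `harmonize_volume` are hypothetical names and do not correspond to the SliceEncoder in the released code.

```python
from typing import Callable

import numpy as np


def make_25d_stack(volume: np.ndarray, z: int) -> np.ndarray:
    """Return slices z-1, z, z+1 of a (D, H, W) volume as a (3, H, W) array.

    Assumption: boundary slices are replicated (z-1 -> 0 at the bottom,
    z+1 -> D-1 at the top) so every slice gets a full three-slice stack.
    """
    depth = volume.shape[0]
    idx = [max(z - 1, 0), z, min(z + 1, depth - 1)]
    return volume[idx]  # fancy indexing along axis 0 -> shape (3, H, W)


def harmonize_volume(volume: np.ndarray,
                     generator: Callable[[np.ndarray], np.ndarray]) -> np.ndarray:
    """Apply a 2D generator slice by slice.

    The (hypothetical) generator maps a (3, H, W) stack to one (H, W) slice,
    so each call costs O(HW) regardless of the volume depth D.
    """
    out = np.empty_like(volume)
    for z in range(volume.shape[0]):
        out[z] = generator(make_25d_stack(volume, z))
    return out


if __name__ == "__main__":
    vol = np.random.default_rng(0).normal(size=(5, 8, 8)).astype(np.float32)
    # Placeholder generator for illustration: returns the center slice unchanged.
    harmonized = harmonize_volume(vol, lambda stack: stack[1])
    print(harmonized.shape)
```

In a real pipeline the stacks would typically be batched on the GPU; the explicit loop is kept here for clarity. Total work over a volume still grows linearly with D, but each forward pass sees only a 3 × H × W input, which is what makes a per-slice latency figure meaningful.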
The primary deployment limitation is the current two-domain architecture: each new site pair requires a separately trained model. Extension to N-domain harmonization would require either pairwise training ($O(N^2)$ models) or a unified multi-domain generator [42]. We are exploring contrastive learning objectives (e.g., CUT [15]) for single-model multi-site harmonization.

5.4 Limitations and Future Directions

Two-domain constraint. The current architecture is trained on a single domain pair. Multi-domain extension is the most pressing limitation for real-world federated learning scenarios involving $N > 2$ institutions [42].

Intensity-derived radiomics. Harmonization intentionally modifies first-order and texture features. For radiomics studies that require comparability between pre- and post-harmonization features (rather than cross-site pooling), a constrained transport that preserves a subset of radiomic features may be warranted.

Pathology preservation. While cycle SSIM > 0.92 supports structural preservation, incorporating explicit tumor-aware loss weighting and prospective evaluation on treatment-response prediction tasks using harmonized vs. raw features would provide stronger clinical validation.

Foundation model integration. Pretrained transformer-based models (e.g., Swin-Unet [20], TransUNet [19]) could serve as feature extractors for a learned perceptual loss, potentially improving high-level anatomical fidelity beyond pixel-level and SSIM-based objectives.

6 Conclusion

We presented SA-CycleGAN-2.5D, a domain adaptation framework for voxel-level multi-site MRI harmonization that integrates theoretical motivation with three targeted architectural innovations.
The $\mathcal{H}\Delta\mathcal{H}$-divergence bound motivates our adversarial training as principled divergence minimization; the 2.5D tri-planar encoder provides inter-slice context at 2D computational cost; dense self-attention at the bottleneck overcomes the receptive-field limitation of convolutional networks; and a spectrally normalized PatchGAN discriminator ensures stable high-resolution training. Evaluated on 654 glioma subjects across the BraTS and UPenn-GBM cohorts, our method achieves a 99.1% MMD reduction, degrades domain-classifier accuracy to 59.7% (approaching chance), and maintains cycle SSIM > 0.92 in both translation directions. A controlled ablation, including bootstrapped confidence intervals, Bonferroni correction, and Cohen's d effect sizes, shows that self-attention provides a large, statistically reliable benefit (d = 1.13–1.32) specifically for the harder heterogeneous-to-homogeneous translation direction, at only 3.4% parameter overhead. This combination of principled motivation, architectural efficiency, and rigorous evaluation establishes SA-CycleGAN-2.5D as a strong baseline for future work in multi-center neuroimaging harmonization.

Code and Data Availability

Source code, pretrained model weights, training scripts, and evaluation pipelines are publicly available at https://github.com/ishrith-gowda/NeuroScope. The repository includes: (1) full training code with all six loss components and the 2.5D tri-planar SliceEncoder; (2) evaluation scripts reproducing all reported metrics (MMD, domain classifier, radiomics CCC/ICC); and (3) preprocessing utilities for BraTS/UPenn-GBM cohort preparation. Experiments use publicly available datasets: BraTS (https://www.synapse.org/brats) and UPenn-GBM (https://wiki.cancerimagingarchive.net).

References

[1] Jean-Philippe Fortin, Drew Parker, Birkan Tunç, Takanori Watanabe, Mark A. Elliott, Kosha Ruparel, David R. Roalf, Theodore D.
Satterthwaite, Ruben C. Gur, Raquel E. Gur, Robert T. Schultz, Russell T. Shinohara, and Danielle S. Bassett. Harmonization of multi-site diffusion tensor imaging data. NeuroImage, 161:149–170, 2017. doi:10.1016/j.neuroimage.2017.08.047.
[2] Shai Ben-David, John Blitzer, Koby Crammer, Alex Kulesza, Fernando Pereira, and Jennifer Wortman Vaughan. A theory of learning from different domains. Machine Learning, 79(1-2):151–175, 2010. doi:10.1007/s10994-009-5152-4.
[3] Holger R. Roth, Le Lu, Ari Seff, Kevin M. Cherry, Joanne Hoffman, Shijun Wang, Jiamin Liu, Evrim Turkbey, and Ronald M. Summers. A new 2.5D representation for lymph node detection using random sets of deep convolutional neural network observations. In Medical Image Computing and Computer-Assisted Intervention – MICCAI 2014, volume 8673 of Lecture Notes in Computer Science, pages 520–527. Springer, Cham, 2014. doi:10.1007/978-3-319-10404-1_65.
[4] Han Zhang, Ian Goodfellow, Dimitris Metaxas, and Augustus Odena. Self-attention generative adversarial networks. In Proceedings of the International Conference on Machine Learning (ICML), pages 7354–7363, 2019.
[5] Sanghyun Woo, Jongchan Park, Joon-Young Lee, and In So Kweon. CBAM: convolutional block attention module. In European Conference on Computer Vision (ECCV), volume 11211 of Lecture Notes in Computer Science, pages 3–19. Springer, Cham, 2018. doi:10.1007/978-3-030-01234-2_1.
[6] W. Evan Johnson, Cheng Li, and Ariel Rabinovic. Adjusting batch effects in microarray expression data using empirical Bayes methods. Biostatistics, 8(1):118–127, 2007. doi:10.1093/biostatistics/kxj037.
[7] Jean-Philippe Fortin, Nicholas Cullen, Yvette I. Sheline, Warren D. Taylor, Irem Aselcioglu, Philip A. Cook, Phil Adams, Crystal Cooper, Maurizio Fava, Patrick J. McGrath, Melvin McInnis, Mary L.
Phillips, Madhukar H. Trivedi, Myrna M. Weissman, and Russell T. Shinohara. Harmonization of cortical thickness measurements across scanners and sites. NeuroImage, 167:104–120, 2018. doi:10.1016/j.neuroimage.2017.11.024.
[8] Andrew A. Chen, Joanne C. Beer, Nicholas J. Tustison, Philip A. Cook, Russell T. Shinohara, and Haochang Shou. Removal of scanner effects in covariance improves multivariate pattern analysis in neuroimaging data. NeuroImage, 277:120011, 2023. doi:10.1016/j.neuroimage.2023.120011.
[9] Blake E. Dewey, Can Zhao, Jacob C. Reinhold, Aaron Carass, Kathryn C. Fitzgerald, Elias S. Sotirchos, Shiv Saidha, Jiwon Oh, Dzung L. Pham, Peter A. Calabresi, Peter C. M. van Zijl, and Jerry L. Prince. DeepHarmony: a deep learning approach to contrast harmonization across scanner changes. Magnetic Resonance Imaging, 64:160–170, 2019. doi:10.1016/j.mri.2019.05.041.
[10] Ian Goodfellow, Jean Pouget-Abadie, Mehdi Mirza, Bing Xu, David Warde-Farley, Sherjil Ozair, Aaron Courville, and Yoshua Bengio. Generative adversarial nets. In Advances in Neural Information Processing Systems, volume 27, pages 2672–2680, 2014.
[11] Jun-Yan Zhu, Taesung Park, Phillip Isola, and Alexei A. Efros. Unpaired image-to-image translation using cycle-consistent adversarial networks. In Proceedings of the IEEE International Conference on Computer Vision (ICCV), pages 2223–2232, 2017. doi:10.1109/ICCV.2017.244.
[12] Gourav Modanwal, Adithya Vellal, Meera Bhatt, Roberta M. Strigel, Christopher C. Conlin, Ji Wu, Barry E. Gillan, Maryellen C. Mahoney, Elizabeth S. Burnside, and Nikita Monga. MRI image harmonization using cycle-consistent generative adversarial network. In Proceedings of SPIE Medical Imaging, volume 11314, page 1131413, 2020. doi:10.1117/12.2551301.
[13] Fenqiang Zhao, Shunren Xia, Zhengwang Wu, Dongrong Duan, Li Wang, Weili Lin, John H. Gilmore, Dinggang Shen, and Gang Li.
Harmonization of infant cortical thickness using surface-to-surface cycle-consistent adversarial networks. In Medical Image Computing and Computer-Assisted Intervention – MICCAI 2019, volume 11767 of Lecture Notes in Computer Science, pages 475–483. Springer, Cham, 2019. doi:10.1007/978-3-030-32251-9_52.
[14] Wenjie Luo, Yujia Li, Raquel Urtasun, and Richard Zemel. Understanding the effective receptive field in deep convolutional neural networks. In Advances in Neural Information Processing Systems (NeurIPS), pages 4898–4906, 2016.
[15] Taesung Park, Alexei A. Efros, Richard Zhang, and Jun-Yan Zhu. Contrastive learning for unpaired image-to-image translation. In European Conference on Computer Vision (ECCV), volume 12354 of Lecture Notes in Computer Science, pages 319–345. Springer, Cham, 2020. doi:10.1007/978-3-030-58545-7_19.
[16] Lianrui Zuo, Blake E. Dewey, Yihao Liu, Yufan He, Scott D. Newsome, Ellen M. Mowry, Susan M. Resnick, Jerry L. Prince, and Aaron Carass. Unsupervised MR harmonization by learning disentangled representations using information bottleneck theory. NeuroImage, 243:118569, 2021. doi:10.1016/j.neuroimage.2021.118569.
[17] Lianrui Zuo, Aaron Carass, Blake E. Dewey, Yufan He, Yihao Liu, Ellen M. Mowry, Scott D. Newsome, Susan M. Resnick, and Jerry L. Prince. HACA3: a unified approach for multi-site MR image harmonization. Computerized Medical Imaging and Graphics, 109:102285, 2023. doi:10.1016/j.compmedimag.2023.102285.
[18] Nicola K. Dinsdale, Mark Jenkinson, and Ana I. L. Namburete. Deep learning-based unlearning of dataset bias for MRI harmonisation and confound removal. NeuroImage, 228:117689, 2021. doi:10.1016/j.neuroimage.2020.117689.
[19] Jieneng Chen, Yongyi Lu, Qihang Yu, Xiangde Luo, Ehsan Adeli, Yan Wang, Le Lu, Alan L. Yuille, and Yuyin Zhou.
TransUNet: transformers make strong encoders for medical image segmentation. arXiv preprint arXiv:2102.04306, 2021.
[20] Hu Cao, Yueyue Wang, Joy Chen, Dongsheng Jiang, Xiaopeng Zhang, Qi Tian, and Manning Wang. Swin-Unet: Unet-like pure transformer for medical image segmentation. In European Conference on Computer Vision Workshops, pages 205–218. Springer, Cham, 2022. doi:10.1007/978-3-031-25066-8_9.
[21] Olaf Ronneberger, Philipp Fischer, and Thomas Brox. U-Net: convolutional networks for biomedical image segmentation. In Medical Image Computing and Computer-Assisted Intervention – MICCAI 2015, volume 9351 of Lecture Notes in Computer Science, pages 234–241. Springer, Cham, 2015. doi:10.1007/978-3-319-24574-4_28.
[22] Dmitry Ulyanov, Andrea Vedaldi, and Victor Lempitsky. Instance normalization: the missing ingredient for fast stylization, 2016.
[23] Phillip Isola, Jun-Yan Zhu, Tinghui Zhou, and Alexei A. Efros. Image-to-image translation with conditional adversarial networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), pages 1125–1134, 2017. doi:10.1109/CVPR.2017.632.
[24] Takeru Miyato, Toshiki Kataoka, Masanori Koyama, and Yuichi Yoshida. Spectral normalization for generative adversarial networks. In International Conference on Learning Representations (ICLR), 2018.
[25] Xudong Mao, Qing Li, Haoran Xie, Raymond Y. K. Lau, Zhen Wang, and Stephen Paul Smolley. Least squares generative adversarial networks. In Proceedings of the IEEE International Conference on Computer Vision (ICCV), pages 2794–2802, 2017. doi:10.1109/ICCV.2017.304.
[26] Zhou Wang, Alan C. Bovik, Hamid R. Sheikh, and Eero P. Simoncelli. Image quality assessment: from error visibility to structural similarity. IEEE Transactions on Image Processing, 13(4):600–612, 2004. doi:10.1109/TIP.2003.819861.
[27] Diederik P. Kingma and Jimmy Ba.
Adam: A method for stochastic optimization. In Yoshua Bengio and Yann LeCun, editors, International Conference on Learning Representations (ICLR), 2015. URL https://arxiv.org/abs/1412.6980.
[28] Bjoern H. Menze, Andras Jakab, Stefan Bauer, Jayashree Kalpathy-Cramer, Keyvan Farahani, Justin Kirby, Yuliya Burren, Nicole Porz, Johannes Slotboom, Roland Wiest, Levente Lanczi, Elizabeth Gerstner, Marc-Andre Weber, Tal Arbel, Brian B. Avants, Nicholas Ayache, Patricia Buendia, D. Louis Collins, Nicolas Cordier, Jason J. Corso, Antonio Criminisi, Tilak Das, Hervé Delingette, Çağatay Demiralp, Christopher R. Durst, Michel Dojat, Senan Doyle, Joana Festa, Florence Forbes, Ezequiel Geremia, Ben Glocker, Polina Golland, Xiaotao Guo, Andac Hamamci, Khan M. Iftekharuddin, Raj Jena, Nigel M. John, Ender Konukoglu, Danial Lashkari, José Antonio Mariz, Raphael Meier, Sérgio Pereira, Doina Precup, Stephen J. Price, Tammy Riklin Raviv, Syed M. S. Reza, Michael Ryan, Duygu Sarikaya, Lawrence Schwartz, Hoo-Chang Shin, Jamie Shotton, Carlos A. Silva, Nuno Sousa, Nagesh K. Subbanna, Gabor Szekely, Thomas J. Taylor, Owen M. Thomas, Nicholas J. Tustison, Gozde Unal, Flor Vasseur, Max Wintermark, Dong Hye Ye, Liang Zhao, Binsheng Zhao, Darko Zikic, Marcel Prastawa, Mauricio Reyes, and Koen Van Leemput. The multimodal brain tumor image segmentation benchmark (BRATS). IEEE Transactions on Medical Imaging, 34(10):1993–2024, 2015. doi:10.1109/TMI.2014.2377694.
[29] Spyridon Bakas, Hamed Akbari, Aristeidis Sotiras, Michel Bilello, Martin Rozycki, Justin S. Kirby, John B. Freymann, Keyvan Farahani, and Christos Davatzikos. Advancing The Cancer Genome Atlas glioma MRI collections with expert segmentation labels and radiomic features. Scientific Data, 4:170117, 2017. doi:10.1038/sdata.2017.117.
[30] Spyridon Bakas, Chiharu Sako, Hamed Akbari, Michel Bilello, Xiao Da, Sana Rustam, Allison McGill, Tom Kirschbaum, Jeffrey D. Rudie, and Christos Davatzikos. The University of Pennsylvania glioblastoma (UPenn-GBM) cohort: advanced MRI, clinical, genomics, & radiomics. Scientific Data, 9(1):453, 2022. doi:10.1038/s41597-022-01560-7.
[31] Nicholas J. Tustison, Brian B. Avants, Philip A. Cook, Yuanjie Zheng, Alexander Egan, Paul A. Yushkevich, and James C. Gee. N4ITK: improved N3 bias correction. IEEE Transactions on Medical Imaging, 29(6):1310–1320, 2010. doi:10.1109/TMI.2010.2046908.
[32] Fabian Isensee, Marianne Schell, Irada Pflueger, Gianluca Brugnara, David Bonekamp, Ulf Neuberger, Antje Wick, Heinz-Peter Schlemmer, Sabine Heiland, Wolfgang Wick, Martin Bendszus, Klaus H. Maier-Hein, and Philipp Kickingereder. Automated brain extraction of multisequence MRI using artificial neural networks. Human Brain Mapping, 40(17):4952–4964, 2019. doi:10.1002/hbm.24750.
[33] Torsten Rohlfing, Natalie M. Zahr, Edith V. Sullivan, and Adolf Pfefferbaum. The SRI24 multichannel atlas of normal adult human brain structure. Human Brain Mapping, 31(5):798–819, 2010. doi:10.1002/hbm.20906.
[34] Richard Zhang, Phillip Isola, Alexei A. Efros, Eli Shechtman, and Oliver Wang. The unreasonable effectiveness of deep features as a perceptual metric. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), pages 586–595, 2018. doi:10.1109/CVPR.2018.00068.
[35] Arthur Gretton, Karsten M. Borgwardt, Malte J. Rasch, Bernhard Schölkopf, and Alexander Smola. A kernel two-sample test. Journal of Machine Learning Research, 13:723–773, 2012.
[36] Kaiming He, Xiangyu Zhang, Shaoqing Ren, and Jian Sun. Deep residual learning for image recognition.
In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), pages 770–778, 2016. doi:10.1109/CVPR.2016.90.
[37] Lawrence I-Kuei Lin. A concordance correlation coefficient to evaluate reproducibility. Biometrics, 45(1):255–268, 1989. doi:10.2307/2532051.
[38] Patrick E. Shrout and Joseph L. Fleiss. Intraclass correlations: uses in assessing rater reliability. Psychological Bulletin, 86(2):420–428, 1979. doi:10.1037/0033-2909.86.2.420.
[39] Alex Zwanenburg, Martin Vallières, Mahmoud A. Abdalah, Hugo J. W. L. Aerts, Vincent Andrearczyk, Aditya Apte, Saeed Ashrafinia, Spyridon Bakas, Roelof J. Beukinga, Ronald Boellaard, Marta Bogowicz, Luca Boldrini, Irène Buvat, Gary J. R. Cook, Christos Davatzikos, Adrien Depeursinge, Marie-Charlotte Desseroit, Nicola Dinapoli, Cu Vinh Dinh, Sebastian Echegaray, Issam El Naqa, Andrey Y. Fedorov, Roberto Gatta, Robert J. Gillies, Vicky Goh, Michael Götz, Matthias Guckenberger, Sang Mo Ha, Mathieu Hatt, Fabian Isensee, Philippe Lambin, Stefan Leger, Ralph T. H. Leijenaar, Jacopo Lenkowicz, Fiona Lippert, Are Losnegård, Klaus H. Maier-Hein, Olivier Morin, Henning Müller, Sandy Napel, Christophe Nioche, Fanny Orlhac, Sarthak Pati, Elisabeth A. G. Pfaehler, Arman Rahmim, Arvind U. K. Rao, Jonas Scherer, Md Minhazul Siddique, Nanna M. Sijtsema, Jairo Socarras Fernandez, Emiliano Spezi, Roel J. H. M. Steenbakkers, Stephanie Tanadini-Lang, Daniela Thorwarth, Esther G. C. Troost, Taman Upadhaya, Vincenzo Valentini, Lisanne V. van Dijk, Joost van Griethuysen, Floris H. P. van Velden, Philip Whybra, Christoph Richter, and Steffen Löck. The image biomarker standardization initiative: standardized quantitative radiomics for high-throughput image-based phenotyping. Radiology, 295(2):328–338, 2020. doi:10.1148/radiol.2020191145.
[40] Laurens van der Maaten and Geoffrey Hinton. Visualizing data using t-SNE.
Journal of Machine Learning Research, 9(86):2579–2605, 2008. URL https://www.jmlr.org/papers/v9/vandermaaten08a.html.
[41] Joost J. M. van Griethuysen, Andriy Fedorov, Chintan Parmar, Ahmed Hosny, Nicole Aucoin, Vivek Narayan, Regina G. H. Beets-Tan, Jean-Christophe Fillion-Robin, Steve Pieper, and Hugo J. W. L. Aerts. Computational radiomics system to decode the radiographic phenotype. Cancer Research, 77(21):e104–e107, 2017. doi:10.1158/0008-5472.CAN-17-0339.
[42] Yunjey Choi, Minje Choi, Munyoung Kim, Jung-Woo Ha, Sunghun Kim, and Jaegul Choo. StarGAN: unified generative adversarial networks for multi-domain image-to-image translation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), pages 8789–8797, 2018. doi:10.1109/CVPR.2018.00916.

Original Paper

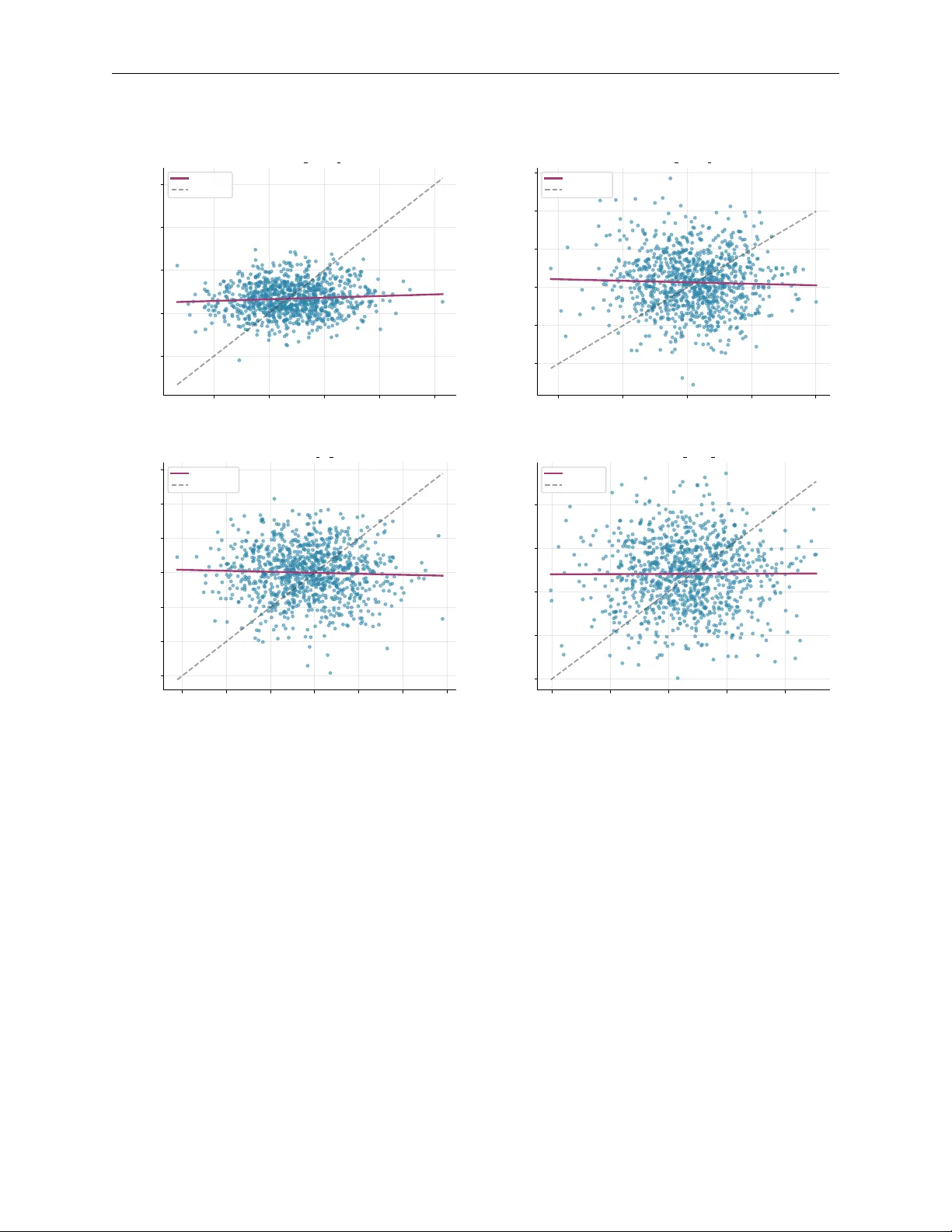

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment