Improving RCT-Based CATE Estimation Under Covariate Mismatch via Double Calibration

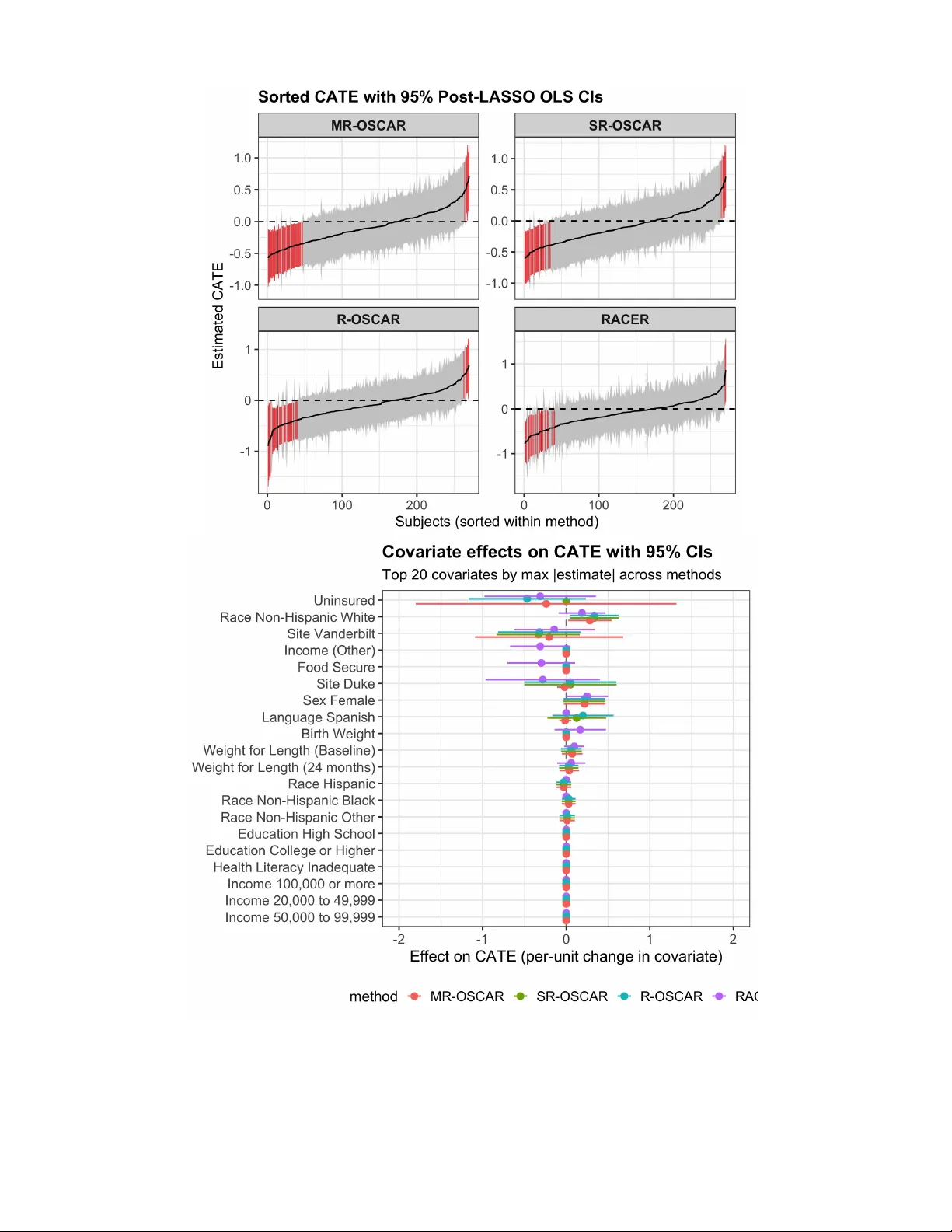

We develop estimators that improve the precision of heterogeneous treatment effect estimates by borrowing information from observational studies when the covariates available in each data source do not perfectly match. Standard data-borrowing met…

Authors: Samhita Pal, Jared D. Huling, Amir Asiaee