Dependence Fidelity and Downstream Inference Stability in Generative Models

Recent advances in generative AI have led to increasingly realistic synthetic data, yet evaluation criteria remain focused on marginal distribution matching. While these diagnostics assess local realism, they provide limited insight into whether a ge…

Authors: Nazia Riasat

Published as a conference paper at MathAI 2026

DEPENDENCE FIDELITY AND DOWNSTREAM INFERENCE STABILITY IN GENERATIVE MODELS

Nazia Riasat
North Dakota State University
nazia.riasat@ndsu.edu

ABSTRACT

Recent advances in generative artificial intelligence have led to increasingly realistic synthetic data, yet the criteria used to evaluate such models remain largely focused on marginal distribution matching and likelihood-based measures. While these diagnostics assess local realism, they provide limited insight into whether a generative model preserves the multivariate dependence structures that govern downstream inference. In this work, we argue that this gap represents a fundamental limitation in current approaches to trustworthy generative modeling. We introduce covariance-level dependence fidelity as a practical and interpretable criterion for evaluating whether a generative distribution preserves the joint structure of data beyond univariate marginals. We formalize this notion through covariance-based measures and establish three core results. First, we show that distributions can match all univariate marginals exactly while exhibiting substantially different dependence structures, demonstrating that marginal fidelity alone is insufficient. Second, we prove that dependence divergence induces quantitative instability in downstream inference, including sign reversals in population regression coefficients despite identical marginal behavior. Third, we provide positive stability guarantees, showing that explicit control of covariance-level dependence divergence ensures stable behavior for dependence-sensitive tasks such as principal component analysis. Using minimal synthetic constructions, we illustrate how failures in dependence preservation lead to incorrect conclusions in extreme-event estimation and regression despite identical marginal distributions.
Together, these results highlight dependence fidelity as a useful diagnostic for evaluating generative models in dependence-sensitive downstream tasks, with implications for diffusion models and variational autoencoders, and potential extensions to large language models as future work.

1 INTRODUCTION

Generative models are increasingly deployed as substitutes for real data in downstream scientific and decision-making workflows (Ho et al., 2020). In many applied pipelines, a model is treated as “high-quality” if its synthetic samples are indistinguishable from the target data under marginal diagnostics: univariate goodness-of-fit tests, feature-wise summary statistics, or likelihood-based objectives that implicitly prioritize low-dimensional representations (Goodfellow et al., 2016; Kingma & Welling, 2014). This evaluation philosophy is widespread because marginal discrepancies are easy to measure, easy to visualize, and often correlate with perceptual realism (Kynkäänniemi et al., 2019; Naeem et al., 2020). However, downstream inference is rarely a marginal operation. Regression, dimensionality reduction, clustering, and representation learning depend critically on multivariate structure: covariances, tail dependence, conditional relationships, and higher-order interactions (Jolliffe, 2002; Anderson, 2003). A generative model can therefore appear accurate under marginal audits while remaining structurally unreliable for the tasks that motivate its use.

This paper formalizes a central claim: marginal distribution fidelity is insufficient to guarantee trustworthy downstream behavior for dependence-sensitive tasks, whereas explicit control of covariance-level (second-order) dependence, with possible extensions to higher-order or copula-level structure, can yield quantitative stability guarantees for a broad class of such tasks. We study a target data-generating distribution $P$ on $\mathbb{R}^d$ and a candidate generative distribution $Q$, interpreted as the output of a generative AI system. Our analysis separates two questions that are often conflated in practice:

1. Representation faithfulness: do samples from $Q$ match samples from $P$ under standard distributional diagnostics?
2. Inferential trustworthiness: if $Q$ replaces $P$ in a downstream analysis, are the resulting conclusions stable and qualitatively correct?

We show that the first question cannot be answered by marginal criteria alone, and that failures of dependence preservation can induce large and even sign-reversing inferential errors that are invisible to marginal evaluations (Wasserman, 2004; D’Amour et al., 2022).

Main results: Our theoretical contributions establish a simple hierarchy linking distributional fidelity to downstream stability.

(i) Marginal fidelity does not imply dependence fidelity. We first prove an impossibility result (Theorem 1): for any dimension $d \ge 2$, there exist distributions $P$ and $Q$ whose univariate marginals match exactly, yet whose dependence structures differ. In particular, the copulas can be distinct, and covariance-level discrepancies can be made arbitrarily large through appropriate scaling constructions. This establishes that perfect marginal agreement does not constrain either linear or nonlinear dependence, and therefore cannot serve as a certificate of structural correctness.

(ii) Dependence divergence induces inferential instability. For a population linear regression task, we obtain the bound
\[
|\beta(P) - \beta(Q)| \le \frac{1}{\sqrt{2}\,\sigma_X^2}\, \|\Sigma_P - \Sigma_Q\|_F,
\]
with equality when the covariance perturbation affects only off-diagonal entries (i.e., covariance-only perturbations under equal marginal variances).

(iii) Dependence fidelity yields positive stability guarantees.
Finally, we show that controlling covariance-level dependence provides a sufficient condition for stability of dependence-sensitive downstream tasks. Focusing on principal component analysis (PCA), we derive bounds for both spectral stability (via Weyl-type eigenvalue perturbation) and subspace stability (via Davis–Kahan-type results (Davis & Kahan, 1963)) in terms of $\|\Sigma_P - \Sigma_Q\|_F$ and an eigengap condition (Theorem 3). Consequently, when a generative model preserves second-order dependence at an appropriate scale, it provides guarantees on the stability of key geometric properties. These guarantees are informative in the small-perturbation regime where $\|\Sigma_P - \Sigma_Q\|_F \ll \gamma$, with $\gamma$ the eigengap, and may become loose when the perturbation is large relative to the eigengap.

Synthetic constructions isolating dependence effects: To make the theory concrete, we provide minimal synthetic examples in which marginal distributions are fixed by construction while dependence is systematically altered. These examples demonstrate two practically relevant failure modes: (a) mismatched tail dependence (e.g., Gaussian copula versus $t$-copulas) leading to severe errors in joint extreme-event probabilities, and (b) correlation sign flips producing regression coefficients of opposite sign. Both discrepancies are invisible to marginal-only evaluation, yet they induce large downstream errors.

Implications for evaluation of generative models: Taken together, our results motivate dependence fidelity as a principal criterion for trustworthy generative AI. Marginal realism does not guarantee preservation of multivariate structure, and thus cannot certify inferential reliability. In contrast, dependence-aware criteria, including covariance-level divergence, copula-based discrepancies, and task-aligned structural constraints, provide a mathematically meaningful axis along which generative models can be audited, compared, and, in certain settings, accompanied by explicit stability guarantees.

While each mathematical component used in this paper draws on classical results from multivariate analysis and matrix perturbation theory, our contribution is conceptual and structural. We integrate these tools into a unified framework that connects distributional fidelity to inferential stability, explicitly identifying covariance-level dependence preservation as a sufficient structural condition for stability in dependence-sensitive downstream tasks. To our knowledge, this hierarchy, linking marginal fidelity, dependence divergence, and downstream stability, has not been formalized in the generative modeling literature.

Scope of the proposed criterion. We emphasize that the goal of this work is not to propose a universal criterion for trustworthy generative modeling, but rather to highlight the importance of preserving dependence structure for downstream tasks that rely on second-order relationships between variables. In particular, we focus on covariance-level dependence fidelity as a tractable diagnostic for settings where inference procedures (e.g., regression and PCA) depend directly on covariance structure. Our results show that covariance-level control provides quantitative stability guarantees for this class of tasks, while illustrating that richer dependence criteria are needed for tasks beyond this scope.

Roadmap: Section 2 introduces the problem setup and formal definitions of marginal fidelity and dependence fidelity for distributions $P$ (target) and $Q$ (model).
Section 3 surveys related work on generative model evaluation, distributional distances, dependence modeling, and trustworthy AI. Section 4 presents the main theoretical results establishing a hierarchy for trustworthy generative modeling. First, we show that marginal fidelity does not imply dependence fidelity (Theorem 1). Second, we quantify the downstream impact of dependence divergence by proving instability of regression-type functionals under covariance perturbations (Theorem 2). Third, we establish positive stability guarantees for dependence-sensitive tasks under explicit covariance control using spectral and subspace perturbation bounds (Theorem 3). Section 5 provides minimal synthetic constructions that isolate dependence effects, illustrating how models with identical marginals can yield qualitatively different downstream behavior. Section 6 discusses implications for the principled evaluation of generative models and for dependence-aware design in scientific and decision-making workflows.

2 PROBLEM SETUP AND NOTATION

Let $P$ denote a target data-generating distribution on $\mathbb{R}^d$, and let $Q$ denote the distribution induced by a generative model. We assume throughout that $P$ and $Q$ have finite second moments so that covariance matrices are well-defined. The goal of generative modeling is to approximate $P$ by $Q$ in a way that preserves not only marginal behavior, but also multivariate structure relevant to downstream statistical tasks.

Marginal fidelity. We say that $Q$ achieves marginal fidelity if all univariate marginals match:
\[
P_j = Q_j, \qquad j = 1, \dots, d,
\]
where $P_j$ and $Q_j$ denote the marginal distributions of the $j$-th coordinate under $P$ and $Q$, respectively. Marginal fidelity is commonly encouraged in practice via likelihood-based objectives, moment matching, or univariate goodness-of-fit diagnostics.

Dependence fidelity (covariance-level).
Let
\[
\Sigma_P := \operatorname{Cov}_{X \sim P}(X), \qquad \Sigma_Q := \operatorname{Cov}_{X \sim Q}(X)
\]
denote the covariance matrices under $P$ and $Q$. We measure covariance-level dependence discrepancy by the Frobenius distance
\[
D_\Sigma(P, Q) := \|\Sigma_P - \Sigma_Q\|_F,
\]
where $\|\cdot\|_F$ is the Frobenius norm. Small values of $D_\Sigma(P, Q)$ indicate that the linear dependence structure of $Q$ closely matches that of $P$.

We note that $D_\Sigma(P, Q)$ is scale-sensitive; in applications requiring scale invariance, correlation-based or whitened covariance comparisons may be preferable. We adopt the covariance formulation here for mathematical transparency.

Downstream functionals and inferential stability. We consider population-level functionals $T(\cdot)$ that depend on multivariate structure, including: (i) population regression coefficients, (ii) principal component eigenvalues and principal subspaces, and (iii) joint tail probabilities. We say that the generative model is inferentially stable (for a specified class of dependence-sensitive functionals) if the deviation of $T(Q)$ from $T(P)$ is controlled by bounds in terms of $D_\Sigma(P, Q)$ for the class of functionals under consideration.

Norm relations. For any matrix $A$, the operator norm is bounded by the Frobenius norm:
\[
\|A\|_2 \le \|A\|_F.
\]
This relation allows Frobenius control of covariance error to imply the spectral and subspace perturbation bounds used later in the stability guarantees. Our analysis focuses on covariance-level control for mathematical transparency.

In this work, dependence fidelity refers specifically to second-order (covariance-level) structure. This notion directly controls stability for linear and spectral procedures such as regression and PCA. Higher-order or nonlinear forms of dependence (e.g., copula geometry, tail dependence, or conditional structure) are not captured by this metric and represent important directions for future work.

Clarification on dependence notions.
In this work we distinguish three related notions of dependence preservation. (i) Covariance-level fidelity refers to preservation of second-order structure and is the focus of our theoretical results. (ii) Copula-level fidelity captures nonlinear dependence and tail behavior that may not be reflected in covariance statistics. (iii) General dependence fidelity refers to broader structural preservation beyond second-order statistics. The formal guarantees developed in this paper apply specifically to covariance-level fidelity, while richer forms of dependence such as copula structure are discussed conceptually but fall outside the scope of the present analysis.

The next section formalizes the limitations of marginal fidelity and establishes quantitative links between dependence discrepancy and downstream instability/stability.

3 RELATED WORK

Evaluation of generative models. A large body of work evaluates generative models using likelihood-based criteria, distributional distances, or low-dimensional summary statistics (Theis et al., 2016; Sajjadi, 2018). For image and text generation, metrics such as likelihood, reconstruction error, and embedding-based distances are widely used to assess sample quality and realism (Heusel et al., 2017). While these approaches capture aspects of marginal or feature-level fidelity, they often provide limited guarantees regarding the preservation of global multivariate structure. Our results formalize this limitation in a theoretical framework by quantifying how marginal agreement can coexist with large differences in multivariate dependence and downstream behavior.

Distributional distances and integral probability metrics. Classical distances between distributions, including Wasserstein distance (Ramdas et al., 2015), maximum mean discrepancy (MMD) (Gretton et al., 2012), and other integral probability metrics, provide stronger notions of global distributional similarity. However, in practice these measures are frequently estimated using kernels, projections, or low-dimensional embeddings, which may implicitly emphasize marginal or local structure. Our analysis complements this literature by identifying second-order (covariance-level) dependence preservation as a minimal structural condition that directly controls the stability of common statistical functionals.

Dependence modeling and copula theory. The separation between marginal behavior and dependence structure is well understood in multivariate statistics through copula theory (Nelsen, 2006), which represents joint distributions via marginal transformations and a dependence component. Our work builds on this perspective but focuses specifically on second-order (covariance-level) dependence and its consequences for inference. Rather than characterizing dependence structures themselves, we quantify how deviations in covariance structure propagate to errors in downstream tasks. In particular, we derive explicit bounds linking covariance perturbations to instability in population regression coefficients and principal component analysis. This establishes a direct connection between second-order dependence preservation and inferential stability, providing an operational criterion for evaluating generative models.

Stability of statistical procedures. Sensitivity analysis and matrix perturbation theory have long established that many statistical procedures depend continuously on the underlying covariance structure (van der Vaart, 2000). Results such as Weyl's inequality and the Davis–Kahan theorem provide spectral and subspace stability guarantees under small perturbations. We leverage these classical tools to derive stability bounds: when covariance-level dependence divergence is controlled, downstream functionals remain stable.

Trustworthy and reliable AI.
Recent work on trustworthy AI has emphasized robustness, calibration, and distributional shift (D’Amour et al., 2022; Zhou, 2021). Much of this literature focuses on predictive stability under covariate changes or adversarial perturbations. Our contribution is complementary: we study the reliability of generative models used as data surrogates and show that trustworthiness requires preserving second-order multivariate dependence, not only marginal realism.

Taken together, these strands of work motivate the need for dependence-aware evaluation. Our results formalize dependence fidelity as a minimal structural requirement that connects distributional approximation to inferential reliability.

4 MAIN RESULTS: DEPENDENCE FIDELITY AND TRUSTWORTHY INFERENCE

We now formalize the central claim of this work: matching marginal distributions is insufficient to guarantee trustworthy behavior of generative models, while preservation of covariance-level dependence provides sufficient conditions for stability of a class of dependence-sensitive functionals, including linear regression and spectral methods.

Throughout, let $P$ denote a target data-generating distribution on $\mathbb{R}^d$ and $Q$ a generative distribution produced by a model. Our goal is to understand how discrepancies between $P$ and $Q$ at the level of dependence structure affect the reliability of downstream statistical tasks.

4.1 MARGINAL FIDELITY DOES NOT IMPLY DEPENDENCE FIDELITY

Current evaluation practices for generative models often focus on marginal distribution matching, either explicitly through univariate goodness-of-fit diagnostics or implicitly through likelihood-based objectives (Goodfellow et al., 2014; Theis et al., 2016; Barratt & Sharma, 2018; Borji, 2019). We first show that such criteria provide no guarantee that the joint structure of the data is preserved.
Theorem 1 (Marginal fidelity does not imply dependence fidelity). Let $d \ge 2$. There exist probability distributions $P$ and $Q$ on $\mathbb{R}^d$ such that:

1. All univariate marginals match exactly: $P_j = Q_j$ for each coordinate $j$.
2. The copulas differ: $C_P \neq C_Q$, so that $D_{\mathrm{cop}}(P, Q) > 0$ for any characteristic-kernel (e.g., Gaussian kernel) MMD on the copula domain.
3. The covariance matrices can be chosen to differ: $\Sigma_P \neq \Sigma_Q$, i.e., $D_\Sigma(P, Q) := \|\Sigma_P - \Sigma_Q\|_F > 0$.

Moreover, the covariance divergence can be made arbitrarily large while maintaining exact marginal agreement (Nelsen, 2006; Sklar, 1959).

This can be achieved, for example, by scaling the marginal variance. If each coordinate is distributed as $N(0, \sigma^2)$ and the covariance matrices differ only in their correlation structure, with correlation $\rho$ under $P$ and $-\rho$ under $Q$ as in Example II, then
\[
D_\Sigma(P, Q) = \|\Sigma_P - \Sigma_Q\|_F = 2\sqrt{2}\,\sigma^2 |\rho|.
\]
In the unit-variance construction of the proof ($\sigma^2 = 1$) this simplifies to $2\sqrt{2}\,|\rho|$, and the divergence grows without bound as $\sigma^2 \to \infty$ while the marginals remain matched. This construction exploits the scale sensitivity of covariance metrics; in applications where scale invariance is desired, correlation-based or standardized covariance comparisons may provide more appropriate diagnostics.

This result establishes a fundamental limitation of marginal-based evaluation: even perfect agreement across all univariate distributions leaves the joint dependence structure unconstrained. Consequently, marginal fidelity alone cannot serve as a criterion for trustworthy generative modeling. This perspective aligns with recent discussions emphasizing task-aware evaluation of generative models, where structural properties relevant to downstream inference may be more informative than marginal distributional fit (Theis et al., 2016; D’Amour et al., 2022).

4.2 DEPENDENCE DIVERGENCE INDUCES INFERENTIAL INSTABILITY

Dependence mismatch is not merely a representational discrepancy; it directly affects downstream inference. We formalize this phenomenon using population linear regression as a canonical dependence-sensitive task (Anderson, 2003; van der Vaart, 2000).

Let $(X, Y) \in \mathbb{R}^2$. We assume that variables are centered (or equivalently that the regression model includes an intercept term), ensuring that the population least-squares slope is given by
\[
\beta(P) := \frac{\operatorname{Cov}_P(X, Y)}{\operatorname{Var}_P(X)}.
\]
The centering assumption ensures that the regression slope depends only on second-order structure, which allows us to relate inferential sensitivity directly to covariance perturbations.

Theorem 2 (Dependence divergence directly controls inferential sensitivity). Let $P$ and $Q$ be distributions on $\mathbb{R}^2$ with finite second moments and matching marginal variances across $P$ and $Q$:
\[
\operatorname{Var}_P(X) = \operatorname{Var}_Q(X) = \sigma_X^2 > 0, \qquad \operatorname{Var}_P(Y) = \operatorname{Var}_Q(Y) = \sigma_Y^2 > 0.
\]
Let $\Sigma_P$ and $\Sigma_Q$ denote the covariance matrices of $(X, Y)$ under $P$ and $Q$, respectively. Then
\[
|\beta(P) - \beta(Q)| \le \frac{1}{\sqrt{2}\,\sigma_X^2}\, \|\Sigma_P - \Sigma_Q\|_F.
\]
Equality holds when the marginal variances are equal and the covariance perturbation is confined to the off-diagonal entry.

To see why, note that under matched variances $\Sigma_P - \Sigma_Q$ has zero diagonal and both off-diagonal entries equal to $\operatorname{Cov}_P(X, Y) - \operatorname{Cov}_Q(X, Y)$, so $\|\Sigma_P - \Sigma_Q\|_F = \sqrt{2}\,|\operatorname{Cov}_P(X, Y) - \operatorname{Cov}_Q(X, Y)|$.

This theorem provides a quantitative bound linking covariance-level dependence divergence to inferential error (Davis & Kahan, 1963; Anderson, 2003). In particular, two distributions with identical marginals but opposite correlation structures yield regression coefficients of opposite sign. Thus, dependence changes that are invisible to marginal diagnostics can produce large inferential discrepancies, including sign reversals.

The perturbation bounds that follow are interpreted in the standard regime where the covariance perturbation is small relative to the eigengap of the reference matrix.
Under this condition, Weyl's inequality implies
\[
|\lambda_k(\Sigma_P) - \lambda_k(\Sigma_Q)| \le \|\Sigma_P - \Sigma_Q\|_2 \quad \text{for all } k.
\]
Since $\|A\|_2 \le \|A\|_F$, we obtain
\[
|\lambda_k(\Sigma_P) - \lambda_k(\Sigma_Q)| \le \|\Sigma_P - \Sigma_Q\|_F.
\]
This ensures the required spectral separation between the leading eigenspace of $\Sigma_Q$ and its complement.

4.3 STABILITY GUARANTEES UNDER DEPENDENCE FIDELITY

While the previous results highlight the limitations of marginal-based evaluation, we next show that explicit control of dependence divergence yields positive guarantees. We consider principal component analysis (PCA) (Jolliffe, 2002; Davis & Kahan, 1963), a fundamental dependence-sensitive operation underlying representation learning and dimensionality reduction.

Theorem 3 (Stability of PCA under covariance-level dependence fidelity). Let $P$ and $Q$ be distributions on $\mathbb{R}^d$ with zero mean, finite second moments, and covariance matrices $\Sigma_P$ and $\Sigma_Q$.

(Eigenvalue stability) For each $k = 1, \dots, d$,
\[
|\lambda_k(\Sigma_P) - \lambda_k(\Sigma_Q)| \le \|\Sigma_P - \Sigma_Q\|_2 \le \|\Sigma_P - \Sigma_Q\|_F.
\]
In particular,
\[
\max_{k \le d} |\lambda_k(\Sigma_P) - \lambda_k(\Sigma_Q)| \le D_\Sigma(P, Q).
\]

(Subspace stability) Let $U_P \in \mathbb{R}^{d \times r}$ and $U_Q \in \mathbb{R}^{d \times r}$ denote the matrices whose columns are the top-$r$ orthonormal eigenvectors of $\Sigma_P$ and $\Sigma_Q$, respectively. Let
\[
\gamma := \lambda_r(\Sigma_P) - \lambda_{r+1}(\Sigma_P) > 0
\]
denote the eigengap of $\Sigma_P$. Under the small-perturbation assumption $D_\Sigma(P, Q) < \gamma$,
\[
\|\sin \Theta(U_P, U_Q)\|_2 \le \frac{\|\Sigma_P - \Sigma_Q\|_F}{\gamma}.
\]

The subspace perturbation bound is informative when the covariance discrepancy satisfies $D_\Sigma(P, Q) \ll \gamma$, where $\gamma$ denotes the eigengap of the population covariance matrix. When the eigengap is small, even minor perturbations in the covariance structure may result in substantial instability of the estimated principal subspaces.
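The quantities appearing in Theorem 3 can be computed directly for a small example. The following is a minimal sketch using NumPy; the helper name `pca_stability_report`, the diagonal reference covariance, and the random symmetric perturbation are illustrative assumptions and are not taken from the paper. For the subspace check, the sketch relies on the conservative classical form $\|\sin\Theta\|_2 \le \|\Delta\|_2 / (\gamma - \|\Delta\|_2)$ with $\Delta = \Sigma_P - \Sigma_Q$, which is an assumption of this sketch; the ratio $D_\Sigma(P, Q)/\gamma$ from the theorem is printed alongside for comparison.

```python
import numpy as np


def pca_stability_report(sigma_p, sigma_q, r):
    """Compare eigenvalues and top-r eigenspaces of two covariance matrices.

    Hypothetical helper for illustration (not from the paper); requires r < d.
    """
    # eigh returns eigenvalues in ascending order; flip to descending.
    lam_p, vec_p = np.linalg.eigh(sigma_p)
    lam_q, vec_q = np.linalg.eigh(sigma_q)
    lam_p, vec_p = lam_p[::-1], vec_p[:, ::-1]
    lam_q, vec_q = lam_q[::-1], vec_q[:, ::-1]

    delta = sigma_p - sigma_q
    op_norm = np.linalg.norm(delta, 2)        # spectral norm of the perturbation
    fro_norm = np.linalg.norm(delta, "fro")   # D_Sigma(P, Q)
    gap = lam_p[r - 1] - lam_p[r]             # eigengap gamma of Sigma_P

    # Singular values of U_P^T U_Q are the cosines of the principal angles.
    cosines = np.linalg.svd(vec_p[:, :r].T @ vec_q[:, :r], compute_uv=False)
    cosines = np.clip(cosines, 0.0, 1.0)
    sin_theta = float(np.sqrt(1.0 - cosines.min() ** 2))  # largest sine

    return {
        "max_eig_shift": float(np.max(np.abs(lam_p - lam_q))),
        "op_norm": float(op_norm),
        "fro_norm": float(fro_norm),
        "gap": float(gap),
        "sin_theta": sin_theta,
    }


rng = np.random.default_rng(0)
sigma_p = np.diag([4.0, 2.0, 1.0])                    # assumed reference covariance
noise = rng.normal(size=(3, 3))
sigma_q = sigma_p + 0.05 * (noise + noise.T) / 2.0    # small symmetric perturbation

report = pca_stability_report(sigma_p, sigma_q, r=1)
print(report)
print("D_Sigma / gamma:", report["fro_norm"] / report["gap"])
```

Reporting the spectral norm, the Frobenius norm, and the eigengap side by side makes it easy to see how much slack the Frobenius relaxation introduces and whether a given pair of covariance matrices lies in the small-perturbation regime.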
Theorem 3 thus shows that controlling covariance-level dependence divergence is sufficient for stability of common spectral learning procedures.

4.4 IMPLICATIONS FOR TRUSTWORTHY GENERATIVE AI

Taken together, Theorems 1–3 establish a clear hierarchy:

| Theorem | Claim | Key quantity |
|---|---|---|
| Theorem 1 | Marginal fidelity does not imply dependence fidelity | $D_\Sigma(P, Q)$ can be arbitrarily large with $P_j = Q_j$ |
| Theorem 2 | Dependence divergence induces inferential instability | $\lvert\beta(P) - \beta(Q)\rvert \le \frac{1}{\sqrt{2}\,\sigma_X^2} \lVert\Sigma_P - \Sigma_Q\rVert_F$ |
| Theorem 3 | Dependence fidelity yields stability guarantees | $\lVert\sin\Theta(U_P, U_Q)\rVert_2 \le D_\Sigma(P, Q)/\gamma$ |

Table 1: Summary of the three main theoretical results.

These findings motivate dependence fidelity as a structural diagnostic for evaluating generative models in dependence-sensitive settings (Ovadia, 2019; Zhou, 2021). Generative systems intended for downstream scientific or decision-making tasks should therefore be assessed not only for marginal realism, but also for their ability to preserve multivariate dependence structures, particularly for tasks that rely on second-order structure such as regression and principal component analysis.

5 SYNTHETIC EXAMPLES: ISOLATING DEPENDENCE EFFECTS UNDER PERFECT MARGINAL FIDELITY

We now present two minimal synthetic constructions designed to isolate the effect of dependence divergence while keeping all univariate marginal distributions fixed (Nelsen, 2006; Sklar, 1959). These constructions serve two complementary purposes. First, they provide concrete realizations of the structural non-identifiability established in Theorem 1. Second, they illustrate the inferential instability quantified in Theorem 2.

In each construction, the marginal distributions are identical by design, ensuring that any observed discrepancy arises solely from differences in dependence structure.

5.1 EXAMPLE I: GAUSSIAN VS. T-COPULA (TAIL DEPENDENCE FAILURE)

Our first example demonstrates that nonlinear dependence, particularly tail dependence, can vary substantially even when marginal distributions match exactly.

Construction. Let $(X_1, X_2)$ be a bivariate random vector with standard normal marginals. Consider two joint distributions:

• Distribution $P$: a Gaussian copula with correlation parameter $\rho \in (0, 1)$, corresponding to a joint normal distribution with covariance
\[
\Sigma_P = \begin{pmatrix} 1 & \rho \\ \rho & 1 \end{pmatrix}.
\]
• Distribution $Q$: a $t$-copula with the same linear correlation parameter $\rho$ but degrees of freedom $\nu > 2$ (ensuring finite variance), transformed via probability integral transforms to obtain standard normal marginals.

By construction, both $P$ and $Q$ have identical univariate marginals $N(0, 1)$ and the same copula correlation parameter $\rho$; this is equality of the copula's dependence parameter, not of the Pearson correlation of the transformed variables. Consequently, any marginal goodness-of-fit metric (e.g., KS distance) cannot distinguish between the two.

Dependence discrepancy. Although the marginal distributions coincide, the copulas are distinct. The Gaussian copula exhibits zero tail dependence, whereas the $t$-copula exhibits positive tail dependence. Therefore,
\[
D_{\mathrm{cop}}(P, Q) := \mathrm{MMD}_k(C_P, C_Q) > 0,
\]
where $\mathrm{MMD}_k$ denotes a characteristic-kernel (e.g., Gaussian kernel) maximum mean discrepancy defined on the copula space, consistent with Theorem 1 (Gretton et al., 2012). This example illustrates that dependence discrepancies beyond second-order structure can arise even when covariance and marginal properties are matched. While the theoretical results in this work focus on covariance-level dependence, the example highlights broader structural failure modes that remain invisible to marginal evaluation.

Downstream functional.
Consider the dependence-sensitive quantity
\[
T(P) := \Pr_P(X_1 > u,\, X_2 > u), \qquad T(Q) := \Pr_Q(X_1 > u,\, X_2 > u),
\]
which represents the probability of a joint extreme event at threshold $u$.

Observation. For moderate thresholds $u$, the heavy-tail dependence of the $t$-copula yields
\[
T(Q) \gg T(P).
\]
This discrepancy occurs despite perfect marginal fidelity, demonstrating that marginal realism provides no control over extreme-event behavior (Figure 1).

This example illustrates a critical failure mode for risk-sensitive applications: generative models that match marginals may still severely misrepresent joint extremes, a phenomenon invisible to marginal-only evaluation (Borji, 2019).

Interpretation. This example highlights a limitation of covariance-level dependence fidelity. While preservation of covariance structure ensures stability for second-order tasks such as regression and PCA, it does not capture higher-order or tail dependence. In particular, extreme-event probabilities may differ substantially even when covariance structure is preserved. This illustrates that covariance-level diagnostics address second-order stability, while richer dependence features require complementary diagnostics beyond the scope of the present analysis.

5.2 EXAMPLE II: CORRELATION SIGN FLIP (REGRESSION INSTABILITY)

Our second example provides a direct illustration of Theorem 2, showing that linear dependence divergence alone can invert inferential conclusions.

Construction. Let $P$ and $Q$ be bivariate normal distributions with zero means and unit variances, differing only in the sign of correlation:
\[
\Sigma_P = \begin{pmatrix} 1 & \rho \\ \rho & 1 \end{pmatrix}, \qquad
\Sigma_Q = \begin{pmatrix} 1 & -\rho \\ -\rho & 1 \end{pmatrix}, \qquad \rho \in (0, 1).
\]
Both distributions share identical marginals $N(0, 1)$.

Figure 1: Joint extreme-event probabilities for Gaussian and t copulas.
Despite identical marginals, the t copula exhibits substantially higher joint tail risk due to hea vy-tail dependence. Downstr eam task. Consider the population least-squares regression slope (Anderson, 2003) β ( P ) := Co v P ( X, Y ) V ar P ( X ) . Under these distributions, β ( P ) = ρ, β ( Q ) = − ρ. Both distributions satisfy V ar P ( X ) = V ar Q ( X ) = 1 , so the conditions of Theorem 2 hold with σ 2 X = 1 . Quantitative instability . The coefficient dif ference satisfies | β ( P ) − β ( Q ) | = 2 | ρ | , while the cov ariance div ergence is ∥ Σ P − Σ Q ∥ F = 2 √ 2 | ρ | . Thus, | β ( P ) − β ( Q ) | = 1 √ 2 ∥ Σ P − Σ Q ∥ F , which matches the bound in Theorem 2 with equality . Figure 2: Cov ariance structures for two biv ariate normal distributions with identical marginal distri- butions but opposite correlation signs. The change in dependence structure rev erses the population regression slope, illustrating inferential instability under dependence di ver gence. 9 Published as a conference paper at MathAI 2026 This e xample sho ws that e ven the sign of an inferred relationship can rev erse under dependence div ergence that lea ves all marginals unchanged. From the perspectiv e of scientific inference or decision-making, such sign rev ersals correspond to qualitati vely incorrect conclusions, representing a fundamental failure of reliability . Because the covariance changes sign between P and Q , the regression coef ficient also rev erses sign (Figure 2). 5 . 3 S U M M A RY O F S Y N T H E T I C F I N D I N G S T ogether , these examples reinforce the theoretical results of Section 4: • Marginal fidelity does not constrain dependence (Theorem 1). • Dependence div ergence induces substantial do wnstream instability (Theorem 2). • Marginal-only e valuation fails to detect these dependence-induced errors. The constructions are intentionally simple and reproducible. 
Their purpose is not to provide benchmarks, but to serve as existence proofs of failure modes that any trustworthy evaluation framework for generative models must address. Complementary empirical validation on a real high-dimensional dataset is provided in Appendix B.6.

6 DISCUSSION: DEPENDENCE FIDELITY FOR TRUSTWORTHY GENERATIVE MODELING

The theoretical and synthetic results establish a central conclusion: for dependence-sensitive tasks, reliability is fundamentally a structural (covariance-level) property rather than a marginal one. Exact agreement of univariate distributions does not constrain multivariate dependence and therefore cannot guarantee stable downstream inference (Theorem 1). Even moderate covariance distortions can induce substantial inferential errors, including sign reversals in regression (Theorem 2), whereas explicit control of covariance divergence yields quantitative stability guarantees for procedures such as PCA (Theorem 3).

These findings highlight a limitation of current evaluation practices, which often optimize likelihood or low-dimensional summary statistics while downstream decisions depend critically on multivariate structure. Dependence mismatches may remain invisible to marginal diagnostics and cannot be mitigated simply by increasing sample size or improving marginal accuracy, reflecting a structural limitation of marginal-based evaluation (Theis et al., 2016; Borji, 2019).

Although our analysis is model-agnostic, the implications are particularly relevant for modern generative architectures. Iterative generation procedures such as diffusion models may accumulate structural distortions across steps (Ho et al., 2020; Song et al., 2021), and factorization assumptions in latent-variable models can induce dependence collapse despite accurate marginals (Locatello & Bauer, 2019).
In conditional generation settings, calibrated marginal probabilities may coexist with misspecified conditional dependence.

Taken together, these results motivate dependence fidelity as a practical evaluation principle: models intended for downstream use should preserve task-relevant multivariate structure. In practice, covariance-level fidelity can be estimated from samples via
\[
\hat{D}_\Sigma = \|\hat{\Sigma}_P - \hat{\Sigma}_Q\|_F,
\]
with correlation normalization or regularization used in high-dimensional settings. This enables dependence fidelity to be incorporated as a diagnostic alongside existing evaluation metrics (Naeem et al., 2020).

High-dimensional covariance estimation. Reliable estimation of the covariance discrepancy $D_\Sigma(P, Q)$ from samples requires sufficient sample size relative to the ambient dimension. Under sub-Gaussian assumptions, consistent estimation of covariance matrices under the Frobenius norm typically requires $n = \Omega(d)$ samples, and $n \approx 5d$ observations serves as a rough practical guideline for stable estimates in moderate dimensions (Vershynin, 2018). In high-dimensional regimes where $d \gg n$, naive sample covariance estimates may become unstable. In such settings, shrinkage or regularized covariance estimators such as the Ledoit–Wolf estimator can provide reliable estimates of the underlying covariance structure. Incorporating these estimators into dependence-fidelity diagnostics represents an important direction for scaling the proposed framework to high-dimensional generative modeling applications.

Empirical evaluation. The present work focuses on theoretical characterization and illustrative synthetic constructions designed to isolate dependence mismatches that are invisible to marginal diagnostics. An additional empirical illustration using gene expression data is provided in Appendix B.6.
Evaluating covariance-level dependence diagnostics on modern generative models (e.g., diffusion models, GAN-based generators, or tabular synthesis models) is an important next step and will be explored in future work.

7 CONCLUSION

This work establishes dependence fidelity as a minimal structural requirement for trustworthy generative modeling. While contemporary evaluation practices largely emphasize marginal distribution matching, our analysis shows that such criteria are insufficient to guarantee reliable downstream behavior. Even exact agreement across all marginal distributions leaves the joint structure largely unconstrained, allowing substantial distortions in multivariate dependence. We formalized the consequences of this gap through three complementary results: (i) marginal fidelity does not imply dependence fidelity (Theorem 1); (ii) covariance discrepancies induce quantifiable downstream instability, including sign reversals in regression despite identical marginal behavior (Theorem 2); and (iii) bounding covariance-level dependence divergence provides stability guarantees for dependence-sensitive procedures such as PCA (Theorem 3). These guarantees operate at the level of second-order structure and therefore apply broadly to methods whose behavior is governed by covariance geometry.

Minimal synthetic constructions demonstrated that these effects arise even in simple settings where marginal goodness-of-fit metrics are indistinguishable, yet downstream behavior differs dramatically. These findings complement recent evidence that models with well-calibrated marginals may remain structurally underspecified or unreliable for inference (D'Amour et al., 2022; Ovadia, 2019).

Taken together, our results suggest that trustworthiness should be evaluated in terms of multivariate structural preservation rather than marginal agreement alone.
Incorporating dependence-aware diagnostics and control provides a principled pathway toward more reliable generative systems and aligns evaluation with the requirements of scientific and decision-making applications.

REFERENCES

T. W. Anderson. An Introduction to Multivariate Statistical Analysis. Wiley, 3rd edition, 2003.

Shane Barratt and Rishi Sharma. A note on the inception score. arXiv preprint, 2018.

Ali Borji. Pros and cons of GAN evaluation measures. Computer Vision and Image Understanding, 2019.

Alexander D'Amour, Katherine Heller, Dan Moldovan, Ben Adlam, Babak Alipanahi, Alex Beutel, et al. Underspecification presents challenges for credibility in modern machine learning. Journal of Machine Learning Research, 23(226):1–61, 2022. URL https://jmlr.org/papers/v23/20-1255.html.

Chandler Davis and William Kahan. The rotation of eigenvectors by a perturbation. SIAM Journal on Numerical Analysis, 7(1):1–46, 1963.

Ian Goodfellow, Jean Pouget-Abadie, Mehdi Mirza, et al. Generative adversarial nets. In Advances in Neural Information Processing Systems (NeurIPS), 2014.

Ian Goodfellow, Yoshua Bengio, and Aaron Courville. Deep Learning, volume 1. MIT Press, 2016.

Arthur Gretton, Karsten Borgwardt, Malte Rasch, Bernhard Schölkopf, and Alexander Smola. A kernel two-sample test. Journal of Machine Learning Research, 13:723–773, 2012.

Martin Heusel, Hubert Ramsauer, Thomas Unterthiner, Bernhard Nessler, and Sepp Hochreiter. GANs trained by a two time-scale update rule converge to a local Nash equilibrium. In Advances in Neural Information Processing Systems (NeurIPS), 2017.

Jonathan Ho, Ajay Jain, and Pieter Abbeel. Denoising diffusion probabilistic models. In Advances in Neural Information Processing Systems (NeurIPS), 2020.

Ian T. Jolliffe. Principal Component Analysis. Springer, 2nd edition, 2002.

Diederik Kingma and Max Welling.
Auto-encoding variational Bayes. In International Conference on Learning Representations (ICLR), 2014.

Tuomas Kynkäänniemi, Tero Karras, Timo Aila, Jaakko Lehtinen, and Samuli Laine. Improved precision and recall metrics for assessing generative models. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2019.

Francesco Locatello and Stefan Bauer. Challenging common assumptions in the unsupervised learning of disentangled representations. Proceedings of the 36th International Conference on Machine Learning (ICML), 2019.

Muhammad Ferjad Naeem, Seonghyeon Oh, Youngjung Uh, Yunjey Choi, and Jaesik Yoo. Reliable fidelity and diversity metrics for generative models. International Conference on Machine Learning, 2020.

Roger B. Nelsen. An Introduction to Copulas. Springer, 2nd edition, 2006.

Yaniv Ovadia. Can you trust your model's uncertainty? Evaluating predictive uncertainty under dataset shift. NeurIPS, 2019.

Aaditya Ramdas, Nicolas Garcia, and Marco Cuturi. On Wasserstein two sample testing. arXiv preprint arXiv:1509.02237, 2015.

Mehdi Sajjadi. Assessing generative models via precision and recall. In NeurIPS, 2018.

Abe Sklar. Fonctions de répartition à n dimensions et leurs marges, volume 8. Publications de l'Institut de Statistique de l'Université de Paris, 1959.

Yang Song, Jascha Sohl-Dickstein, and Diederik P. Kingma. Score-based generative modeling through stochastic differential equations. In International Conference on Learning Representations (ICLR), 2021.

Krishnan Srinivasan, Bradley A. Friedman, Ainhoa Etxeberria, M. Angela Huntley, Marieke P. van der Brug, Owen Foreman, Joel S. Paw, Zora Modrusan, Thomas G. Beach, Gonzalo E. Serrano, Ben A. Barres, and David V. Hansen. Alzheimer's patient microglia exhibit enhanced aging and unique transcriptional activation. Cell Reports, 31(13):107843, 2020.
doi: 10.1016/j.celrep.2020.107843.

Lucas Theis, Aaron van den Oord, and Matthias Bethge. A note on the evaluation of generative models. International Conference on Learning Representations, 2016.

Aad W. van der Vaart. Asymptotic Statistics. Cambridge University Press, 2000.

Roman Vershynin. High-Dimensional Probability: An Introduction with Applications in Data Science. Cambridge University Press, 2018.

Larry Wasserman. All of Statistics. Springer, 2004.

Yi Yu, Tengyao Wang, and Richard J. Samworth. A useful variant of the Davis–Kahan theorem for statisticians. Biometrika, 102(2):315–323, 2015.

Zhi-Hua Zhou. A review of distribution shift in machine learning. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2021.

Appendix Overview. This appendix provides technical details and additional experimental results supporting the theoretical claims presented in the main text. Appendix A contains complete proofs of Theorems 1–3 together with auxiliary lemmas used in the arguments. Appendix B presents synthetic experiments designed to isolate the effects of dependence structure while preserving identical marginal distributions, illustrating the phenomena described in the theoretical results. Finally, Appendix B.6 provides an empirical illustration using the Huntley Alzheimer's gene expression dataset, demonstrating that similar dependence discrepancies can arise in realistic high-dimensional biological data.

A APPENDIX A: PROOFS OF MAIN RESULTS

APPENDIX A0: AUXILIARY RESULTS

This appendix provides detailed proofs of Theorems 1–3 stated in the main text. Throughout, we use the same notation as in Sections 2–4. All constructions are explicit and are chosen to isolate dependence effects while preserving identical univariate marginals.

Lemma A.1 (Uniqueness of copula).
If a multivariate distribution has continuous marginals, its copula is unique. This follows directly from Sklar's theorem.

Lemma A.2 (Characteristic kernels). If $k$ is characteristic, then
\[
\mathrm{MMD}_k(\mu, \nu) = 0 \iff \mu = \nu.
\]
Hence distinct copulas imply strictly positive MMD.

APPENDIX A1. PROOF OF THEOREM 1

Proof of Theorem 1. We construct Gaussian distributions that match exactly in all univariate marginals while differing in their multivariate dependence structure.

Step 1: Construction in dimension $d = 2$. Let $\rho \in (0, 1)$ and define two centered bivariate Gaussian distributions
\[
P = N(0, \Sigma_\rho), \qquad Q = N(0, \Sigma_{-\rho}),
\]
where
\[
\Sigma_\rho = \begin{pmatrix} 1 & \rho \\ \rho & 1 \end{pmatrix}, \qquad
\Sigma_{-\rho} = \begin{pmatrix} 1 & -\rho \\ -\rho & 1 \end{pmatrix}.
\]

Step 2: Exact marginal matching. Since both covariance matrices have unit diagonal entries, each coordinate marginal under $P$ and $Q$ is $N(0, 1)$. Hence, for $j = 1, 2$,
\[
P_j = Q_j = N(0, 1).
\]
Thus the distributions agree exactly on all univariate marginals.

Step 3: Second-order dependence mismatch. The covariance matrices differ:
\[
\Sigma_P - \Sigma_Q = \begin{pmatrix} 0 & 2\rho \\ 2\rho & 0 \end{pmatrix}.
\]
Therefore,
\[
D_\Sigma(P, Q) = \|\Sigma_P - \Sigma_Q\|_F = \sqrt{(2\rho)^2 + (2\rho)^2} = 2\sqrt{2}\,|\rho| > 0.
\]
Hence the second-order dependence structures are different.

Step 4: Copula mismatch. Both $P$ and $Q$ have continuous marginals. By Sklar's theorem, each distribution admits a unique copula. Because the marginals coincide and the joint Gaussian distributions differ whenever $\rho \neq 0$, it follows that
\[
P \neq Q \implies C_P \neq C_Q.
\]
Equivalently, the Gaussian copula is uniquely determined by its correlation parameter, and $\rho \neq -\rho$ whenever $\rho \neq 0$, so the corresponding copulas are different. If $D_{\mathrm{cop}}$ is defined using a characteristic-kernel maximum mean discrepancy (MMD) on $[0, 1]^2$, then
\[
D_{\mathrm{cop}}(P, Q) := \mathrm{MMD}_k(C_P, C_Q) > 0.
\]

Step 5: Control of the dependence gap. From Step 3, the Frobenius difference satisfies
\[
D_\Sigma(P, Q) = 2\sqrt{2}\,|\rho|.
\]
Thus for any $\varepsilon \in (0, 2\sqrt{2})$, choosing $\rho = \varepsilon / (2\sqrt{2})$ yields
\[
\|\Sigma_P - \Sigma_Q\|_F = \varepsilon.
\]

Step 6: Extension to general $d \geq 2$. For $d > 2$, define $P^{(d)}$ and $Q^{(d)}$ by letting the first two coordinates follow the bivariate $P$ and $Q$ constructed above, and letting the remaining coordinates be independent $N(0, 1)$ variables independent of the first two. Then:
• All univariate marginals of $P^{(d)}$ and $Q^{(d)}$ on $\mathbb{R}^d$ are identical.
• The covariance matrices differ only in the $(1, 2)$ and $(2, 1)$ entries, so $\|\Sigma_{P^{(d)}} - \Sigma_{Q^{(d)}}\|_F = 2\sqrt{2}\,|\rho| > 0$.
• Since the joint distributions differ and the marginals are continuous, the copulas differ, implying $D_{\mathrm{cop}}(P^{(d)}, Q^{(d)}) > 0$.

Theorem 1 establishes a fundamental limitation: exact agreement of all univariate marginals does not constrain the multivariate dependence structure. Consequently, two distributions may be indistinguishable under any marginal goodness-of-fit criterion while exhibiting substantially different joint behavior. This result formalizes why marginal fidelity alone cannot serve as a certificate of distributional realism for downstream tasks that depend on dependence structure.

APPENDIX A2. PROOF OF THEOREM 2

Proof of Theorem 2. Let $P$ and $Q$ be probability distributions on $(X, Y) \in \mathbb{R}^2$ with finite second moments, and assume that $\mathrm{Var}_P(X) > 0$ and $\mathrm{Var}_Q(X) > 0$, so that both regression slopes are well defined.

Step 1: Population slope formula. Throughout this proof we use the centering assumption of Theorem 2:
\[
E_P[X] = E_P[Y] = E_Q[X] = E_Q[Y] = 0.
\]
For distribution $P$, consider the population risk
\[
R_P(b) := E_P[(Y - bX)^2].
\]
Expanding,
\[
R_P(b) = E_P[Y^2] - 2b\,E_P[XY] + b^2\,E_P[X^2].
\]
Differentiating with respect to $b$ and setting the derivative to zero gives
\[
-2\,E_P[XY] + 2b\,E_P[X^2] = 0,
\]
hence
\[
\beta(P) = \frac{E_P[XY]}{E_P[X^2]}.
\]
By the centering assumption stated in Theorem 2, we have $E_P[X] = E_P[Y] = 0$.
Under this assumption, the numerator satisfies
\[
E_P[XY] = E_P[XY] - E_P[X]\,E_P[Y] = \mathrm{Cov}_P(X, Y),
\]
and the denominator satisfies
\[
E_P[X^2] = E_P[X^2] - (E_P[X])^2 = \mathrm{Var}_P(X).
\]
Therefore,
\[
\beta(P) = \frac{\mathrm{Cov}_P(X, Y)}{\mathrm{Var}_P(X)}.
\]
The same argument yields
\[
\beta(Q) = \frac{\mathrm{Cov}_Q(X, Y)}{\mathrm{Var}_Q(X)}.
\]

Step 2: Equal-variance case. Assume $\mathrm{Var}_P(X) = \mathrm{Var}_Q(X) = \sigma_X^2 > 0$ and $\mathrm{Var}_P(Y) = \mathrm{Var}_Q(Y) = \sigma_Y^2 > 0$. Then
\[
|\beta(P) - \beta(Q)| = \frac{|\mathrm{Cov}_P(X, Y) - \mathrm{Cov}_Q(X, Y)|}{\sigma_X^2}.
\]
Define
\[
\Delta_{12} := \mathrm{Cov}_P(X, Y) - \mathrm{Cov}_Q(X, Y).
\]
Thus,
\[
|\beta(P) - \beta(Q)| = \frac{|\Delta_{12}|}{\sigma_X^2}.
\]
In the unit-variance case $\sigma_X^2 = 1$, this reduces to the bound stated in the main text.

Step 3: Relation to covariance matrices. Let
\[
\Sigma_P = \begin{pmatrix} \sigma_X^2 & \mathrm{Cov}_P(X, Y) \\ \mathrm{Cov}_P(X, Y) & \mathrm{Var}_P(Y) \end{pmatrix}, \qquad
\Sigma_Q = \begin{pmatrix} \sigma_X^2 & \mathrm{Cov}_Q(X, Y) \\ \mathrm{Cov}_Q(X, Y) & \mathrm{Var}_Q(Y) \end{pmatrix}.
\]
If the marginal variances are equal and the difference between $\Sigma_P$ and $\Sigma_Q$ occurs only in the off-diagonal entry, then
\[
\Sigma_P - \Sigma_Q = \begin{pmatrix} 0 & \Delta_{12} \\ \Delta_{12} & 0 \end{pmatrix}.
\]
The Frobenius norm satisfies
\[
\|\Sigma_P - \Sigma_Q\|_F = \sqrt{\Delta_{12}^2 + \Delta_{12}^2} = \sqrt{2}\,|\Delta_{12}|.
\]
Hence
\[
|\Delta_{12}| = \frac{1}{\sqrt{2}}\,\|\Sigma_P - \Sigma_Q\|_F.
\]

Step 4: Final bound. Substituting into the slope difference,
\[
|\beta(P) - \beta(Q)| = \frac{1}{\sqrt{2}\,\sigma_X^2}\,\|\Sigma_P - \Sigma_Q\|_F.
\]
This establishes the stated relationship between regression instability and covariance distortion.

Theorem 2 translates dependence divergence into an explicit inferential consequence. Even when marginal distributions are identical, changes in covariance structure can induce large changes in the population regression coefficient, including sign reversals. Thus, dependence mismatch directly leads to instability in downstream inference, revealing a failure mode that is invisible to marginal-based evaluation.

APPENDIX A3. PROOF OF THEOREM 3

Proof of Theorem 3.
Let $P$ and $Q$ be distributions on $\mathbb{R}^d$ with $E_P[X] = E_Q[X] = 0$ and finite second moments, and define the covariance matrices
\[
\Sigma_P := E_P[XX^\top], \qquad \Sigma_Q := E_Q[XX^\top].
\]
Both $\Sigma_P$ and $\Sigma_Q$ are symmetric positive semidefinite. Recall
\[
D_\Sigma(P, Q) := \|\Sigma_P - \Sigma_Q\|_F.
\]

(a) Eigenvalue stability (Weyl). Let $\lambda_1(\Sigma) \geq \cdots \geq \lambda_d(\Sigma)$ denote the ordered eigenvalues of a symmetric matrix $\Sigma$. By Weyl's inequality for symmetric matrices, for all $k = 1, \ldots, d$,
\[
|\lambda_k(A) - \lambda_k(B)| \leq \|A - B\|_2,
\]
where $\|\cdot\|_2$ denotes the operator norm. Applying this with $A = \Sigma_P$ and $B = \Sigma_Q$ yields
\[
|\lambda_k(\Sigma_P) - \lambda_k(\Sigma_Q)| \leq \|\Sigma_P - \Sigma_Q\|_2.
\]
Using $\|M\|_2 \leq \|M\|_F$ for all matrices $M$, we obtain
\[
|\lambda_k(\Sigma_P) - \lambda_k(\Sigma_Q)| \leq \|\Sigma_P - \Sigma_Q\|_F = D_\Sigma(P, Q).
\]
Taking the maximum over $k$ yields
\[
\max_{1 \leq k \leq d} |\lambda_k(\Sigma_P) - \lambda_k(\Sigma_Q)| \leq D_\Sigma(P, Q).
\]

(b) Principal subspace stability (Davis–Kahan). Let $U_P \in \mathbb{R}^{d \times r}$ and $U_Q \in \mathbb{R}^{d \times r}$ be matrices whose columns are the top-$r$ orthonormal eigenvectors of $\Sigma_P$ and $\Sigma_Q$, respectively. By assumption (small-perturbation regime in Theorem 3), we have $D_\Sigma(P, Q) < \gamma$, which ensures the required spectral separation between the target $r$-dimensional eigenspace of $\Sigma_P$ and its orthogonal complement under perturbation by $\Sigma_Q - \Sigma_P$. This is the precise condition under which the Davis–Kahan $\sin\Theta$ theorem applies (Davis & Kahan, 1963; Yu et al., 2015). Therefore, by the Davis–Kahan $\sin\Theta$ theorem for symmetric matrices,
\[
\|\sin\Theta(U_P, U_Q)\|_2 \leq \frac{\|\Sigma_P - \Sigma_Q\|_2}{\gamma}.
\]
Again using $\|\Sigma_P - \Sigma_Q\|_2 \leq \|\Sigma_P - \Sigma_Q\|_F$, we conclude
\[
\|\sin\Theta(U_P, U_Q)\|_2 \leq \frac{\|\Sigma_P - \Sigma_Q\|_F}{\gamma} = \frac{D_\Sigma(P, Q)}{\gamma}.
\]
Combining (a) and (b), small covariance dependence divergence $D_\Sigma(P, Q)$ guarantees stability of the PCA spectrum and the leading $r$-dimensional subspace.

Theorem 3 provides a positive guarantee complementary to Theorems 1 and 2.
If a generative model preserves covariance structure such that $D_\Sigma(P, Q) = \|\Sigma_P - \Sigma_Q\|_F$ is small, then the key geometric quantities used by principal component analysis remain stable. In particular, the variance explained by each principal component changes by at most $D_\Sigma(P, Q)$, and the leading PCA subspace is stable up to error $D_\Sigma(P, Q)/\gamma$, where $\gamma$ is the eigengap. Thus, covariance-level dependence fidelity is sufficient to ensure stability of dependence-sensitive downstream representations.

Remark. The Davis–Kahan bound is informative when $\|\Sigma_P - \Sigma_Q\|_F \ll \gamma$. If the perturbation is large relative to the eigengap, the bound may become vacuous.

B SYNTHETIC EXPERIMENTS ILLUSTRATING DEPENDENCE EFFECTS

This appendix provides synthetic experiments that illustrate the mechanisms described in Theorems 1–3. All constructions use distributions with identical univariate marginals but different dependence structures, allowing us to isolate the effect of dependence divergence on joint behavior and downstream inference.

B.1 EXPERIMENTAL SETUP

We consider two bivariate distributions with standard normal marginals:
• $P$: a Gaussian copula with correlation $\rho$,
• $Q$: a Student-$t$ copula with the same linear correlation $\rho$ and degrees of freedom $\nu$.

The models are constructed such that
\[
X_1, X_2 \sim N(0, 1)
\]
under both $P$ and $Q$, ensuring exact marginal agreement. Samples of size $n = 10^5$ are generated for each model. The large sample size ensures that differences reflect population-level structure rather than sampling variability. This setup isolates dependence differences arising from the copula while holding marginal distributions fixed.

B.2 MARGINAL FIDELITY

Figure 3 shows the empirical marginal cumulative distribution functions (CDFs) for both models.
The curves are visually indistinguishable, confirming that the univariate marginals coincide by construction. This experiment illustrates the phenomenon described in Theorem 1: agreement in all univariate marginals does not imply agreement in the joint distribution. Marginal diagnostics alone therefore fail to detect dependence differences.

Figure 3: Empirical marginal CDFs for Gaussian and $t$-copula samples. The marginals are indistinguishable, demonstrating that marginal fidelity alone cannot detect dependence differences.

B.3 DEPENDENCE DIFFERENCES

We evaluate the dependence-sensitive functional
\[
T(P) = \Pr(X_1 > u,\ X_2 > u),
\]
introduced in Section 5.1. Although the marginal distributions coincide, the dependence structure differs markedly. To quantify this difference, we examine joint extreme-event probabilities
\[
\Pr(X_1 > u,\ X_2 > u)
\]
across a range of thresholds $u$. The corresponding results are presented in the main text (Figure 1). The $t$-copula exhibits substantially higher joint tail probabilities due to stronger tail dependence. This demonstrates that identical marginals can correspond to materially different joint risk profiles, consistent with Theorem 1.

B.4 COVARIANCE-LEVEL DISTORTION

To illustrate dependence differences at the second-order level, we construct two Gaussian distributions with identical marginals but correlations of equal magnitude and opposite sign:
\[
P = N(0, \Sigma_\rho), \qquad Q = N(0, \Sigma_{-\rho}), \qquad \text{where } \Sigma_\rho = \begin{pmatrix} 1 & \rho \\ \rho & 1 \end{pmatrix}.
\]
The covariance matrices differ only in the off-diagonal entries, yielding the Frobenius difference
\[
\|\Sigma_P - \Sigma_Q\|_F = 2\sqrt{2}\,|\rho|.
\]
This construction isolates second-order dependence divergence while preserving the marginal distributions, allowing us to study the effect of covariance changes independently of marginal behavior.

B.5 REGRESSION INSTABILITY

We examine the impact of dependence divergence on downstream inference. For each distribution, we compute the population regression slope
\[
\beta = \frac{\mathrm{Cov}(X, Y)}{\mathrm{Var}(X)}.
\]
Because the covariance changes sign between $P$ and $Q$, the regression coefficient also changes sign, even though the marginal distributions are identical (Figure 4). This demonstrates that identical marginals do not guarantee stability of downstream estimators. Small changes in dependence structure can induce large qualitative changes in inference, consistent with Theorem 2.

Figure 4: Population regression slope under two dependence structures with identical marginal distributions but opposite correlations. Dependence divergence induces a sign reversal in the regression coefficient, illustrating instability of downstream inference.

Figure 5: Distribution of Kolmogorov–Smirnov (KS) distances across genes comparing real and synthetic gene expression samples. Most KS values are small, indicating that the synthetic data approximately preserves univariate marginal distributions.

B.6 EMPIRICAL ILLUSTRATION ON GENE EXPRESSION DATA

To complement the synthetic constructions above, we provide a small empirical illustration using the Huntley Alzheimer's gene expression dataset available in the Gene Expression Omnibus (GEO) repository (accession: GSE125050). The dataset contains RNA-seq measurements from 113 samples of several purified brain cell types obtained from post-mortem superior frontal gyrus (SFG) tissue of Alzheimer's disease and control patients (Srinivasan et al., 2020). The goal of this experiment is not to introduce a new benchmark but to demonstrate that dependence discrepancies between real and generated data can appear even when marginal distributions appear similar.
We compare real gene expression measurements with synthetic data generated from a Gaussian model fitted to the marginal distributions. We note that with $n = 113$ samples and a high-dimensional gene expression setting, this illustration falls below the $n \approx 5d$ guideline discussed in Section 6; results should therefore be interpreted as qualitative rather than as precise numerical estimates of covariance discrepancy.

Marginal similarity (KS). We first evaluate marginal fidelity using Kolmogorov–Smirnov (KS) statistics across genes. Figure 5 shows the resulting histogram of KS distances, which indicates that most genes exhibit small marginal discrepancies and suggests that the synthetic data approximately preserves univariate distributions.

Covariance distortion. Next, we examine covariance-level differences between real and synthetic data. Let $\Sigma_{\mathrm{real}}$ and $\Sigma_{\mathrm{syn}}$ denote the empirical covariance matrices of the real and synthetic datasets. We measure second-order dependence divergence using the Frobenius norm
\[
\|\Sigma_{\mathrm{real}} - \Sigma_{\mathrm{syn}}\|_F.
\]
Figure 6 visualizes the covariance difference matrix. Despite similar marginal behavior, noticeable covariance discrepancies remain, indicating that dependence structure is not fully preserved.

Downstream regression sensitivity. Finally, we examine the effect on downstream inference by comparing regression coefficients estimated from real and synthetic data (Figure 7). Although the marginal distributions are similar, the regression coefficients differ across datasets, reflecting sensitivity to underlying dependence structure.

Figure 6: Heatmap of the covariance difference matrix $\Sigma_{\mathrm{real}} - \Sigma_{\mathrm{syn}}$ for the gene expression dataset. While marginal distributions appear similar, visible covariance discrepancies remain, illustrating differences in dependence structure between real and synthetic data.
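
The three diagnostics used in this subsection (per-coordinate KS distance, covariance discrepancy, and regression slope) can be sketched on simulated data. The standard-library Python example below is an illustration only and is not the gene expression analysis: the correlated bivariate Gaussian standing in for the real data, the independent-coordinate model standing in for a generator fitted to marginals only, the settings `n` and `rho`, and all function names are assumptions introduced here.

```python
import math
import random


def ks_two_sample(a, b):
    """Two-sample KS statistic: largest gap between the two empirical CDFs."""
    a, b = sorted(a), sorted(b)
    i = j = 0
    gap = 0.0
    while i < len(a) and j < len(b):
        x = min(a[i], b[j])
        # Step both empirical CDFs past x, then compare them at x.
        while i < len(a) and a[i] <= x:
            i += 1
        while j < len(b) and b[j] <= x:
            j += 1
        gap = max(gap, abs(i / len(a) - j / len(b)))
    return gap


def cov(u, v):
    """Sample covariance with the n - 1 denominator."""
    mu, mv = sum(u) / len(u), sum(v) / len(v)
    return sum((x - mu) * (y - mv) for x, y in zip(u, v)) / (len(u) - 1)


def cov_matrix(x, y):
    """2x2 sample covariance matrix of the pair (x, y)."""
    return [[cov(x, x), cov(x, y)], [cov(x, y), cov(y, y)]]


def frobenius_diff(a, b):
    """Frobenius norm of the difference of two 2x2 matrices."""
    return math.sqrt(sum((a[r][c] - b[r][c]) ** 2 for r in range(2) for c in range(2)))


def simulate(n, rho, rng):
    """Draw n pairs with N(0, 1) marginals and correlation rho."""
    xs, ys = [], []
    for _ in range(n):
        z1, z2 = rng.gauss(0.0, 1.0), rng.gauss(0.0, 1.0)
        xs.append(z1)
        ys.append(rho * z1 + math.sqrt(1.0 - rho * rho) * z2)
    return xs, ys


rng = random.Random(0)  # fixed seed so the run is repeatable
n, rho = 5000, 0.8      # hypothetical settings, not taken from the paper
real_x, real_y = simulate(n, rho, rng)  # stand-in for the real data
syn_x, syn_y = simulate(n, 0.0, rng)    # marginals matched, dependence dropped

ks_x = ks_two_sample(real_x, syn_x)
ks_y = ks_two_sample(real_y, syn_y)
d_sigma = frobenius_diff(cov_matrix(real_x, real_y), cov_matrix(syn_x, syn_y))
slope_real = cov(real_x, real_y) / cov(real_x, real_x)
slope_syn = cov(syn_x, syn_y) / cov(syn_x, syn_x)

print(f"KS distances: {ks_x:.3f}, {ks_y:.3f}")
print(f"covariance discrepancy: {d_sigma:.3f}")
print(f"slopes: real {slope_real:.3f}, synthetic {slope_syn:.3f}")
```

Under these assumptions the population covariance discrepancy is $\sqrt{2}\,\rho$, so the sketch should show small KS distances alongside a clearly nonzero discrepancy and diverging slopes, which is the same qualitative pattern described for Figures 5 to 7. For gene-level data with many more dimensions than samples, a regularized covariance estimator would be needed in place of the plain sample covariance used here.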
Figure 7: Comparison of regression coefficients estimated from real and synthetic gene expression data. Each point represents a coefficient estimate for a gene. Deviations from the identity relationship indicate sensitivity of downstream inference to differences in dependence structure.

B.7 SUMMARY

These experiments collectively illustrate three key phenomena:
1. Identical marginals do not imply identical joint distributions (Theorem 1).
2. Differences in dependence structure can substantially alter joint tail risk and extreme-event behavior.
3. Covariance-level distortions can induce instability in downstream inference (Theorem 2), while small covariance divergence ensures stability for covariance-based procedures (Theorem 3).

Together, these results provide empirical illustrations for the central claim of the paper: dependence fidelity is essential for trustworthy inference, even when marginal fidelity is satisfied. The additional gene expression experiment demonstrates that the same dependence-related phenomena can arise in realistic high-dimensional biological datasets.

These experiments are constructed at the population level and therefore isolate structural effects of dependence rather than sampling variability. They illustrate that dependence distortions can remain undetected by marginal diagnostics while producing substantial changes in joint risk and downstream inference, consistent with the theoretical results.

B.8 REPRODUCIBILITY

Code and instructions to reproduce the synthetic experiments are available at https://github.com/NaziaRiasat/dependence-fidelity.
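
As a quick independent check of the sign-flip construction in B.4 and B.5, the closed-form quantities can be evaluated directly. The standard-library Python sketch below is not taken from the repository above; the function names and the chosen values of `rho` and `eps` are assumptions made for illustration.

```python
import math


def sign_flip_pair(rho):
    """Covariance matrices of P and Q: unit variances, correlations +rho and -rho."""
    return [[1.0, rho], [rho, 1.0]], [[1.0, -rho], [-rho, 1.0]]


def slope(sigma):
    """Population least-squares slope Cov(X, Y) / Var(X) read off a 2x2 covariance."""
    return sigma[0][1] / sigma[0][0]


def frobenius_diff(a, b):
    """Frobenius norm of the difference of two 2x2 matrices."""
    return math.sqrt(sum((a[r][c] - b[r][c]) ** 2 for r in range(2) for c in range(2)))


def compare(rho):
    """Return (slope gap, covariance discrepancy) for the sign-flip pair."""
    sigma_p, sigma_q = sign_flip_pair(rho)
    return abs(slope(sigma_p) - slope(sigma_q)), frobenius_diff(sigma_p, sigma_q)


for rho in (0.1, 0.5, 0.9):  # illustrative correlation values
    gap, d_sigma = compare(rho)
    # With unit variances the Theorem 2 relation reads gap = d_sigma / sqrt(2).
    print(f"rho={rho}: slope gap={gap:.4f}, D_Sigma={d_sigma:.4f}, "
          f"D_Sigma/sqrt(2)={d_sigma / math.sqrt(2):.4f}")

# Step 5 of the proof of Theorem 1: rho = eps / (2 * sqrt(2)) gives D_Sigma = eps.
eps = 0.5
_, d_eps = compare(eps / (2 * math.sqrt(2)))
print(f"target eps={eps}, achieved D_Sigma={d_eps:.4f}")
```

For each `rho`, the slope gap equals $2\rho$ and coincides with $D_\Sigma/\sqrt{2}$, which is the equality case of Theorem 2 with $\sigma_X^2 = 1$; the last lines confirm that the discrepancy can be tuned to any target in $(0, 2\sqrt{2})$.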

Original Paper

Loading high-quality paper...

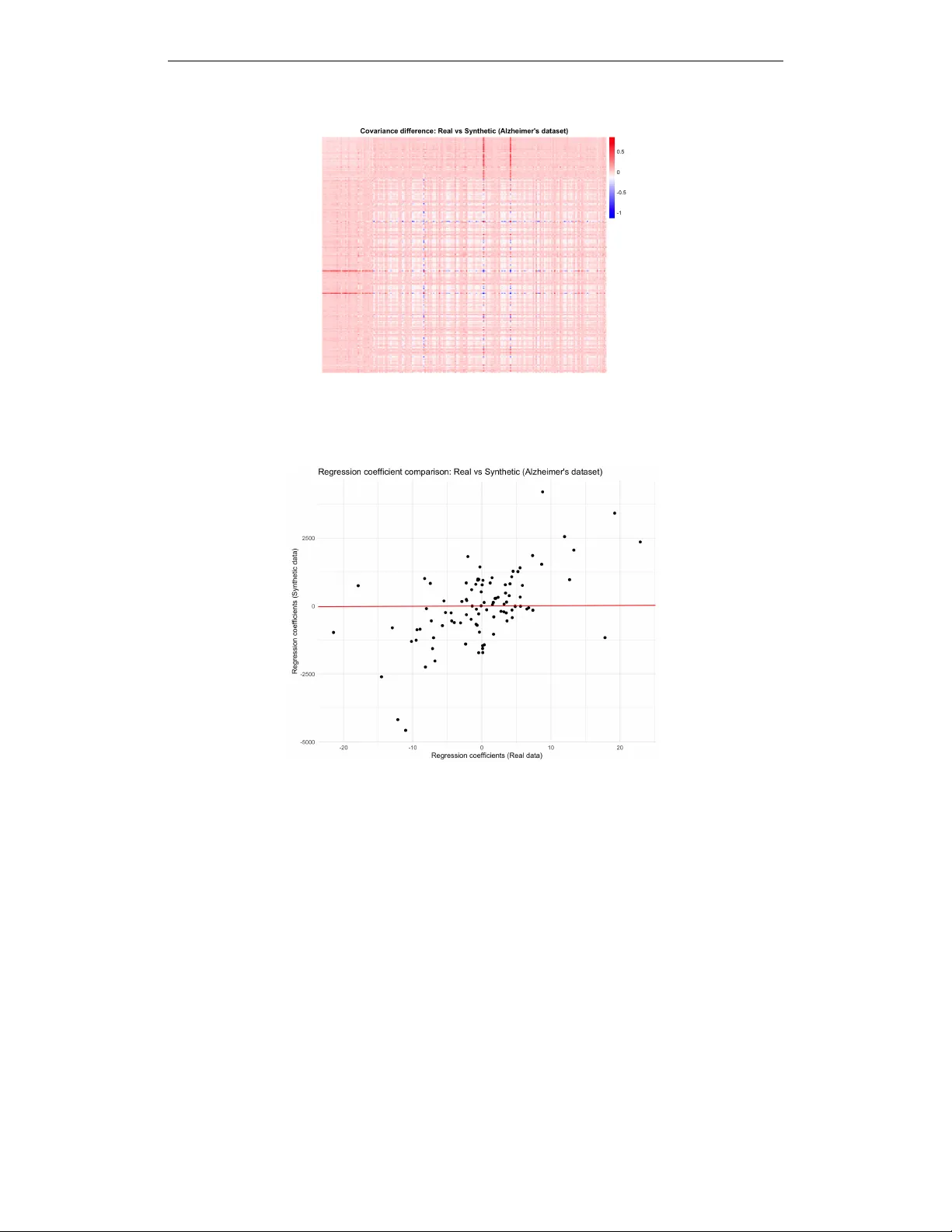

Comments & Academic Discussion

Loading comments...

Leave a Comment