Sequential Bayesian Experimental Design for Prediction in Physical Experiments Informed by Computer Models

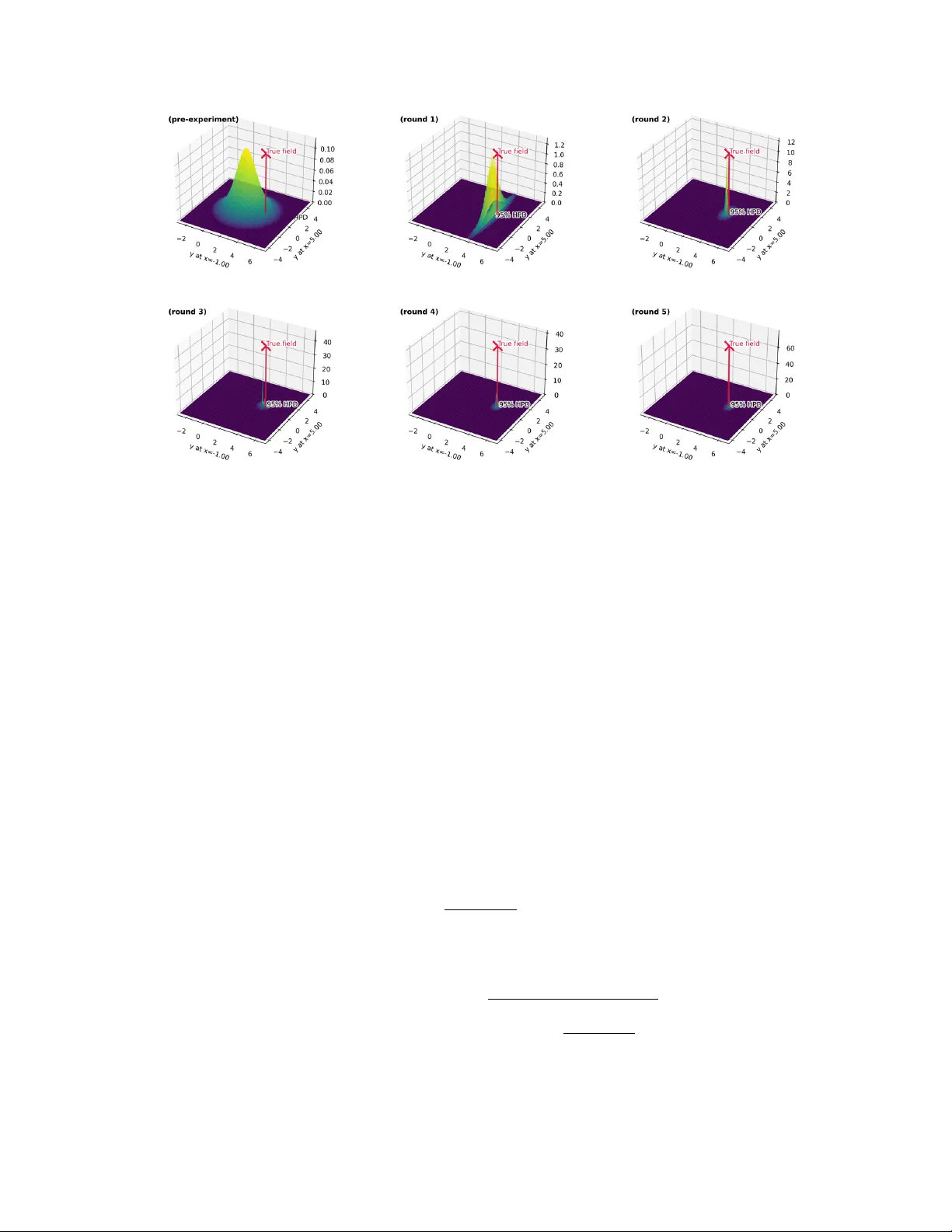

In many scientific and engineering domains, physical experiments are costly, non-replicable, or time-consuming. The Kennedy and O'Hagan (KOH) framework has become a widely used approach for combining simulator runs with limited experiment…

Authors: Hao Zhu, Markus Hainy